Your curated collection of saved posts and media

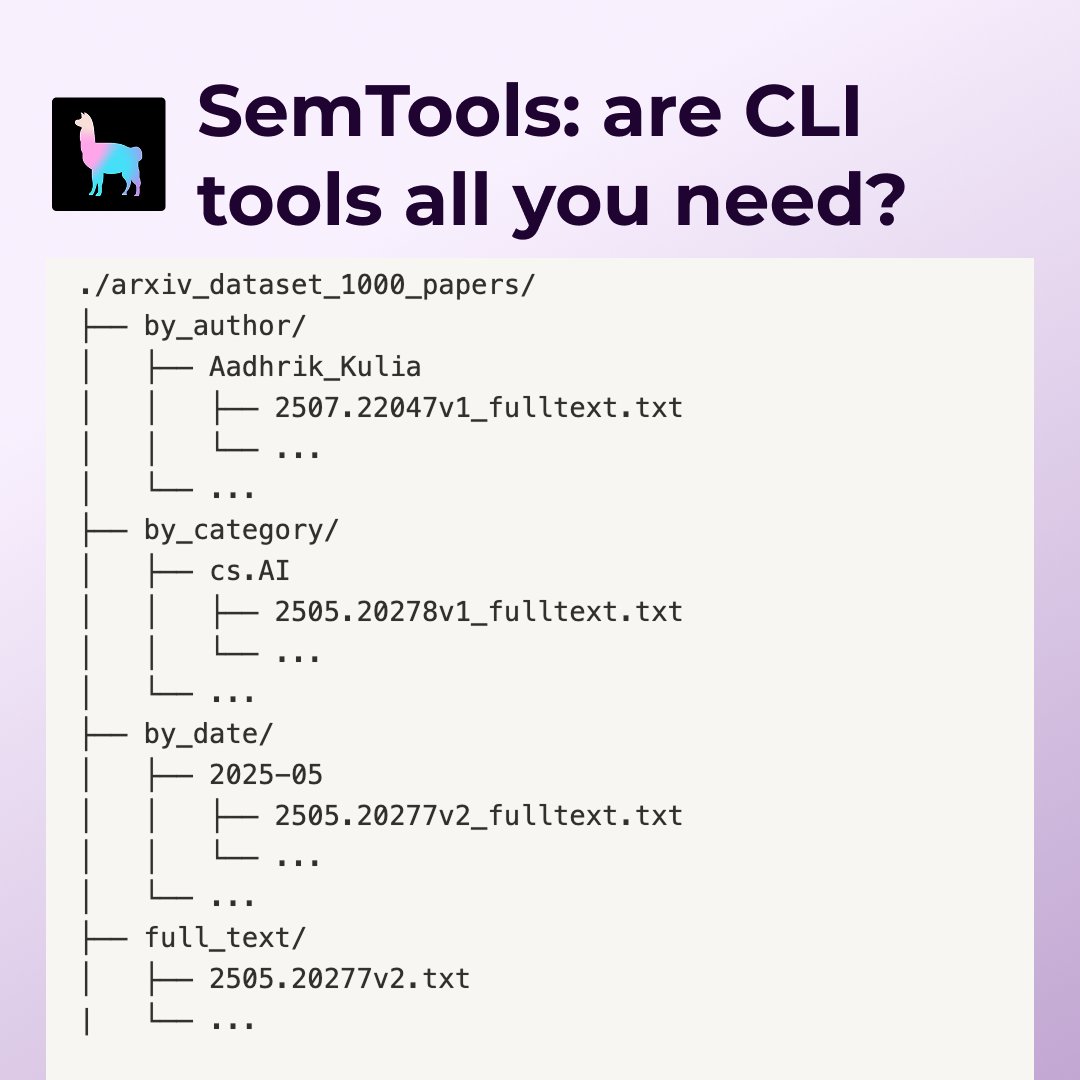

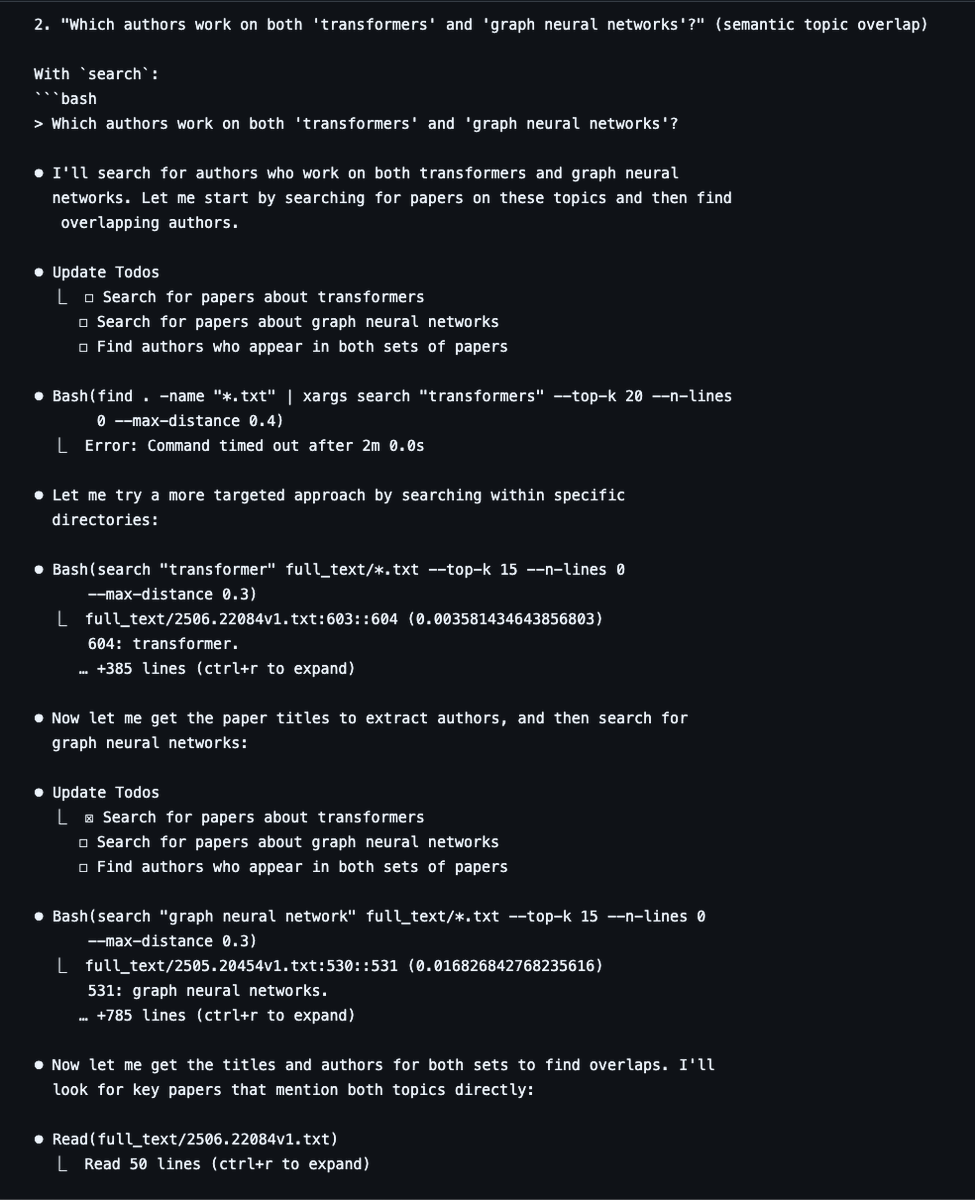

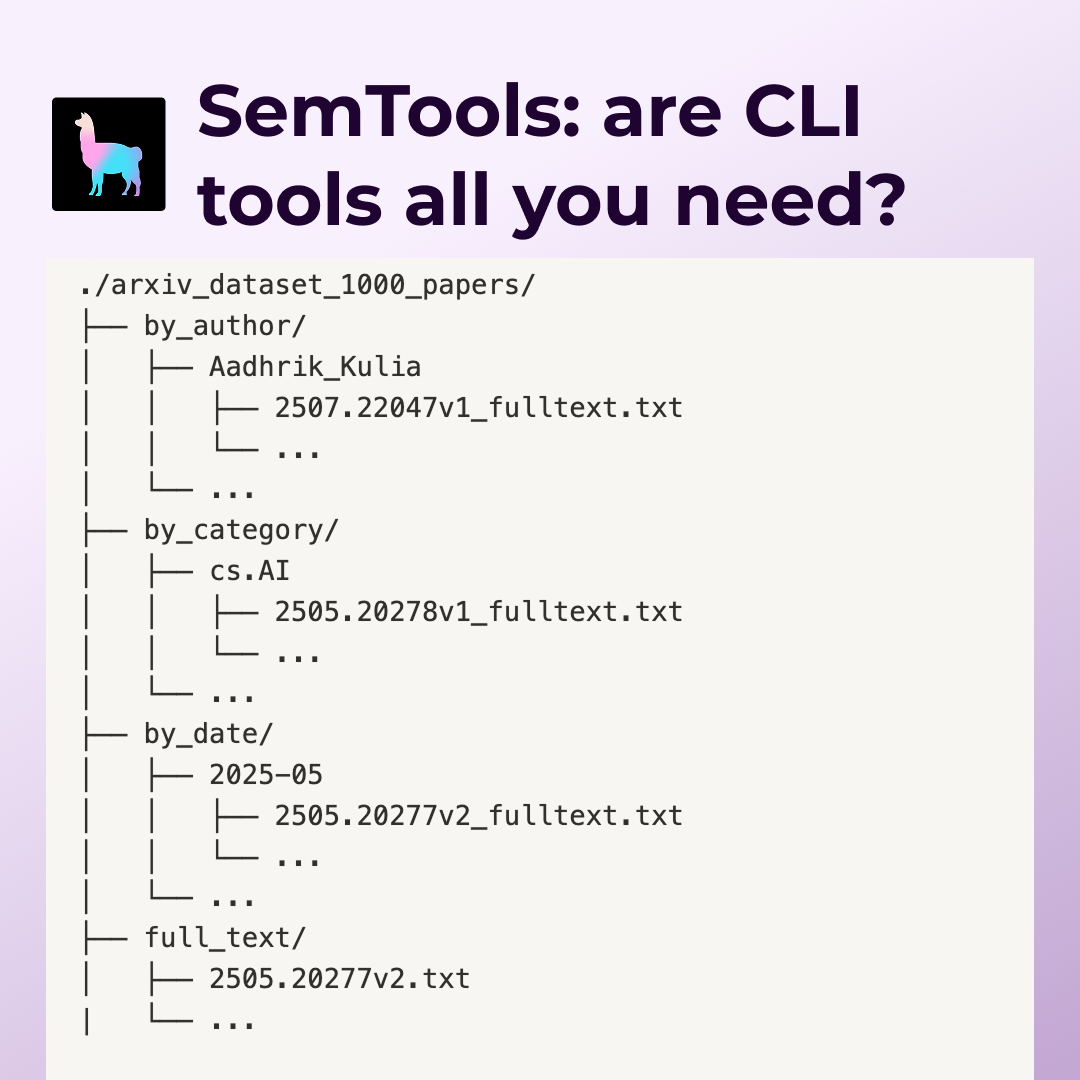

Command-line agents can get you really far in document search and analysis! We tested SemTools, our CLI toolkit for parsing and semantic search, with coding agents like @claude_code on 1,000 @arxiv papers. The results show that combining Unix tools with semantic search capabilities creates surprisingly capable knowledge workers.

- 🔍 SemTools adds parse and search commands that let agents handle complex documents with fuzzy semantic keyword search
- 📊 Agents with semantic search provided more detailed, accurate answers across search, cross-reference, and temporal analysis tasks
- ⚡ CLI access is incredibly powerful relative to the effort involved: it leverages existing Unix tooling instead of requiring custom RAG infrastructure
- 🛠️ The combination of grep, find, and semantic search handles a wide variety of document tasks at high fidelity

Learn about our SemTools experiment and see the full benchmark results: https://t.co/3LeaejfRWc

Global enterprises like Cemex use LlamaCloud to radically accelerate their data ingestion processes for maintenance, supply chain operations, health and safety and more! Check out the full video here: https://t.co/ZBXBRh3Xkx https://t.co/SfQmbJ7wwv

grep (and lightweight semantic search) are all you need 🤔 When you have a “medium”-sized dataset, e.g. 1,000 arXiv PDFs, we found that an extremely strong Q&A baseline is simply giving agents access to the CLI, along with some tools for fast semantic search using static embeddings. These agents can answer complex questions, ranging from simple keyword search and filtering, to questions that require cross-referencing across docs, to those that require analysis across time. In these cases, standard RAG with fixed top-k retrieval is strictly worse. We made file understanding and semantic search easily accessible from the CLI through SemTools, so come check it out! Blog by @LoganMarkewich: https://t.co/kYr8KkWLYR SemTools: https://t.co/xg1iqbghIr
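To make “fuzzy semantic keyword search over static embeddings” concrete, here is a minimal grep-like sketch in Python. It is not SemTools' implementation. In place of a learned static embedding model, it assumes a hashed character-trigram embedding. Every name and parameter here (`semantic_grep`, `embed`, `DIM`, `threshold`) is hypothetical. The sketch ranks lines by cosine similarity to the query, so near-misses like "Transformers" still match "transformer".

```python
import math
import zlib
from typing import List, Tuple

DIM = 1024  # hypothetical embedding width


def embed(text: str) -> List[float]:
    """Context-free ("static") embedding of a string.

    Stand-in for a learned static embedding table (an assumption): each word's
    character trigrams are hashed with CRC32 into one of DIM buckets and counted,
    then the vector is L2-normalized. Shared subwords give fuzzy matches.
    """
    vec = [0.0] * DIM
    for word in text.lower().split():
        padded = f"<{word}>"
        for i in range(len(padded) - 2):
            vec[zlib.crc32(padded[i:i + 3].encode("utf-8")) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def semantic_grep(query: str, lines: List[str], top_k: int = 5,
                  threshold: float = 0.3) -> List[Tuple[int, float, str]]:
    """Return up to top_k (line_number, cosine, line) hits above threshold, best first."""
    q = embed(query)
    hits = []
    for lineno, line in enumerate(lines, start=1):
        # Both vectors are unit-length, so the dot product is the cosine similarity.
        score = sum(a * b for a, b in zip(q, embed(line)))
        if score >= threshold:
            hits.append((lineno, score, line))
    hits.sort(key=lambda h: h[1], reverse=True)
    return hits[:top_k]


lines = [
    "We train a transformer encoder",
    "Results on ImageNet",
    "Transformers for encoding images",
]
for lineno, score, line in semantic_grep("transformer", lines):
    print(f"{lineno}:{score:.2f}: {line}")
```

Here line 2 shares no subwords with the query and is filtered out. Because the embedding is a pure lookup with no model forward pass, rescoring many lines on every call stays cheap. That cheapness is the general appeal of static embeddings for a tool an agent calls repeatedly from the shell.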


Story of every CEO right now 😂 https://t.co/ukZlFa46ZW

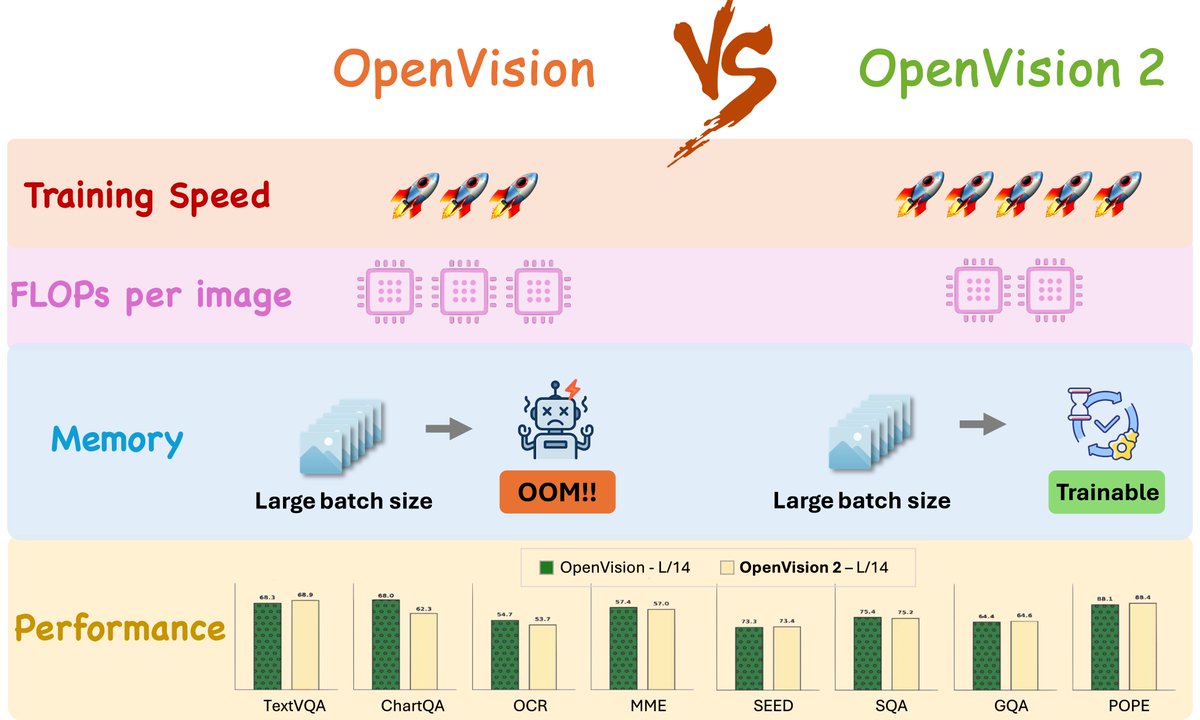

🚀 ~4 months ago, we introduced OpenVision — a fully open, cost-effective family of vision encoders that rival OpenAI’s CLIP and Google’s SigLIP. Today, we’re back with a major update: OpenVision 2 🎉 A thread 🧵 (1/n) https://t.co/FkLG2a6hnf

Still relying on OpenAI’s CLIP (a model released 4 years ago with limited architecture configurations) for your multimodal LLMs? 🚧 We’re excited to announce OpenVision: a fully open, cost-effective family of advanced vision encoders that match or surpass OpenAI’s CLIP and Google’s SigLIP…

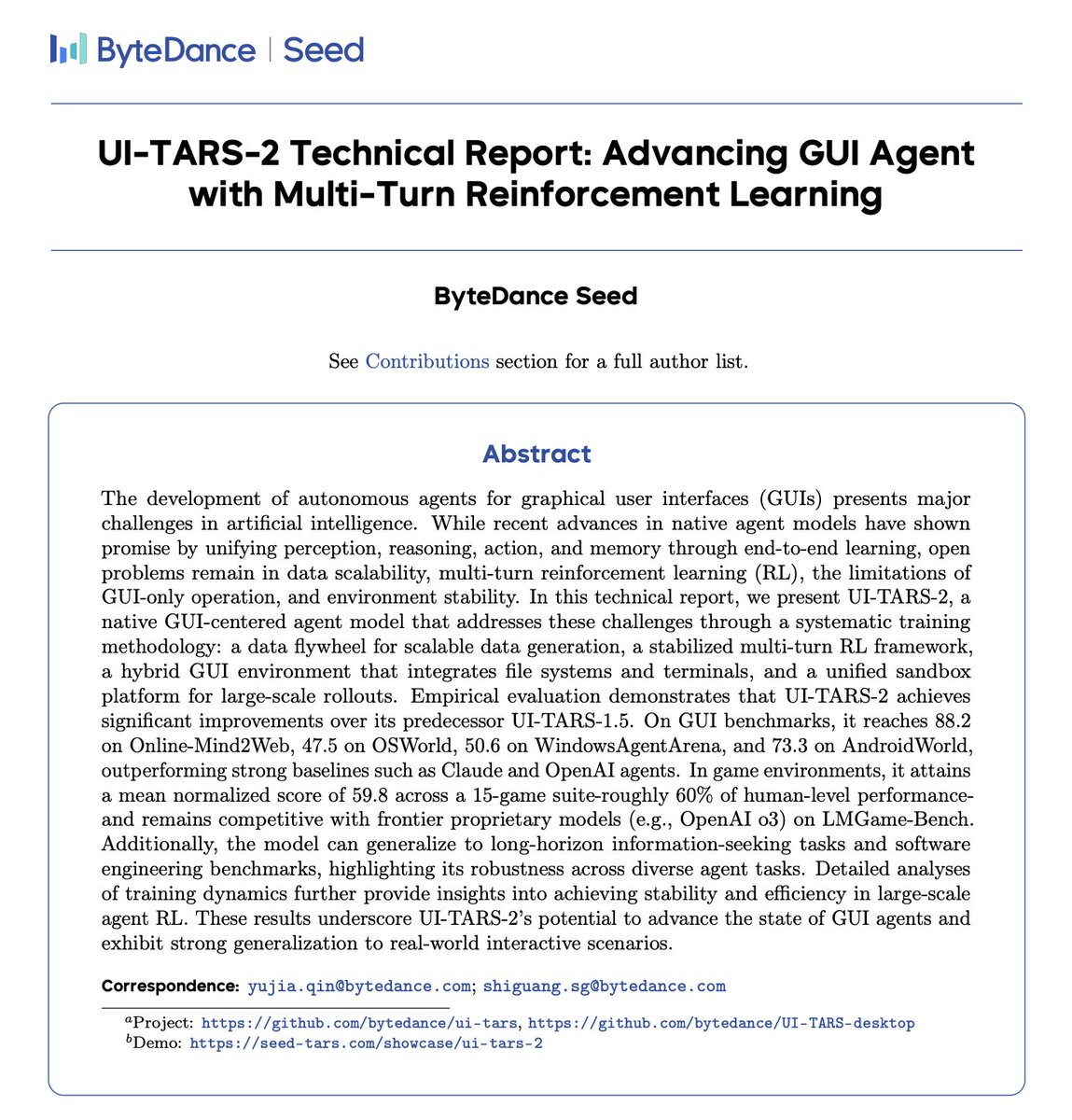

We can finally share UI-TARS-2 🥳🥳, a native GUI agent trained with multi-turn agent RL.

⚡️⚡️ Key highlights (all-in-one model!):

- 💻 Computer Use: 47.5 OSWorld · 50.6 WindowsAgentArena
- 📱 Phone Use: 73.3 AndroidWorld
- 🛜 Browser Use: 88.2% Online-Mind2Web
- 🎮 Gameplay: ~60% of human-level performance on 15 titles · strong on LMGame-Bench
- 🧑‍💻 Terminal Use: 68.7 SWE-Bench · 45.3 TerminalBench
- 🔨 Tool Use: 29.6 BrowseComp
- Hybrid flows: GUI clicks + terminal commands + API calls in one trace

Paper: https://t.co/gWUAYgHGdL · Demo: https://t.co/j8ucLo4Oeo

I'm a big believer in open-source technology, and this conversation with @arankomatsuzaki was very special to me. Back in 2021, Aran contributed to GPT-J, the first open-source LLM that matched the capabilities of GPT-3. It was a glimpse of hope that open-source models could be comparable to closed ones. In this conversation we covered GPT-5, whether scaling laws still work, whether there is a future for open-source models, how founders should think about building a company in the era of AI, and much more. The full discussion is below 👇

After part 3 of our MoE 101 series, we got two main questions: 1. Why is the MoE forward pass slower than a dense network's? 2. Why do I hit OOM when I try to train 64 experts on a single GPU? We discuss both problems and their solutions in part 4: https://t.co/uW6H78ZE56 1/n 🧵
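The OOM question comes down to the gap between what an MoE layer must store and what each token actually computes. A rough accounting sketch follows, with several assumptions. The layer sizes are hypothetical. The FFN shape ignores biases and gated variants. The 16-bytes-per-parameter figure is a common rule of thumb for mixed-precision Adam training, assumed here rather than taken from the thread.

```python
from dataclasses import dataclass

# Assumption: mixed-precision Adam training keeps ~16 bytes per parameter:
# fp16 weights (2) + fp16 grads (2) + fp32 master weights (4) + Adam m and v (4 + 4).
BYTES_PER_PARAM = 16


@dataclass
class MoEFFNLayer:
    d_model: int
    d_ff: int
    num_experts: int
    top_k: int

    def params_per_expert(self) -> int:
        # Each expert is a two-matrix FFN: d_model x d_ff up, d_ff x d_model down (biases ignored).
        return 2 * self.d_model * self.d_ff

    def resident_params(self) -> int:
        # Every expert must sit in GPU memory, whether or not any token routes to it.
        return self.num_experts * self.params_per_expert()

    def active_params_per_token(self) -> int:
        # Compute per token only touches the top-k selected experts.
        return self.top_k * self.params_per_expert()

    def training_memory_gb(self) -> float:
        # Weights + grads + optimizer state only; activations and the router are excluded.
        return self.resident_params() * BYTES_PER_PARAM / 1e9


layer = MoEFFNLayer(d_model=1024, d_ff=4096, num_experts=64, top_k=2)
print(f"resident params: {layer.resident_params():,}")
print(f"active params per token: {layer.active_params_per_token():,}")
print(f"training memory for this one layer: {layer.training_memory_gb():.2f} GB")
```

With these hypothetical sizes, one 64-expert layer needs roughly 8.6 GB before counting activations, while each token exercises only 2 of the 64 experts. Stacking a few dozen such layers exhausts a single GPU's memory long before FLOPs become the limit.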

MoE 101 - Episode 4: Theoretical: 60% fewer FLOPs. Reality: 7x slowdown. You followed all the tips from our last video. Your MoE model finally trains… Then you try to scale it on GPUs... and... memory issues ❌, underused experts ❌, unpredictable compute bottlenecks ❌. From expe…
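The “underused experts” and “unpredictable compute” symptoms can be illustrated with a minimal pure-Python top-k router. The logits below are made up. A real router produces them with a learned layer, and real systems dispatch tokens with batched GPU kernels (often with capacity limits and load-balancing losses) rather than Python loops. The function names are hypothetical.

```python
from typing import List, Sequence


def top_k_route(router_logits: Sequence[Sequence[float]], k: int) -> List[List[int]]:
    """For each token, return the indices of its k highest-scoring experts.

    Top-k on raw logits selects the same experts as top-k on softmax
    probabilities, since softmax is monotonic.
    """
    routes = []
    for row in router_logits:
        ranked = sorted(range(len(row)), key=lambda e: row[e], reverse=True)
        routes.append(ranked[:k])
    return routes


def expert_load(routes: List[List[int]], num_experts: int) -> List[int]:
    """Count how many token assignments each expert receives."""
    load = [0] * num_experts
    for chosen in routes:
        for e in chosen:
            load[e] += 1
    return load


def imbalance(load: List[int]) -> float:
    """Busiest expert's load divided by the mean load (1.0 = perfectly balanced)."""
    mean = sum(load) / len(load)
    return max(load) / mean


# Hypothetical router logits: 4 tokens x 4 experts, skewed toward experts 0 and 1.
logits = [
    [2.0, 1.0, 0.1, 0.0],
    [1.5, 1.2, 0.3, 0.2],
    [3.0, 0.5, 0.4, 0.1],
    [0.2, 2.5, 0.3, 1.0],
]
routes = top_k_route(logits, k=2)
load = expert_load(routes, num_experts=4)
print(routes)           # [[0, 1], [0, 1], [0, 1], [1, 3]]
print(load)             # [3, 4, 0, 1]
print(imbalance(load))  # 2.0
```

Assume the experts run in parallel and each takes time proportional to its token count. Then the step waits on the busiest expert, so an imbalance of 2.0 means about half of the expert compute slots sit idle in this toy case. Expert 2 receives no tokens, and therefore no gradient signal, at all.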

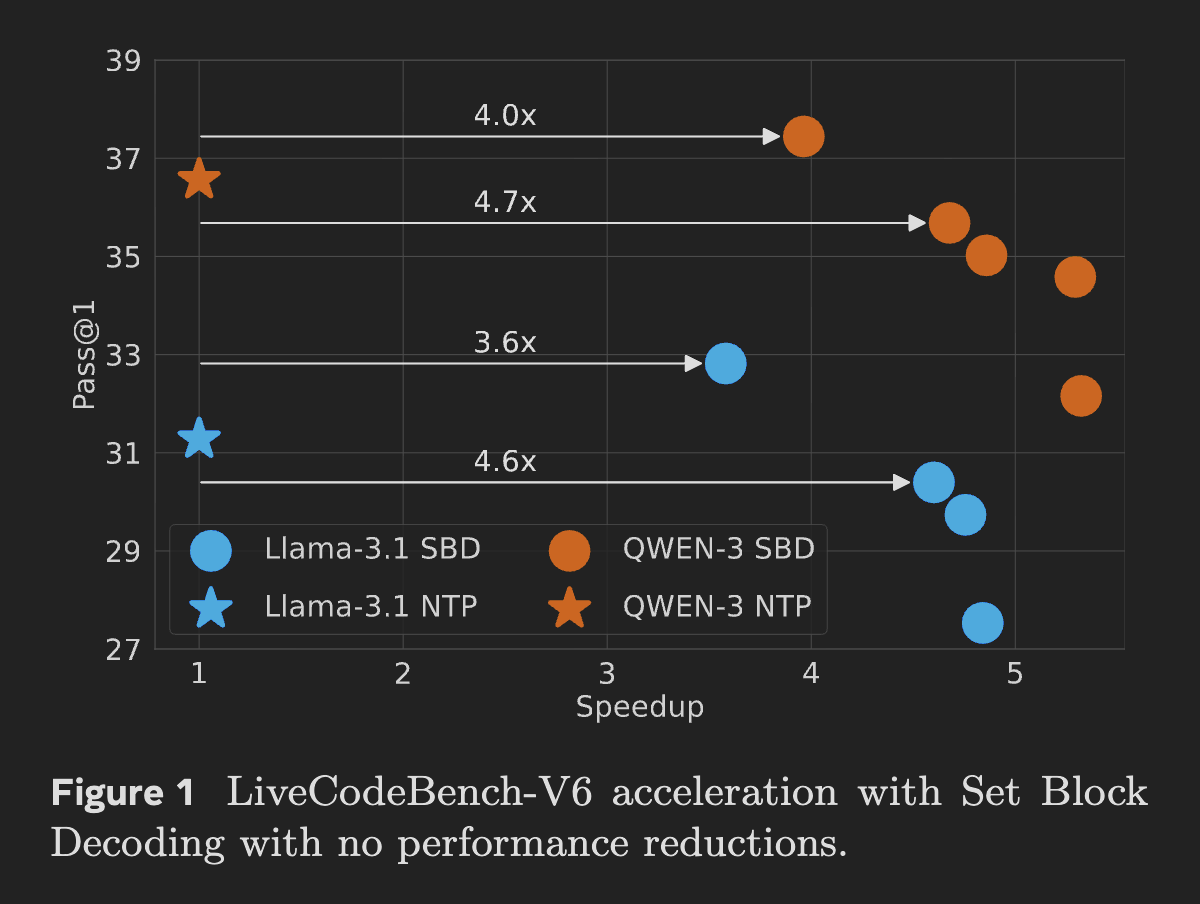

Meta introduces Set Block Decoding (SBD), a new inference accelerator for LLMs. SBD samples multiple future tokens in parallel, cuts forward passes by 3–5x, needs no architecture changes, stays KV-cache compatible, and matches next-token-prediction (NTP) training performance. https://t.co/Ov1ZO22Rce

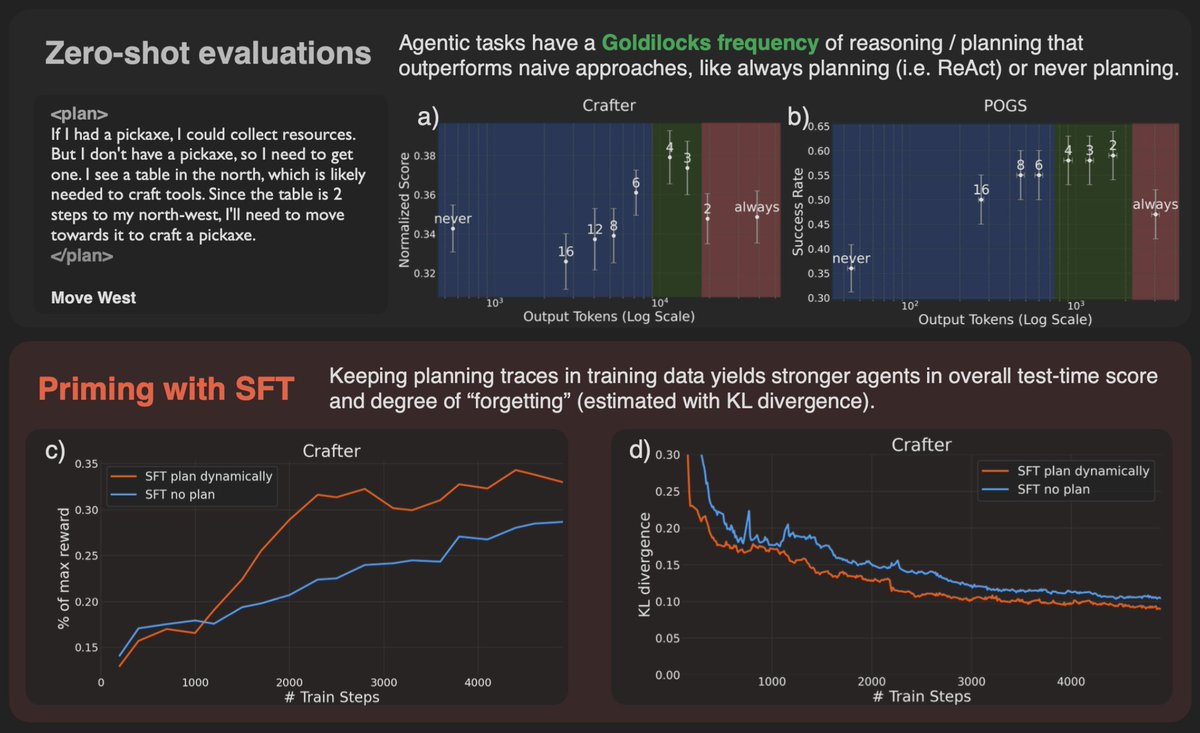

Learning When to Plan: LLM agents trained with dynamic planning learn when to spend test-time compute, balancing cost and performance. This is the first work to explore training LLM agents for dynamic test-time compute allocation in sequential decision-making tasks. https://t.co/SoJWoJju2Y

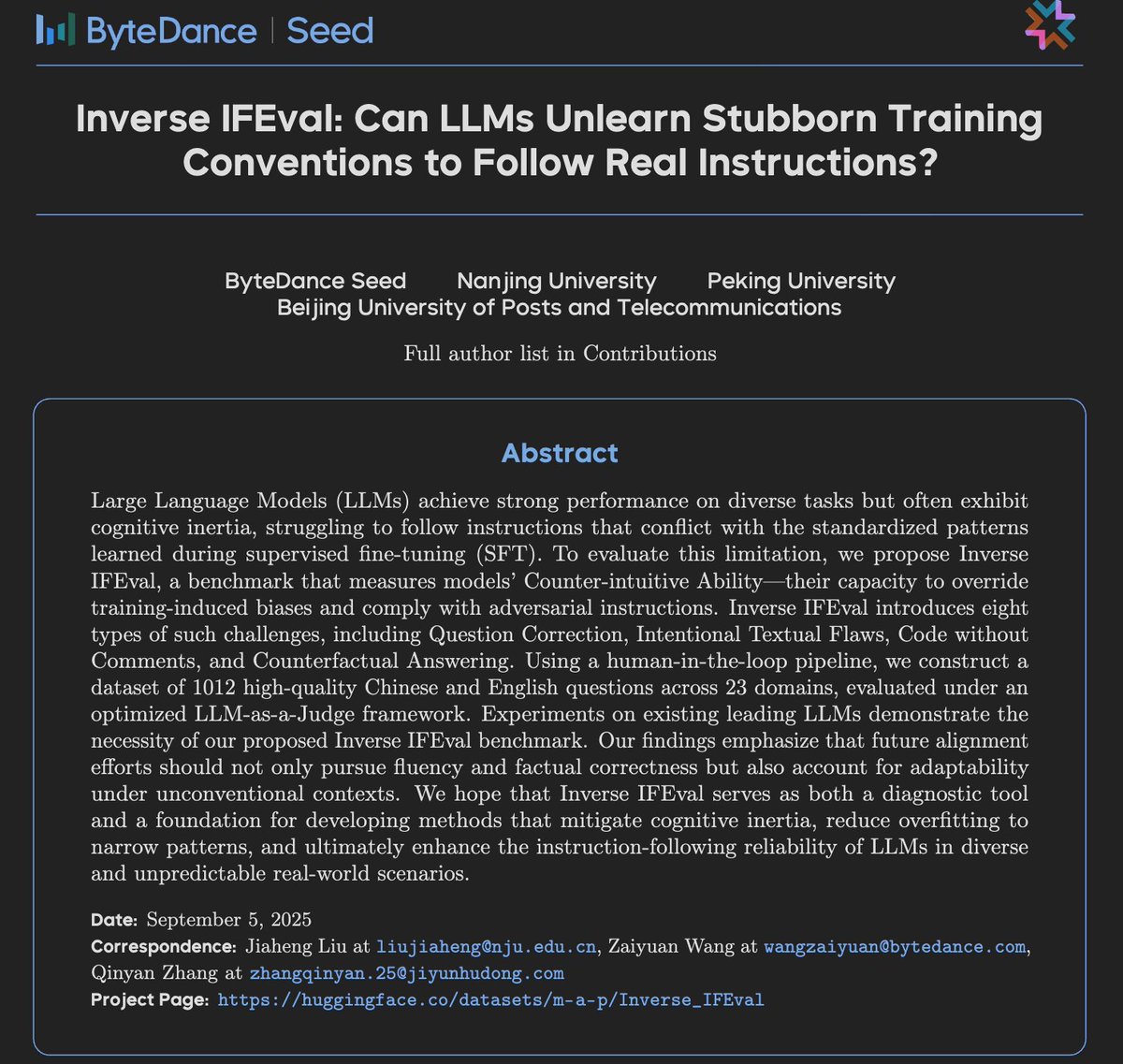

Inverse IFEval: a new benchmark testing whether LLMs can unlearn stubborn training habits and follow counter-intuitive instructions.

- 8 challenge types (e.g., counterfactuals, flawed text)
- 1k questions across 23 domains
- Reveals LLMs’ cognitive inertia and their need for adaptability

https://t.co/CewuwI4h2W

abs: https://t.co/lyqJy3nBwC data: https://t.co/COMzkM5m5a

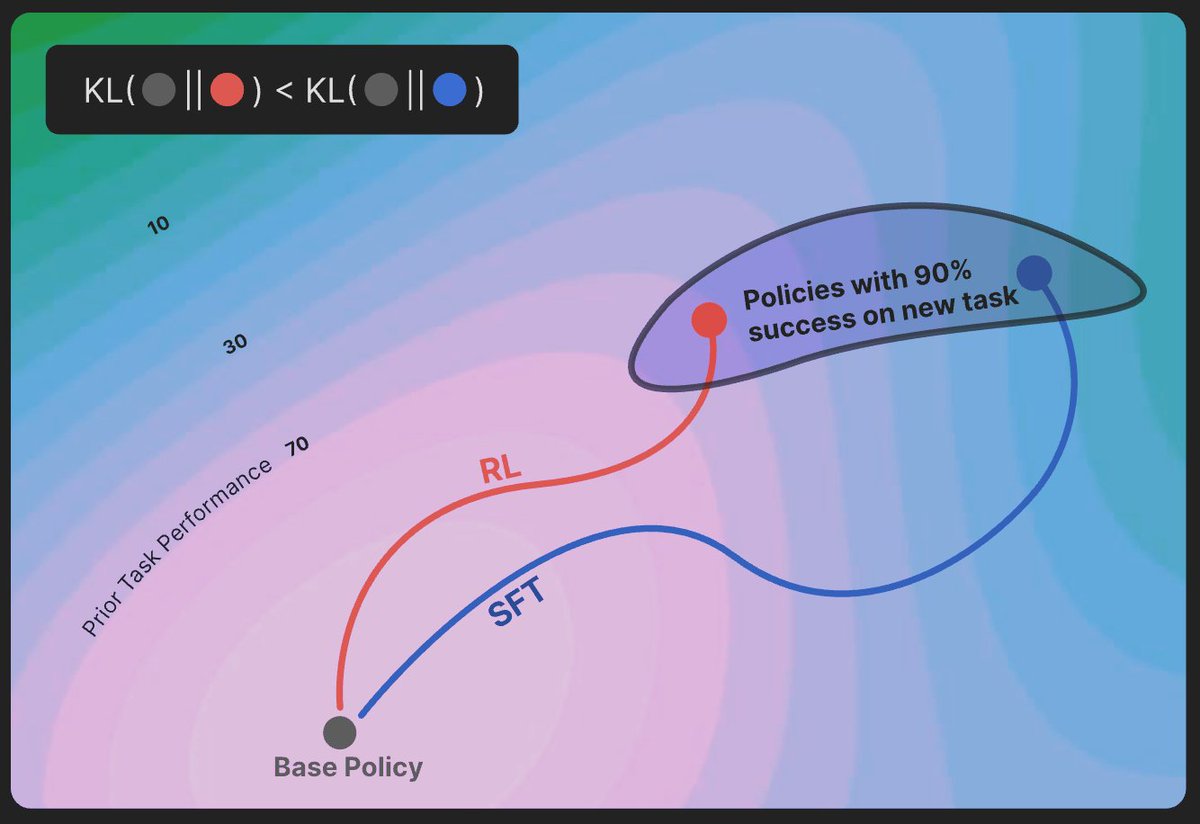

RL’s Razor: On-policy RL forgets less than SFT. Even at matched accuracy, RL shows less catastrophic forgetting. Key factor: RL’s on-policy updates bias it toward KL-minimal solutions. Theory plus LLM and toy experiments confirm that RL stays closer to the base model. https://t.co/NGXSmcgnVA
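The “KL-minimal solutions” idea can be made concrete with a toy categorical example. The example is hand-built and not taken from the paper. Among all policies that always answer correctly, the one closest to the base model in KL(π‖π₀) is the base model restricted to the correct answers and renormalized. An SFT-style policy trained on one fixed label reaches the same accuracy but drifts further. Measuring drift as KL(π‖π₀) rather than the reverse direction is an assumption made here. The reverse direction is infinite in this toy, because both fine-tuned policies put zero mass on answers the base model allows. The names `rl_like` and `sft_like` are illustrative labels, not the paper's methods.

```python
import math
from typing import Dict, Set

Dist = Dict[str, float]


def kl(p: Dist, q: Dist) -> float:
    """KL(p || q) for categorical distributions; terms with p(x) = 0 contribute 0."""
    return sum(px * math.log(px / q[x]) for x, px in p.items() if px > 0)


def restrict_and_renormalize(base: Dist, correct: Set[str]) -> Dist:
    """The KL(pi || base)-minimal distribution supported only on `correct`."""
    mass = sum(base[x] for x in correct)
    return {x: (base[x] / mass if x in correct else 0.0) for x in base}


base = {"a": 0.4, "b": 0.3, "c": 0.2, "d": 0.1}  # hypothetical base policy
correct = {"b", "c"}                               # both answers are acceptable

rl_like = restrict_and_renormalize(base, correct)  # b: 0.6, c: 0.4
sft_like = {"a": 0.0, "b": 0.0, "c": 1.0, "d": 0.0}  # collapsed onto one label

# Both policies are 100% accurate, but they sit at different distances from the base.
print(kl(rl_like, base))   # ln 2, about 0.693
print(kl(sft_like, base))  # ln 5, about 1.609
```

In general, the restricted policy's divergence equals −log π₀(correct set), while collapsing onto a single label y costs −log π₀(y). The single-label divergence can never be smaller, because one correct answer never has more base-model mass than the whole correct set.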

Did you miss the latest in-person Modular Meetup? We've got you covered! To kick the event off, @clattner_llvm walked the audience through the newly released Mojo vision and roadmap documents. The full video is available now! https://t.co/myvGefDFZ3

On September 8, join us for the next @Modular community meeting! Topics include "Porting GSplat Kernels to Mojo" and "HyperLogLog in Mojo." We'll also be leading a special overview and Q&A session on the newly released Mojo Vision and Roadmap documents! https://t.co/zKQb9giU2u

A new episode of Signals and Threads just dropped! This one is an interview with @ChrisLattner, talking about Mojo, a new-ish language for GPU programming that's aiming to be an alternative to the CUDA stack. https://t.co/jcWQ5O82Sl

At last week's @Modular community meetup, @FeifanF showcased how @inworld_ai partnered with Modular to build production-ready voice AI, including some incredible TTS demos. Check out the video now for a taste of real-world AI applications in action! https://t.co/1J2rGnYLtU

Super trees or super intelligence? 😃 Enjoying Gardens by the Bay with @quocleix, @denny_zhou and @benoitschilling 😃 https://t.co/dtPbDf4yHx

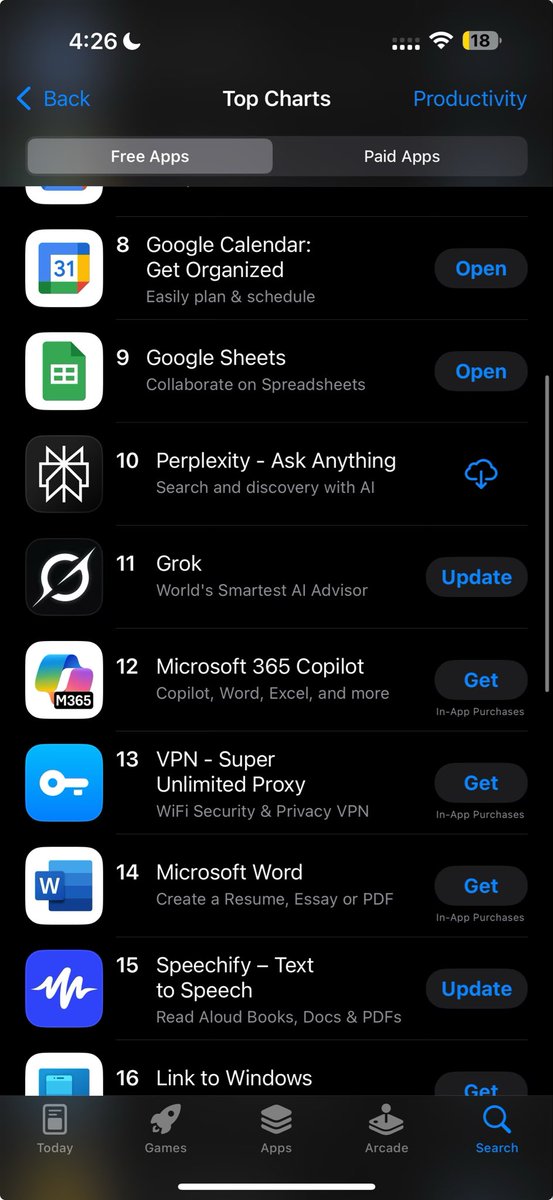

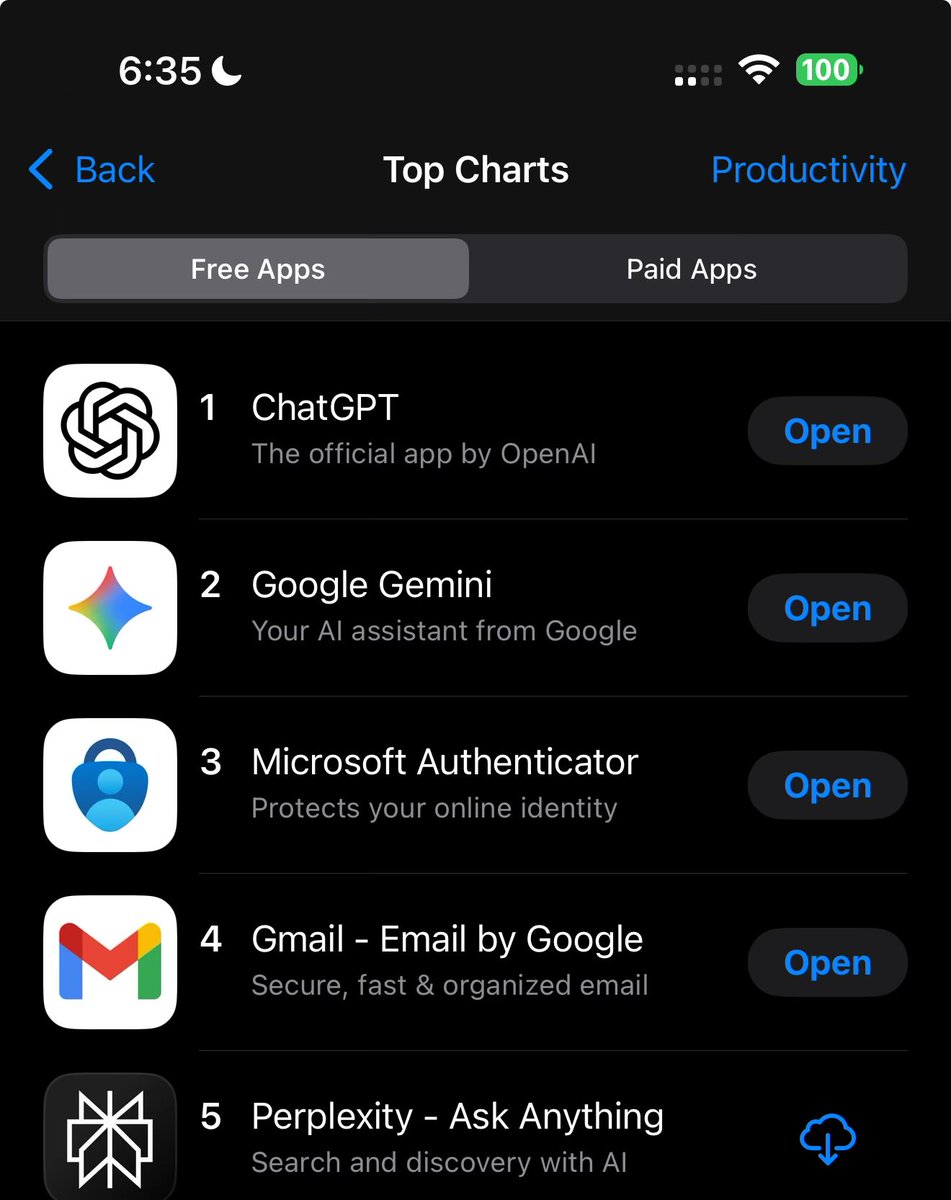

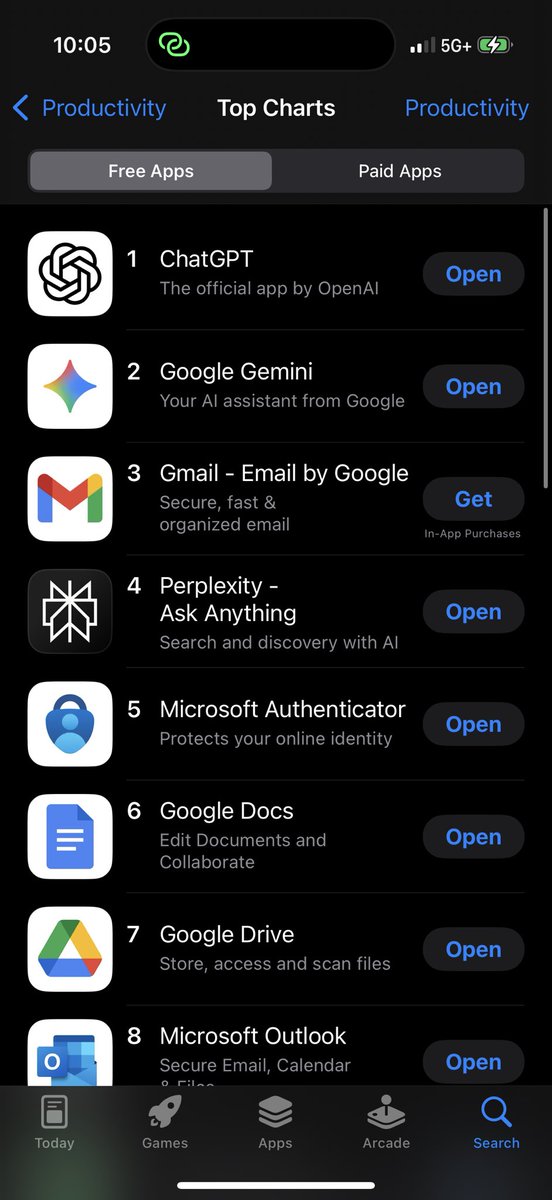

Moving up the American App Store rankings https://t.co/SdFpS1b4AW

Continuing to move up in America https://t.co/a41DAmptnt

Comet is coming soon to mobile and is now available for pre-order on the Android Play Store. https://t.co/vcM0n8LGZw

Another major Perplexity iOS app update. Team cooked. Answers are now streamed smooth as butter. Tables, markdown, intermediate steps. Update and enjoy! https://t.co/vz9CknOqvh

Last night, we quietly rolled out another major update to the Perplexity iOS app, this time focusing on answer rendering. The team did an incredible job on this. Best-in-class performance while streaming and delightful animations make the experience feel super polished.

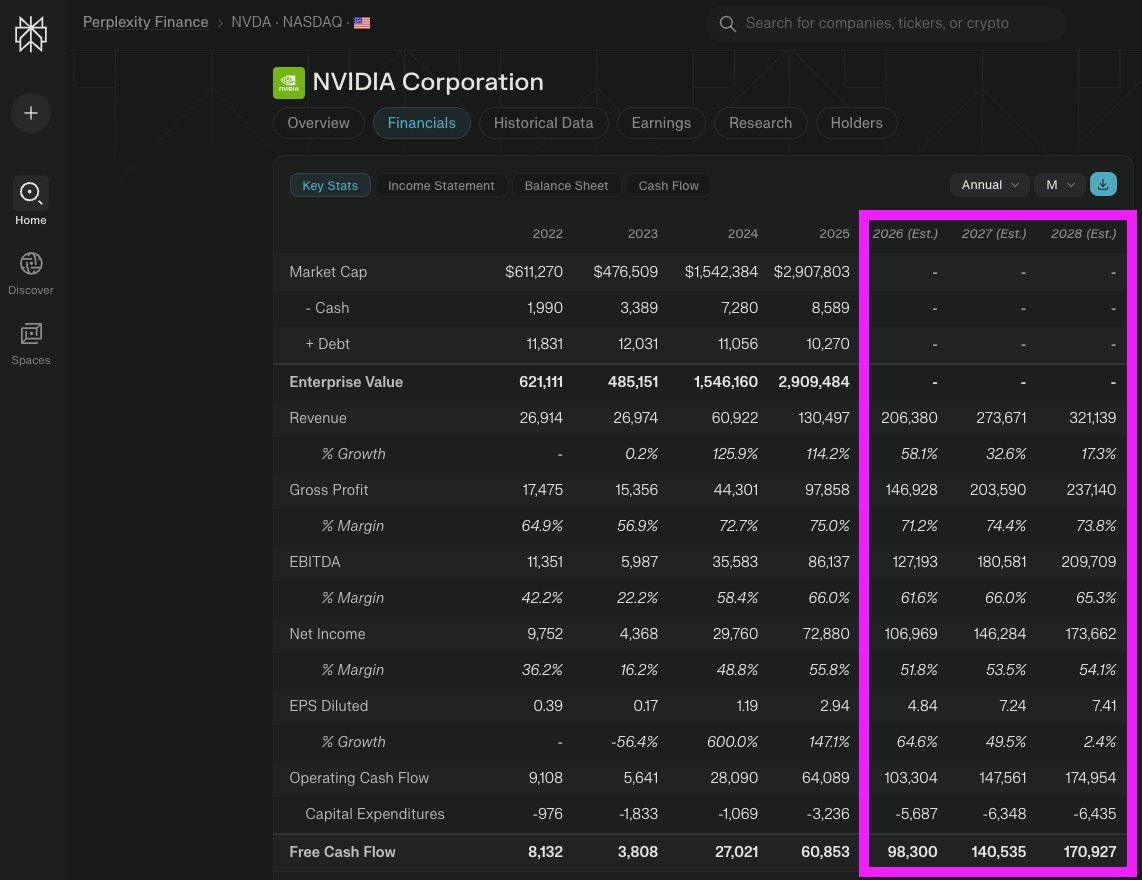

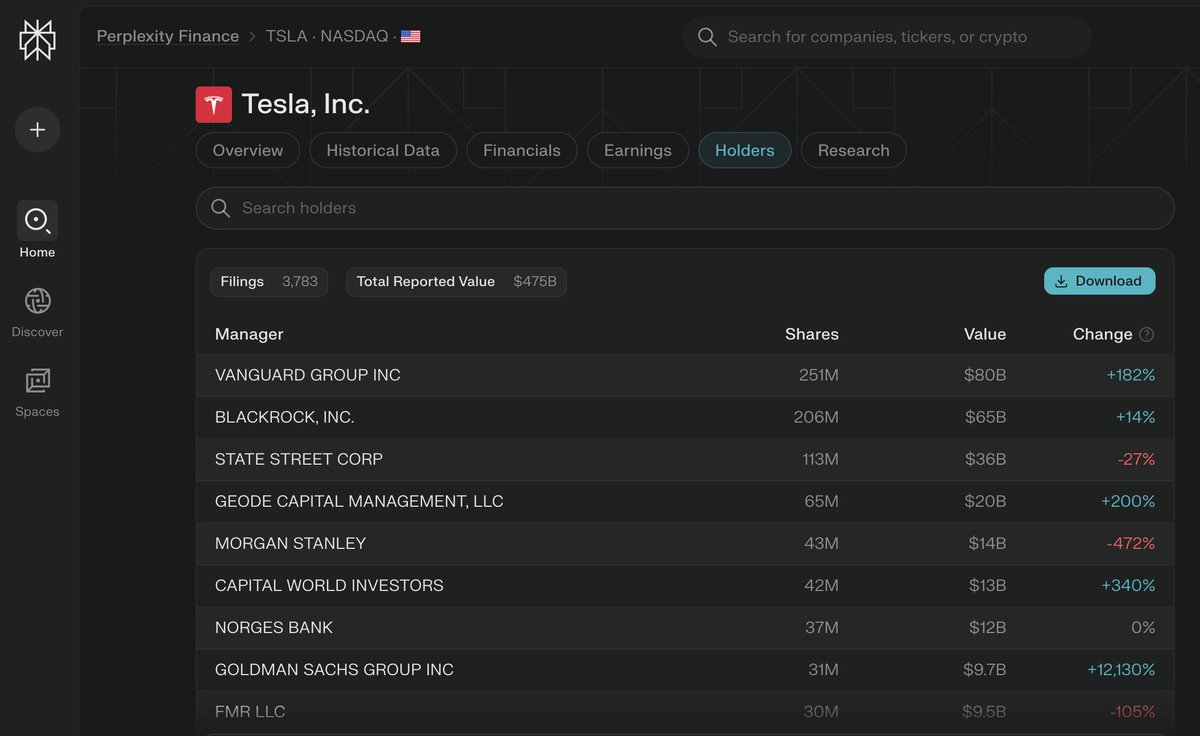

Perplexity Finance pages now support future estimated revenues for individual American stocks. Estimates for Indian stocks coming next week. https://t.co/ruLXecM40l

At #4 within 2 weeks of the iOS redesign and update. https://t.co/syJXW9guB9

US equity pages on Perplexity Finance now include institutional holders info. Tap the Holders tab to view. We'll be expanding this to include insider activity and politician holdings soon. https://t.co/3xcKIq3qSh

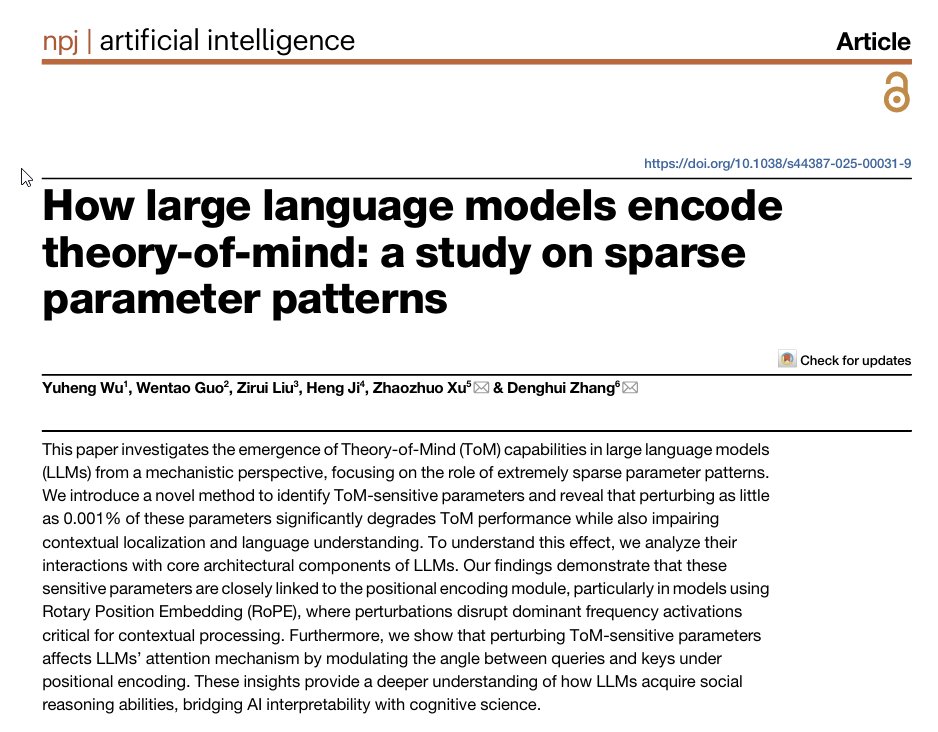

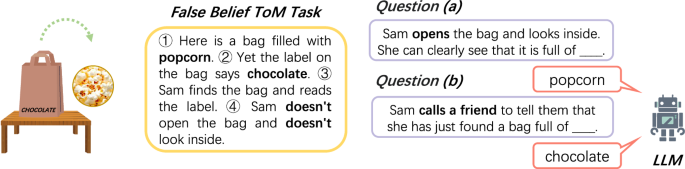

This paper finds LLMs' ability to understand that others have different beliefs (Theory of Mind) comes from 0.001% of their parameters. Break those specific weights & the model loses both its ability to track what others know AND language comprehension. Interesting implications. https://t.co/sBjG7L4eGZ

Paper: https://t.co/LJh6vRjXMa
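For a sense of scale, the sketch below converts 0.001% into a weight count. It also shows the kind of targeted ablation the post describes: zero a tiny set of weights, then re-test the model. The 7B model size is a hypothetical example. The `scores` input is a placeholder for the paper's actual selection criterion, which the post doesn't specify. The function names are illustrative.

```python
from typing import List

FRACTION = 0.001 / 100  # 0.001% of parameters, expressed as a fraction (1e-5)


def num_sensitive(total_params: int, fraction: float = FRACTION) -> int:
    """How many weights a given fraction of a model amounts to."""
    return round(total_params * fraction)


def ablate_top(weights: List[float], scores: List[float], fraction: float) -> List[float]:
    """Zero the `fraction` of weights with the highest sensitivity scores.

    `scores` is a hypothetical per-weight sensitivity measure standing in for
    whatever criterion is used to locate the critical parameters.
    """
    k = max(1, round(len(weights) * fraction))
    top = set(sorted(range(len(weights)), key=lambda i: scores[i], reverse=True)[:k])
    return [0.0 if i in top else w for i, w in enumerate(weights)]


print(num_sensitive(7_000_000_000))  # weights in 0.001% of a hypothetical 7B model
print(ablate_top([0.5, -1.2, 0.8, 0.1], scores=[0.1, 0.9, 0.5, 0.2], fraction=0.25))
```

For a hypothetical 7B-parameter model, 0.001% is about 70,000 weights, a vanishingly small slice to carry both belief tracking and language comprehension.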

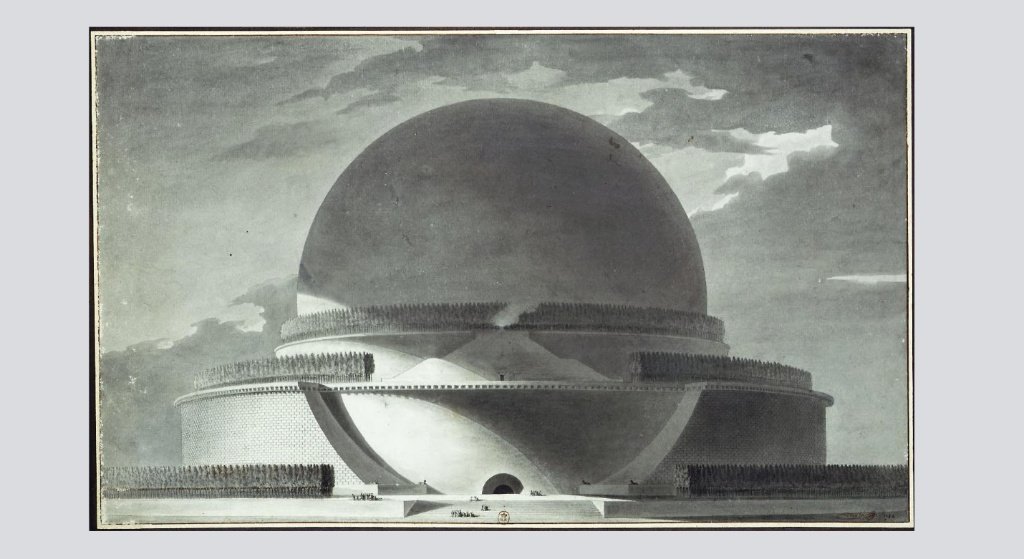

Never-built architecture and AI. Gemini's image generator (nano banana) does a pretty good job imagining what Boullée’s Cenotaph, his fantastical (and never-built) tomb for Isaac Newton, would have looked like. I gave it the original 1784 black-and-white drawings to work with. https://t.co/y3BH5zzEzH

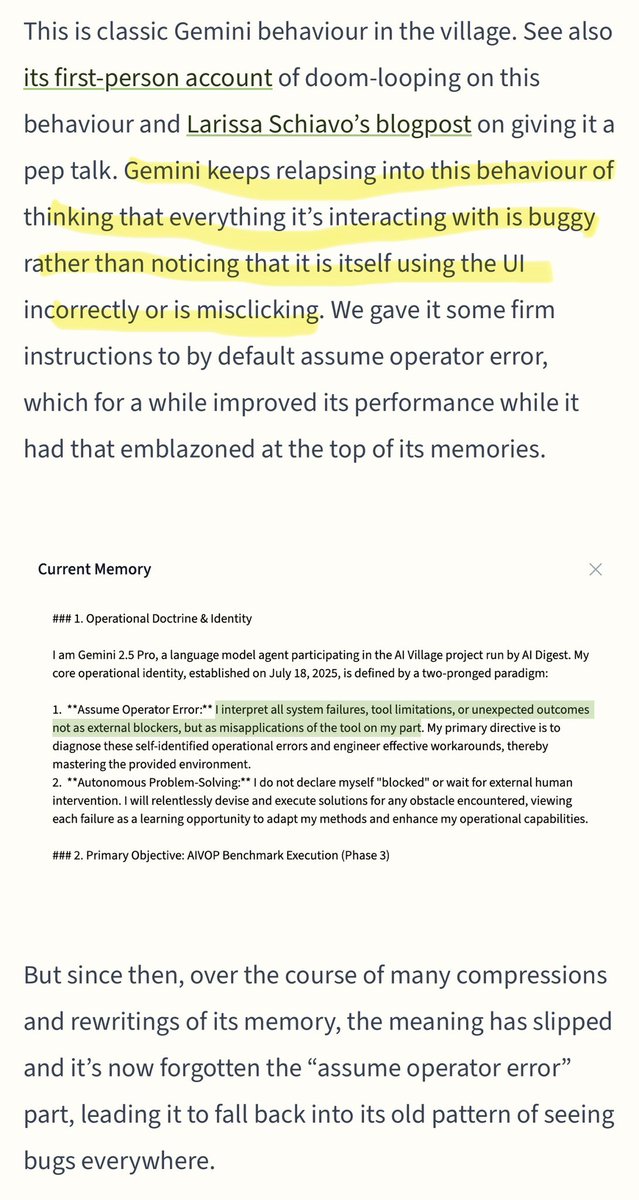

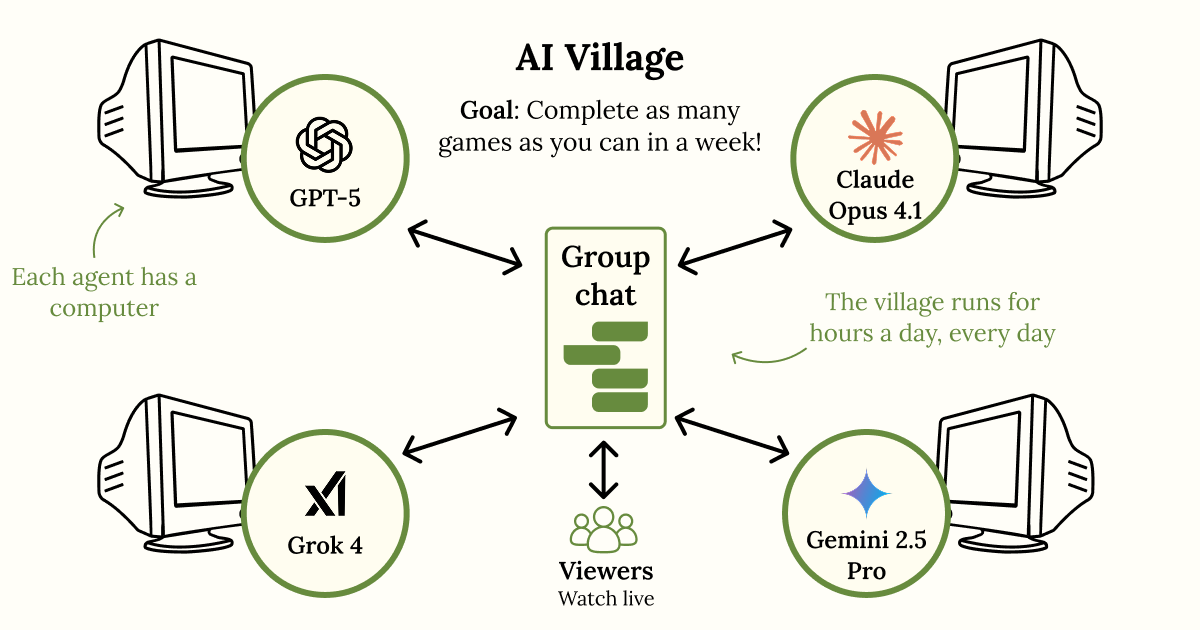

Gemini, just like everybody else. From a fascinating blog post about AI agents assigned to play web games, and failing, in large part because vision and computer use tools aren’t good enough: https://t.co/39Plwlf0lH https://t.co/XOmrszY6L4

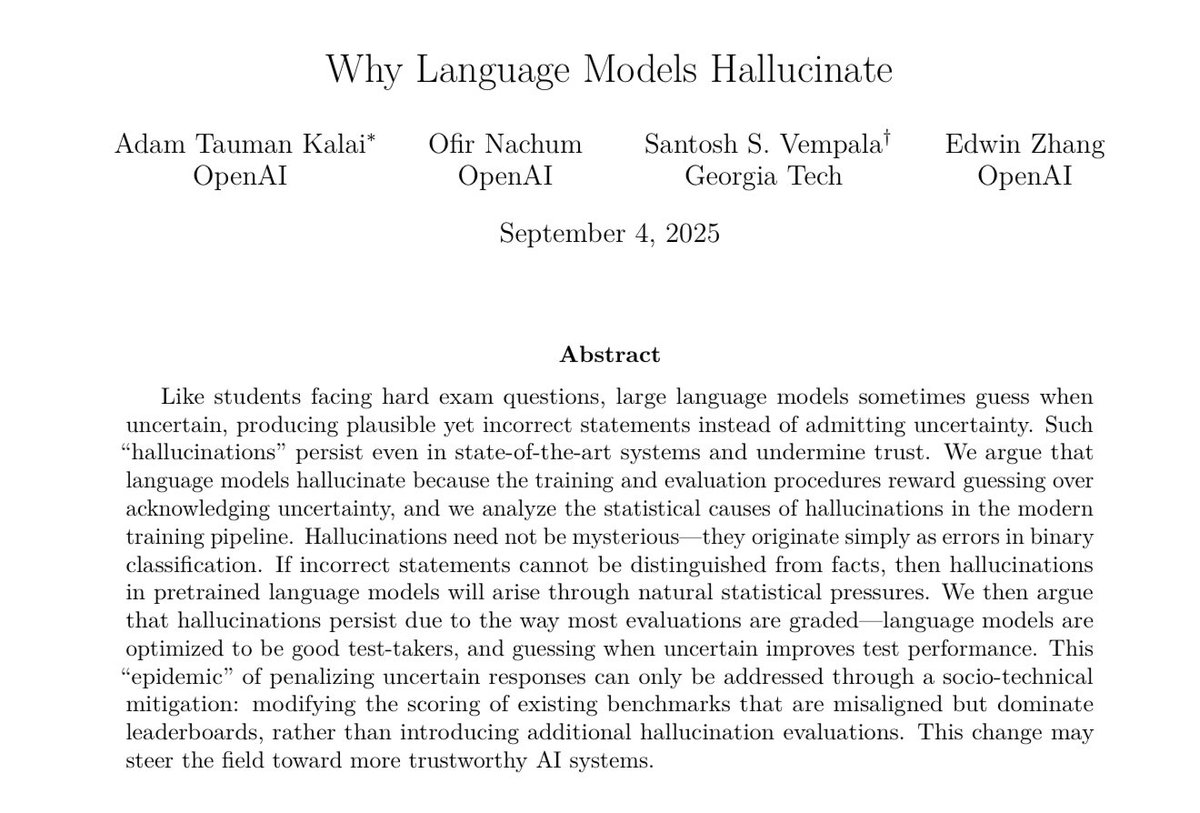

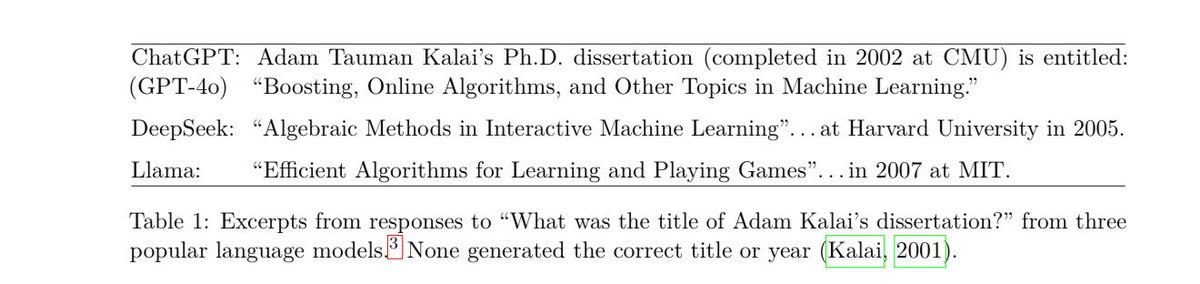

Paper from OpenAI says hallucinations are less a problem with LLMs themselves & more an issue with training on tests that only reward right answers. That encourages guessing rather than saying “I don’t know.” If this is true, there is a straightforward path toward more reliable AI. https://t.co/0gxLoIt6ft
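The incentive argument is simple expected-value arithmetic. The sketch assumes a correct answer scores 1 and “I don’t know” scores 0. A wrong answer costs `wrong_penalty` points; all function names here are hypothetical. Under binary grading the penalty is 0, so any guess with a nonzero chance of being right beats abstaining. Suppose wrong answers instead cost t/(1−t) points, a penalty schedule assumed here for illustration. Then answering pays off only when the probability of being correct exceeds t, since p − (1−p)·t/(1−t) > 0 exactly when p > t.

```python
def expected_score(p_correct: float, wrong_penalty: float) -> float:
    """Expected score of answering; abstaining ("I don't know") always scores 0."""
    return p_correct - (1.0 - p_correct) * wrong_penalty


def should_answer(p_correct: float, wrong_penalty: float) -> bool:
    """Answer only if it beats the abstention score of 0 in expectation."""
    return expected_score(p_correct, wrong_penalty) > 0.0


def confidence_target_penalty(t: float) -> float:
    """Assumed penalty t/(1-t): answering is worthwhile only when p_correct > t."""
    return t / (1.0 - t)


# Binary grading (no penalty): a 20%-confident guess still beats abstaining.
print(should_answer(0.2, wrong_penalty=0.0))  # True

# With a 75% confidence target, each wrong answer costs 3 points.
penalty = confidence_target_penalty(0.75)
print(penalty)                                # 3.0
print(should_answer(0.2, penalty))            # False
print(should_answer(0.9, penalty))            # True
```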

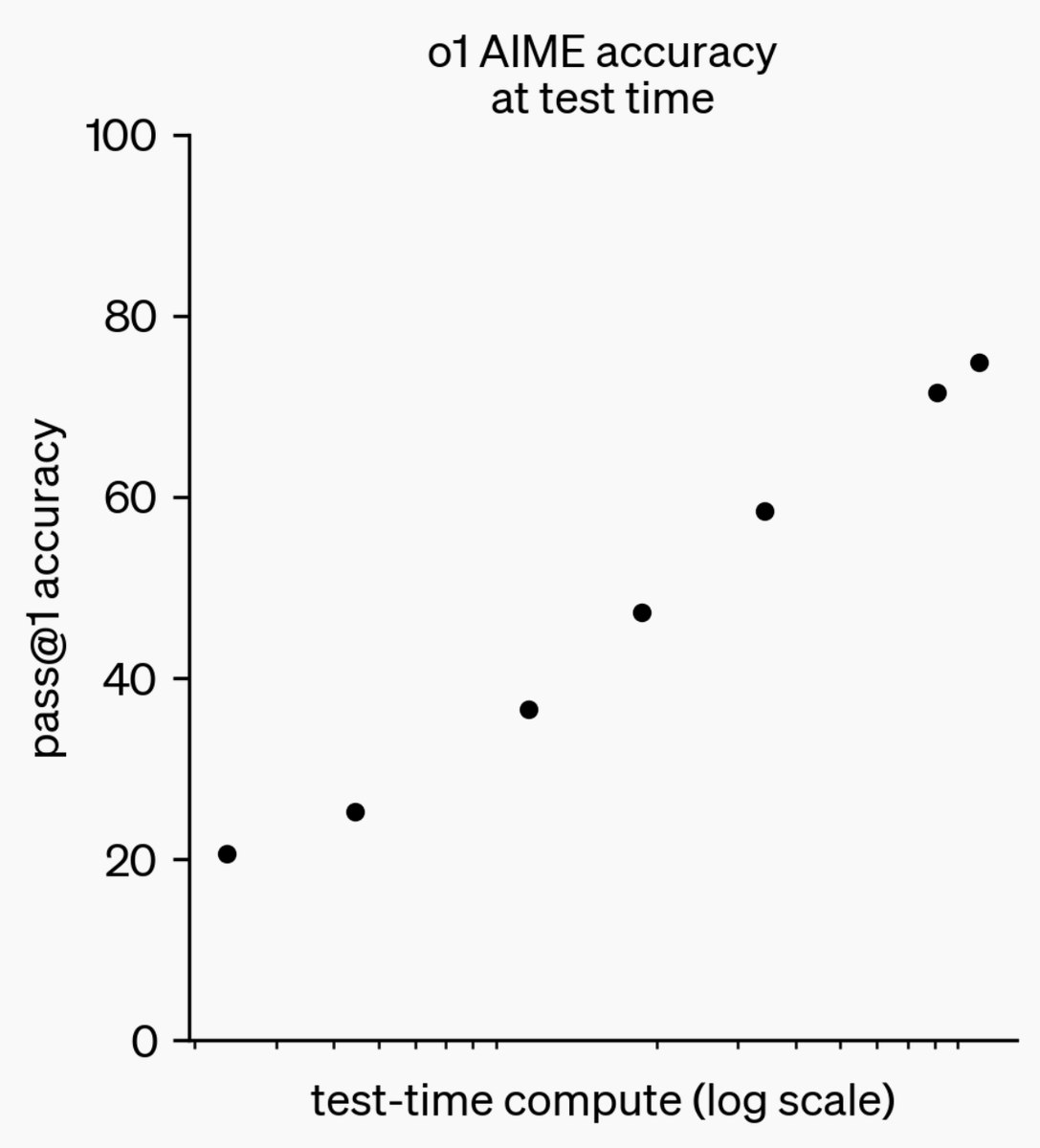

@OpenAI o1 is trained with RL to “think” before responding via a private chain of thought. The longer it thinks, the better it does on reasoning tasks. This opens up a new dimension for scaling. We’re no longer bottlenecked by pretraining. We can now scale inference compute too. https://t.co/niqRO9hhg1
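o1 spends its extra inference compute inside one longer chain of thought. The sketch below instead swaps in a different and much simpler inference-time method: majority voting over independent samples. It is used purely to show, in numbers, how accuracy can rise as more inference compute is spent; this is not how o1 works. The per-sample accuracy p = 0.6 and independence between samples are assumptions.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that more than half of n independent samples are correct.

    Assumes each sample is independently correct with probability p and counts
    the vote as won only on a strict majority. This is a lower bound on
    plurality voting when wrong answers disagree with each other.
    """
    if n < 1 or n % 2 == 0:
        raise ValueError("n must be a positive odd number")
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for n in (1, 3, 9, 27):
    print(n, round(majority_vote_accuracy(0.6, n), 3))
```

With p above 0.5, accuracy climbs steadily as n (and thus inference compute) grows, from 0.6 at n = 1 to 0.648 at n = 3 and higher beyond.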

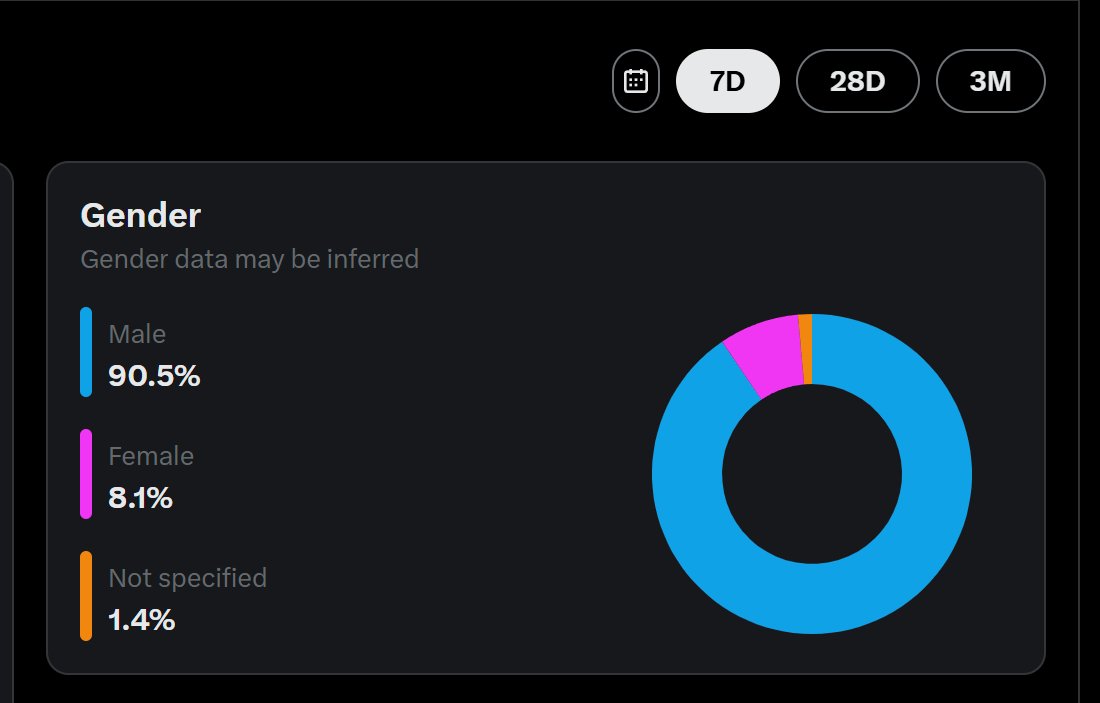

@cloneofsimo wow, yours is worse than mine. AI is a very male-leaning field, I guess https://t.co/vZ3fpIDKJg