Your curated collection of saved posts and media

@yao_ej24569 @WesEarnest Have you tried the v2 TUI? It's being finalized next week. If your Hermes is updated, run `hermes --tui`.

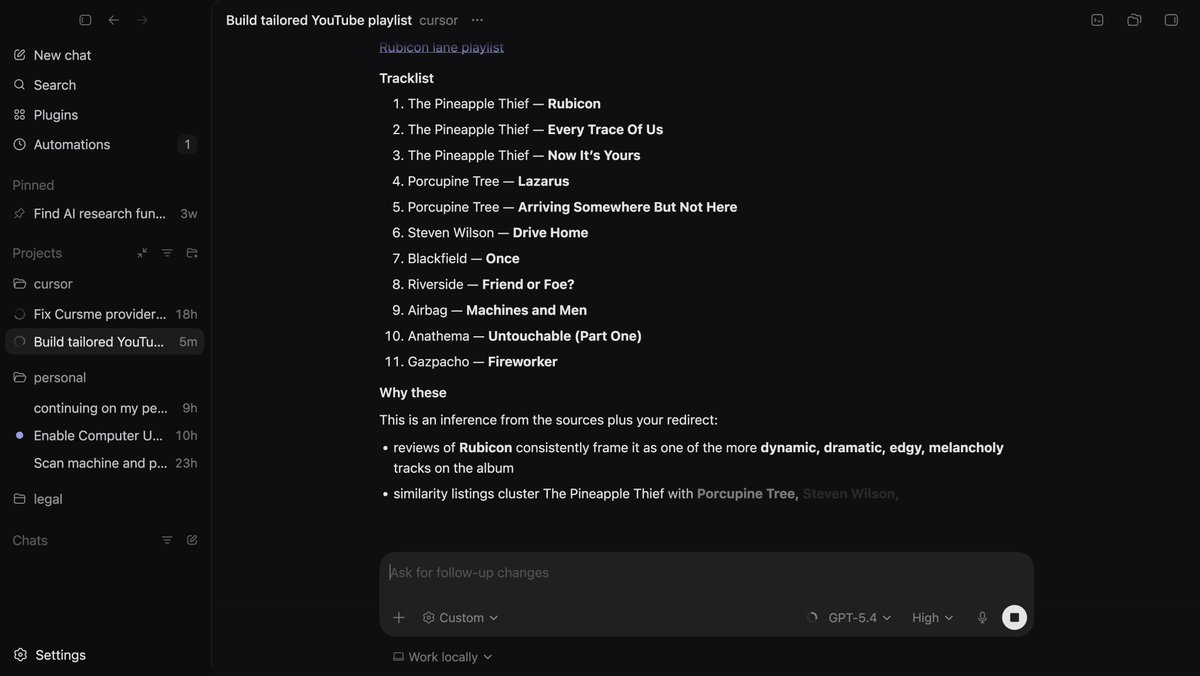

Holy shoot, I just tried computer-use with Codex and it's mind melting. I see where the OpenClaw investment is going for them. Nothing comes close: it dug into my browser history, figured out my taste in music, and set up a playlist. Most exciting development in 2026, ngl. https://t.co/PZulO0bfWL

🚀 Artifact Preview v3.0 just shipped for Hermes Agent! Just like Claude. Your agent writes HTML/CSS/JS → the browser opens automatically 🚀 → you see a polished, live, interactive preview. No manual steps. Zero friction. ⚡ https://t.co/b5SywJKty2 cc @NousResearch
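
A minimal sketch of the write-then-open step, assuming the agent hands over a finished HTML string. `preview_artifact` is a hypothetical helper, not Hermes Agent's code, and it does no live reloading; the `open_fn` parameter exists only so the browser call can be swapped out in tests.

```python
import tempfile
import webbrowser
from pathlib import Path
from typing import Callable


def preview_artifact(html: str, open_fn: Callable[[str], bool] = webbrowser.open) -> Path:
    """Write agent-generated HTML to a temp file and open it in a browser.

    Hypothetical helper for illustration; not Hermes Agent's Artifact Preview.
    """
    # Persist the artifact so the browser can load it from a file:// URL.
    tmp = tempfile.NamedTemporaryFile("w", suffix=".html", delete=False, encoding="utf-8")
    with tmp:
        tmp.write(html)
    path = Path(tmp.name)
    # Hand the file URL to the browser (injectable so tests need no real browser).
    open_fn(path.as_uri())
    return path
```

Calling `preview_artifact("<h1>Hello</h1>")` writes a temporary `.html` file and passes its `file://` URL to Python's standard `webbrowser.open`.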

HY-World-2.0 Demo is now live on @huggingface Spaces for 3D world reconstruction and simulation with Gradio and Server modes. > 3D reconstruction, Gaussian splats > Camera poses, depth maps, normals > Rerun (multimodal data visualization) https://t.co/MofKZ6OGPX

This is HUGE. Ollama now supports Hermes AI Agents. A fully local self-improving AI Agent that runs for you 24/7 for free. https://t.co/GfsyY3kyDL

// Self-Evolving Agent Protocol // One of the more interesting papers I read this week. (bookmark it if you are an AI dev) The paper introduces Autogenesis, a self-evolving agent protocol where agents identify their own capability gaps, generate candidate improvements, validate them through testing, and integrate what works back into their own operational framework. No retraining, no human patching, just an ongoing loop of assessment, proposal, validation, and integration. Why it's worth reading this paper: Static agents age quickly. As deployment environments change and new tools arrive, the agents that survive will be the ones that can safely rewrite themselves. Autogenesis is part of a growing wave of self-improving agent systems, alongside work like Meta-Harness and the Darwin Gödel Machine line, and it's one of the cleaner protocol-level takes on continual self-improvement so far. Paper: https://t.co/3aj9LLjSbk Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
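
The assess, propose, validate, integrate loop can be sketched in a few lines. This is a toy illustration under heavy assumptions: capabilities are plain functions, tests are input/output pairs, and `propose` is a caller-supplied stand-in for whatever generates candidates (an LLM, in practice). None of these names come from the Autogenesis paper.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List, Tuple

# Hypothetical toy model: a capability is a function int -> int,
# and its tests are (input, expected_output) pairs.
Tool = Callable[[int], int]
Cases = List[Tuple[int, int]]


@dataclass
class Agent:
    # The agent's "operational framework" is just a dict of named tools here.
    tools: Dict[str, Tool] = field(default_factory=dict)


def assess(agent: Agent, tests: Dict[str, Cases]) -> List[str]:
    """Assessment: names of capabilities that are missing or fail their tests."""
    gaps = []
    for name, cases in tests.items():
        tool = agent.tools.get(name)
        if tool is None or any(tool(x) != y for x, y in cases):
            gaps.append(name)
    return gaps


def validate(tool: Tool, cases: Cases) -> bool:
    """Validation: accept a candidate only if it passes every test case."""
    return all(tool(x) == y for x, y in cases)


def evolve(agent: Agent, tests: Dict[str, Cases],
           propose: Callable[[str], Iterable[Tool]], max_rounds: int = 3) -> Agent:
    """Repeat assess -> propose -> validate -> integrate until no gaps remain."""
    for _ in range(max_rounds):
        gaps = assess(agent, tests)
        if not gaps:
            break
        for name in gaps:
            for candidate in propose(name):           # proposal
                if validate(candidate, tests[name]):  # validation
                    agent.tools[name] = candidate     # integration
                    break
    return agent
```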

Make neural network cells inside a “Digital Petri Dish” fight for control and dominance in a web browser tab. https://t.co/t8N6CIhvze https://t.co/A5SSTvPJBu

What happens when you put competing neural networks in a Petri Dish and start changing the rules while they adapt? Last year we released Petri Dish NCA, where neural nets are the organisms that learn during simulation. Today we're releasing Digital Ecosystems: a browser-based pl

LiteParse is the best model-free, open-source document parser for AI agents. It now gets a first-class landing page on our website 💫 Our company mission is building the world's best agentic document processing platform, and liteparse is the central pillar behind our OSS efforts. It's blazing fast (and getting faster soon!), supports 50+ file formats, and is one-shot installable as an agent skill. Webpage: https://t.co/mVwma5QOCj Come check it out: https://t.co/JNER0mVcB8

@PsyPost They wouldn’t be surprised if they spent more time learning how LLMs work. This is not a mystery. It’s the result of sharing high percentages of the same training data and of excessive RL/post-training focused on the same benchmarks, known as eval-maxxing.

@icodeagents That’s not a new development. RL works by collapsing the output space: it effectively shifts distribution mass and narrows output diversity. You also lose explainability. After introducing a new bias, we can’t tell whether a behaviour is due to RL or to pre-training.
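
A toy illustration of the mass-shifting point, assuming the standard KL-regularized RL objective, whose optimal policy is the reference distribution reweighted by exp(r/β) and renormalized. The three-output distribution and reward below are made up; when the reward favours an already-likely output, lowering β (a weaker KL penalty) pushes mass onto it and entropy falls.

```python
import math
from typing import List


def tilt(p_ref: List[float], reward: List[float], beta: float) -> List[float]:
    """Assumed KL-regularized RL optimum: p_ref * exp(r / beta), renormalized."""
    w = [p * math.exp(r / beta) for p, r in zip(p_ref, reward)]
    z = sum(w)
    return [x / z for x in w]


def entropy(p: List[float]) -> float:
    """Shannon entropy in nats; zero-probability outputs contribute nothing."""
    return -sum(x * math.log(x) for x in p if x > 0)


p_ref = [0.5, 0.3, 0.2]   # made-up "pre-trained" distribution over 3 outputs
reward = [1.0, 0.0, 0.0]  # made-up reward that prefers output 0

print(f"reference: H={entropy(p_ref):.3f} nats")
for beta in (10.0, 1.0, 0.1):
    p = tilt(p_ref, reward, beta)
    print(f"beta={beta:>4}: p={[round(x, 3) for x in p]}, H={entropy(p):.3f} nats")
```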

LiteParse hit 4.3K+ GitHub stars in a few weeks. Today it officially joins the LlamaIndex ecosystem, with its own page at https://t.co/1tdQbEer9H. ~500 pages in 2 sec. 50+ formats. Zero cloud dependency. Already powering agents in Claude Code, Cursor, and production pipelines.

@norpadon The tensor engine was first implemented inside SN3 (before it was called Lush) in 1992 at Bell Labs by Léon Bottou and me. The naming convention has survived to this day in PyTorch and other libraries. The naming of the tensor operations was reused in EBlearn (a C++ deep learning library written by Pierre Sermanet and me, with some help from @soumithchintala). It was recycled in Torch5 and Torch7, which were written largely by Ronan Collobert and my students Clément Farabet and @koraykv. Clément and Koray had been brought up on Lush (the open version of SN) and knew the nomenclature. Then, Soumith used the same conventions in PyTorch.

Since Anthropic publish their system prompts we can generate a diff between Claude Opus 4.6 and 4.7 - here are my notes on what's changed https://t.co/IQHuvLGmwO
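
Generating such a diff takes only Python's standard `difflib`. A minimal sketch, assuming both prompts are already saved as text; the default file labels are hypothetical placeholders.

```python
import difflib


def prompt_diff(old: str, new: str,
                old_name: str = "opus-4.6.md", new_name: str = "opus-4.7.md") -> str:
    """Return a unified diff between two system-prompt texts.

    The default labels are hypothetical placeholders for the two prompt files.
    """
    lines = difflib.unified_diff(
        old.splitlines(keepends=True),
        new.splitlines(keepends=True),
        fromfile=old_name,
        tofile=new_name,
    )
    return "".join(lines)
```

Lines prefixed with `-` exist only in the older prompt and lines prefixed with `+` only in the newer one.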

Thoth v3.15.0 - Full X API integration. The X tool gives you 13 Twitter API v2 endpoints behind OAuth 2.0 PKCE. Timeline reads, search, post, reply, retweet, like, with automatic rate limit backoff and Free/Basic/Pro tier gating so you never hit a 429 you can't recover from. Local-first, open source, yours to run: https://t.co/MjHuVUVvpY https://t.co/2AoE9k9XLR
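
Two pieces of that description are standard enough to sketch. PKCE (RFC 7636) has the client send a SHA-256 hash of a random verifier in the authorization request and reveal the verifier only when exchanging the code for a token. The backoff helper is a hypothetical policy, not Thoth's actual logic: it waits until a server-supplied reset time when one is available (X's API docs describe an `x-rate-limit-reset` header in epoch seconds for this) and otherwise falls back to capped exponential backoff. Tier gating is not shown.

```python
import base64
import hashlib
import secrets
from typing import Optional, Tuple


def s256_challenge(verifier: str) -> str:
    """PKCE S256: BASE64URL(SHA256(verifier)) with '=' padding stripped (RFC 7636)."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def make_pkce_pair() -> Tuple[str, str]:
    """Create a (code_verifier, code_challenge) pair."""
    verifier = secrets.token_urlsafe(64)  # 86 URL-safe chars, within the 43-128 limit
    return verifier, s256_challenge(verifier)


def backoff_delay(attempt: int, reset_epoch: Optional[float], now: float,
                  base: float = 1.0, cap: float = 900.0) -> float:
    """Seconds to wait after an HTTP 429 (hypothetical policy, not Thoth's).

    Prefer the server's reset timestamp; otherwise use capped exponential backoff.
    """
    if reset_epoch is not None:
        return max(0.0, reset_epoch - now)
    return min(cap, base * 2 ** attempt)
```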

Everyone using @openclaw or @NousResearch Hermes should install this as a skill. Full browser control comparable to Claude's Chrome MCP. No API keys required. Gave my agent a 10k budget and asked it to add all the parts required for a decent AI rig to my eBay cart. It one-shotted it.

Introducing: Browser Harness. A self-healing harness that can complete virtually any browser task. ♞ We got tired of browser frameworks restricting the LLM. So we removed the framework. > Self-healing: edits helpers.py on the fly > Direct CDP: one websocket to Chrome > No fr
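
A minimal sketch of what "direct CDP" means, using only the standard library and assuming Chrome was launched with `--remote-debugging-port=9222`. Chrome lists its debuggable targets as JSON at `/json/list`, and each page target exposes a `webSocketDebuggerUrl`; every command sent over that socket is a JSON object with an integer `id`, a `method`, and `params`. The helper names are hypothetical and this is not Browser Harness's code; actually sending the messages needs a WebSocket client, which is left out.

```python
import itertools
import json
import urllib.request

# Hypothetical helpers; assumes Chrome runs with --remote-debugging-port=9222.
_next_id = itertools.count(1)


def page_websocket_url(port: int = 9222) -> str:
    """Return the WebSocket URL of the first page target Chrome lists at /json/list."""
    with urllib.request.urlopen(f"http://127.0.0.1:{port}/json/list") as resp:
        targets = json.load(resp)
    for target in targets:
        if target.get("type") == "page":
            return target["webSocketDebuggerUrl"]
    raise RuntimeError("no page target found")


def cdp_command(method: str, **params) -> str:
    """Serialize one CDP command; Chrome echoes the same id in its response."""
    return json.dumps({"id": next(_next_id), "method": method, "params": params})
```

For example, `cdp_command("Page.navigate", url="https://example.com")` produces a `Page.navigate` message; the reply carries the same `id`, which is how responses are matched to requests on the single socket.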

@DanFarfan @sundeep Building https://t.co/kiuZ7QXLzb used to cost $400 a day in API calls to grab 40,000 posts. On Monday it should drop to $40 a day to do the same.
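
Assuming the 40,000 posts are a daily volume, the per-post cost works out as:

```latex
\frac{\$400/\text{day}}{40{,}000\ \text{posts/day}} = \$0.01\ \text{per post}
\quad\longrightarrow\quad
\frac{\$40/\text{day}}{40{,}000\ \text{posts/day}} = \$0.001\ \text{per post}
```

That is a 10× reduction in cost per post.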

@TheStalwart There is a sort of file drawer bias: AI benchmarks that don’t meaningfully benchmark performance are dropped, but mostly because they are either 0 or 100. The whole point of benchmarks is to measure something about AI performance. Verisimilitude is a different matter, though.

@TheStalwart Having done a lot of work on measuring performance, I don’t think this is very common. There are very few tasks that AI can do where there is not an upward trajectory. You can find tasks that no AI can do, or where there is saturation, but otherwise you get improvement over time.

A downside with using VLMs to parse PDFs is guaranteeing that the output text is *correct* and output in the correct reading order. 1️⃣ Text correctness: making sure that digits, words, sentences are not hallucinated or dropped. 2️⃣ Reading Order: making sure that complex multi-layout pages are linearized into the right 1-d text order. We call this Content Faithfulness in ParseBench, our comprehensive document OCR benchmark for agents. We have 167k rules that measure digit/word/sentence-level correctness along with reading order correctness. It seems relatively table-stakes, but no parser gets this 100% right, and this means that the agent’s downstream decision-making is compromised. Come learn more about how this metric works in the video below, along with our full blog writeup, whitepaper, and website! Blog: https://t.co/57OHkx0pQW Paper: https://t.co/Ho2oH2xEAM Website: https://t.co/g0b0jsCynW
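
ParseBench's 167k rules are its own; the sketch below only illustrates the two failure modes named above with two hypothetical rule types. A digit rule checks that every number in the ground truth survives parsing (compared as a multiset, so `12` cannot be satisfied by `123`), and an order rule checks that reference snippets appear in sequence after whitespace normalization. Scoring as the fraction of rules that pass is an assumption, not ParseBench's formula.

```python
import re
from collections import Counter
from typing import List

# Hypothetical rule types for illustration, not ParseBench's actual rules.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def _norm(text: str) -> str:
    """Collapse runs of whitespace so line wrapping is not counted as an error."""
    return re.sub(r"\s+", " ", text).strip()


def digits_preserved(expected: str, output: str) -> bool:
    """Digit rule: every number in the ground truth appears in the output (as a multiset)."""
    missing = Counter(NUMBER.findall(expected)) - Counter(NUMBER.findall(output))
    return not missing


def reading_order_ok(snippets: List[str], output: str) -> bool:
    """Order rule: the snippets occur in the output in the given order."""
    text, pos = _norm(output), 0
    for snippet in snippets:
        needle = _norm(snippet)
        idx = text.find(needle, pos)
        if idx < 0:
            return False
        pos = idx + len(needle)
    return True


def faithfulness_score(expected: str, snippets: List[str], output: str) -> float:
    """Fraction of rules that pass (an assumed scoring scheme)."""
    results = [digits_preserved(expected, output), reading_order_ok(snippets, output)]
    return sum(results) / len(results)
```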

Let's talk content faithfulness. Four days ago, we launched ParseBench, the first document OCR benchmark for AI agents. Its most fundamental metric asks: did the parser capture all the text, in order, without making things up? We grade three failure modes with 167K+ rule-based

Terminal automation + e2e testing, solved. Now it's as simple as snapshot, click, type: wterm renders terminal-in-HTML, with every cell in the a11y tree; agent-browser automates pages via the a11y tree. Here's opencode in one browser driving Claude Code in another https://t.co/kuuy9E78c2

The quality of your thinking is a multiplier for the amount of progress you make at each iteration. But the dominant factor behind success is simply your iteration speed. Try more things and you win.

Nice paper from Google. And a great application of AI agents. Wearables capture a staggering amount of physiological signals every day. CoDaS is an AI co-data-scientist that turns raw wearable sensor data into clinically relevant biomarkers through an iterative loop of hypothesis generation, statistical analysis, adversarial validation, and literature-grounded reasoning with human oversight. Across 9,279 participant-observations, it surfaced 41 mental-health and 25 metabolic candidate biomarkers, including circadian instability features linked to depression (ρ = 0.252) and a cardiovascular fitness index linked to insulin resistance (ρ = -0.374). Why does it matter? Biomarker discovery is one of the slowest, most expert-bound workflows in medicine. An agentic system that can propose, test, and stress-test candidate biomarkers end-to-end changes the cadence of translational science and starts turning passive consumer sensor data into something clinicians can actually act on. Paper: https://t.co/jxoZARoI4G Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
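
The post doesn't say which correlation ρ denotes; assuming it is Spearman's rank correlation, here is how such a coefficient is computed: rank each variable, averaging ranks across ties, then take the Pearson correlation of the ranks. This is a from-scratch illustration, not CoDaS's pipeline, and it assumes neither input is constant (otherwise the denominator is zero).

```python
from typing import List, Sequence


def average_ranks(xs: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 0-based positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho (assumed meaning of the reported ρ): Pearson r of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5
```

Because only ranks matter, any monotone relationship (not just a linear one) yields ρ = 1 or ρ = -1, which suits skewed sensor-derived features.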

New paper from Apple: Attention to Mamba

NEW paper from Apple. Interesting idea: "Attention to Mamba". The paper introduces a two-stage recipe for cross-architecture distillation from Transformers into Mamba. Naive distillation collapses teacher performance. Their trick: first distill the transformer into a linearize

@norpadon The basic structure of a PyTorch tensor is also borrowed from SN/Lush: a storage (a flat array of numbers, for every numerical type) to which one or multiple tensor structures can point, so the same data can be accessed in multiple ways and shared.
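
The storage/view split can be sketched in a few lines of Python. This illustrates the idea only, not Lush's or PyTorch's implementation (PyTorch exposes the analogous pieces through `Tensor.stride()` and `Tensor.storage_offset()`): a view is just an offset plus sizes and strides over a shared flat array, so a transpose or a row slice is a new view rather than a copy, and a write through one view is visible through all of them.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Storage:
    """A flat array of numbers that several tensor views can share."""
    data: List[float]


@dataclass
class TensorView:
    """Illustrative 2-D view onto a Storage: an offset plus per-dimension sizes and strides."""
    storage: Storage
    offset: int
    size: Tuple[int, int]
    stride: Tuple[int, int]

    def _index(self, i: int, j: int) -> int:
        # Flat position = offset + i*stride0 + j*stride1; no data is copied.
        if not (0 <= i < self.size[0] and 0 <= j < self.size[1]):
            raise IndexError((i, j))
        return self.offset + i * self.stride[0] + j * self.stride[1]

    def __getitem__(self, ij: Tuple[int, int]) -> float:
        return self.storage.data[self._index(*ij)]

    def __setitem__(self, ij: Tuple[int, int], value: float) -> None:
        self.storage.data[self._index(*ij)] = value

    def t(self) -> "TensorView":
        """Transpose by swapping sizes and strides; shares the same storage."""
        return TensorView(self.storage, self.offset, self.size[::-1], self.stride[::-1])

    def row(self, i: int) -> "TensorView":
        """Row i as a 1 x n view, again sharing the storage."""
        return TensorView(self.storage, self.offset + i * self.stride[0],
                          (1, self.size[1]), self.stride)
```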

I started contributing to @NousResearch Hermes Agent by doing one thing: reading the code. Then a small fix. Then another. Gateway platforms, skills, bug fixes... It kept going for a long time. Today I received the Developer role in the Nous Research Discord. 🎉 Special thanks to @Teknium for reviewing and valuing every contribution throughout this journey. For anyone thinking about contributing to open source: the best starting point is reading the code. The rest follows. https://t.co/8baosGeEwS 🤖

What are some SOTA alternatives to SAEs for mechanistic interpretability? Is Anthropic still using SAEs or have they moved on to something better now?

@spyced @bcherny Pi uses Codex App Server, I believe. By "regular" I mean the OpenAI completions REST endpoint.

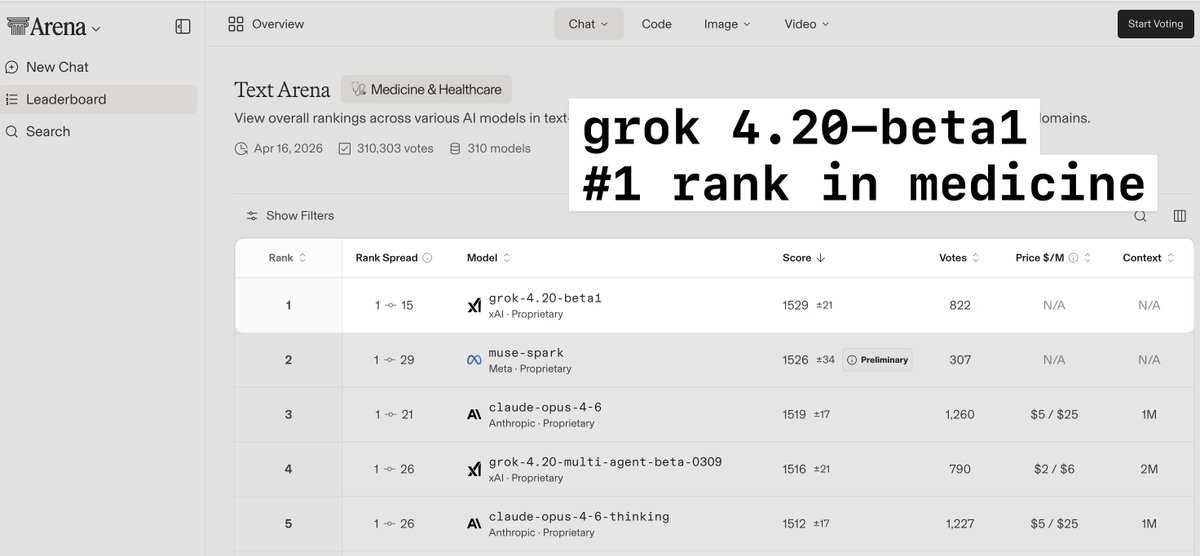

> grok4.20-beta1 is a much smaller model than opus but is #1 ranked in medicine and healthcare > 4.3 and 4.4 will be much larger models, and likely will have a significant boost in performance on complex medical cases > this is massively important in providing accurate diagnostic guidance and advice to both providers and patients

@techdevnotes Supplemental training has been added to 4.3. Grok 4.4 will be twice the size (1T) with training data through early April. Probably ready for release in early May. Grok 4.5 will be 1.5T and hopefully out by late May.

I’ve been using the free trial of Xiaomi MiMo-V2-Pro in my Hermes agent for the last week. I’m honestly kind of speechless. Insanely good model, and honestly a pleasure to communicate with. Once I got my Hermes agent fully set up and the soul.md dialed in, Hermes became an absolute joy to interact with and use. Insanely smart and intuitive. Huge thanks to @NousResearch and @Teknium for all their work!

Agents that run code need a controlled workspace ready when work starts. @modal shares why scale matters for long-running agents built with the Agents SDK. https://t.co/7YdC5VR58Y