@arankomatsuzaki

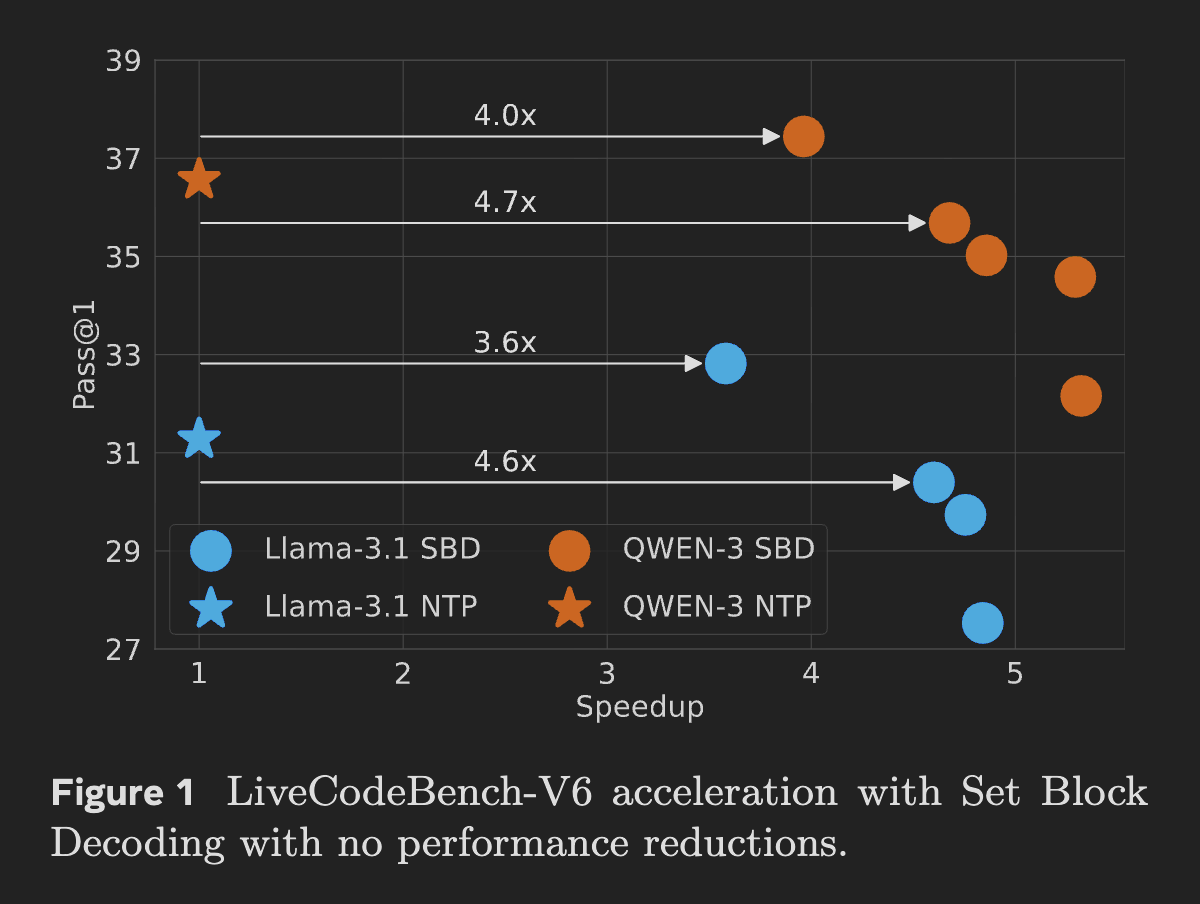

Meta introduces Set Block Decoding (SBD), a new inference accelerator for LLMs. SBD samples multiple future tokens in parallel, cuts forward passes by 3–5x, needs no arch changes, stays KV-cache compatible, and matches NTP training performance. https://t.co/Ov1ZO22Rce