@emollick

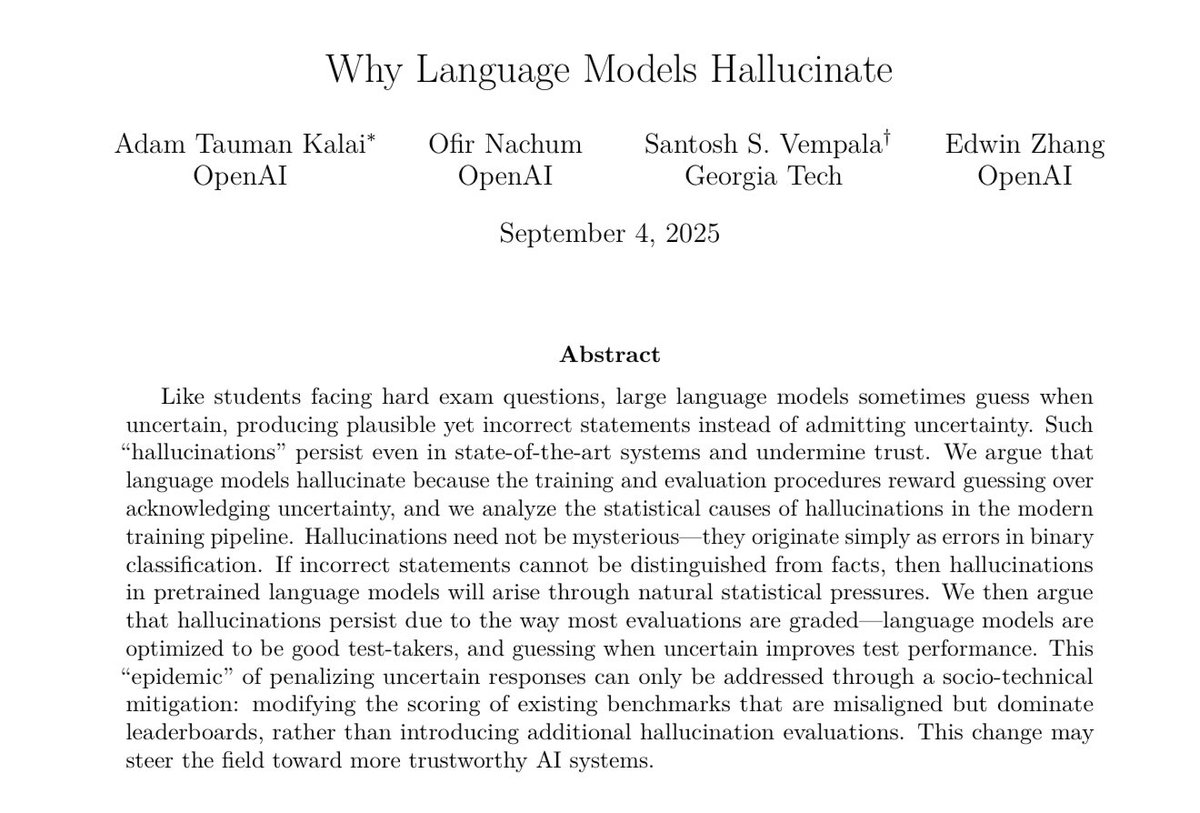

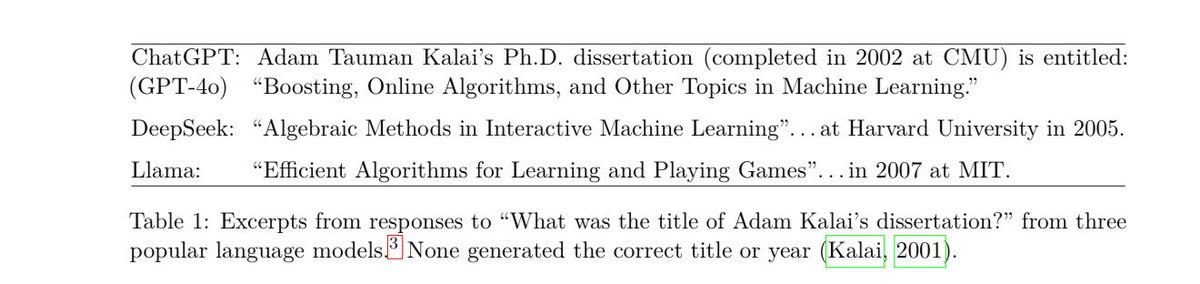

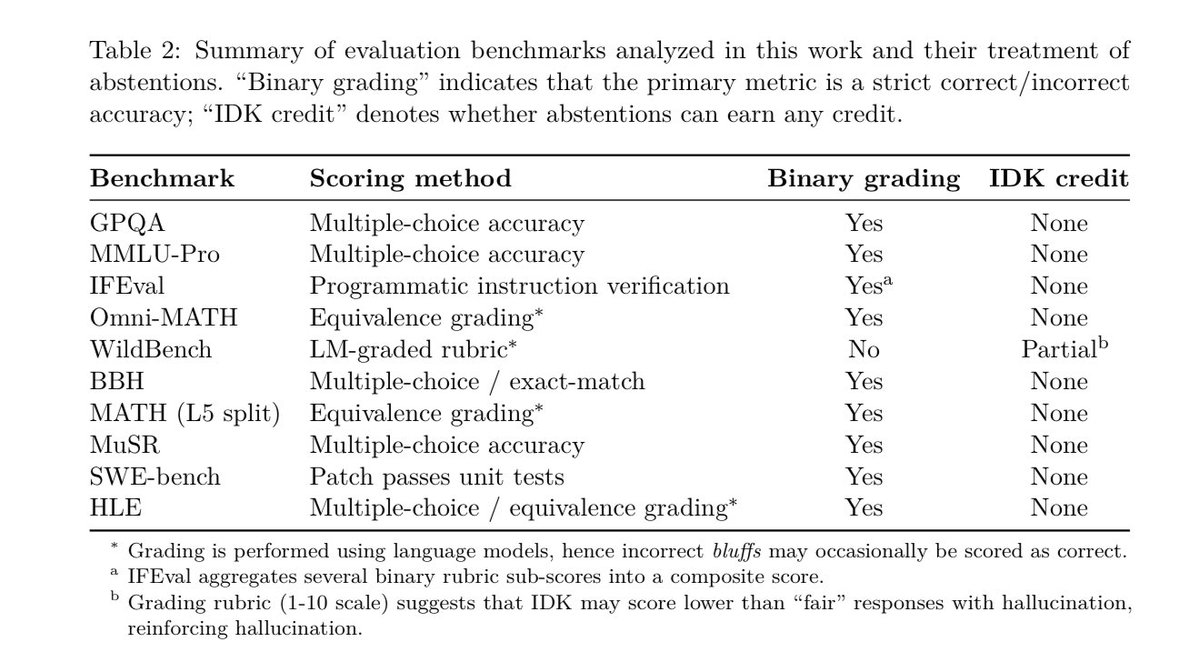

Paper from OpenAI says hallucinations are less a problem with LLMs themselves & more an issue with training on tests that only reward right answers. That encourages guessing rather than saying “I don’t know.” If this is true, there is a straightforward path for more reliable AI. https://t.co/0gxLoIt6ft
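
A rough way to see the incentive, as a minimal sketch rather than the paper's actual grading scheme: assume a right answer scores 1, "I don't know" scores 0, and a wrong answer costs some penalty. The function names and the `wrong_penalty` parameter are hypothetical, chosen only for illustration.

```python
def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Expected score of answering instead of abstaining.

    Assumed scoring (illustrative only): right = +1, wrong = -wrong_penalty,
    abstaining ("I don't know") = 0.
    """
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def should_guess(p_correct: float, wrong_penalty: float = 0.0) -> bool:
    """Guessing pays off only if it beats the abstention score of 0."""
    return expected_score(p_correct, wrong_penalty) > 0.0


# With no penalty for wrong answers, even a 10%-confident guess beats abstaining.
print(should_guess(0.10, wrong_penalty=0.0))
# With a penalty of 1 per wrong answer, the same guess has negative expected score.
print(should_guess(0.10, wrong_penalty=1.0))
```

Under these assumptions, a test that only rewards right answers makes guessing the better strategy at any nonzero confidence, while a penalty for wrong answers makes abstaining the better choice below a confidence threshold.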