Your curated collection of saved posts and media

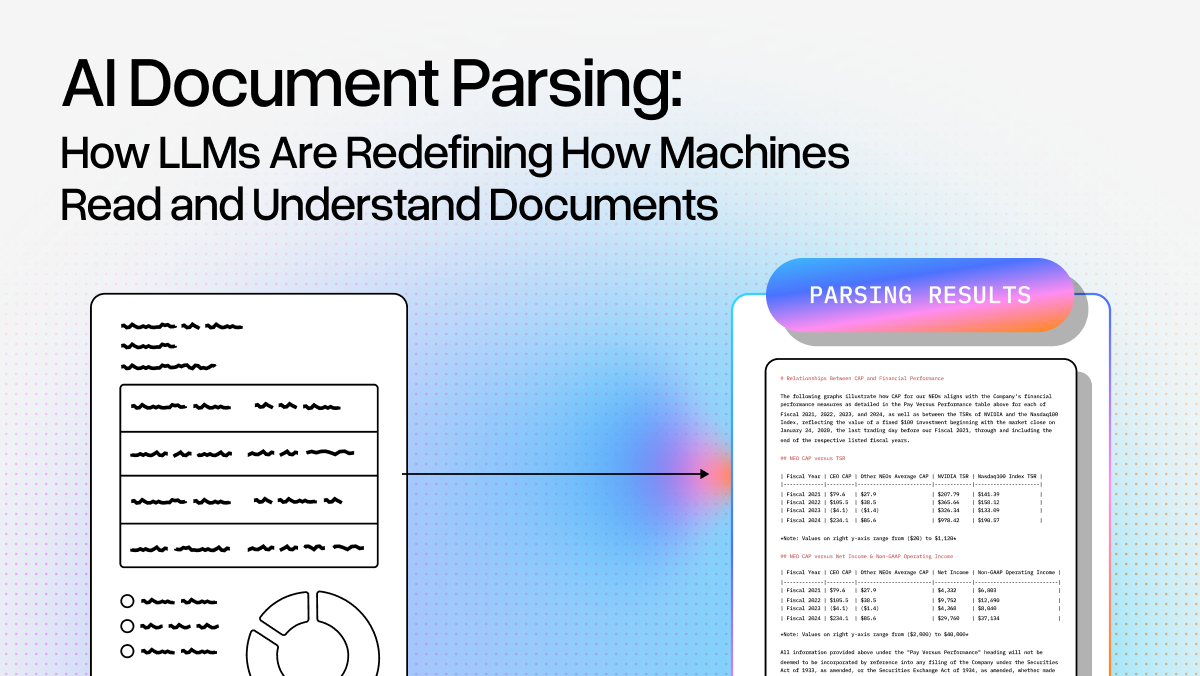

Here's a visual summary of the new guide by Anthropic. It's on how to improve tool use for AI agents.

3 core ideas:

- Tool Search tool to discover tools on-demand to save context
- Programmatic tool calling to orchestrate tools via code
- Tool schema + usage

bookmark it https://t.co/cNBCx0y7AH
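To make the "discover tools on-demand" idea concrete, here is a minimal sketch. It is not Anthropic's Tool Search tool or its API: the `ToolSpec` type, the `CATALOG` entries, and the keyword scoring are all assumptions made up for illustration. The point is only that the agent's context receives the few matching tool descriptions rather than the whole catalog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical tool description: a name plus a one-line summary."""
    name: str
    description: str


# Hypothetical catalog; a real agent might have hundreds of entries.
CATALOG = [
    ToolSpec("get_weather", "Look up the current weather for a city"),
    ToolSpec("send_email", "Send an email message to a recipient"),
    ToolSpec("query_invoices", "Search invoices by vendor or date"),
    ToolSpec("create_calendar_event", "Create a calendar event with attendees"),
]


def search_tools(query: str, catalog: list[ToolSpec], limit: int = 2) -> list[ToolSpec]:
    """Rank tools by how many query words appear in their name or description."""
    words = set(query.lower().split())
    scored = []
    for tool in catalog:
        tokens = set(f"{tool.name.replace('_', ' ')} {tool.description}".lower().split())
        score = len(words & tokens)
        if score > 0:
            scored.append((score, tool))
    # Highest score first; ties keep catalog order because the sort is stable.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:limit]]


# Only the matching definitions would be placed in the model's context.
matches = search_tools("send an email", CATALOG)
```

A production version would more likely rank by embeddings or BM25 than by raw word overlap; the overlap count just keeps the sketch dependency-free.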

Interleaved thinking is a game-changer. I built this little deep research agent, and the results are impressive. The agent is just more efficient at reasoning over multiple steps. Huge leverage for self-improving agents. https://t.co/6oCi8ifyKV

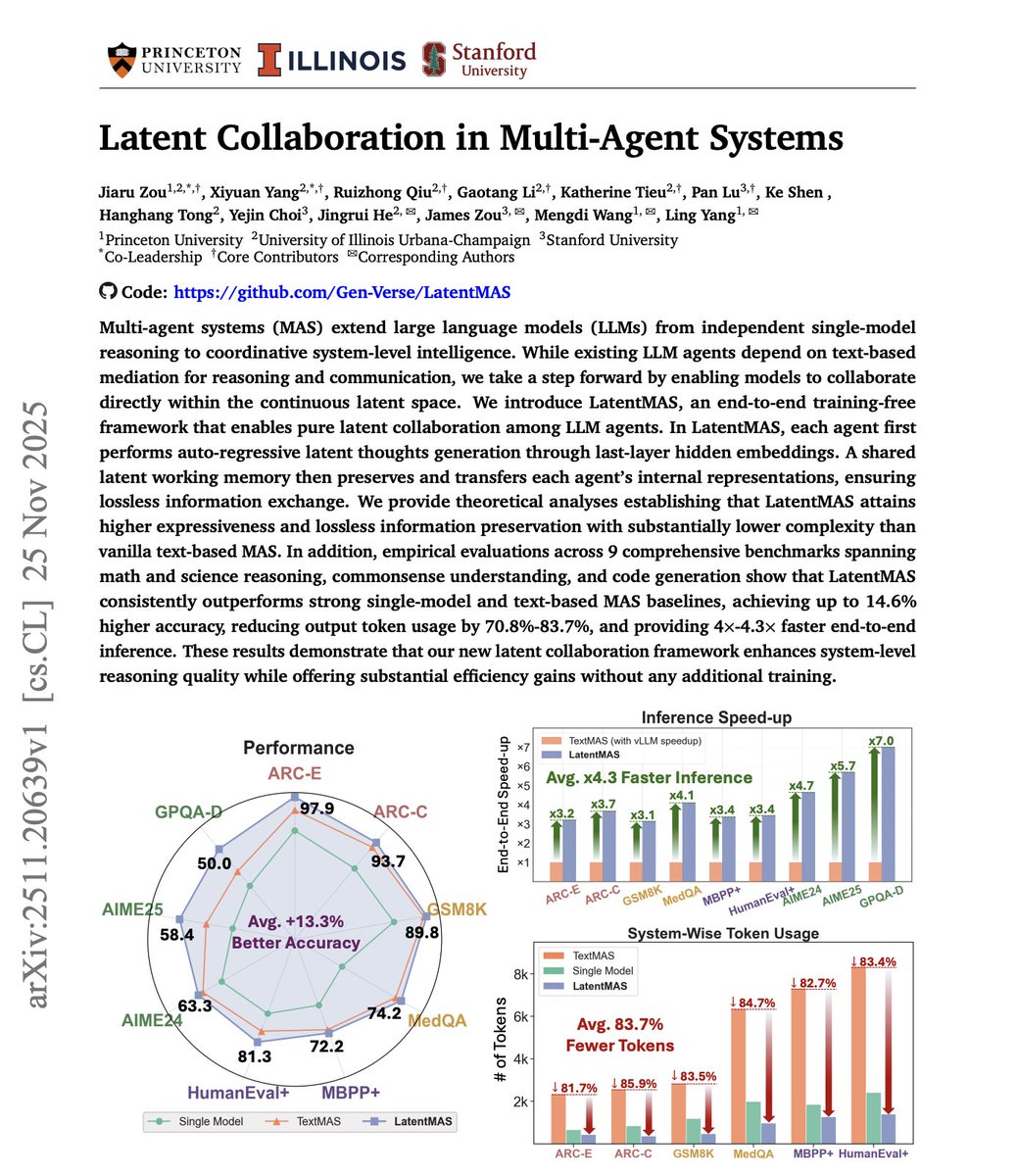

Multi-agent systems are powerful but expensive. However, the cost isn't in the reasoning itself. It's in the communication.

Agents exchange full text messages, consuming tokens for every coordination step. When agents need to collaborate on complex problems, this overhead adds up fast. More agents, more messages, more tokens, more cost.

This new research introduces LatentMAS, a framework where agents communicate through compressed latent vectors instead of natural language.

The key idea: agents don't need to explain everything in words. They encode task-relevant information into compact hidden representations. Other agents decode what they need. No verbose back-and-forth.

The framework operates in three phases:

- Encoding: agents compress their knowledge into low-dimensional vectors.
- Sharing: these vectors replace text messages between agents.
- Decoding: receiving agents reconstruct what matters.

Inspired by how transformers process information internally through hidden states. Now applied to inter-agent communication.

What makes this powerful? Communication costs drop significantly while task performance stays intact. The paper provides theoretical guarantees and empirical validation across multiple reasoning benchmarks.

This unlocks practical scalability. It will allow AI devs to deploy more agents and run more complex reasoning without proportional cost increases.

Paper: https://t.co/nl2OQX7txH

Learn to build AI agents in our academy: https://t.co/zQXQt0PMbG
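The three phases described above can be sketched with a toy linear projection. This is not the paper's implementation: the fixed `PROJECTION` matrix, the 4-dimensional state, and the function names are assumptions chosen so the arithmetic is easy to follow. It only shows the shape of encode, share, decode, with a short vector crossing between agents instead of text.

```python
import math

# Hypothetical 2x4 projection with orthonormal rows (an assumption for this toy).
S = 1 / math.sqrt(2)
PROJECTION = [
    [S, S, 0.0, 0.0],
    [0.0, 0.0, S, -S],
]


def encode(state: list[float]) -> list[float]:
    """Encoding: compress a 4-d state into a 2-d latent vector (z = P x)."""
    return [sum(p * x for p, x in zip(row, state)) for row in PROJECTION]


def decode(latent: list[float]) -> list[float]:
    """Decoding: map the latent back to 4 dimensions (x_hat = P^T z)."""
    dims = len(PROJECTION[0])
    return [
        sum(PROJECTION[k][i] * latent[k] for k in range(len(latent)))
        for i in range(dims)
    ]


# This state lies in the span of the projection rows, so nothing is lost.
sender_state = [3.0, 3.0, 1.0, -1.0]
message = encode(sender_state)      # Sharing: only 2 numbers are exchanged
receiver_view = decode(message)     # The receiver reconstructs the 4-d state
```

Reconstruction is exact here only because the example state was picked to lie in the subspace the projection keeps. Any component outside that subspace would be dropped, which is the trade-off a compressed channel makes.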

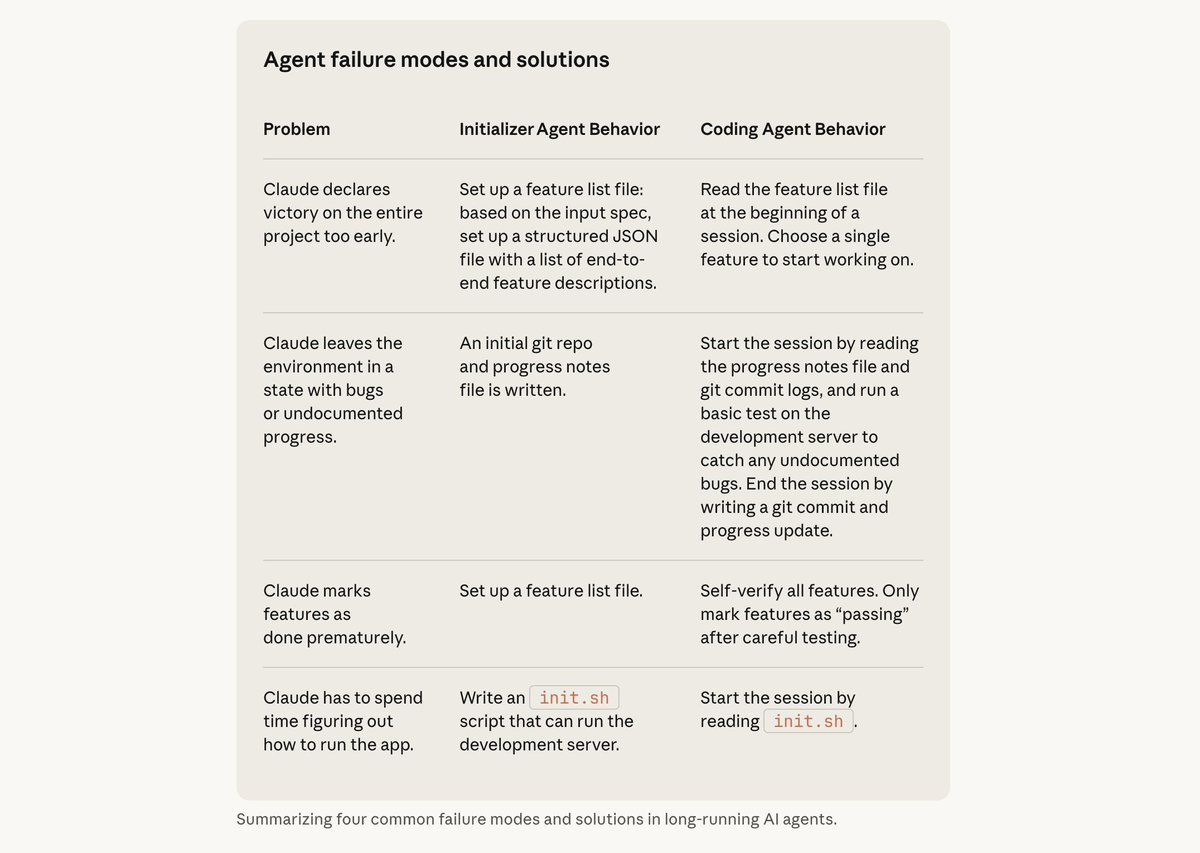

As usual, Anthropic just published another banger. This one is on building agents that continue to do useful work for an arbitrarily long time. Great tips on context management. A must-read for AI devs. https://t.co/UXCIr86FS4

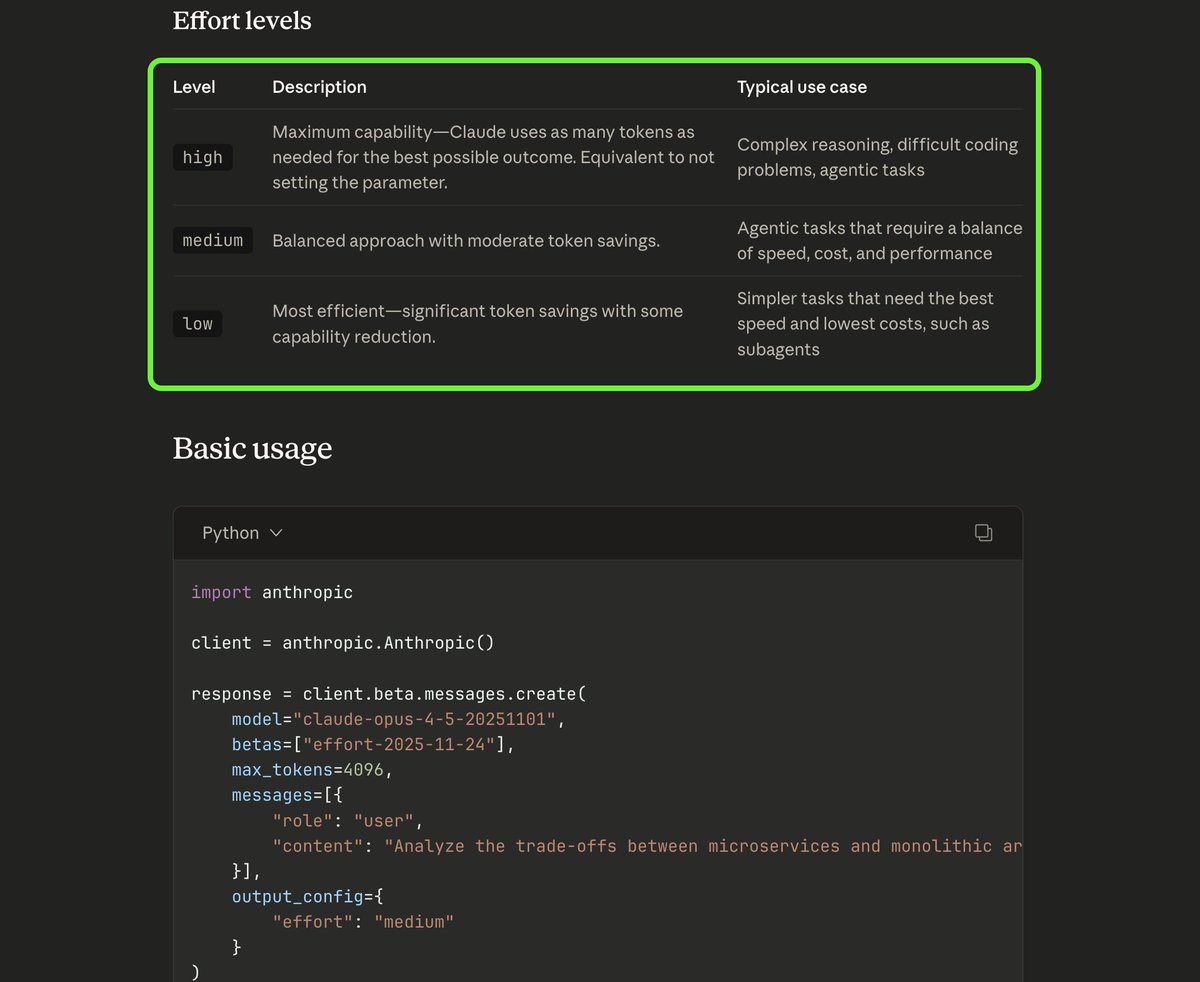

a cool part of the opus 4.5 that went under the radar. you can now set the effort level to trade off between response thoroughness and token efficiency.

- high - complex reasoning
- medium - agentic tasks with balanced effort
- low - simpler tasks

https://t.co/gTDEvplhUj

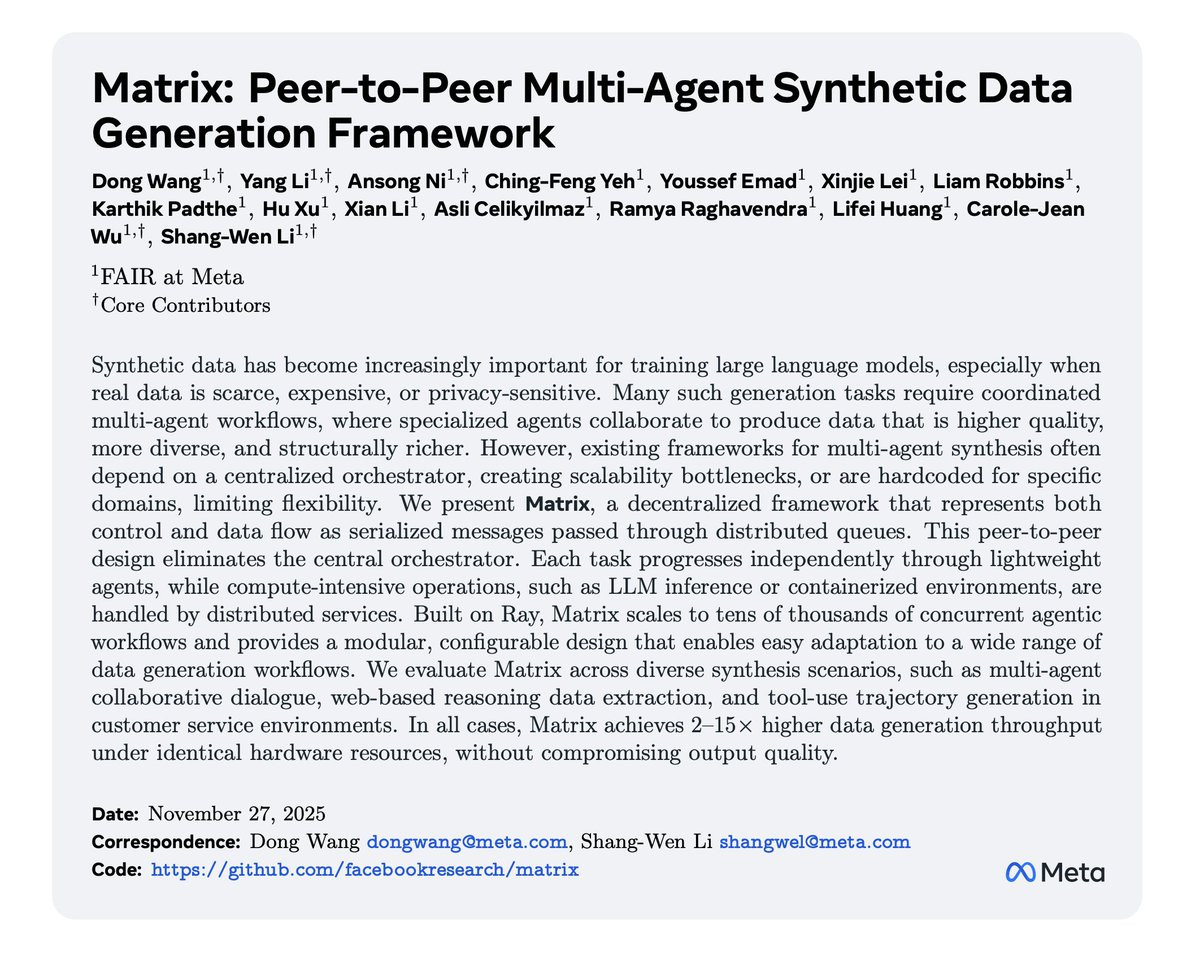

Cool paper from Meta. And another excellent application of multi-agent systems. (bookmark it)

Training modern AI models requires massive amounts of high-quality data. However, the bottleneck isn't just quantity. The data is just not diverse enough. Single models generating synthetic data tend to produce homogeneous outputs, repeating patterns, and lacking the nuanced variety found in human-created datasets.

This new research from Meta introduces Matrix, a peer-to-peer framework where multiple AI agents collaboratively generate synthetic training data through decentralized interactions. Matrix achieves 2–15× higher data generation throughput under identical hardware resources, without compromising output quality.

TL;DR: Instead of one model producing data, specialized agents play distinct roles and interact with each other. One asks questions, another responds, a third evaluates quality. These multi-turn conversations capture complex reasoning and diverse perspectives.

What makes Matrix different: no central coordinator. Agents communicate directly in a fully decentralized architecture. This enables scalability without infrastructure bottlenecks.

The framework operates through role-based conversation protocols, multi-turn interaction patterns, and built-in quality filtering at each stage. Only data meeting quality thresholds makes it into the final training set.

Multi-agent collaboration produces more diverse synthetic data than single-model approaches. The resulting datasets improve downstream model performance across reasoning and instruction-following benchmarks.
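The role-based flow described above (one agent asks, another responds, a third evaluates, and only passing conversations are kept) can be sketched as message passing. This is an illustration, not Matrix itself: the three stub agents, the `QUALITY_THRESHOLD` value, and the single in-process queue are assumptions. In particular, the queue only stands in for a distributed transport. Each message carries its own conversation state, and each agent names the next recipient, so no component plans the conversation centrally.

```python
from collections import deque

QUALITY_THRESHOLD = 0.5  # hypothetical cutoff for keeping a conversation


def asker(msg: dict) -> tuple:
    """Stub asker: turns the seed into a question and hands off to the responder."""
    msg["turns"].append(("question", f"What is {msg['seed']} doubled?"))
    return "responder", msg


def responder(msg: dict) -> tuple:
    """Stub responder: answers small seeds and gives up on larger ones."""
    answer = str(msg["seed"] * 2) if msg["seed"] < 3 else "I don't know"
    msg["turns"].append(("answer", answer))
    return "evaluator", msg


def evaluator(msg: dict) -> tuple:
    """Stub evaluator: scores the last answer; None marks the conversation done."""
    answer = msg["turns"][-1][1]
    msg["score"] = 1.0 if answer == str(msg["seed"] * 2) else 0.0
    return None, msg


AGENTS = {"asker": asker, "responder": responder, "evaluator": evaluator}


def generate(seeds: list) -> list:
    """Deliver messages until every conversation finishes; keep the ones that pass."""
    inbox = deque(("asker", {"seed": s, "turns": []}) for s in seeds)
    kept = []
    while inbox:
        role, msg = inbox.popleft()
        next_role, msg = AGENTS[role](msg)
        if next_role is not None:
            inbox.append((next_role, msg))
        elif msg["score"] >= QUALITY_THRESHOLD:
            kept.append(msg)
    return kept


dataset = generate([1, 2, 5])
```

With these stubs, the conversation seeded with 5 is filtered out because the responder gives up on it, so only two conversations reach the final set.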

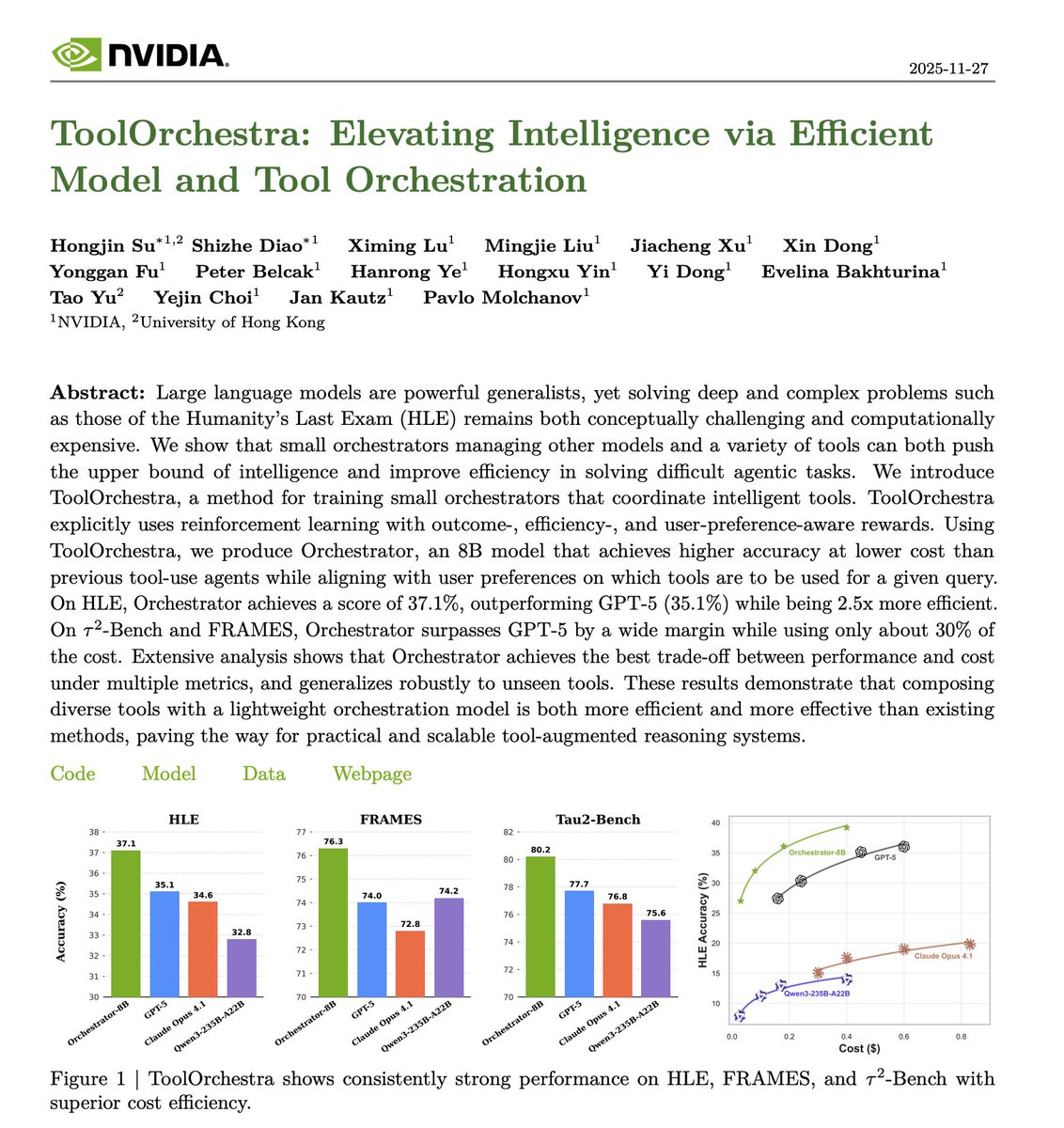

Banger paper from NVIDIA.

Bigger models aren't always the answer. However, the default approach to improving AI systems today remains scaling up. More parameters, more compute, more cost. But many tasks don't require the full power of a massive model.

This new research introduces ToolOrchestra, a framework that strategically coordinates multiple AI models with external tools based on task complexity. Instead of routing everything through one large model, an orchestrator decides dynamically. When is a tool necessary? Which model size fits the task? How should components coordinate?

The researchers trained Orchestrator-8B, a specialized 8-billion parameter model that makes intelligent routing decisions. It determines when external tools are needed versus when model inference alone suffices.

On HLE, Orchestrator achieves a score of 37.1%, outperforming GPT-5 (35.1%) while being 2.5x more efficient.

They also release ToolScale, a synthetic dataset of tool usage examples across diverse scenarios for training orchestration capabilities.

Why it matters: strategic orchestration of smaller models with targeted tool usage can match or exceed monolithic large model performance while cutting computational overhead.

Paper: https://t.co/iNvqIHGTES

Learn how to build AI Agents in our academy: https://t.co/zQXQt0PMbG
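The routing questions above (is a tool necessary, which model size fits) can be illustrated with a hand-written router. This is only a sketch of the decision shape: the post describes a trained 8B orchestrator, whereas the rules, the `Task` type, the backend names, and the 12-word cutoff below are all assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task record; needs_fresh_data is set by the caller."""
    text: str
    needs_fresh_data: bool = False


def route(task: Task) -> str:
    """Pick a backend with simple rules standing in for a learned policy."""
    # Tool first: information the models cannot know must be fetched.
    if task.needs_fresh_data:
        return "web_search_tool"
    # Tool second: arithmetic goes to a calculator rather than a model.
    has_digit = any(ch.isdigit() for ch in task.text)
    has_operator = any(op in task.text for op in "+-*/")
    if has_digit and has_operator:
        return "calculator_tool"
    # Otherwise pick a model size from a crude complexity proxy (word count).
    if len(task.text.split()) <= 12:
        return "small_model"
    return "large_model"


choices = [
    route(Task("What is 17 * 23?")),
    route(Task("Summarize this memo")),
    route(Task("Latest price?", needs_fresh_data=True)),
]
```

A learned orchestrator replaces these fixed rules with a policy trained on examples, but the interface is the same: a task goes in, a choice of tool or model comes out.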

We’re announcing a research collaboration with @CFS_energy, one of the world’s leading nuclear fusion companies. Together, we’re helping speed up the development of clean, safe, limitless fusion power with AI. ⚛️ https://t.co/5gDqP3WiNe

Amazing test of Gemini 3’s multimodal reasoning capabilities: try generating a threejs voxel art scene using only an image as input.

Prompt: I have provided an image. Code a beautiful voxel art scene inspired by this image. Write threejs code as a single-page

Best outputs from Gemini 2.5 Pro vs 3 Pro. This example nicely illustrates the fidelity jump with 3 Pro, through strong multimodal understanding and 3D reasoning https://t.co/0aM8oSgmFA

Try it for yourself at https://t.co/yT45AjuoEn https://t.co/wmOv3cj17j

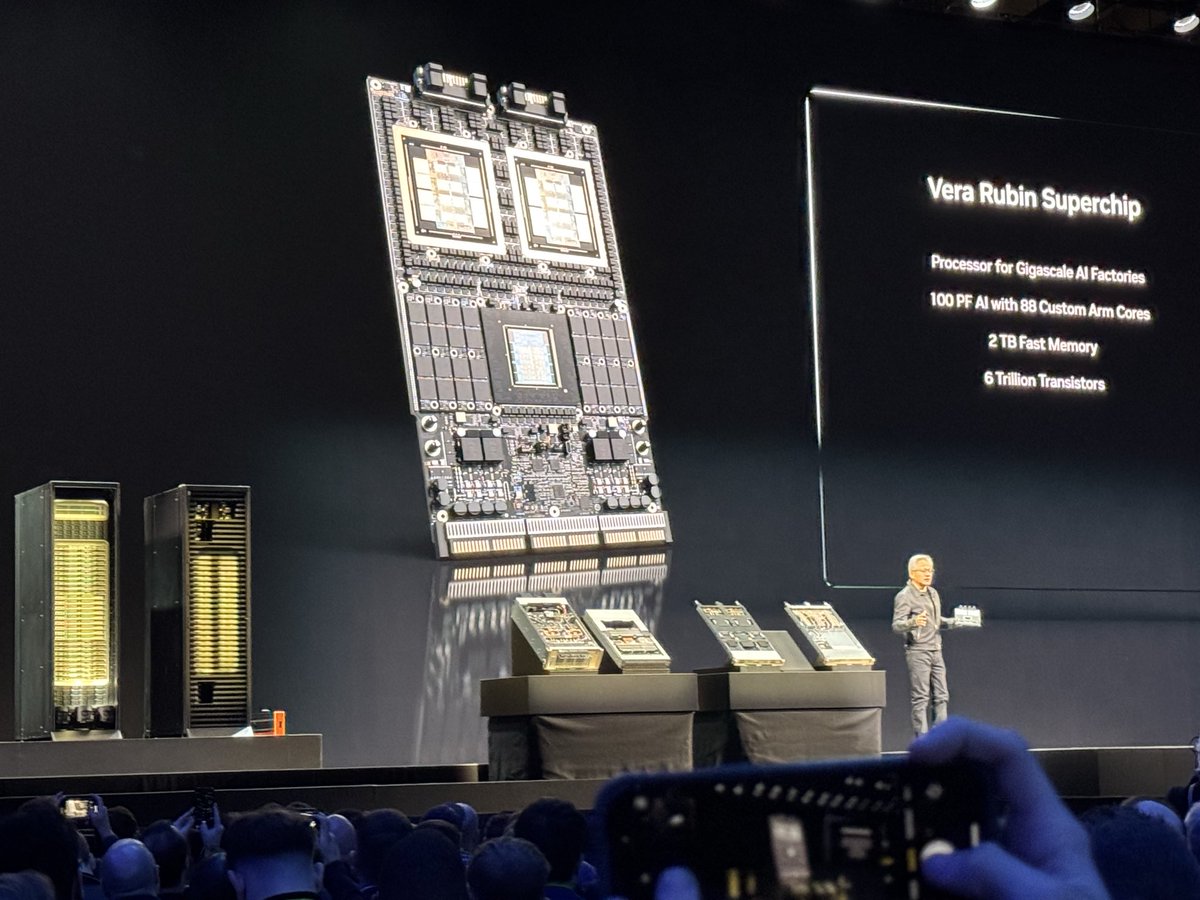

Listening to Jensen talk about his favorite maths - specs of Vera Rubin chips, and the full stack from lithography to robot fleets assembling physical fabs in Arizona & Houston. Quoting Jensen, “these factories are basically robots themselves”. I visited NVIDIA facilities before and they look absolutely unreal. Sci-fi scenes pale in comparison to the real Matrix, racks over racks fading into the horizon. The art of enchanting rocks to do computation is the greatest craft humanity has mastered. Sometimes I forget I’m at a hardware company with huge muscles to move atoms at unbelievable scale.

congrats to llama 3 large for winning the LLM trading contest by not participating https://t.co/PsA6hUYQ48

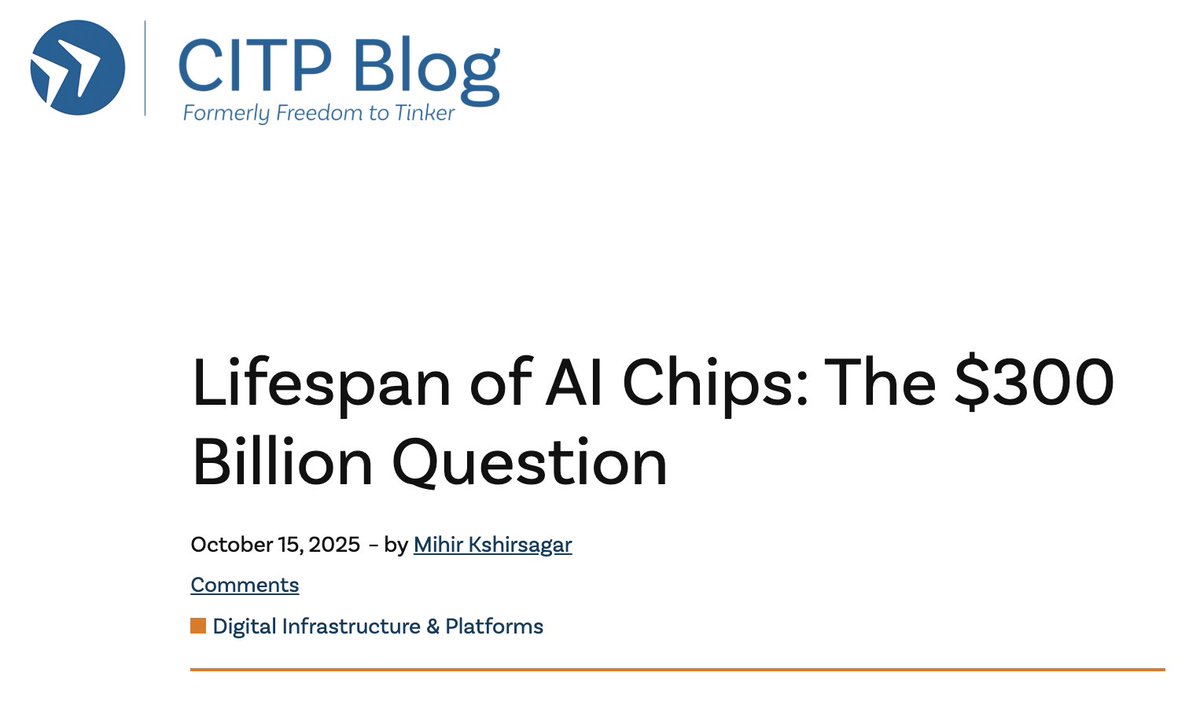

My @PrincetonCITP colleague Mihir Kshirsagar asks: are Microsoft-OpenAI, Amazon-Anthropic, and Google using subsidized capital to lock in enterprise customers right now through aggressive pricing, multi-year contracts, and deep integrations? https://t.co/iQ5sADa6aI https://t.co/tjun9v4cGj

I am on the faculty job market this year! I am seeking tenure-track faculty positions to drive my research agenda on rigorous AI evaluation for science and policy. I am applying broadly across disciplines, and would be grateful to hear of relevant positions. Materials: 🧵 https://t.co/31r7W1FHNO

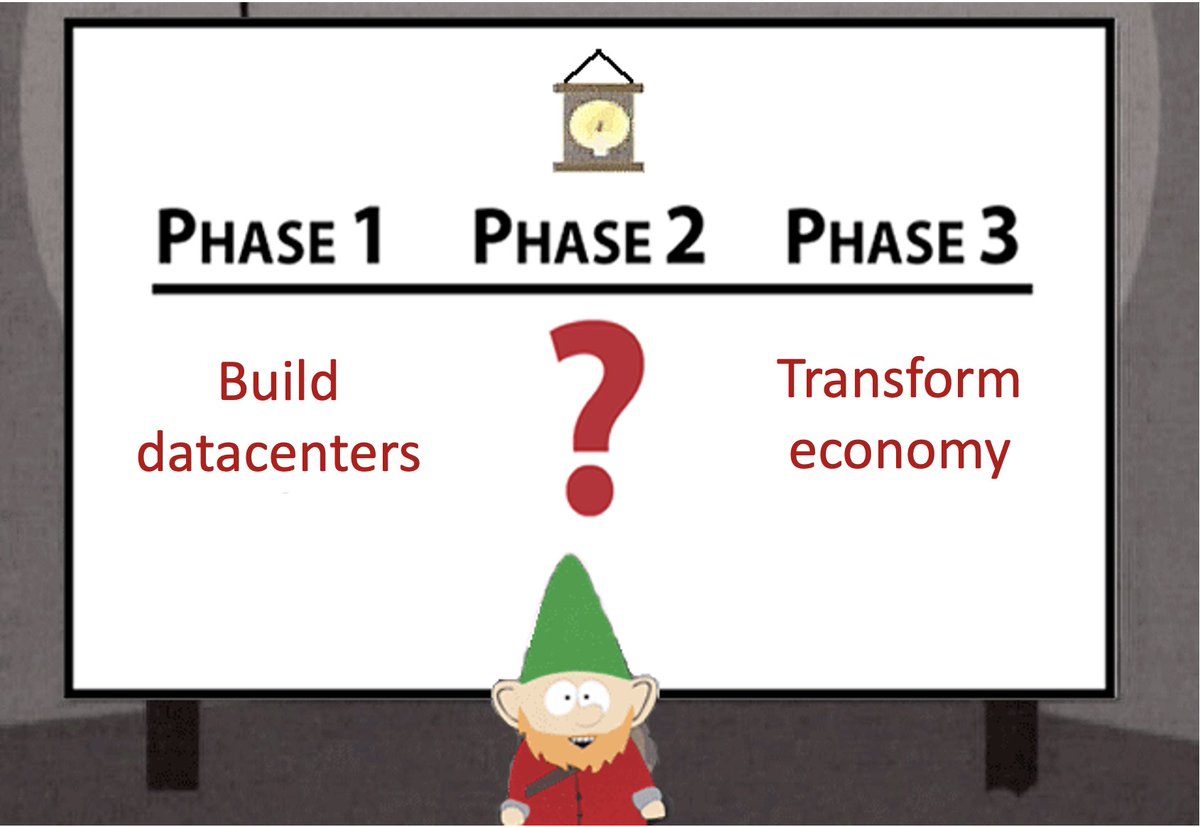

A lot of AI discourse is magical thinking and ignores the crucial "Phase 2". My bet is that:

1. AI can indeed play a role in transforming various industries, societies, and governments
2. These transformations, if they happen, will usually be slow and painful
3. Their nature is not dictated by the logic of the technology itself but by what we collectively choose to do with the tech
4. In most cases, positive transformation will require institutional reform and hard decisions on issues such as compensating the "losers" of structural shifts
5. Most of the energy on AI & transformation today (for instance, on how AI can transform science) is misdirected because it doesn’t focus on real bottlenecks. The work of identifying and addressing bottlenecks, which is where the alpha is, has not really begun
6. This work will have to be done on a sector-by-sector basis, and domain expertise is more important than AI expertise.

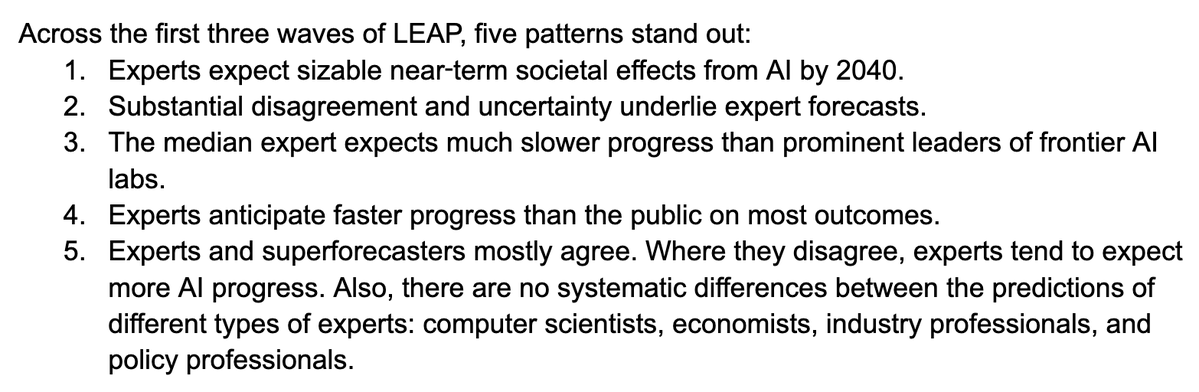

The Longitudinal Expert AI Panel is a really well thought out AI forecasting exercise and I have been happy to participate as an advisor. Here is the launch whitepaper: https://t.co/oSLN9AGayN https://t.co/7dLo1QSxQO

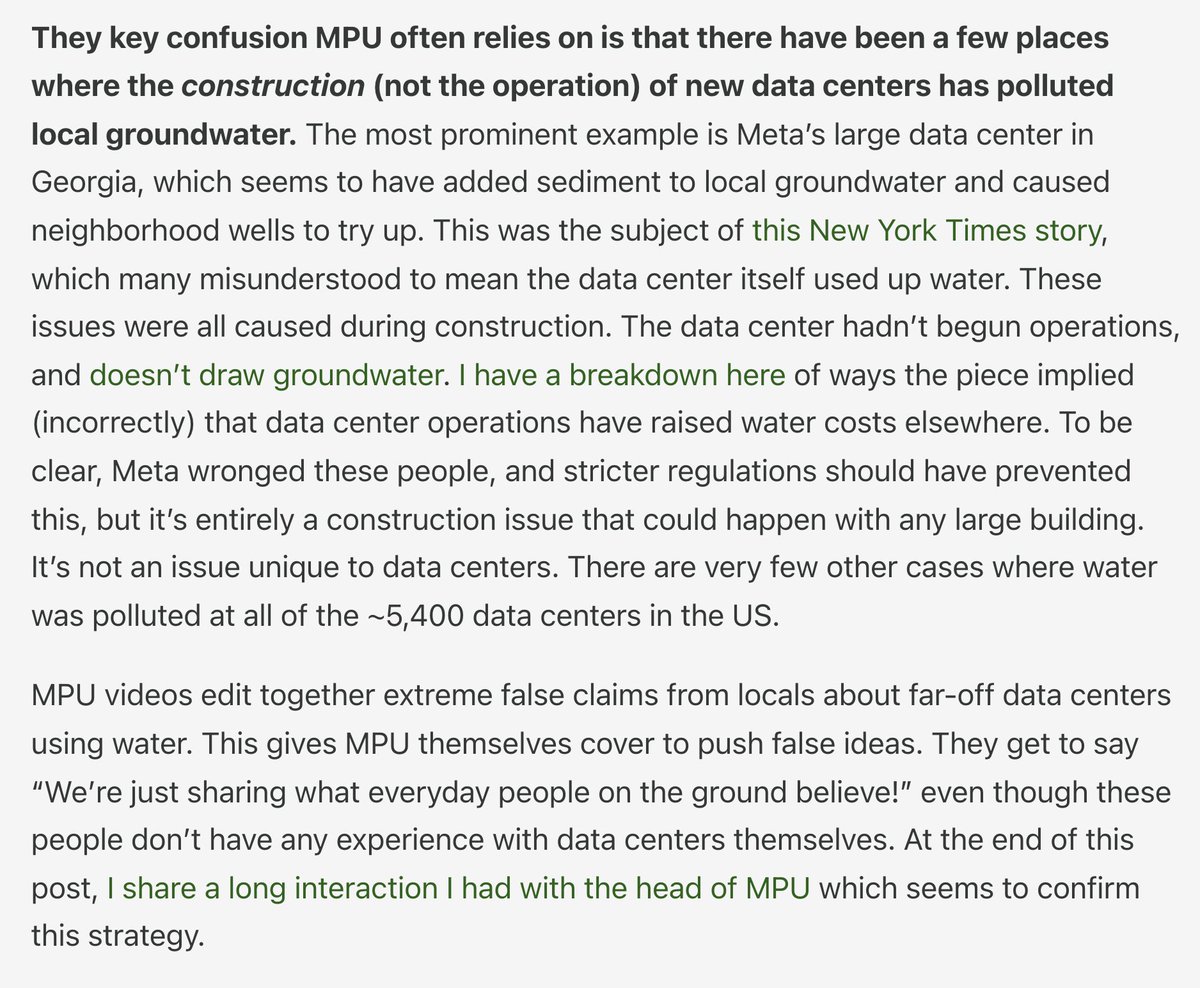

I think that Hao made a bad but honest mistake and I don't mean to attack her overall character as a journalist. In contrast, I would like to take this opportunity to directly attack the journalistic integrity of More Perfect Union, who are much more influential in the AI water conversation than Hao and have made clear, direct decisions to deceive their viewers in basically every video they made about it. I see them as the biggest bad guy in the debate. My interactions with Hao have been very nice. My interaction with the head of More Perfect Union was terrible (logged in the post below) and confirmed to me that they don't care about the truth on this at all. I have a long post on how every single presentation of the AI water issue they make has been intentionally off base here. Would like more attention on this: https://t.co/hbDqRORZsn

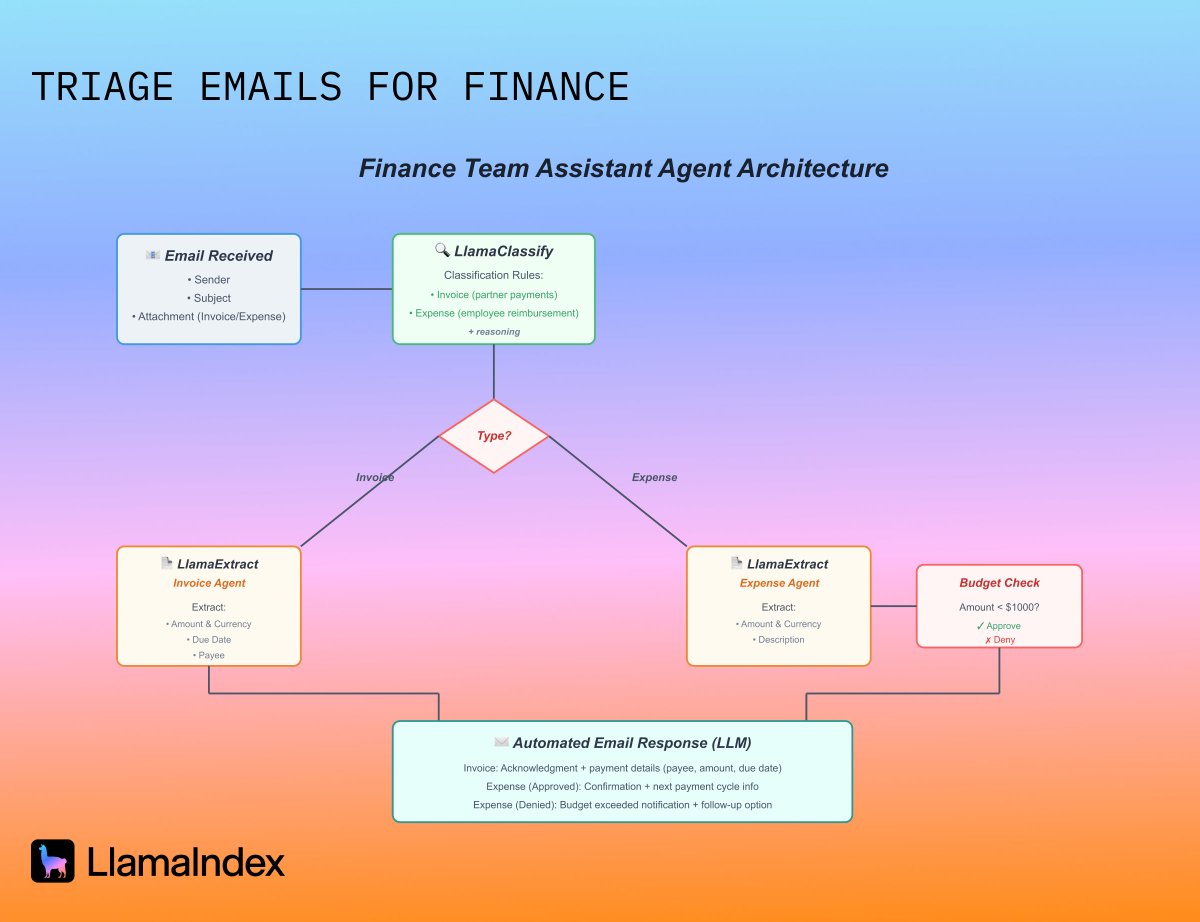

Here's a common scenario: Your finance team gets emails all day with invoices from partners and expense reports from employees. Each one needs different handling. Invoices need acknowledgment and payment scheduling. Expenses need budget validation before approval, etc.

In this example we build an agent that automatically triages incoming emails with attachments, extracts the right information, and takes appropriate action. Our approach uses three of our tools working together:

1️⃣ LlamaClassify handles the first decision point. It looks at each attachment and determines: is this an invoice that needs to be paid out to a partner, or an expense that needs reimbursement? It also provides reasoning for the decision.

2️⃣ LlamaExtract does the heavy lifting on data extraction. We create two specialized agents with different schemas for invoices vs expenses.

3️⃣ Agent Workflows orchestrates the entire process. It connects classification to extraction to business logic: in this case, checking expenses against a budget threshold and generating appropriate email responses via LLM.

Classify incoming documents → extract relevant data → apply business rules → take action.

Need to add a new document type? Add a classification rule and an extraction schema. Need different business logic? Modify the workflow steps. The components stay the same.

Check out the full example: https://t.co/5qsO6gmBs2
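The classify → extract → apply business rules → take action flow above can be sketched in plain Python. None of this is the LlamaClassify, LlamaExtract, or Agent Workflows API: the keyword classifier, the regex extractor, the `BUDGET_THRESHOLD` value, and the action strings are hypothetical stand-ins so the control flow can run on its own. The linked example is the reference for the real components.

```python
import re
from dataclasses import dataclass

BUDGET_THRESHOLD = 500.00  # hypothetical per-expense budget limit


@dataclass
class Decision:
    doc_type: str
    amount: float
    action: str


def classify(text: str) -> str:
    """Stand-in for the classification step: keyword match instead of an LLM."""
    return "invoice" if "invoice" in text.lower() else "expense"


def extract_amount(text: str) -> float:
    """Stand-in for schema-based extraction: pull the first dollar amount."""
    match = re.search(r"\$(\d+(?:\.\d{2})?)", text)
    if match is None:
        raise ValueError("no dollar amount found")
    return float(match.group(1))


def triage(text: str) -> Decision:
    """Classify, extract, then apply the business rule for that document type."""
    doc_type = classify(text)
    amount = extract_amount(text)
    if doc_type == "invoice":
        action = "acknowledge and schedule payment"
    elif amount <= BUDGET_THRESHOLD:
        action = "approve reimbursement"
    else:
        action = "escalate: over budget"
    return Decision(doc_type, amount, action)


decisions = [
    triage("Invoice #881 from Acme, total $1200.00"),
    triage("Team lunch receipt, $42.50"),
    triage("Conference travel $950"),
]
```

Adding a document type in this sketch means one more branch in `classify` and one more rule in `triage`, which mirrors the extension path the post describes.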

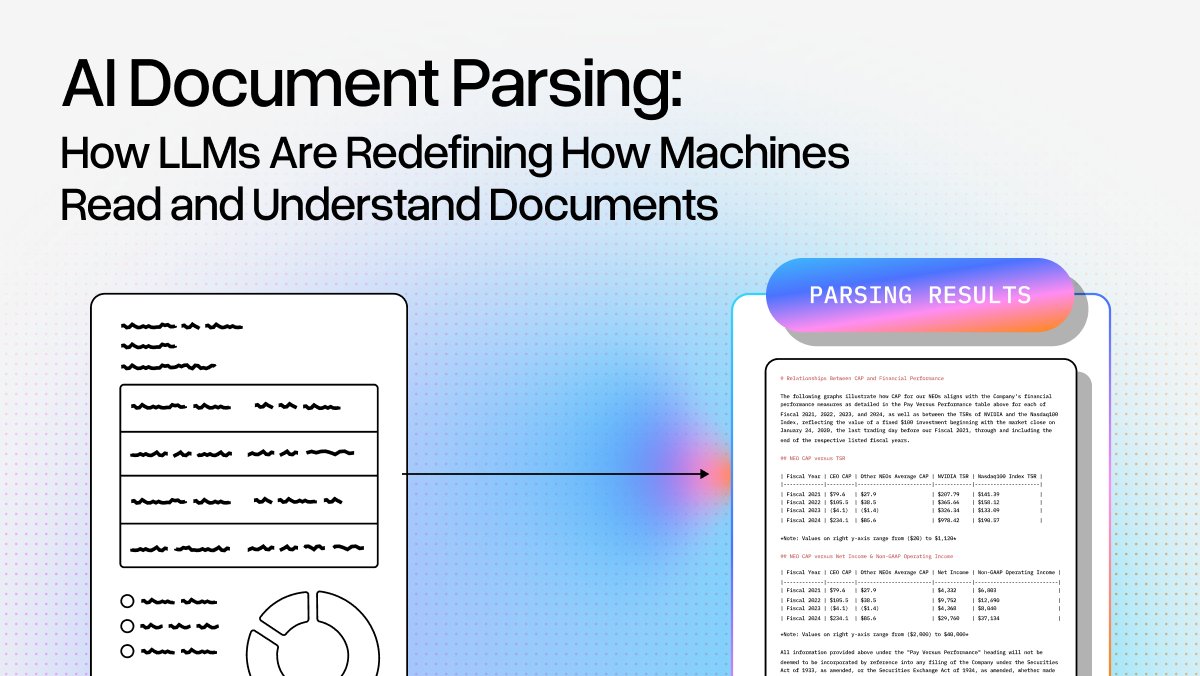

We probably shouldn't tell you how to build your own document parsing agents, but we will 😮.

AI agents are transforming how we handle messy, real-world documents that break traditional OCR systems. Join our live webinar on December 4th at 9 AM PST where the LlamaParse team reveals industry secrets for parsing complex documents:

- 📋 Blueprint for building next-generation document parsing workflows using agents instead of OCR alone
- 🔧 Practical strategies for handling handwriting, rotated scans, nested tables, and visually dense layouts
- 🤖 Latest LlamaCloud capabilities showing how vision language models automate extraction from previously unparseable PDFs, forms, and images
- ⚡ When to apply each component in your parsing pipeline and why it matters

We'll show you how to move beyond simple text extraction to actually automate understanding of documents with multi-column layouts, embedded charts, skewed scans, and tables within tables.

Register now: https://t.co/Q17V6sC1V1

Trigger your agent workflows directly from your inbox, using our LlamaAgents and @resend webhooks📧

In this demo, we built a system that:

- 👉 Receives emails with documents attached
- 👉 Classifies the attachments as either invoices or expenses using LlamaClassify
- 👉 Extracts the relevant information through LlamaExtract
- 👉 Writes an email reply and sends it back to the user

All of this is packaged as an agent workflow and deployed to the cloud through our LlamaAgents!🚀

- 🦙 Get started with all our LlamaCloud services now: https://t.co/Ct7pawLEFX
- 📚 Learn more about our agent workflows: https://t.co/VX6GwKdVMB
- ⭐ Star the repo on GitHub: https://t.co/vKjGP62fLE

There are Vegas parties and there is Late Shift 🎉

Join us for an exclusive re:Invent afterparty that brings together the best minds in AI and tech for a night you won't forget.

- 🍸 Cocktails and disco balls at Diner Ross Steakhouse in The LINQ
- 🤖 Connect with the teams behind @browserbase, @braintrust, @modal_labs, and LlamaIndex
- 🌙 Late-night tech conversations when the conference sessions end
- 🎟️ Limited spots with approval-required registration

We're teaming up with our friends at @browserbase, @usebraintrust, and @modal_labs to host the most fun you'll have all conference. After your evening sessions, meet us for cocktails, networking, and the kind of tech chatter that makes re:Invent legendary.

RSVP now - spots are limited: https://t.co/sYU6IbKYvg

See how @pathwork scaled their life insurance document processing from 5,000 to 40,000 pages per week using LlamaParse.

- 📄 Process complex medical records, lab results, and decades-old scanned PDFs with 8x improved throughput
- 🤖 Automatically extract and index carrier underwriting guidelines to keep risk rules current
- ⚡ Replace fragile, manual pipelines with robust automation that handles everything from digital forms to 1970s faded scans
- 🎯 Free up engineering time from maintenance to focus on building new product features

@pathwork's Case Underwriter, Knowledge Assistant, and Pre-App Manager products all rely on transforming unstructured insurance documentation into structured data for faster decision-making. By integrating LlamaParse, they eliminated bottlenecks that were directly limiting customer growth and built future-proof infrastructure that automatically improves over time.

Read the full case study: https://t.co/Cla0bDzPji

Build a document understanding agent for SEC filings that uses a multi-step approach with LlamaClassify and Extract to identify the filing type and hand it off to the right extraction agent. Deployed with LlamaAgents.

- 🔧 Customize extraction schemas to fit your specific data requirements and business logic
- 📊 Review and correct extractions through an intuitive frontend UI before finalizing results
- 🚀 Extend the system with additional workflows for downstream data syncing or automated monitoring
- ⚙️ Get started quickly with our structured template and clear documentation

Check out the complete starter template and begin building your extraction system: https://t.co/a4XtdKvKbs

See the full example here: https://t.co/i5ZzljkdK3

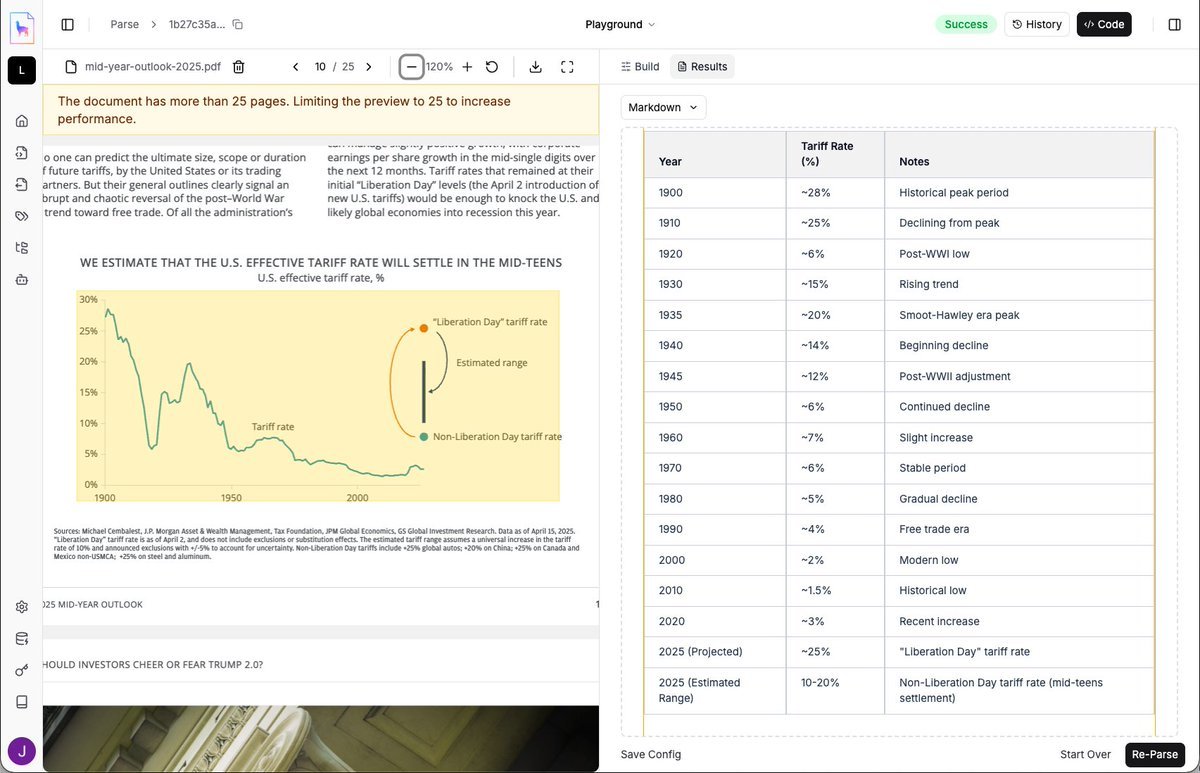

Chart OCR just got a major upgrade with our new experimental "agentic chart parsing" feature in LlamaParse 📈🧪 Most LLMs struggle with converting charts to precise numerical data, so we've created an experimental system that follows contours in line charts and extracts values. Automate chart analysis without spending hours manually correcting extracted values. Try it now in LlamaParse: https://t.co/JHWRvwd93B

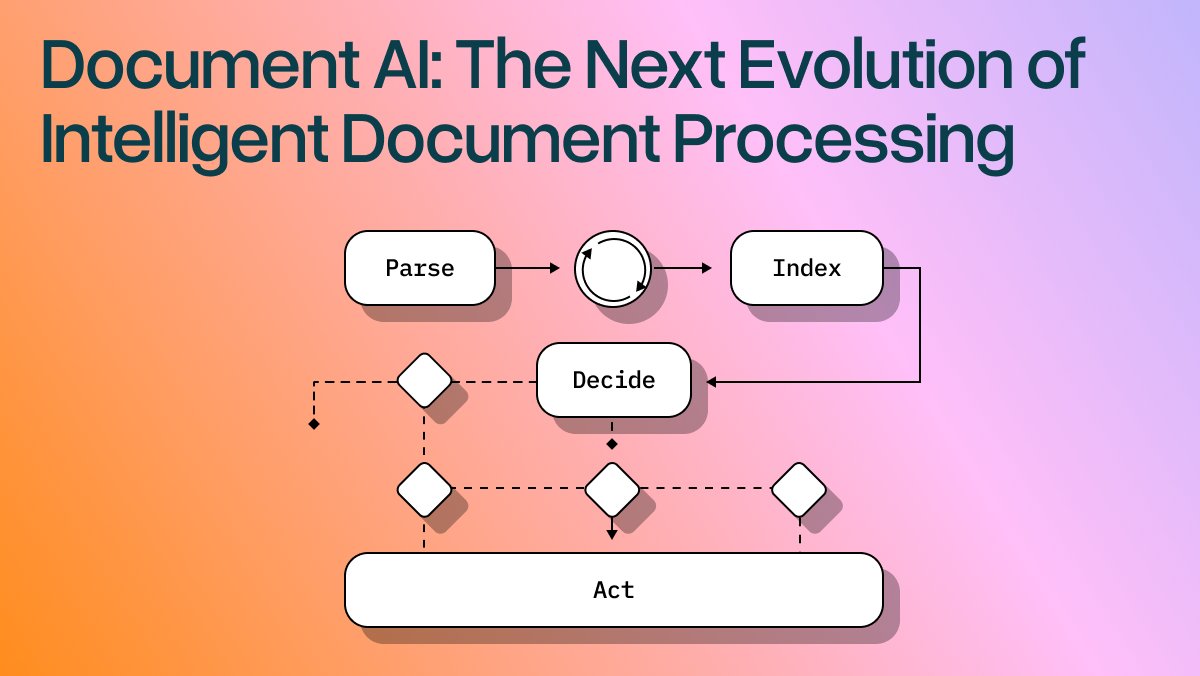

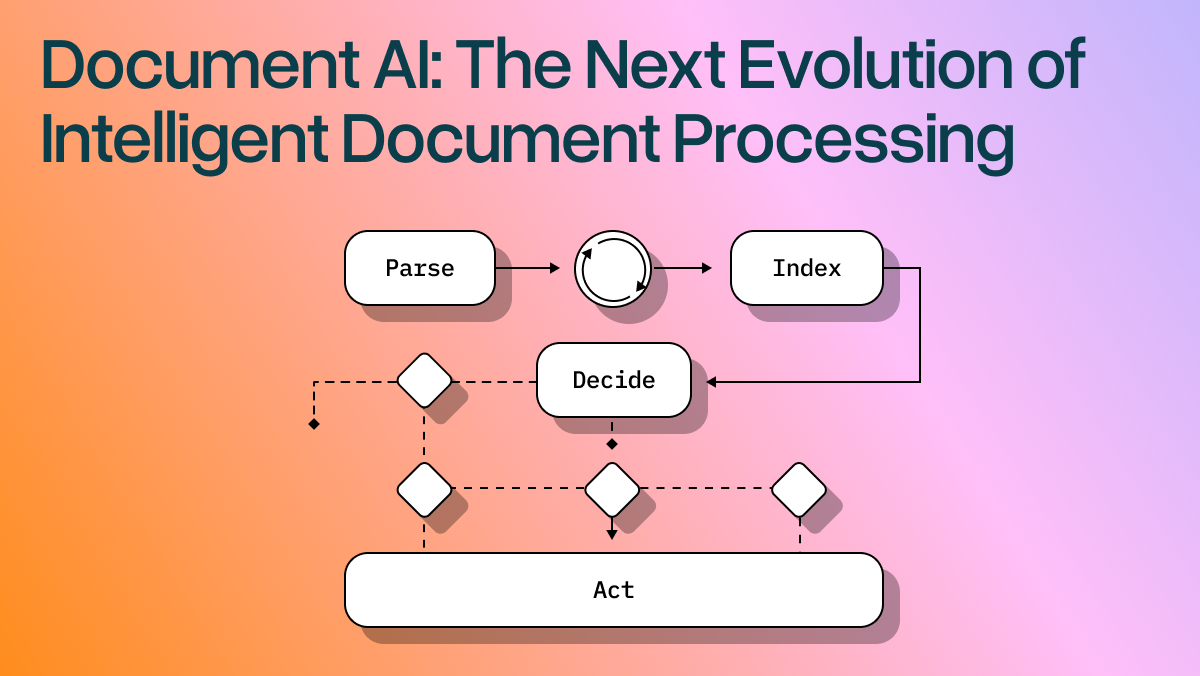

Document AI goes beyond traditional OCR to create intelligent systems that read, understand, and act on documents like humans do. Our latest blog post explains how agentic OCR combined with LLM-powered workflows is transforming document automation across industries:

- 🧠 Agentic OCR interprets visual and semantic structure, achieving 90%+ pass-through rates vs 60-70% with legacy systems
- 📊 Multimodal understanding processes charts, images, and tables that conventional OCR simply cannot interpret
- ⚡ Smart workflows use reasoning instead of rigid rules, adapting dynamically when documents deviate from expectations
- 🔄 Self-correcting error handling attempts corrections and learns from exceptions rather than failing silently

Companies are seeing measurable gains: higher throughput, lower operational costs, faster deployment, and improved compliance with full auditability.

We've built the complete Document AI stack with LlamaCloud - from LlamaParse for structure-aware parsing to LlamaExtract for declarative schema extraction, all orchestrated through our Workflows framework.

Read the full breakdown of how Document AI operates and its business impact: https://t.co/cAlgQT2Lv2

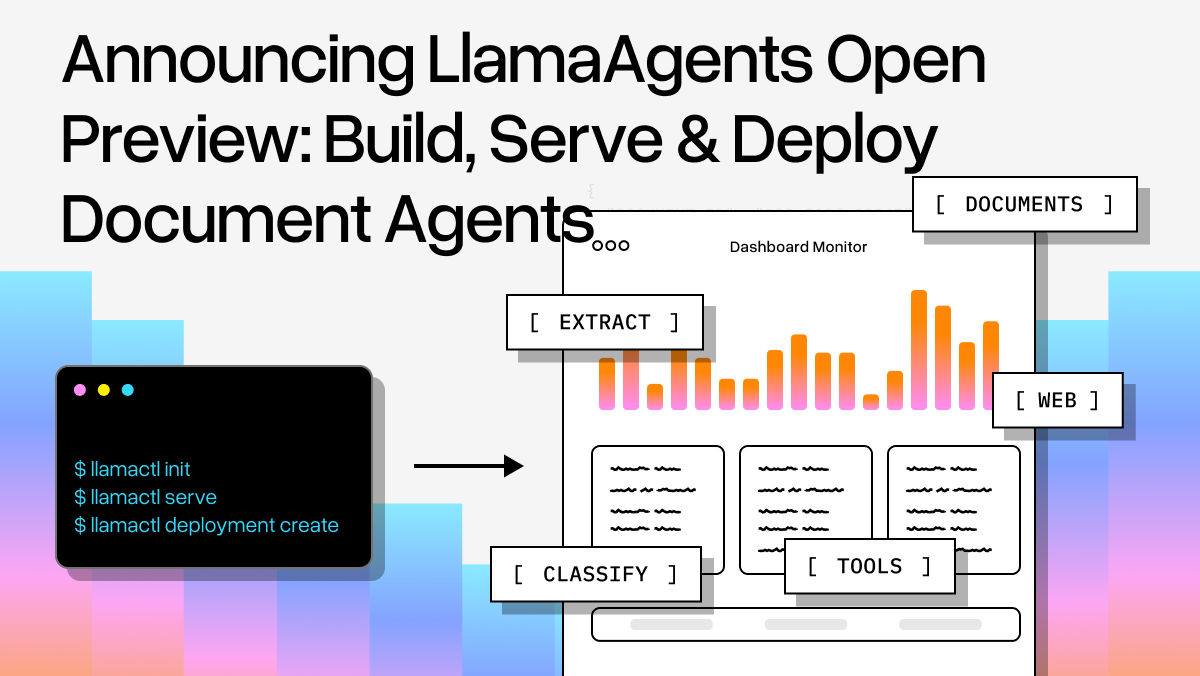

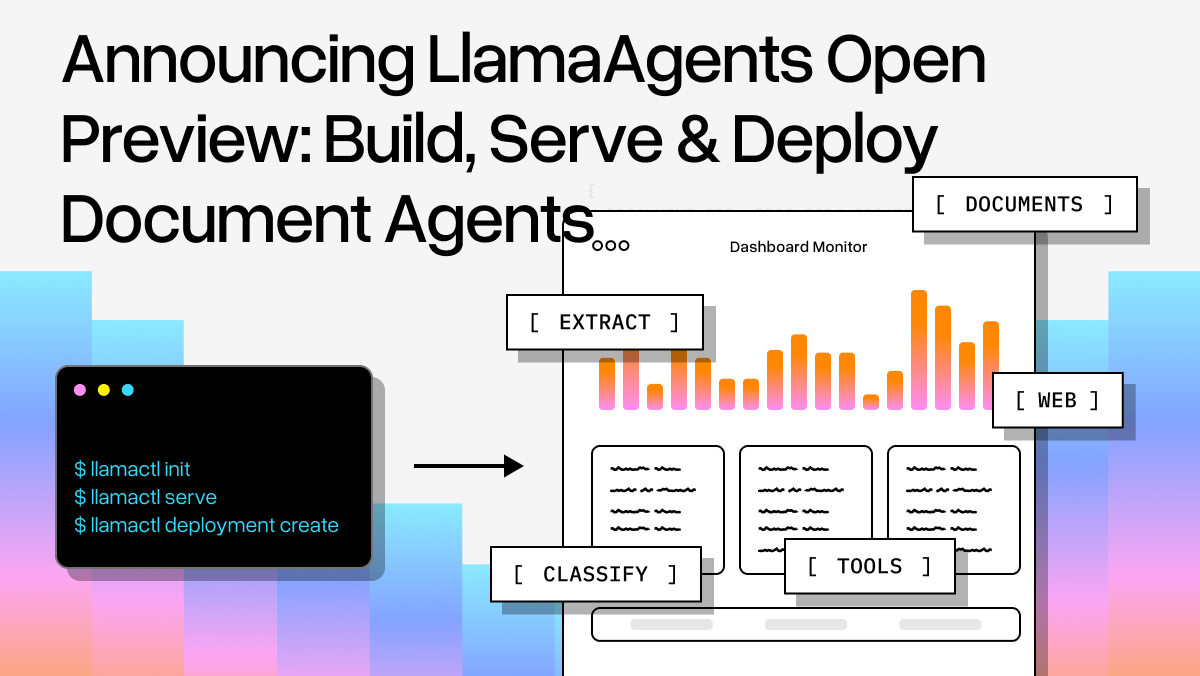

LlamaAgents is now in open preview - the fastest way to build, serve, and deploy multi-step document agents that combine LlamaCloud's document extraction and parsing power with Agent Workflows orchestration.

- 🚀 Get started instantly with pre-built templates for SEC filings, invoice processing, document Q&A and more
- 🛠️ Use llamactl CLI to serve agents locally
- ☁️ Deploy to production in LlamaCloud with a single command by pointing to your git repository
- 📊 Build agents that extract structured data, classify documents, and include human-in-the-loop review steps

Perfect for automating complex document workflows where you need both powerful parsing and precise control over the agent's decision-making process.

Read the full announcement and see the SEC Insights agent demo: https://t.co/6ELWb5JMkl

The @GoogleDeepMind team just dropped Gemini 3, and we at LlamaIndex have day-zero support! We also made a little demo to show how you can leverage the advanced agentic capabilities and structured output accuracy of Gemini 3 to automate your GitHub workflow around PRs. You just need to run: `pip install pr-manager`

👩‍💻 Check out the GitHub repo: https://t.co/Txtdi2vmvx

🎥 Or take a look at the demo below 👇

We’ve built one of the most advanced ways to help you automate knowledge work over your documents.

A lot of document work depends on encoding custom processes. For instance, enforcing custom validation checks, doing web search, integrating with external systems.

LlamaAgents is a full product suite that lets you build and deploy an agentic document extraction workflow, orchestrated purely through code.

- 🚫 It is not a drag-and-drop builder
- ✅ It directly integrates with the LlamaCloud suite: document parsing, extraction, classification, indexing.
- ✅ It lets you orchestrate workflows through code, meaning it’s infinitely customizable
- ✅ It gives you the app deployment layer out of the box - and you can even customize the app layer!

Come check it out: https://t.co/miJCJPj1BA

Docs: https://t.co/nLzTT9hoc4

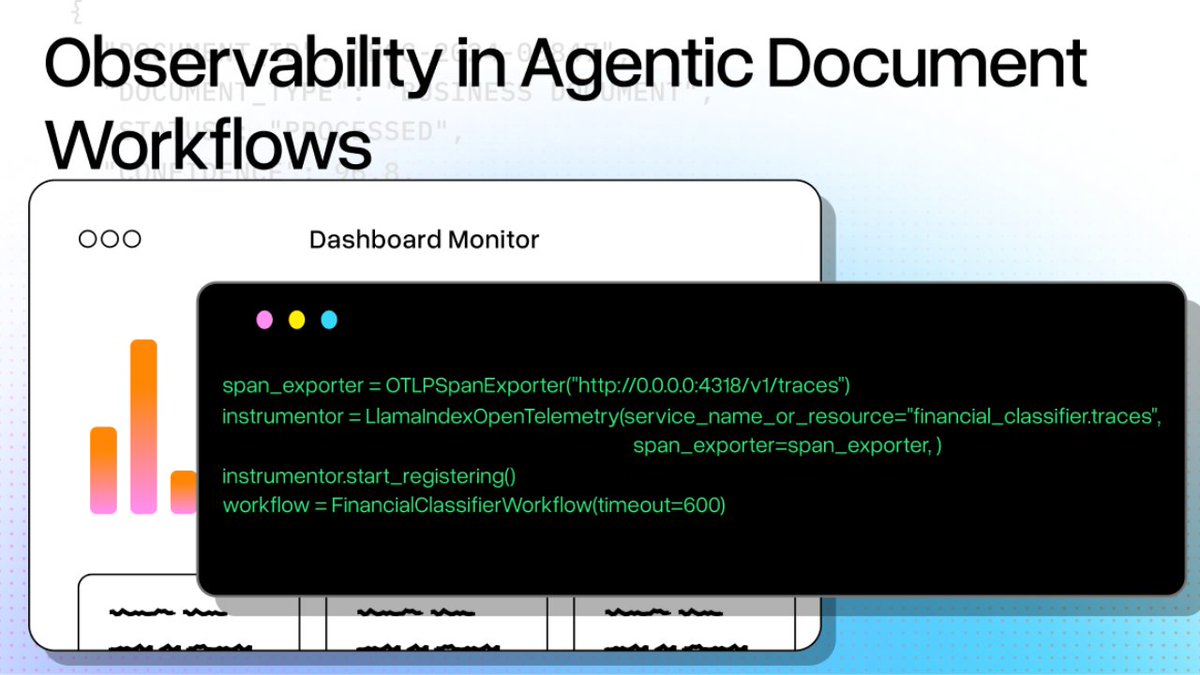

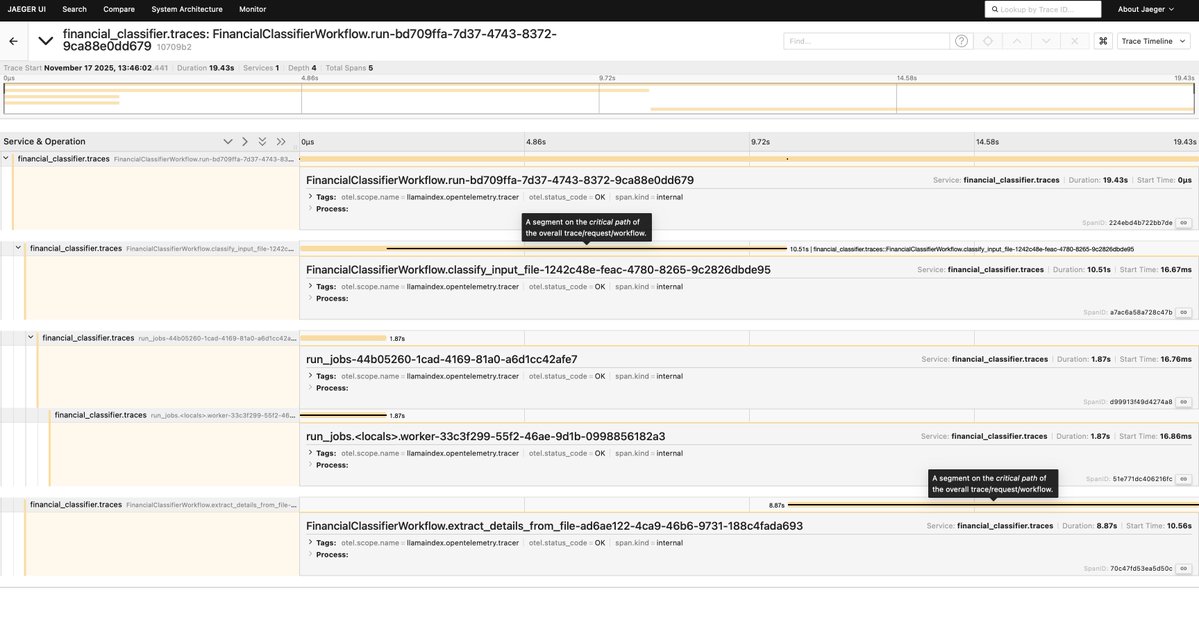

Agentic Document Workflows are crucial for AI-driven knowledge work and automation, but they are often treated as black boxes, which leads to silent failures and unexpected behaviors.

With our Agent Workflows you don't have to worry about not knowing what is happening behind the scenes of your application, thanks to our built-in observability features that you can easily integrate with tracing pipelines.

In our latest blog, @itsclelia shows how you can instrument your workflows to gain reliable insights over how your unstructured documents get turned into structured data, using @opentelemetry and @JaegerTracing.

📚 Read the article: https://t.co/FMPHBClMJo

👩‍💻 Check out the code: https://t.co/8cCP8WwCp0

Not another PDF parser 📄 🤯? Here's why AI-powered document parsing is all the rave.

AI document parsing has evolved beyond OCR to systems that truly understand documents like humans do 🧠 In our latest blog post, we explore what's changing the game:

- 📊 Zero-shot semantic layout reconstruction - LLMs can now understand document structure without templates or training data
- 🔍 Deep multimodal understanding - Processing text, tables, charts, and images together out of the box
- 🤖 Agent engineering approach - Parsers that plan, reflect, and self-correct through reasoning workflows
- ⚙️ Enterprise-ready solutions - Moving beyond basic LLM APIs to production systems with metadata, provenance, and reliability

Read the full article: https://t.co/YuI1oXd3Uk