@dair_ai

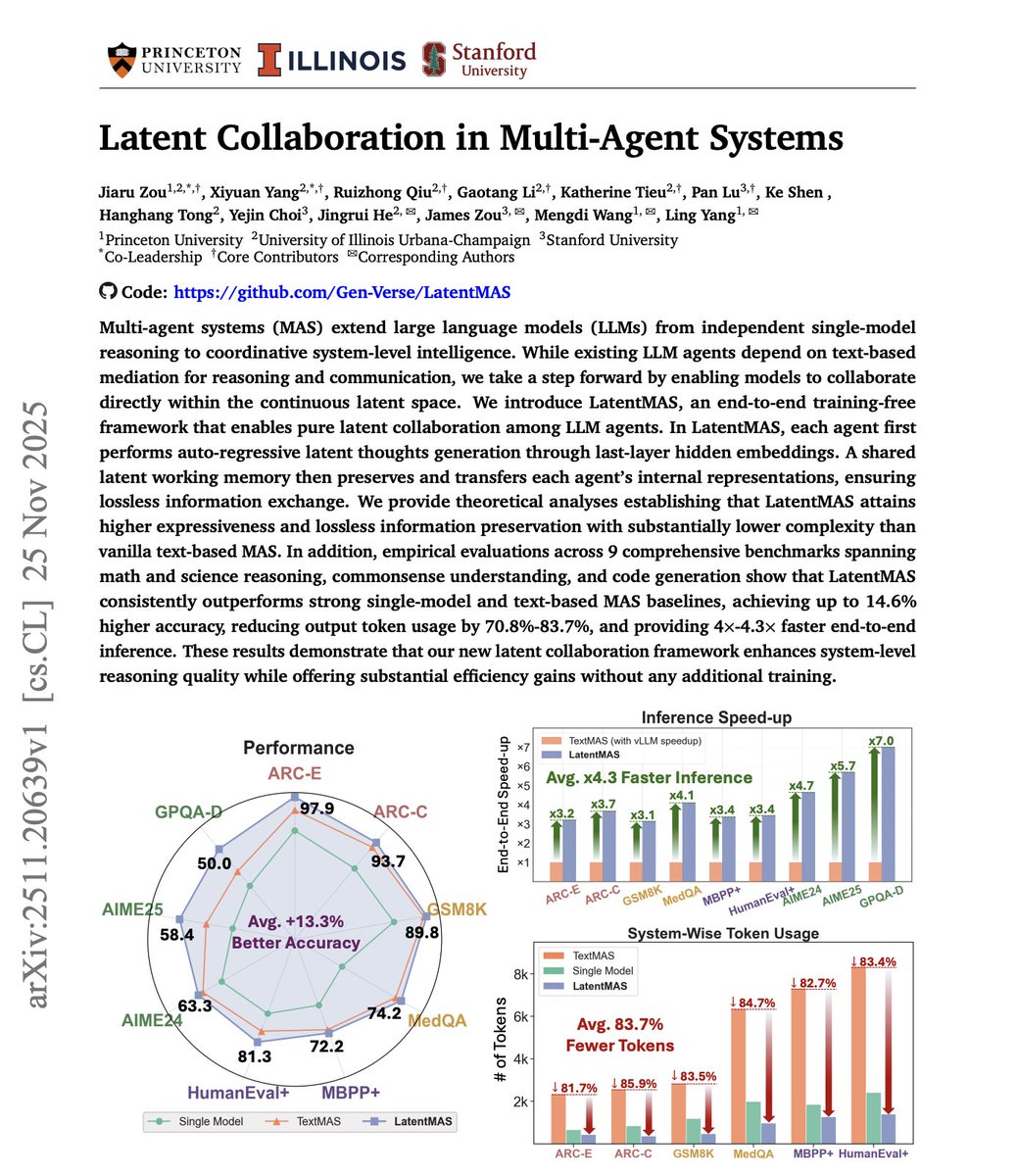

Multi-agent systems are powerful but expensive, and most of that cost doesn't come from the reasoning itself. It comes from the communication.

Agents exchange full text messages, consuming tokens at every coordination step. When agents collaborate on complex problems, this overhead adds up fast: more agents, more messages, more tokens, more cost.

This new research introduces LatentMAS, a framework in which agents communicate through compressed latent vectors instead of natural language.

The key idea: agents don't need to spell everything out in words. They encode task-relevant information into compact hidden representations, and other agents decode what they need. No verbose back-and-forth.

The framework operates in three phases:
- Encoding: agents compress their knowledge into low-dimensional vectors.
- Sharing: these vectors replace text messages between agents.
- Decoding: receiving agents reconstruct what matters.

The design is inspired by how transformers process information internally through hidden states, now applied to inter-agent communication.

What makes this powerful? Communication costs drop significantly while task performance stays intact. The paper provides theoretical guarantees and empirical validation across multiple reasoning benchmarks.

This unlocks practical scalability: AI devs can deploy more agents and run more complex reasoning without a proportional increase in cost.

Paper: https://t.co/nl2OQX7txH

Learn to build AI agents in our academy: https://t.co/zQXQt0PMbG
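To make the encode, share, and decode phases concrete, here is a toy sketch. It is not LatentMAS's actual mechanism. It assumes a fixed orthonormal linear projection shared by all agents as the "encoder", its transpose as the "decoder", and a plain dict as the channel between agents. `DIM`, `LATENT`, `orthonormal_basis`, `encode`, `decode`, and `inbox` are all hypothetical names chosen for illustration.

```python
import math
import random

DIM = 8      # hypothetical hidden-state width of each agent
LATENT = 2   # hypothetical width of the shared latent message

def orthonormal_basis(k, d, seed=0):
    """Build k orthonormal rows of length d via Gram-Schmidt (assumed shared by all agents)."""
    rng = random.Random(seed)
    rows = []
    while len(rows) < k:
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for r in rows:
            dot = sum(a * b for a, b in zip(v, r))
            v = [a - dot * b for a, b in zip(v, r)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:
            rows.append([a / norm for a in v])
    return rows

def encode(hidden, basis):
    """Encoding: project a DIM-wide hidden state onto LATENT directions."""
    return [sum(h * b for h, b in zip(hidden, row)) for row in basis]

def decode(latent, basis):
    """Decoding: map a LATENT-wide message back into DIM-wide hidden space."""
    return [sum(z * row[i] for z, row in zip(latent, basis)) for i in range(len(basis[0]))]

basis = orthonormal_basis(LATENT, DIM)
inbox = {}  # Sharing: a dict standing in for the channel between agents

# Agent A's hidden state = a task-relevant part (inside the shared subspace)
# plus "irrelevant" detail that is orthogonal to that subspace.
rng = random.Random(1)
relevant = decode([3.0, -1.5], basis)
noise = [rng.gauss(0.0, 1.0) for _ in range(DIM)]
noise = [n - p for n, p in zip(noise, decode(encode(noise, basis), basis))]
hidden_a = [r + n for r, n in zip(relevant, noise)]

inbox["B"] = encode(hidden_a, basis)   # A sends LATENT floats instead of a text message
received = decode(inbox["B"], basis)   # B reconstructs only the task-relevant part

print(len(inbox["B"]), "floats sent instead of", DIM)
print(max(abs(a - b) for a, b in zip(received, relevant)) < 1e-9)
```

In this toy setup the message shrinks from 8 numbers to 2, and the receiver recovers the task-relevant component exactly because the discarded detail is orthogonal to the shared subspace. Real hidden states offer no such clean split, which is why a learned or model-derived representation, rather than a fixed random projection, would be needed in practice.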