@dair_ai

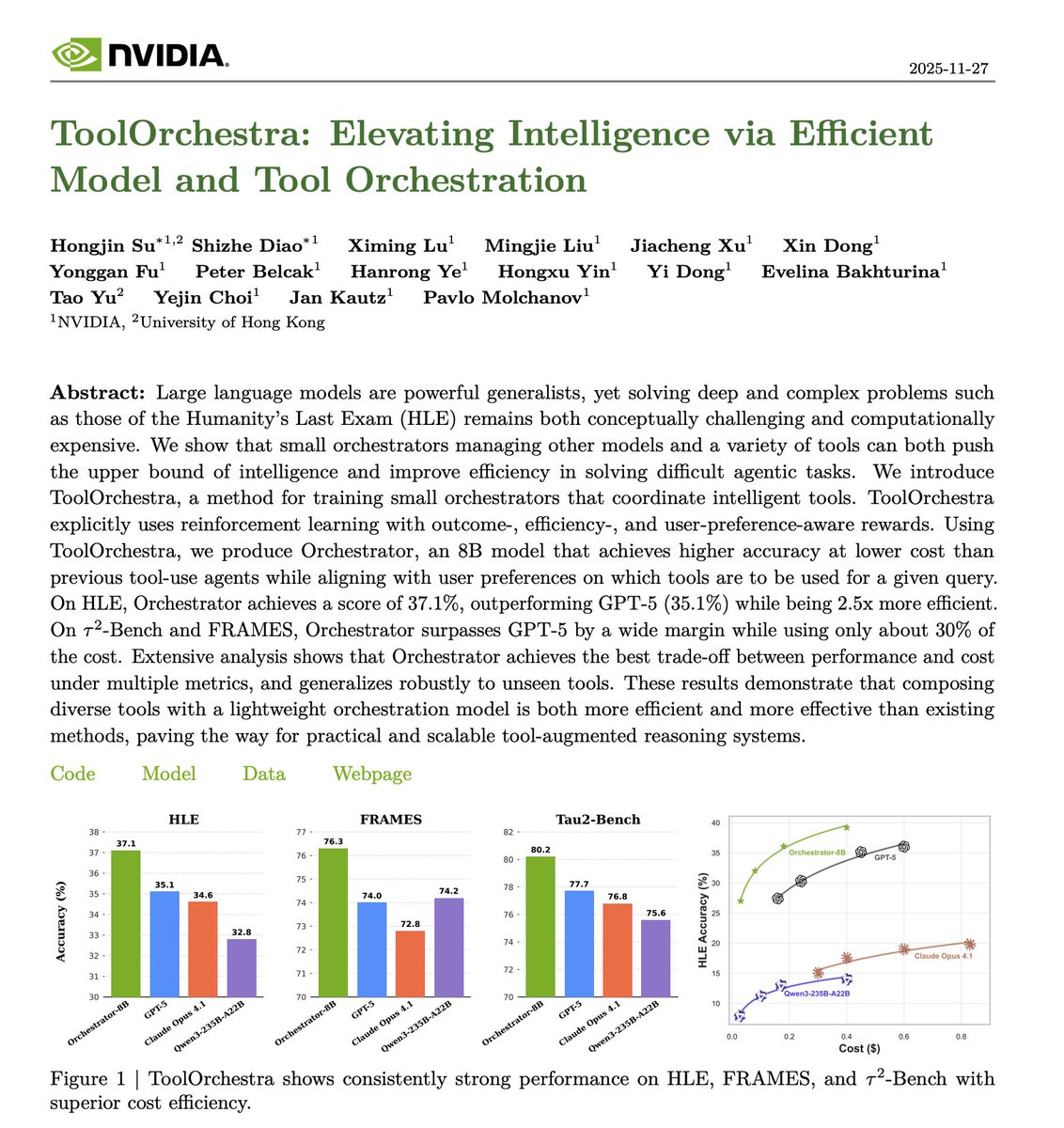

Banger paper from NVIDIA.

Bigger models aren't always the answer. Yet the default approach to improving AI systems today remains scaling up: more parameters, more compute, more cost. Many tasks don't require the full power of a massive model.

This new research introduces ToolOrchestra, a framework that strategically coordinates multiple AI models with external tools based on task complexity. Instead of routing everything through one large model, an orchestrator decides dynamically: When is a tool necessary? Which model size fits the task? How should components coordinate?

The researchers trained Orchestrator-8B, a specialized 8-billion-parameter model that makes the routing decisions. It determines when external tools are needed versus when model inference alone suffices.

On HLE (Humanity's Last Exam), Orchestrator achieves a score of 37.1%, outperforming GPT-5 (35.1%) while being 2.5x more efficient.

They also release ToolScale, a synthetic dataset of tool usage examples across diverse scenarios for training orchestration capabilities.

Why it matters: strategic orchestration of smaller models with targeted tool usage can match or exceed monolithic large model performance while cutting computational overhead.

Paper: https://t.co/iNvqIHGTES

Learn how to build AI Agents in our academy: https://t.co/zQXQt0PMbG
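
To make the routing idea concrete, here is a toy sketch in Python. It is not the paper's method: Orchestrator-8B is a trained model, while this stand-in is a hand-written rule that picks the cheapest option able to handle a task. The option names, capability scores, and costs are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str          # hypothetical label, not from the paper
    kind: str          # "tool" or "model"
    capability: float  # made-up score in [0, 1]
    cost: float        # made-up relative cost per call


# Illustrative pool of components an orchestrator could choose from.
OPTIONS = [
    Option("calculator", "tool", 0.2, 0.01),
    Option("small-model", "model", 0.5, 1.0),
    Option("large-model", "model", 0.9, 10.0),
]


def route(difficulty: float, tool_ok: bool = False) -> Option:
    """Return the cheapest option whose capability covers the task.

    Tools are only eligible when tool_ok is True. If nothing is
    capable enough, fall back to the most capable option.
    """
    candidates = [
        o for o in OPTIONS
        if o.capability >= difficulty and (tool_ok or o.kind == "model")
    ]
    if not candidates:
        return max(OPTIONS, key=lambda o: o.capability)
    return min(candidates, key=lambda o: o.cost)


if __name__ == "__main__":
    for difficulty, tool_ok in [(0.1, True), (0.4, False), (0.8, False)]:
        choice = route(difficulty, tool_ok)
        print(f"difficulty={difficulty} tool_ok={tool_ok} -> {choice.name}")
```

The point of the sketch is only the shape of the decision: easy tasks go to a tool or a small model, and the large model is reserved for tasks that need it. A learned orchestrator would replace the fixed rule with a policy trained on data such as ToolScale.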