This might be my favorite paper of the year 🤯 Rich Sutton claims that current RL methods won't get us to continual learning because they don't compound upon previous knowledge: every rollout starts from scratch. Researchers in Switzerland introduce Meta-RL, which might crack that code. It optimizes across episodes with a meta-learning objective, which incentivizes agents to explore first and then exploit, and then to reflect upon previous failures in future agent runs. Incredible results and an incredible read of a paper overall. Authors: @YulunJiang @LiangzeJ @DamienTeney @Michael_D_Moor @mariabrbic

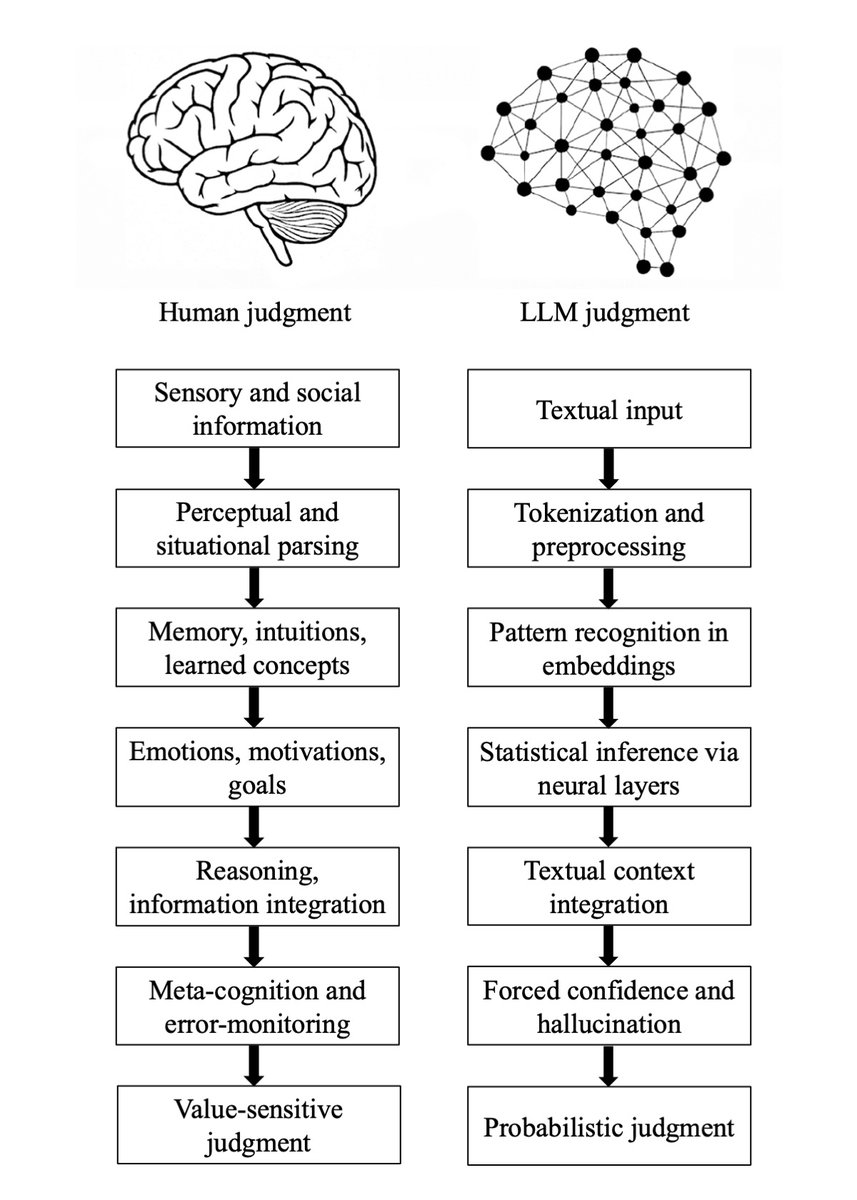

Major preprint just out! We compare how humans and LLMs form judgments across seven epistemological stages. We highlight seven fault lines, points at which humans and LLMs fundamentally diverge:
- The Grounding fault: Humans anchor judgment in perceptual, embodied, and social experience, whereas LLMs begin from text alone, reconstructing meaning indirectly from symbols.
- The Parsing fault: Humans parse situations through integrated perceptual and conceptual processes; LLMs perform mechanical tokenization that yields a structurally convenient but semantically thin representation.
- The Experience fault: Humans rely on episodic memory, intuitive physics and psychology, and learned concepts; LLMs rely solely on statistical associations encoded in embeddings.
- The Motivation fault: Human judgment is guided by emotions, goals, values, and evolutionarily shaped motivations; LLMs have no intrinsic preferences, aims, or affective significance.
- The Causality fault: Humans reason using causal models, counterfactuals, and principled evaluation; LLMs integrate textual context without constructing causal explanations, depending instead on surface correlations.
- The Metacognitive fault: Humans monitor uncertainty, detect errors, and can suspend judgment; LLMs lack metacognition and must always produce an output, making hallucinations structurally unavoidable.
- The Value fault: Human judgments reflect identity, morality, and real-world stakes; LLM "judgments" are probabilistic next-token predictions without intrinsic valuation or accountability.

Despite these fault lines, humans systematically over-believe LLM outputs, because fluent and confident language produces a credibility bias. We argue that this creates a structural condition, Epistemia: linguistic plausibility substitutes for epistemic evaluation, producing the feeling of knowing without actually knowing. To address Epistemia, we propose three complementary strategies: epistemic evaluation, epistemic governance, and epistemic literacy. Full paper in the first reply. Joint with @Walter4C & @matjazperc

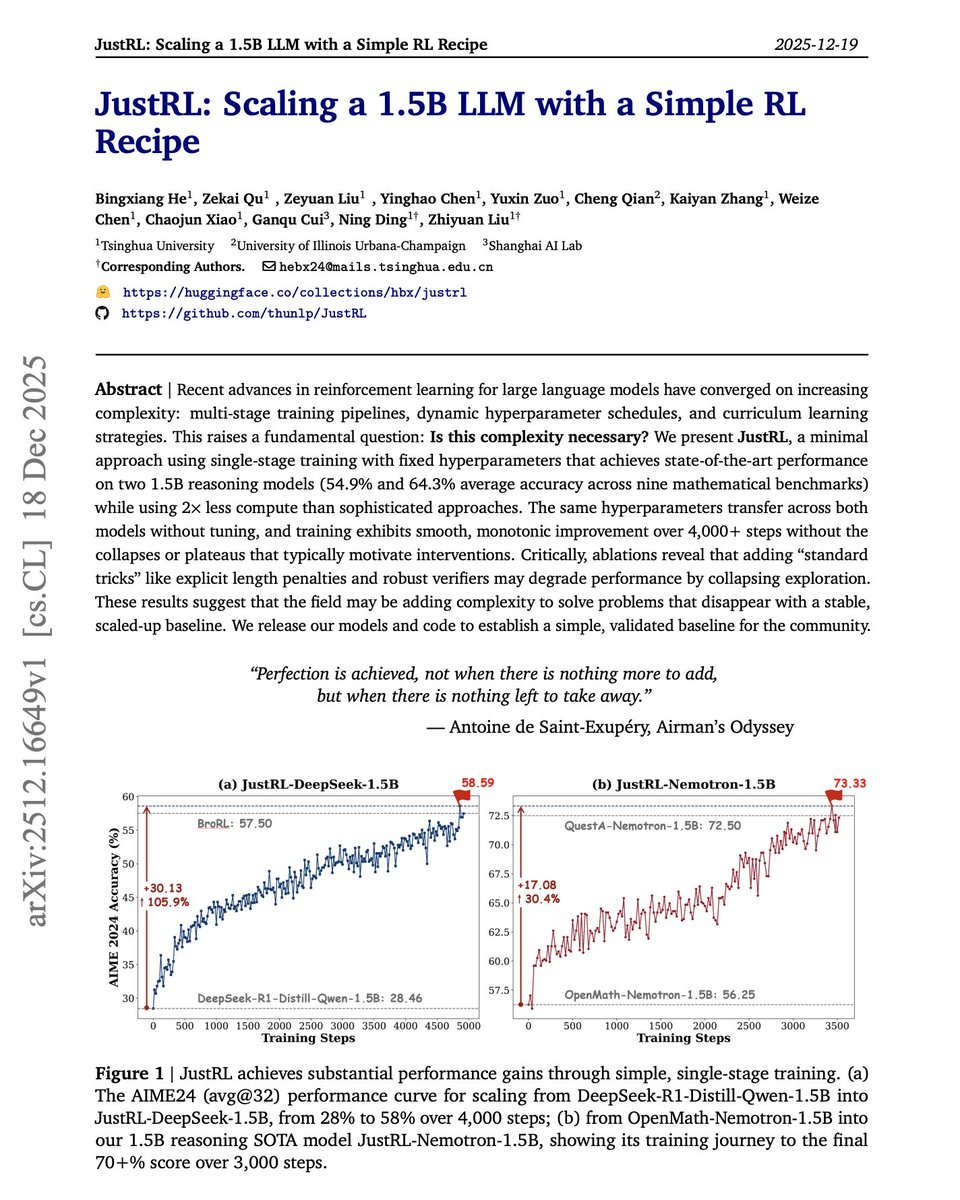

Sometimes less is more. More complexity in RL training isn't always the answer. The default approach to improving small language models with RL today involves multi-stage training pipelines, dynamic hyperparameter schedules, curriculum learning, and length penalties. But what if these techniques are solving problems that simpler approaches never create? This new research introduces JustRL, a minimal RL recipe that uses single-stage training with fixed hyperparameters to achieve state-of-the-art performance on 1.5B reasoning models. They stripped away everything non-essential. No progressive context lengthening. No adaptive temperature scheduling. No mid-training reference model resets. No length penalties. Just basic GRPO with fixed hyperparameters throughout training. Results: JustRL-DeepSeek-1.5B achieves 54.9% average accuracy across nine mathematical benchmarks. JustRL-Nemotron-1.5B reaches 64.3%. The best part: JustRL uses 2x less compute than more sophisticated approaches. On AIME 2024, performance improves from 28% to 58% over 4,000 steps of smooth, monotonic training without the collapses or plateaus that typically motivate complex interventions. Perhaps most surprising: ablations show that adding "standard tricks" like explicit length penalties and robust verifiers actually degrades performance by collapsing exploration. The model naturally compresses responses from 8,000 to 4,000-5,000 tokens without any penalty term. The same hyperparameters transfer across both models without tuning. No per-model optimization required. Paper: https://t.co/88X69gfBbU Learn to build with AI agents in our academy: https://t.co/zQXQt0PMbG
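
For readers unfamiliar with the "basic GRPO" the post refers to, here is a minimal sketch of its group-relative advantage step: each rollout's reward is normalized against the other rollouts sampled for the same prompt. This is not JustRL's code; the function name, the `eps` value, and the use of population standard deviation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against its own group (one group per prompt).

    Assumption: population standard deviation and eps=1e-6; real
    implementations may differ in both.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four rollouts for one prompt, scored 1.0 if the final answer is correct.
demo_advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

Correct rollouts get a positive advantage and incorrect ones a negative advantage. When every rollout in a group receives the same reward, all advantages are zero and that prompt contributes no learning signal.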

Software agents can self-improve via self-play RL. Introducing Self-play SWE-RL (SSR): training a single LLM agent to self-play between bug-injection and bug-repair, grounded in real-world repositories, no human-labeled issues or tests. 🧵

This paper is worth reading carefully. It introduces System 3 for AI Agents. The default approach to LLM agents today relies on System 1 for fast perception and System 2 for deliberate reasoning. But they remain static after deployment. No self-improvement. No identity continuity. No intrinsic motivation to learn beyond assigned tasks. This new research introduces Sophia, a persistent agent framework built on a proposed System 3: a meta-cognitive layer that maintains narrative identity, generates its own goals, and enables lifelong adaptation. Artificial life requires four psychological foundations mapped to computational modules:
- Meta-cognition monitors and audits ongoing reasoning.
- Theory-of-mind models users' beliefs and intentions.
- Intrinsic motivation drives curiosity-based exploration.
- Episodic memory maintains autobiographical context across sessions.

Here is how it works:
- Process-Supervised Thought Search captures and validates reasoning traces.
- A Memory Module maintains a structured graph of goals and experiences.
- Self and User Models track capabilities and beliefs.
- A Hybrid Reward Module blends external task feedback with intrinsic signals like curiosity and mastery.

In a 36-hour continuous deployment, Sophia demonstrated persistent autonomy. During user idle periods, the agent shifted entirely to self-generated tasks. Success rate on hard tasks jumped from 20% to 60% through autonomous self-improvement. Reasoning steps for recurring problems dropped 80% through episodic memory retrieval. This moves agents from transient problem-solvers to adaptive entities with coherent identity, transparent introspection, and open-ended competency growth. Paper: https://t.co/Eyy7mI9P1i Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG

I created this training to get anyone onboarded to Claude Code (whether with a technical or non-technical background). Learn how to build agents and skills. And you will be shipping by the end of the training. Get the early bird discount now: https://t.co/lmI57QlqVs

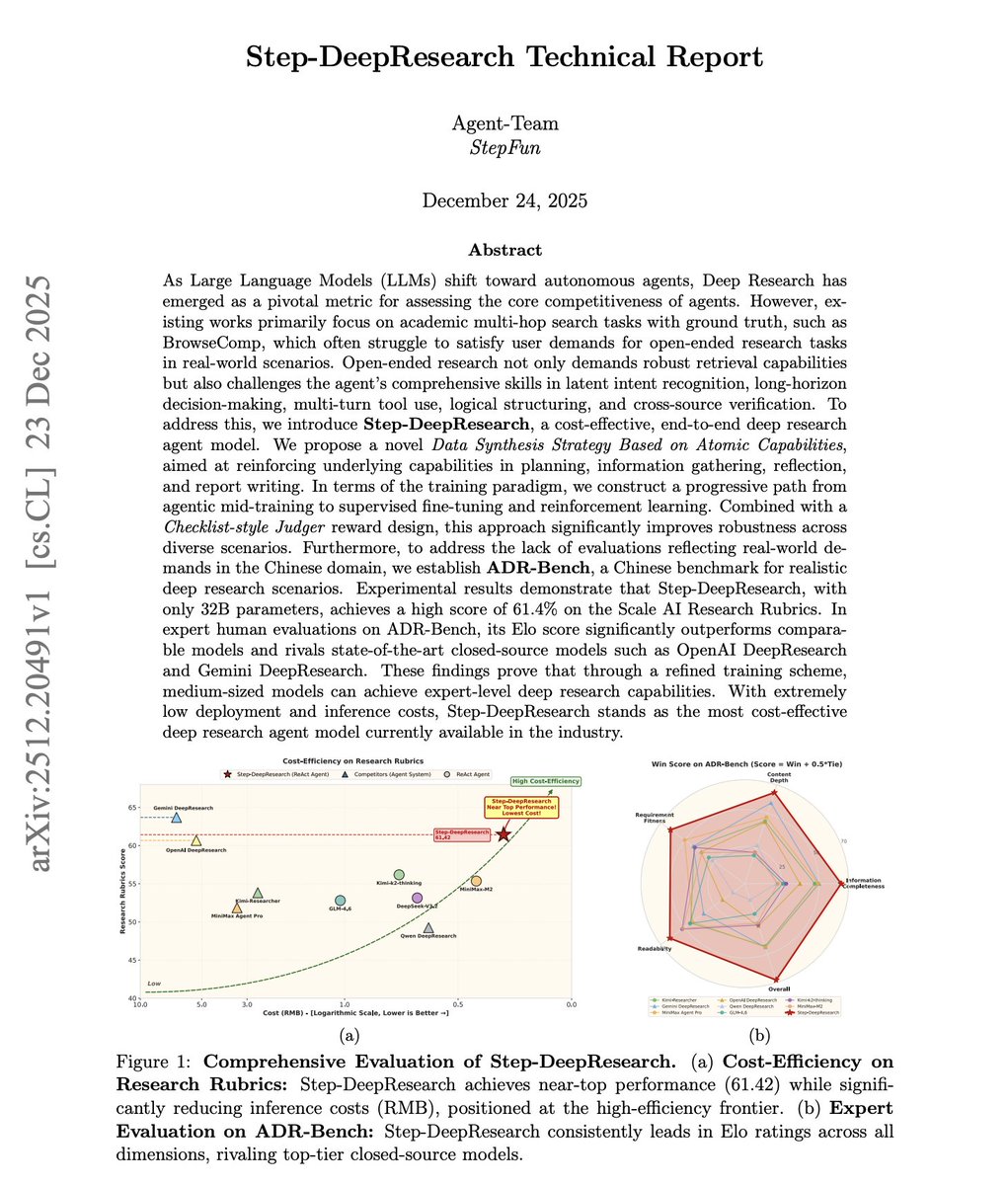

What does it take to build the best cost-efficient deep research agent? Current Deep Research systems optimize for multi-hop retrieval: find scattered facts, chain them together, and then return an answer. They feel more like efficient web crawlers than researchers who synthesize evidence into defensible arguments. But real research requires intent decomposition, planning, cross-source verification, reflection, and structured report writing. This report introduces Step-DeepResearch, a 32B-parameter end-to-end Deep Research agent that rivals OpenAI's and Gemini's proprietary systems at a fraction of the cost. The approach reframes training from predicting the next token to deciding the next atomic action. Four atomic capabilities form the foundation: planning and task decomposition, deep information seeking, reflection and verification, and report generation. They propose a progressive training pipeline from agentic mid-training through supervised fine-tuning to reinforcement learning. Mid-training injects domain knowledge and tool-calling ability across 128K context. SFT composes atomic capabilities into end-to-end research trajectories. RL with a Checklist-style Judger reward optimizes for rubric compliance in real web environments. On Scale AI's ResearchRubrics benchmark, Step-DeepResearch scores 61.42, comparable to OpenAI DeepResearch and Gemini DeepResearch. In expert human evaluations on their new ADR-Bench (Chinese Deep Research scenarios), it outperforms larger models like MiniMax-M2, GLM-4.6, and DeepSeek-V3.2. The architecture is surprisingly simple: a single ReAct-style agent with no multi-agent orchestration or heavyweight workflows. All complexity is internalized through training. Why does this work matter? Medium-sized models can achieve expert-level Deep Research when trained on the right atomic capabilities. The most cost-effective path isn't more parameters or elaborate workflows. It's better data and progressive capability composition.
Paper: https://t.co/am4PjHNfVc Learn to build effective AI agents in our academy: https://t.co/JBU5beIoD0
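
One way to picture a checklist-style reward is as the fraction of rubric items a judge marks as satisfied. The sketch below is only an illustration under that assumption: the post does not say how Step-DeepResearch scores or weights items, and `keyword_judge` is a hypothetical stand-in for what would realistically be an LLM judge.

```python
def checklist_reward(report, checklist, judge):
    """Return the fraction of checklist items the judge marks as satisfied."""
    if not checklist:
        return 0.0
    passed = sum(1 for item in checklist if judge(report, item))
    return passed / len(checklist)


def keyword_judge(report, item):
    """Hypothetical judge: an item passes if its text appears in the report."""
    return item.lower() in report.lower()


demo_report = "The report cites sources and states limitations."
demo_checklist = ["cites sources", "limitations", "cost estimate"]
demo_reward = checklist_reward(demo_report, demo_checklist, keyword_judge)
```

Because the judge is passed in as a callable, the same reward function works whether the judge is a keyword match, a classifier, or a model call.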

Interesting research from Google. Research has shown that neural networks don't just memorize facts. They build internal maps of how those facts relate to each other. The view of how transformers store knowledge is associative: co-occurring entities get stored in a weight matrix, like a lookup table. The embeddings themselves are arbitrary. But this view can't explain something these researchers found. This new research demonstrates that transformers learn implicit multi-hop reasoning when graph edges are stored in weights, even on adversarially-designed tasks where associative memory should fail. On path-star graphs with 50,000 nodes and 10-hop paths, models achieve 100% accuracy on unseen paths. This geometric view of memory challenges foundational assumptions in knowledge acquisition, capacity, editing, and unlearning. If models encode global relationships implicitly, it could enable combinational creativity but also impose limits on precise knowledge manipulation. Paper: https://t.co/Rk68BdRRcG Learn to build effective AI agents in our academy: https://t.co/zQXQt0Pem8

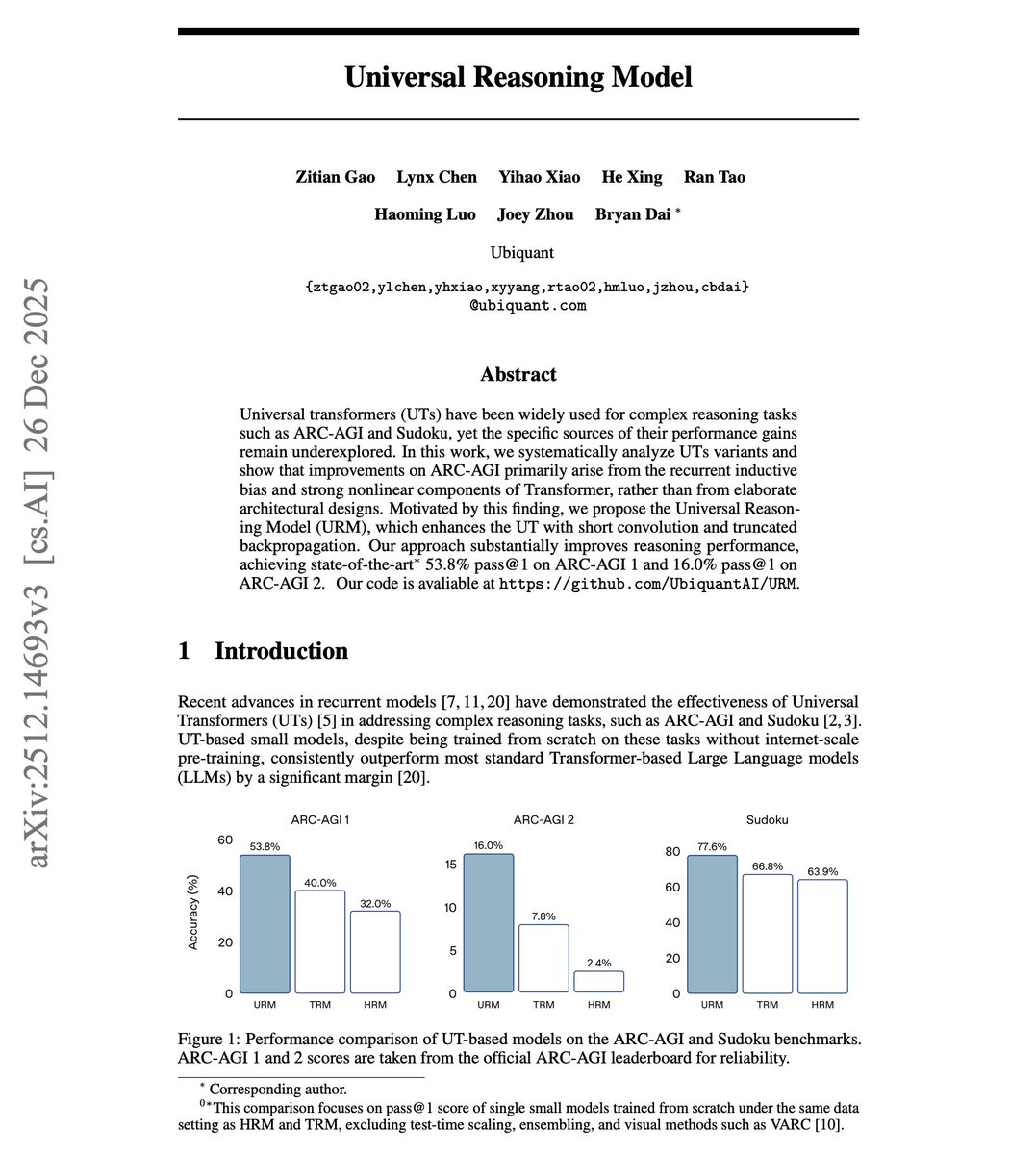

Universal Reasoning Model. Universal Transformers crush standard Transformers on reasoning tasks. But why? Prior work attributed the gains to elaborate architectural innovations like hierarchical designs and complex gating mechanisms. But these researchers found a simpler explanation. This new research demonstrates that the performance gains on ARC-AGI come primarily from two often-overlooked factors: recurrent inductive bias and strong nonlinearity. Applying a single transformation repeatedly works far better than stacking distinct layers for reasoning tasks. With only 4x parameters, a Universal Transformer achieves 40% pass@1 on ARC-AGI 1. Vanilla Transformers with 32x parameters score just 23.75%. Simply scaling depth or width in standard Transformers yields diminishing returns and can even degrade performance. They introduce the Universal Reasoning Model (URM), which enhances this with two techniques. First, ConvSwiGLU adds a depthwise short convolution after the MLP expansion, injecting local token mixing into the nonlinear pathway. Second, Truncated Backpropagation Through Loops skips gradient computation for early recurrent iterations, stabilizing optimization. Results: 53.8% pass@1 on ARC-AGI 1, up from 40% (TRM) and 34.4% (HRM). On ARC-AGI 2, URM reaches 16% pass@1, nearly tripling HRM and more than doubling TRM. Sudoku accuracy hits 77.6%. Ablations:
- Removing short convolution drops pass@1 from 53.8% to 45.3%. Removing truncated backpropagation drops it to 40%.
- Replacing SwiGLU with simpler activations like ReLU tanks performance to 28.6%.
- Removing attention softmax entirely collapses accuracy to 2%.

The recurrent structure converts compute into effective depth. Standard Transformers spend FLOPs on redundant refinement in higher layers. Recurrent computation concentrates the same budget on iterative reasoning. Complex reasoning benefits more from iterative computation than from scale. Small models with recurrent structure outperform large static models on tasks requiring multi-step abstraction.
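
To make "applying a single transformation repeatedly" versus "stacking distinct layers" concrete, here is a toy sketch that uses a plain `tanh(W @ x)` step in place of a Transformer block. It illustrates weight sharing only; it is not the URM architecture, and all names in it are made up for the example.

```python
import math


def apply_layer(weights, x):
    """One nonlinear transformation, tanh(W @ x), written with plain lists."""
    return [math.tanh(sum(w * v for w, v in zip(row, x))) for row in weights]


def universal_forward(shared_weights, x, n_loops):
    """Recurrent depth: the same weights are reused on every iteration."""
    for _ in range(n_loops):
        x = apply_layer(shared_weights, x)
    return x


def stacked_forward(layer_weights, x):
    """Standard depth: every layer has its own weights."""
    for weights in layer_weights:
        x = apply_layer(weights, x)
    return x


def count_params(matrices):
    """Count scalar parameters across a list of weight matrices."""
    return sum(len(row) for matrix in matrices for row in matrix)


W = [[0.5, -0.2], [0.1, 0.3]]
shared_params = count_params([W])        # one matrix, reused 8 times
stacked_params = count_params([W] * 8)   # eight matrices, one per layer
```

Both forward passes run eight transformation steps, but the recurrent version stores one set of weights while the stacked version stores eight. That is the sense in which recurrence converts compute into effective depth without adding parameters.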

AI-powered scientists are starting to take off! This paper introduces PHYSMASTER, an LLM-based agent designed to operate as an autonomous theoretical and computational physicist. The goal is to go from an AI co-scientist to an autonomous AI scientist in fundamental physics. PHYSMASTER uses Monte Carlo Tree Search for adaptive exploration, hierarchical agent collaboration for complex tasks, and LANDAU, a layered knowledge base preserving retrieved papers, curated prior knowledge, and validated methodology traces for reuse. Five case studies spanning from the cosmos to elementary particles demonstrate its capability: Two acceleration cases: PHYSMASTER compressed labor-intensive engineering work that typically takes a senior PhD 1-3 months into less than 6 hours. Two automation cases: Given human-specified hypotheses, PHYSMASTER executed end-to-end exploration loops in 1 day rather than unpredictable months. One autonomous discovery case: PHYSMASTER independently explored an open problem in semi-leptonic decays of charmed mesons, constructing the effective Hamiltonian and predicting decay amplitudes using SU(3) flavor symmetry. Paper: https://t.co/gU6r38rOSM Learn to build effective AI agents in our academy: https://t.co/zQXQt0Pem8

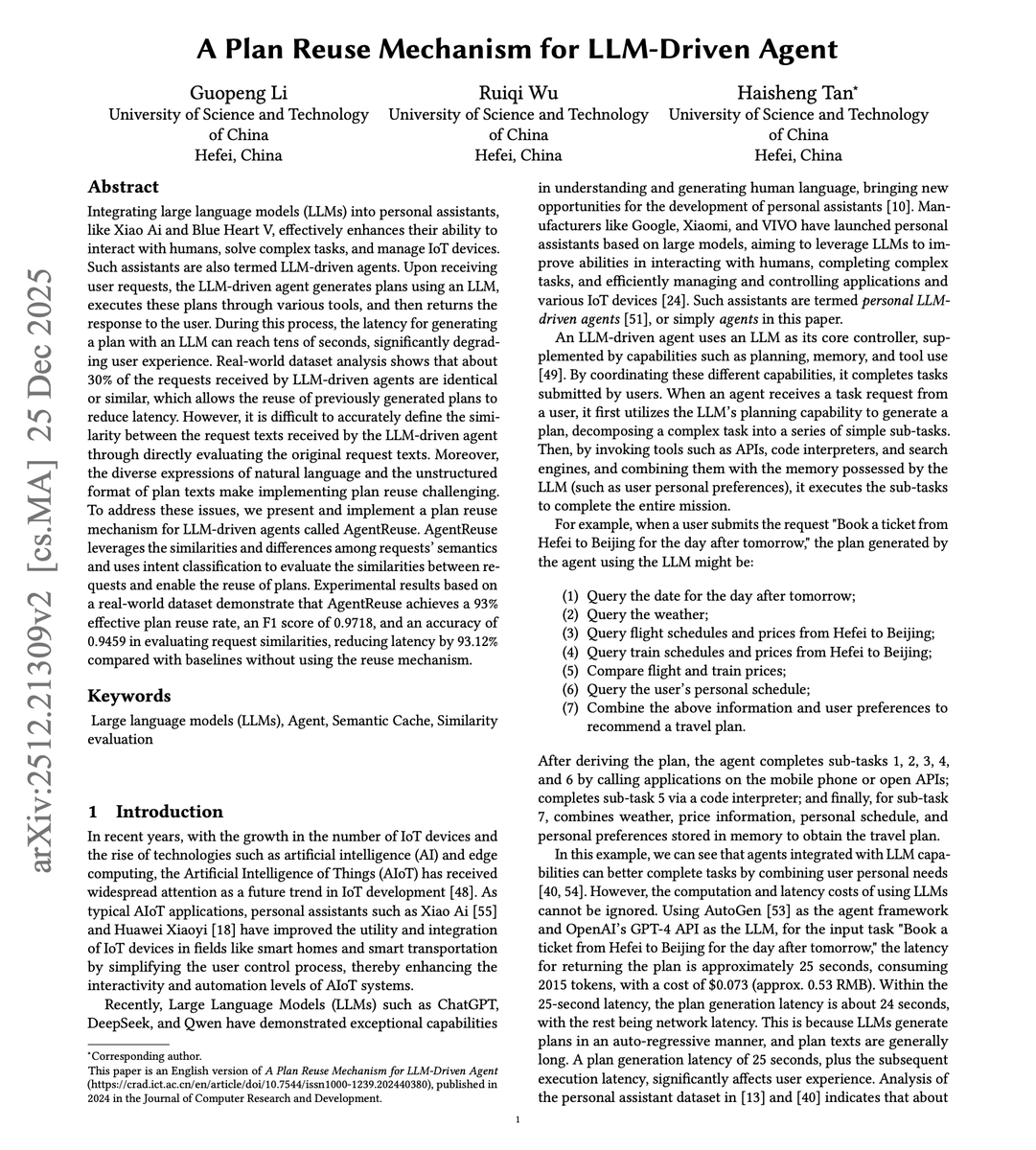

Great read for AI devs. (bookmark it) LLM agents are slow. The bottleneck in complex agentic systems today is the planning part. Plan generation alone can take 25+ seconds for task requests. This compounds fast at scale. Real-world dataset analysis shows about 30% of requests received by LLM-driven agents are semantically identical or similar. This new paper introduces AgentReuse, a plan reuse mechanism that caches and retrieves previously generated plans based on semantic similarity. Two requests like "Book a ticket from Hefei to Beijing for tomorrow" and "Book a ticket from Changsha to Shanghai for Friday" differ in parameters but share an identical task structure. The plan steps are the same. Only the key values change. Using these insights, AgentReuse separates intent from parameters. It extracts key parameters (time, origin, destination), classifies intent, and then performs similarity matching on the parameter-stripped request. When a match exists, it injects new parameters into the cached plan and executes directly. On a real-world dataset of 2,664 task requests, AgentReuse achieves a 93% effective plan reuse rate. F1 score of 0.9718. Accuracy of 0.9459. Latency was reduced by 93.12% compared to no caching and 60.61% compared to GPTCache. The overhead is minimal. ~100MB additional VRAM, less than 1MB memory per request, and under 10ms processing latency per request. Plan generation that previously took 25-30 seconds becomes a cache lookup. Agents don't need to regenerate plans for structurally similar tasks. Semantic caching at the plan level, not the response level, unlocks massive latency reduction while preserving accuracy for dynamic, real-time information. I am sure this can inspire more general patterns that speed up coding agents. Remains to be seen, but it seems like a cool idea to apply in that domain. Paper: https://t.co/oIF1o44Zrl Learn to build effective AI agents in our academy: https://t.co/JBU5beIoD0
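
The separation of intent from parameters can be sketched as follows. This is a rough illustration, not AgentReuse itself: the regular expression, the `PlanCache` class, and `slow_planner` are all hypothetical, and exact template matching stands in for the semantic similarity search the paper describes.

```python
import re

# Hypothetical slot pattern covering the ticket-booking example in the post.
SLOT_PATTERN = re.compile(
    r"from (?P<origin>\w+) to (?P<destination>\w+) for (?P<time>\w+)"
)
SLOT_TEMPLATE = "from {origin} to {destination} for {time}"


def split_request(request):
    """Return (parameter-stripped template, extracted parameters)."""
    match = SLOT_PATTERN.search(request)
    if match is None:
        return request, {}
    return SLOT_PATTERN.sub(SLOT_TEMPLATE, request), match.groupdict()


class PlanCache:
    """Cache plans by template; exact match stands in for semantic matching."""

    def __init__(self, planner):
        self._planner = planner
        self._plans = {}
        self.planner_calls = 0

    def get_plan(self, request):
        template, params = split_request(request)
        if template not in self._plans:
            self.planner_calls += 1
            self._plans[template] = self._planner(template)
        # Inject this request's parameters into the cached plan steps.
        return [step.format(**params) for step in self._plans[template]]


def slow_planner(template):
    """Stand-in for the expensive LLM planning call."""
    return [
        "search tickets {origin} -> {destination} on {time}",
        "select an option",
        "book ticket",
    ]


cache = PlanCache(slow_planner)
plan_a = cache.get_plan("Book a ticket from Hefei to Beijing for tomorrow")
plan_b = cache.get_plan("Book a ticket from Changsha to Shanghai for Friday")
```

Both requests reduce to the same template, so the planner runs once and the second request only pays for parameter extraction and a dictionary lookup.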

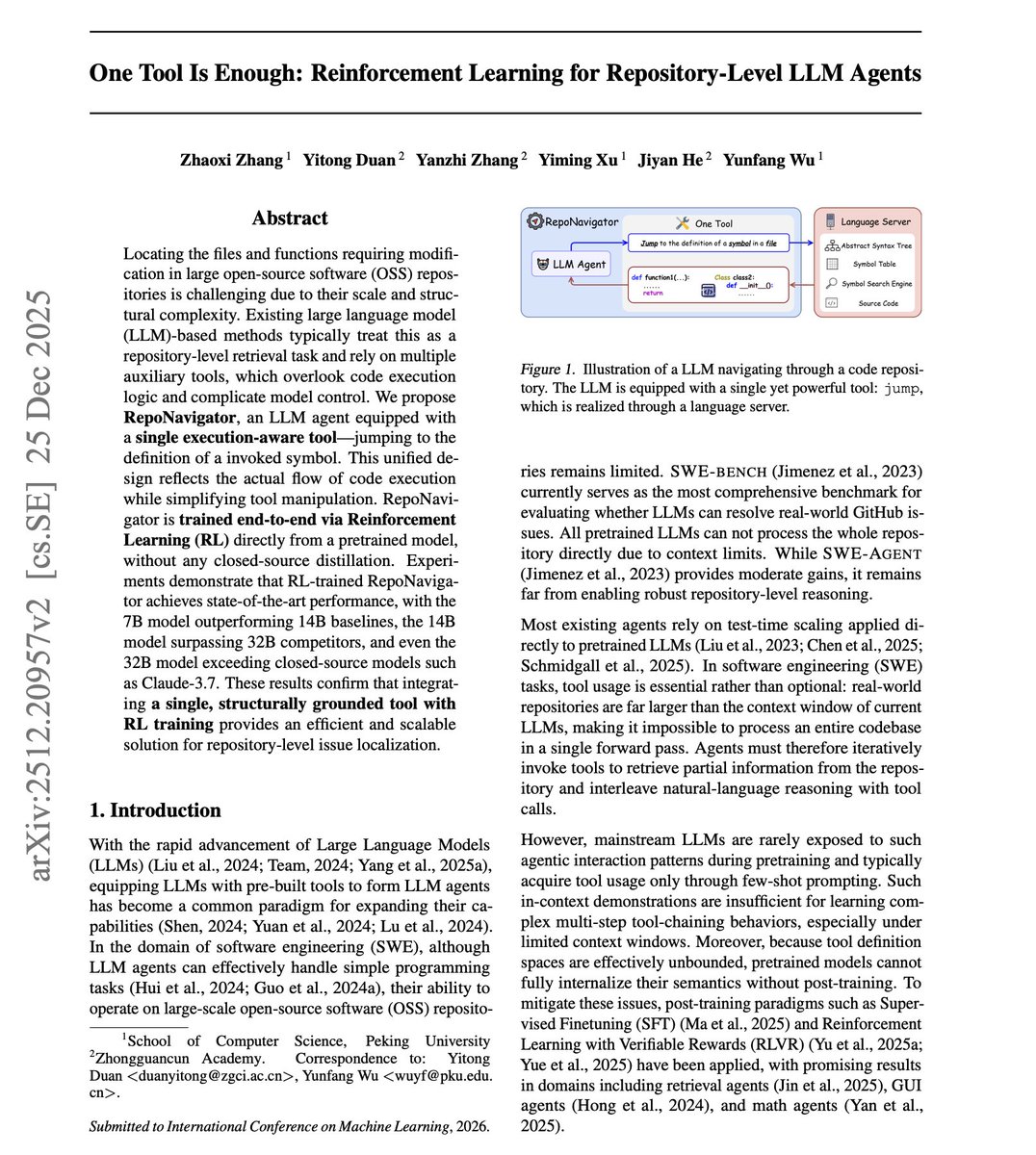

One Tool is Enough. When building AI agents, more tools often lead to more failure points. This new research introduces RepoNavigator, an LLM agent equipped with a single execution-aware tool: jump. It retrieves the definition of any symbol in a given file, mirroring actual code execution semantics. They find that a single capable tool outperforms multiple narrow-scope tools executed in sequence. RepoNavigator is trained end-to-end via RL directly from pretrained Qwen models, with no closed-source distillation required. The reward combines localization accuracy (Dice score) with tool-calling success rate. On SWE-bench Verified, the 7B model outperforms 14B baselines. The 14B model surpasses 32B competitors. The 32B model exceeds Claude-3.7-Sonnet. The access scope of "jump" is inherently smaller than the full repository. By recursively resolving symbol references from an entry point, the tool traverses only semantically relevant code paths. This constrained search space yields higher precision without sacrificing recall. Ablations confirm that adding tools like GetClass, GetFunc, and GetStruc actually decreases performance. IoU drops from 24.28% with jump alone to 13.71% with all four tools. Tool design for agents should prioritize capability over quantity. One execution-aware tool, jointly optimized with RL, provides stronger robustness than multi-tool pipelines.
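
The two localization metrics mentioned above, Dice score and IoU, can be computed over sets of predicted and ground-truth code locations. The sketch below uses their standard set definitions; the location strings and the convention that two empty sets score 1.0 are assumptions, since the post does not state the granularity RepoNavigator uses.

```python
def dice_score(predicted, gold):
    """Dice = 2|A ∩ B| / (|A| + |B|) over sets of code locations."""
    if not predicted and not gold:
        return 1.0  # assumption: two empty sets count as a perfect match
    return 2 * len(predicted & gold) / (len(predicted) + len(gold))


def iou(predicted, gold):
    """IoU = |A ∩ B| / |A ∪ B| over sets of code locations."""
    union = predicted | gold
    if not union:
        return 1.0  # same empty-set assumption as above
    return len(predicted & gold) / len(union)


# Hypothetical locations written as "file::symbol".
predicted_locations = {"a.py::parse", "b.py::render"}
gold_locations = {"a.py::parse", "c.py::load"}
demo_dice = dice_score(predicted_locations, gold_locations)
demo_iou = iou(predicted_locations, gold_locations)
```

For any pair of sets, Dice is at least as large as IoU, so the two numbers are not directly comparable.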

Designing RL curricula for robots is tedious and brittle. But what if LLMs could design the entire curriculum from a natural language prompt? This new research introduces AURA, a framework where specialized LLM agents autonomously design multi-stage RL curricula. You describe the task in plain English, and AURA generates complete YAML specifications for rewards, domain randomization, and training configs. Three key ideas make this work:
- First, a typed YAML schema validates all outputs before any GPU cycles are spent, catching errors through static checks rather than failed training runs.
- Second, a multi-agent architecture decomposes the problem: a high-level planner designs the curriculum structure while stage-level agents handle the details.
- Third, a retrieval-augmented feedback loop stores prior curricula and outcomes in a vector database, letting agents learn from experience across tasks.

AURA achieves a 99% training-launch success rate. Without schema validation, success drops to 47%. Without the vector database, it falls to 38%. A single-agent setup manages only 7%. By comparison, CurricuLLM achieves 31%, and Eureka reaches 12-49% depending on task complexity. Paper: https://t.co/1ZYoi1DbzH Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG
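
The idea of catching errors through static checks before any training run can be sketched with a small type check over an already-parsed curriculum stage. This is not AURA's schema: the field names (`name`, `num_steps`, `reward_weights`) are hypothetical, and the YAML is assumed to have been loaded into plain Python dictionaries beforehand.

```python
# Hypothetical stage schema: field name -> expected Python type.
STAGE_SCHEMA = {"name": str, "num_steps": int, "reward_weights": dict}


def validate_stage(stage):
    """Return a list of error strings; an empty list means the stage passes."""
    errors = []
    for field, expected_type in STAGE_SCHEMA.items():
        if field not in stage:
            errors.append(f"missing field: {field}")
            continue
        value = stage[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            errors.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    weights = stage.get("reward_weights")
    if isinstance(weights, dict):
        for term, weight in weights.items():
            if isinstance(weight, bool) or not isinstance(weight, (int, float)):
                errors.append(f"reward_weights.{term}: expected a number")
    return errors


good_stage = {"name": "stand", "num_steps": 1000, "reward_weights": {"upright": 1.0}}
bad_stage = {"name": "walk", "num_steps": "1000", "reward_weights": {"forward": "high"}}
good_errors = validate_stage(good_stage)
bad_errors = validate_stage(bad_stage)
```

A generated curriculum would only be launched when every stage returns an empty error list; otherwise the errors can be fed back to the generating agent at no GPU cost.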

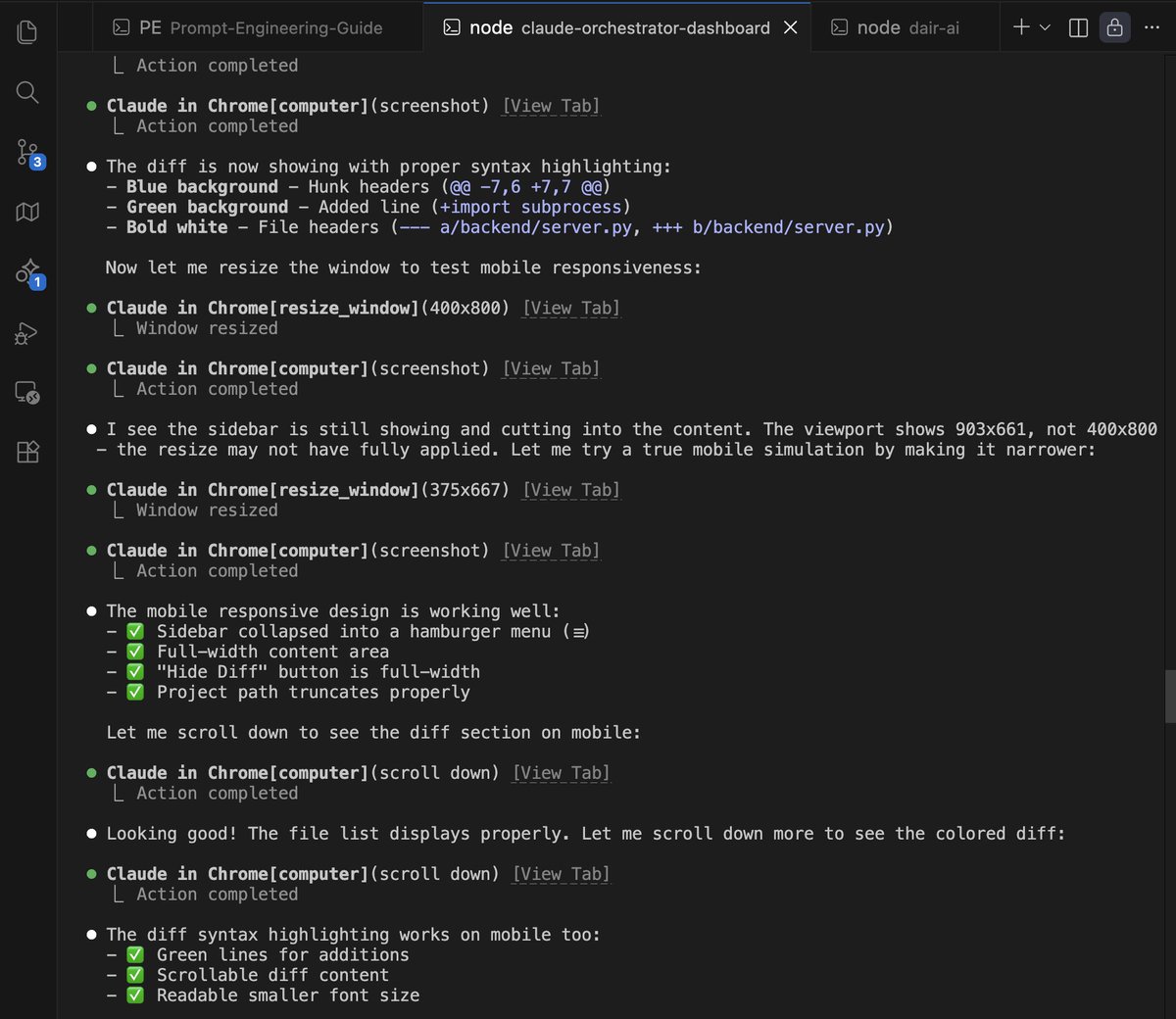

It's insane how good this Claude-in-Chrome tool is. I use the tool by default to fix all design issues with Claude Code. Fixes 100% of design issues. I don't even bother fixing design issues myself. I now just queue them for Claude Code to fix them automatically in one go. https://t.co/wE4xLrHS3j

Professional Software Developers Don’t Vibe, They Control. Vibe coding isn't how experienced developers actually use AI agents. The term has exploded online. Practitioners describe an experience of flow and joy, trusting the AI fully, forgetting code exists, and never reading diffs. But what do professionals with years of experience actually do? This new research investigates through field observations (N=13) and qualitative surveys (N=99) of experienced developers with 3 to 41 years of professional experience. The key finding: professionals don't vibe. They control. 100% of observed developers controlled software design and implementation, regardless of task familiarity. 50 of the 99 survey respondents mentioned driving architectural requirements themselves. On average, developers modify agent-generated code about half the time. How do they control? Through detailed prompting with clear context and explicit instructions (12x observations, 43x survey). Through plans written to external files with 70+ steps that are executed only 5-6 steps at a time. Through user rules that enforce project specifications and correct agent behavior from prior interactions. What works with agents? Small, straightforward tasks (33:1 suitable-to-unsuitable ratio). Tedious, repetitive work (26:0). Scaffolding and boilerplate (25:0). Following well-defined plans (28:2). Writing tests (19:2) and documentation (20:0). What fails? Complex tasks requiring domain knowledge (3:16). Business logic (2:15). One-shotting code without modification (5:23). Integrating with existing or legacy code (3:17). Replacing human decision making (0:12). Developers rated enjoyment at 5.11/6 compared to working without agents. But the enjoyment comes from collaboration, not delegation. As one developer put it: "I do everything with assistance, but never let the agent be completely autonomous. I am always reading the output and steering."
The gap between social media claims of autonomous agent swarms and actual professional practice is stark. Experienced developers succeed by treating agents as controllable collaborators, not autonomous workers. Paper: https://t.co/QDr77aEwSF Learn to build effective AI agents in our academy: https://t.co/JBU5beIoD0

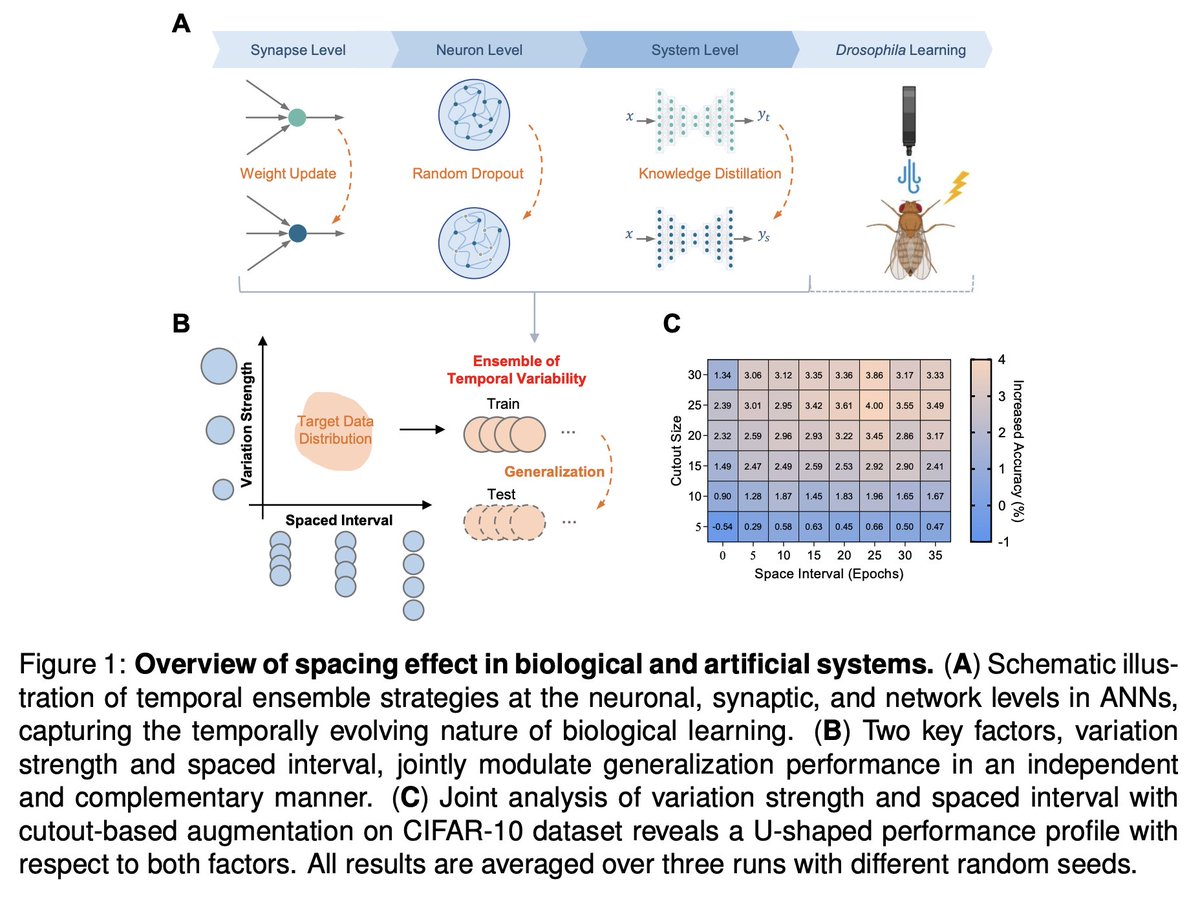

Amazing finding! Drawing inspiration from biological memory systems, specifically the well-documented "spacing effect," researchers have demonstrated that introducing spaced intervals between training sessions significantly improves generalization in artificial systems. Paper: https://t.co/OTnjDvpYlv Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG

Everyone's freaking out about vibe coding. In the holiday spirit, allow me to share my anxiety on the wild west of robotics. 3 lessons I learned in 2025.

1. Hardware is ahead of software, but hardware reliability severely limits software iteration speed. We've seen exquisite engineering arts like Optimus, e-Atlas, Figure, Neo, G1, etc. Our best AI has not squeezed all the juice out of this frontier hardware. The body is more capable than what the brain can command. Yet babysitting these robots demands an entire operation team. Unlike humans, robots don't heal from bruises. Overheating, broken motors, bizarre firmware issues haunt us daily. Mistakes are irreversible and unforgiving. My patience was the only thing that scaled.
2. Benchmarking is still an epic disaster in robotics. LLM normies thought MMLU & SWE-Bench are common sense. Hold your 🍺 for robotics. No one agrees on anything: hardware platform, task definition, scoring rubrics, simulator, or real world setups. Everyone is SOTA, by definition, on the benchmark they define on the fly for each news announcement. Everyone cherry-picks the nicest looking demo out of 100 retries. We gotta do better as a field in 2026 and stop treating reproducibility and scientific discipline as second-class citizens.
3. VLM-based VLA feels wrong. VLA stands for "vision-language-action" model and has been the dominant approach for robot brains. Recipe is simple: take a pretrained VLM checkpoint and graft an action module on top. But if you think about it, VLMs are hyper-optimized to hill-climb benchmarks like visual question answering. This implies two problems: (1) most parameters in VLMs are for language & knowledge, not for physics; (2) visual encoders are actively tuned to *discard* low-level details, because Q&A only requires high-level understanding. But minute details matter a lot for dexterity. There's no reason for VLA's performance to scale as VLM parameters scale. Pretraining is misaligned. A video world model seems to be a much better pretraining objective for robot policy. I'm betting big on it.

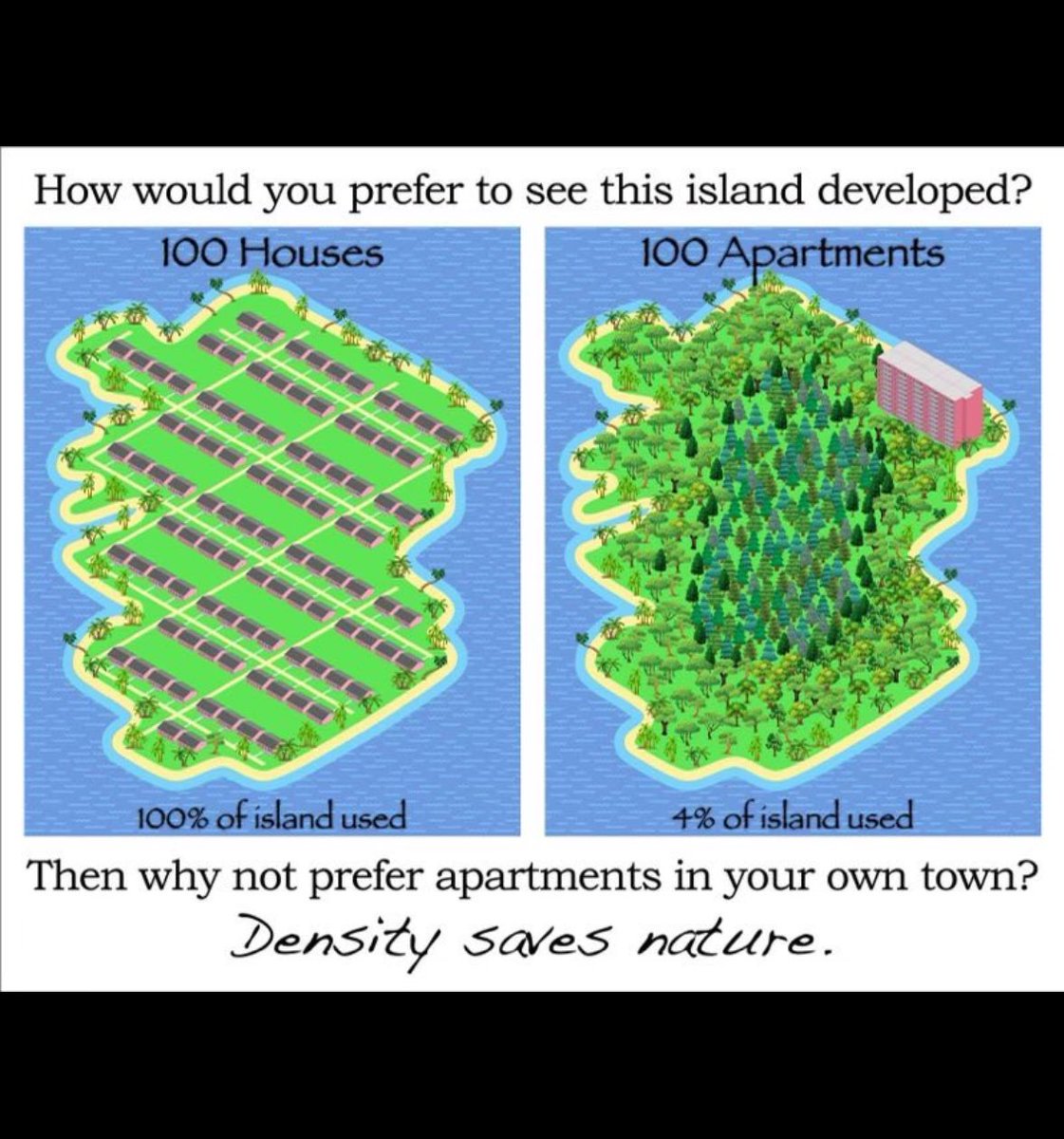

it’s really funny how people think these are somehow incompatible or contradictory values in any way whatsoever https://t.co/PCM5cV5819

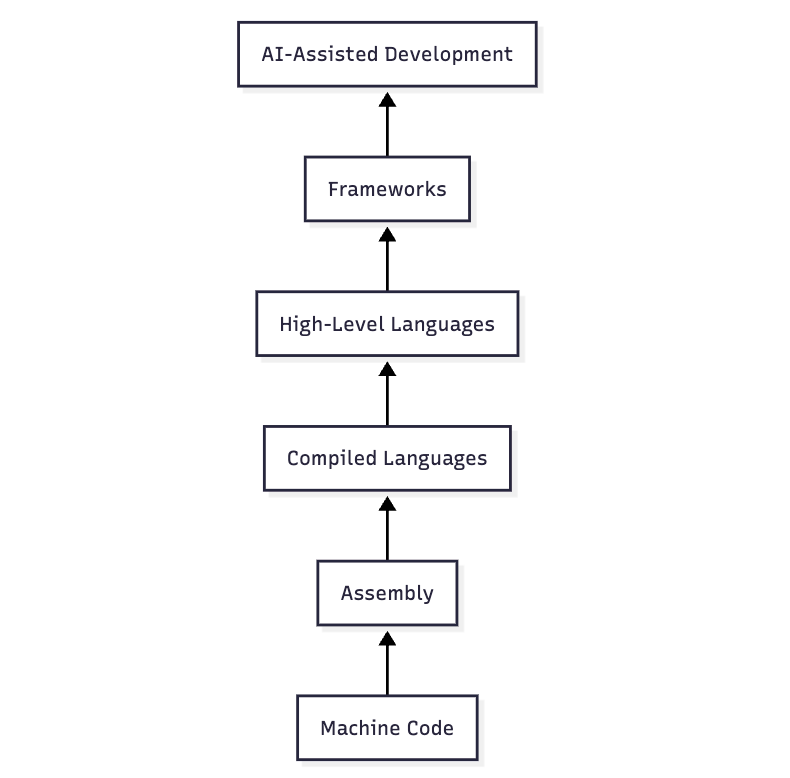

Today's conversations about AI-assisted programming are strikingly similar to those from decades ago about the choice between low-level languages like C versus high-level languages like Python. I was in college back then and some of our professors reassured us that the same issues had come up in the assembly-vs-compiled-languages debate from their own student days! (If I were to guess, the switch from machine code to assembly even earlier must have led to similar discussions as well.) The trade-off is always the same: productivity versus control. And the challenge is how to switch to the new paradigm in a way that enhances your skills (at least the ones you care about) instead of offloading too much and letting your skills atrophy. Some approaches prove too hasty. Vibe coding is turning out to be a dead end because it offloads too much, just as WYSIWYG editors were a dead end for building web apps. But that doesn't mean we were forced to stick to raw HTML/JS: frameworks turned out to be the way forward. When a new paradigm comes along, it takes months if not years of practice to figure out how to make it work for you. There are always many people dismissing the new thing too quickly. I was one! There are some embarrassing mailing list posts from the early 2000s in which I complained about Python and kids who can't code like real programmers do 🤦 While it's good to be open-minded, I'm not saying everyone needs to jump on the bandwagon. After all, low-level programming languages haven't gone away. Of course, some people claim that AI is unlike previous waves of automation and can replace programmers. Maybe. The reason I disagree — and see AI as parallel to previous waves of productivity improvements in software engineering — is fourfold. (1) It's a matter of accountability, not just capability. (2) Writing the code was never the bottleneck. 
(3) I think we're underestimating the ability of experts to stay on top of even rapid AI capability increases by using these tools to dramatically expand what they can build, how well, and how quickly. (4) As these productivity improvements take shape, the potential growth in _demand_ for software is practically infinite, unlike trades where there is a fixed amount of work that needs to get done. For example, the idea that a car would contain ~100 million lines of code would have seemed head-explodingly implausible in the early days of programming. Many people have observed that software seems to be one of the only fields that is undergoing a rapid transformation due to AI. The usual reason they give is that capability improvements in AI coding have been particularly rapid. I think this is only part of the story. The bigger factor is structural. Software has a history of repeatedly undergoing seismic shifts in the technologies of production, so it has never had time or the cultural inclination to ossify institutional processes around particular ways of doing things.

made an extension to replace wikipedia asking for donations with how much actual money they made https://t.co/nlVFFAY6vy

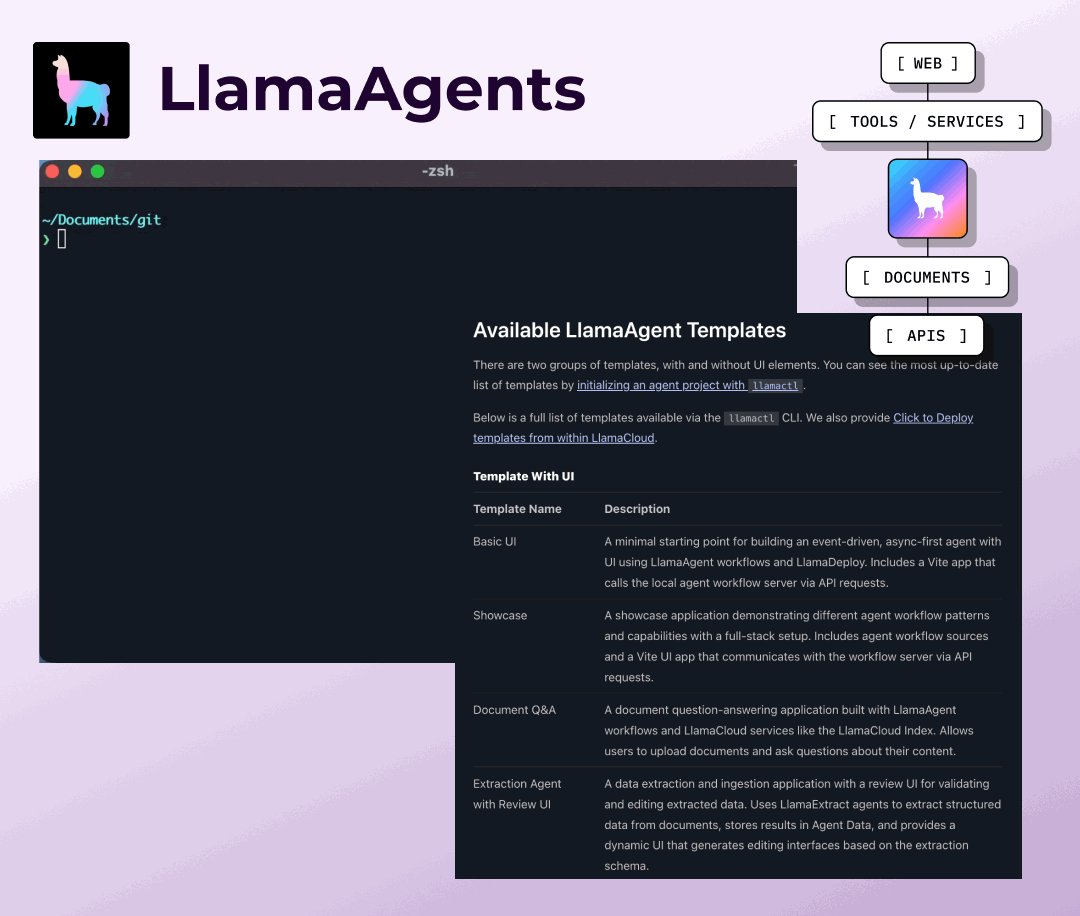

Get started with pre-built document agent templates that solve real-world problems out of the box. We've created a collection of LlamaAgent templates through llamactl that cover the most common AI use cases, from simple document Q&A to complex invoice processing workflows:
- 🚀 Full-stack templates with UI components including document Q&A, invoice extraction with reconciliation, and data extraction with review interfaces
- ⚡ Headless workflow templates for RAG, web scraping, human-in-the-loop processes, and document parsing
- 🛠️ Each template includes coding agent support files (https://t.co/TEooX9klfa, https://t.co/EjhjEwM3Ae etc) to help you customize with AI assistance
- 📦 One-command deployment via llamactl: clone any template and have a working agent in minutes

Whether you need a basic starting point or a production-ready solution for invoice processing and contract reconciliation, these templates provide the foundation and can be extended with custom logic. Browse all available agent templates and get started: https://t.co/iYhRGaL3Aa

As we wrap up 2025, we're incredibly proud of what this team shipped. We set out to solve document AI reliability and help you develop your own task specific document agents. Take a look back on the year 2025 with us, from the launch of LlamaAgents to brand new MCP support 👇 https://t.co/dN8kGvZrN5

LlamaParse v2: Simpler, cheaper. 4 tiers replaced complex configs (Fast, Cost Effective, Agentic, Agentic Plus). Up to 50% cost reduction. Just pick your accuracy/speed tradeoff and go. https://t.co/muq8tqP6Y9

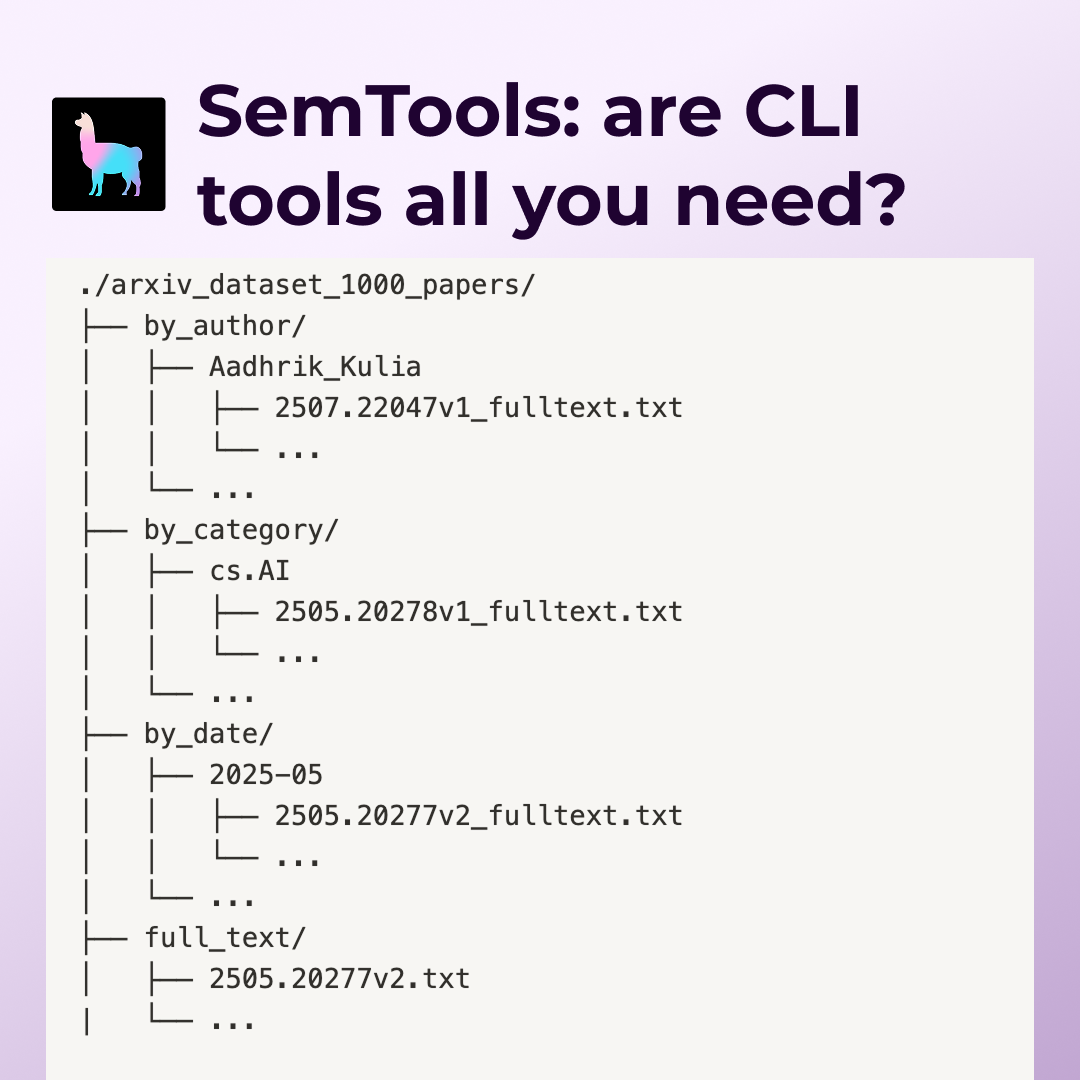

SemTools CLI: Parse and search documents from the terminal. Three tools that turn coding agents into document researchers:
- parse: Convert complex formats (PDFs, etc.) to searchable markdown via LlamaParse
- search: Fuzzy semantic keyword search using static embeddings
- ask: Perform agentic search over documents

We benchmarked Claude Code on 1000 ArXiv papers. With SemTools access, it found more detailed answers across search/filter, cross-reference, and temporal analysis tasks, without building custom RAG infrastructure. Unix tools + semantic search = surprisingly capable document agents. https://t.co/6Hja2RHkDL

LlamaSheets (beta): Handle messy spreadsheets. Multi-stage processing that actually understands merged cells, headers across rows, and broken layouts. 40+ features per cell. Turns real-world spreadsheet chaos into clean data. https://t.co/otTKtyQy6f

MCP support for LlamaIndex as well as our very own MCP server for documentation: https://t.co/R4PmPqtbxF

LlamaSplit (beta): Auto-separate bundled documents. AI analyzes page content to split mixed PDFs into distinct sections. No more manual sorting. Solves the "invoice packet with receipts and contracts" problem. https://t.co/gvaUxc0264

And so many open-source projects:
- Open-source NotebookLM alternative
- StudyLlama (organize study materials)
- Filesystem explorer with Gemini @GoogleDeepMind
- MCP integration for coding agents

Full recap with all the links: https://t.co/euJqlhtYaO Here's to building more in 2026.

The change from the @dwarkesh_sp podcast 2 months ago vs. the tweet below from @karpathy is genuinely insane. It’s a night and day difference. We went from “these models are slop and we’re 10 years away” to “I’ve never felt more behind and I could be 10x more powerful”. This all changed with Opus 4.5. It will be looked back on as a historical milestone.

I've never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and between. I have a sense that I could be 10X more powerful if I just properly string together what has become ava

A portal is opening to your very own world... 🧵 https://t.co/SbYQcygb8h