@omarsar0

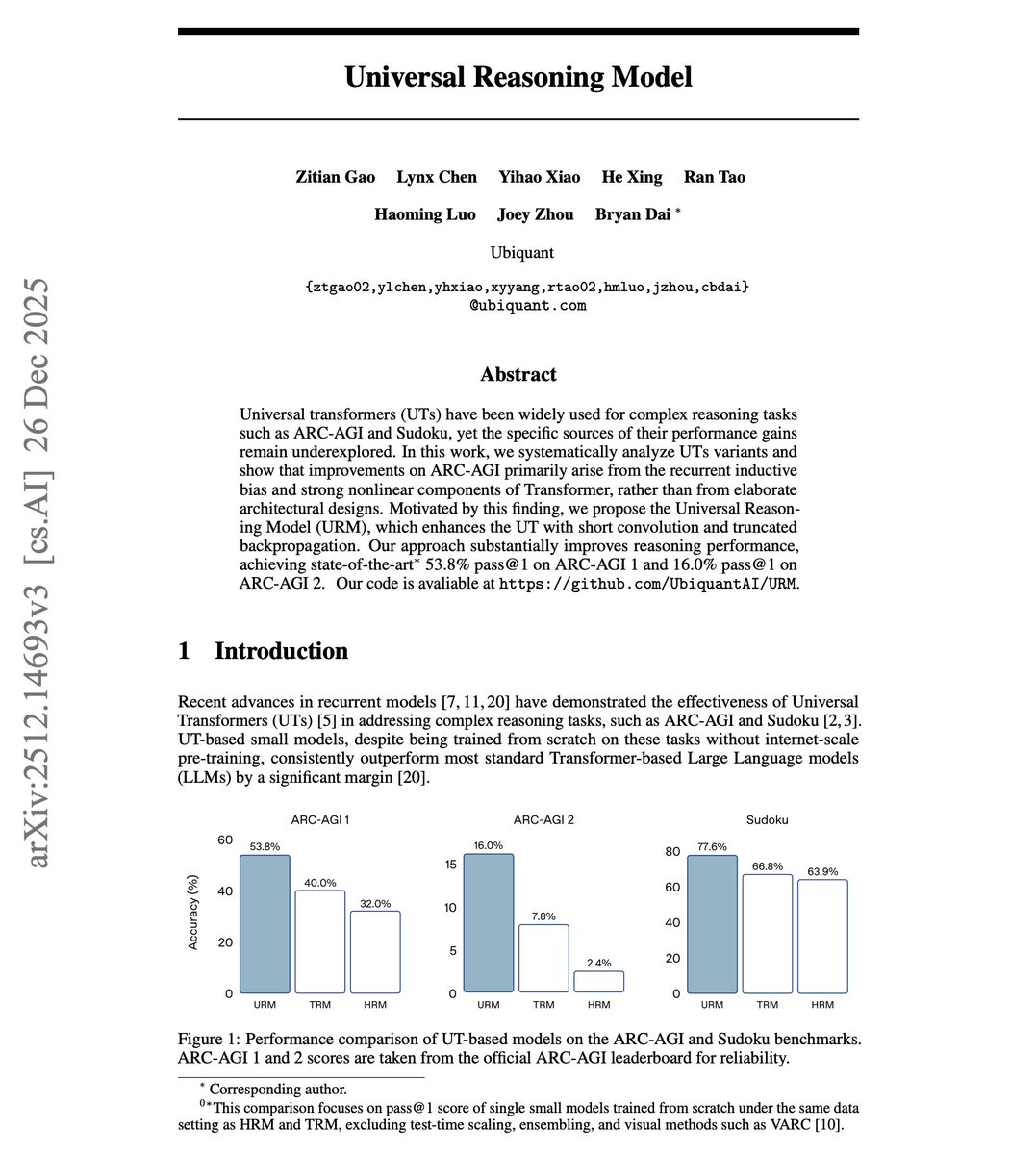

Universal Reasoning Model Universal Transformers crush standard Transformers on reasoning tasks. But why? Prior work attributed the gains to elaborate architectural innovations like hierarchical designs and complex gating mechanisms. But these researchers found a simpler explanation. This new research demonstrates that the performance gains on ARC-AGI come primarily from two often-overlooked factors: recurrent inductive bias and strong nonlinearity. Applying a single transformation repeatedly works far better than stacking distinct layers for reasoning tasks. With only 4x parameters, a Universal Transformer achieves 40% pass@1 on ARC-AGI 1. Vanilla Transformers with 32x parameters score just 23.75%. Simply scaling depth or width in standard Transformers yields diminishing returns and can even degrade performance. They introduce the Universal Reasoning Model (URM), which enhances this with two techniques. First, ConvSwiGLU adds a depthwise short convolution after the MLP expansion, injecting local token mixing into the nonlinear pathway. Second, Truncated Backpropagation Through Loops skips gradient computation for early recurrent iterations, stabilizing optimization. Results: 53.8% pass@1 on ARC-AGI 1, up from 40% (TRM) and 34.4% (HRM). On ARC-AGI 2, URM reaches 16% pass@1, nearly tripling HRM and more than doubling TRM. Sudoku accuracy hits 77.6%. Ablations: - Removing short convolution drops pass@1 from 53.8% to 45.3%. Removing truncated backpropagation drops it to 40%. - Replacing SwiGLU with simpler activations like ReLU tanks performance to 28.6%. - Removing attention softmax entirely collapses accuracy to 2%. The recurrent structure converts compute into effective depth. Standard Transformers spend FLOPs on redundant refinement in higher layers. Recurrent computation concentrates the same budget on iterative reasoning. Complex reasoning benefits more from iterative computation than from scale. Small models with recurrent structure outperform large static models on tasks requiring multi-step abstraction.