@omarsar0

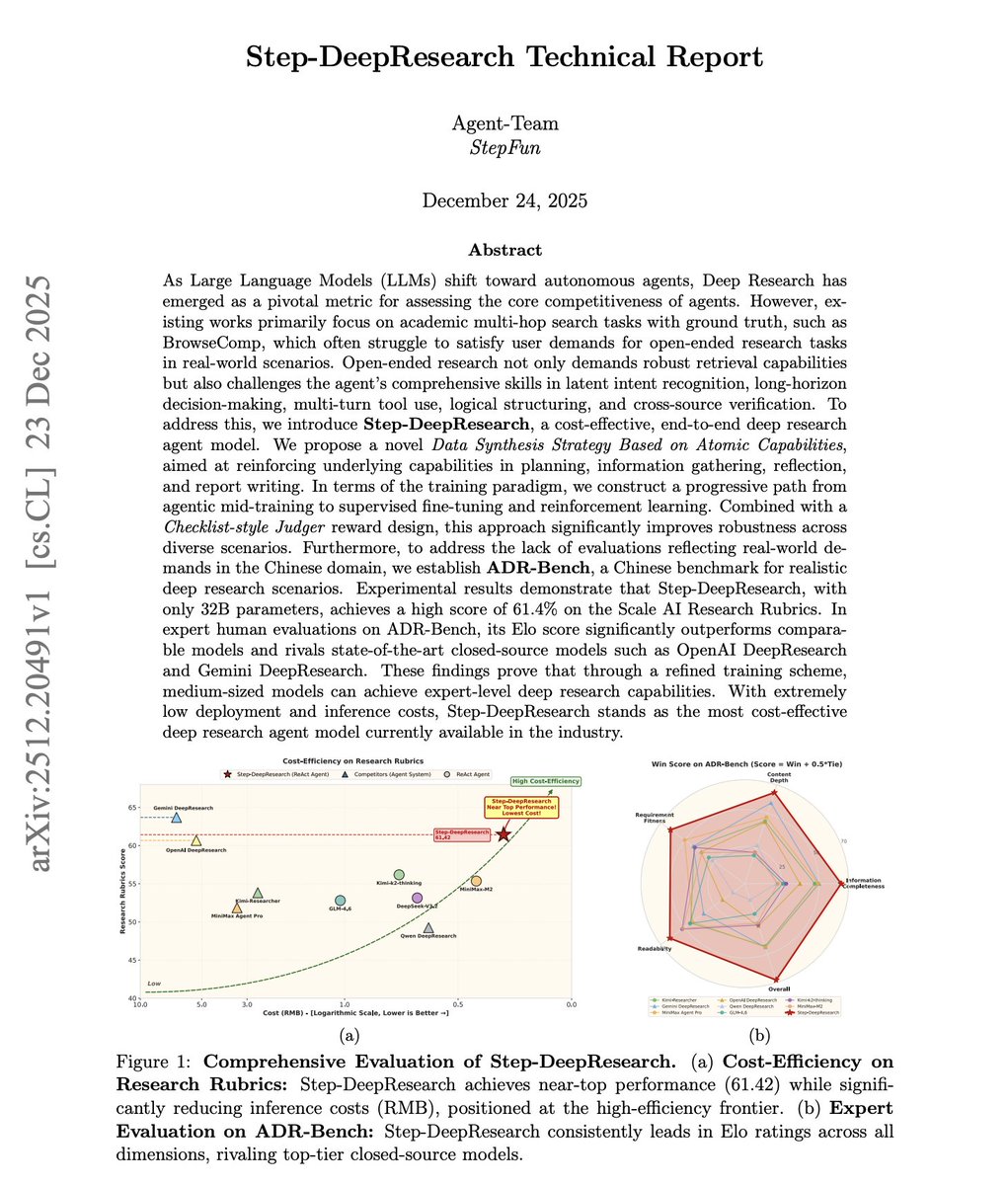

What does it take to build the most cost-efficient Deep Research agent? Current Deep Research systems optimize for multi-hop retrieval: find scattered facts, chain them together, and return an answer. They behave more like efficient web crawlers than like researchers who synthesize evidence into defensible arguments. Real research also requires intent decomposition, planning, cross-source verification, reflection, and structured report writing.

This report introduces Step-DeepResearch, a 32B-parameter end-to-end Deep Research agent that rivals OpenAI's and Gemini's proprietary systems at a fraction of the cost.

The core idea is to reframe training from predicting the next token to deciding the next atomic action. Four atomic capabilities form the foundation: planning and task decomposition, deep information seeking, reflection and verification, and report generation.

The authors propose a progressive training pipeline that moves from agentic mid-training through supervised fine-tuning (SFT) to reinforcement learning (RL). Mid-training injects domain knowledge and tool-calling ability at a 128K context length. SFT composes the atomic capabilities into end-to-end research trajectories. RL with a Checklist-style Judger reward then optimizes for rubric compliance in real web environments.

On Scale AI's ResearchRubrics benchmark, Step-DeepResearch scores 61.42, comparable to OpenAI DeepResearch and Gemini DeepResearch. In expert human evaluations on the authors' new ADR-Bench (Chinese Deep Research scenarios), it outperforms larger models such as MiniMax-M2, GLM-4.6, and DeepSeek-V3.2.

The architecture is surprisingly simple: a single ReAct-style agent with no multi-agent orchestration or heavyweight workflows. All the complexity is internalized through training.

Why does this work matter? It shows that medium-sized models can reach expert-level Deep Research performance when trained on the right atomic capabilities. The most cost-effective path isn't more parameters or elaborate workflows; it's better data and progressive composition of capabilities.
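To make "deciding the next atomic action" inside a single ReAct-style loop concrete, here is a minimal Python sketch of the control flow. It assumes four hypothetical action names (`plan`, `search`, `reflect`, `write_report`) mapped onto the four capabilities; the scripted policy and stub tools are placeholders for the trained 32B model and real web tools, not the paper's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical action names for the four atomic capabilities in the post.
ACTIONS = ("plan", "search", "reflect", "write_report")


@dataclass
class AgentState:
    question: str
    # (action, argument, observation) triples: the ReAct trajectory so far
    history: List[Tuple[str, str, str]] = field(default_factory=list)
    report: Optional[str] = None


def run_agent(question: str,
              policy: Callable[[AgentState], Tuple[str, str]],
              tools: Dict[str, Callable[[str], str]],
              max_steps: int = 20) -> AgentState:
    """One agent, one loop: the policy picks the next atomic action each step.

    There is no orchestrator and no sub-agents; in Step-DeepResearch the
    trained model itself would play the role of `policy`.
    """
    state = AgentState(question)
    for _ in range(max_steps):
        action, arg = policy(state)
        if action not in ACTIONS:
            raise ValueError(f"unknown action: {action}")
        if action == "write_report":  # terminal action ends the episode
            state.report = arg
            break
        observation = tools[action](arg)  # act, then observe
        state.history.append((action, arg, observation))
    return state


# Hypothetical scripted policy and stub tools standing in for an LLM and web tools.
def scripted_policy(state: AgentState) -> Tuple[str, str]:
    step = len(state.history)
    if step == 0:
        return "plan", "split the question into sub-questions"
    if step == 1:
        return "search", state.question
    if step == 2:
        return "reflect", "check the search result against the plan"
    return "write_report", "Report: " + state.history[1][2]


tools = {
    "plan": lambda arg: f"plan: {arg}",
    "search": lambda arg: f"result for '{arg}'",
    "reflect": lambda arg: "verified",
}

final = run_agent("What is ReAct?", scripted_policy, tools)
print([action for action, _, _ in final.history])  # ['plan', 'search', 'reflect']
print(final.report)  # Report: result for 'What is ReAct?'
```

Because every capability is just another action in the same loop, swapping the scripted policy for a trained model changes nothing in the control flow, which mirrors the post's point that complexity lives in training rather than in orchestration.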
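The RL stage uses a Checklist-style Judger reward for rubric compliance. One plausible reading, sketched here under assumptions, is a reward equal to the weighted fraction of checklist items a judge marks as satisfied. The binary per-item verdicts, the weights, and the keyword-matching `keyword_judge` (a hypothetical stand-in for an LLM judge) are all illustrative; the paper's Judger may score differently.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ChecklistItem:
    description: str  # rubric criterion; format here is hypothetical
    weight: float = 1.0


def checklist_reward(report: str,
                     checklist: List[ChecklistItem],
                     judge: Callable[[str, ChecklistItem], bool]) -> float:
    """Weighted fraction of checklist items the judge marks as satisfied, in [0, 1]."""
    total = sum(item.weight for item in checklist)
    if total == 0:
        return 0.0
    passed = sum(item.weight for item in checklist if judge(report, item))
    return passed / total


# Hypothetical stand-in for an LLM judge: checks for a required keyword
# written after the colon in the item description.
def keyword_judge(report: str, item: ChecklistItem) -> bool:
    keyword = item.description.split(":", 1)[1].strip()
    return keyword.lower() in report.lower()


checklist = [
    ChecklistItem("mentions: ResearchRubrics", weight=2.0),
    ChecklistItem("mentions: limitations", weight=1.0),
    ChecklistItem("mentions: sources", weight=1.0),
]
report = "We evaluate on ResearchRubrics and list our sources."
print(checklist_reward(report, checklist, keyword_judge))  # 0.75
```

Normalizing by the total weight keeps the reward in [0, 1] regardless of how many items a given task's checklist contains.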
Paper: https://t.co/am4PjHNfVc

Learn to build effective AI agents in our academy: https://t.co/JBU5beIoD0