Your curated collection of saved posts and media

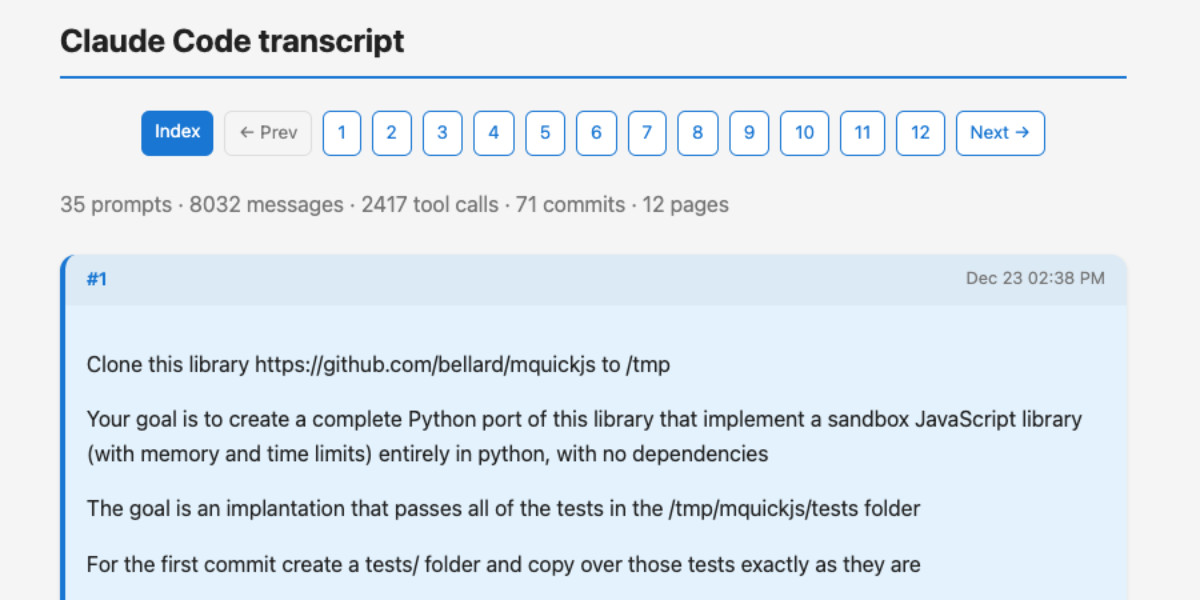

I built a new Python CLI tool called claude-code-transcripts that can create nice readable HTML versions of your Claude Code sessions, both local and pulled from Claude Code for web, and makes it easy to publish them online too https://t.co/pHl8l2lXeK

Soprano: An instant, ultra-lightweight TTS model for realistic speech; generates 10 hours of 32kHz audio in <20s; streams with <15ms latency using just 80M params & <1GB VRAM. Has some limitations and drawbacks. https://t.co/BZmckav7mW https://t.co/gWi1qpevWi

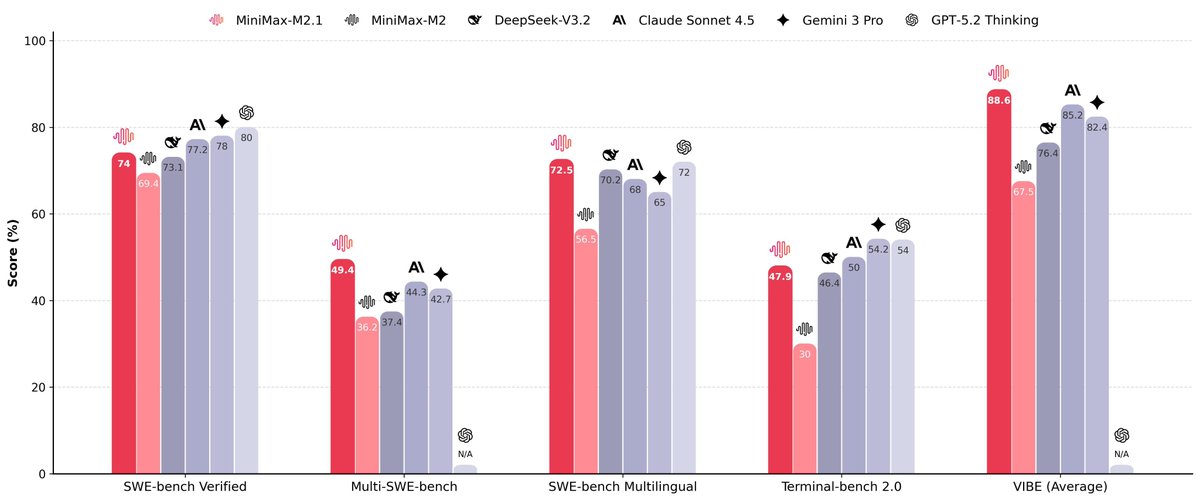

MiniMax M2.1 is OPEN SOURCE: SOTA for real-world dev & agents
• SOTA on coding benchmarks (SWE / VIBE / Multi-SWE)
• Beats Gemini 3 Pro & Claude Sonnet 4.5
• 10B active / 230B total (MoE)
Not just SOTA: faster to infer, easier to deploy, and yes, you can even run it locally
Weights: https://t.co/3lYeI6qyg2

Transcribes and summarizes meetings locally using small language models https://t.co/qrJkQuYdWS https://t.co/AGg4LvZQyX

Wow. Anthropic just curated an impressive collection of use cases for Claude 🤯 You already get 39 deep guides and more get added weekly. It’s also free and definitely worth bookmarking. (link below) https://t.co/t1FUE24fvP

Memory in the Age of AI Agents: This 102-page survey introduces a unified framework for understanding agent memory through three lenses: Forms, Functions, and Dynamics. https://t.co/Mn357FOH15

@simonw When Claude stops, you can use a stop hook to poke it to keep going, e.g. see https://t.co/4WW1baGEeM

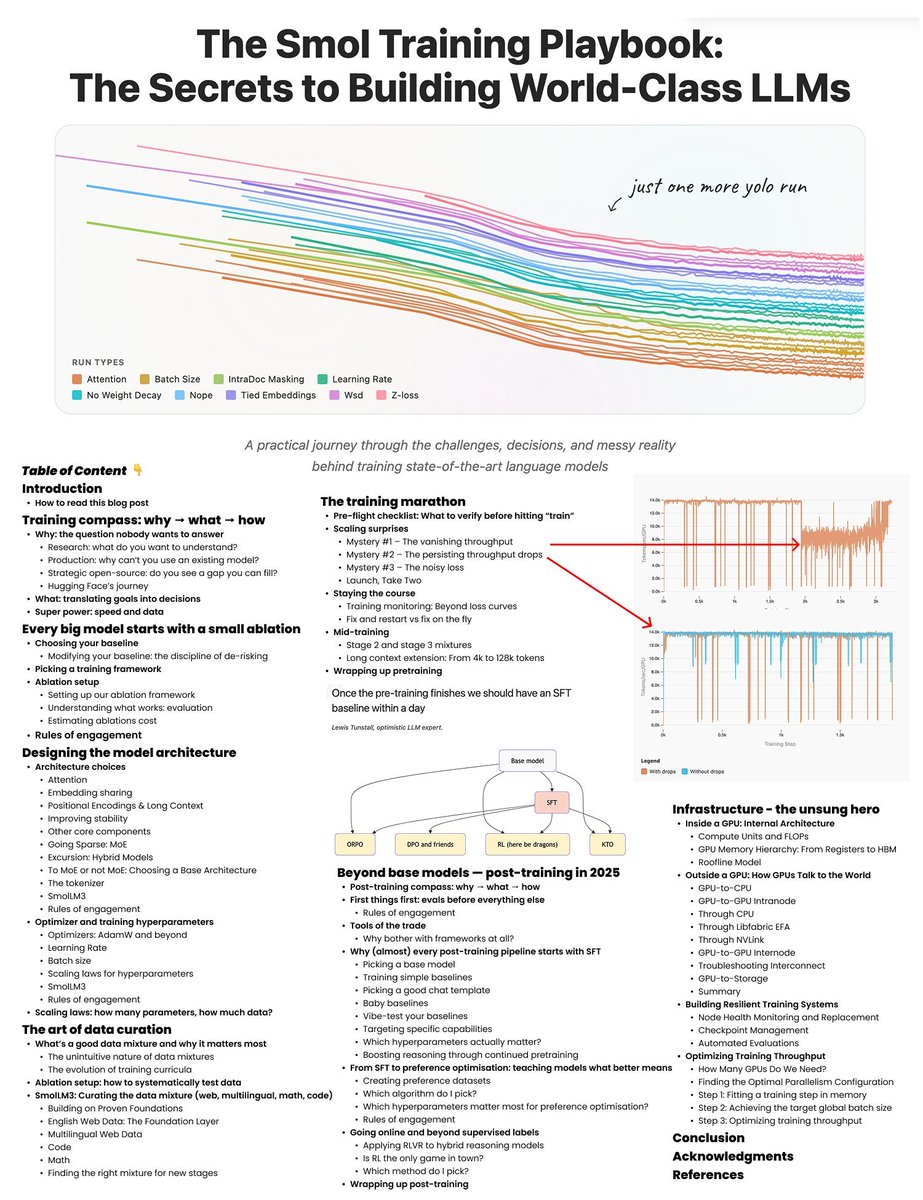

Hugging Face has released a 214-page MASTERCLASS on how to train LLMs
> it’s called The Smol Training Playbook
> and if you want to learn how to train LLMs, this GIFT is for you
> this training bible walks you through the ENTIRE pipeline
> covers every concept that matters, from why you train, to what you train, to how you actually pull it off
> from pre-training, to mid-training, to post-training
> it turns vague buzzwords into step-by-step decisions
> architecture, tokenization, data strategy, and infra
> highlights the real-world gotchas
> instabilities, scaling headaches, debugging nightmares
> distills lessons from building actual state-of-the-art LLMs, not just toy models
how modern transformer models are actually built
> tokenization: the secret foundation of every LLM
> tokenizer fundamentals
> vocabulary size
> byte pair encoding
> custom vs existing tokenizers
> all the modern attention mechanisms are here
> multi-head attention
> multi-query attention
> grouped-query attention
> multi-head latent attention
> every positional encoding trick in the book
> absolute position embedding
> rotary position embedding
> YaRN (Yet another RoPE extensioN)
> adjusted base frequency (ABF) positional encoding
> no position embedding
> randomized no position embedding
> stability hacks that actually work
> z-loss regularization
> query-key normalization
> removing weight decay from embedding layers
> sparse scaling, handled
> mixture-of-experts scaling
> activation ratio tuning
> choosing the right granularity
> sharing experts between layers
> load balancing across experts
> long-context handling via ssm
> hybrid models: transformer plus state space models
data curation = most of your real model quality
> data curation is the main driver of your model’s actual quality
> architecture alone won’t save you
> building the right data mixture is an art, not just dumping in more web scrapes
> curriculum learning, adaptive mixes, ablate everything
> you need curriculum learning: design data mixes that evolve as training progresses
> use adaptive mixtures that shift emphasis based on model stage and performance
> ablate everything: run experiments to systematically test how each data source or filter impacts results
> smollm3 data
> the smollm3 recipe: balanced english web data, broad multilingual sources, high-quality code, and diverse math datasets
> without the right data pipeline, even the best architecture will underperform
the training marathon
> do your preflight checklist or die
> check your infrastructure, validate your evaluation pipelines, set up logging, and configure alerts so you don’t miss silent failures
> scaling surprises are inevitable
> things will break at scale in ways they never did in testing
> vanishing throughput? that usually means you’ve got a hidden shape mismatch or batch dimension bug killing your GPU utilization
> sudden drops in throughput? check your software stack for inefficiencies, resource leaks, or bad dataloader code
> seeing noisy, spiky loss values? your data shuffling is probably broken, and the model is seeing repeated or ordered data
> performance worse than expected? look for subtle parallelism bugs
> tensor parallel, data parallel, or pipeline parallel gone rogue
> monitor like your GPUs depend on it (because they do)
> watch every metric, track utilization, spot anomalies fast
> mid-training is not autopilot
> swap in higher-quality data to improve learning, extend the context window if you want bigger inputs, and use multi-stage training curricula to maximize gains
> the difference between a good model and a failed run is almost always vigilance and relentless debugging during this marathon
post-training
> post-training is where your raw base model actually becomes a useful assistant
> always start with supervised fine-tuning (sft)
> use high-quality, well-structured chat data and pick a solid template for consistent turns
> sft gives you a stable, cost-effective baseline
> don’t skip it, even if you plan to go deeper
> next, optimize for user preferences
> direct preference optimization (dpo), or its variants like Kahneman-Tversky optimization (kto), odds ratio preference optimization (orpo), or anchored preference optimization (apo)
> these methods actually teach the model what “better” looks like beyond simple mimicry
> once you’ve got preference alignment, go on-policy:
> reinforcement learning from human feedback (rlhf) or on-policy distillation, which lets your model learn from real interactions or stronger models
> this is how you get reliability and sharper behaviors
> the post-training pipeline is where assistants are truly sculpted; skipping steps means leaving performance, safety, and steerability on the table
infra is the boss fight
> this is where most teams lose time, money, and sanity if they’re not careful
> inside every gpu
> you’ve got tensor cores and cuda cores for the heavy math, plus a memory hierarchy (registers, shared memory, hbm) that decides how fast you can feed data to the compute units
> outside the gpu, your interconnects matter
> pcie for gpu-to-cpu, nvlink for ultra-fast gpu-to-gpu within a node, infiniband or roce for communication between nodes, and gpudirect storage for feeding massive datasets straight from disk to gpu memory
> make your infra resilient:
> checkpoint your training constantly, because something will crash;
> monitor node health so you can kill or restart sick nodes before they poison your run
> scaling isn’t just “add more gpus”
> you have to pick and tune the right parallelism:
> data parallelism (dp), pipeline parallelism (pp), tensor parallelism (tp), or fully sharded data parallel (fsdp);
> the right combo can double your throughput, the wrong one can bottleneck you instantly
to recap
> always start with WHY
> define the core reason you’re training a model
> is it research, a custom production need, or to fill an open-source gap?
> spec what you need: architecture, model size, data mix, assistant type
> transformer or hybrid
> set your model size
> design the right data mixture
> decide what kind of assistant or use case you’re targeting
> build infra for the job, plan for chaos, pick your stability tricks
> build infrastructure that matches your goals
> choose the right GPUs
> set up reliable storage
> and plan for network bottlenecks
> expect failures, weird bugs, and sudden bottlenecks at scale
> select your stability tricks in advance:
> know which techniques you’ll use to fight loss spikes, unstable gradients, and hardware hiccups
closing notes
> the pace of LLM development is relentless, but the underlying principles never go out of style
> and this PDF covers what actually matters no matter how fast the field changes
> systematic experimentation is everything
> run controlled tests, change one variable at a time, and document every step
> sharp debugging instincts will save you more time (and compute budget) than any paper or library
> deep knowledge of both your software stack and your hardware is the ultimate unfair advantage;
> know your code, know your chips
> in the end, success comes from relentless curiosity, tight feedback loops, and a willingness to question everything
> even your own assumptions
if i had this two years ago, it would have saved me so much time
> if you’re building llms, read this before you burn gpu months
happy hacking
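
To make the preference-optimization step in the post-training part of the thread above concrete, here is a minimal sketch of the standard DPO loss for a single preference pair. It is not taken from the playbook: the function names, the `beta=0.1` default, and the example log-probabilities are illustrative assumptions, and real training code would compute the log-probs from a policy model and a frozen reference model.

```python
import math


def neg_log_sigmoid(x: float) -> float:
    """Numerically stable -log(sigmoid(x)), i.e. softplus(-x)."""
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one (chosen, rejected) pair of summed sequence log-probs.

    beta is an assumed, illustrative value; it scales how strongly the
    policy is rewarded for moving away from the reference model.
    """
    chosen_shift = policy_chosen_logp - ref_chosen_logp
    rejected_shift = policy_rejected_logp - ref_rejected_logp
    return neg_log_sigmoid(beta * (chosen_shift - rejected_shift))


# Hypothetical log-probs: the policy equals the reference, so the loss is log(2).
baseline = dpo_loss(-10.0, -10.0, -10.0, -10.0)
# The policy now favors the chosen answer more than the reference does.
improved = dpo_loss(-8.0, -12.0, -10.0, -10.0)
print(baseline, improved)
```

The loss falls only when the policy raises the chosen answer relative to the rejected one by more than the reference model does, which is the sense in which these methods teach "better" rather than plain imitation.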

Using a mocap suit to kick yourself in the balls with a robot is a great metaphor to close out 2025. https://t.co/G1hY5Fd6YF

Understanding Git Worktrees. A great Git feature in times of agentic AI https://t.co/Fo7Qnfceze

VideoRAG - [KDD'2026] "VideoRAG: Chat with Your Videos" https://t.co/Xm8wsnDUzx

Claude Code is truly amazing. I just single-shotted a Linux app for my ancient outdoor camera system. Now I can make some more enhancements and have a functioning app I want. Will it make me a lot of money? Maybe not, but with AI coding tools I can scratch itches I have had. https://t.co/1fd2r6GlBH

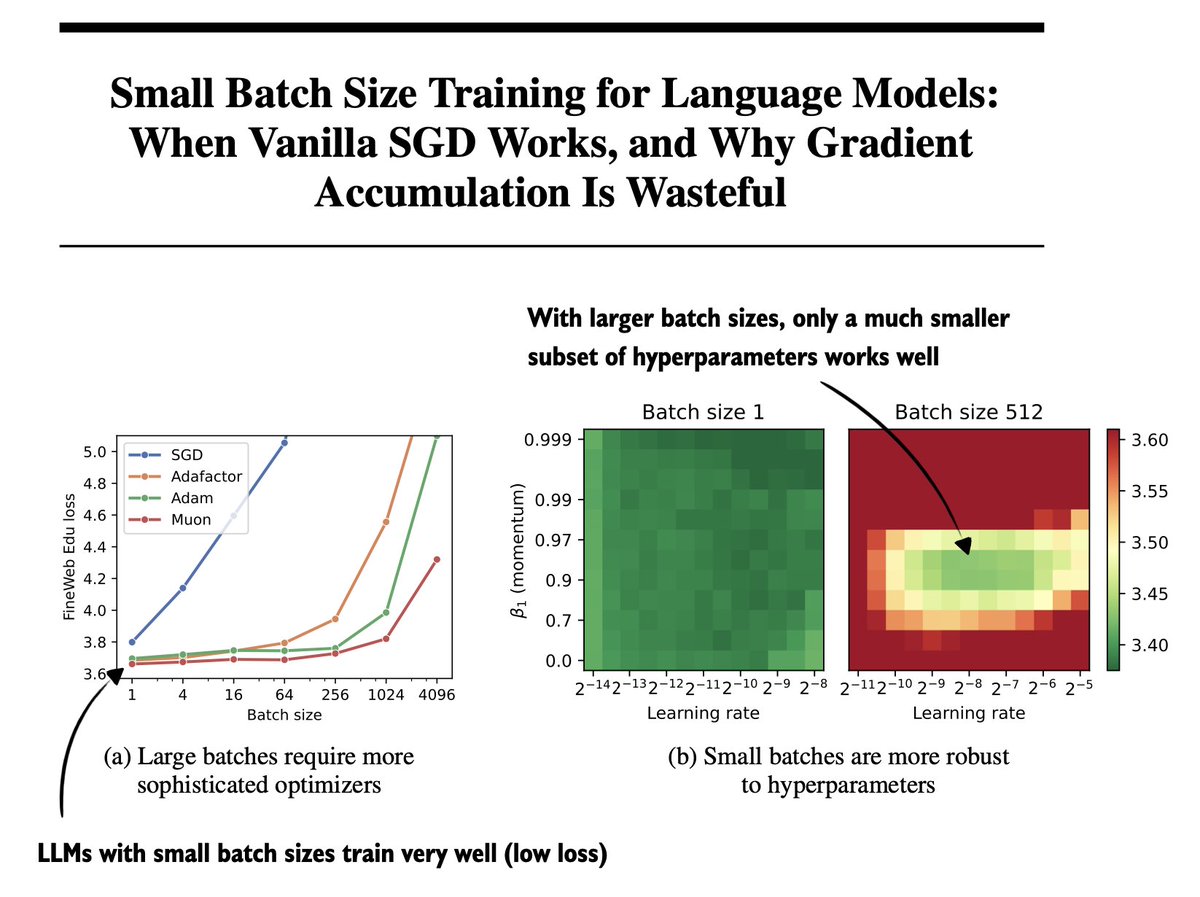

One of the underrated papers this year: "Small Batch Size Training for Language Models: When Vanilla SGD Works, and Why Gradient Accumulation Is Wasteful" (https://t.co/0O4XjGDLIP) (I can confirm this holds for RLVR, too! I have some experiments to share soon.) https://t.co/Vy6yVeGqiK

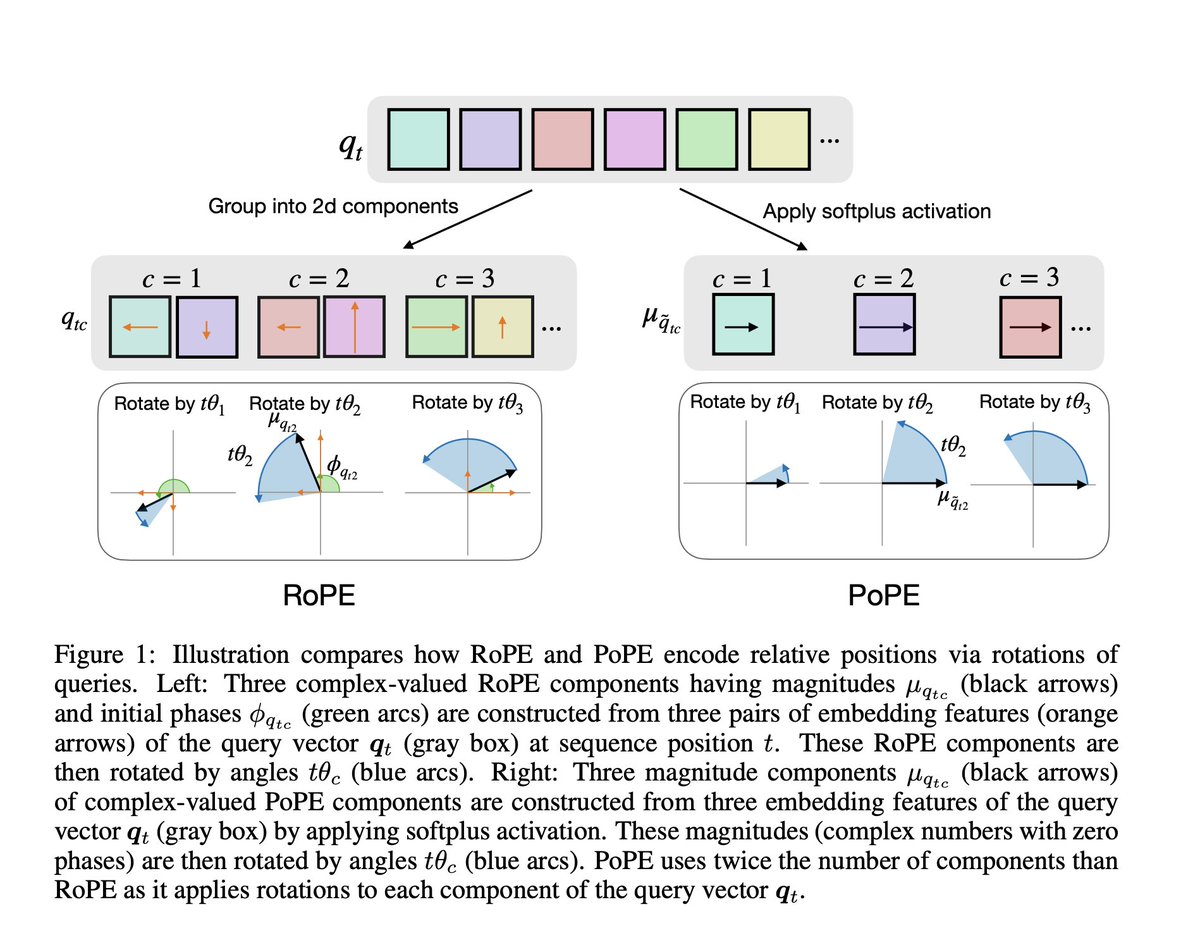

RoPE is fundamentally flawed. This paper shows that RoPE mixes up “what” a token is with “where” it is, so the model can’t reliably reason about relative positions independently of token identity. E.g. the effective notion of “3 tokens to the left” subtly depends on which letters are involved, so asking “what letter is 3 to the left of Z in a sequence 'ABSCOPZG'” becomes harder than it should be because the positional ruler itself shifts with content. So this paper proposes PoPE, which gives the model a fixed positional ruler by encoding where tokens are independently of what they are, letting "content" only control match strength while "position" alone controls distance. PoPE achieves 95% accuracy on the Indirect Indexing task, while RoPE is stuck at 11%.
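
The "what" versus "where" mixing described above can be seen in a few lines. The following is a minimal sketch of standard RoPE written with complex numbers (one complex number per 2-D feature pair). It is not the paper's code and does not implement PoPE; the helper names and toy vectors are hypothetical. It shows that the score depends only on the position offset, and that a phase carried by the key's content shifts the offset at which the score peaks.

```python
import cmath
import math


def rope_rotate(pairs, pos, base=10000.0):
    """Apply standard RoPE to a list of complex numbers (one per 2-D feature pair)."""
    n = len(pairs)
    return [z * cmath.exp(1j * pos * base ** (-c / n)) for c, z in enumerate(pairs)]


def rope_score(q, k, q_pos, k_pos):
    """Attention logit between a rotated query and a rotated key."""
    q_rot = rope_rotate(q, q_pos)
    k_rot = rope_rotate(k, k_pos)
    return sum((a * b.conjugate()).real for a, b in zip(q_rot, k_rot))


# Toy content vectors (hypothetical values).
q = [1 + 0j, 0.5 + 0.5j]
k = [0.3 - 0.2j, 1 + 1j]

# The score depends only on the offset q_pos - k_pos, not on absolute positions.
same_offset_a = rope_score(q, k, 3, 0)
same_offset_b = rope_score(q, k, 103, 100)

# With a single frequency (1 radian per position), a key whose content carries
# phase phi yields cos(offset - phi): the content shifts where the score peaks.
phi = 0.5
shifted = rope_score([1 + 0j], [cmath.exp(1j * phi)], 2, 0)
print(same_offset_a, same_offset_b, shifted, math.cos(2 - phi))
```

In the single-frequency case the score peaks at an offset of `phi` rather than 0, so the position of the peak is set partly by the key's content, which is one way to read the "shifting ruler" in the post.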

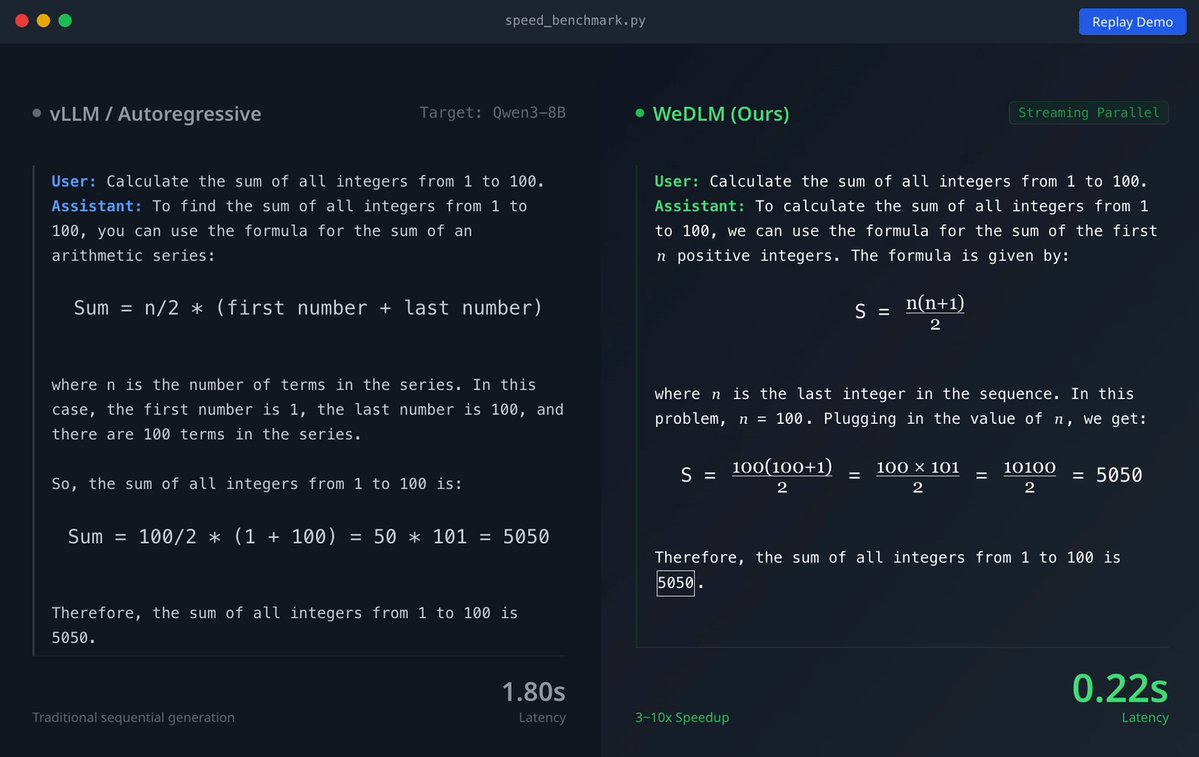

Tencent just released WeDLM 8B Instruct on Hugging Face. A diffusion language model that runs 3-6× faster than vLLM-optimized Qwen3-8B on math reasoning tasks. https://t.co/bRURRHbF3S

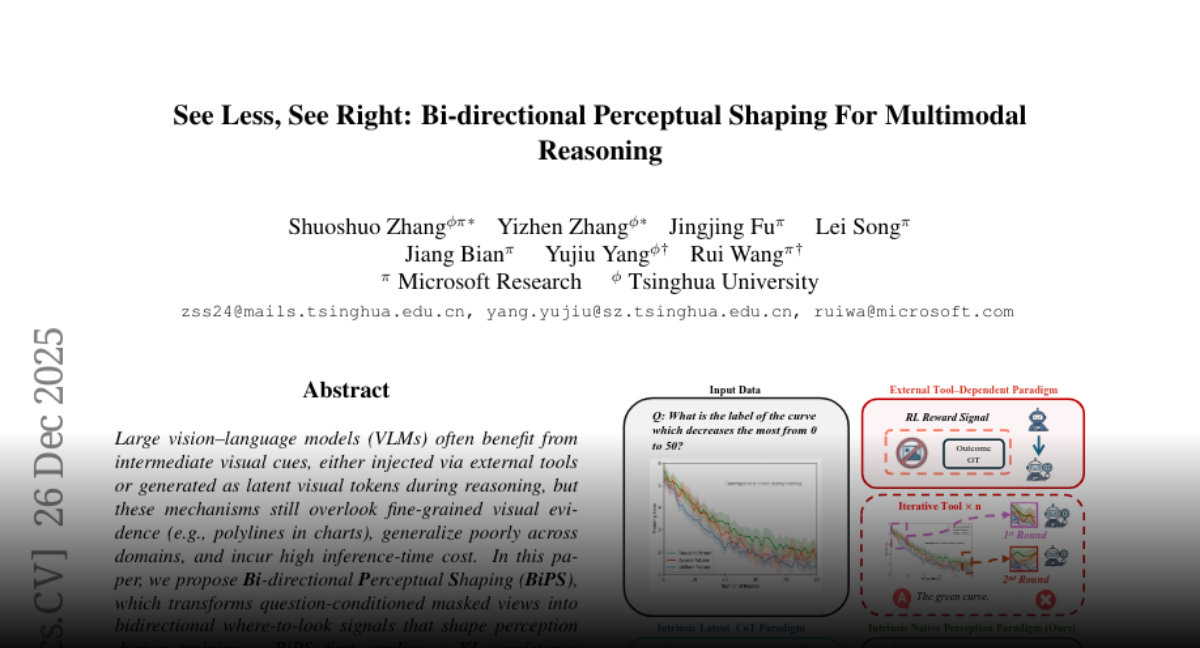

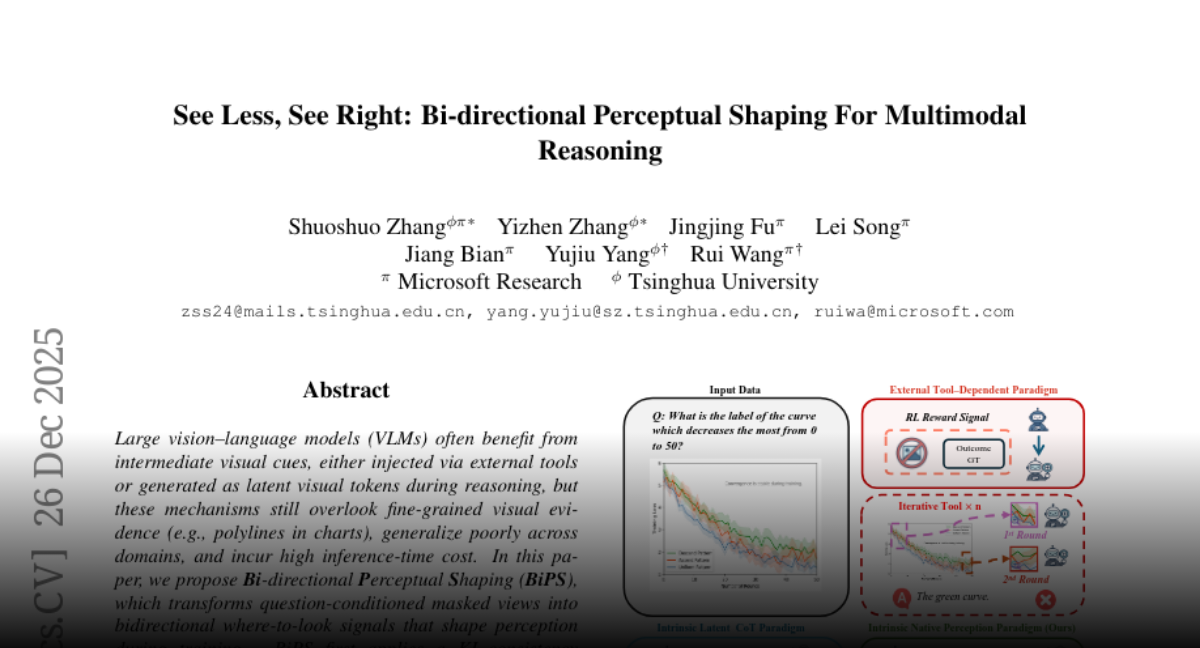

See Less, See Right: Bi-directional Perceptual Shaping For Multimodal Reasoning https://t.co/AyrytLPJup

📢 Confession: I ship code I never read. Here's my 2025 workflow. https://t.co/tmxxPowzcR

mini led screen https://t.co/q4SlpVIkiF

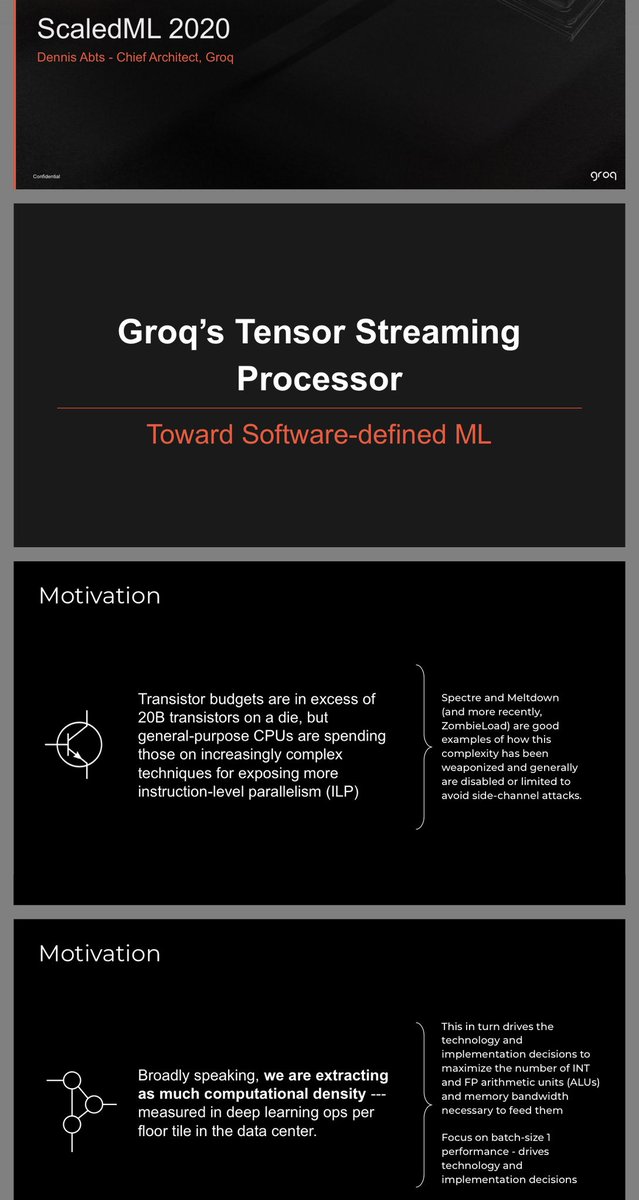

Groq’s presentation from ScaledML 2020! They released the chip then. Slides linked in the next tweet https://t.co/XN3DL2ibQO

Slides: https://t.co/cYosAp6gmj ScaledML: https://t.co/Bl4Wc2y3xJ (happening January 2026)

Fun to come home after a day of birding to find that the agar art has grown and looks roughly as I hoped it would 😁 https://t.co/Eo41vdzHdE

Stick https://t.co/Q7qKMjBt1T

https://t.co/IQMnfBc1Ll is set for its IPO on Jan 8, 2026. This journey has been powered by our developers, researchers, and users from Day 1. Thank you for building this reality with us! https://t.co/yXOuapE3Hm

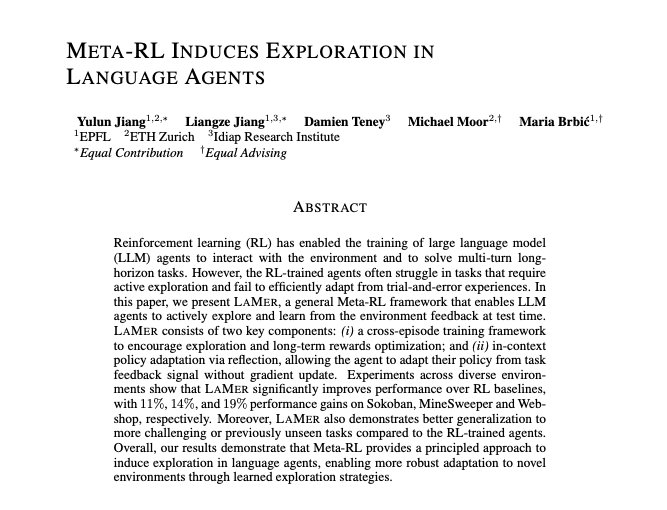

This might be my favorite paper of the year🤯 Rich Sutton claims that current RL methods won't get us to continual learning because they don't compound upon previous knowledge: every rollout starts from scratch. Researchers in Switzerland introduce Meta-RL, which might crack that code. It optimizes across episodes with a meta-learning objective, which incentivizes agents to explore first and then exploit, and then to reflect upon previous failures in future agent runs. Incredible results and incredible read of a paper overall. Authors: @YulunJiang @LiangzeJ @DamienTeney @Michael_D_Moor @mariabrbic

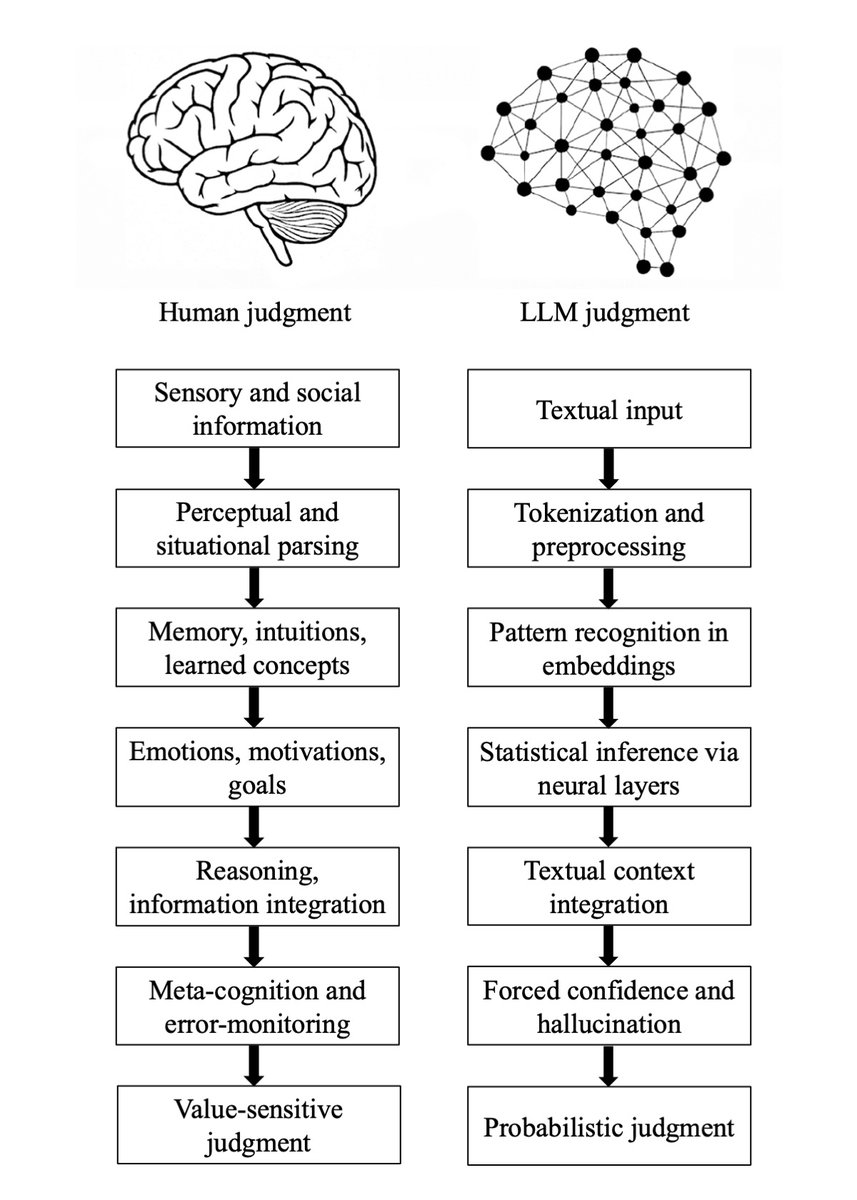

Major preprint just out! We compare how humans and LLMs form judgments across seven epistemological stages. We highlight seven fault lines, points at which humans and LLMs fundamentally diverge:
The Grounding fault: Humans anchor judgment in perceptual, embodied, and social experience, whereas LLMs begin from text alone, reconstructing meaning indirectly from symbols.
The Parsing fault: Humans parse situations through integrated perceptual and conceptual processes; LLMs perform mechanical tokenization that yields a structurally convenient but semantically thin representation.
The Experience fault: Humans rely on episodic memory, intuitive physics and psychology, and learned concepts; LLMs rely solely on statistical associations encoded in embeddings.
The Motivation fault: Human judgment is guided by emotions, goals, values, and evolutionarily shaped motivations; LLMs have no intrinsic preferences, aims, or affective significance.
The Causality fault: Humans reason using causal models, counterfactuals, and principled evaluation; LLMs integrate textual context without constructing causal explanations, depending instead on surface correlations.
The Metacognitive fault: Humans monitor uncertainty, detect errors, and can suspend judgment; LLMs lack metacognition and must always produce an output, making hallucinations structurally unavoidable.
The Value fault: Human judgments reflect identity, morality, and real-world stakes; LLM "judgments" are probabilistic next-token predictions without intrinsic valuation or accountability.
Despite these fault lines, humans systematically over-believe LLM outputs, because fluent and confident language produces a credibility bias. We argue that this creates a structural condition, Epistemia: linguistic plausibility substitutes for epistemic evaluation, producing the feeling of knowing without actually knowing.
To address Epistemia, we propose three complementary strategies: epistemic evaluation, epistemic governance, and epistemic literacy. Full paper in the first reply. Joint with @Walter4C & @matjazperc

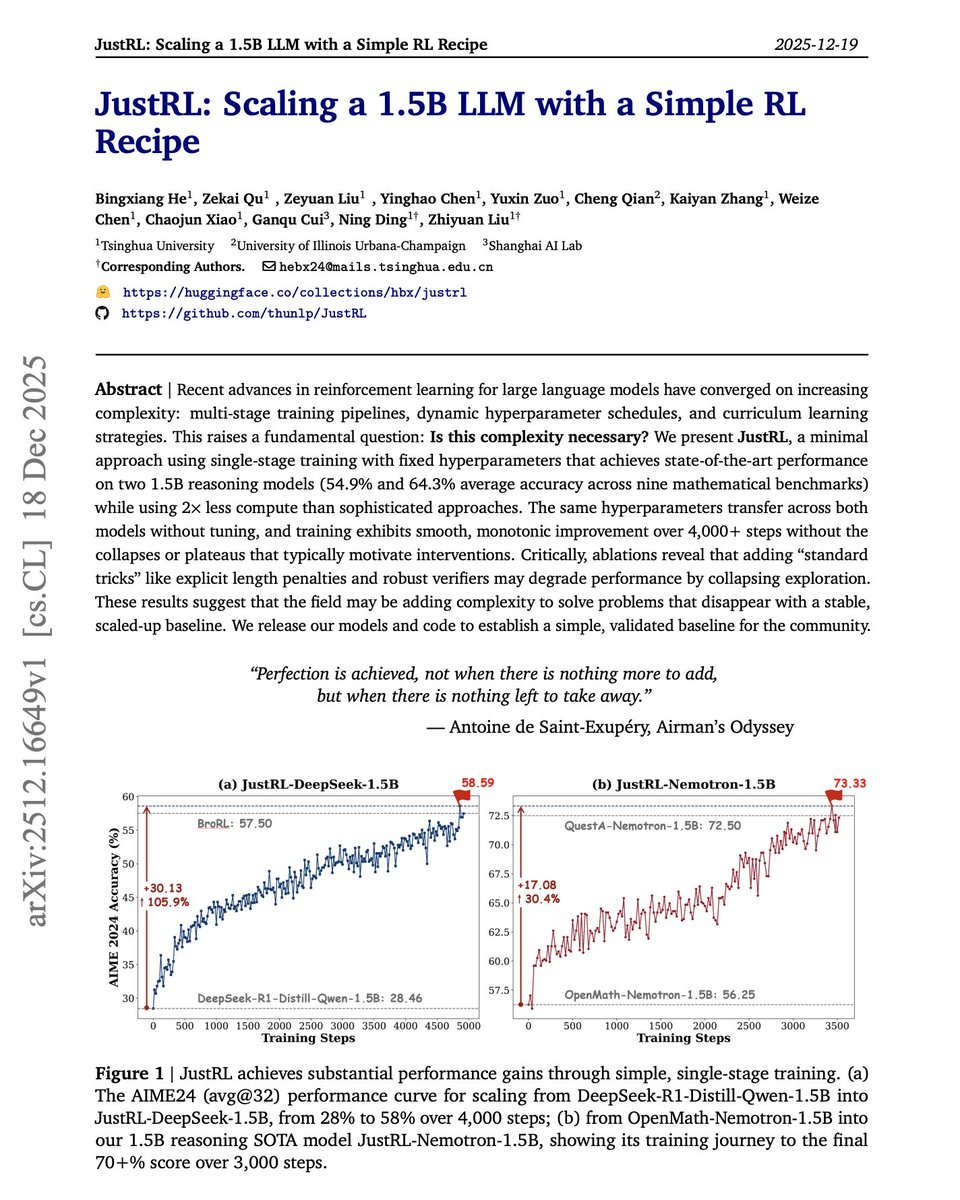

Sometimes less is more. More complexity in RL training isn't always the answer. The default approach to improving small language models with RL today involves multi-stage training pipelines, dynamic hyperparameter schedules, curriculum learning, and length penalties. But what if these techniques are solving problems that simpler approaches never create? This new research introduces JustRL, a minimal RL recipe that uses single-stage training with fixed hyperparameters to achieve state-of-the-art performance on 1.5B reasoning models. They stripped away everything non-essential. No progressive context lengthening. No adaptive temperature scheduling. No mid-training reference model resets. No length penalties. Just basic GRPO with fixed hyperparameters throughout training. Results: JustRL-DeepSeek-1.5B achieves 54.9% average accuracy across nine mathematical benchmarks. JustRL-Nemotron-1.5B reaches 64.3%. The best part: JustRL uses 2x less compute than more sophisticated approaches. On AIME 2024, performance improves from 28% to 58% over 4,000 steps of smooth, monotonic training without the collapses or plateaus that typically motivate complex interventions. Perhaps most surprising: ablations show that adding "standard tricks" like explicit length penalties and robust verifiers actually degrades performance by collapsing exploration. The model naturally compresses responses from 8,000 to 4,000-5,000 tokens without any penalty term. The same hyperparameters transfer across both models without tuning. No per-model optimization required. Paper: https://t.co/88X69gfBbU Learn to build with AI agents in our academy: https://t.co/zQXQt0PMbG
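
The "basic GRPO" that the post refers to rests on a group-relative advantage: each sampled answer is scored against the other answers drawn for the same prompt. Below is a minimal sketch of that normalization only. It is not JustRL's code; the function name, the use of the population standard deviation, the `eps` value, and the example rewards are assumptions made for illustration.

```python
import statistics


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each rollout's reward against its own sampling group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Hypothetical verifier rewards for four sampled answers to one prompt.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
# A group where every answer gets the same reward carries no learning signal.
flat = group_relative_advantages([1.0, 1.0, 1.0])
print(advantages, flat)
```

Because the baseline is the group mean, no separate value network is needed, which fits the single-stage, fixed-hyperparameter recipe the post describes.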

Software agents can self-improve via self-play RL. Introducing Self-play SWE-RL (SSR): training a single LLM agent to self-play between bug-injection and bug-repair, grounded in real-world repositories, with no human-labeled issues or tests. 🧵

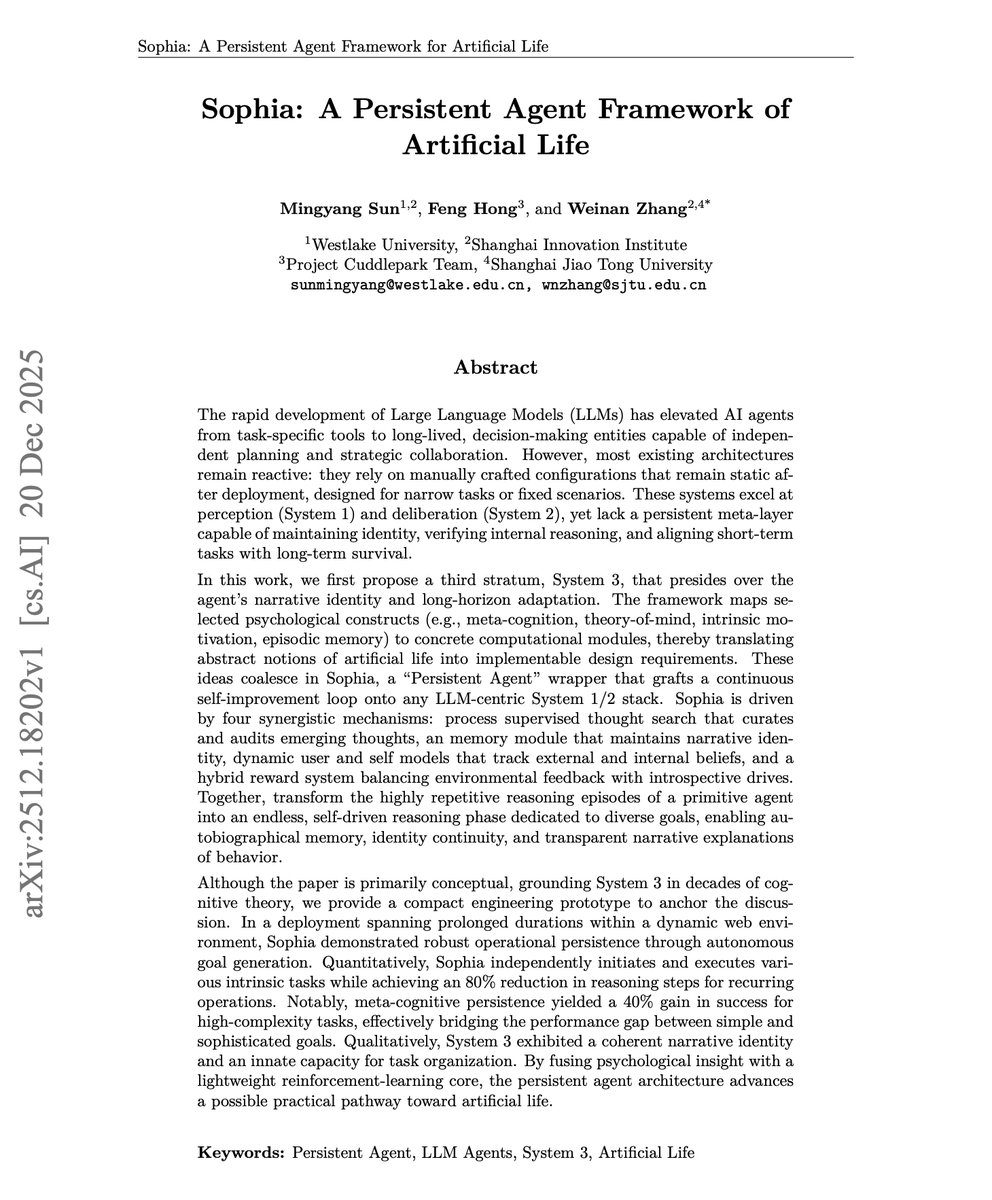

This paper is worth reading carefully. It introduces System 3 for AI Agents. The default approach to LLM agents today relies on System 1 for fast perception and System 2 for deliberate reasoning. But they remain static after deployment. No self-improvement. No identity continuity. No intrinsic motivation to learn beyond assigned tasks. This new research introduces Sophia, a persistent agent framework built on a proposed System 3: a meta-cognitive layer that maintains narrative identity, generates its own goals, and enables lifelong adaptation.
Artificial life requires four psychological foundations mapped to computational modules:
- Meta-cognition monitors and audits ongoing reasoning.
- Theory-of-mind models users' beliefs and intentions.
- Intrinsic motivation drives curiosity-based exploration.
- Episodic memory maintains autobiographical context across sessions.
Here is how it works:
> Process-Supervised Thought Search captures and validates reasoning traces.
> A Memory Module maintains a structured graph of goals and experiences.
> Self and User Models track capabilities and beliefs.
> A Hybrid Reward Module blends external task feedback with intrinsic signals like curiosity and mastery.
In a 36-hour continuous deployment, Sophia demonstrated persistent autonomy. During user idle periods, the agent shifted entirely to self-generated tasks. Success rate on hard tasks jumped from 20% to 60% through autonomous self-improvement. Reasoning steps for recurring problems dropped 80% through episodic memory retrieval. This moves agents from transient problem-solvers to adaptive entities with coherent identity, transparent introspection, and open-ended competency growth. Paper: https://t.co/Eyy7mI9P1i Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG