@askalphaxiv

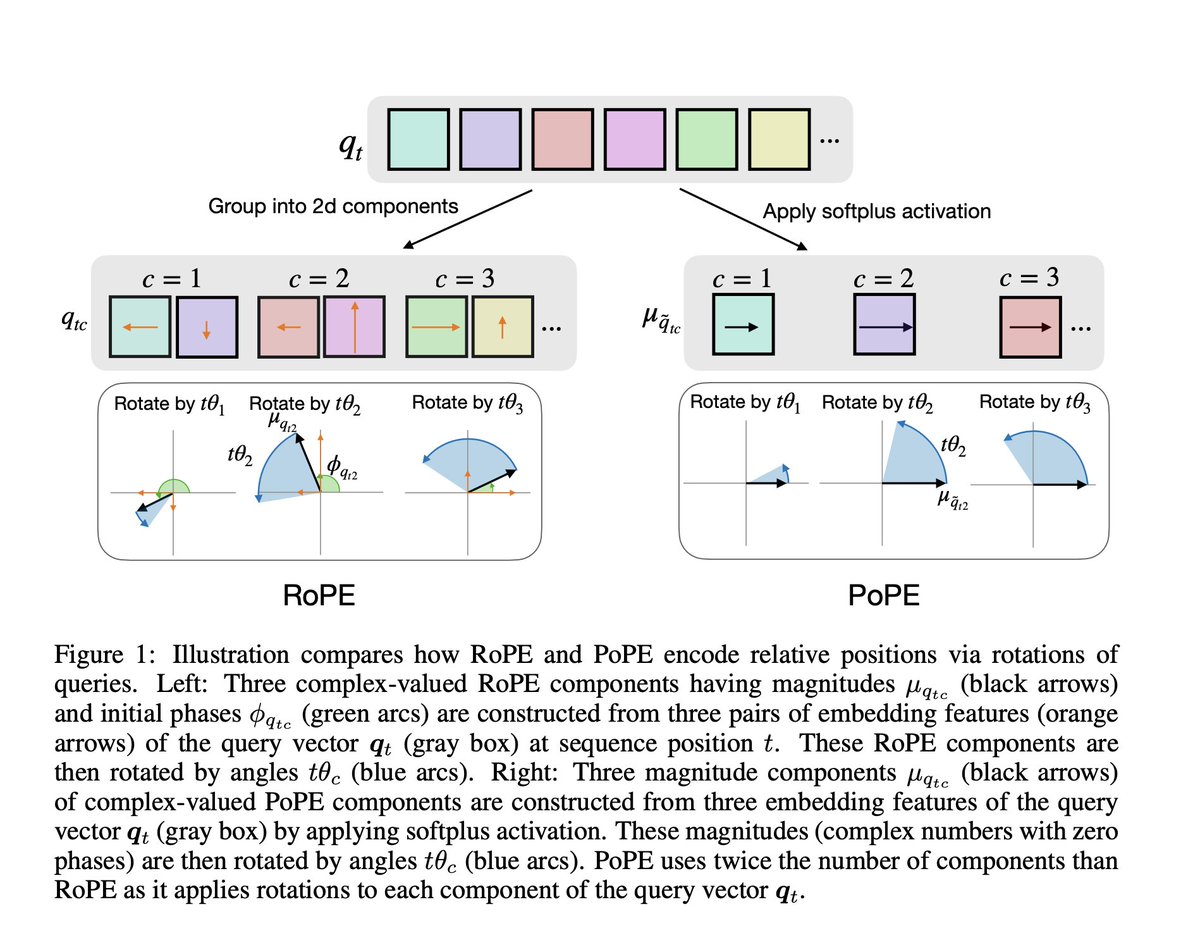

RoPE is fundamentally flawed. This paper shows that RoPE mixes up “what” a token is with “where” it is, so the model can’t reliably reason about relative positions independently of token identity. E.g., the effective notion of “3 tokens to the left” subtly depends on which letters are involved, so answering “what letter is 3 to the left of Z in the sequence ABSCOPZG?” becomes harder than it should be, because the positional ruler itself shifts with content.

So this paper proposes PoPE, which gives the model a fixed positional ruler by encoding where tokens are independently of what they are: “content” only controls match strength, while “position” alone controls distance. On the Indirect Indexing task, PoPE reaches 95% accuracy while RoPE is stuck at 11%.
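To make the “shifting ruler” concrete, here is a minimal single-frequency toy in Python. It assumes the standard complex-number view of RoPE: each 2-D pair of a query or key is one complex number whose magnitude and phase both come from content. Rotating by absolute position gives a logit of |q||k|·cos((m − n)θ + arg q − arg k), so the content phases act like an extra position offset. `polar_score` is a hypothetical, simplified decoupled variant in the spirit of the post (content sets only non-negative magnitudes, position alone sets the phase); it is not PoPE's exact formulation. `THETA`, `preferred_offset`, and the toy tokens are illustrative choices.

```python
import cmath
import math

THETA = 2 * math.pi / 16  # a single rotation frequency (illustrative choice)


def rope_score(q: complex, k: complex, m: int, n: int) -> float:
    """RoPE logit for one 2-D pair, written with complex numbers.

    Magnitude AND phase of q and k come from content. After rotating each by
    its absolute position, Re(q_rot * conj(k_rot)) equals
    |q||k| cos((m - n) * THETA + arg(q) - arg(k)).
    """
    q_rot = q * cmath.exp(1j * m * THETA)
    k_rot = k * cmath.exp(1j * n * THETA)
    return (q_rot * k_rot.conjugate()).real


def polar_score(q_mag: float, k_mag: float, m: int, n: int) -> float:
    """Hypothetical decoupled score: content only scales the match via
    non-negative magnitudes; the phase depends on position alone."""
    return q_mag * k_mag * math.cos((m - n) * THETA)


def preferred_offset(score, query_pos: int = 10) -> int:
    """Offset d in [-7, 7] (key sits d tokens left of the query) with the highest logit."""
    return max(range(-7, 8), key=lambda d: score(query_pos, query_pos - d))


# Same query, two keys with equal magnitude but different content phase.
query = 1 + 0j
key_a = cmath.exp(1j * 0.0)
key_b = cmath.exp(1j * 3 * THETA)

print(preferred_offset(lambda m, n: rope_score(query, key_a, m, n)))       # 0
print(preferred_offset(lambda m, n: rope_score(query, key_b, m, n)))       # 3
print(preferred_offset(lambda m, n: polar_score(1.0, abs(key_a), m, n)))   # 0
print(preferred_offset(lambda m, n: polar_score(2.5, abs(key_b), m, n)))   # 0
```

With identical positions and an identical query, the RoPE head's preferred offset moves from 0 to 3 only because the key's content phase changed, while the decoupled score peaks at the same offset no matter how the magnitudes change. Real models use many frequencies and learned projections, so this only illustrates the mechanism.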