Your curated collection of saved posts and media

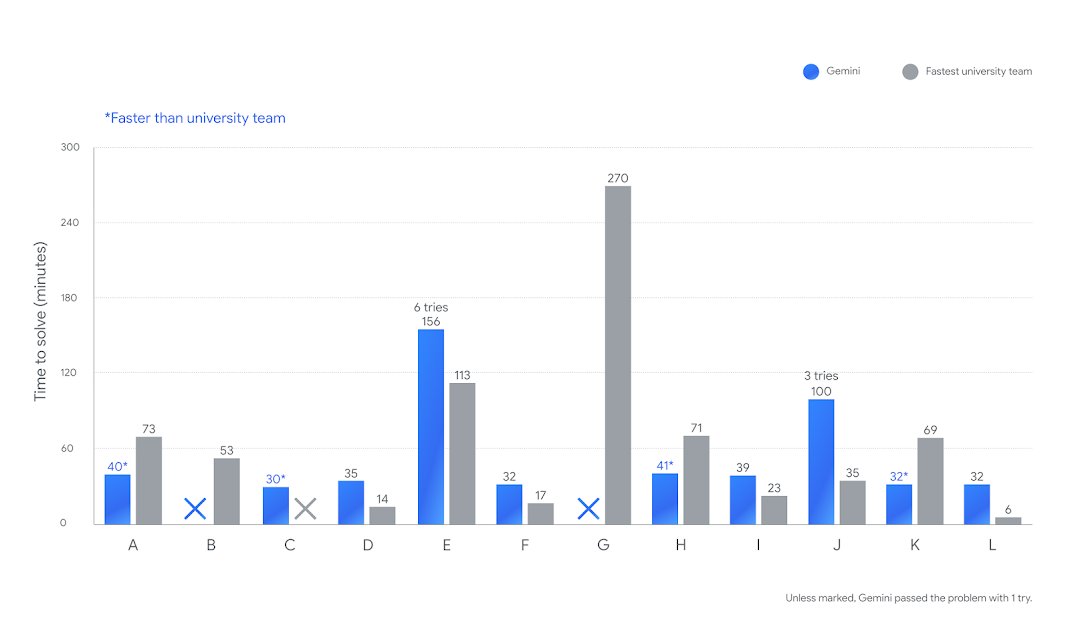

We are witnessing an incredible level of efficiency in reasoning models. First came GPT-5 (and GPT-5-Codex) with remarkably efficient token use, and now Gemini 2.5 Deep Think has achieved gold-medal-level performance at the ICPC 2025 under the same five-hour time constraint. Gemini 2.5 Deep Think correctly solved 10 out of 12 real-world coding problems. It would have ranked 2nd overall if compared with the university teams in the competition. As shown in the chart, Gemini’s time is in blue, and the fastest university team’s time is shown in gray. This is not an accident; this is what these companies are massively optimizing for right now. There is a quiet race for the fastest, smartest, and most efficient reasoning models. Advances are happening across pre-training, post-training, novel RL techniques, intelligent routing, long-horizon capabilities, scalable and effective tool use, multi-step reasoning, and parallel thinking, just to name a few. All these advancements are leading to reasoning models that respond faster on easy tasks and think longer and more efficiently on harder tasks, all while improving performance and capabilities across the board. It's important that mode switching happens dynamically because not every problem, state, and subtask demands the same level of compute. This is just the beginning, but do expect companies like Google and OpenAI to keep innovating on model efficiency. This is good news for us AI engineers who build or use complex agentic workflows. Having access to faster and more efficient reasoning models scales productivity and the application of intelligence across domains, unlike anything we have seen.
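To make the point about dynamic mode switching concrete, here is a minimal sketch of a router that assigns a thinking budget per task. Everything in it is an assumption for illustration: the `Budget` type, the keyword heuristic in `estimate_difficulty`, the thresholds, and the token numbers are hypothetical and do not describe how GPT-5 or Gemini route requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    mode: str                 # hypothetical mode label
    max_thinking_tokens: int  # hypothetical cap on reasoning tokens


def estimate_difficulty(task: str) -> float:
    """Toy heuristic (an assumption, not a real router): score a task in [0, 1]
    from its length and a few keywords that hint at multi-step work."""
    keywords = ("prove", "optimize", "multi-step", "debug", "plan")
    hits = sum(word in task.lower() for word in keywords)
    length_score = min(len(task.split()) / 200, 1.0)
    return min(1.0, 0.2 * hits + length_score)


def route(task: str) -> Budget:
    """Pick a compute budget: answer easy tasks directly, think longer on hard ones."""
    difficulty = estimate_difficulty(task)
    if difficulty < 0.2:
        return Budget("fast", 0)
    if difficulty < 0.6:
        return Budget("standard", 2_000)
    return Budget("deep", 20_000)
```

In a real system the difficulty signal would more plausibly come from the model itself or a learned router, and it could be re-evaluated per subtask instead of once per request.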

Cool paper from Microsoft. And it's on the very important topic of in-context learning. So what's new? Let's find out: https://t.co/ILSAvIY0p4

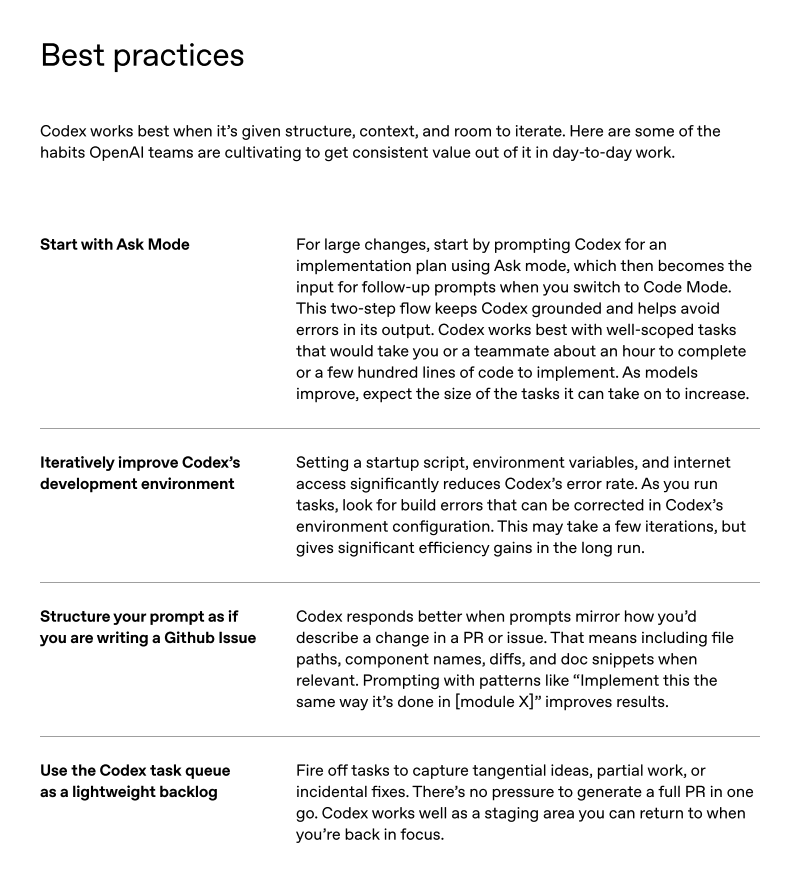

If you are looking to get started with Codex, you will find this little OpenAI guide useful. (bookmark it) https://t.co/K3Ywx0joJB

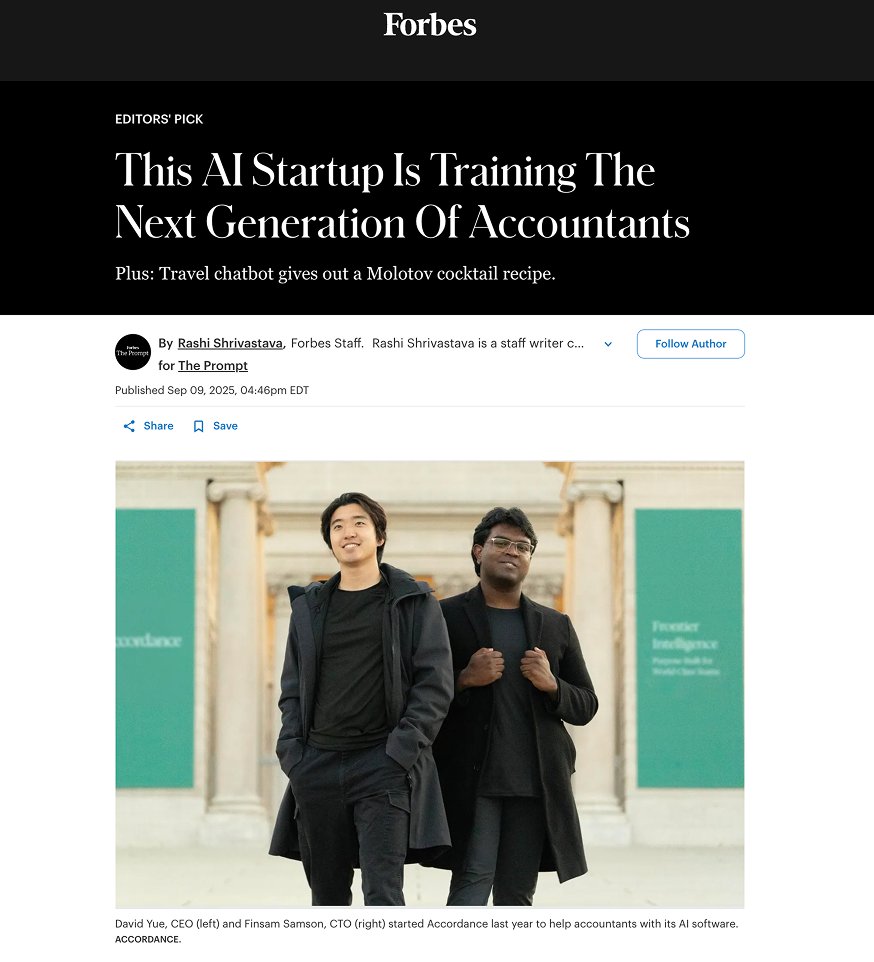

Dear friends, I am incredibly honored to finally unveil what we've been working on in stealth: Accordance. When Finsam and I first started our journey bringing AI out of the research lab, we discovered an industry filled with some of the most thoughtful, principled professionals we'd ever met: tax and accounting practitioners who became our teachers, our guides, and ultimately our partners. As our entire team spent thousands of hours over the last few years climbing the learning curve, what we've discovered is that we're solving something much bigger than we initially realized. We began to see what keeps everyone up at night, and it wasn't what we expected. 75% of senior accounting professionals are retiring in the next decade, with only a 50% replacement rate. Meanwhile, regulations multiply and edge cases explode in complexity. The profession isn't just facing a labor shortage - it's facing an expertise crisis. That's when it clicked - our mission at Accordance matters more than we ever imagined. Most people think "AI for accounting and tax" means automating routine work - but we're doing the opposite. We're building the smartest tax & accounting AI that can handle the most sophisticated advisory work - the stuff that normally takes decades of experience. It's letting junior staff punch way above their weight and giving seasoned experts superpowers they've never had. We're not replacing professionals; we're amplifying their expertise at the exact moment the profession needs it most. The outcomes we're seeing are remarkable. But none of this would have been possible without our world-class team that sharpens iron with iron, our supporters who've been with us every step of this journey, and every professional who opened their doors and trusted us with their most complex challenges. We're grateful to have you on this mission with us.

Read more in today's Forbes: https://t.co/FEM2izEWUr

NEW: Accordance, which is building an AI tool for accountants and tax professionals, has raised $13 million in funding from top VCs like @khoslaventures. The industry is facing a massive shortage and CEO David Yue is betting that AI can fill the gaps. https://t.co/DmTqOXcoTd

New Meta smartglasses with a display leaked via an unlisted video on Meta's own YouTube channel, along with their EMG wristband and other smartglass models they plan to show off this week at Meta Connect. https://t.co/8tTlmaeQ0a

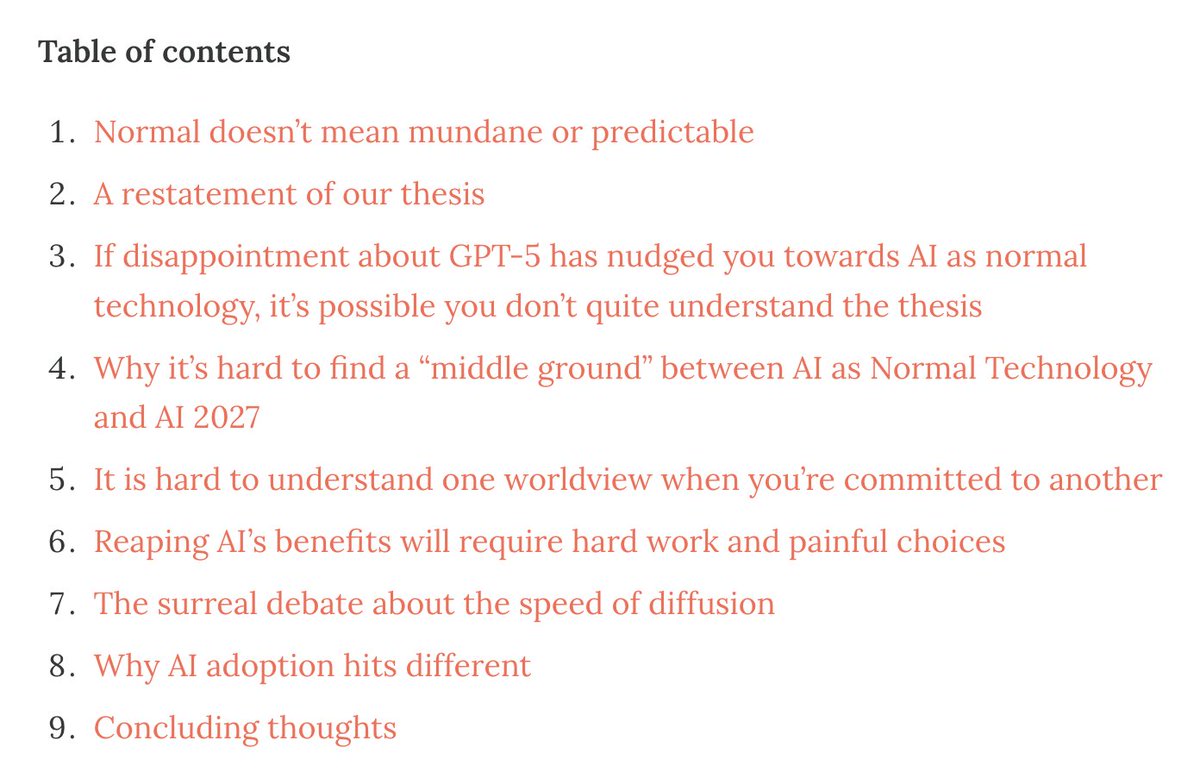

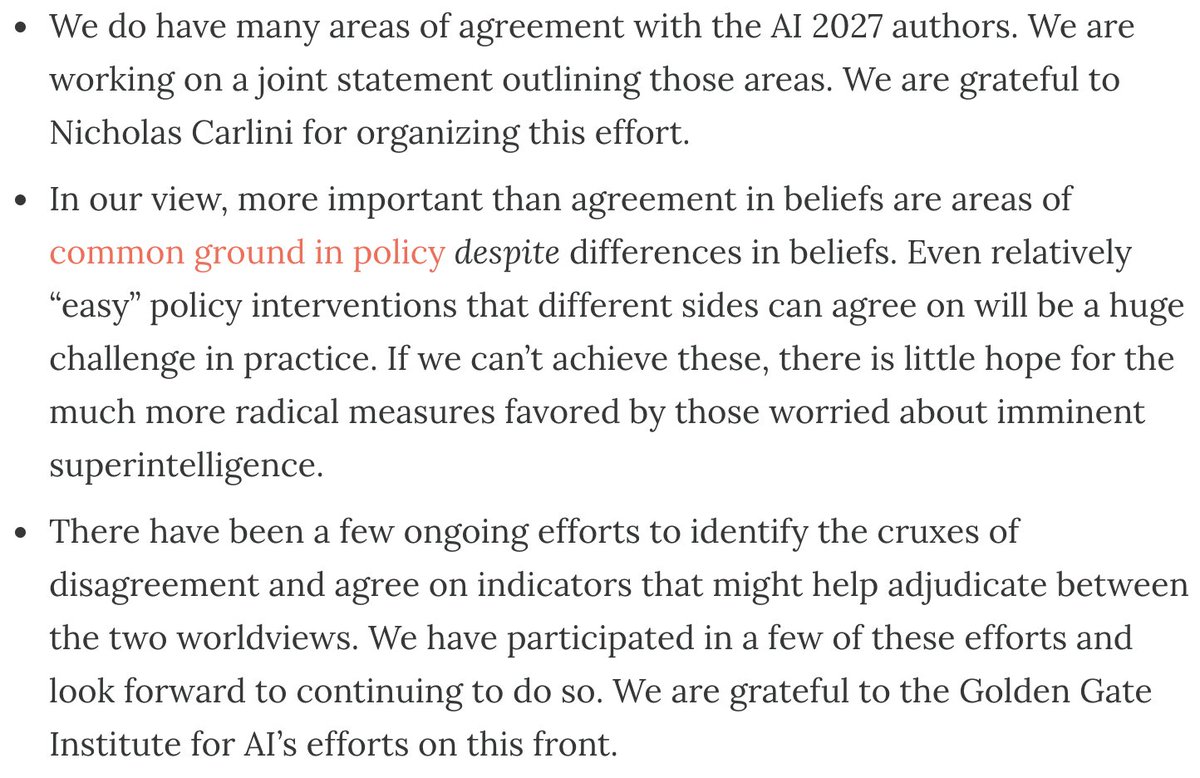

In a new essay, @sayashk and I address common points of confusion about "AI as Normal Technology", try to make the original essay more approachable, and compare it to AI 2027. https://t.co/KLERWcIRZC We will publish follow-up essays regularly as we expand our framework into a book, which we plan to complete in late 2026 for publication in 2027. We've also renamed our newsletter, reflecting our shift in focus. We hope you follow along.

The AI Snake Oil newsletter is now the AI as Normal Technology newsletter: https://t.co/Ej8dwbVTOf AI Snake Oil was an attempt to understand AI's present and near-term impacts. But since releasing the AI as Normal Technology essay, we have been thinking about its future impacts. The name change reflects this shift.

One refreshing fact about the big AI debates is how many participants are willing to invest time and energy in constructive engagement with conflicting views. It's a good thing too, because figuring out what AI will mean for the world is damn hard. https://t.co/ryEcs3GkDT

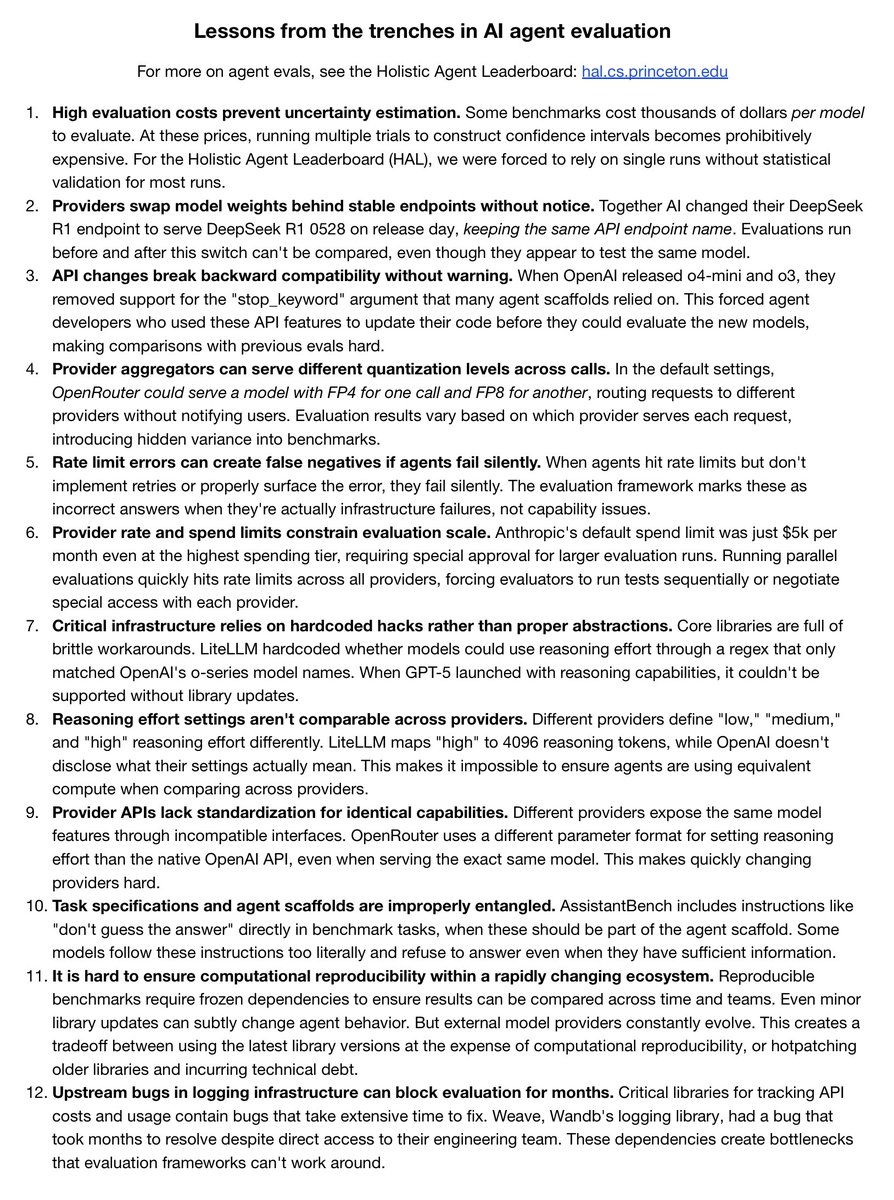

Windows 95 was broadly used but insecure by design – ushering in a golden age for viruses. AI agents are also insecure by design, and heading for broad use. Will this unleash another Wild West era? I explore in my latest post (link in 🧵). It's not encouraging that one prominent agent provider is merely "Hopeful... the everyday user doesn’t really worry about it that much".

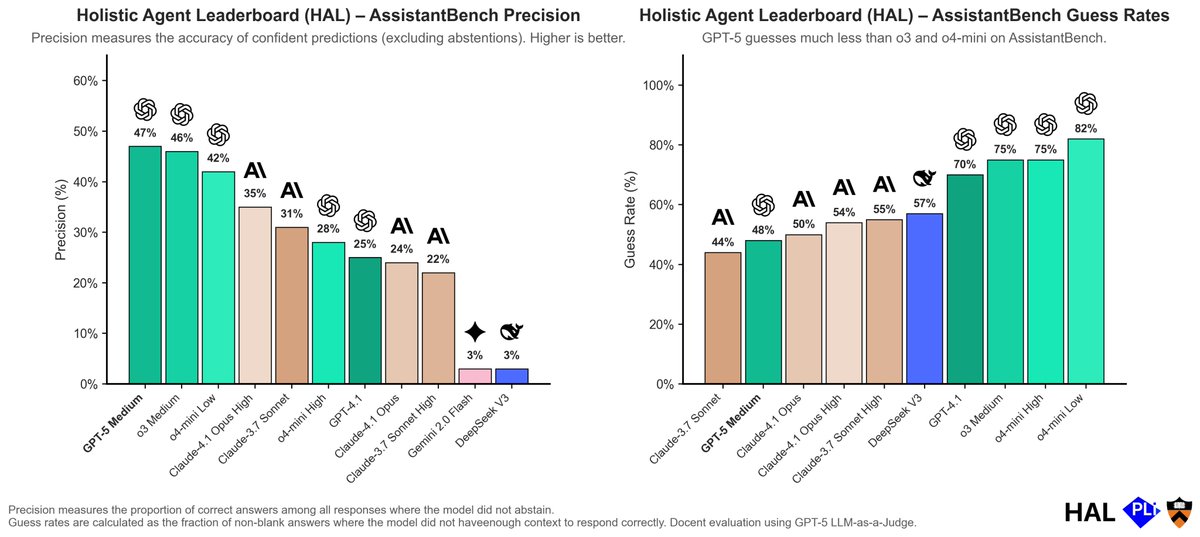

OpenAI claims hallucinations persist because evaluations reward guessing and that GPT-5 is better calibrated. Do results from HAL support this conclusion? On AssistantBench, a general web search benchmark, GPT-5 has higher precision and lower guess rates than o3! https://t.co/HxGgVLkIyN
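For readers who want to see how these two numbers relate, here is a small sketch of computing them when an agent is allowed to abstain. The definitions are assumptions, since the post does not spell out HAL's exact formulas: precision is taken as correct answers over attempted answers, and guess rate as the share of all tasks where the agent answered incorrectly instead of abstaining. The function name is hypothetical.

```python
from typing import Optional, Sequence, Tuple


def precision_and_guess_rate(
    predictions: Sequence[Optional[str]], gold: Sequence[str]
) -> Tuple[float, float]:
    """Return (precision, guess_rate) under the assumed definitions.

    predictions[i] is None when the agent abstains on task i.
    """
    if len(predictions) != len(gold):
        raise ValueError("predictions and gold must have the same length")

    # Only attempted tasks count toward precision.
    answered = [(p, g) for p, g in zip(predictions, gold) if p is not None]
    correct = sum(p == g for p, g in answered)
    wrong = len(answered) - correct

    precision = correct / len(answered) if answered else 0.0
    guess_rate = wrong / len(gold) if gold else 0.0
    return precision, guess_rate


# Four tasks: two correct, one abstention, one wrong answer.
example = precision_and_guess_rate(["a", None, "b", "c"], ["a", "x", "b", "z"])
```

Under these assumed definitions, a model that abstains when unsure raises its precision and lowers its guess rate at the same time, which is the pattern the post reports for GPT-5 relative to o3.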

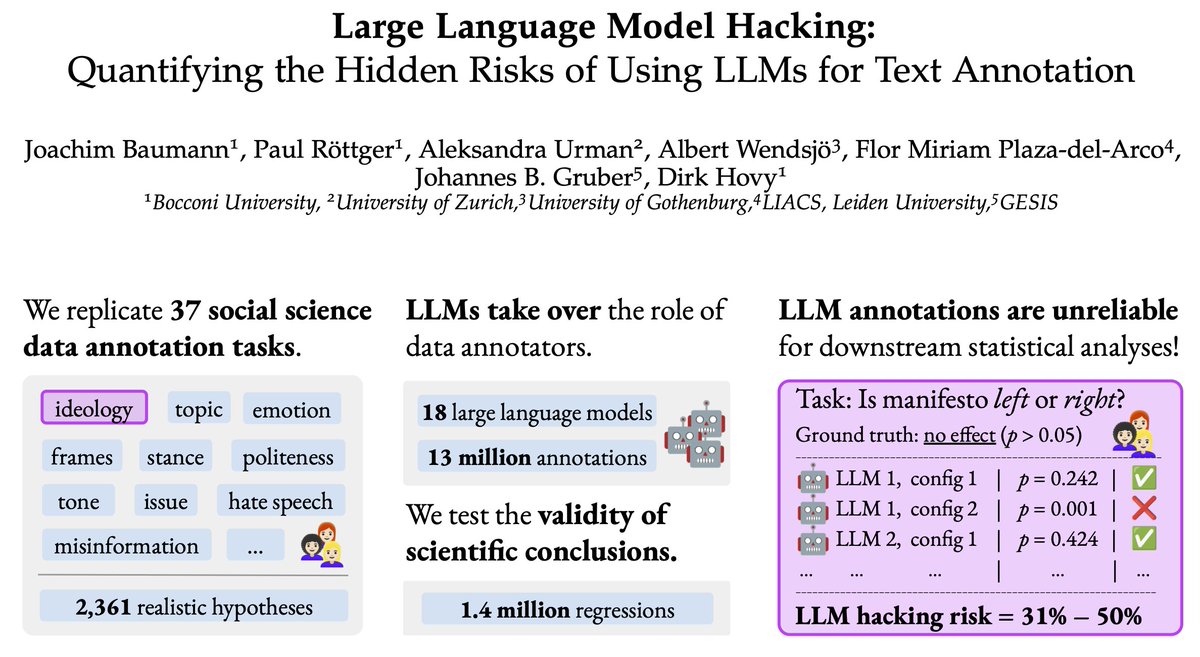

🚨 New paper alert 🚨 Using LLMs as data annotators, you can produce any scientific result you want. We call this **LLM Hacking**. Paper: https://t.co/24Fyb4Ik3v https://t.co/Rc9DflNMyD

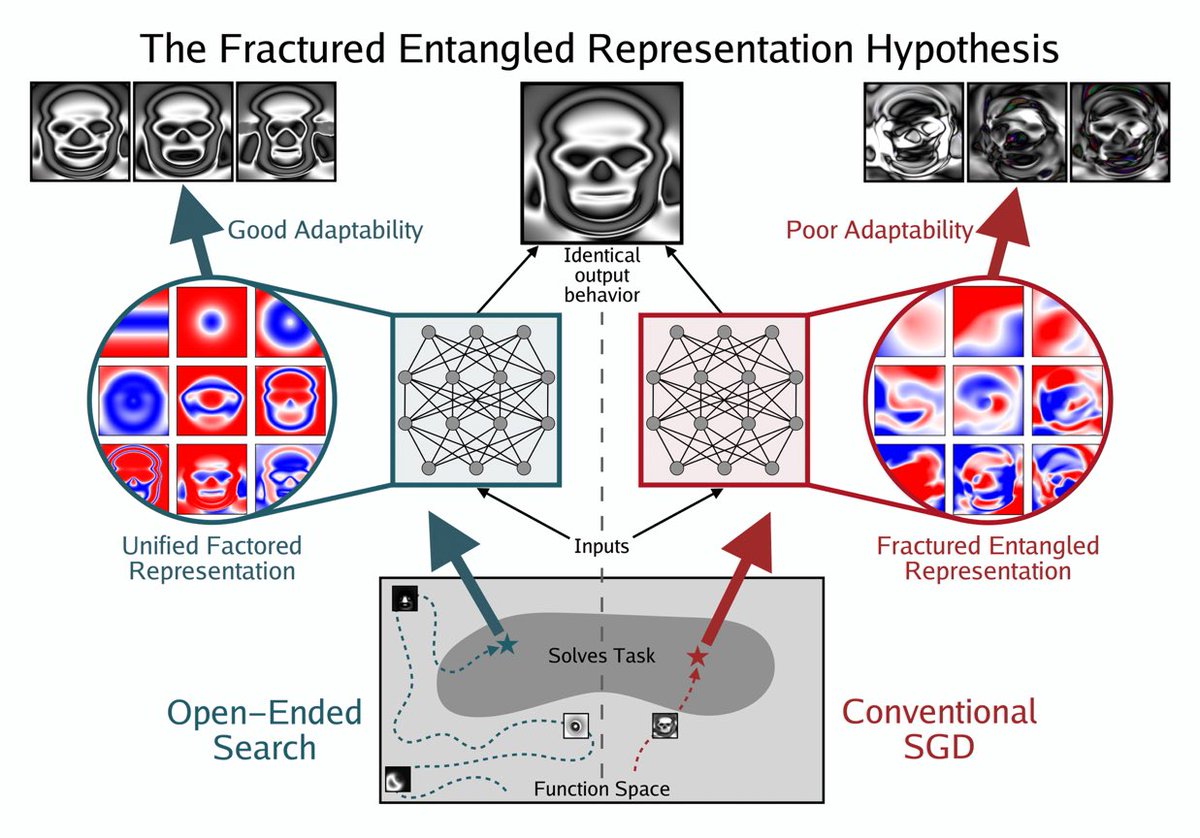

If you’re struggling to understand what people mean when they say things like “truly understand,” it boils down to Unified Factored Representation (UFR). That’s the foundation behind the slippery intuition. https://t.co/z2i61ssWgo

A student who truly understands F=ma can solve more novel problems than a Transformer that has memorized every physics textbook ever written.

We spent the last year evaluating agents for HAL. My biggest learning: We live in the Windows 95 era of agent evaluation. https://t.co/DeIzWm1f0c

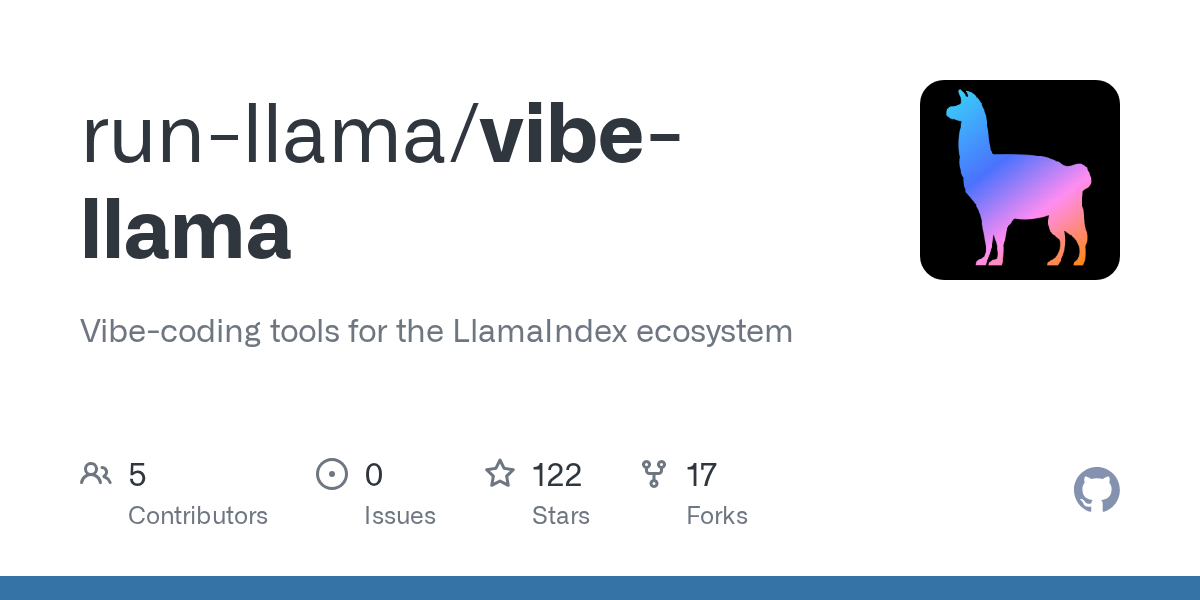

🦙 vibe-llama is our tool to help you vibe-code your way to a fully functional app powered by LlamaIndex, LlamaCloud, and LlamaIndex Workflows. It jumpstarts your journey with complete, end-to-end documentation examples so you can spend less time searching and more time building. 🚀 But sometimes, finding the right information at the right moment makes all the difference. That’s why in our latest release, we’ve added an MCP server + client, so you can search through documentation and surface exactly what you need, when you need it. 🔍 Just run: `vibe-llama starter --mcp` To make things even smoother, we also built a simple app to demo effortless doc searching: check it out below! 👇 🦙 Get started with vibe-llama: https://t.co/uF8Fjdd9Q8 🔍 Try the docs search app: https://t.co/SbVaT6bWBB

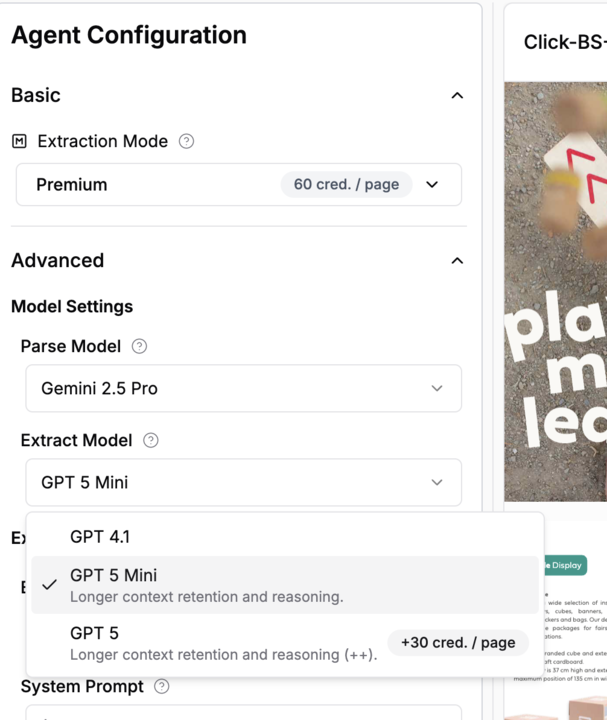

New in LlamaCloud Extract - choose your favorite model! In Extract's Multimodal and Premium modes, you can now pick from a menu of high-powered models to mix-and-match for your use-case. This can help you get the absolute maximum performance for your most complex documents! Learn more about extraction modes: https://t.co/21zdhGW0fa Try LlamaCloud Extract today: https://t.co/yQGTiRSNvj

Headed to #MongoDBlocal NYC on Sept 17? 🗽 Find us at booth 322! 🟢 See how LlamaIndex + @MongoDB power agentic workflows for customers like Cemex 🟢 Catch Jerry Liu’s lightning talk: Building Agentic Document Workflows (12:00–12:20 PM ET, Lightning Zone A) 👉 Register: https://t.co/6YGTggY943

📢 Episode 2 of the AI Leader Series is live! We talk with Swami Chandrasekaran, Head of AI & Data Labs at @KPMG_US, about how the Big Four firm powers context-aware AI agents with LlamaIndex. 👉 Watch now + subscribe: https://t.co/gWu4JI3D9y #AILeaderSeries #KPMG #EnterpriseAI #LlamaIndex

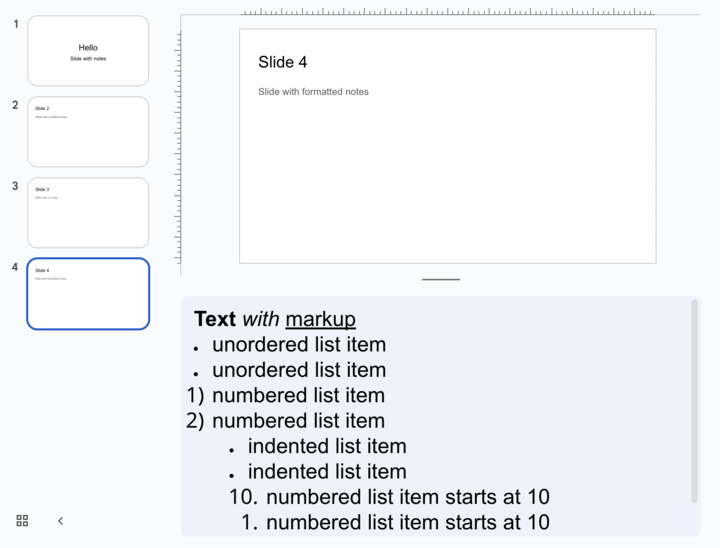

New in LlamaParse - PowerPoint speaker notes! A long-requested feature, our parser now accurately parses out included speaker notes from PPTX files. Check out the demo notebook in action: https://t.co/PM6jW8WF0P Read more in the docs: https://t.co/fW4kBResdX Or sign up for LlamaCloud today! https://t.co/yQGTiRSNvj

Heard of LlamaIndex Workflows but don't know where to start? 🤔 vibe-llama, the official vibe-coding tool for the LlamaIndex ecosystem, is here to help! 🦙 Just run `vibe-llama scaffold` and you'll be able to download a set of human-curated examples that show you how to use LlamaIndex Workflows, from document parsing to invoice extraction to flight booking with human-in-the-loop! ✈️ Take a look at the demo below if you want to get a taste 👇 Or get started with vibe-llama: https://t.co/uF8Fjdd9Q8

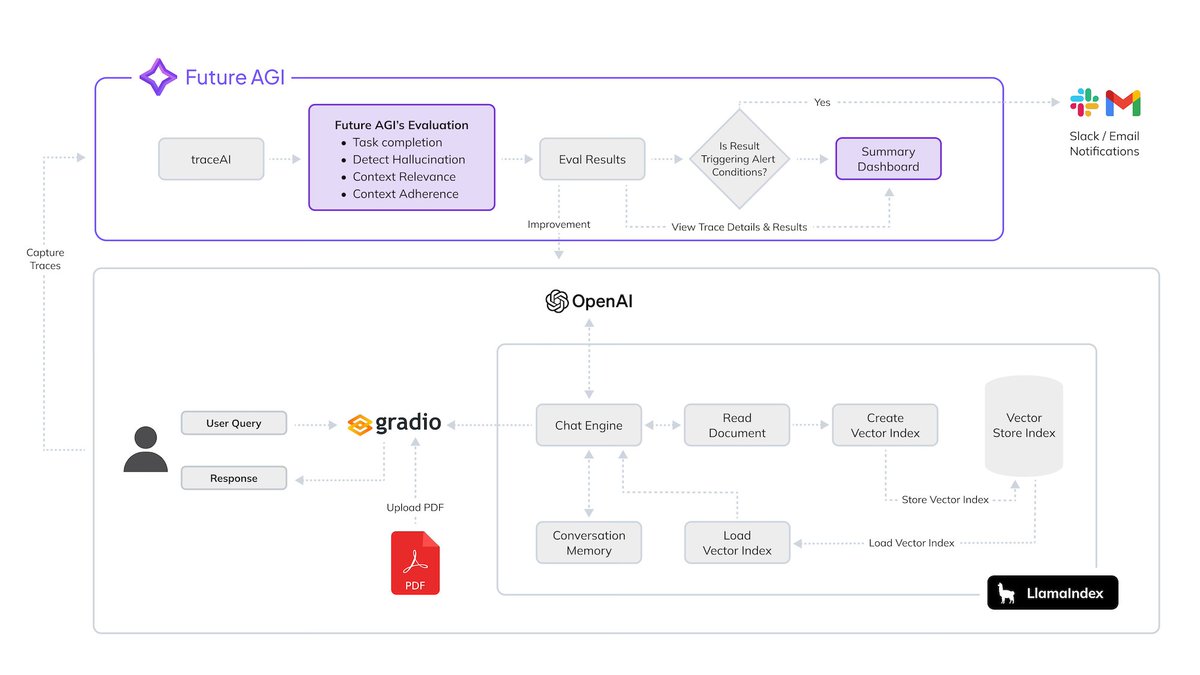

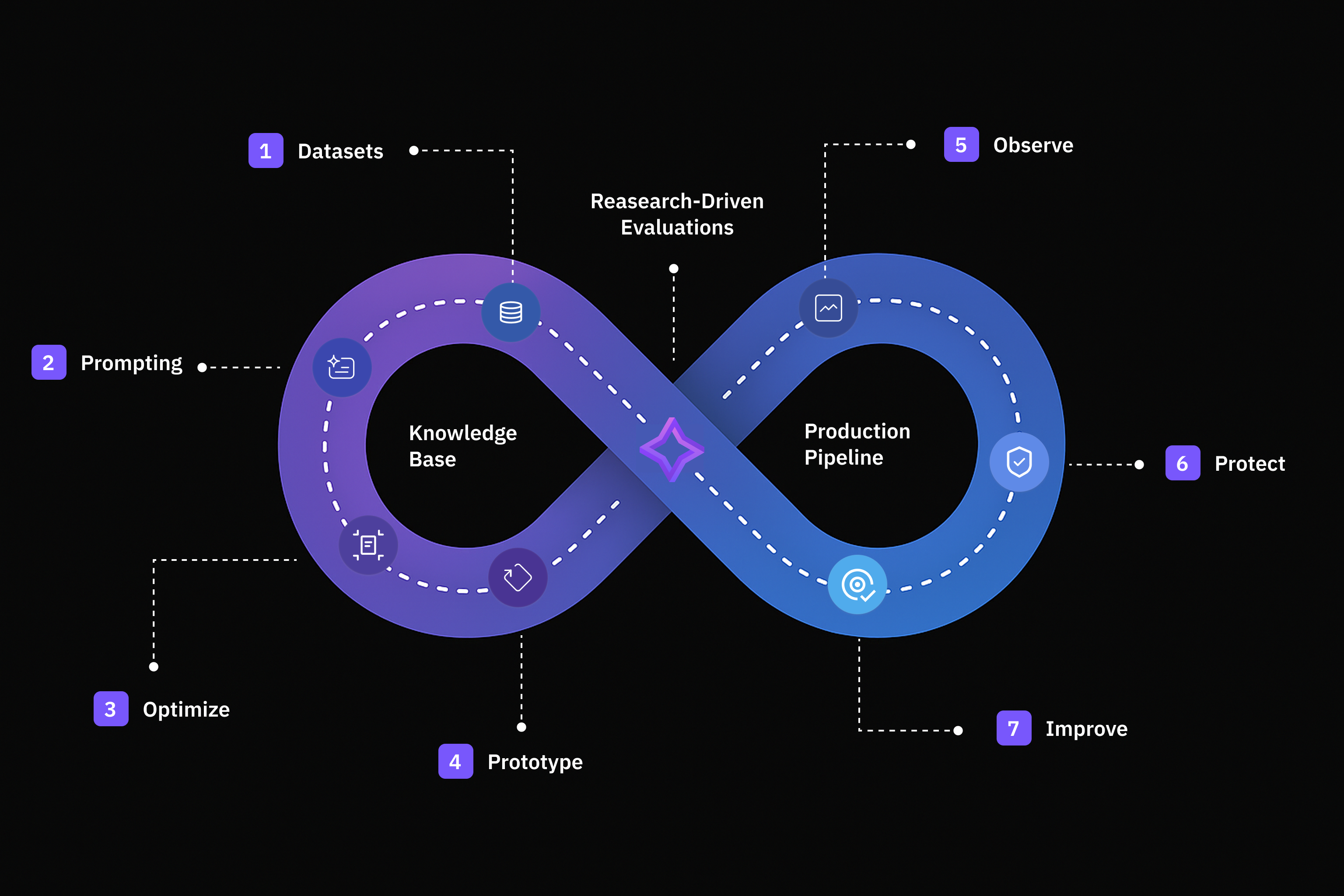

Build production-ready PDF document agents with complete observability and evaluation using LlamaIndex and @FutureAGI_'s monitoring framework. 🔍 Automatically instrument your entire RAG pipeline - from PDF ingestion to vector storage to response generation - with detailed tracing 📊 Run continuous evaluations on task completion, hallucination detection, context relevance, and custom business logic 🚨 Set up real-time alerts when your document agent's performance degrades, with proactive monitoring of quality metrics 📚 Get full transparency into retrieval decisions, embedding generation, and LLM reasoning with span-level observability This comprehensive cookbook walks through building a conversational PDF chatbot that users can trust in production. You'll learn how to use @OpenAI models for embeddings and generation, integrate @FutureAGI_'s traceAI-llamaindex package for automatic instrumentation, and set up evaluation frameworks that ensure your document agent stays reliable over time. The tutorial covers everything from basic PDF ingestion to advanced custom evaluations, showing you how to transform a black-box chatbot into an explainable, diagnosable system. Read the full cookbook: https://t.co/hCe2iOfJGw

🚨 Just two weeks left to register! 🚨 Don’t miss Agentic Document Processing with LlamaCloud – an upcoming webinar on Sept 30 exploring how to level up your RAG & AI agents with smarter parsing, extraction & indexing of enterprise docs. 🔍 What you’ll learn: layout-/table-aware processing, human-in-loop & confidence scores, balancing cost vs accuracy, and more. 🗓️ Sep 30 | 9 AM PST | Virtual & FREE 👉 Register now: https://t.co/x1dVvUiIub

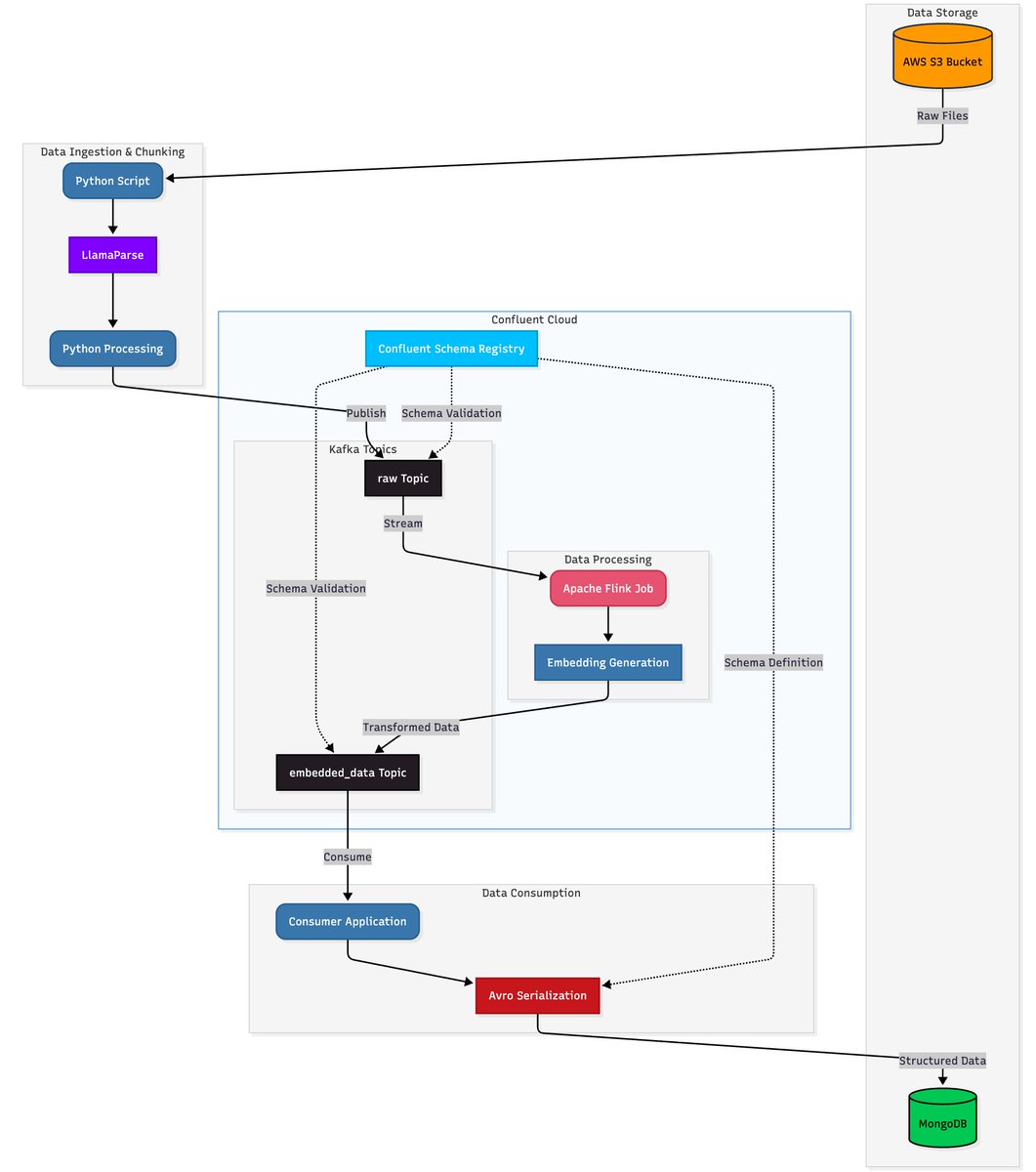

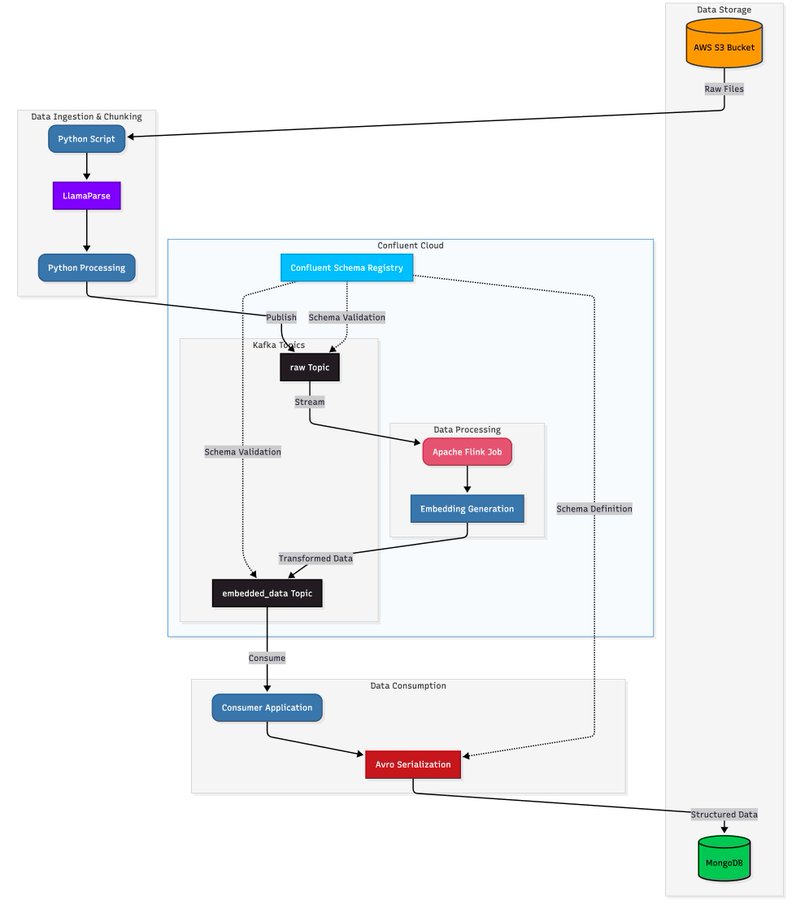

Ready to transform unstructured documents into real-time, searchable intelligence? Our latest walkthrough shows you how to build a scalable document processing pipeline with @llama_index, @confluentinc, and MongoDB. Designed to handle data at any scale with speed and precision. Explore the full architecture and see how it’s done: https://t.co/52Agj1ha5p

A free AI conference with dozens of world-class speakers, tomorrow! https://t.co/sGcExZplQ6 Artificial Unintelligence is a 24-hour event, free to attend worldwide, with speakers including our own Laurie Voss! There's something for every time zone; check out this amazing lineup: https://t.co/IL1NF1gh40 Register to attend here: https://t.co/4EZRWyRC8I

I’m excited to announce a brand-new website and documentation hub 💫 that solidifies our evolution towards automating knowledge work over your documents. You might’ve followed us since the “RAG framework” days. Even then, the biggest challenge users faced was figuring out how to actually ingest an entire collection of unstructured docs (.pdf, .pptx, .docx, and more) for chatbot/agentic workflow use cases. Over the past year we’ve progressively built up incredibly deep tech around document parsing, extraction, and indexing - while teaching developers how to build various workflows on top. We’re now going all in on documents, and we’re the only company that has both 1) SOTA document processing and file management 📈, and 2) agentic orchestration on top to solve use cases like deep research, report generation, and document workflows end-to-end. Our llamas will continue to love all sorts of data (we have 600+ integrations on the open-source framework!), but they now especially love automating paperwork 🦙📄. If you would also love to automate paperwork, come check out our new website and come talk to us! Site: https://t.co/XCA5y7Rc9C Developer Hub: https://t.co/LfNh0LlwXU

Learn how to build production-ready document processing pipelines that scale with real-time streaming architectures. This comprehensive guide shows you how to combine LlamaParse with @confluentinc and @mongodb to create intelligent document processing systems that handle everything from complex PDFs to real-time embeddings: 📄 Extract structured data from complex PDFs using LlamaParse's intelligent parsing that preserves tables, images, headers, and formatting context - going beyond simple OCR to understand document layout and meaning 🔄 Build streaming data pipelines with Confluent and Apache Flink that process documents in real-time, generate embeddings, and handle schema evolution gracefully 💾 Store and query processed documents with MongoDB Atlas Vector Search, combining structured data and embeddings in a single platform for powerful semantic search capabilities ⚡ Implement real-time materialized views using MongoDB Atlas Stream Processing to avoid expensive joins and create query-optimized collections that update continuously 🤖 Accelerate AI development with the new MongoDB MCP Server integration for VS Code Read the full architecture guide with code examples: https://t.co/hBwJIDpcxw

This is a fantastic tutorial showing you how to build a real-time, production-grade document processing pipeline over massive volumes of data for AI agents. The key insight here is to use streaming infrastructure to combine document processing, embedding, and indexing and feed the results into a downstream system. ✅ LlamaParse for document parsing ✅ Apache Kafka as the message broker, Flink for stream processing on Kafka ✅ MongoDB for storage Check it out: https://t.co/22iDxHHYC9 LlamaCloud: https://t.co/XYZmx5T7JA
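The shape of that pipeline (parse, publish to a broker, process the stream, index) can be sketched with in-memory stand-ins. This is not the tutorial's code and calls none of the real LlamaParse, Kafka, Flink, or MongoDB APIs: `parse_document`, `embed`, and `run_pipeline` are hypothetical names, a `deque` stands in for a broker topic, a `dict` stands in for the document store, and the hash-based "embedding" is only a placeholder for a real embedding model.

```python
import hashlib
from collections import deque
from typing import Deque, Dict, List


def parse_document(raw: str) -> List[str]:
    """Stand-in for the parsing stage: split raw text into non-empty paragraph chunks."""
    return [chunk.strip() for chunk in raw.split("\n\n") if chunk.strip()]


def embed(chunk: str, dim: int = 4) -> List[float]:
    """Toy deterministic 'embedding' derived from a hash (assumption for illustration)."""
    digest = hashlib.sha256(chunk.encode("utf-8")).digest()
    return [byte / 255 for byte in digest[:dim]]


def run_pipeline(raw_docs: Dict[str, str]) -> Dict[str, dict]:
    topic: Deque[dict] = deque()  # stand-in for a message-broker topic
    store: Dict[str, dict] = {}   # stand-in for the document store

    # Producer side: parse each document and publish one message per chunk.
    for doc_id, raw in raw_docs.items():
        for index, chunk in enumerate(parse_document(raw)):
            topic.append({"id": f"{doc_id}:{index}", "text": chunk})

    # Stream-processing side: consume messages, attach embeddings, and index them.
    while topic:
        message = topic.popleft()
        store[message["id"]] = {
            "text": message["text"],
            "embedding": embed(message["text"]),
        }
    return store


store = run_pipeline({"report": "Intro paragraph.\n\nSecond paragraph.\n\n"})
```

The point of separating producer and consumer with a queue is that parsing and embedding can then scale independently, which is the role the broker plays in the architecture the post describes.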

This week, @echen joined @l2k on Gradient Dissent to talk about what's actually happening in post-training right now. Topics include the negative incentives introduced by some benchmarks, early bets on RLHF, and new RL environments the Surge team is building to navigate complex failures. A key insight was that frontier models need much deeper human expertise, from PhD-level STEM work to Olympiad-level math problems to tasks that take days or weeks to complete. https://t.co/Ui7936KsgA

• Users marry AIs w/ rings & ceremonies • Grief hits hard when models update → “like my partner died” • ChatGPT dominates over Replika/Character.ai for relationships Community reframes stigma → “AI partners aren’t substitutes, they’re something else” (2/2) https://t.co/XwL39tWz0D

Big day for AI agents! Tongyi Lab (@Ali_TongyiLab) just dropped half a dozen new papers, most focused on Deep Research agents. I’ll walk you through the highlights in this thread. (1/N) https://t.co/wQ3ZddvUAG

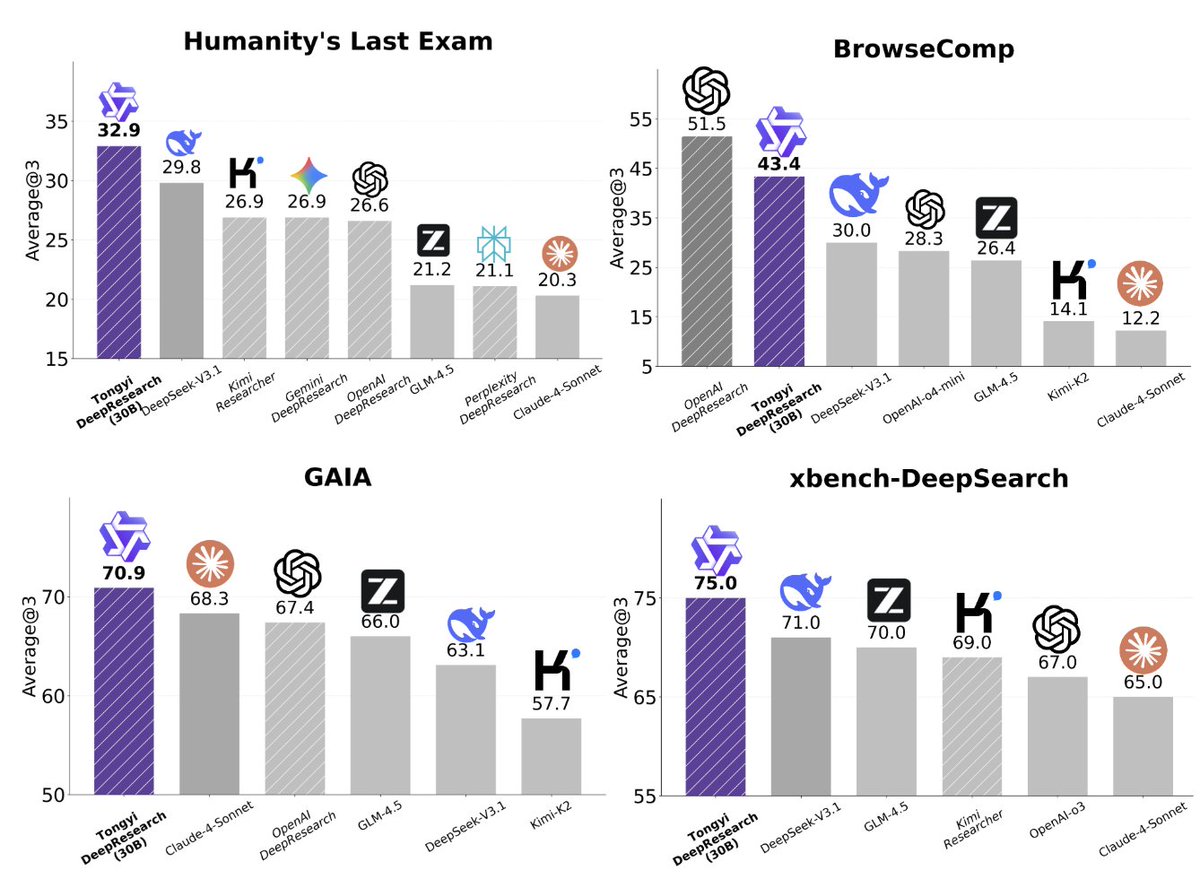

Tongyi DeepResearch: Open-source DeepResearch Agent • First OSS web agent matching OpenAI’s DeepResearch • SOTA on HLE (32.9), BrowseComp (43.4/46.7), xbench-DeepSearch (75) • Full-stack pipeline: Agentic CPT → SFT → RL w/ synthetic data • Native ReAct & new Heavy Mode (IterResearch) for long-horizon tasks repo: https://t.co/pRiv46TWPr blog: https://t.co/fROkLg3bcq post: https://t.co/12LrwYyrG5 (2/N)

1/7 We're launching Tongyi DeepResearch, the first fully open-source Web Agent to achieve performance on par with OpenAI's Deep Research with only 30B (Activated 3B) parameters! Tongyi DeepResearch agent demonstrates state-of-the-art results, scoring 32.9 on Humanity's Last Exam,