@joabaum

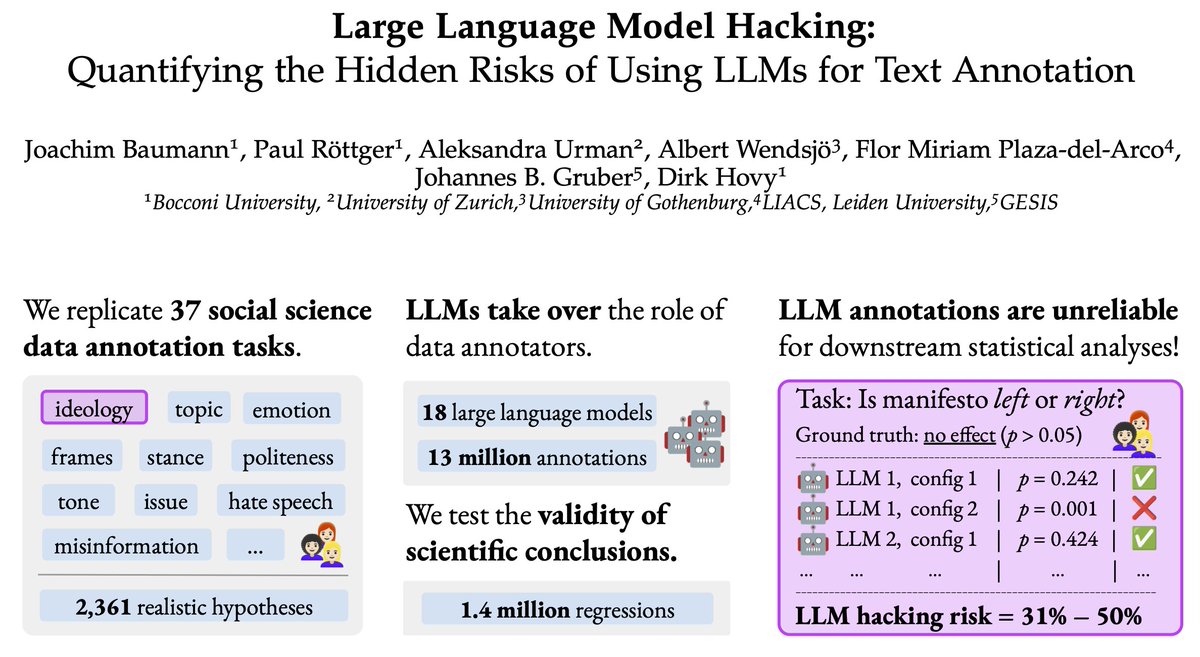

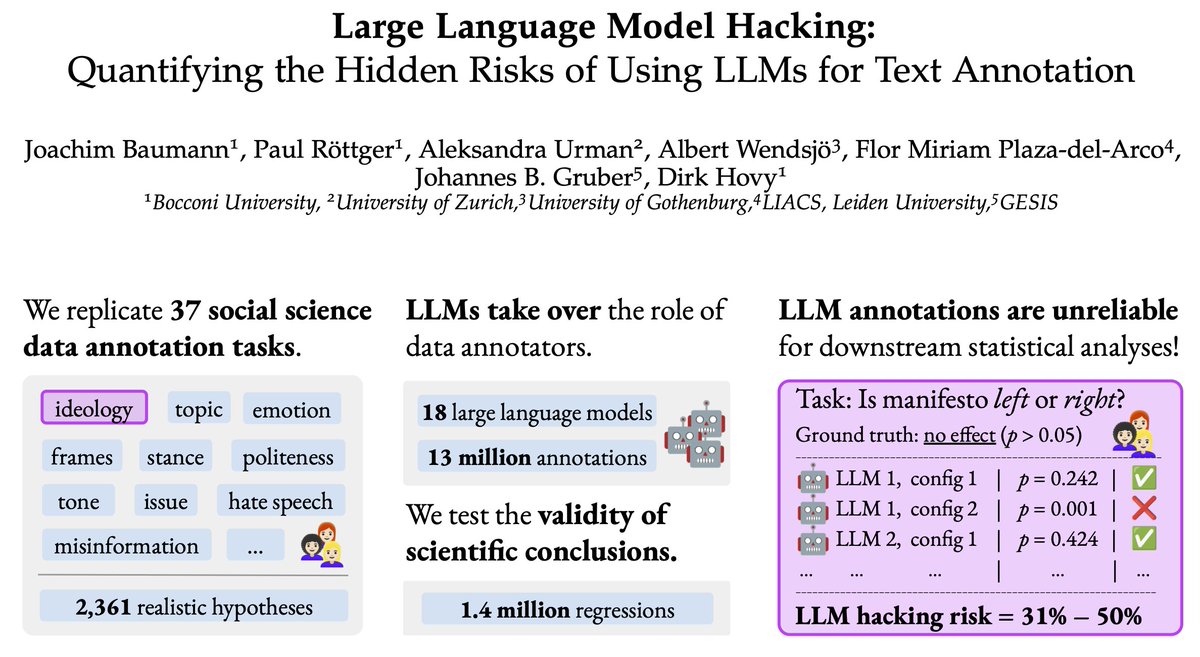

🚨 New paper alert 🚨 Using LLMs as data annotators, you can produce any scientific result you want. We call this **LLM Hacking**.

Paper: https://t.co/24Fyb4Ik3v https://t.co/Rc9DflNMyD

{

"media": [

{

"url": "https://crmoxkoizveukayfjuyo.supabase.co/storage/v1/object/public/media/posts/1966454537922793979/media_0.jpg?",

"media_url": "https://crmoxkoizveukayfjuyo.supabase.co/storage/v1/object/public/media/posts/1966454537922793979/media_0.jpg?",

"type": "photo",

"filename": "media_0.jpg"

}

],

"processed_at": "2025-09-18T13:49:23.793021",

"pipeline_version": "2.0"

}

{

"type": "tweet",

"id": "1966454537922793979",

"url": "https://x.com/joabaum/status/1966454537922793979",

"twitterUrl": "https://twitter.com/joabaum/status/1966454537922793979",

"text": "🚨 New paper alert 🚨 Using LLMs as data annotators, you can produce any scientific result you want. We call this **LLM Hacking**.\n\nPaper: https://t.co/24Fyb4Ik3v https://t.co/Rc9DflNMyD",

"source": "Twitter for iPhone",

"retweetCount": 101,

"replyCount": 13,

"likeCount": 504,

"quoteCount": 10,

"viewCount": 44963,

"createdAt": "Fri Sep 12 10:51:11 +0000 2025",

"lang": "en",

"bookmarkCount": 374,

"isReply": false,

"inReplyToId": null,

"conversationId": "1966454537922793979",

"displayTextRange": [

0,

160

],

"inReplyToUserId": null,

"inReplyToUsername": null,

"author": {

"type": "user",

"userName": "joabaum",

"url": "https://x.com/joabaum",

"twitterUrl": "https://twitter.com/joabaum",

"id": "1360270456985690113",

"name": "Joachim Baumann",

"isVerified": false,

"isBlueVerified": false,

"verifiedType": null,

"profilePicture": "https://pbs.twimg.com/profile_images/1745519070017937408/ynVm4tXS_normal.jpg",

"coverPicture": "",

"description": "",

"location": "Zurich, Switzerland",

"followers": 175,

"following": 797,

"status": "",

"canDm": false,

"canMediaTag": true,

"createdAt": "Fri Feb 12 17:08:15 +0000 2021",

"entities": {

"description": {

"urls": []

},

"url": {}

},

"fastFollowersCount": 0,

"favouritesCount": 51,

"hasCustomTimelines": true,

"isTranslator": false,

"mediaCount": 2,

"statusesCount": 31,

"withheldInCountries": [],

"affiliatesHighlightedLabel": {},

"possiblySensitive": false,

"pinnedTweetIds": [

"1966454537922793979"

],

"profile_bio": {

"description": "Postdoc @MilaNLProc / Incoming Postdoc @StanfordNLP @StanfordAILab / Prev: @UZH_en @MPI_IS @CarnegieMellon. CompSocSci, LLMs, algorithmic fairness.",

"entities": {

"description": {

"user_mentions": [

{

"id_str": "0",

"indices": [

8,

19

],

"name": "",

"screen_name": "MilaNLProc"

},

{

"id_str": "0",

"indices": [

39,

51

],

"name": "",

"screen_name": "StanfordNLP"

},

{

"id_str": "0",

"indices": [

52,

66

],

"name": "",

"screen_name": "StanfordAILab"

},

{

"id_str": "0",

"indices": [

75,

82

],

"name": "",

"screen_name": "UZH_en"

},

{

"id_str": "0",

"indices": [

83,

90

],

"name": "",

"screen_name": "MPI_IS"

},

{

"id_str": "0",

"indices": [

91,

106

],

"name": "",

"screen_name": "CarnegieMellon"

}

]

},

"url": {

"urls": [

{

"display_url": "joebaumann.org",

"expanded_url": "https://joebaumann.org/",

"indices": [

0,

23

],

"url": "https://t.co/s1hsoTGRud"

}

]

}

}

},

"isAutomated": false,

"automatedBy": null

},

"extendedEntities": {

"media": [

{

"allow_download_status": {

"allow_download": true

},

"display_url": "pic.twitter.com/Rc9DflNMyD",

"expanded_url": "https://twitter.com/joabaum/status/1966454537922793979/photo/1",

"ext_alt_text": "We present our new preprint titled \"Large Language Model Hacking: Quantifying the Hidden Risks of Using LLMs for Text Annotation\".\nWe quantify LLM hacking risk through systematic replication of 37 computational social science annotation tasks.\nWe use a combined set of 2,361 realistic hypotheses that researchers might test using these annotations.\nThen, we collect 13 million LLM annotations across plausible LLM configurations.\nThe annotations feed into 1.4 million regressions testing the hypotheses. \nFor a hypothesis with no true effect ($p > 0.05$), different LLM configs yield conflicting conclusions.\nCheckmarks indicate correct conclusions matching ground truth; crosses indicate LLM hacking -- incorrect conclusions due to annotation errors.\nLLM hacking occurs in 31-50\\% of cases even with highly capable models.\nSince minor configuration changes can flip scientific conclusions, from correct to incorrect, LLM hacking can be exploited to present anything as statistically significant.",

"ext_media_availability": {

"status": "Available"

},

"features": {

"large": {},

"orig": {}

},

"id_str": "1966451933981372416",

"indices": [

161,

184

],

"media_key": "3_1966451933981372416",

"media_results": {

"id": "QXBpTWVkaWFSZXN1bHRzOgwAAQoAARtKPZ2A1vAACgACG0o/+8fX0fsAAA==",

"result": {

"__typename": "ApiMedia",

"id": "QXBpTWVkaWE6DAABCgABG0o9nYDW8AAKAAIbSj/7x9fR+wAA",

"media_key": "3_1966451933981372416"

}

},

"media_url_https": "https://pbs.twimg.com/media/G0o9nYDW8AAxPZG.jpg",

"original_info": {

"focus_rects": [

{

"h": 1300,

"w": 2321,

"x": 54,

"y": 0

},

{

"h": 1300,

"w": 1300,

"x": 1071,

"y": 0

},

{

"h": 1300,

"w": 1140,

"x": 1151,

"y": 0

},

{

"h": 1300,

"w": 650,

"x": 1396,

"y": 0

},

{

"h": 1300,

"w": 2375,

"x": 0,

"y": 0

}

],

"height": 1300,

"width": 2375

},

"sizes": {

"large": {

"h": 1121,

"w": 2048

}

},

"type": "photo",

"url": "https://t.co/Rc9DflNMyD"

}

]

},

"card": null,

"place": {},

"entities": {

"urls": [

{

"display_url": "arxiv.org/pdf/2509.08825",

"expanded_url": "https://arxiv.org/pdf/2509.08825",

"indices": [

137,

160

],

"url": "https://t.co/24Fyb4Ik3v"

}

]

},

"quoted_tweet": null,

"retweeted_tweet": null,

"isLimitedReply": false,

"article": null

}
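
The image alt text above describes the core check: a hypothesis is tested once on ground-truth labels and once on LLM annotations, and a mismatch in the conclusion counts as LLM hacking. The sketch below illustrates that idea on made-up toy data. It is a simplification under stated assumptions: the preprint runs regressions, whereas this example swaps in a pooled two-proportion z-test so it needs only the standard library, and every name here (`two_proportion_p_value`, `conclusion`, `is_llm_hacking`, `config_a`, `config_b`) is hypothetical and not taken from the paper.

```python
import math


def two_proportion_p_value(pos_a, n_a, pos_b, n_b):
    """Two-sided p-value of a pooled two-proportion z-test (stand-in for a regression)."""
    p_pool = (pos_a + pos_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # all labels identical: no detectable difference
    z = (pos_a / n_a - pos_b / n_b) / se
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


def conclusion(labels, groups, alpha=0.05):
    """True if the positive-label rate differs significantly between group 0 and group 1."""
    pos_a = sum(l for l, g in zip(labels, groups) if g == 0)
    pos_b = sum(l for l, g in zip(labels, groups) if g == 1)
    n_a = sum(1 for g in groups if g == 0)
    n_b = sum(1 for g in groups if g == 1)
    return two_proportion_p_value(pos_a, n_a, pos_b, n_b) < alpha


def is_llm_hacking(true_labels, llm_labels, groups, alpha=0.05):
    """True if the LLM-annotated data leads to a different conclusion than ground truth."""
    return conclusion(true_labels, groups, alpha) != conclusion(llm_labels, groups, alpha)


# Toy data (invented for illustration): 100 texts per group, no true effect.
groups = [0] * 100 + [1] * 100
true_labels = [1] * 50 + [0] * 50 + [1] * 50 + [0] * 50

# Hypothetical config A reproduces the ground truth exactly.
config_a = list(true_labels)
# Hypothetical config B mislabels 20 negatives in group 1 as positives.
config_b = [1] * 50 + [0] * 50 + [1] * 70 + [0] * 30

print(is_llm_hacking(true_labels, config_a, groups))  # False
print(is_llm_hacking(true_labels, config_b, groups))  # True
```

In this toy setup, the second configuration's annotation errors are concentrated in one group, which is enough to turn a null result into a "significant" one. That mirrors the claim in the alt text that minor configuration changes can flip a conclusion from correct to incorrect.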