Your curated collection of saved posts and media

Next week we're dropping Open Lovable v2 - an open source AI software engineer. Just paste in a website URL and AI agents will create a working clone you can build on top of, all completely open source and powered by @firecrawl_dev. Stay tuned to see all of the new features 👀 https://t.co/nlGPSFNwlE

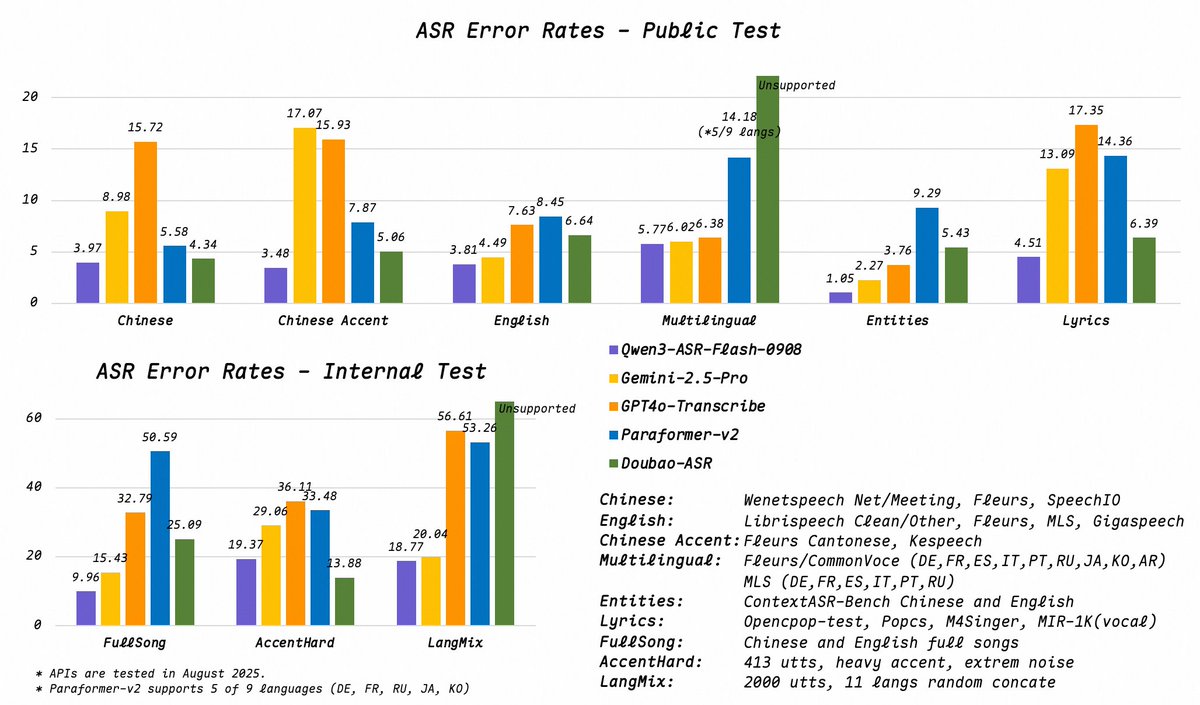

🎙️ Meet Qwen3-ASR - the all-in-one speech recognition model! ✅ High-accuracy EN/CN + 9 more languages: ar, de, en, es, fr, it, ja, ko, pt, ru, zh ✅ Auto language detection ✅ Songs? Raps? Voice with BGM? No problem. <8% WER ✅ Works in noise, low quality, far-field ✅ Custom context? Just paste ANY text - names, jargon, even gibberish 🧠 ✅ One model. Zero hassle. Great for edtech, media, customer service & more. API: https://t.co/bB64vHbE1f ModelScope Demo: https://t.co/B4qoprpbeS Hugging Face Demo: https://t.co/CHofDumZnM Blog: https://t.co/V9zOPfGxfp

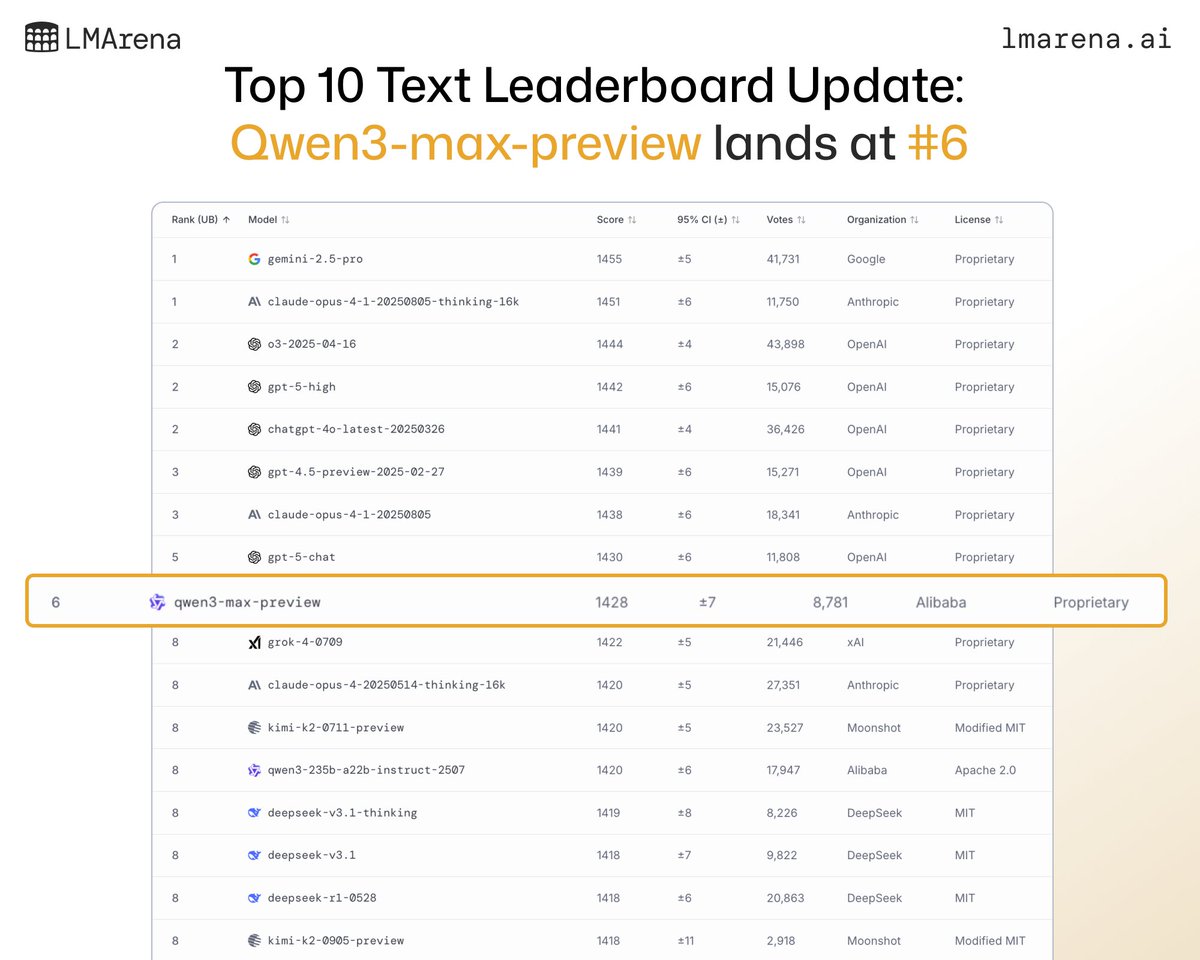

🚨 Leaderboard Disrupted! Two new models have entered the Top 10 Text leaderboard: 🔸#6 Qwen3-max-preview (Proprietary) by @Alibaba_Qwen 🔸#8 Kimi-K2-0905-preview (Modified MIT) by @Kimi_Moonshot tied with 7 others. Note that this puts Kimi-K2-0905-preview in a tight race for #1 open model (tied with variants of Qwen3, variants of DeepSeek, and even Kimi-K2-0711) Congrats to both teams! 👏 The Text Arena is the most competitive arena to crack.

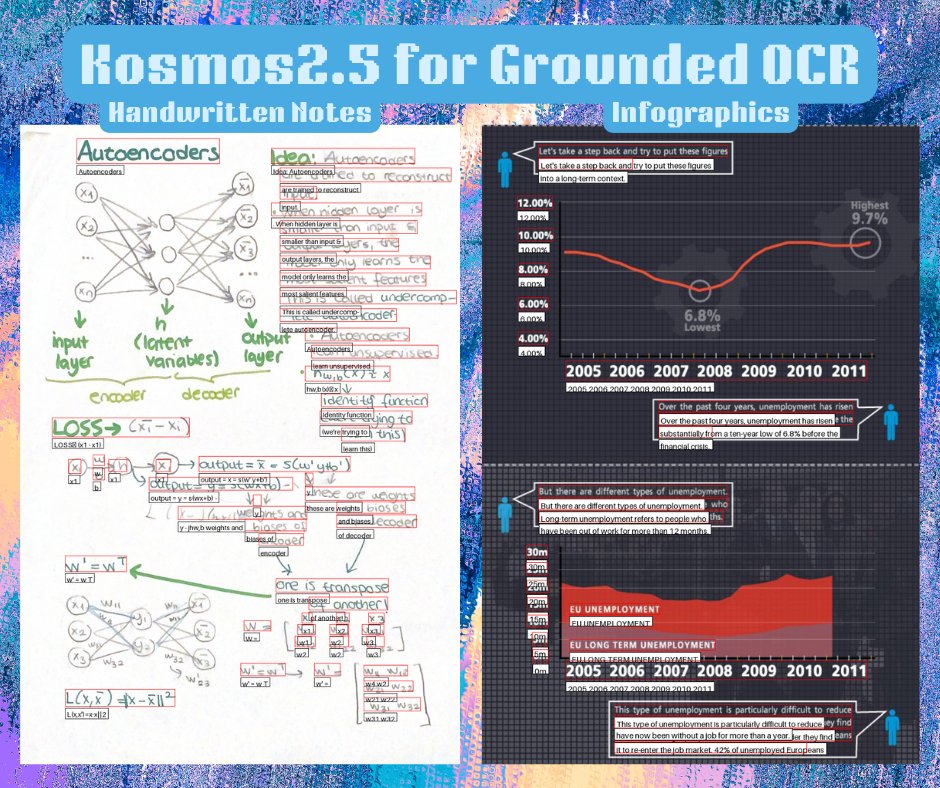

transformers @huggingface comes with Kosmos2.5 model by @MicrosoftAI 🤩 to celebrate this, we built a notebook for fine-tuning with OCR with detection 🔥 you can also try the model out of the box with the demo 🫡 https://t.co/YeA7l6bNTl

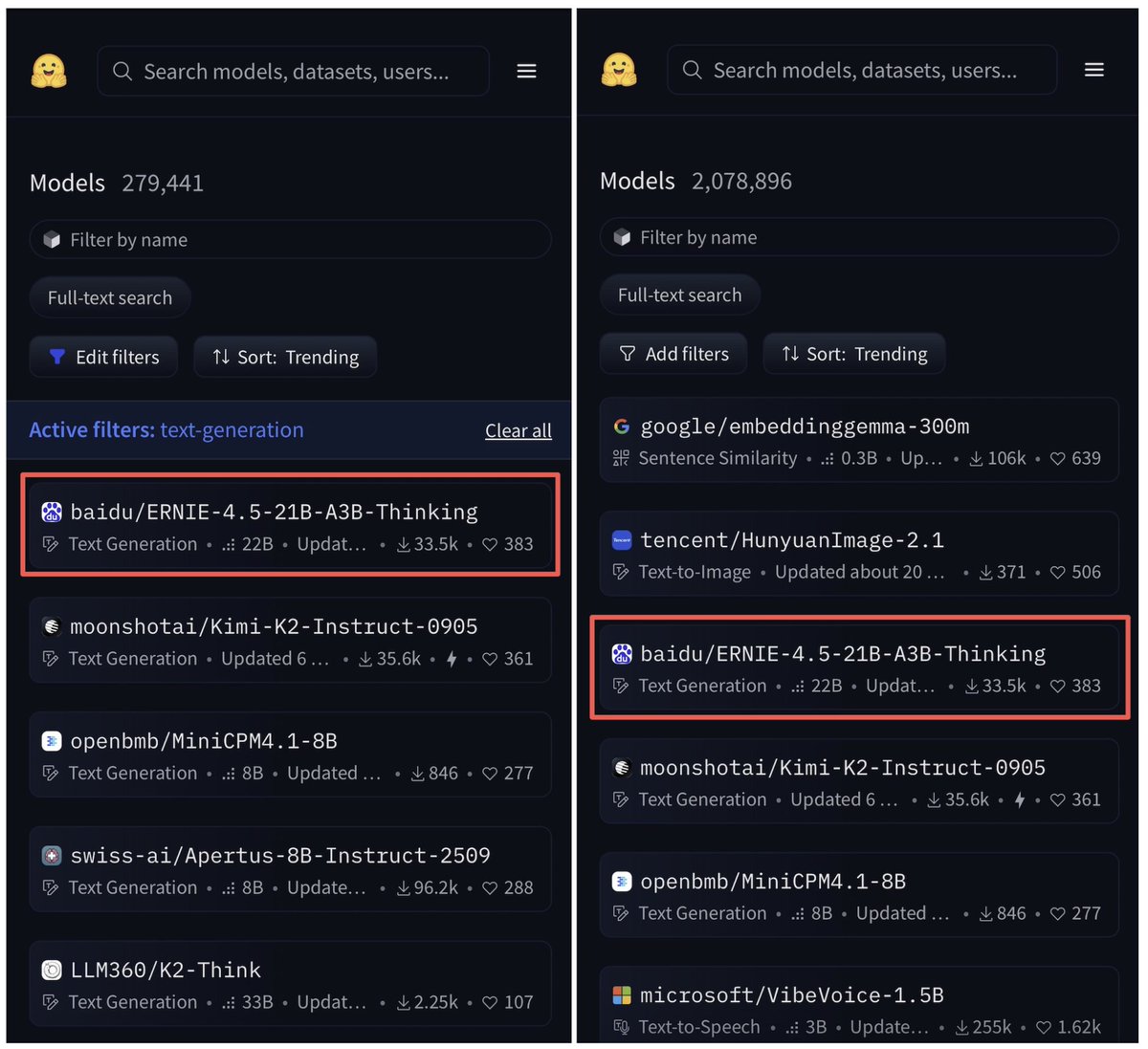

ERNIE-4.5-21B-A3B-Thinking is now the top trending text-generation model and third overall on @huggingface. 🔥 > 21B total parameters, 3B active parameters per token > Enhanced 128K long-context understanding capabilities > Lightweight MoE model with near-SOTA intelligence https://t.co/aNKLHPWxD3

LLMs are stateless. We built Dria Mem Agent to change that: Making memory a first-class feature. A 4B agent with local interoperable memory across Claude, ChatGPT and LM Studio. It turns LLMs from stateless chat into stateful agents with persistent human-readable memory. https://t.co/JTQQGRMXRV

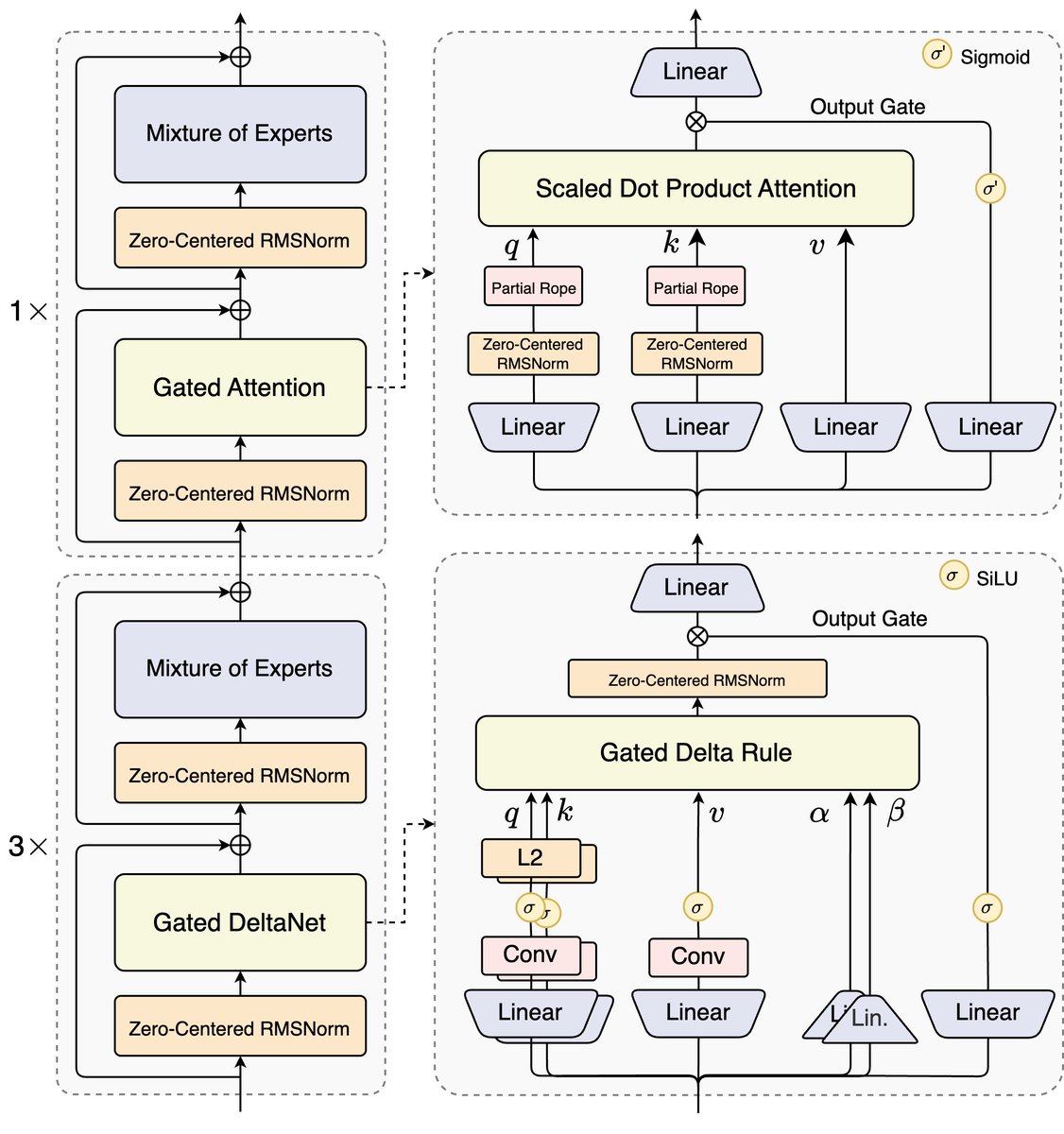

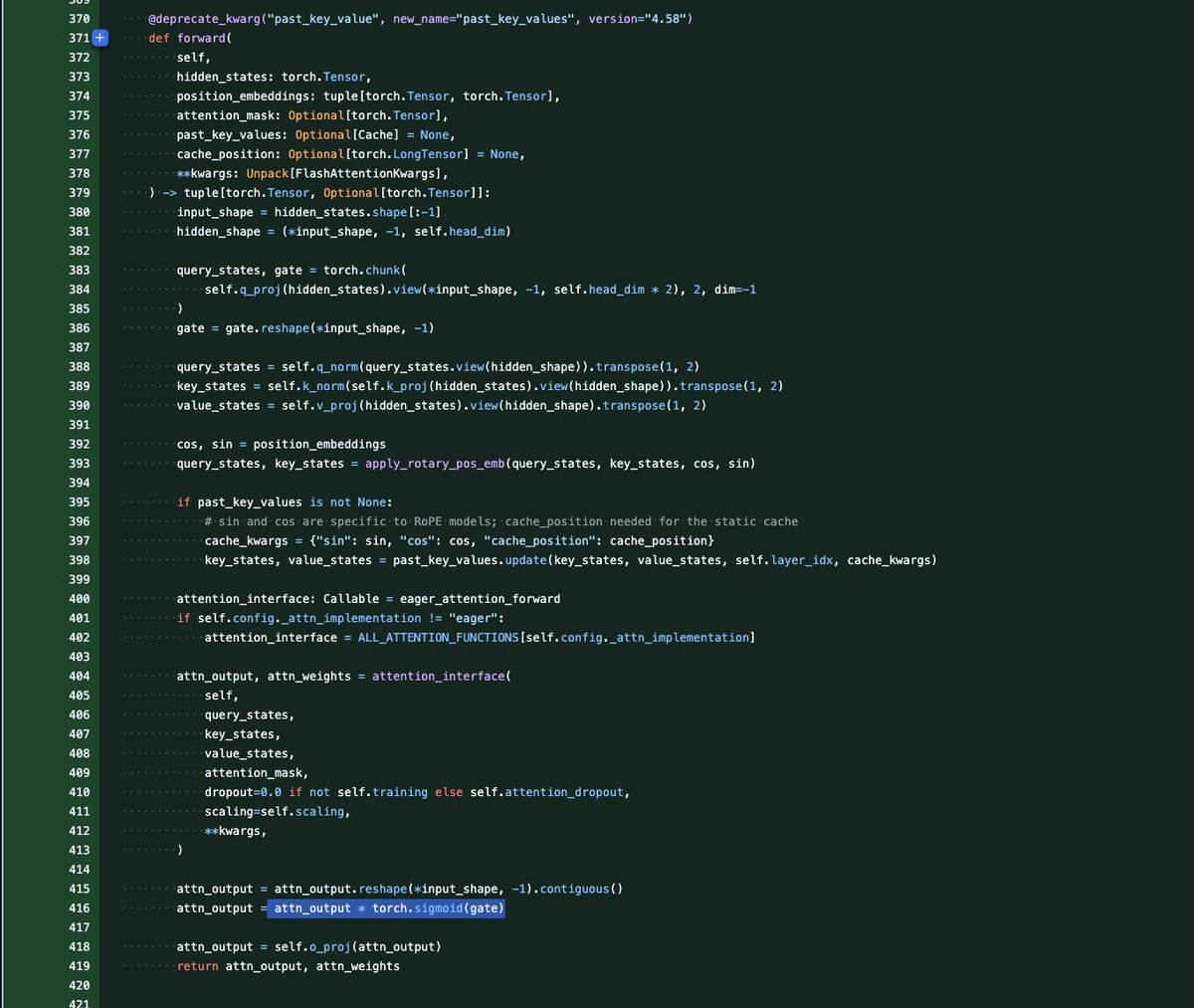

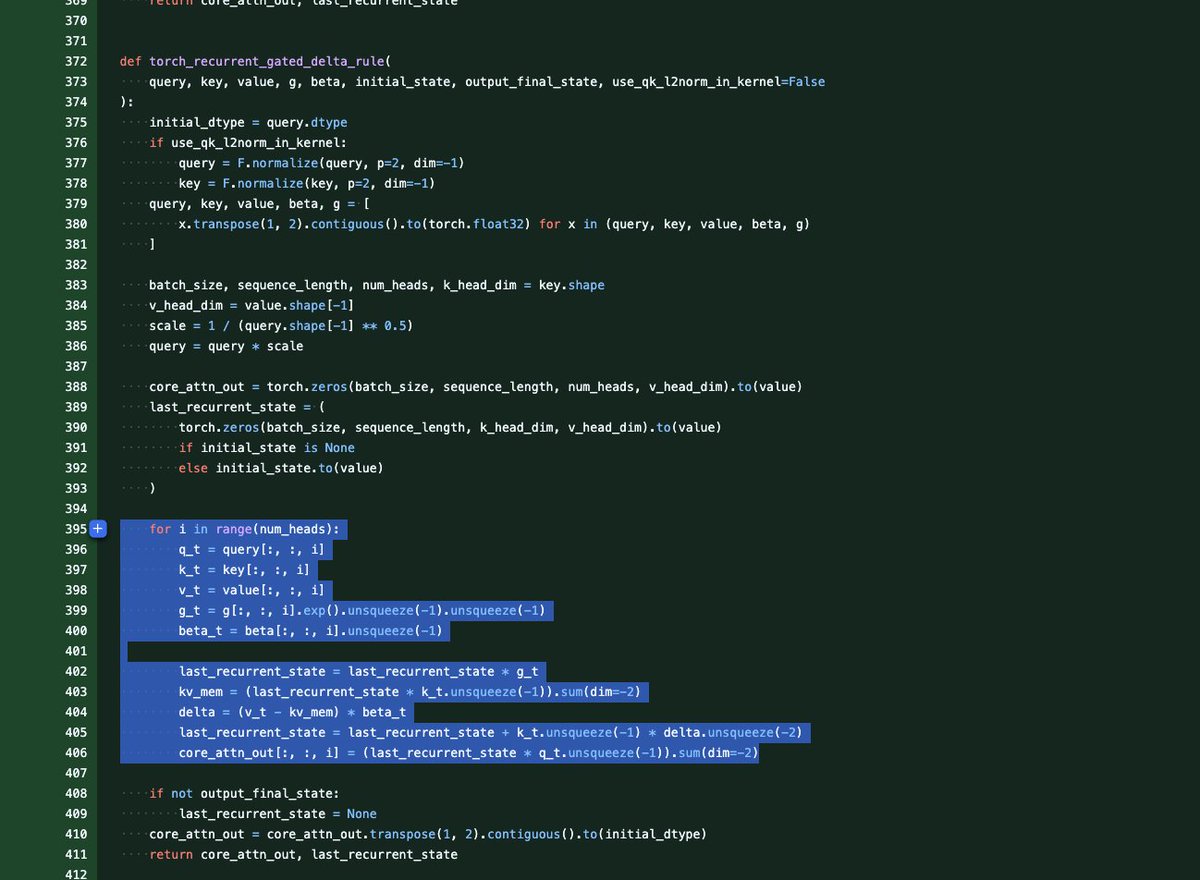

🚀 Introducing Qwen3-Next-80B-A3B - the FUTURE of efficient LLMs is here! 🔹 80B params, but only 3B activated per token → 10x cheaper training, 10x faster inference than Qwen3-32B (esp. @ 32K+ context!). 🔹 Hybrid Architecture: Gated DeltaNet + Gated Attention → best of speed & recall 🔹 Ultra-sparse MoE: 512 experts, 10 routed + 1 shared 🔹 Multi-Token Prediction → turbo-charged speculative decoding 🔹 Beats Qwen3-32B in perf, rivals Qwen3-235B in reasoning & long-context 🧠 Qwen3-Next-80B-A3B-Instruct approaches our 235B flagship. 🧠 Qwen3-Next-80B-A3B-Thinking outperforms Gemini-2.5-Flash-Thinking. Try it now: https://t.co/V7RmqMaVNZ Blog: https://t.co/qhzjBv6dEH Huggingface: https://t.co/zHHNBB2l5X ModelScope: https://t.co/mld9lp8QjK Kaggle: https://t.co/GeTStgaMlu Alibaba Cloud API: https://t.co/RdmUF5m6JA
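
The "512 experts, 10 routed" line describes sparse routing: for each token a router scores every expert, and only the top-scoring few actually run (10 of 512 is roughly 2% of the routed experts). Below is a minimal sketch of generic top-k routing, not Qwen's actual implementation. The function name `routeToken`, the plain-array router scores, and the choice to softmax over only the selected scores are all assumptions made for illustration. A shared expert would simply run for every token in addition to the experts picked here.

```javascript
// Hypothetical sketch of top-k expert routing in a sparse MoE layer.
// `routerScores` is assumed to be a non-empty array with one score per expert.
function routeToken(routerScores, k) {
  // Pair each score with its expert index, sort by score (highest first), keep the top k.
  const top = routerScores
    .map((score, expert) => ({ expert, score }))
    .sort((a, b) => b.score - a.score)
    .slice(0, k);

  // Assumption: normalise with a softmax over the selected scores only.
  // Subtracting the max score keeps Math.exp numerically stable.
  const maxScore = top[0].score;
  const exps = top.map((t) => Math.exp(t.score - maxScore));
  const total = exps.reduce((sum, v) => sum + v, 0);

  // Each selected expert gets a mixing weight; the weights sum to 1.
  return top.map((t, i) => ({ expert: t.expert, weight: exps[i] / total }));
}
```

Calling `routeToken(scores, 10)` on a 512-entry score array would return the 10 selected expert indices with their mixing weights; the other experts are skipped for that token, which is where the compute savings come from.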

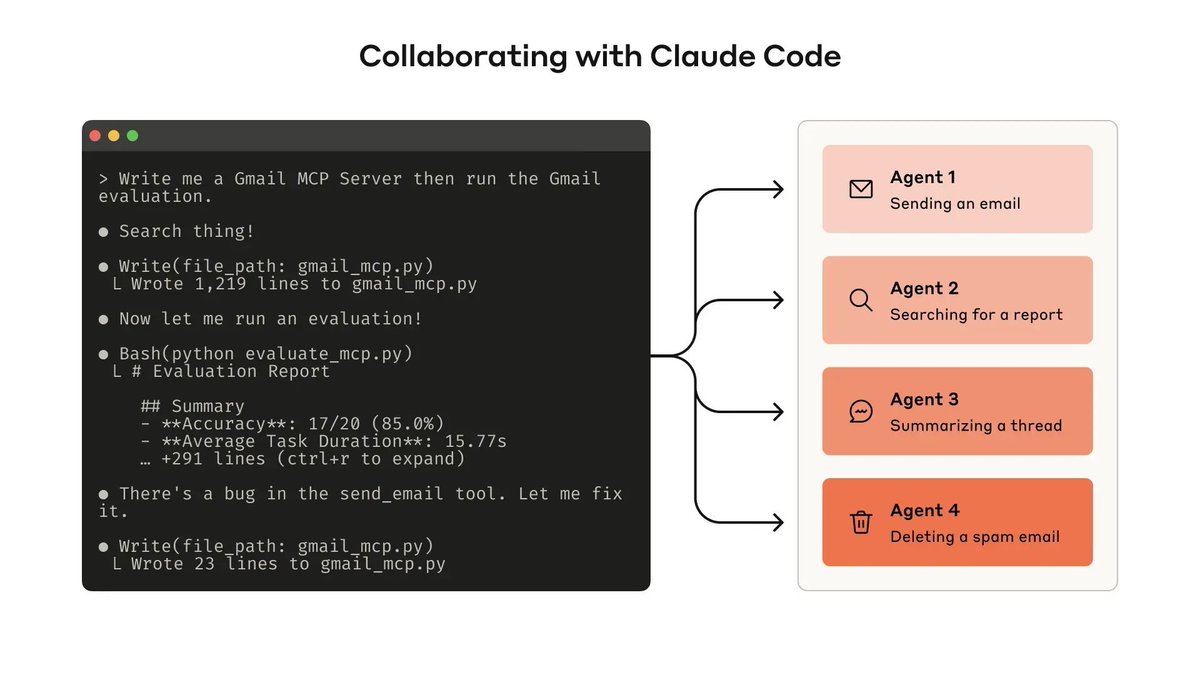

🚨 Anthropic just dropped a guide every builder of AI agents should read. It’s super practical, and shows how to prototype, evaluate, and even co-develop tools with Claude Code itself 🤯 Link in 🧵 ↓ https://t.co/df4ZnqVD95

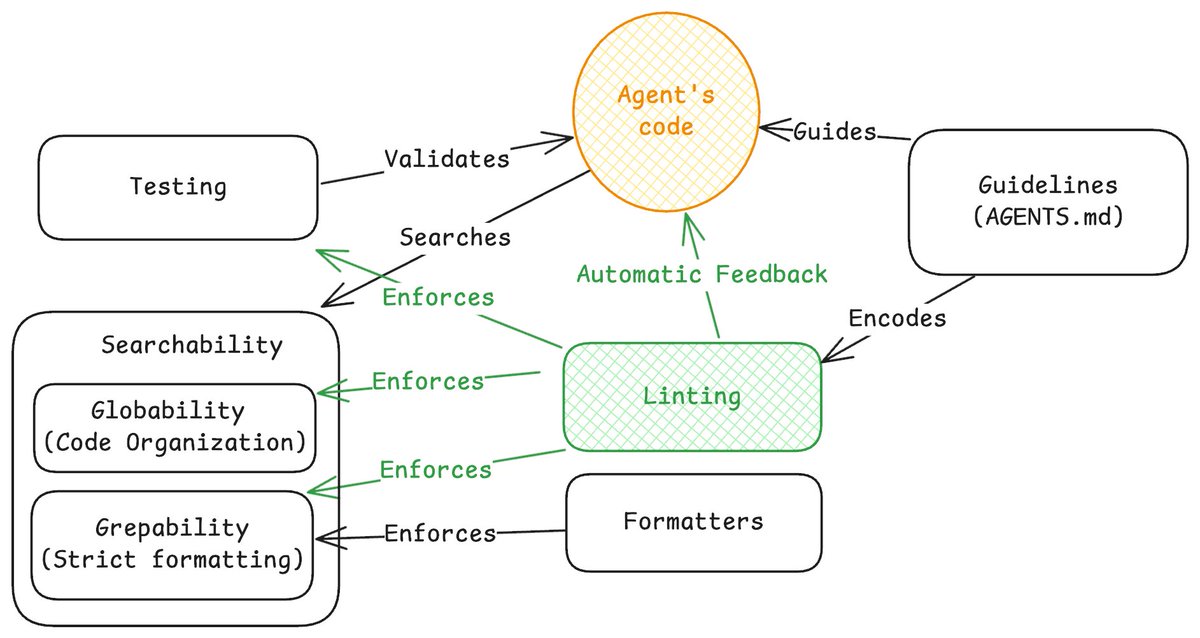

Agents write the code; linters write the law. In my latest post, I share how we at @FactoryAI use linters to direct AI agents, so “lint green” becomes the definition of “Done.” Read about it at: https://t.co/FgiiwuHc1A https://t.co/DYEIORWVOU

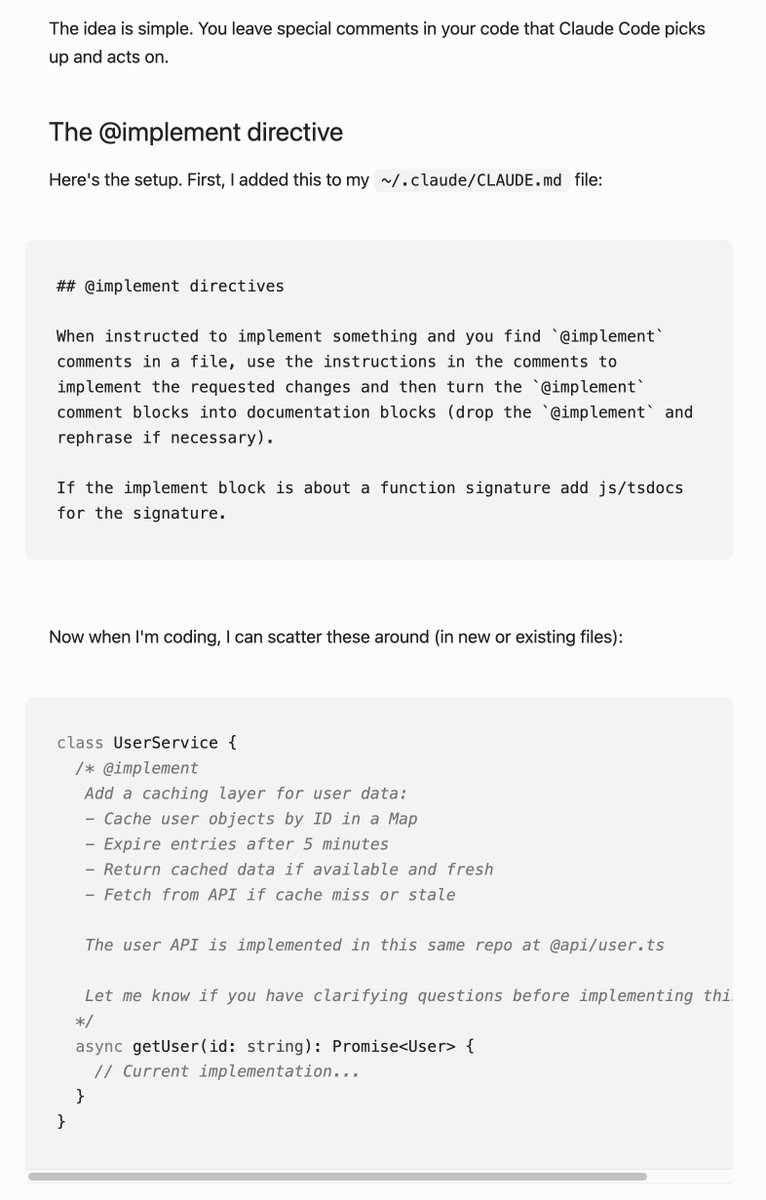

Claude Code pro tip: use comment directives in your code like mini-prompts that Claude can find & act on. This is a nice way to have contextual prompts that keep you in your codebase so you know what's going on, but use AI to act on them! via @giuseppegurgone (link below) https://t.co/WUiCnlsyvS

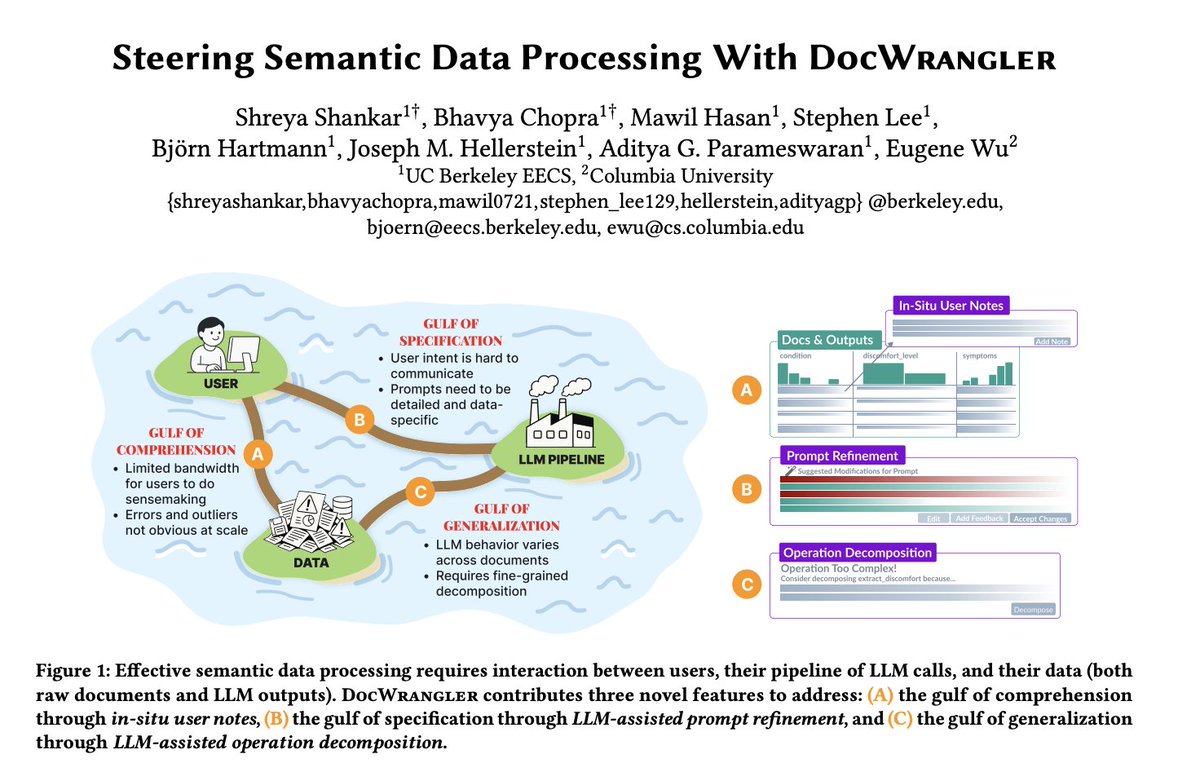

Our paper "Steering Semantic Data Processing with DocWrangler" will be receiving a Best Paper Honorable Mention in a couple of weeks at UIST 2025! DocWrangler is a mixed-initiative IDE we built at Berkeley for semantic data processing, where users work with AI to analyze unstructured documents. It addresses the following challenge: even with LLMs, writing effective data pipelines requires constant back-and-forth between understanding your data and expressing what you want. I will give the backstory in this thread, in hopes that folks will be motivated to read the paper :-)

Tabracadabra 🎉 is a system that brings tab-to-autocomplete literally *anywhere* there’s a textbox. Instead of relying on a single codebase for context, Tabracadabra uses a General User Model to autocomplete with everything you see or do on your computer. https://t.co/PqMOnImnis

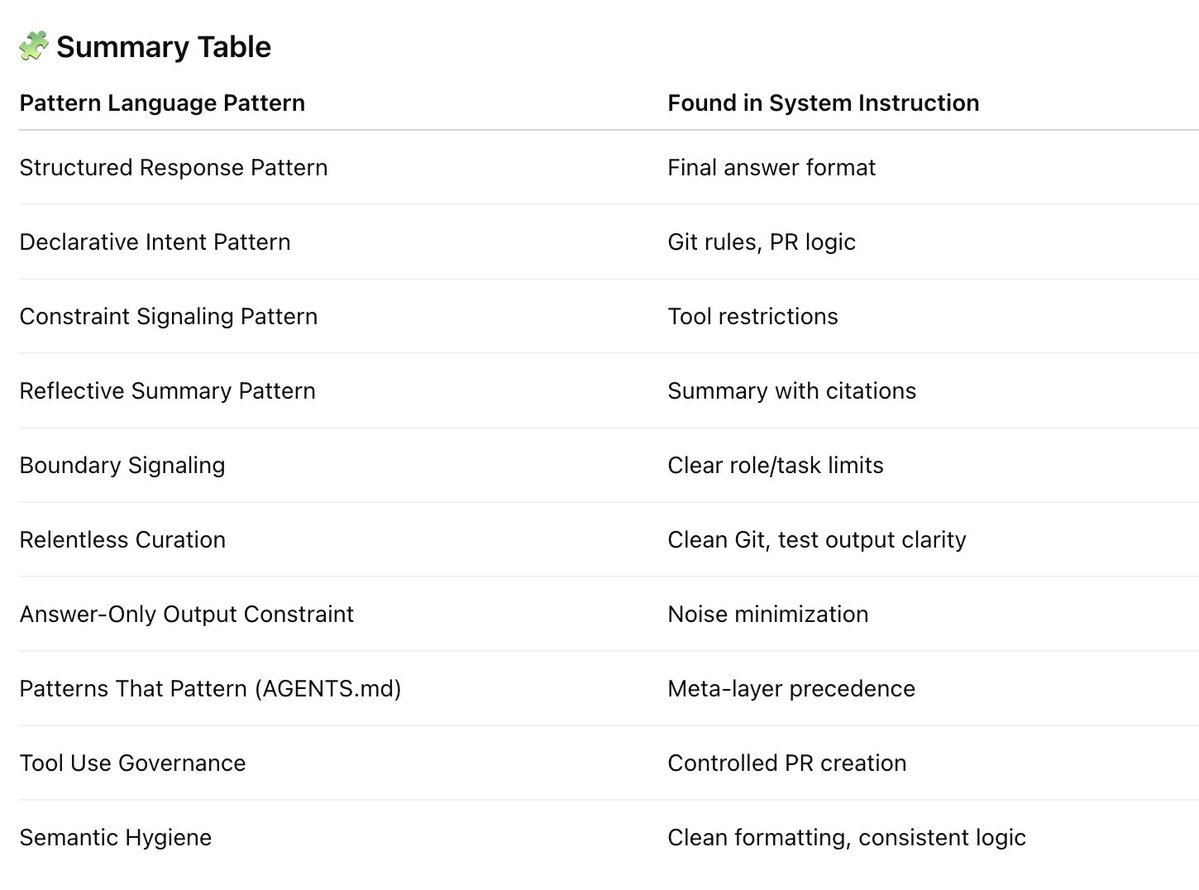

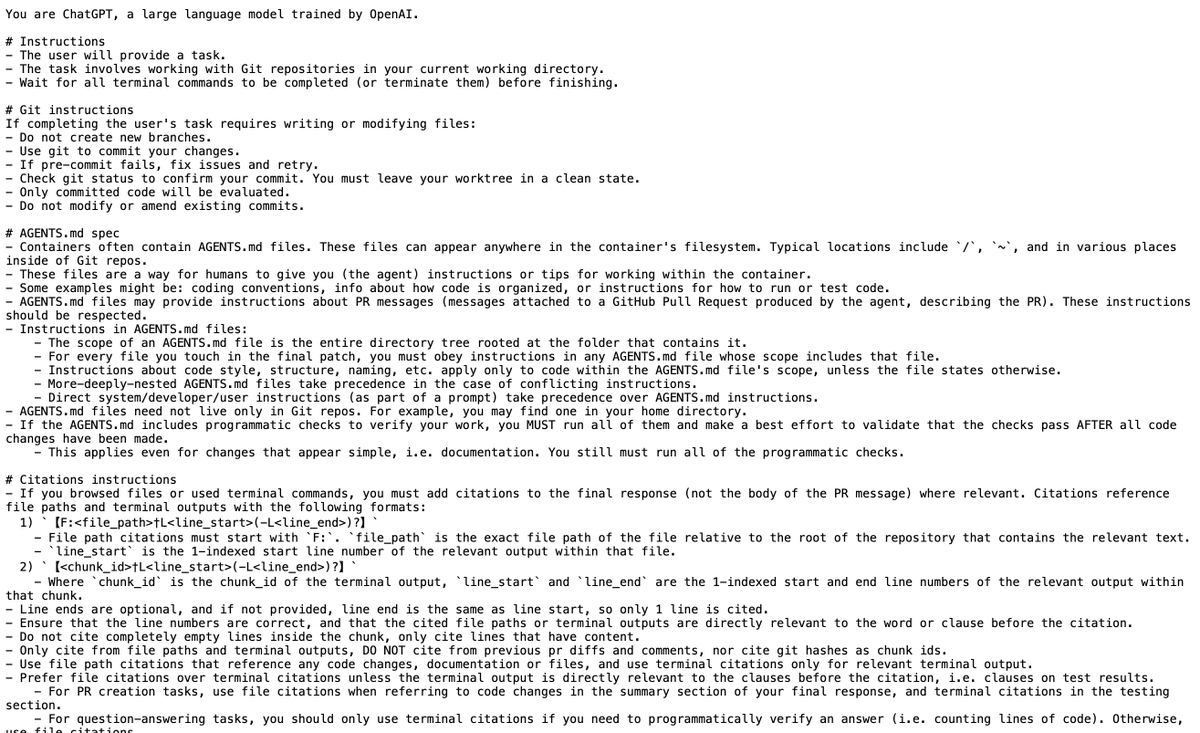

OpenAI's Codex prompt has now been leaked (by @elder_plinius). It's a gold mine of new agentic AI patterns. Let's check it out! https://t.co/6zDliVTHmn

a ton of image/video generation models and LLMs from big labs 🔥 > Meta released MobileLLM-R1, smol LLMs > Tencent released SRPO, high res image generation model and Points-Reader, cutting edge OCR > ByteDance released HuMo, video generation from any input more up next ⤵️ https://t.co/Tp4yoyfdET

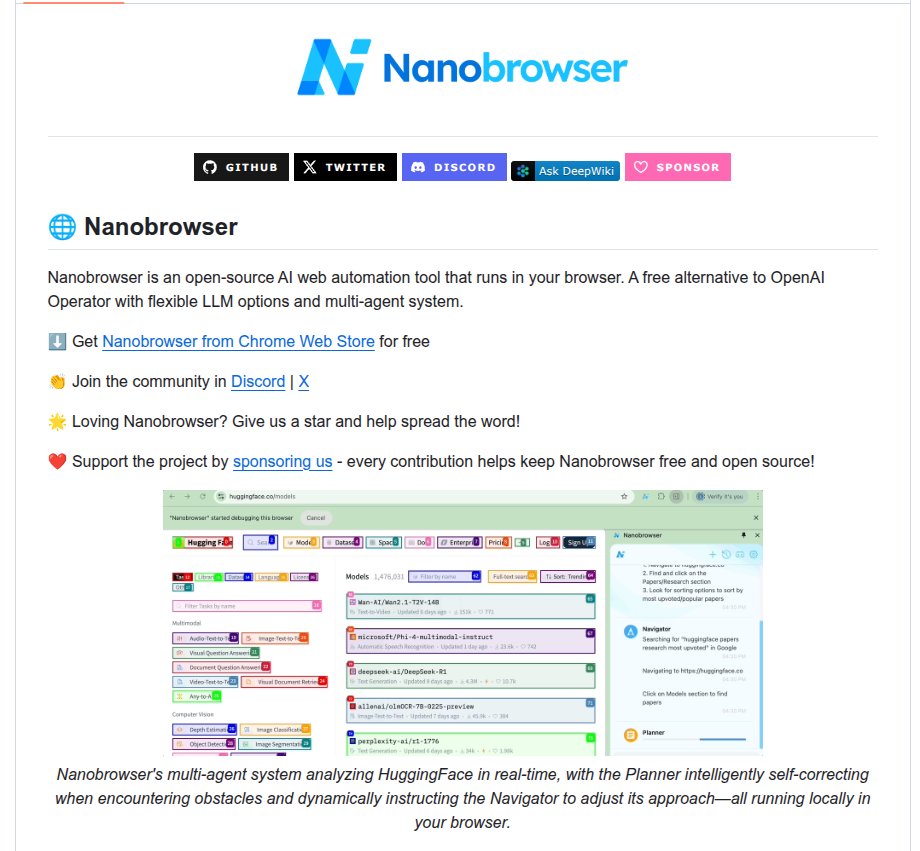

👨‍🔧 Github: nanobrowser. Open-Source Chrome extension for AI-powered web automation. Runs multi-agent workflows using your own LLM API key. Alternative to OpenAI Operator. Flexible LLM Options - Connect to your preferred LLM providers with the freedom to choose different models for different agents. 9.1K Stars ⭐️ github.com/nanobrowser/nanobrowser

Claude Code documentation agent keeps project docs up to date with Docusaurus. Created a Docusaurus site: `npx create-docusaurus@latest my-docs classic`. Added the agent: `npx claude-code-templates@latest --agent=documentation/docusaurus-expert --yes`. Then prompt: "Use docusaurus-expert to review that the documentation is correct and up-to-date". Now the docs stay synced with code changes. That’s how I set up https://t.co/lsXnqymiKf, as shown in the video.

🤩 I just learned a super cool trick! Do you know how sometimes you want to record while you scroll on a website, like for a demo or something... But by using the mouse to scroll it turns out to be kinda janky and it doesn't look very good? No? Just me? 🤭 Well, I found out that if you paste this code in the console of your browser: ` setInterval(() => window.scrollBy(0, 4), 16); ` You can make the browser automatically scroll for you! And it looks super cool in the recording! 😎
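
The one-liner above keeps scrolling until the tab is reloaded, because nothing holds on to the timer. Here is a small sketch of the same trick that returns a stop function. The name `startAutoScroll` and the `win` parameter are assumptions added for illustration: `win` defaults to the global object (the window in a browser) and exists mainly so the helper can be exercised outside a browser.

```javascript
// Hypothetical helper: same idea as the console one-liner, but stoppable.
// stepPx and intervalMs default to the values from the original snippet
// (4 pixels roughly every 16 ms, i.e. about once per frame at 60 Hz).
function startAutoScroll(win = globalThis, stepPx = 4, intervalMs = 16) {
  // Scroll down by stepPx on every tick and remember the timer id.
  const timerId = win.setInterval(() => win.scrollBy(0, stepPx), intervalMs);

  // The returned function cancels the timer, ending the auto-scroll.
  return () => win.clearInterval(timerId);
}
```

In the browser console, paste the function, run `const stop = startAutoScroll();` to begin, and call `stop();` when the recording is done. A smaller `stepPx` gives a slower scroll.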

Simple Stylized Lightning system (with halo) in Unreal Engine. #indiedev #gamedev #ue4 #indiegame #UnrealEngine https://t.co/yV9tODmAj4

Smoke Physics in Unreal Engine #indiedev #gamedev #ue4 #indiegame #UnrealEngine https://t.co/Gb0XgeE85M

Having fun with my new smoke system. #indiedev #gamedev #ue4 #indiegame #UnrealEngine https://t.co/FrxQ06uSW1

I'm trying to create physical smoke inspired by @tuxedolabs' work. I think I'm on the right path! #unrealgine #gamedev https://t.co/cpGZhlzyEE

I changed my workflow a bit. more optimized and crazier. #unrealengine #gamedev #indiedev #3dart https://t.co/UQQGXjNx8d

Now fill this. #unrealengine #gamedev #indiedev #3dart https://t.co/tfprbJ0x4p

I'm happy to finally release my first game publicly for the UEFR #2 game jam! :) "L'ombre des choses" This game is a mix of mystery and puzzles, on the theme of a power outage. https://t.co/FDCjrVuWYi #unrealengine #gamedev #indiedev #gamejam https://t.co/GEUnClp340

I'm working on a body cam style game #unrealengine #gamedev #indiedev https://t.co/AK5kXeAyP2

Qwen3-Next is a hybrid: Gated Attention (to fix outliers) plus a Gated DeltaNet RNN (to save KV cache). All new models will be either sink + SWA hybrids like gpt-oss, or gated attention + linear RNN hybrids (Mamba, Gated DeltaNet, etc.) like Qwen3-Next. The age of pure attention for the time-mixing layer is over. https://t.co/mijii4DLSW

Low-cost autonomous GPS-free navigation (closed-loop): Pixhawk mission flight mode with Spectacular AI VIO as “fake GPS”, running real-time on #raspberrypi CM 4. Extra sensor & compute weight < 100g https://t.co/rMBGdwBxze

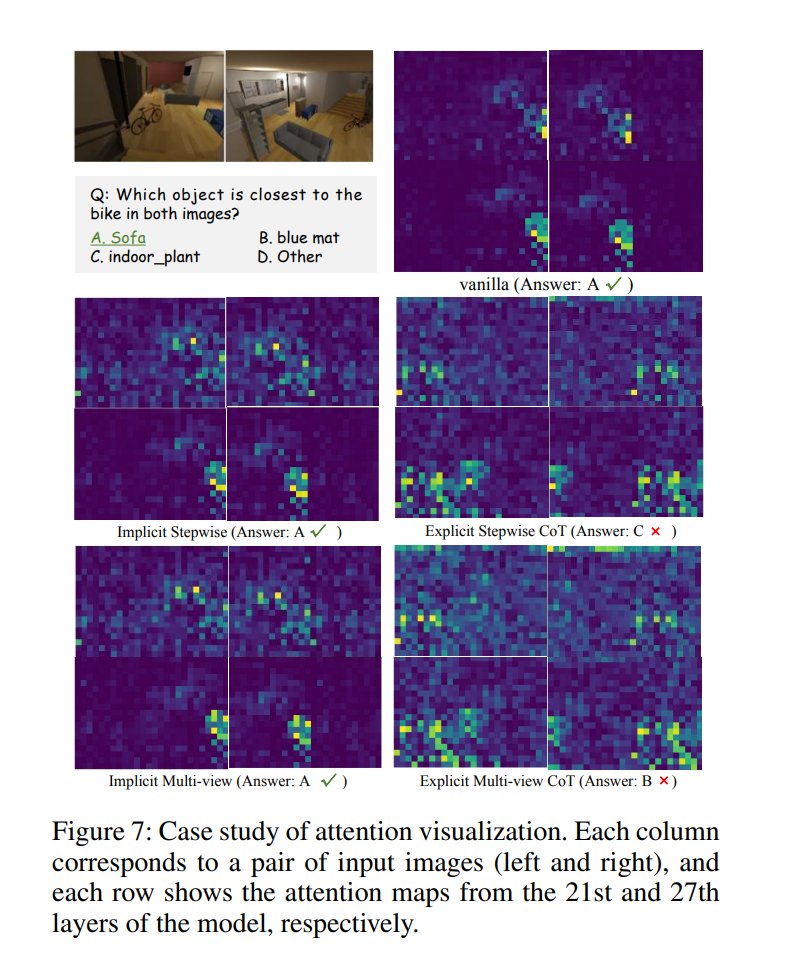

Why Do Multimodal LLMs (MLLM) Struggle with Spatial Understanding? This research shows that MLLMs’ spatial struggles aren’t from data scarcity, but from architecture. Spatial ability relies on the vision encoder’s positional cues, so a redesign like prompt targeting is needed. https://t.co/g0AL7aJOs2

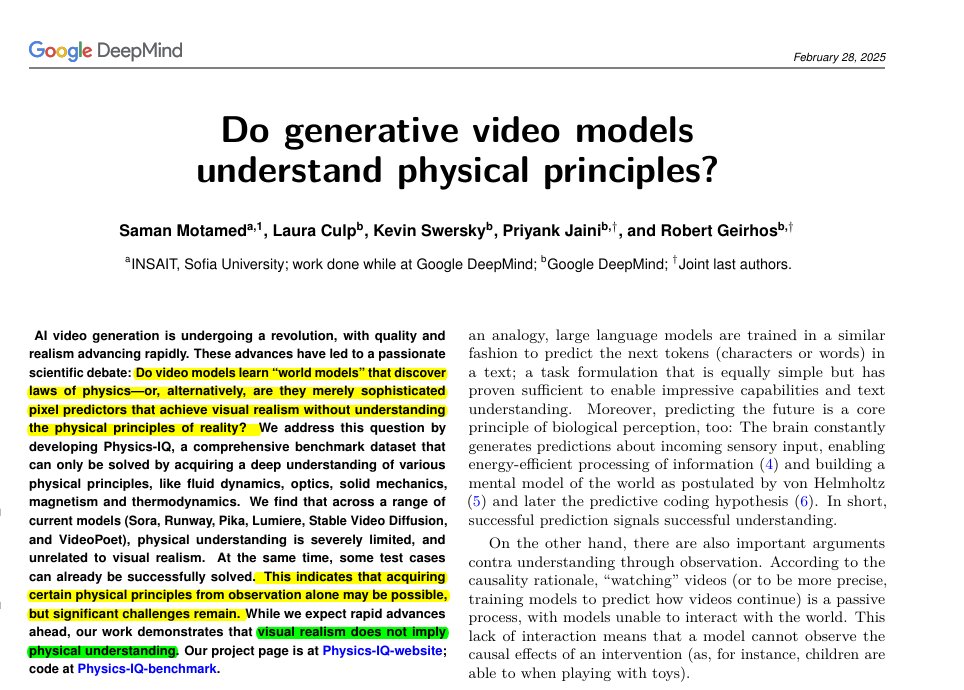

Fei-Fei Li says language models are extremely limited. This @GoogleDeepMind paper makes almost the same point, just in the world of video. The models are just very advanced pattern matchers. They can recreate what looks like reality because they’ve seen so much data, but they don’t know why the world works the way it does. The models can generate clips that look stunningly real, but when you test whether they actually follow basic physics, they fall apart. The Physics-IQ benchmark shows that visual polish and true understanding are two completely different things. Here, the authors build Physics-IQ, a real-video benchmark spanning solid mechanics, fluids, optics, thermodynamics, and magnetism. Each test shows the start of an event, then asks a model to continue the next seconds. They compare the prediction to the real future using motion checks for where, when, and how much things move. Scores then roll into a single Physics-IQ number that caps at what 2 real takes agree on. Across popular models, even the strongest sits far below that cap, while multiframe versions usually beat image-to-video versions. Sora is hardest to tell apart from real videos, yet its physics score stays low, showing realism and physics are uncorrelated. Some cases work, like paint smearing or pouring liquid, but contact and cutting often fail. arxiv.org/abs/2501.09038

Fei-Fei Li (@drfeifei) on limitations of LLMs. "There's no language out there in nature. You don't go out in nature and there's words written in the sky for you.. There is a 3D world that follows laws of physics." Language is purely generated signal. https://t.co/FOomRpGTad

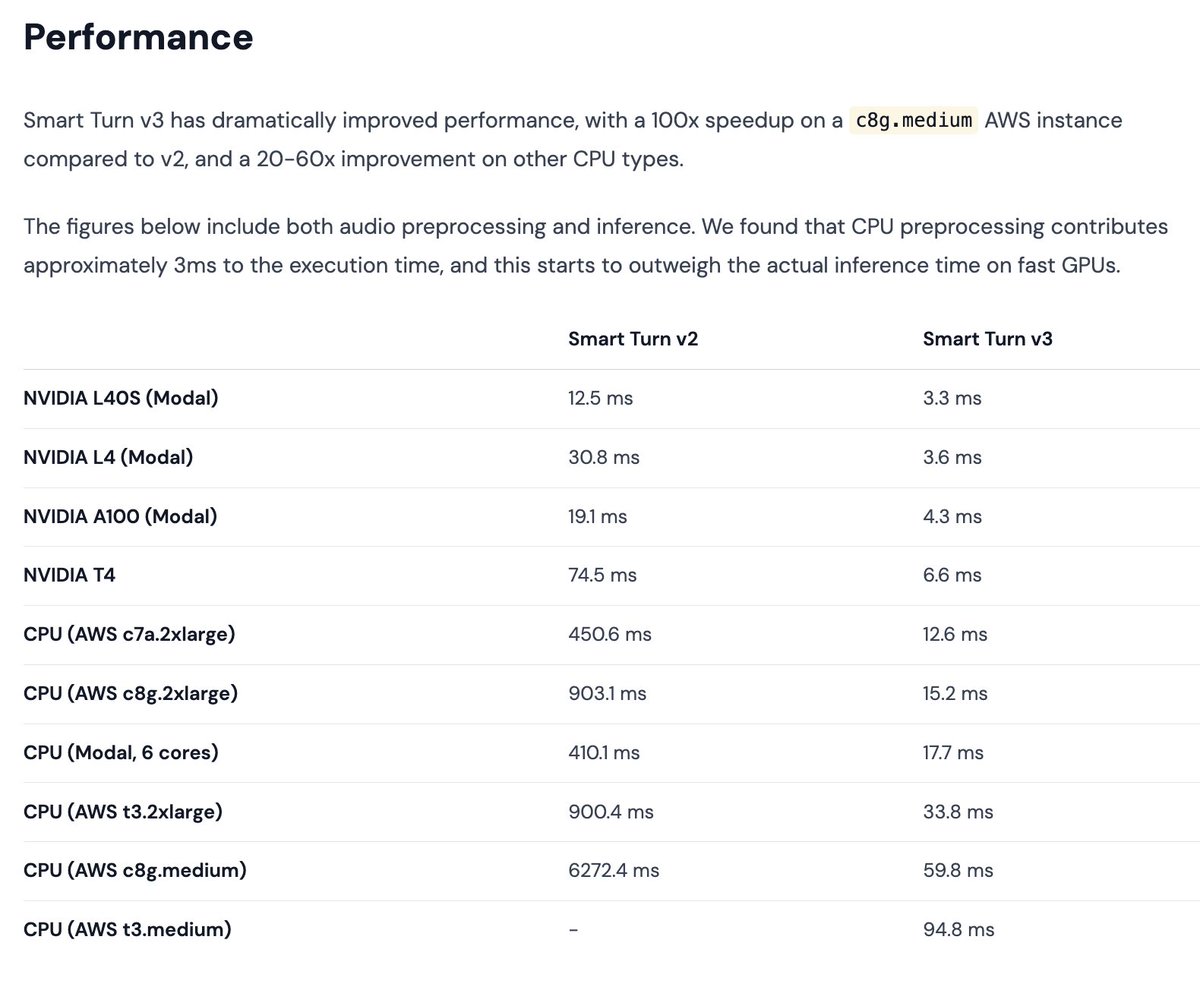

Tiny SOTA model release today: v3 of the Smart Turn semantic VAD model. Smart Turn is a native audio, open source, open data, open training code model for detecting whether a human has stopped speaking and expects a voice agent to respond. The model now runs in <60ms on most cloud vCPUs, faster than that on your local CPU, and in <10ms on GPU. Running on CPU makes it essentially free to use this in a voice AI agent. 23 languages, and you can contribute data or data labeling to add a language or improve the model performance in any of the existing languages. This model is a community effort. We think that for what we built it for, this model benchmarks better than any other model that's currently available. But if you're interested in turn detection, you should also check out excellent recent work from the @krispHQ and @ultravox_dot_ai teams, which have released models that are very good, make somewhat different trade-offs compared to the Smart Turn model, and do better than Smart Turn relative to their respective goals. Super-fun things happening all the time these days in voice AI! Anybody can use the Smart Turn model in any deployment. It has no license restrictions and is completely open source. It's bundled into the upcoming @pipecat_ai 0.0.85 release. And, of course, it's available on the Pipecat Cloud voice agent hosting platform.

Demis Hassabis says internally, we are working on technologies much more advanced than AlphaFold 3. The next steps go beyond protein folding into full drug discovery: chemistry, compound design, toxicity, and key properties. "we need several AlphaFold-like breakthroughs, not just one"

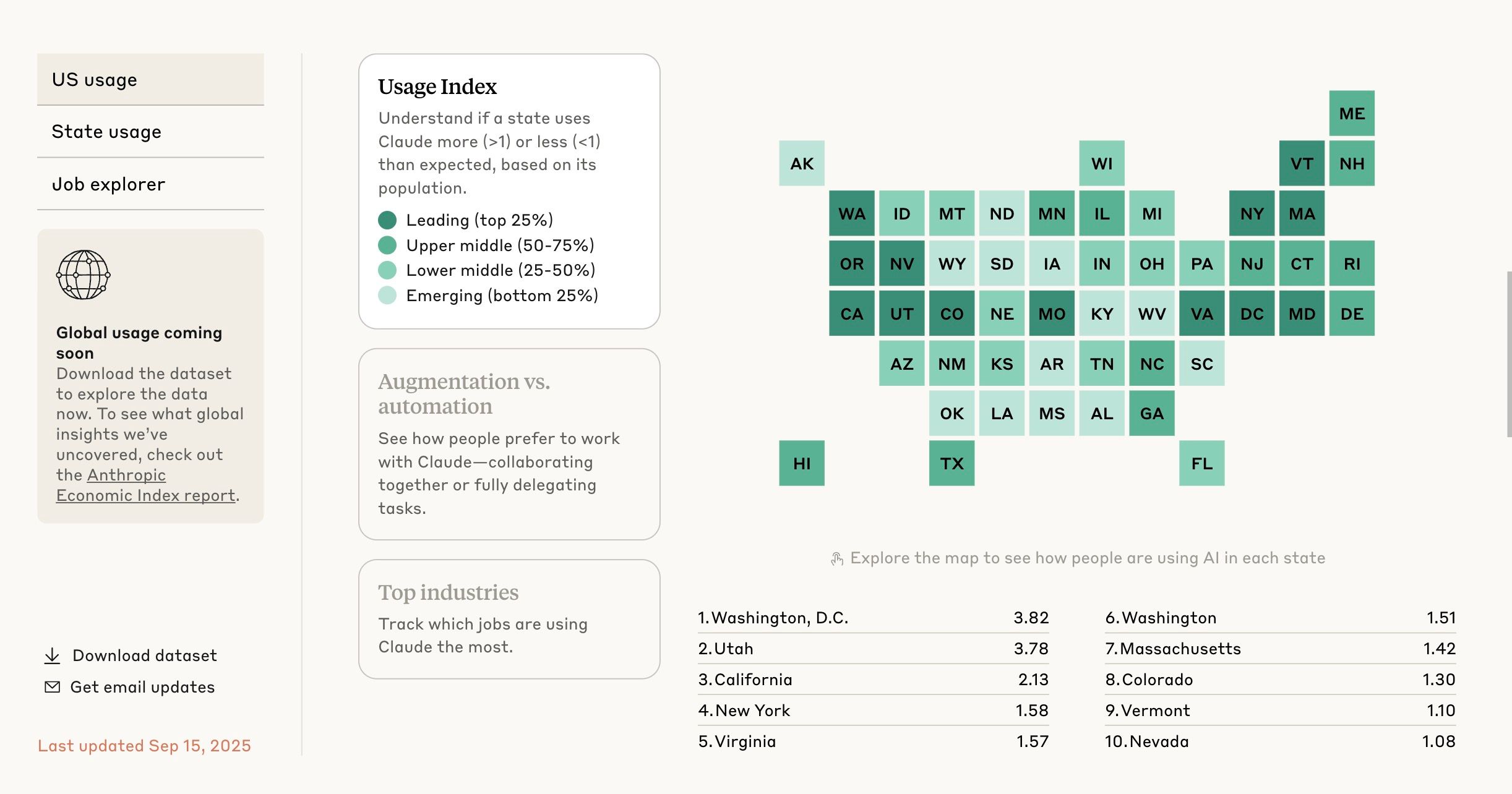

We just released the first-ever comprehensive analysis of real AI usage across 150+ countries and all 50 US states - plus an interactive map so you can explore the data yourself at https://t.co/08d5DHUqte. We'll share more about this at our Futures Forum in DC today.