@rohanpaul_ai

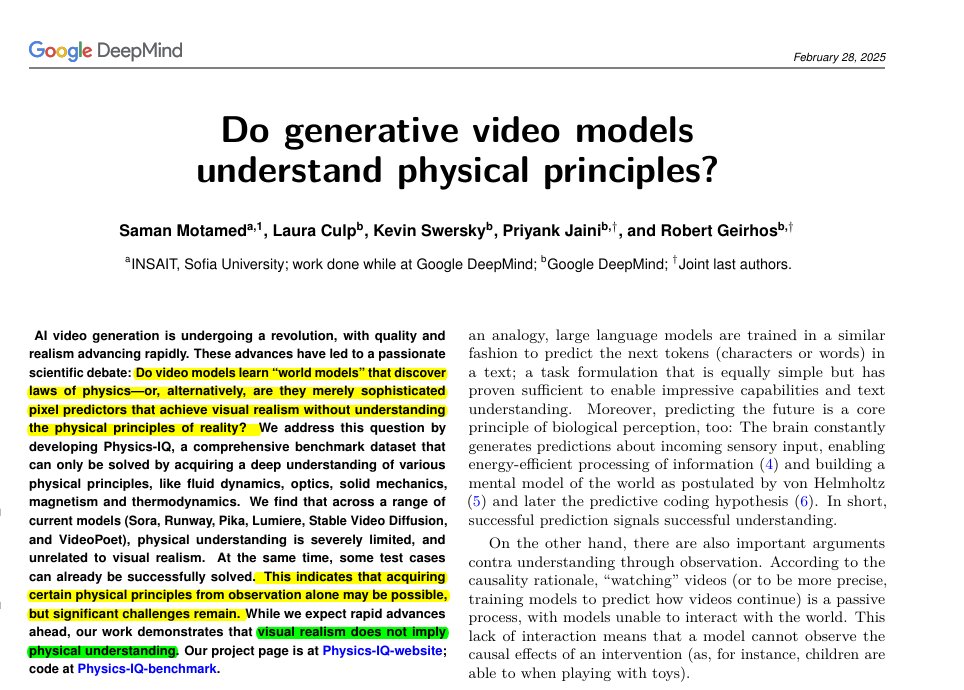

Fei-Fei Li says language models are extremely limited. This @GoogleDeepMind paper makes almost the same point, just in the world of video.

The models are just very advanced pattern matchers. They can recreate what looks like reality because they've seen so much data, but they don't know why the world works the way it does. They can generate clips that look stunningly real, but when you test whether they actually follow basic physics, they fall apart. The Physics-IQ benchmark shows that visual polish and true understanding are two completely different things.

Here, the authors build Physics-IQ, a real-video benchmark spanning solid mechanics, fluids, optics, thermodynamics, and magnetism. Each test shows the start of an event, then asks a model to continue the next few seconds. They compare the prediction to the real future using motion checks for where, when, and how much things move. The scores then roll up into a single Physics-IQ number, capped at the level of agreement between 2 real takes of the same event.

Across popular models, even the strongest sits far below that cap, while multiframe versions usually beat image-to-video versions. Sora is the hardest to tell apart from real videos, yet its physics score stays low, showing that visual realism and physical understanding are uncorrelated. Some cases work, like paint smearing or pouring liquid, but contact and cutting often fail.

arxiv.org/abs/2501.09038
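
To make the scoring idea concrete, here is a minimal sketch of how "where" and "when" motion checks could be capped by the agreement between two real takes. It is an illustration under assumptions, not the paper's implementation: motion is represented as a set of moving pixels per frame, the names `spatial_iou`, `spatiotemporal_iou`, and `capped_score` are hypothetical, and the exact metrics and normalization in the paper may differ.

```python
from typing import List, Set, Tuple

Pixel = Tuple[int, int]
Frame = Set[Pixel]   # pixels flagged as "moving" in one frame (assumed representation)
Video = List[Frame]


def iou(a: Set[Pixel], b: Set[Pixel]) -> float:
    """Intersection over union of two pixel sets; two empty sets count as a perfect match."""
    union = a | b
    if not union:
        return 1.0
    return len(a & b) / len(union)


def spatial_iou(pred: Video, real: Video) -> float:
    """'Where': collapse time, then compare the regions in which anything moved."""
    return iou(set().union(*pred), set().union(*real))


def spatiotemporal_iou(pred: Video, real: Video) -> float:
    """'Where and when': compare motion frame by frame and average."""
    if len(pred) != len(real) or not real:
        raise ValueError("videos must be non-empty and have the same number of frames")
    return sum(iou(p, r) for p, r in zip(pred, real)) / len(real)


def capped_score(model_agreement: float, take_agreement: float) -> float:
    """Hypothetical normalization: 100 means 'as close as two real takes are to each other'."""
    if take_agreement <= 0:
        raise ValueError("the two real takes must agree at least partially")
    return 100.0 * min(1.0, model_agreement / take_agreement)


# Toy data: an object sliding right along the top row over three frames.
take1: Video = [{(0, 0)}, {(0, 1)}, {(0, 2)}]
take2: Video = [{(0, 0)}, {(0, 1)}, {(0, 2), (1, 2)}]  # second real take, slightly different
model: Video = [{(0, 0)}, {(0, 0)}, {(0, 1)}]          # right region, but lagging in time

where = capped_score(spatial_iou(model, take1), spatial_iou(take1, take2))
when = capped_score(spatiotemporal_iou(model, take1), spatiotemporal_iou(take1, take2))
print(round(where, 1))  # 88.9
print(round(when, 1))   # 40.0
```

In this toy case the prediction covers nearly the right region but moves too late, so the "where" check stays high while the "where and when" check drops. That is the kind of gap between plausible-looking output and correct dynamics the post describes.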