@kwindla

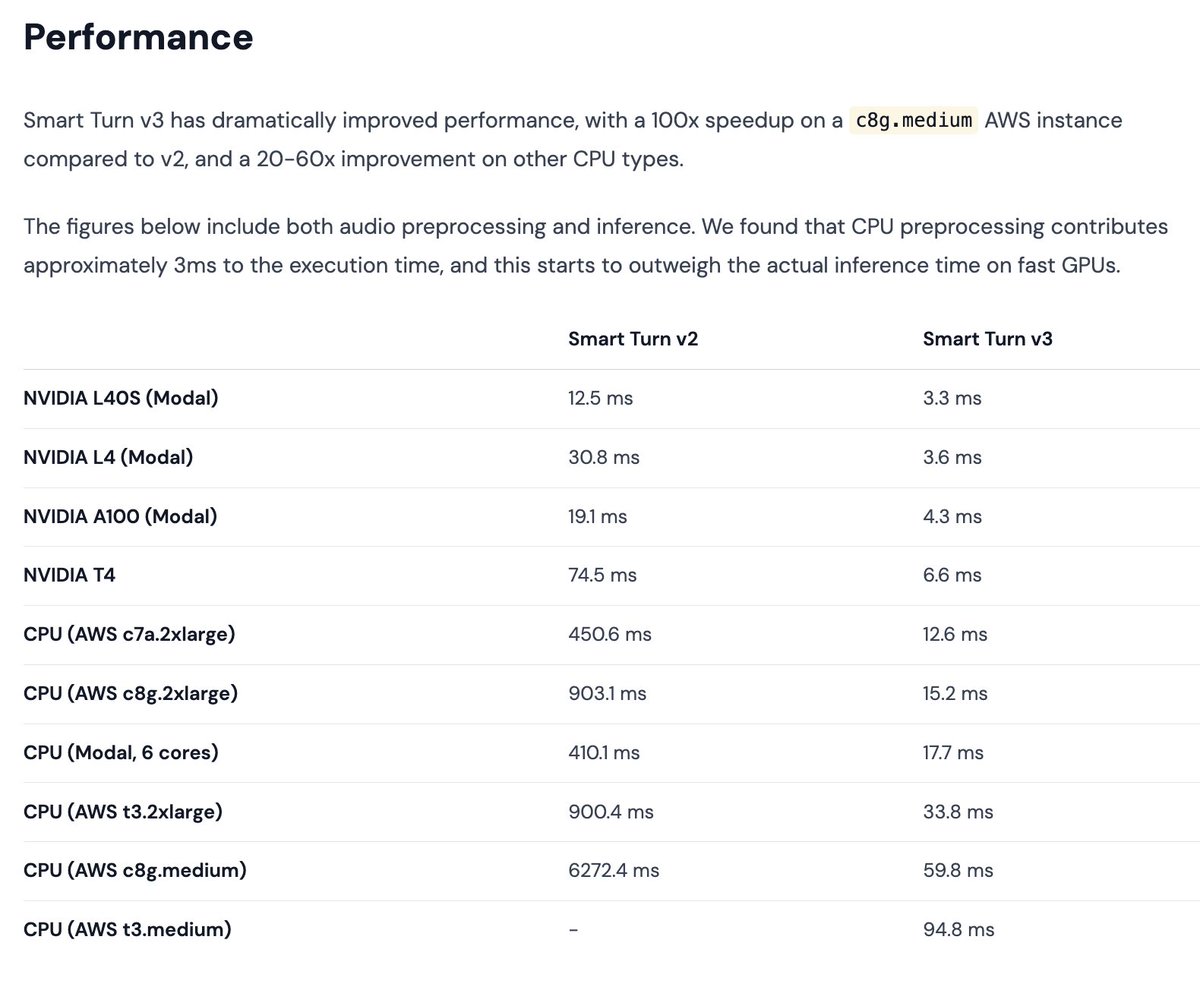

Tiny SOTA model release today: v3 of the Smart Turn semantic VAD model.

Smart Turn is a native audio, open source, open data, open training code model for detecting whether a human has stopped speaking and expects a voice agent to respond.

The model now runs in <60ms on most cloud vCPUs, faster than that on your local CPU, and in <10ms on GPU. Running on CPU makes it essentially free to use this in a voice AI agent.

23 languages, and you can contribute data or data labeling to add a language or improve the model's performance in any of the existing languages. This model is a community effort.

We think that, for what we built it for, this model benchmarks better than any other model that's currently available. But if you're interested in turn detection, you should also check out the excellent recent work from the @krispHQ and @ultravox_dot_ai teams. They have released models that are very good, make somewhat different trade-offs compared to the Smart Turn model, and do better than Smart Turn relative to their respective goals. Super-fun things happening all the time these days in voice AI!

Anybody can use the Smart Turn model in any deployment. It has no license restrictions and is completely open source. It's bundled into the upcoming @pipecat_ai 0.0.85 release. And, of course, it's available on the Pipecat Cloud voice agent hosting platform.
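
If you're wondering where a turn detector like this slots into an agent loop, here's a minimal sketch. It is not the Smart Turn or Pipecat API: `TurnGate`, `predict_complete`, the 0.5 threshold, and the 3-second fallback are all made-up names and values for illustration. The idea is just that once the user pauses, you ask the model whether the turn sounds finished, and only then let the agent respond.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class TurnGate:
    """Decides whether a voice agent should start responding.

    Everything here is hypothetical: `predict_complete` stands in for any
    model that maps the audio of the current turn to P(turn is complete).
    """

    predict_complete: Callable[[Sequence[float]], float]
    threshold: float = 0.5      # assumed decision threshold, not a documented default
    max_silence_s: float = 3.0  # assumed fallback so the agent never waits forever

    def should_respond(self, turn_audio: Sequence[float], silence_s: float) -> bool:
        # Fallback: a long enough silence ends the turn regardless of the model.
        if silence_s >= self.max_silence_s:
            return True
        # Otherwise defer to the semantic end-of-turn probability.
        return self.predict_complete(turn_audio) >= self.threshold


# Usage with a stub predictor; a real one would run the model on the audio.
gate = TurnGate(predict_complete=lambda audio: 0.9)
respond_now = gate.should_respond(turn_audio=[0.0] * 16000, silence_s=0.2)
```

Because the check is cheap at the latencies quoted above, a gate like this could run on every short pause rather than waiting for a long fixed silence timeout.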