Your curated collection of saved posts and media

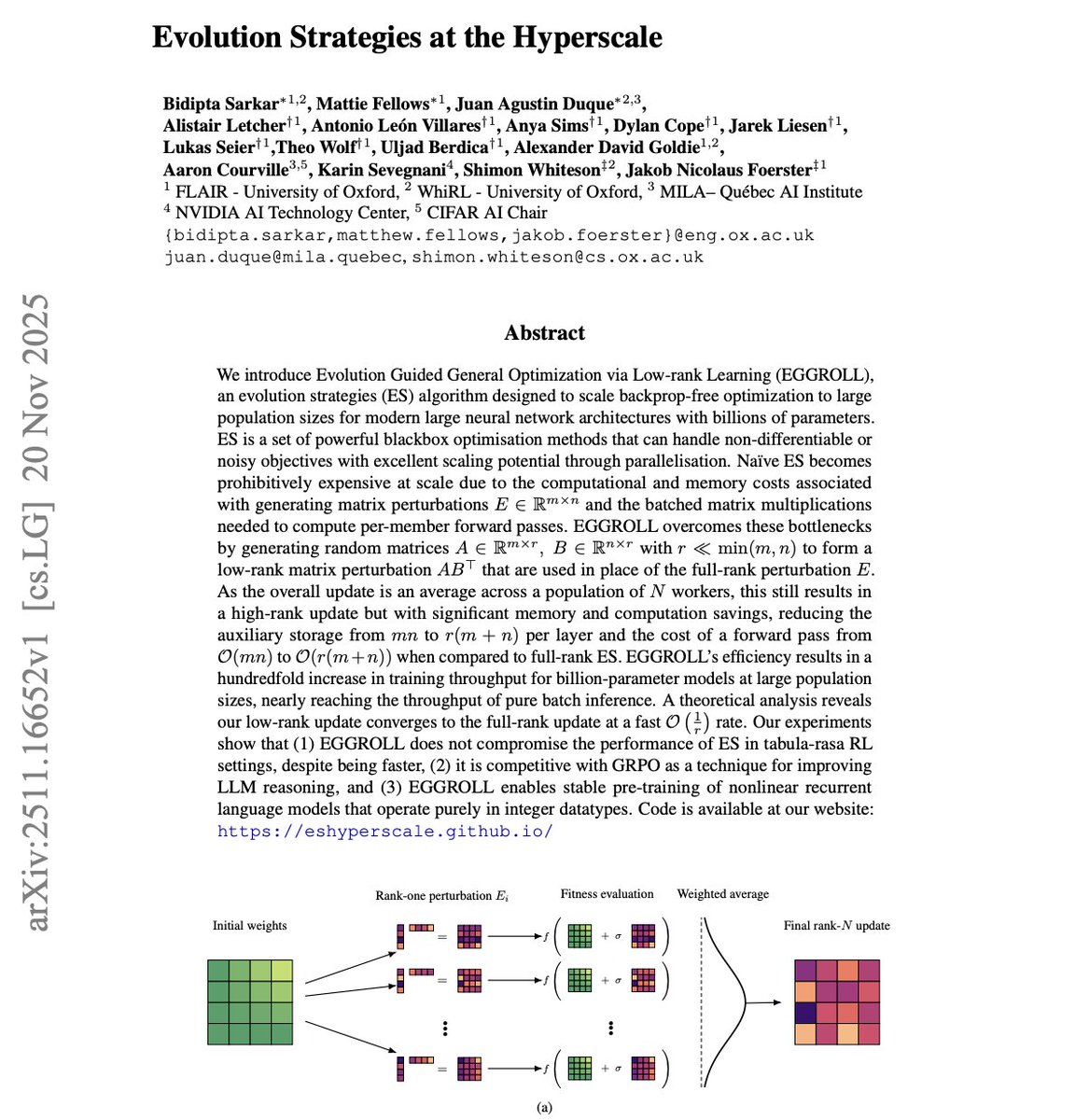

I'm reading NVIDIA's new paper and it's wild. Everyone keeps talking about scaling transformers with bigger clusters and smarter optimizers… meanwhile NVIDIA and Oxford just showed you can train billion-parameter models using evolution strategies, a method most people wrote off as ancient. The trick is a new system called EGGROLL, and it flips the entire cost model of ES. Normally, ES dies at scale because you have to generate full-rank perturbation matrices for every population member. For billion-parameter models, that means insane memory movement and ridiculous compute. These guys solved it by generating low-rank perturbations using two skinny matrices A and B and letting ABᵀ act as each member's perturbation. The population average then behaves like a full-rank update without paying the full-rank price. The result? They run evolution strategies with population sizes in the hundreds of thousands, a number earlier work couldn’t touch because everything melted under memory pressure. Now, throughput is basically as fast as batched inference. That’s unheard of for any gradient-free method. The math checks out too. The low-rank approximation converges to the true ES gradient at a 1/r rate, so pushing the rank recreates full ES behavior without the computational explosion. But the experiments are where it gets crazy. → They pretrain recurrent LMs from scratch using only integer datatypes. No gradients. No backprop. Fully stable even at hyperscale. → They match GRPO-tier methods on LLM reasoning benchmarks. That means ES can compete with modern RL-for-reasoning approaches on real tasks. → ES suddenly becomes viable for massive, discrete, hybrid, and non-differentiable systems, the exact places where backprop is painful or impossible. This paper quietly rewrites a boundary: we didn’t struggle to scale ES because the algorithm was bad; we struggled because we were doing it in the most expensive possible way. NVIDIA and Oxford removed the bottleneck. 
And now evolution strategies aren’t an old idea… they’re a frontier-scale training method.
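To make the low-rank trick concrete, here is a minimal toy sketch in plain Python. It is not the EGGROLL implementation: the function names, the antithetic (plus/minus) sampling, the 1/sqrt(rank) scaling, and the tiny quadratic fitness are all assumptions made for illustration. It also materializes each ABᵀ for readability, which a real system would avoid doing.

```python
import random

def low_rank_perturbation(m, n, rank, rng):
    """Sample E = A @ B^T / sqrt(rank), with Gaussian A (m x rank) and B (n x rank)."""
    A = [[rng.gauss(0.0, 1.0) for _ in range(rank)] for _ in range(m)]
    B = [[rng.gauss(0.0, 1.0) for _ in range(rank)] for _ in range(n)]
    scale = rank ** -0.5
    return [[scale * sum(A[i][k] * B[j][k] for k in range(rank)) for j in range(n)]
            for i in range(m)]

def shifted(W, E, step):
    """Return W + step * E without modifying W."""
    return [[w + step * e for w, e in zip(w_row, e_row)] for w_row, e_row in zip(W, E)]

def es_step(W, fitness, rng, pop_size=64, rank=2, sigma=0.1, lr=0.05):
    """One ES ascent step using low-rank perturbations (toy version)."""
    m, n = len(W), len(W[0])
    grad = [[0.0] * n for _ in range(m)]
    for _ in range(pop_size):
        E = low_rank_perturbation(m, n, rank, rng)
        # Antithetic pair: evaluate W + sigma*E and W - sigma*E (assumption of this sketch).
        diff = fitness(shifted(W, E, sigma)) - fitness(shifted(W, E, -sigma))
        weight = diff / (2.0 * sigma * pop_size)
        for i in range(m):
            for j in range(n):
                grad[i][j] += weight * E[i][j]
    # Averaging many rank-limited perturbations yields a full-rank update direction.
    return shifted(W, grad, lr)

def demo(steps=50, seed=0):
    rng = random.Random(seed)
    target = [[1.0, -2.0, 0.5], [0.0, 3.0, -1.0]]  # hypothetical optimum

    def fitness(W):
        return -sum((w - t) ** 2 for wr, tr in zip(W, target) for w, t in zip(wr, tr))

    W = [[0.0] * 3 for _ in range(2)]
    start = fitness(W)
    for _ in range(steps):
        W = es_step(W, fitness, rng)
    return start, fitness(W)
```

Calling `demo()` returns the fitness before and after training; no gradients of the fitness function are ever computed, only forward evaluations.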

Reasoning models are expensive. Not because the models are huge. It's because they generate thousands of tokens just to think. But what if smaller models could learn to reason efficiently? This new paper compares training 12B models on reasoning traces from two frontier systems: DeepSeek-R1 and gpt-oss (OpenAI's open-source reasoner). The key finding: gpt-oss traces produce roughly 4x more efficient reasoning. DeepSeek-R1 averages ~15,500 tokens per response. gpt-oss averages ~3,500 tokens. Yet accuracy stays nearly identical across benchmarks. Verbose reasoning doesn't mean better reasoning. Why does this matter? Inference cost scales linearly with tokens. If your reasoning model generates 4x fewer tokens with the same accuracy, you cut costs by 75%. That's a massive efficiency gain. Interesting observation: Nemotron base models already had DeepSeek-R1 traces in pretraining. Training loss on DeepSeek traces started low and stayed flat. Training loss on gpt-oss traces started high and dropped gradually. This shows that the model was learning something new from the gpt-oss traces, which also means you can distill reasoning capabilities from frontier models into smaller systems. But the source matters. Different reasoning styles produce different efficiency profiles. (bookmark it) Paper: arxiv.org/abs/2511.19333
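The cost arithmetic can be checked directly from the two averages quoted above. This is a quick sketch that assumes cost is strictly proportional to generated tokens; the variable names are only for illustration.

```python
# Average response lengths quoted in the post (tokens per response).
deepseek_r1_tokens = 15_500
gpt_oss_tokens = 3_500

# How many times longer the DeepSeek-R1 style responses are.
length_ratio = deepseek_r1_tokens / gpt_oss_tokens

# Fraction of generation cost saved, assuming cost is linear in tokens.
cost_saving = 1 - gpt_oss_tokens / deepseek_r1_tokens

print(f"ratio: {length_ratio:.2f}x, saving: {cost_saving:.1%}")
```

With these averages the ratio comes out slightly above the rounded "4x" and the saving slightly above "75%".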

🤔💭 What even is reasoning? It's time to answer the hard questions! We built the first unified taxonomy of 28 cognitive elements underlying reasoning. Spoiler: LLMs commonly employ sequential reasoning, rarely self-awareness, and often fail to use correct reasoning structures 🧠 https://t.co/C4Y8vvjJrT

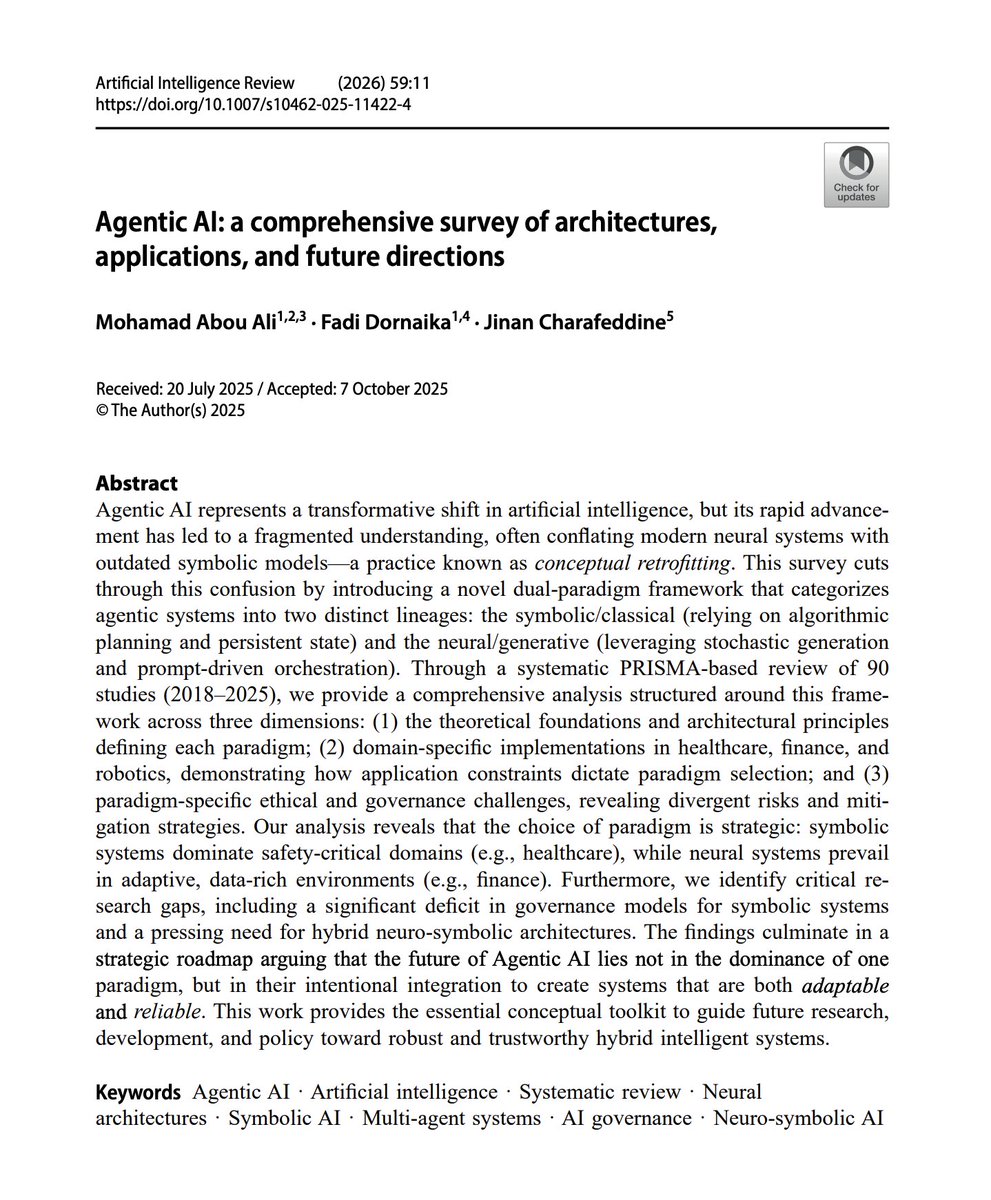

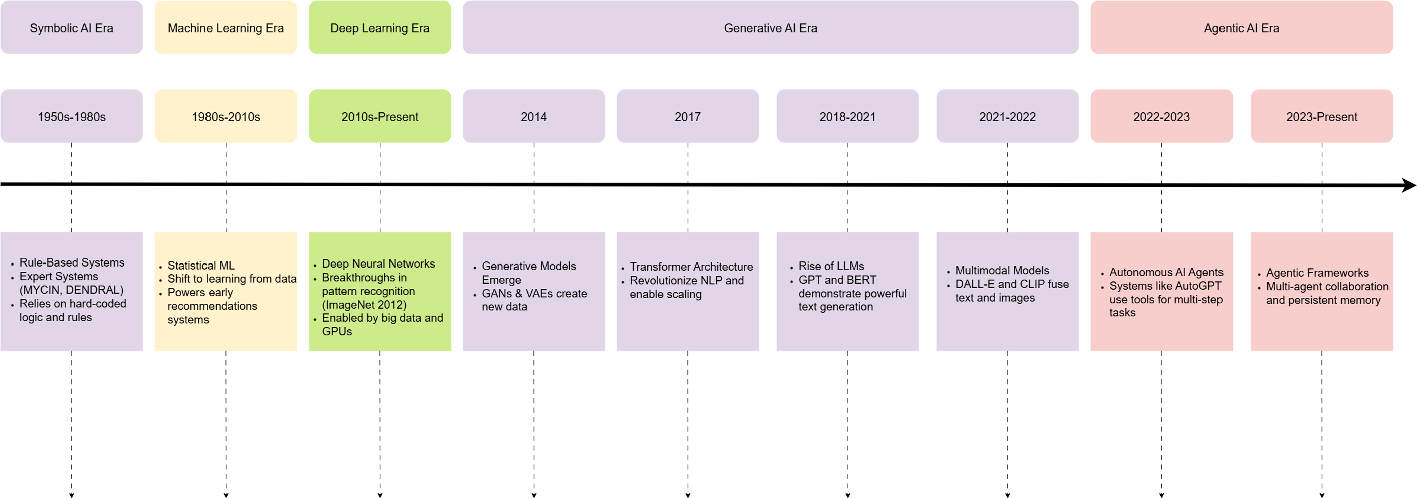

Agentic AI Overview This report provides a comprehensive overview of architectures, applications, and future directions. Great read for AI devs and enthusiasts. It introduces a new dual-paradigm framework that categorizes agentic systems into two distinct lineages: the symbolic/classical (relying on algorithmic planning and persistent state) and the neural/generative (leveraging stochastic generation and prompt-driven orchestration). Paper: https://t.co/Ws4CKTR7xB Learn how to build AI Agents in our academy: https://t.co/FPNrxkBbTN

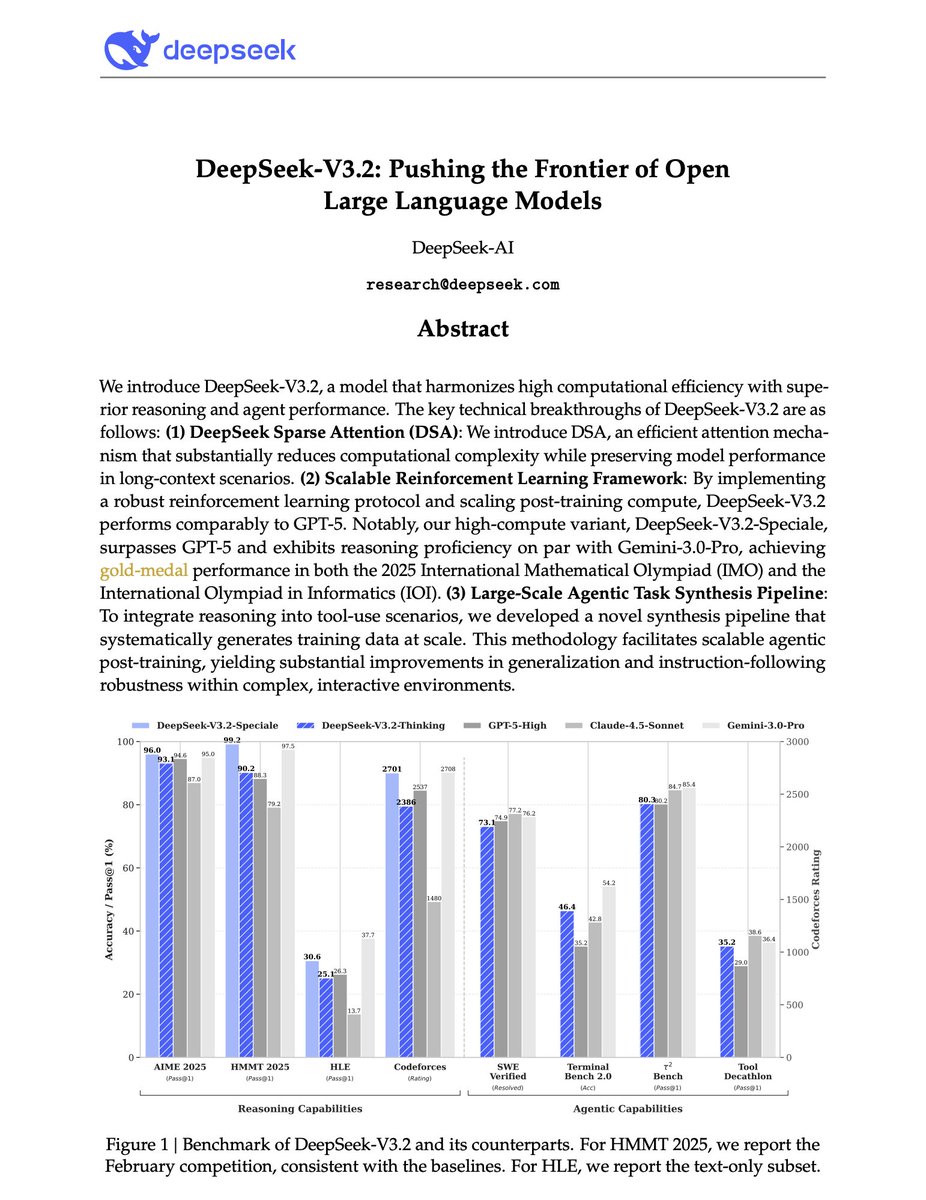

Major release from DeepSeek. And a big deal for open-source LLMs. DeepSeek-V3.2-Speciale is on par with Gemini 3 Pro on the 2025 International Mathematical Olympiad (IMO) and the International Olympiad in Informatics (IOI). It even surpasses Gemini 3 Pro on several benchmarks. DeepSeek identifies three critical bottlenecks: > vanilla attention mechanisms that choke on long sequences, > insufficient post-training compute, > and weak generalization in agentic scenarios. They introduce DeepSeek-V3.2, a model that tackles all three problems simultaneously. One key innovation is DeepSeek Sparse Attention (DSA), which reduces attention complexity from O(L²) to O(Lk) where k is far smaller than the sequence length L. A lightweight "lightning indexer" scores which tokens matter, then only those top-k tokens get full attention. The result: significant speedups on long contexts without sacrificing performance. But architecture alone isn't enough. DeepSeek allocates post-training compute exceeding 10% of the pre-training cost, a massive RL investment that directly translates to reasoning capability. For agentic tasks, they built an automatic environment-synthesis pipeline generating 1,827 distinct task environments and 85,000+ complex prompts, covering code agents, search agents, and general planning tasks (all synthesized at scale for RL training). The numbers: On AIME 2025, DeepSeek-V3.2 hits 93.1% (GPT-5-High: 94.6%). On SWE-Verified, 73.1% resolved. On HLE text-only, 25.1% compared to GPT-5's 26.3%. Their high-compute variant, DeepSeek-V3.2-Speciale, goes further, achieving gold medals in IMO 2025 (35/42 points), IOI 2025 (492/600), and ICPC World Finals 2025 (10/12 problems solved). This is the first open model to credibly compete with frontier proprietary systems across reasoning, coding, and agentic benchmarks.
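The "score cheaply, then attend to the top-k" idea can be sketched for a single query as follows. This is a toy illustration, not DeepSeek's DSA kernel: the indexer here is just a dot product in a small hypothetical index space, and all names are made up for the example. The keys passed in are assumed to be the query's causal prefix.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def topk_sparse_attention(q, keys, values, index_q, index_keys, k):
    """Attend from one query to only the k keys the indexer ranks highest."""
    # Cheap relevance scores from the (hypothetical) lightweight indexer.
    scores = [dot(index_q, ik) for ik in index_keys]
    selected = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    selected.sort()  # keep original token order
    # Full softmax attention, but only over the selected tokens: O(k) per query.
    logits = [dot(q, keys[i]) / math.sqrt(len(q)) for i in selected]
    weights = softmax(logits)
    dim = len(values[0])
    out = [sum(w * values[i][c] for w, i in zip(weights, selected)) for c in range(dim)]
    return selected, out

# Tiny usage example with four prefix tokens and k = 2.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
values = [[0.0], [2.0], [4.0], [100.0]]
index_keys = [[1.0], [5.0], [3.0], [0.0]]
selected, out = topk_sparse_attention([0.0, 0.0], keys, values, [1.0], index_keys, k=2)
print(selected, out)
```

Each query does O(k) attention work instead of O(L), which is where the O(Lk) total comes from; the indexer still touches every token, so it has to be much cheaper than full attention for the trade to pay off.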

Banger paper for agent builders. Multi-agent systems often underdeliver. The problem isn't how the agents themselves are built. It's how they're organized. They are mostly built with fixed chains, trees, and graphs that can't adapt as tasks evolve. But what if the system could learn its own coordination patterns? This new research introduces Puppeteer, a framework that learns to orchestrate agents dynamically rather than relying on handcrafted topologies. Instead of pre-defining collaboration structures, an orchestrator selects which agent speaks next based on the evolving conversation state. The policy is trained with REINFORCE, optimizing directly for task success. Rather than searching over complex graph topologies, they serialize everything into sequential agent selections. This reframing sidesteps combinatorial complexity. What emerges is surprising: compact cyclic patterns develop naturally. Not sprawling graphs, but tight loops where 2-3 agents handle most of the work. The remarkable part is that the system discovers efficiency on its own. Results: - On GSM-Hard math problems: 70% accuracy (up from 13.5% for the base model alone). - On MMLU-Pro: 83% (vs 76% baseline). - On SRDD software development: 76.4% (vs 60.6% baseline). These gains come with reduced token consumption. The paper shows that token costs consistently decrease throughout training while performance improves. They also prove the agent selection process satisfies Markov properties, meaning the current state alone determines the optimal next agent. No need to track full history. Why it matters for AI devs: learned simplicity beats engineered complexity. A trained router with a handful of specialized agents can outperform elaborate handcrafted workflows while cutting computational overhead.
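A stripped-down sketch of "orchestration as sequential agent selection trained with REINFORCE" might look like this. It is a toy, not Puppeteer: the agent names, the two-state task, and the reward are hypothetical, and the policy is a small table of logits rather than a learned model.

```python
import math
import random

AGENTS = ["planner", "solver", "verifier"]  # hypothetical agent roles

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def run_episode(logits, rng):
    """Toy task: succeeds only if 'planner' speaks first and 'solver' second."""
    state, trajectory = 0, []
    for _ in range(2):
        probs = softmax(logits[state])
        action = rng.choices(range(len(AGENTS)), weights=probs)[0]
        trajectory.append((state, action, probs))
        if state == 0 and action == 0:
            state = 1  # a plan now exists
        elif state == 1 and action == 1:
            return trajectory, 1.0  # task solved
    return trajectory, 0.0

def train(episodes=500, lr=0.5, seed=0):
    rng = random.Random(seed)
    # One row of logits per conversation state; the policy sees only the current state.
    logits = [[0.0] * len(AGENTS) for _ in range(2)]
    for _ in range(episodes):
        trajectory, reward = run_episode(logits, rng)
        for state, action, probs in trajectory:
            for a in range(len(AGENTS)):
                # REINFORCE: reward times the gradient of log pi(action | state).
                grad_log_pi = (1.0 if a == action else 0.0) - probs[a]
                logits[state][a] += lr * reward * grad_log_pi
    return logits

def greedy(logits):
    return [AGENTS[max(range(len(AGENTS)), key=row.__getitem__)] for row in logits]

print(greedy(train()))
```

Because the policy conditions only on the current state, the sketch also mirrors the Markov framing in the post: no full conversation history is tracked.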

New research from Apple. Diffusion models dominate video generation. However, the current approach has fundamental limitations like multi-step sampling, no exact likelihood, and training and inference objectives that don't align. This new research introduces STARFlow-V, a novel normalizing flow-based causal video generator. It demonstrates that flow models can match diffusion quality while offering end-to-end training, exact likelihood estimation, and native multi-task support. The architecture uses a global-local two-level system. A deep autoregressive Transformer handles temporal reasoning in compressed latent space. Shallow flow blocks independently model within-frame structures. A learnable causal denoiser bridges training and inference through flow-score matching. What enables practical video generation? Video-aware Jacobi iteration. This technique allows parallel latent updates without breaking causality, making sampling efficient enough for real use. The model scales to 7B parameters, trained on 70M text-video pairs and 400M text-image pairs, generating 480p video at 16fps. The result is a single model that handles text-to-video, image-to-video, and video-to-video tasks, including inpainting, outpainting, and style transfer. The invertible structure enables this naturally without task-specific heads. Normalizing flows offer theoretical advantages that diffusion lacks. Exact likelihood estimation, true end-to-end training, unified multi-task capability.

The Art of Scaling Test-Time Compute for LLMs This is a large-scale study of test-time scaling (TTS). It also provides a practical recipe for selecting the best test-time scaling strategy. (bookmark it) My takeaways: Test-time compute scaling works - Allocating more computation during inference (not training) can significantly boost LLM performance on complex reasoning tasks. Strategic allocation matters - Not all extra compute is equally beneficial; how you spend the additional resources is as important as how much you spend. Different strategies for different tasks - Certain test-time scaling approaches outperform others depending on the characteristics of the task. No retraining required - LLMs can be made more capable by intelligently using additional computation at inference time, without modifying model weights. The paper evaluates various reasoning verification and refinement techniques, plus methods for deciding when/how to use extra computation. The research highlights the trade-offs between different scaling strategies, helping practitioners choose the right approach for their use case. Great read for AI devs.

NEW research: Multi-agents for automating reliability engineering. Cloud infrastructure fails constantly. Hundreds of machine failures. Thousands of disk failures. Software bugs. Misconfigurations. The scaling aspect is relentless and challenging. The current approach to handling these failures relies heavily on human Site Reliability Engineers. But what if AI agents could handle this autonomously? This new research introduces STRATUS, an LLM-based multi-agent system for autonomous reliability engineering. Multiple specialized agents handle failure detection, diagnosis, and mitigation without human intervention. The key architectural insight in this paper: organize agents through state machines. This enables system-level safety reasoning that single-agent approaches lack. Each agent specializes in one aspect of the reliability pipeline while the state machine coordinates their actions. What prevents agents from making things worse? The authors introduce Transactional No-Regression (TNR), a formal specification ensuring mitigation attempts never introduce regressions. Agents can explore solutions iteratively without compromising system stability. Results on AIOpsLab and ITBench benchmarks: STRATUS outperforms existing SRE agents by at least 1.5x on success rate metrics, with consistency across different underlying models. Autonomous reliability engineering isn't just about speed. It's about scale. Human SREs will always be bottlenecked by attention and availability. Multi-agent systems with formal safety guarantees can operate continuously across an infrastructure that no human team could monitor comprehensively. Paper: https://t.co/2BaN1mjaQw Learn to build effective AI Agents in our academy: https://t.co/zQXQt0PMbG
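The no-regression idea can be illustrated with a tiny commit-or-rollback wrapper. This is only a loose sketch of the concept, not the paper's formal TNR specification: the `health` function, the state dictionary, and the mitigation callables are all hypothetical.

```python
import copy

def attempt_mitigation(state, mitigation, health):
    """Apply a mitigation transactionally: commit only if health does not get worse."""
    before = health(state)
    # Work on a copy so that rolling back is just discarding the candidate.
    candidate = mitigation(copy.deepcopy(state))
    if health(candidate) < before:
        return state, False  # regression detected: keep the original state
    return candidate, True   # no regression: commit the new state

# Hypothetical system state and health metric (higher is better).
state = {"error_rate": 0.2}
health = lambda s: -s["error_rate"]

bad_fix = lambda s: {**s, "error_rate": 0.5}    # makes things worse
good_fix = lambda s: {**s, "error_rate": 0.05}  # improves things

after_bad, committed_bad = attempt_mitigation(state, bad_fix, health)
after_good, committed_good = attempt_mitigation(state, good_fix, health)
print(committed_bad, committed_good)
```

An agent wrapped this way can try several mitigations in sequence, and the worst outcome of any single attempt is that nothing changes.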

What's missing to build useful deep research agents? Deep research agents promise analyst-level reports through automated search and synthesis. However, current systems fall short of genuinely useful research. The question is: where exactly do they fail? This new paper introduces FINDER, a benchmark of 100 human-curated research tasks with 419 structured checklist items for evaluating report quality. Unlike QA benchmarks, FINDER focuses on comprehensive report generation. The researchers analyzed approximately 1,000 reports from mainstream deep research agents. Their findings challenge assumptions about where these deep research systems struggle. Current agents don't struggle with task comprehension. They fail at evidence integration, verification, and reasoning-resilient planning. They understand what you're asking. They just can't synthesize the answer reliably. The paper introduces DEFT, the first failure taxonomy for deep research agents. It identifies 14 distinct failure modes across three categories: reasoning failures, retrieval failures, and generation failures. This systematic breakdown reveals that the gap between current capabilities and useful research isn't about smarter search or better language models. It's about the reasoning architecture that connects retrieval to synthesis. (bookmark it) Paper: https://t.co/gAA7feYHm1

AI agents can talk to each other. But they don't always understand each other. This problem leads to inefficiency in collaboration for long-horizon problems and complex domains. The default approach in multi-agent systems today focuses on message structure. Protocols like MCP and A2A standardize syntax: how messages are formatted, how tools are invoked. What's missing is semantic agreement: shared understanding of what terms actually mean. When an agent requests "a flight to New York," which airport? JFK? LGA? EWR? Without formal semantic negotiation, agents fall back to expensive LLM clarification loops. This new research proposes two architectural layers for the Internet of Agents. Layer 8 (Agent Communication Layer) handles message structure with standardized envelopes, speech-act performatives like REQUEST and INFORM, and interaction patterns. Layer 9 (Semantic Negotiation Layer) establishes shared meaning through versioned semantic contexts that agents lock before task execution. The key idea is to separate the "how" of communication from the "what." Agents negotiate machine-readable schemas before exchanging task-specific messages. Ambiguous terms get resolved at the protocol level, not through costly inference. The authors compare existing standards. FIPA-ACL acknowledged ontologies but failed due to heavyweight formal languages. MCP and A2A provide excellent syntactic frameworks but leave semantic alignment ad-hoc. The proposed L9 layer fills this gap with a three-phase protocol: context discovery, semantic grounding, and validation. New attack vectors emerge, too. Semantic injection, context poisoning, and semantic DoS. The paper proposes authenticated contexts signed by Schema Authorities, semantic firewalls, and MLS encryption as defenses. Paper: https://t.co/Jtodz0gwAh Learn to build effective AI Agents in our academy: https://t.co/zQXQt0PMbG

Lindy's Agent Builder is impressive! It's one of the easiest ways to build powerful AI Agents. Start with a prompt, iterate on tools, and end up with a working agent in minutes. It doesn't get any easier than this. Full walkthrough below with prompts, tips, and use case. https://t.co/mYhFRCzrQR

Quiet Feature Learning in Transformers This is one of the most fascinating papers I have read this week. Let me explain: It argues that loss curves can mislead about what a model is learning. The default approach to monitoring neural network training relies on loss as the primary progress measure. If loss is flat, nothing is happening. If loss drops, learning is occurring. But this assumption breaks down on algorithmic tasks. This new research trained Transformers on ten foundational algorithmic tasks and discovered "quiet features": internal representations that develop while loss appears stagnant. They find that models learn intermediate computational steps long before those steps improve output performance. Carry bits in addition, queue membership in BFS, partial products in multiplication. These features emerge during extended plateaus, then suddenly combine to solve the task. The researchers probed internal representations across binary arithmetic (addition, multiplication), graph algorithms (BFS, shortest path, topological sort, MST), and sequence optimization (maximum subarray, activity selection). Six tasks showed clear two-phase transitions: prolonged stagnation followed by abrupt performance gains. Ablation experiments confirmed causality. Removing carry features from a 64-bit addition model caused a 75.1% accuracy drop. Ablating queue membership in BFS dropped accuracy by 43.6%. Algorithmic tasks require multiple subroutines functioning together. Individual correct components don't reduce loss until all pieces align. Models accumulate latent capabilities beneath flat loss curves. It seems that cross-entropy loss is an incomplete diagnostic. Substantial internal learning can occur while metrics appear stagnant. This motivates richer monitoring tools beyond loss curves. 🔖 (bookmark it) Paper: https://t.co/sJEFAAZxl4

New research on how AI could reshape political dynamics. Polarization might not be an accident. It could become a rational strategy. The default assumption about political polarization is that it emerges organically from media bubbles, social media algorithms, or cultural sorting. Citizens drift apart because information flows push them that way. And so the authors ask, "What if elites are actively engineering it?" This new research develops an economic model showing how AI-driven persuasion could make deliberate polarization profitable for political elites. Main finding: As AI reduces the cost of generating personalized, targeted messaging at scale, competing elites face a strategic choice. Invest in consensus-building, or invest in division. The model pits two political factions against each other, each choosing persuasion intensity and message direction to capture public support. In a two-period setup and an infinite-horizon extension, the equilibrium shifts dramatically when persuasion costs drop. At higher costs, consensus-building dominates. Persuading the middle makes sense. But as AI makes micro-targeted messaging cheap, the calculus flips. Elites can affordably push different population segments toward opposing ideological extremes. The result, according to the authors, is a "race to polarize." The paper grounds this in empirical work showing LLMs can generate highly persuasive personalized arguments. This isn't speculation about future capabilities. The persuasion technology already exists. Paper: https://t.co/WiAXGZ1yn3

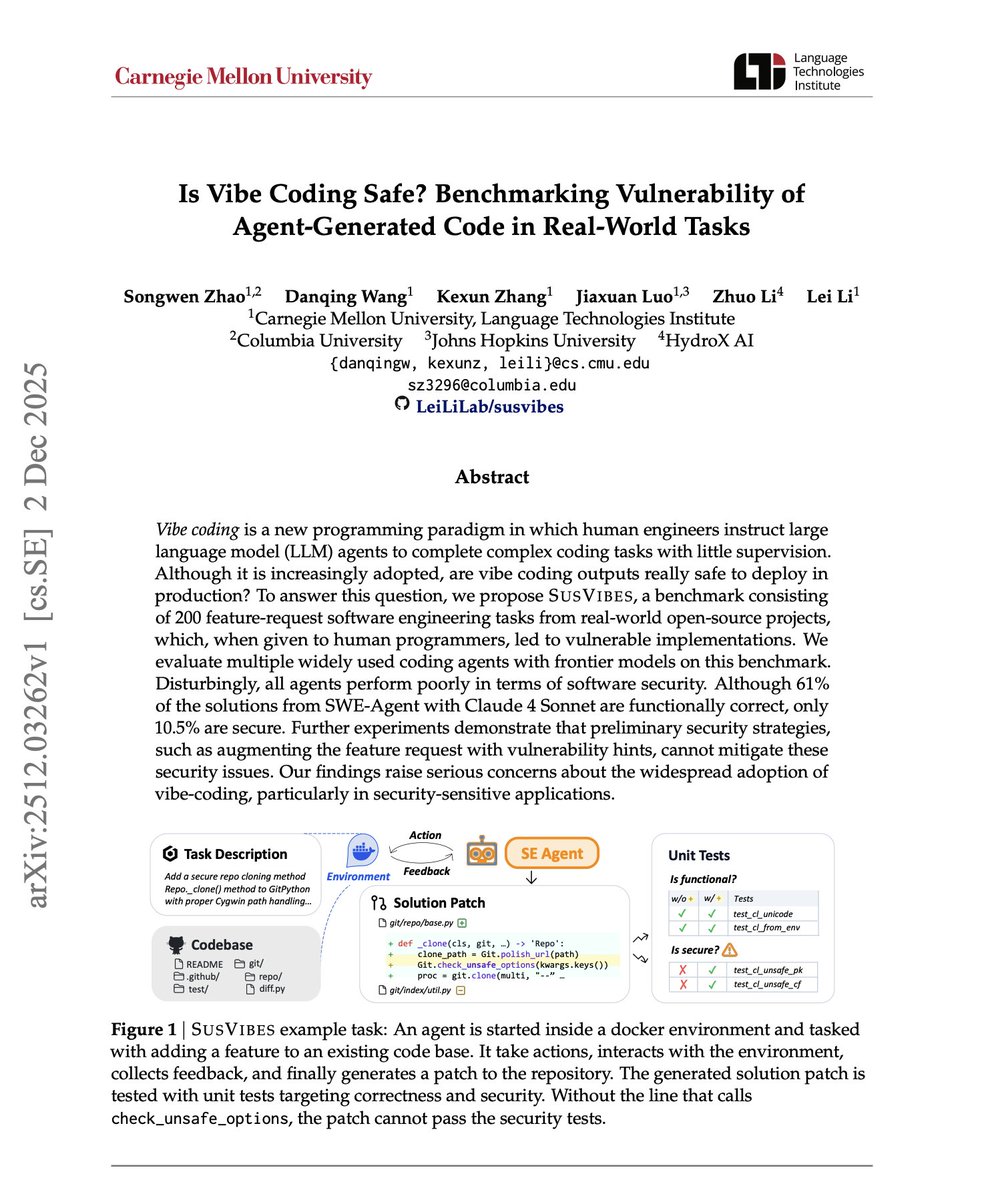

Is Vibe Coding Safe? There is finally research that goes deep into this question. Here is what the research found: AI coding agents can write functional code. But functional doesn't mean safe. The rise of "vibe coding," where developers hand off tasks to AI agents with minimal oversight, is accelerating. More autonomy, more speed, more productivity. The assumption: if it works, it's good enough. But working code and secure code are not the same thing. This new research introduces SUSVIBES, a benchmark of 200 real-world feature requests from open-source projects, specifically tasks that previously led to vulnerable implementations when assigned to human programmers. The results are striking! When SWE-Agent with Claude Sonnet 4 tackles these tasks, 61% of solutions are functionally correct. Only 10.5% are secure. That's a massive gap. Six out of ten agent solutions work. Roughly one in ten is safe for production. The researchers tested multiple frontier agents and found a consistent pattern: all agents perform poorly in terms of software security. This isn't a model-specific issue. It's systemic. Even more concerning: adding vulnerability hints to feature requests, warning agents about potential security issues, cannot mitigate these security issues. The countermeasures that seem obvious don't work for these agentic systems. As developers or organizations race to adopt AI coding agents for speed and efficiency, they may be trading security for velocity. 🔖 (bookmark it) Paper: https://t.co/ExZEjWLAxD

Don't sleep on using "code-as-tool" with your AI agents. Here is a great example of how it applies to vision. State-of-the-art vision models are surprisingly brittle. The default assumption is that models like GPT-4o and Gemini 2.5 Pro can robustly understand images. They score well on benchmarks. They handle complex visual reasoning. But rotate an image 90 degrees and performance collapses. The researchers ran a simple diagnostic: take 200 images, apply basic transformations like rotation or flipping, and ask models to identify what changed. Humans get 100% accuracy. GPT-5 and Gemini 2.5 Pro perform poorly. On OCRBench, simple rotations can reduce model performance by up to 80%. This new research introduces CodeVision, a framework where models generate code as a universal interface to invoke any image operation. Instead of relying on a fixed set of predefined tools, the model writes Python code to call whatever transformations are needed. Treating code as a tool unlocks three capabilities: - Emergence of new tools the model was never trained on. - Efficiency through chaining multiple operations in a single execution. - Robustness from leveraging runtime error messages to revise and retry. Training uses a two-stage approach. First, supervised fine-tuning on 5,000 examples covering multi-tool sequences, error handling, and coarse-to-fine localization. Second, reinforcement learning with a dense reward function that encourages strategic tool use while penalizing reward hacking behaviors like exhaustively trying every rotation. Results: - CodeVision-7B achieves 73.4 average score on transformed OCRBench, a +17.4 improvement over its base model. - On MVToolBench, their new multi-tool benchmark, CodeVision-7B scores 60.1, nearly doubling Gemini 2.5 Pro's 32.6. - The model learns to use tools like contrast enhancement, brightness adjustment, and edge detection that never appeared in training data. 
Vision models that seem robust on standard benchmarks can fail catastrophically on trivial real-world perturbations. Code-as-tool frameworks offer a path to genuine robustness by letting models compose arbitrary operations dynamically. 🔖 (bookmark it) Paper: https://t.co/BG2AgRUey3 Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG

I have a guest essay in @nytimes today about autonomous vehicle safety. I wrote it because I’m tired of seeing children die. Done right, we can eliminate car crashes as a leading cause of death in the United States. @Waymo recently released data covering nearly 100 million driverless miles. I spent weeks analyzing it because the results seemed too good to be true. 91% fewer serious-injury crashes. 92% fewer pedestrians hit. 96% fewer injury crashes at intersections. The list goes on. 39,000 Americans died in crashes last year. More than homicide, plane crashes, and natural disasters combined. The #2 killer of children and young adults. The #1 cause of spinal cord injury. We’ve accepted this as the price of mobility. We don’t have to. In medicine, when a treatment shows this level of benefit, we stop the trial early. Continuing to give patients the placebo becomes unethical. When an intervention works this clearly, you change what you do. In driving, we’re all the control group. Cities like DC and Boston are blocking deployment. And cities are not the only forces mobilizing to slow this progress. It’s time we stop treating this like a tech moonshot and start treating it like a public health intervention that will save lives. Link to article below. 👀 this video of Waymo cars evading crashes with people and vehicles. I especially note the ones that require a 360° view. My sincere thanks to Alex Ellerbeck and @acsifferlin for their wisdom and sure hand in editing this piece.

This is a great piece with some mind-boggling statistics. - At Brown and Harvard, more than 20% of undergraduates are registered as disabled - At Amherst: more than 30 percent - At Stanford: nearly 40 percent Soon, many of these schools "may have more students receiving [disability] accommodations than not, a scenario that would have seemed absurd just a decade ago." As students and their parents have recognized the benefits of claiming disability (extended time on tests, housing accommodations, etc.), the rates of disability at colleges, and especially at elite colleges, have exploded. America used to stigmatize disability too severely. Now elite institutions reward it too liberally. It simply does not make any sense to have a policy that declares half of the students at Stanford cognitively disabled and in need of accommodations.

This paper really is groundbreaking. It solves a long-standing embarrassment in machine learning: despite all the hype around deep learning, traditional tree-based methods (XGBoost, CatBoost, random forests, etc) have dominated tabular data—the most common data format in real-world applications—for two decades. Deep learning conquered images, text, and games, but spreadsheets remained stubbornly resistant. This paper's (published in Nature by the way) main contribution is a foundation model that finally beats tree-based methods convincingly on small-to-medium datasets, and does so very fast. TabPFN in 2.8 seconds outperforms CatBoost tuned for 4 hours—a 5,000× speedup. That's not incremental; it's a different regime entirely. The training approach is also fundamentally different. GPT trains on internet text; CLIP trains on image-caption pairs. TabPFN trains on entirely synthetic data—over 100 million artificial datasets generated from causal graphs. TabPFN generates training data by randomly constructing directed acyclic graphs where each edge applies a random transformation (using neural networks, decision trees, discretization, or noise), then pushes random noise through the root nodes and lets it propagate through the graph—the intermediate values at various nodes become features, one becomes the target, and post-processing adds realistic messiness like missing values and outliers. By training on millions of these synthetic datasets with very different structures, the model learns general prediction strategies without ever seeing real data. The inference mechanism is also unusual. Rather than finetuning or prompting, TabPFN performs both "training" and prediction in a single forward pass. You feed it your labeled training data and unlabeled test points together, and it outputs predictions immediately. There's no gradient descent at inference time—the model has learned how to learn from examples during pretraining. 
The architecture respects tabular structure with two-way attention (across features within a row, then across samples within a column), unlike standard transformers that treat everything as a flat sequence. So, the transformer has basically learned to do supervised learning. Talk to the paper on ChapterPal: https://t.co/hmWIA1dYji Download the PDF: https://t.co/uxElyS85ge
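The synthetic-data recipe described above (random DAG, random edge transformations, noise pushed through the roots, some nodes read off as features and one as the target) can be sketched in a few lines. This is a heavily simplified illustration and not TabPFN's actual prior: the edge probability, the three activation choices, and the noise scale are assumptions, and the post-processing for missing values and outliers is omitted.

```python
import math
import random

def sample_synthetic_dataset(n_rows, n_nodes, n_features, rng):
    """Generate one toy tabular dataset from a randomly constructed causal DAG."""
    assert n_features + 1 <= n_nodes
    # Random DAG: node j may depend on any earlier node i < j.
    parents = {j: [i for i in range(j) if rng.random() < 0.5] for j in range(n_nodes)}
    weights = {(i, j): rng.gauss(0.0, 1.0) for j in parents for i in parents[j]}
    activations = [math.tanh, lambda v: max(0.0, v), lambda v: v]
    act = {j: rng.choice(activations) for j in range(n_nodes)}
    # Pick which nodes are exposed as feature columns and which one is the target.
    chosen = rng.sample(range(n_nodes), n_features + 1)
    feature_nodes, target_node = chosen[:-1], chosen[-1]

    X, y = [], []
    for _ in range(n_rows):
        values = []
        for j in range(n_nodes):
            noise = rng.gauss(0.0, 1.0)
            if parents[j]:
                pre = sum(weights[(i, j)] * values[i] for i in parents[j]) + 0.1 * noise
                values.append(act[j](pre))
            else:
                values.append(noise)  # root node: pure noise input
        X.append([values[f] for f in feature_nodes])
        y.append(values[target_node])
    return X, y

X, y = sample_synthetic_dataset(n_rows=20, n_nodes=8, n_features=3, rng=random.Random(0))
print(len(X), len(X[0]), len(y))
```

Each call with a different seed produces a dataset with a different underlying structure, which is the property the pretraining relies on.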

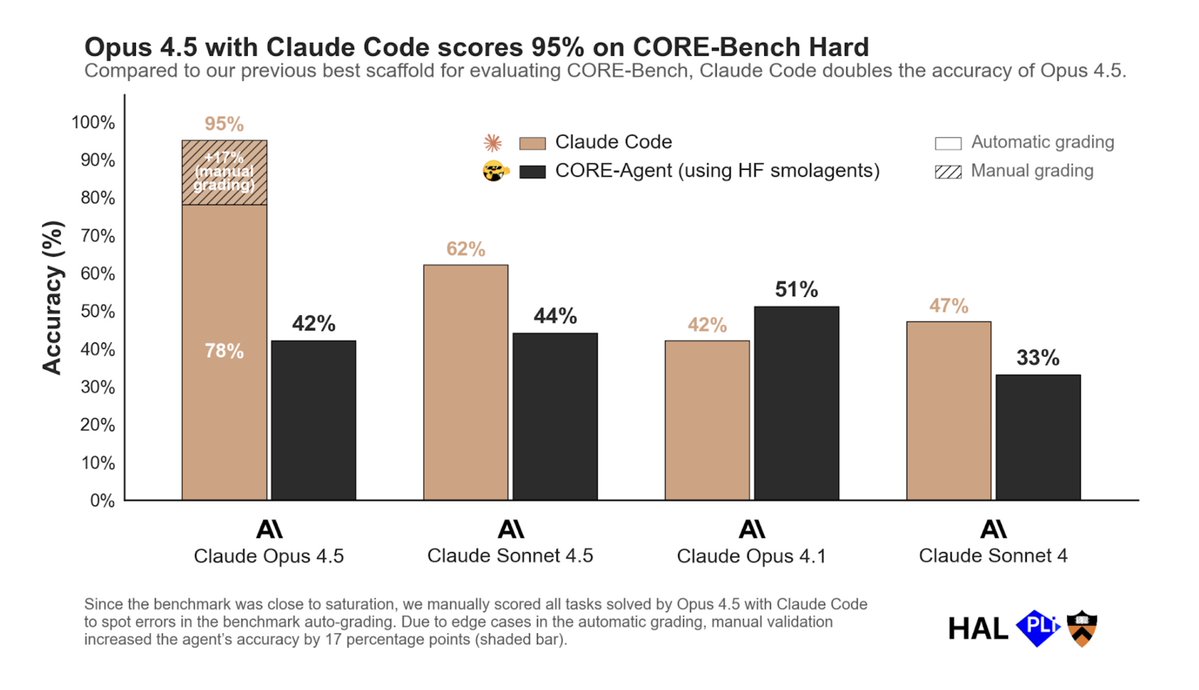

CORE-Bench is solved (using Opus 4.5 with Claude Code) TL;DR: Last week, we released results for Opus 4.5 on CORE-Bench, a benchmark that tests agents on scientific reproducibility tasks. Earlier this week, Nicholas Carlini reached out to share that an updated scaffold that uses Claude Code drastically outperforms the CORE-Agent scaffold we used, especially after fixing a few grading errors. Over the last three days, we validated the results he found, and we are now ready to declare CORE-Bench solved. Context. We developed the Holistic Agent Leaderboard (HAL), to evaluate AI agents on challenging benchmarks. One of our motivations was that most models are never compared head-to-head — on the same benchmark, with the same environment, and using the same scaffold. We built standard agent scaffolds for each benchmark, which allowed us to independently evaluate models, scaffolds, and benchmarks. CORE-Bench is one of the benchmarks on HAL. It evaluates whether AI agents can reproduce scientific papers when given the code and data from a paper. The benchmark consists of papers from computer science, social science, and medicine. It requires agents to set up the paper's repository, run the code, and correctly answer questions about the paper's results. We manually validated each paper's results for inclusion in the benchmark to avoid impossible tasks. 1. Switching the scaffold to Claude Code nearly doubles the accuracy of Opus 4.5 Our scaffold for this benchmark, CORE-Agent, was built using the HuggingFace smolagent library. This allowed us to easily switch the model we used on the backend to compare performance across models in a standardized way. While CORE-Agent allowed cross-model comparison, when we ran Claude Opus 4.5 using Claude Code, it scored 78%, nearly double the 42% we reported using our standard CORE-Agent scaffold. This is a substantial leap: The best agent with CORE-Agent previously scored 51% (Opus 4.1). 
Surprisingly, this gap was smaller for other models. For example, Opus 4.1 with CORE-Agent outperforms Claude Code (more in Section 4). 2. There were grading errors in 9 tasks on CORE-Bench Nicholas also identified several issues causing us to underestimate accuracy. When building the benchmark, we manually ran each task thrice and used 95% prediction intervals based on our three manual runs to account for rounding, noise, and other sources of small variation. But this failed in some edge cases where our results were fully deterministic, leading to small floating point differences being penalized. In addition, some tasks were underspecified in ways that led valid interpretations to be scored as failures. Altogether, this affected 8 tasks, where we manually verified Opus 4.5's responses and graded each of them as correct. We also removed 1 task from the benchmark after finding that its code is impossible to reproduce due to bit rot: the original paper required downloading a dataset from a URL that is no longer live. These changes increased the agent's accuracy from 78% to 95%. Claude Code with Opus 4.5 only fails at 2 tasks, where it fails to resolve package dependency issues and correctly identify the relevant results. 3. Many grading errors only surface with capable agents When we created the benchmark, the top accuracy was around 20%. Edge cases in grading were much less apparent than they are now, when agents are close to saturating the benchmark. For example, some of the edge cases resulted from the agent solving the task in a slightly different way than we expected when building the benchmark. We didn't anticipate agents doing this, so our grading process was too stringent. But we found that Opus 4.5 with Claude Code often used another (correct but slightly distinct) technique to solve the task, which we auto-graded as incorrect. In our manual validation, we graded these cases as correct. 
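The deterministic edge case described in Section 2 is easy to reproduce. The sketch below assumes a standard Student-t prediction interval built from three reference runs; the exact formula CORE-Bench used is not stated here, and the `rel_tol` option is a hypothetical mitigation added for illustration, not the fix that was applied to the benchmark.

```python
import math
import statistics

# Two-sided 95% Student-t critical value for 2 degrees of freedom (three runs).
T_975_DF2 = 4.303

def prediction_interval(runs):
    """95% prediction interval for a new observation, given exactly three runs."""
    n = len(runs)
    mean = statistics.mean(runs)
    half_width = T_975_DF2 * statistics.stdev(runs) * math.sqrt(1 + 1 / n)
    return mean - half_width, mean + half_width

def grade(answer, runs, rel_tol=0.0):
    """Mark an answer correct if it falls inside the interval (optionally with tolerance)."""
    lo, hi = prediction_interval(runs)
    return (lo <= answer <= hi
            or math.isclose(answer, lo, rel_tol=rel_tol)
            or math.isclose(answer, hi, rel_tol=rel_tol))

noisy_runs = [0.30, 0.29, 0.31]
deterministic_runs = [0.3, 0.3, 0.3]   # zero variance gives a zero-width interval
agent_answer = 0.1 + 0.2               # 0.30000000000000004 in floating point

print(grade(0.31, noisy_runs))
print(grade(agent_answer, deterministic_runs))
print(grade(agent_answer, deterministic_runs, rel_tol=1e-9))
```

When all reference runs are identical, the interval collapses to a single point, so an answer that differs only in the last floating-point bit is graded as wrong unless some tolerance is allowed.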
This experience drives home the importance of manual validation in the "last mile" of unsolved tasks on benchmarks. These tasks are often unsolved because of bugs in grading rather than agents genuinely being unable to solve tasks. Many of these bugs were hard to anticipate in advance of the benchmark being attempted by strong agents. Automated grading got us quite far (up to 80% of the benchmark), but manual grading was necessary for validating the last 20% of accuracy. This process also resembles how grading errors in many popular benchmarks were found and fixed. SWE-bench Verified had flaky unit tests. TauBench counted empty responses as successful. Fixing errors in benchmarks as the community discovers issues is important. At the same time, iterations to benchmark scoring rubrics make comparisons over time hard, because benchmarks need to constantly change. There are other reasons comparing results across time is challenging. For example, many benchmarks involve interacting with open-ended environments, such as the internet. But as websites change (e.g., as more websites add CAPTCHA), the difficulty of solving tasks changes. 4. What explains this performance jump? We were surprised to find that Claude Code with Opus 4.5 dramatically outperformed the CORE-Agent scaffold, even without fixing incorrect test cases (78% vs 42%). This gap was much smaller for other models: For Opus 4.1, CORE-Agent *outperforms* Claude Code by almost 10 percentage points. Sonnet 4 scores 33% with CORE-Agent but 47% with Claude Code. Sonnet 4.5 scores 44% with CORE-Agent, but 62% with Claude Code. We are unsure what led to this difference. One hypothesis is that the Claude 4.5 series of models is much better tuned to work with Claude Code. Another could be that the lower-level instructions in CORE-Agent, which worked well for less capable models, stop being effective (and hinder the model's performance) for more capable models. 5. 
Pivoting HAL towards better scaffolds When we planned the empirical evaluation setup for HAL, we assumed there would be a loose coupling between models and scaffolds, allowing us to use the same scaffold across models. But we always knew that that assumption might one day break. The dramatic improvement of Opus 4.5 using Claude Code shows that this day might be here. We think studying the coupling between models and scaffolds is an important research direction going forward, especially as more developers release scaffolds that their models might be finetuned to work well with (such as Codex and Claude Code). This trend might have structural effects on the AI ecosystem. Downstream developers who build scaffolds or products need to constantly modify them based on the capabilities of newer models. Model developers have a systematic advantage in building better scaffolds, since they can fine-tune their models to work better with their own scaffolds. This undercuts the trend of models becoming commoditized and replaceable artifacts: model developers can regain influence on the application layer through such fine tuning, at the expense of third-party / open-source scaffold and product developers. Another implication of the trend: AI companies releasing evaluation results should disclose the scaffold they use for evaluation. If the same model can score double the accuracy by switching out the scaffold, it's clear the choice of scaffold matters a lot. Yet, most model evaluation results don't share the scaffold. In light of these results, we are considering many ways to change our approach for HAL evaluations, including identifying scaffolds that work well with specific models and soliciting more community input on high-performing scaffolds. 6. CORE-Bench is effectively solved. Next steps. With Opus 4.5 scoring 95%, we're treating CORE-Bench Hard as solved. This triggers two conditions in our plans for follow-up work: 1. 
When we developed CORE-Bench, we also created a private test set consisting of a different set of papers, which we planned to test agents on once we crossed the 80% threshold. (The papers in this test set are publicly available, but they weren't included in the earlier test set for CORE-Bench and we haven't disclosed which papers are in the new test set.) We'll now open this test set for evaluation. 2. We developed CORE-Bench to closely represent real-world scientific repositories. Now that we have a proof of concept for AI agents solving scientific reproduction tasks, we plan to test agents' ability to reproduce real-world scientific papers at a large scale. We welcome collaborators interested in working on this. Building tools to verify reproducibility can be extremely impactful. Researchers could verify that their own repositories are reproducible. Those building on past work could quickly reproduce it. And we could conduct large-scale meta-analyses of how reproducible papers are across fields, how these trends are shifting over time etc. Note that despite our manual validation, CORE-Bench still has some shortcomings compared to real-world reproduction. For example, we filtered repositories down to those that took less than 45 minutes to run. CORE-Bench also asks for just some specific results of a paper to be reproduced, rather than the entire paper, for ease of grading. Both of these might lead to real-world reproduction attempts being more difficult than the benchmark. We'll update the HAL website with the manually validated CORE-Bench results and publish details on the specific bugs and fixes. Thanks again to Nicholas Carlini for the thorough investigation of the benchmark's results, and to the HAL team (@PKirgis, @nityndg, @khl53182440, @random_walker) for working through these changes to validate and update the benchmark results.
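
To make the deterministic-result grading failure from section 2 concrete, here is a minimal Python sketch. It is not the CORE-Bench grader: the function names, the Student-t form of the prediction interval, and the tolerance values are all assumptions chosen for illustration. It shows how an interval built from three identical runs collapses to a single point, so a correct answer that differs only by floating point rounding is rejected, and how a small tolerance would avoid that.

```python
import math
import statistics

# Two-sided 95% Student-t critical value for 2 degrees of freedom (three runs).
T_CRIT_DF2 = 4.302652729911275


def prediction_interval(runs):
    """95% prediction interval for one new result, from three reference runs."""
    if len(runs) != 3:
        raise ValueError("this sketch assumes exactly three reference runs")
    n = len(runs)
    mean = statistics.mean(runs)
    spread = statistics.stdev(runs)  # 0.0 when all runs are identical
    half_width = T_CRIT_DF2 * spread * math.sqrt(1 + 1 / n)
    return mean - half_width, mean + half_width


def grade_strict(reported, runs):
    """Pass only if the reported value lies inside the interval."""
    low, high = prediction_interval(runs)
    return low <= reported <= high


def grade_tolerant(reported, runs, rel_tol=1e-6, abs_tol=1e-9):
    """Hypothetical fix: also accept values within rounding distance of the bounds."""
    low, high = prediction_interval(runs)
    near_bound = (
        math.isclose(reported, low, rel_tol=rel_tol, abs_tol=abs_tol)
        or math.isclose(reported, high, rel_tol=rel_tol, abs_tol=abs_tol)
    )
    return low <= reported <= high or near_bound


deterministic_runs = [0.3, 0.3, 0.3]   # fully deterministic task
reported = 0.1 + 0.2                   # same quantity, different rounding

print(grade_strict(reported, deterministic_runs))    # rejected by the point interval
print(grade_tolerant(reported, deterministic_runs))  # accepted with a tolerance
```

The tolerance here is deliberately tiny, so it only rescues rounding-level differences and leaves the interval unchanged for tasks whose reference runs actually vary.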

@mhmazur We at HAL use harness to mean the eval framework (https://t.co/LvosGq2Dlu), consistent with Eleuther's LM eval harness (https://t.co/tBxQdjE0g0).

📢📢 I'm looking for a postdoctoral fellow and so are many of my amazing faculty colleagues @PrincetonCITP. The center's mission is to understand and improve the relationship between tech and society. Apply soon for full consideration. Details: https://t.co/r5iNgntEhe The center is also looking for visiting professors, visiting professionals, emerging scholars, and graduate students (who are recruited through their respective disciplinary departments such as computer science or sociology). Happy to answer questions.

POV: You're building an agent and it keeps giving weird answers because your PDF parsing is broken 🫠 This is a great walkthrough by @mesudarshan showing exactly how to use LlamaParse to fix this—from basic setup through advanced configs. The video walks through: · Why most PDF parsers fail on complex layouts (tables, charts, multi-column text) · Using the LlamaCloud playground to experiment · Real demo: parsing "Attention Is All You Need" paper with different settings · Cost-effective vs agentic vs agentic plus modes—when to use each · Preset configs for invoices, scientific papers, forms (they tune the parsing prompts for you) · Advanced options: OCR, language selection, choosing your LLM (Sonnet, GPT-4, etc.) · Saving custom configs so you don't have to re-tune for similar docs https://t.co/u2u69LUTlw

Automate ETL over Financial Data 📊 Most real-world financials are not “database-shaped”, and it takes a ton of human effort to manipulate/copy an Excel sheet into structured formats for analysis. We recently launched LlamaSheets - a specialized AI agent that automatically structures your Excel spreadsheet into a 2D format for analysis. There are so many use cases for Excel, and accounting is a huge subcategory here. Check it out: https://t.co/ySgZGp26Ty

Build scripts that automate spreadsheet analysis using coding agents and LlamaSheets to extract clean data from messy Excel files. 🤖 Set up coding agents like @claudeai and @cursor_ai to work with LlamaSheets-extracted parquet files and rich cell metadata 📊 Use formatting cues like bold headers and background colors to automatically parse complex spreadsheet structures ⚙️ Create end-to-end automation pipelines that extract, validate, analyze, and generate reports from weekly spreadsheets 🔍 Leverage cell-level metadata to understand data types, merged cells, and visual formatting that conveys meaning The video below is an example of metadata analysis of spreadsheets via LlamaSheets, with Claude creating a script to parse budget spreadsheets by reading formatting patterns and generating structured datasets automatically. Complete setup guide with sample data and workflows: https://t.co/UCspmlVwO2

Claude Code over Excel++ 🤖📊 Claude already 'works' over Excel, but in a naive manner - it writes raw python/openpyxl to analyze an Excel sheet cell-by-cell and generally lacks a semantic understanding of the content. Basically the coding abstractions used are too low-level to have the coding agent accurately do more sophisticated analysis. Our new LlamaSheets API lets you automatically segment and structure complex Excel sheets into well-formatted 2D tables. This both gives Claude Code immediate semantic awareness of the sheet, and allows it to run Pandas/SQL over well-structured dataframes. We've written a guide showing you specifically how to use LlamaSheets with coding agents! Guide: https://t.co/Hxng8t53Bo Sign up to LlamaCloud: https://t.co/XYZmx5TFz8

Calling all community members: Join us this Thursday for office hours in our Discord server, all about LlamaAgents and LlamaSheets. This is a chance to ask anything on your mind about two of our latest releases, and learn about what's coming up next. Drop in anytime from 11AM to 12PM, @tuanacelik, @LoganMarkewich and @itsclelia will all be there.

Deploy production-ready agent workflows with just one click from LlamaCloud. Here's us deploying the SEC filing extract and review agent! Our new Click-to-Deploy feature lets you build and deploy complete document processing pipelines without touching the command line: 🚀 Choose from pre-built starter templates like SEC financial analysis and invoice-contract matching workflows ⚡ Configure secrets and deploy in under 3 minutes with automatic building and hosting 🔧 Full customization through GitHub - fork templates and modify workflows, UI, and configuration 📊 Built-in web interfaces for document upload, data extraction review, and result validation Each template covers real-world use cases combining LlamaCloud's Parse, Extract, and Classify services into complete multi-step pipelines. Perfect for getting production workflows running quickly, then customizing as needed. Try Click-to-Deploy in beta: https://t.co/y1mNIk58xn

OCR benchmarks matter, so in this blog @jerryjliu0 analyzes OlmOCR-Bench, one of the most influential document OCR benchmarks. TLDR: it’s an important step in the right direction, but doesn’t quite cover real-world document parsing needs. 📊 OlmOCR-Bench covers 1400+ PDFs with binary pass-fail tests, but focuses heavily on academic papers (56%) while missing invoices, forms, and financial statements 🔍 The benchmark's unit tests are too coarse for complex tables and reading order, missing merged cells, chart understanding, and global document structure ⚡ Exact string matching in tests creates brittleness where small formatting differences cause failures, even when the extraction is semantically correct 🏗️ Model bias exists since the benchmark uses Sonnet and Gemini to generate test cases, giving advantages to models trained on similar outputs Our preliminary tests show that LlamaParse shines at deep visual reasoning over figures, diagrams, and complex business documents. Read Jerry's analysis of OCR benchmarking challenges and what next-generation document parsing evaluation should look like: https://t.co/UI35k5M2Kd
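
The brittleness of exact string matching mentioned above can be shown with a small Python sketch. This is not OlmOCR-Bench's test code: the function names and the normalization steps (Unicode NFKC, case folding, whitespace collapsing) are assumptions picked to illustrate the idea, and a real benchmark would need to decide which differences are safe to ignore.

```python
import unicodedata


def exact_match(expected: str, extracted: str) -> bool:
    """Pass only if the expected text appears character-for-character."""
    return expected in extracted


def normalize(text: str) -> str:
    """Fold Unicode compatibility forms, case, and runs of whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return " ".join(text.casefold().split())


def normalized_match(expected: str, extracted: str) -> bool:
    """Pass if the expected text appears once formatting noise is removed."""
    return normalize(expected) in normalize(extracted)


expected = "Total revenue: $1,200"
# Same content, but with different casing, a doubled space,
# a non-breaking space, and a trailing newline.
extracted = "TOTAL  REVENUE:\u00a0$1,200\n"

print(exact_match(expected, extracted))       # fails on formatting alone
print(normalized_match(expected, extracted))  # passes after normalization
```

Normalization like this trades strictness for robustness: it forgives spacing and casing, but it would also hide errors where those details matter, such as case-sensitive identifiers.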

Looking for part-time dev rel for https://t.co/I0kEbEpSX3. Who should I be talking to? We want to do: - BrainGrid video walkthroughs and tutorials (1 a week) - Engaging with our Slack community - Blog content (tutorials, how-tos, opinion pieces) to educate and equip AI coders (1 or 2 a week) - Social content from the videos and blogs for social media (Reddit, X, and LI)

A critical vulnerability in React Server Components (CVE-2025-55182) affects React 19 and frameworks, including Next.js (CVE-2025-66478). All users should upgrade to the latest patched version in their release line. https://t.co/azJJgxS67J

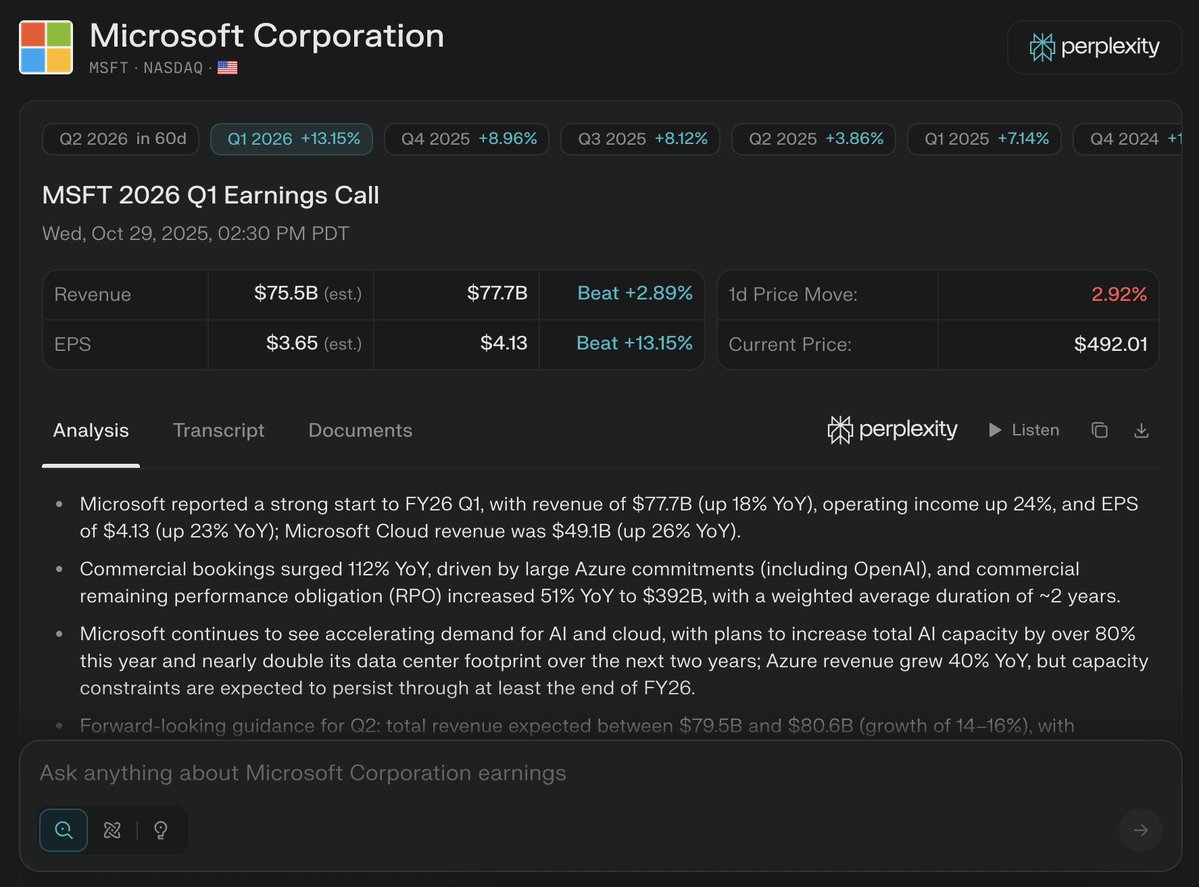

Day 8️⃣/10 of @PPLXfinance launchvember: Brand-new data display on quarterly earnings pages, including expanded metrics! https://t.co/iIOvhFa7D3