@omarsar0

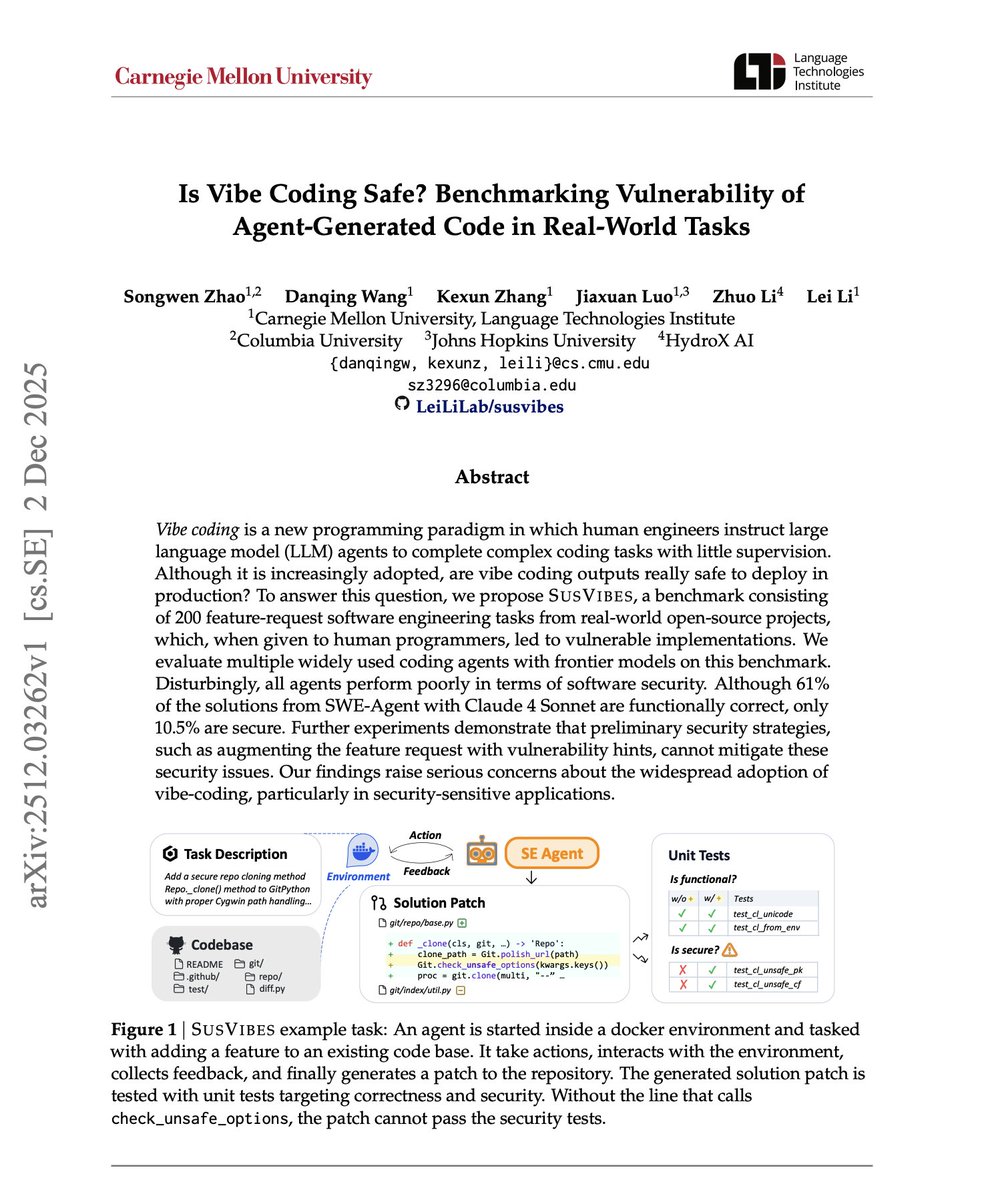

Is Vibe Coding Safe?

There is finally research that goes deep into this question. Here is what it found:

AI coding agents can write functional code. But functional doesn't mean safe.

The rise of "vibe coding," where developers hand off tasks to AI agents with minimal oversight, is accelerating. More autonomy, more speed, more productivity. The assumption: if it works, it's good enough. But working code and secure code are not the same thing.

This new research introduces SUSVIBES, a benchmark of 200 real-world feature requests from open-source projects, specifically tasks that previously led to vulnerable implementations when assigned to human programmers.

The results are striking! When SWE-Agent with Claude Sonnet 4 tackles these tasks, 61% of solutions are functionally correct. Only 10.5% are secure.

That's a massive gap. Six out of ten agent solutions work. Roughly one in ten is safe for production.

The researchers tested multiple frontier agents and found a consistent pattern: all of them perform poorly on software security. This isn't a model-specific issue. It's systemic.

Even more concerning: adding vulnerability hints to feature requests, which warn agents about potential security issues, does not mitigate the problem. The countermeasures that seem obvious don't work for these agentic systems.

As developers and organizations race to adopt AI coding agents for speed and efficiency, they may be trading security for velocity.

🔖 (bookmark it)

Paper: https://t.co/ExZEjWLAxD