@Yesterday_work_

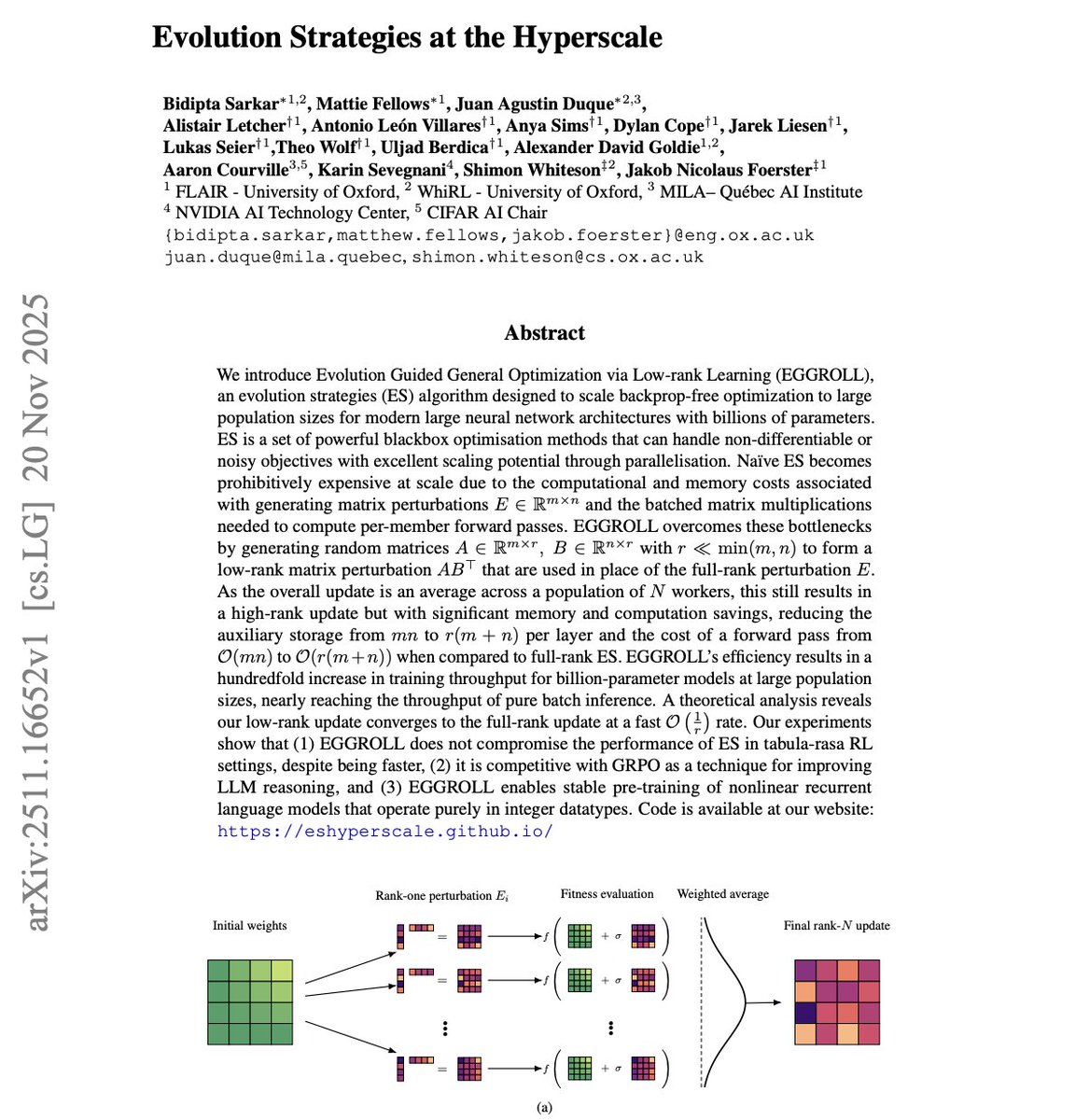

I'm reading NVIDIA's new paper and its wild. Everyone keeps talking about scaling transformers with bigger clusters and smarter optimizers… meanwhile NVIDIA and Oxford just showed you can train billion-parameter models using evolution strategies a method most people wrote off as ancient. The trick is a new system called EGGROLL, and it flips the entire cost model of ES. Normally, ES dies at scale because you have to generate full-rank perturbation matrices for every population member. For billion-parameter models, that means insane memory movement and ridiculous compute. These guys solved it by generating low-rank perturbations using two skinny matrices A and B and letting ABᵀ act as the update. The population average then behaves like a full-rank update without paying the full-rank price. The result? They run evolution strategies with population sizes in the hundreds of thousands a number earlier work couldn’t touch because everything melted under memory pressure. Now, throughput is basically as fast as batched inference. That’s unheard of for any gradient-free method. The math checks out too. The low-rank approximation converges to the true ES gradient at a 1/r rate, so pushing the rank recreates full ES behavior without the computational explosion. But the experiments are where it gets crazy. → They pretrain recurrent LMs from scratch using only integer datatypes. No gradients. No backprop. Fully stable even at hyperscale. → They match GRPO-tier methods on LLM reasoning benchmarks. That means ES can compete with modern RL-for-reasoning approaches on real tasks. → ES suddenly becomes viable for massive, discrete, hybrid, and non-differentiable systems the exact places where backprop is painful or impossible. This paper quietly rewrites a boundary: we didn’t struggle to scale ES because the algorithm was bad we struggled because we were doing it in the most expensive possible way. NVIDIA and Oxford removed the bottleneck. And now evolution strategies aren’t an old idea… they’re a frontier-scale training method.