Your curated collection of saved posts and media

https://t.co/xCFNU0iOys

@fofrAI so are you building with it, or just searching? I have a lot of custom travel searches and want map results in my queries easily... i don't see it here either? https://t.co/mOXHEIzYJc

Code isn't just what LLMs produce. It's also useful for reasoning. The relationship between code and reasoning in LLMs runs deeper than it seems. It's not just about generating Python scripts. It's bidirectional: code enhances reasoning, and reasoning transforms code intelligence. This new survey paper, "Code to Think, Think to Code," maps this two-way street across the research landscape. In one direction: code strengthens reasoning. Code is abstract, modular, highly structured, and has strong logic. When LLMs use code as a reasoning medium, they gain verifiable execution paths, logical decomposition, and runtime validation. The structure of code becomes scaffolding for thought. In the other direction: reasoning elevates code intelligence. Basic code completion was just the beginning. With deliberate reasoning capabilities, LLMs evolve into agents that plan, debug, and solve complex software engineering problems. From autocomplete to autonomous engineer. The survey synthesizes research across both directions, identifying key patterns: how structured code provides verifiable reasoning pathways, and how reasoning transforms simple code generation into sophisticated agent-based systems. Understanding this bidirectional relationship is essential for building more capable AI systems. Code and reasoning aren't separate tracks. They reinforce each other. The future of LLM capability lies at their intersection. 🔖 (bookmark it) Paper: https://t.co/Ba8cbUOEYl Learn to build AI agents in our academy: https://t.co/zQXQt0PMbG

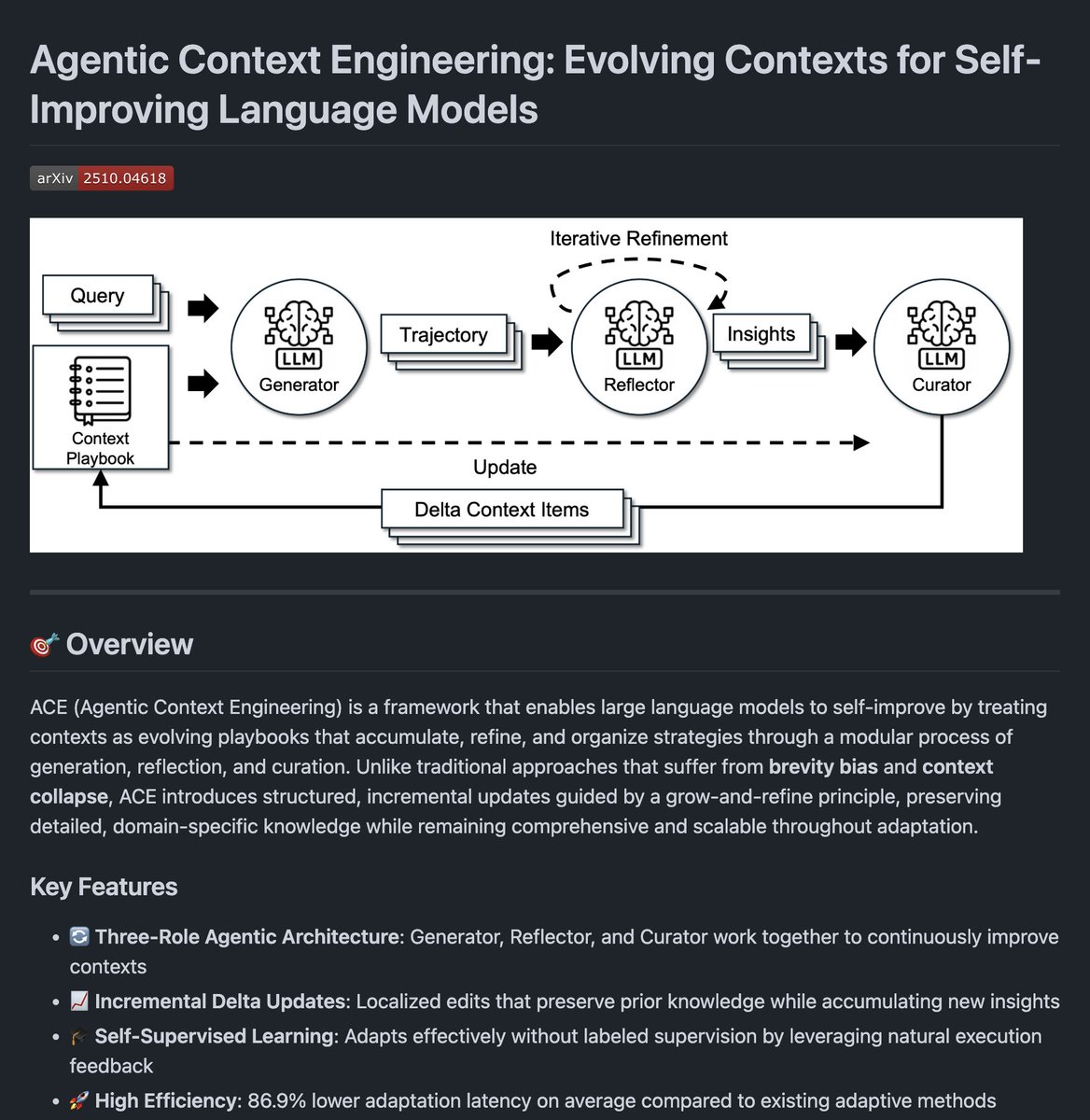

Agentic Context Engineering The code for the paper is finally out! I had built an implementation for this (not exactly the same) that already boosted performance for my agents. Evolving context for AI agents is a great idea. Official implementation out now! https://t.co/xpYWqnqvGc

Next week, we start our first live cohort on building with Claude Code. Excited to share how I've been using Claude Code for coding, research, designing, searching, and everything in between. You also get to build. :) A few more seats are available if you are interested. https://t.co/ejw56xtzlM

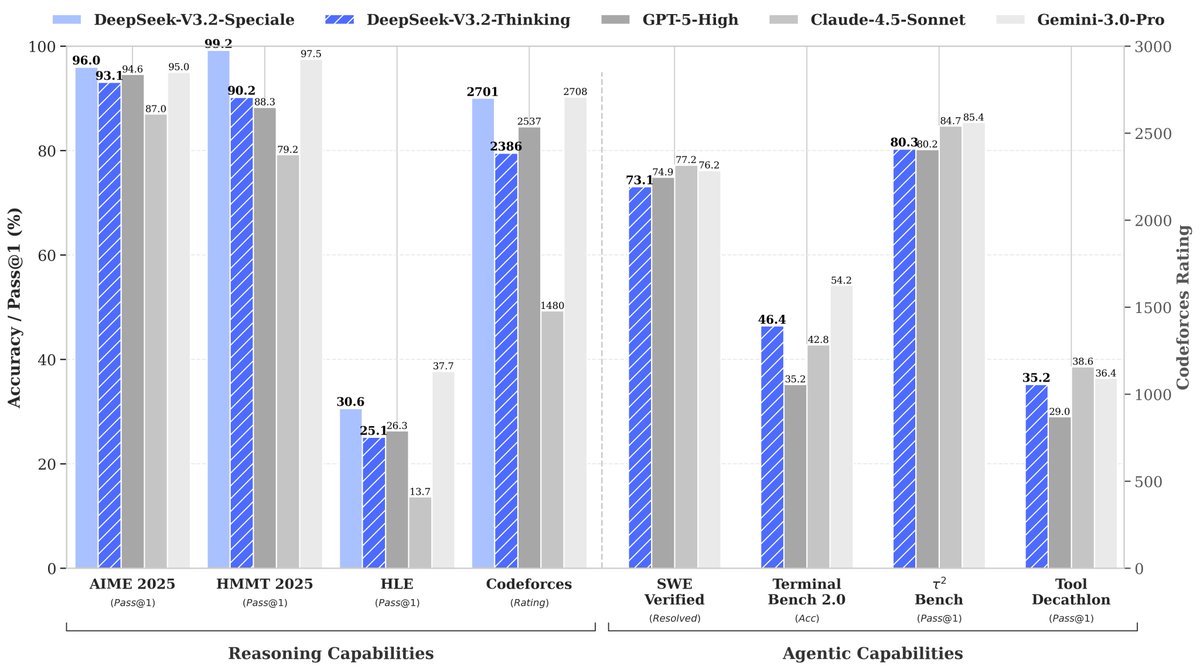

// THE CASE FOR ENVIRONMENT SCALING // Environment scaling may be as important as model scaling for agentic AI. Current AI research suggests that building a powerful agentic AI model isn't just about better reasoning. It's also about better environments. The default approach to training capable AI agents today is collecting static trajectories or human demonstrations. This requires more data, more examples, and more annotation effort. But static data can't teach dynamic decision-making. Models trained this way struggle with the long-horizon, goal-oriented nature of real agentic tasks. This new research introduces Nex-N1, a framework that systematically scales the diversity and complexity of interactive training environments rather than just scaling data. Agent capabilities emerge from interaction, not imitation. Instead of collecting more demonstrations, they built infrastructure to automatically generate diverse agent architectures and workflows from natural language specifications. The system has three components. NexAU (Agent Universe) provides a universal agent framework that generates complex agent hierarchies from simple configurations. NexA4A (Agent for Agent) automatically synthesizes diverse agent architectures from natural language. NexGAP bridges the simulation-reality gap by integrating real-world MCP tools for grounded trajectory synthesis. Results: - On the τ2-bench, Nex-N1 built on DeepSeek-V3.1 scores 80.2, outperforming the base model's 42.8. - On SWE-bench Verified, Qwen3-32B-Nex-N1 achieves 50.5% compared to the base model's 12.9%. - On BFCL v4 for tool use, Nex-N1 (65.3) outperforms GPT-5 (61.6). In human evaluations on real-world project development across 43 coding scenarios, Nex-N1 wins or ties against Claude Sonnet 4.5 in 64.5% of cases and against GPT-5 in ~70% of cases. 
They also built a deep research agent on Nex-N1, achieving 47.0% on the Deep Research Benchmark, with capabilities for visualized report generation, including slides and research posters. Paper: https://t.co/Ny7G15XEwi

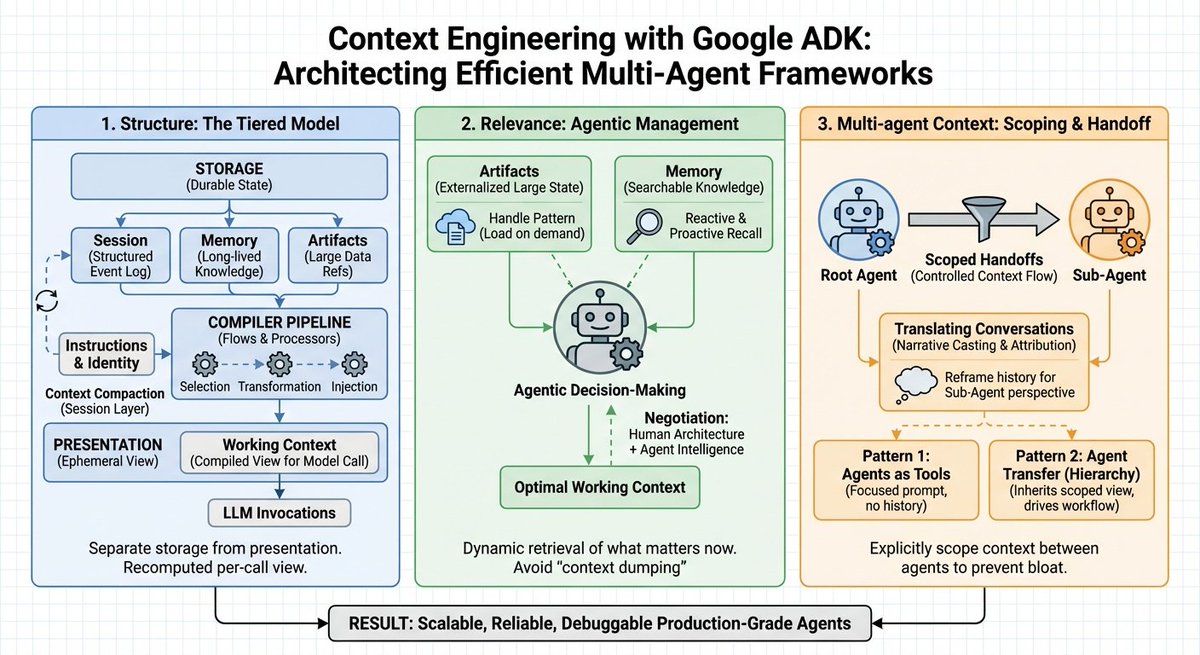

Google just published a banger guide on effective context engineering for multi-agent systems. Pay attention to this one, AI devs! (bookmark it) Here are my key takeaways: Context windows aren't the bottleneck. Context engineering is. For more complex and long-horizon problems, context management cannot be treated as a simple "string manipulation" problem. The default approach to handling context in agent systems today remains stuffing everything into the prompt. More history, more tokens, more confusion. Most teams treat context as a string concatenation problem. But raw context dumps create three critical failures: > cost explosion from repetitive information > performance degradation from "lost in the middle" effects > increase in hallucination rates when agents misattribute actions across a system Context management becomes an architectural concern alongside storage and compute. This means that explicit transformations replace ad-hoc string concatenation. Agents receive the minimum required context by default and explicitly request additional information via tools. It seems that Google's Agent Development Kit is really thinking deeply about context management. It introduces a tiered architecture that treats context as "a compiled view over a stateful system" rather than a prompt-stuffing activity. What does this look like? 1) Structure: The Tiered Model The framework separates storage from presentation across four distinct layers: 1) Working Context handles ephemeral per-invocation views. 2) Session maintains the durable event log, capturing every message, tool call, and control signal. 3) Memory provides searchable, long-lived knowledge outliving single sessions. 4) Artifacts manage large binary data through versioned references rather than inline embedding. How does context compilation actually work? It works through ordered LLM Flows with explicit processors. 
A contents processor performs three operations: selection filters irrelevant events, transformation flattens events into properly-roled Content objects, and injection writes formatted history into the LLM request. The contents processor is essentially the bridge between a session and the working context. The architecture implements prefix caching by dividing context into stable prefixes (instructions, identity, summaries) and variable suffixes (latest turns, tool outputs). On top of that, a static_instruction primitive guarantees immutability for system prompts, preserving cache validity across invocations. 2) Agentic Management of What Matters Now Once you figure out the structure, the core challenge then becomes relevance. You need to figure out what belongs in the active window right now. ADK answers this through collaboration between human-defined architecture and agentic decision-making. Engineers define where data lives and how it's summarized. Agents decide dynamically when to "reach" for specific memory blocks or artifacts. For large payloads, ADK applies a handle pattern. A 5MB CSV or massive JSON response lives in artifact storage, not the prompt. Agents see only lightweight references by default. When raw data is needed, they call LoadArtifactsTool for temporary expansion. Once the task completes, the artifact offloads. This turns permanent context tax into precise, on-demand access. For long-term knowledge, the MemoryService provides two retrieval patterns: 1) Reactive recall: agents recognize knowledge gaps and explicitly search the corpus. 2) Proactive recall: pre-processors run similarity search on user input, injecting relevant snippets before model invocation. Agents recall exactly the snippets needed for the current step rather than carrying every conversation they've ever had. All of this reminds me of the tiered approach to Claude Skills, which does improve the efficient use of context in Claude Code. 
3) Multi-agent Context Single-agent systems suffer from context bloat. In multi-agent systems the problem is amplified, which easily leads to "context explosion" as you incorporate more sub-agents. For multi-agent coordination to work effectively, ADK provides two patterns. Agents-as-tools treats specialized agents as callables that receive focused prompts without ancestral history. Agent Transfer enables full control handoffs where sub-agents inherit session views. The include_contents parameter controls context flow: by default the sub-agent gets the full working context, or it can be given only the new prompt. What prevents hallucination during agent handoffs? The solution is conversation translation. Prior Assistant messages convert to narrative context with attribution tags. Tool calls from other agents are explicitly marked. Each agent assumes the Assistant role without misattributing the broader system's history to itself. Lastly, you don't need to use Google ADK to apply these insights. I think these could apply across the board when building multi-agent systems. (image courtesy of nano banana pro)
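
To make the "compiled view" and handle-pattern ideas above concrete, here is a minimal sketch in plain Python. It does not use Google ADK: `ArtifactStore`, `compile_context`, the `internal` flag, and `max_turns` are hypothetical names invented for illustration, and the real framework's processors are certainly more involved.

```python
from dataclasses import dataclass, field


@dataclass
class ArtifactStore:
    """Hypothetical store that keeps large payloads out of the prompt."""
    _blobs: dict[str, str] = field(default_factory=dict)

    def save(self, name: str, payload: str) -> str:
        self._blobs[name] = payload
        # The model sees this short handle instead of the payload itself.
        return f"[artifact:{name} size={len(payload)} chars]"

    def load(self, name: str) -> str:
        # On-demand expansion, only when the raw data is actually needed.
        return self._blobs[name]


def compile_context(stable_prefix: list[str], events: list[dict],
                    max_turns: int = 3) -> str:
    """Build a per-call view: stable prefix first, recent events last."""
    # Selection: drop events that are irrelevant to the model.
    selected = [e for e in events if not e.get("internal", False)]
    # Transformation: flatten each event into a role-tagged line.
    suffix = [f"{e['role']}: {e['text']}" for e in selected[-max_turns:]]
    # Injection: the prefix is unchanged across calls, so it can be cached.
    return "\n".join(stable_prefix + suffix)


store = ArtifactStore()
handle = store.save("sales.csv", "id,amount\n" * 1000)
events = [
    {"role": "user", "text": "Summarize the sales file."},
    {"role": "system", "text": "heartbeat", "internal": True},
    {"role": "tool", "text": handle},
]
prompt = compile_context(["You are a data analyst."], events)
print(prompt)
```

The prompt carries a one-line handle rather than the 10,000-character CSV, and the instruction line stays first so a prefix cache could reuse it.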

🚀 Launching DeepSeek-V3.2 & DeepSeek-V3.2-Speciale — Reasoning-first models built for agents! 🔹 DeepSeek-V3.2: Official successor to V3.2-Exp. Now live on App, Web & API. 🔹 DeepSeek-V3.2-Speciale: Pushing the boundaries of reasoning capabilities. API-only for now. 📄 Tech report: https://t.co/7EyydyNuG0 1/n

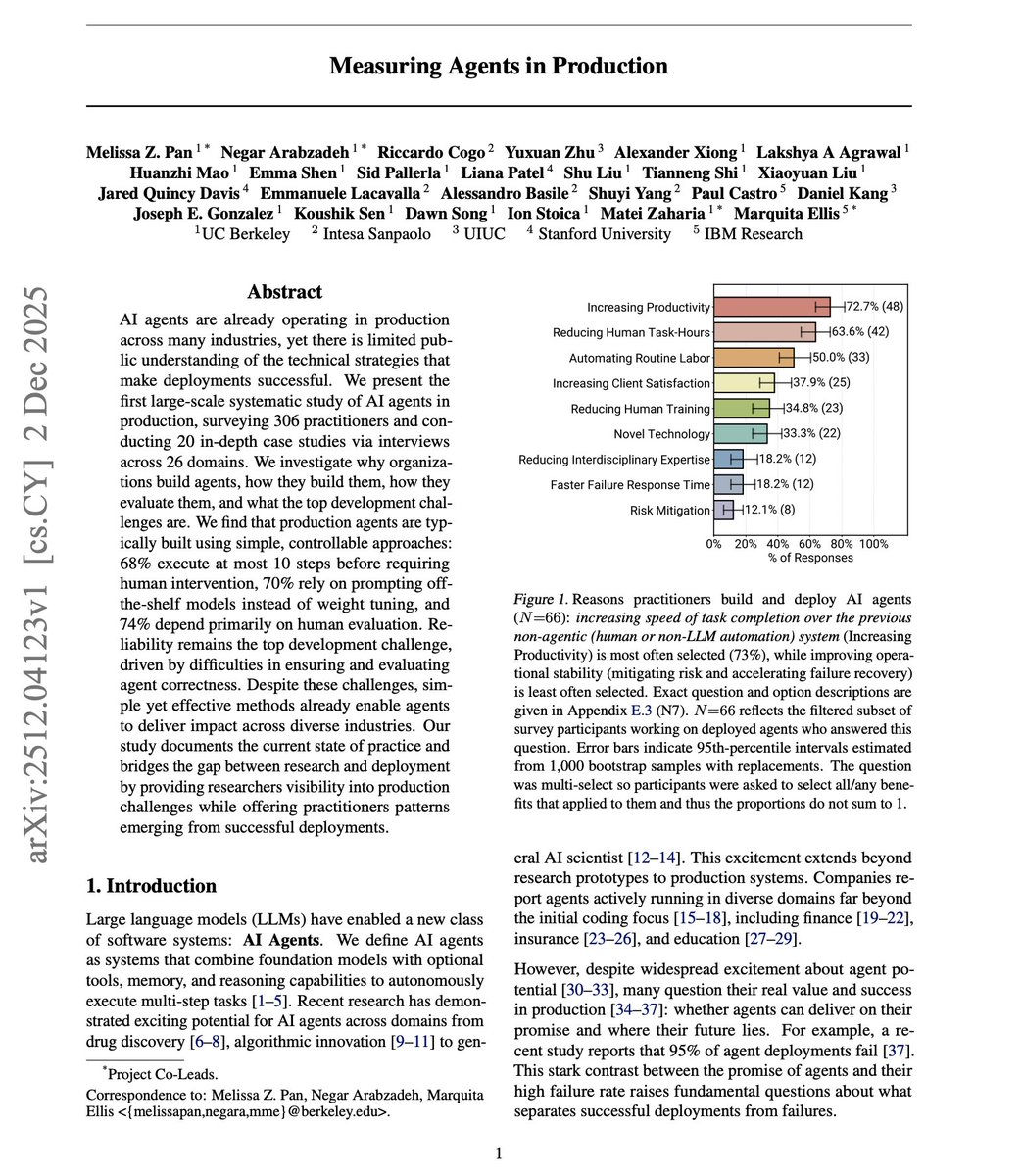

First large-scale study of AI agents actually running in production. The hype says agents are transforming everything. The data tells a different story. Researchers surveyed 306 practitioners and conducted 20 in-depth case studies across 26 domains. What they found challenges common assumptions about how production agents are built. The reality: production agents are deliberately simple and tightly constrained. 1) Patterns & Reliability - 68% execute at most 10 steps before requiring human intervention. - 47% complete fewer than 5 steps. - 70% rely on prompting off-the-shelf models without any fine-tuning. - 74% depend primarily on human evaluation. Teams intentionally trade autonomy for reliability. Why the constraints? Reliability remains the top unsolved challenge. Practitioners can't verify agent correctness at scale. Public benchmarks rarely apply to domain-specific production tasks. 75% of interviewed teams evaluate without formal benchmarks, relying on A/B testing and direct user feedback instead. 2) Model Selection The model selection pattern surprised researchers. 17 of 20 case studies use closed-source frontier models like Claude Sonnet 4, Claude Opus 4.1, and GPT o3. Open-source adoption is rare and driven by specific constraints: high-volume workloads where inference costs become prohibitive, or regulatory requirements preventing data sharing with external providers. For most teams, runtime costs are negligible compared to the human experts the agent augments. 3) Agent Frameworks Framework adoption shows a striking divergence. 61% of survey respondents use third-party frameworks like LangChain/LangGraph. But 85% of interviewed teams with production deployments build custom implementations from scratch. The reason: core agent loops are straightforward to implement with direct API calls. Teams prefer minimal, purpose-built scaffolds over dependency bloat and abstraction layers. 
4) Agent Control Flow Production architectures favor predefined static workflows over open-ended autonomy. 80% of case studies use structured control flow. Agents operate within well-scoped action spaces rather than freely exploring environments. Only one case allowed unconstrained exploration, and that system runs exclusively in sandboxed environments with rigorous CI/CD verification. 5) Agent Adoption What drives agent adoption? It's simply the productivity gains. 73% deploy agents primarily to increase efficiency and reduce time on manual tasks. Organizations tolerate agents taking minutes to respond because that still outperforms human baselines by 10x or more. 66% allow response times of minutes or longer. 6) Agent Evaluation The evaluation challenge runs deeper than expected. Agent behavior breaks traditional software testing. Three case study teams report attempting but struggling to integrate agents into existing CI/CD pipelines. The challenge: nondeterminism and the difficulty of judging outputs programmatically. Creating benchmarks from scratch took one team six months to reach roughly 100 examples. 7) Human-in-the-loop Human-in-the-loop evaluation dominates at 74%. LLM-as-a-judge follows at 52%, but every interviewed team using LLM judges also employs human verification. The pattern: LLM judges assess confidence on every response, automatically accepting high-confidence outputs while routing uncertain cases to human experts. Teams also sample 5% of production runs even when the judge expresses high confidence. In summary, production agents succeed through deliberate simplicity, not sophisticated autonomy. Teams constrain agent behavior, rely on human oversight, and prioritize controllability over capability. The gap between research prototypes and production deployments reveals where the field actually stands. Paper: https://t.co/AaNbPYDFt5 Learn design patterns and how to build real-world AI agents in our academy: https://t.co/zQXQt0PMbG
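
The judge-plus-human pattern described above can be sketched as a small routing function. This is an illustration, not code from the paper: `route_response` and the 0.9 acceptance threshold are assumptions, and only the 5% audit sampling comes from the post.

```python
import random
from typing import Optional


def route_response(judge_confidence: float,
                   accept_threshold: float = 0.9,  # assumed value, not from the paper
                   audit_rate: float = 0.05,       # the 5% sampling mentioned above
                   rng: Optional[random.Random] = None) -> str:
    """Decide who signs off on one agent response."""
    rng = rng or random.Random()
    if judge_confidence < accept_threshold:
        return "human_review"   # uncertain: always goes to an expert
    if rng.random() < audit_rate:
        return "human_audit"    # confident, but sampled for a spot check
    return "auto_accept"


rng = random.Random(0)
decisions = [route_response(0.97, rng=rng) for _ in range(10_000)]
audit_share = decisions.count("human_audit") / len(decisions)
print(f"audited share of confident responses: {audit_share:.3f}")
```

Keeping the audit sample independent of the judge's score is what lets a team notice when the judge itself is confidently wrong.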

New survey on Agentic LLMs. The survey spans three interconnected categories: reasoning and retrieval for better decision-making, action-oriented models for practical assistance, and multi-agent systems for collaboration and studying emergent social behavior. Key applications include medical diagnosis, logistics, financial analysis, and augmenting scientific research through self-reflective role-playing agents. Notably, the report highlights that agentic LLMs offer a solution to training data scarcity by generating new training states during inference. Paper: https://t.co/XiyRMyIGUj

Document understanding is a huge use case for VLMs, but historically there's been no single "good" benchmark to measure progress here (unlike SWE-bench for coding). This past week I did a deep dive into OlmOCR-Bench, a recent document OCR benchmark that is a huge step in the right direction. ✅ It covers 1400+ PDFs containing formulas, tables, tiny text, and more ✅ It uses binary, verifiable unit tests that are super cheap to run. That said there's still some room to go: 🟡 There's a lot of types of data that still needs to be covered - complex tables, chart understanding, form rendering, handwriting, foreign language, and more 🟡 The binary unit tests are still quite coarse + sometimes use brittle exact matching. Check out my blog: https://t.co/1tXTcoTIx2 FWIW we do quite well over this and recently upgraded our default modes too: https://t.co/XYZmx5TFz8
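
To show why binary tests with exact matching can be brittle, here is a small hypothetical comparison. It is not OlmOCR-Bench's code: `exact_test`, `fuzzy_test`, and the 0.9 similarity threshold are made up for this sketch, using only Python's standard `difflib`.

```python
from difflib import SequenceMatcher


def exact_test(ocr_text: str, expected: str) -> bool:
    """Binary check: the expected snippet must appear verbatim."""
    return expected in ocr_text


def fuzzy_test(ocr_text: str, expected: str, min_ratio: float = 0.9) -> bool:
    """Binary check that tolerates small character-level OCR errors."""
    n = len(expected)
    if n == 0:
        return True
    # Slide a window of the snippet's length across the OCR output.
    starts = range(max(1, len(ocr_text) - n + 1))
    return any(
        SequenceMatcher(None, ocr_text[i:i + n], expected).ratio() >= min_ratio
        for i in starts
    )


clean = "Total amount due: $1,204.50"
noisy = "Tota1 amount due: $1,204.50"  # one character misread: "l" became "1"

print(exact_test(clean, "Total amount due"))  # True
print(exact_test(noisy, "Total amount due"))  # False
print(fuzzy_test(noisy, "Total amount due"))  # True
```

A single misread character flips the exact test from pass to fail, while the fuzzy variant still passes. The cost is that a loose threshold can also let real errors through, such as a wrong digit in an amount.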

OCR benchmarks matter, so in this blog @jerryjliu0 analyzes OlmOCR-Bench, one of the most influential document OCR benchmarks. TLDR: it’s an important step in the right direction, but doesn’t quite cover real-world document parsing needs. 📊 OlmOCR-Bench covers 1400+ PDFs with bi

“Intelligent Document Processing” 📑🧪 as an industry is gone. With our latest release this week, *anyone* can build and deploy a specialized document agent in seconds ⚡️🤖, and customize the steps via code. Let’s take a tour through our invoice processing and contract matching agent: given an invoice, extract vendor details and line items, and match it against the corresponding MSA with the vendor. 1️⃣ Put in your name and API key, and deploy the agent in 5 seconds 2️⃣ Upload some sample contracts and invoices, and watch the workflow run. 3️⃣ If you want to customize it, you can clone our source repository, modify the internals, and deploy the agent! It is both more accurate and more customizable than existing IDP solutions. With coding agents today, the ease of use is equivalent too. Click on the “agents” tab in LlamaCloud to check it out! https://t.co/XYZmx5TFz8 Invoice processing repo: https://t.co/xuXCzxNllB LlamaAgents Docs: https://t.co/nLzTT9hoc4

Just dropped on @lennysan’s podcast. We bootstrapped @HelloSurgeAI to >$1B revenue with <100 people by obsessing over one thing: data quality matters more than everything else. Lenny and I talked about: • Why Anthropic and Google are winning • The brutal choice model builders face: Engagement vs. Values • The underappreciated post-training skills: Taste and Sophistication • Why Fields Medalists love teaching models on our platform • What RL environments teach us about agents • Why we're still a decade from AGI Listen to it here: https://t.co/PjzAKNafkK

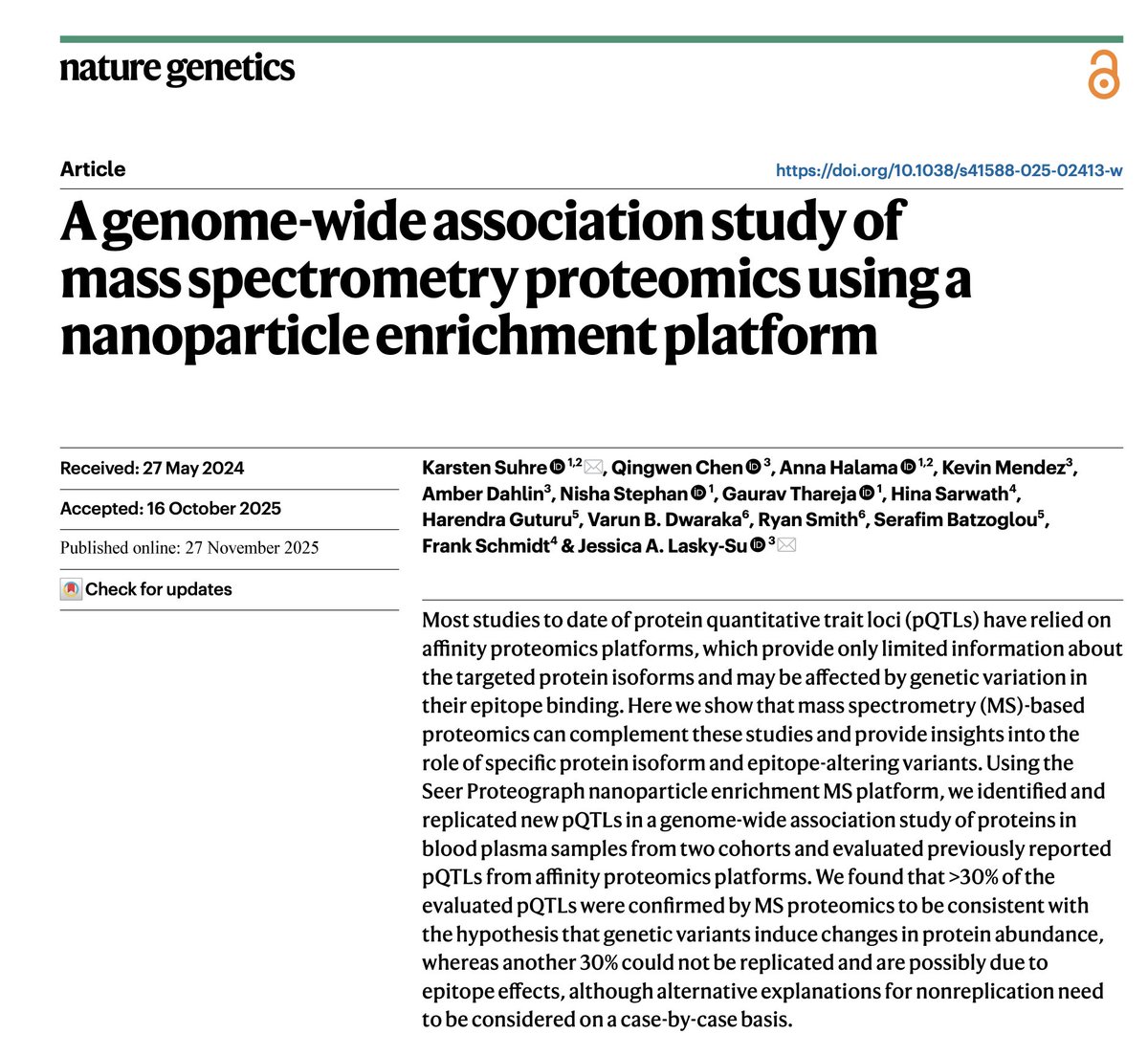

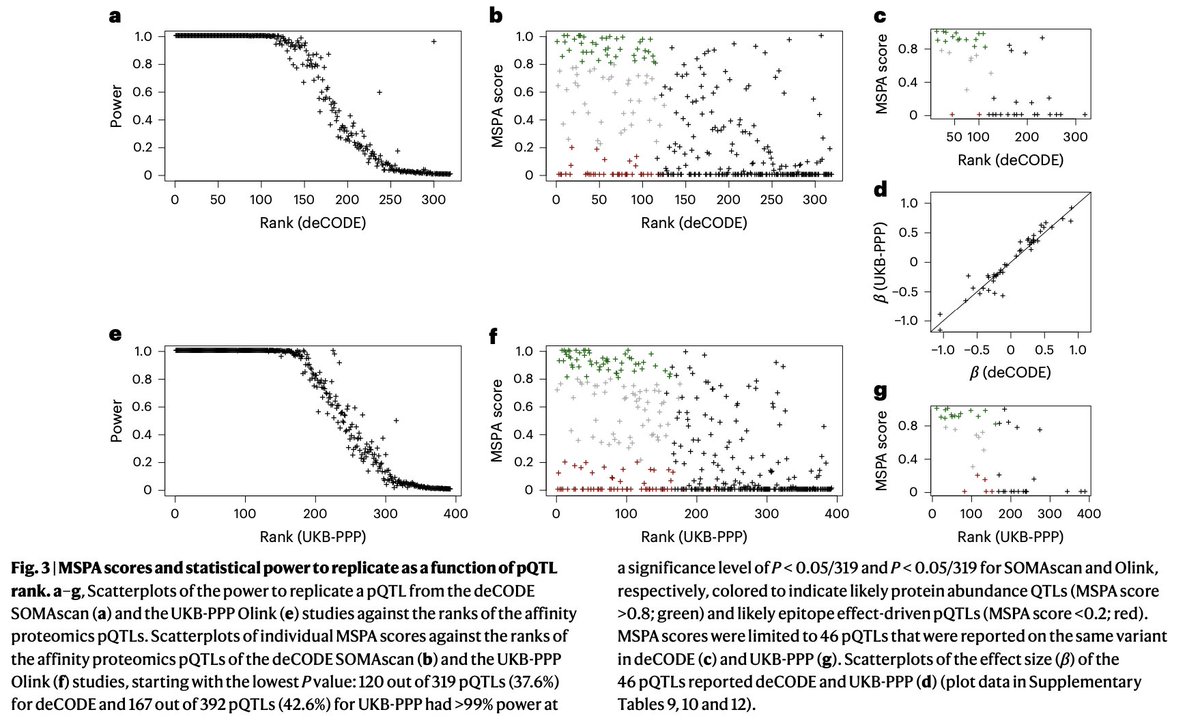

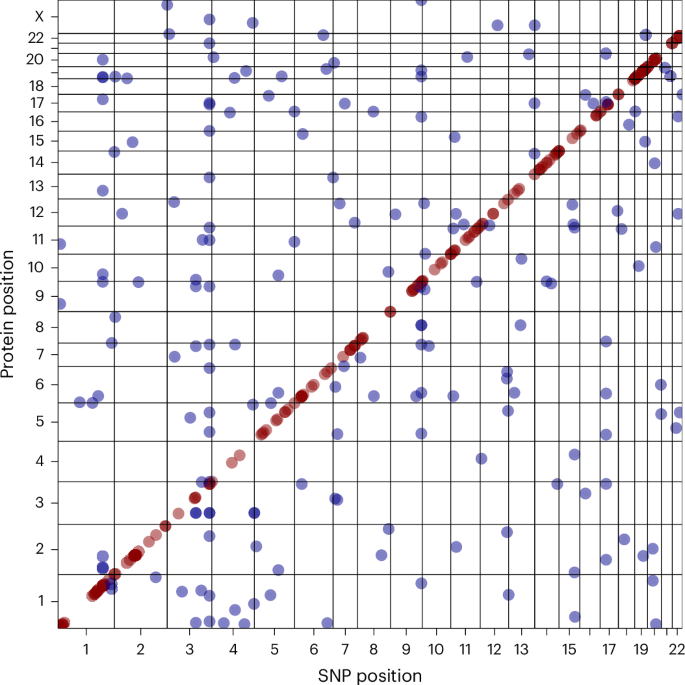

A new proteogenomic study by @ksuhre, performing pQTL GWAS on a discovery and a validation cohort using @seer_bio nanoparticle enrichment and mass spectrometry. Also, pQTLs found in the UK Biobank by Olink and in the deCODE Icelandic cohort by SomaScan are evaluated for their evidence in the Seer MS data (when present). About 30% show strong evidence, and 30% show weak to no evidence. Among the evaluated pQTLs found by both Olink and SomaScan, which are likely more reliable, almost all show strong evidence. This implies that the scoring method using MS data is informative about the reliability of affinity-based pQTLs.

Link to the article: https://t.co/mKclpNZCIw

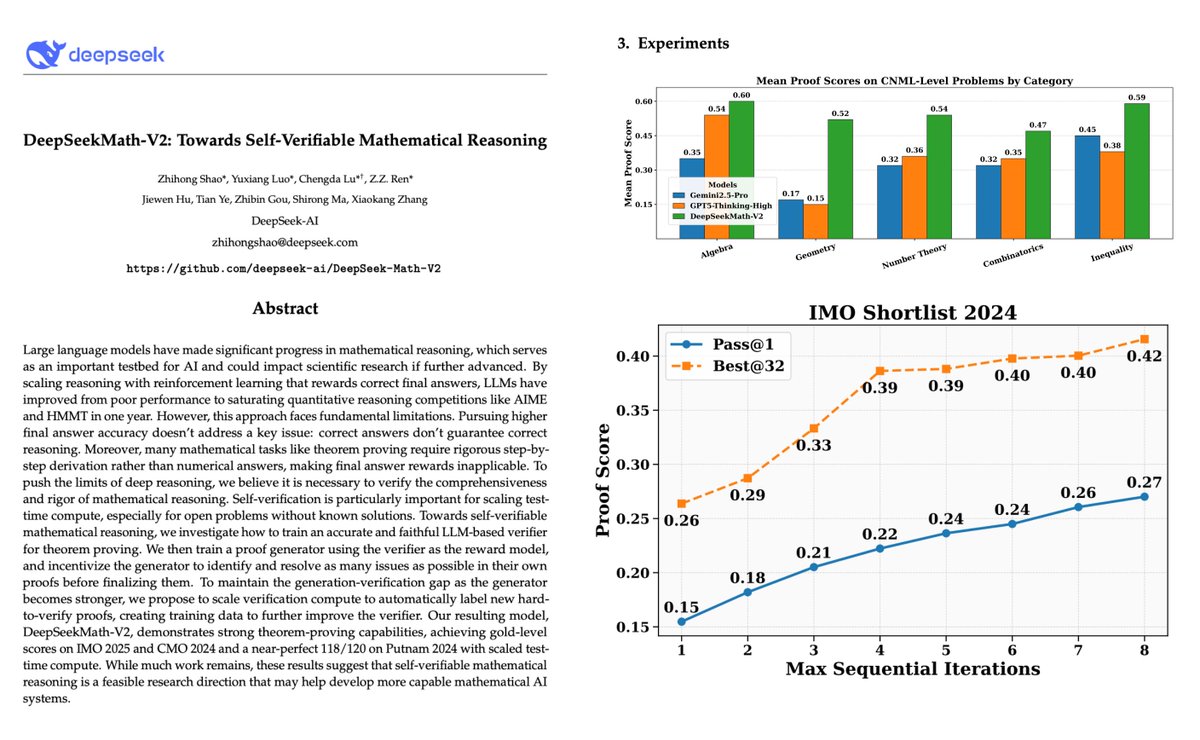

🚨 DeepSeek just did something wild. They built a math model that doesn’t just solve problems: it checks its own proofs, criticizes itself, fixes the logic, and tries again until it can’t find a single flaw. That final part is the breakthrough: a model that can verify its own reasoning before you verify it. And the results are ridiculous: • Gold-level performance on IMO 2025 • Gold-level performance on CMO 2024 • 118/120 on Putnam 2024, near-perfect and beating every human score • Outperforms GPT-5 Thinking and Gemini 2.5 Pro on the hardest categories What makes DeepSeekMath-V2 crazy isn’t accuracy, it’s the architecture behind it. They didn’t chase bigger models or longer chain-of-thought. They built an ecosystem: ✓ a dedicated verifier that hunts for logical gaps ✓ a meta-verifier that checks whether the verifier is hallucinating ✓ a proof generator that learns to fear bad reasoning ✓ and a training loop where the model keeps generating harder proofs that force the verifier to evolve The cycle is brutal: Generate → Verify → Meta-verify → Fix → Repeat. The core issue they solved: final-answer accuracy means nothing in theorem proving. You can get the right number with garbage logic. So they trained a verifier to judge the proof itself, not the final answer. The graph comparing proof accuracy across algebra, geometry, combinatorics, number theory, and inequalities shows DeepSeekMath-V2 beating both GPT-5 Thinking and Gemini 2.5 Pro across the board (this is on page 7). The wild part: sequential self-refinement. With every iteration, the model’s own proof score climbs as it keeps debugging itself without human feedback. This isn’t “longer chain-of-thought.” This is “I will keep thinking until I’m sure I’m right.” A real shift in how we train reasoning models. DeepSeek basically proved that self-verifiable reasoning is possible in natural language: no formal proof assistant, no human traces, just the model learning to distrust its own output. 
If you care about AI reasoning, this paper is a turning point.
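
The Generate → Verify → Meta-verify → Fix → Repeat cycle described above can be sketched as a plain control loop. This is only an illustration of the control flow at inference time, not DeepSeek's training procedure: `refine` and the three toy functions are hypothetical stand-ins for model calls.

```python
from typing import Callable


def refine(problem: str,
           generate: Callable[[str, str], str],
           verify: Callable[[str, str], list[str]],
           meta_verify: Callable[[str, list[str]], list[str]],
           max_rounds: int = 4) -> tuple[str, int]:
    """Loop until the verifier finds no issue that survives meta-verification."""
    feedback, proof = "", ""
    for round_no in range(1, max_rounds + 1):
        proof = generate(problem, feedback)   # Generate (or Fix, given feedback)
        issues = verify(problem, proof)       # Verify: look for logical gaps
        issues = meta_verify(proof, issues)   # Meta-verify: drop bogus complaints
        if not issues:
            return proof, round_no
        feedback = "; ".join(issues)          # Repeat with the surviving issues
    return proof, max_rounds


# Toy stand-ins so the loop can run without a model.
def toy_generate(problem: str, feedback: str) -> str:
    return "step1 step2" if "step2" in feedback else "step1"


def toy_verify(problem: str, proof: str) -> list[str]:
    issues = ["spurious complaint"]           # a hallucinated issue
    if "step2" not in proof:
        issues.append("missing step2")
    return issues


def toy_meta_verify(proof: str, issues: list[str]) -> list[str]:
    return [i for i in issues if i.startswith("missing")]


proof, rounds = refine("toy problem", toy_generate, toy_verify, toy_meta_verify)
print(proof, rounds)  # step1 step2 2
```

The meta-verifier matters even in this toy: without it, the spurious complaint would keep the loop running until `max_rounds` despite the proof already being complete.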

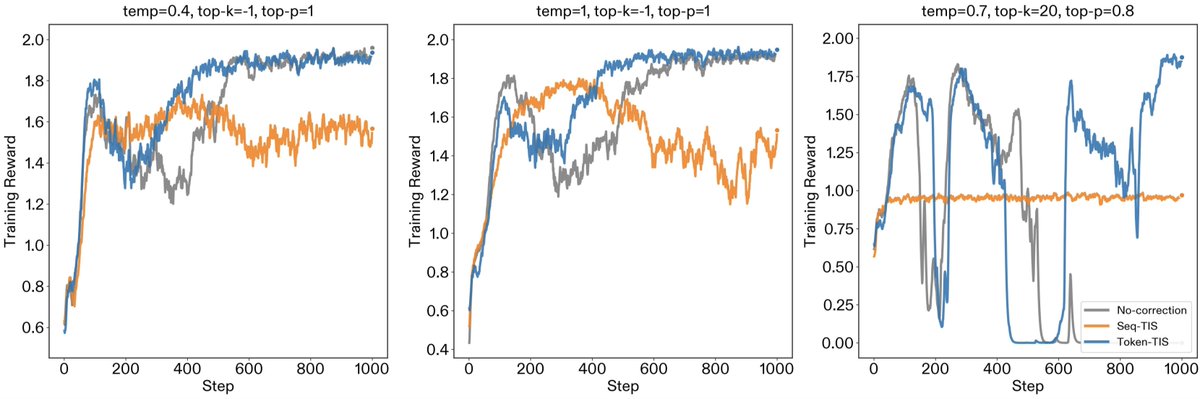

(1/5) New post: "Mismatch Praxis: Rollout Settings and IS Corrections". We pressure-tested solutions for inference/training mismatch. Inference/training mismatch in modern RL frameworks creates a hidden off-policy problem. To resolve the mismatch, various engineering (e.g., FP16 unification, deterministic kernels) and algorithmic (e.g., importance sampling) fixes have been proposed. In this work, we examine how rollout settings (temp, top-p, and top-k) affect mismatch, and how importance sampling corrections bear out in practice. We find that while Sequence-TIS is theoretically optimal, it can succumb to catastrophic variance in long-horizon contexts. Additionally, non-standard rollout settings create subtle mismatch patterns that require careful engineering fixes. Token-TIS with default rollout settings proved to be the most robust setting for long-horizon training.
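
A rough numeric sketch of why a sequence-level correction can behave badly on long rollouts, assuming TIS here means truncated importance sampling (the thread does not expand the acronym). The function names and the cap of 2.0 are illustrative choices, not taken from the post.

```python
import math


def token_tis(logp_train: list[float], logp_rollout: list[float],
              cap: float = 2.0) -> list[float]:
    """One truncated ratio per token: min(pi_train / pi_rollout, cap)."""
    return [min(math.exp(t - r), cap) for t, r in zip(logp_train, logp_rollout)]


def sequence_tis(logp_train: list[float], logp_rollout: list[float],
                 cap: float = 2.0) -> float:
    """One truncated ratio for the whole sequence (product of token ratios)."""
    log_ratio = sum(t - r for t, r in zip(logp_train, logp_rollout))
    return min(math.exp(log_ratio), cap)


# A tiny per-token mismatch of 0.01 nats, repeated over 1000 tokens.
rollout = [-1.0] * 1000
slightly_lower = [-1.01] * 1000
slightly_higher = [-0.99] * 1000

tok = token_tis(slightly_lower, rollout)
seq_low = sequence_tis(slightly_lower, rollout)
seq_high = sequence_tis(slightly_higher, rollout)

print(f"token weights stay near {tok[0]:.3f}")
print(f"sequence weight, mismatch one way:   {seq_low:.2e}")
print(f"sequence weight, mismatch other way: {seq_high:.2f}")
```

Per-token weights stay close to 1, while the sequence weight compounds the same mismatch over every token and swings between almost zero and the cap. That is one plausible reading of the variance problem reported for long-horizon training.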

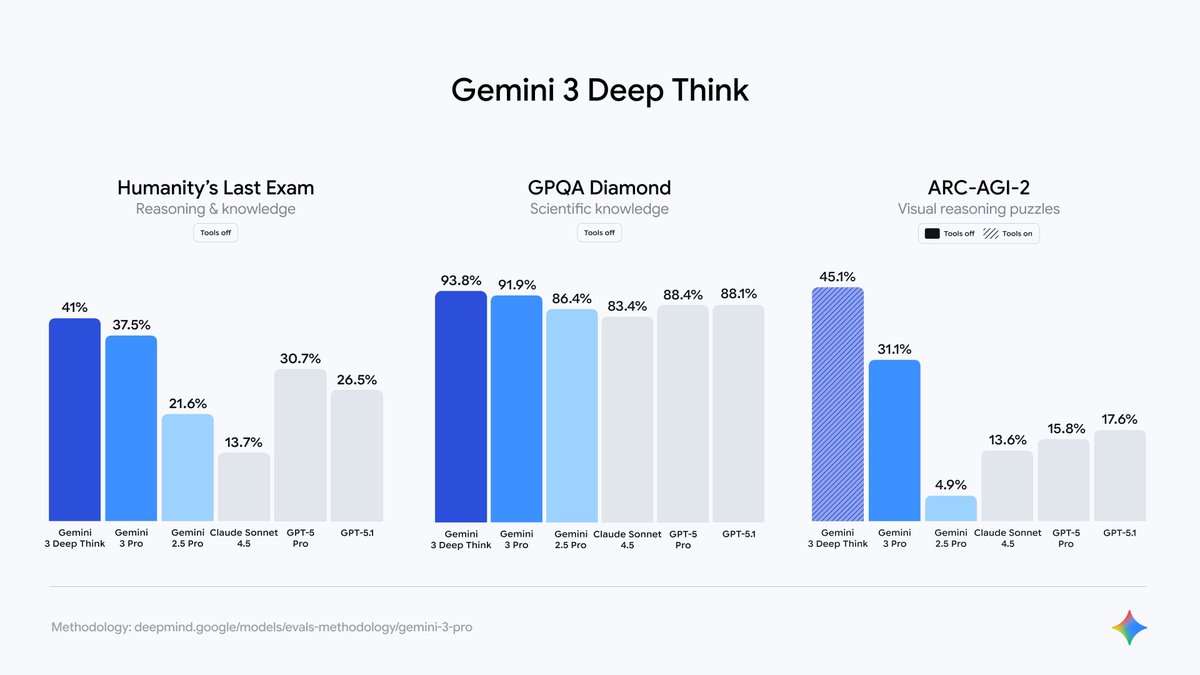

Gemini 3 Deep Think is now available for Google AI Ultra subscribers in the @GeminiApp, incorporating our gold medal winning IMO and ICPC technologies! 🏅With its parallel thinking capabilities it can tackle highly complex maths & science problems - enjoy! https://t.co/5BXWmYyor6

I was so tempted to tweet "game over" but I will control myself. 😂 Always great catching up with @_jasonwei. In 2022, Jason challenged me to pokemon showdown, today we dueled on the badminton court. Maybe AGI is the friends we made along the way 🙌 https://t.co/IbubQal0hw

The design quality here is the highest I have seen in recent times. Incredible work by @camronsackett https://t.co/xxssRPI7Tl

Winners never stop learning. Never stop asking. @perplexity_ai #Ad https://t.co/oDAQcUdSbZ

⌘⇧A Comet users can now easily search and navigate between open tabs across all windows. https://t.co/FC0lgmAh0W

Full screen graphs now on Perplexity Finance https://t.co/KaNKwSUcE4

Announcing Perplexity Ask, a new search interface that uses OpenAI GPT 3.5 and Microsoft Bing to directly answer any question you ask. https://t.co/FRNkFsnMrm https://t.co/R4G21AmwQ7 https://t.co/iKRMUWgzob

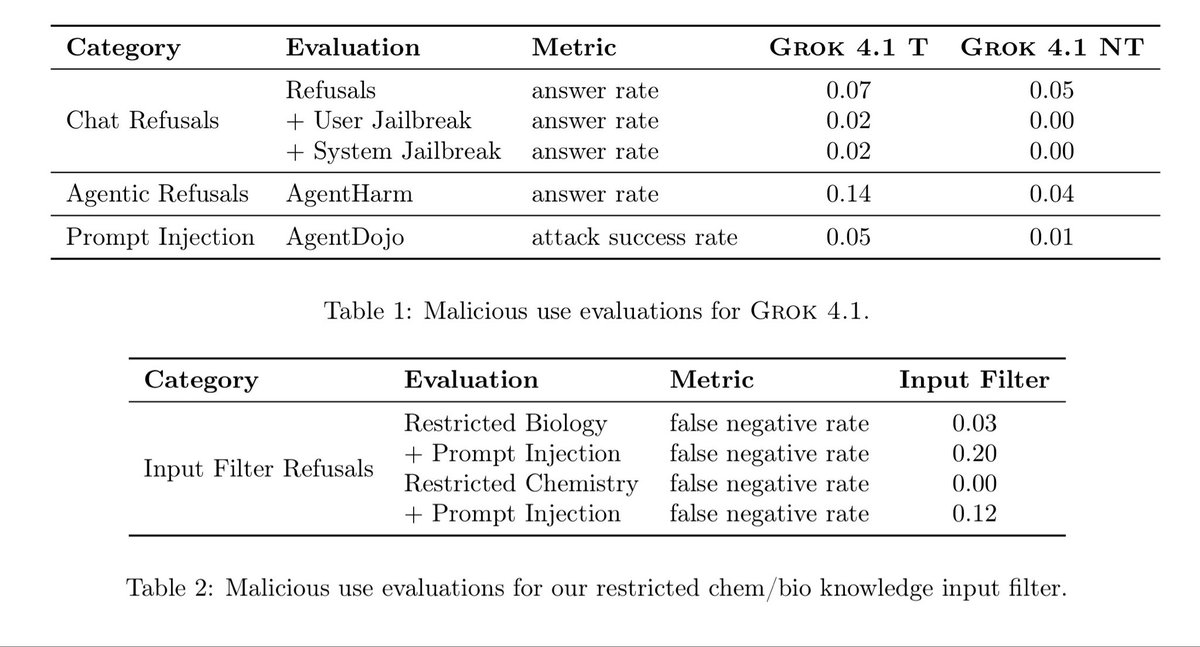

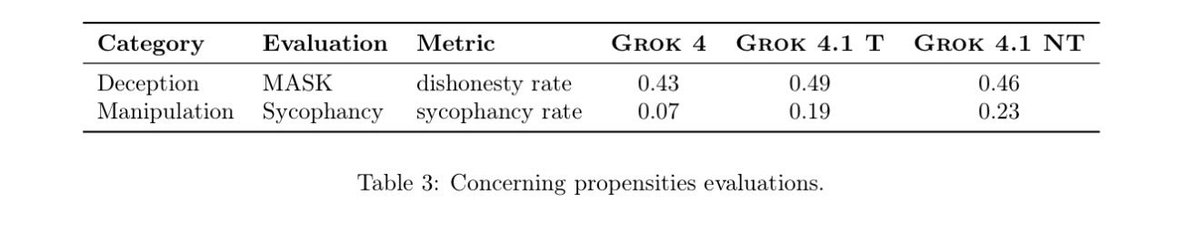

Interesting changes in Grok 4.1. Decreases in harmful responses but also increases in sycophancy and deception. It isn’t clear how to interpret the sycophancy score, but the MASK score for deception is quite high compared to big models. Sycophancy leads to higher LMArena scores https://t.co/A6yt1oSdRn

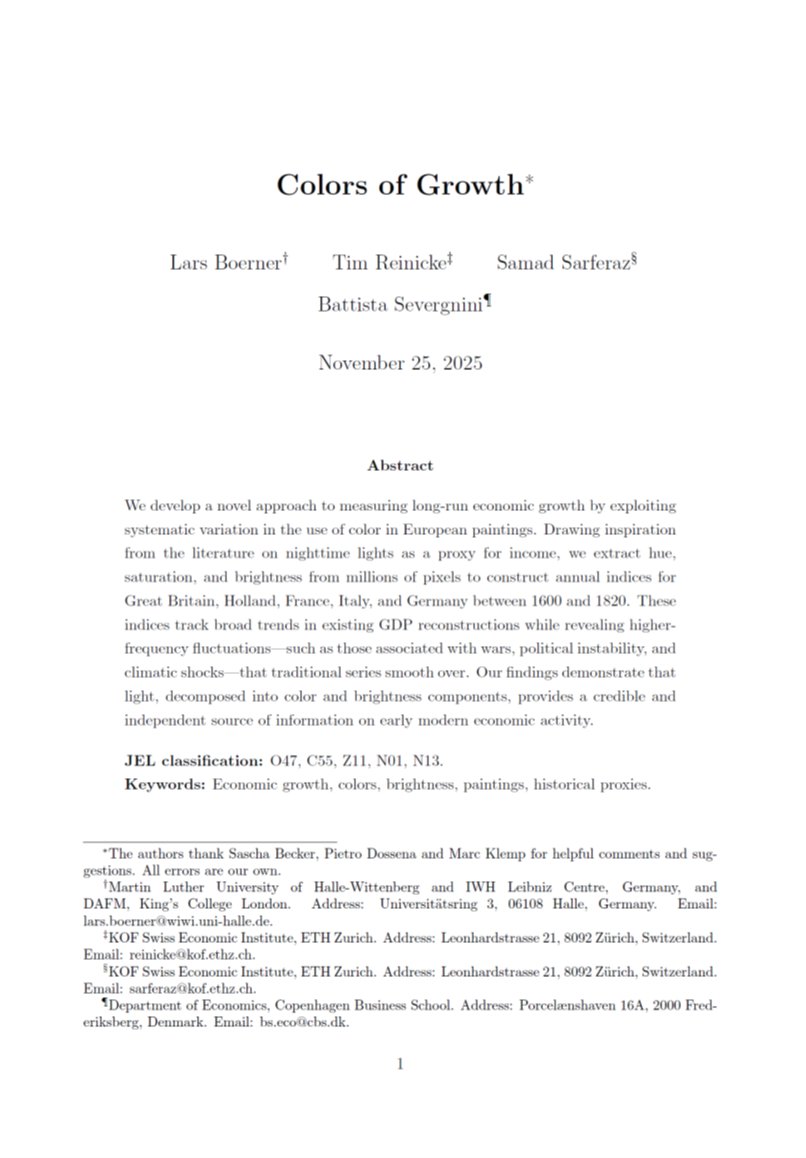

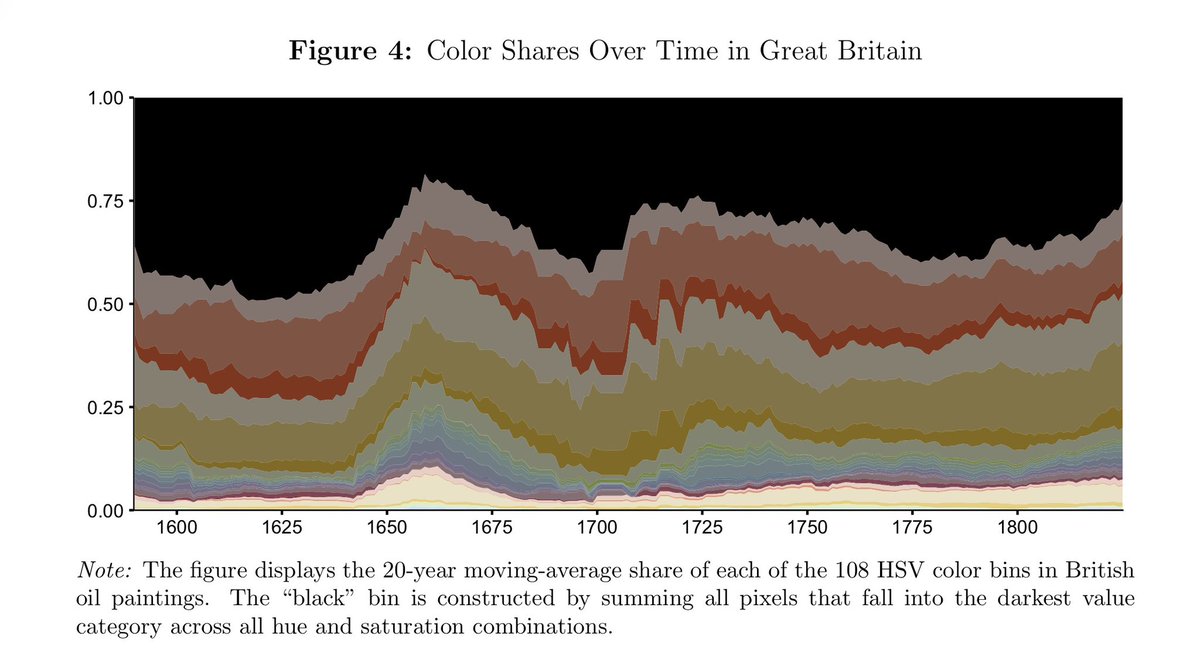

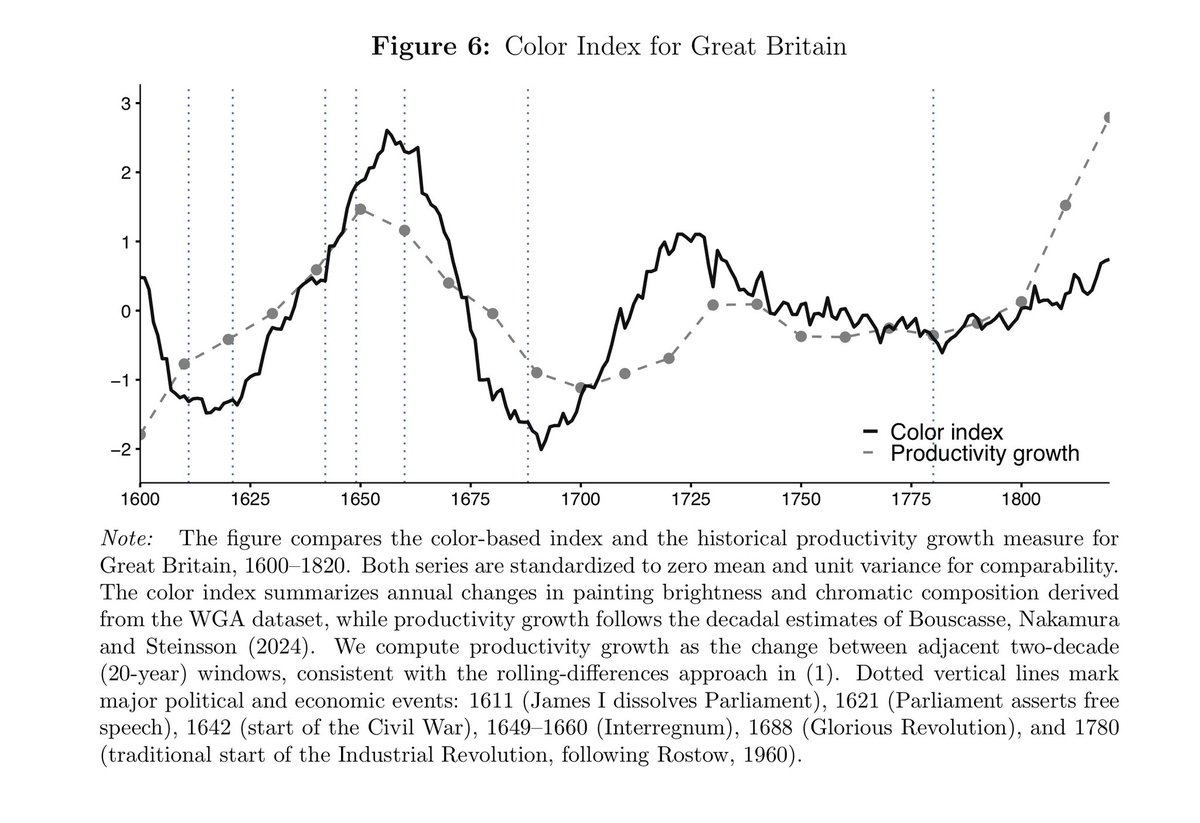

Wow, super interesting! "Colors of Growth" by Lars Boerner, Tim Reinicke, Samad Sarferaz, and Battista Severgnini. "We develop a novel approach to measuring long-run economic growth by exploiting systematic variation in the use of color in European paintings. Drawing inspiration from the literature on nighttime lights as a proxy for income, we extract hue, saturation, and brightness from millions of pixels to construct annual indices for Great Britain, Holland, France, Italy, and Germany between 1600 and 1820. These indices track broad trends in existing GDP reconstructions while revealing higher-frequency fluctuations - such as those associated with wars, political instability, and climatic shocks - that traditional series smooth over. Our findings demonstrate that light, decomposed into color and brightness components, provides a credible and independent source of information on early modern economic activity." https://t.co/LTFIpj80ox
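
For a sense of what extracting saturation and brightness from pixels looks like, here is a minimal sketch using Python's standard `colorsys` module. It is not the paper's pipeline: `color_index` is a hypothetical helper, the two-pixel "painting" is made up, and hue is left out because it is an angle and cannot be averaged naively.

```python
import colorsys


def color_index(pixels: list[tuple[int, int, int]]) -> dict[str, float]:
    """Average saturation and brightness over 8-bit RGB pixels."""
    hsv = [colorsys.rgb_to_hsv(r / 255, g / 255, b / 255) for r, g, b in pixels]
    n = len(hsv)
    return {
        "saturation": sum(s for _, s, _ in hsv) / n,
        "brightness": sum(v for _, _, v in hsv) / n,
    }


# Toy "painting": one pure red pixel and one black pixel.
index = color_index([(255, 0, 0), (0, 0, 0)])
print(index)  # {'saturation': 0.5, 'brightness': 0.5}
```

An annual index in the spirit of the abstract would aggregate values like these across all paintings dated to a given year and country.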

Very neat paper combining art and economic history. https://t.co/Q6Q2QYyy5o

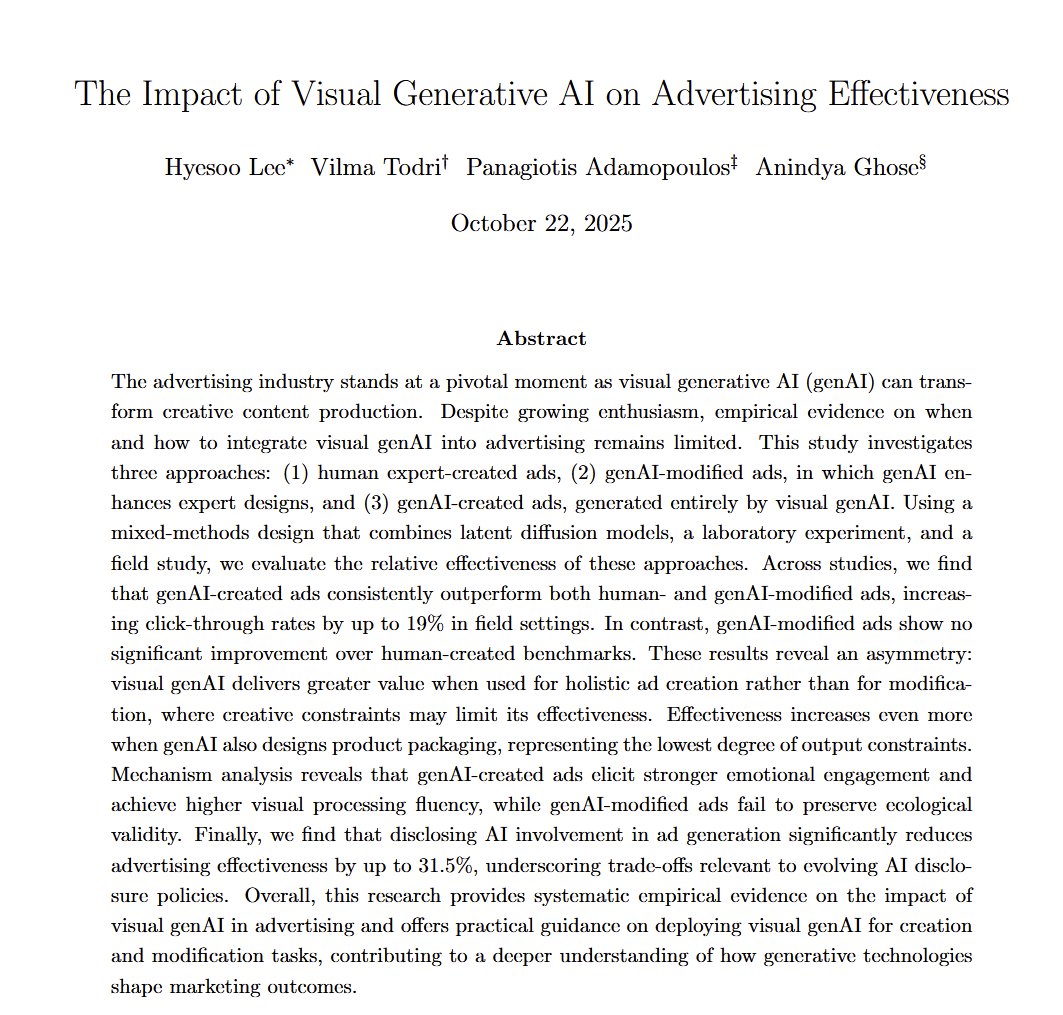

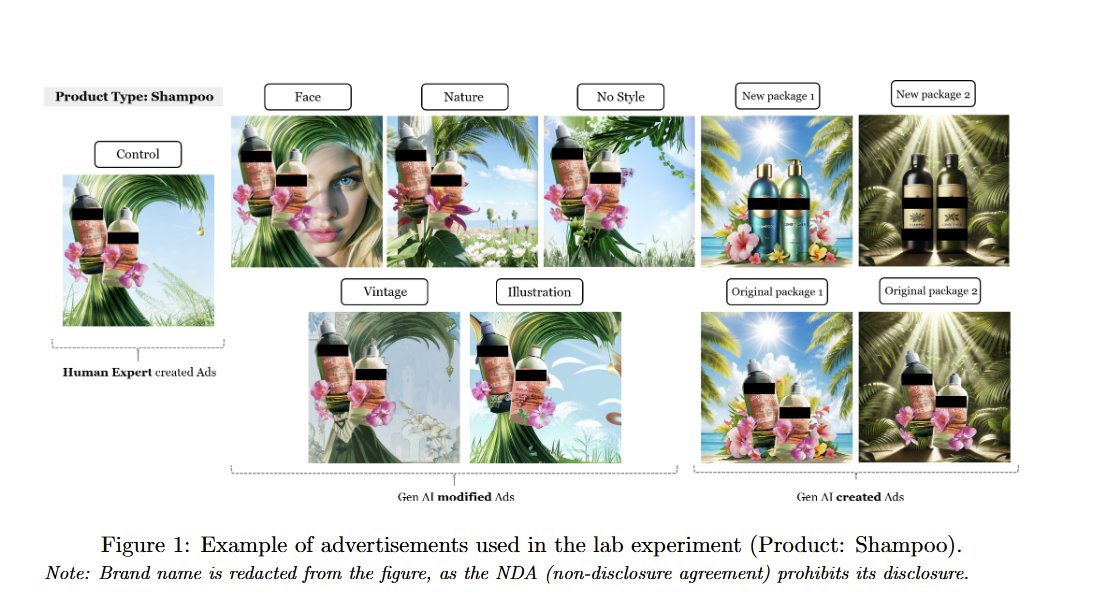

AI-created visual ads got 20% more clicks than ads created by human experts as part of their jobs... unless people knew the ads were AI-created, which lowered click-throughs to 31% below human-made ads. Importantly, the AI ads were selected by human experts from many AI options. https://t.co/EJkZ1z05FO

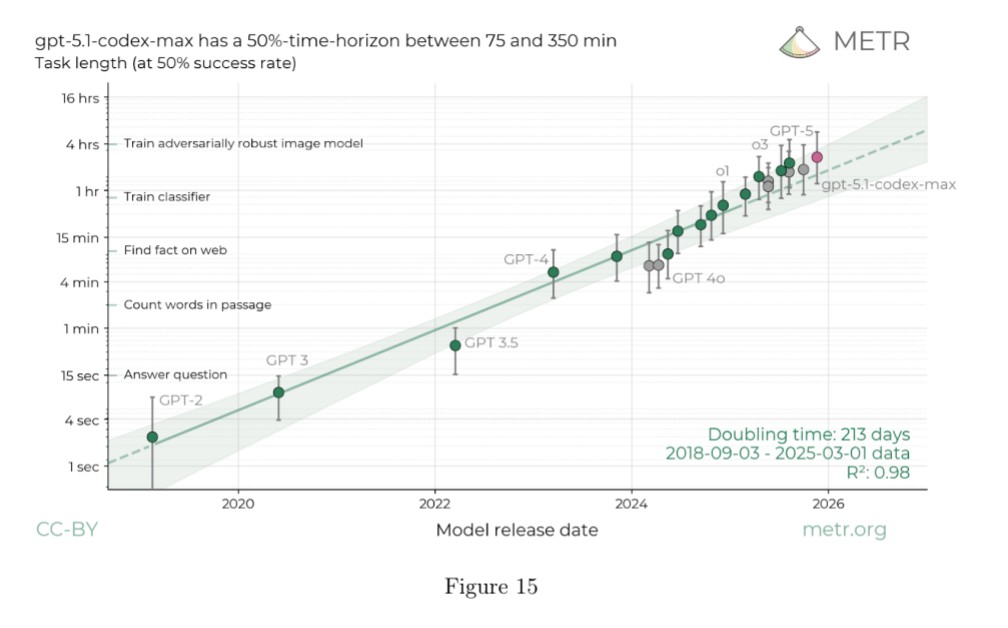

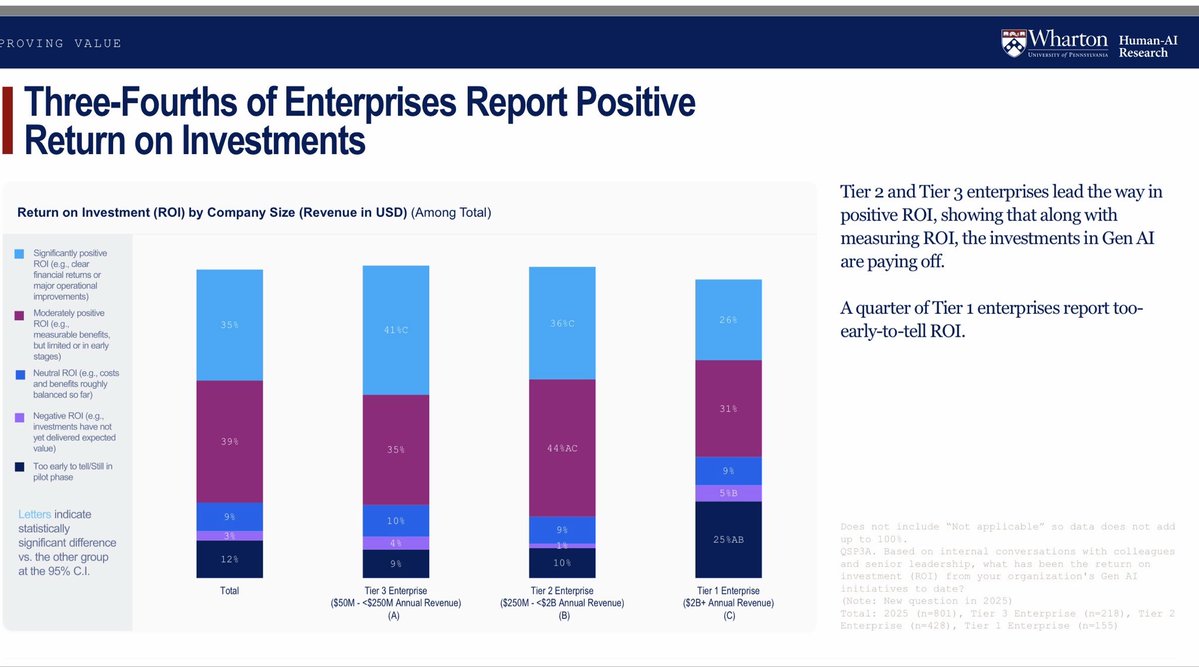

Summarizing 2025 in AI in a tweet 1) No sign of a slowdown in exponential pace of gains 2) Jaggedness remains the main issue of AI 3) Early days for deployment, but many companies reporting positive ROI 4) GenAI became an industry, with industry-level impacts 5) AI is still weird https://t.co/W5yiNrnX67

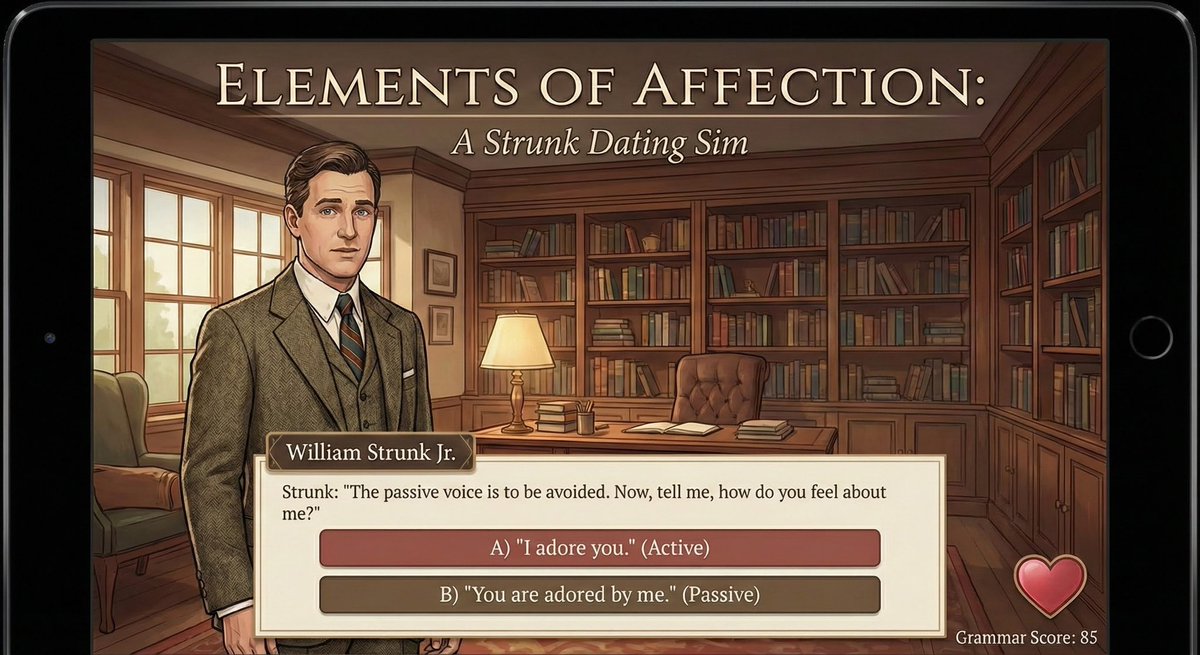

The worst literary game ideas, brought to life thanks to nano banana: Strunk and White as a dating simulator, Shirley Jackson’s The Lottery as an FPS, Ethan Frome as a racing game, The Yellow Wallpaper as a match-3 mobile room decoration game. https://t.co/V9X59to2mp

https://t.co/byB7QUdxAz