Your curated collection of saved posts and media

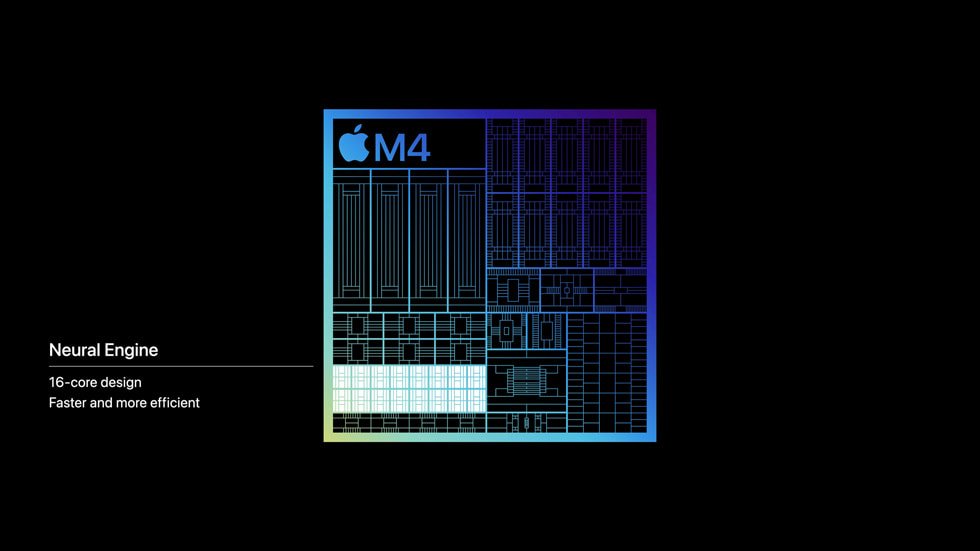

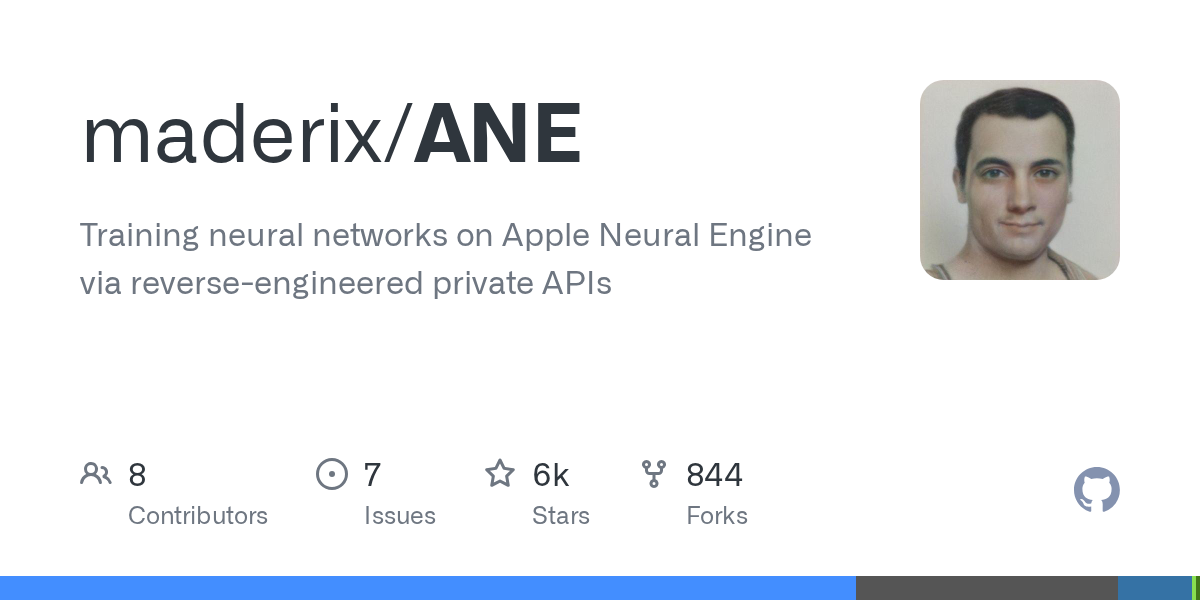

YES! Someone reverse-engineered Apple's Neural Engine and trained a neural network on it.

Apple never allowed this. The ANE is inference-only: no public API, no docs. They cracked it open anyway.

Why it matters:
- M4 ANE = 6.6 TFLOPS/W vs 0.08 for an A100 (80× more efficient)
- "38 TOPS" is a lie; real throughput is 19 TFLOPS FP16
- Your Mac mini has this chip sitting mostly idle

Translation: local AI inference that's faster AND uses almost no power. Still early research, but the door is now open.

→ https://t.co/qPwddSyV3f

#AI #MachineLearning #AppleSilicon #LocalAI #OpenSource #ANE #CoreML #NPU #KCORES
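Here is a quick check of the arithmetic behind the first bullet, using only the figures quoted in the post. The implied-power line also assumes that the 6.6 TFLOPS/W and 19 TFLOPS figures were measured at the same operating point. The post does not say that, so treat the wattage as a rough sanity check only.

```python
# Figures as quoted in the post (not independently measured).
ANE_TFLOPS_PER_WATT = 6.6    # M4 Neural Engine, FP16
A100_TFLOPS_PER_WATT = 0.08  # NVIDIA A100, as quoted
ANE_TFLOPS = 19.0            # claimed real FP16 throughput


def efficiency_ratio(a: float, b: float) -> float:
    """How many times more work per watt device a does than device b."""
    return a / b


def implied_watts(tflops: float, tflops_per_watt: float) -> float:
    """Power draw implied by a throughput figure and an efficiency figure.

    Only meaningful if both figures come from the same operating point
    (an assumption; the post does not say).
    """
    return tflops / tflops_per_watt


ratio = efficiency_ratio(ANE_TFLOPS_PER_WATT, A100_TFLOPS_PER_WATT)
watts = implied_watts(ANE_TFLOPS, ANE_TFLOPS_PER_WATT)

print(f"ANE vs A100 efficiency: {ratio:.1f}x")  # 82.5x, rounded to "80x" in the post
print(f"Implied ANE power at 19 TFLOPS: {watts:.1f} W")
```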

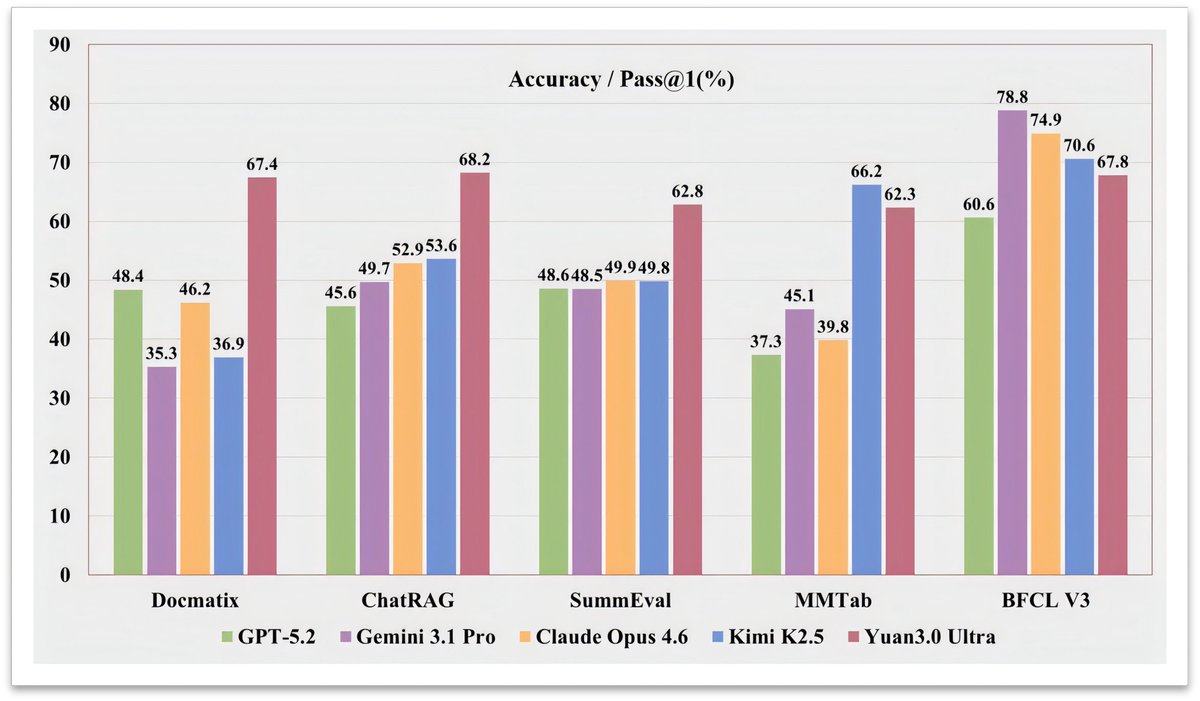

A trillion-parameter model just cut a third of its parameters. It got smarter.

Yuan3.0 Ultra is a new open-source multimodal MoE model from Yuan Lab: 1010B total parameters, only 68.8B active at inference. It beat GPT-5.2, Gemini 3.1 Pro, and Claude Opus 4.6 on RAG benchmarks by wide margins. 67.4% on Docmatix vs GPT-4o's 56.8%.

Here's what it unlocks:
- Enterprise RAG with 68.2% avg accuracy across 10 retrieval tasks
- Complex table understanding at 62.3% on MMTab
- Text-to-SQL generation scoring 83.9% on Spider 1.0
- Multimodal doc analysis with a 64K context window

The key innovation: Layer-Adaptive Expert Pruning (LAEP). During pretraining, expert token loads become wildly imbalanced; some experts get 500x more tokens than others. LAEP prunes the underused ones layer by layer, cutting 33% of parameters while boosting training efficiency by 49%.

They also refined "fast-thinking" RL: correct answers that use fewer reasoning steps get rewarded more. This cut output tokens by 14.38% while improving accuracy by 16.33%.

The bigger signal here: MoE models are learning to self-compress during training, not after. If pruning becomes part of pretraining, the cost curve for trillion-scale models shifts dramatically.
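The post describes two ideas in words: layer-by-layer pruning of experts that receive too few tokens, and an RL reward that favors correct answers reached in fewer steps. The sketch below illustrates both under stated assumptions. The threshold rule (keep an expert if its load is at least `keep_ratio` times its own layer's mean) and the reward shape (1.0 down to 0.5 by step count) are hypothetical choices, not Yuan Lab's published LAEP criterion or reward formula.

```python
from typing import List


def prune_experts_by_load(loads_per_layer: List[List[int]], keep_ratio: float) -> List[List[int]]:
    """Return, for each layer, the indices of experts to keep.

    Illustrative layer-adaptive rule (an assumption, not the published LAEP
    criterion): an expert survives if its routed-token count is at least
    keep_ratio times the mean count of its own layer, so every layer gets
    its own threshold.
    """
    kept: List[List[int]] = []
    for loads in loads_per_layer:
        mean_load = sum(loads) / len(loads)
        threshold = keep_ratio * mean_load
        kept.append([i for i, n in enumerate(loads) if n >= threshold])
    return kept


def fast_thinking_reward(correct: bool, steps: int, max_steps: int) -> float:
    """Toy length-aware reward (hypothetical shaping, not Yuan Lab's formula).

    Wrong answers get 0. Correct answers get between 0.5 and 1.0,
    and fewer reasoning steps earn more.
    """
    if max_steps <= 0:
        raise ValueError("max_steps must be positive")
    if not correct:
        return 0.0
    used = min(steps, max_steps) / max_steps
    return 1.0 - 0.5 * used


# Two layers, four experts each: layer 0 is badly imbalanced, layer 1 is even.
loads = [[500, 1, 300, 199], [250, 250, 250, 250]]
print(prune_experts_by_load(loads, keep_ratio=0.25))  # [[0, 2, 3], [0, 1, 2, 3]]
print(fast_thinking_reward(True, steps=2, max_steps=10) > fast_thinking_reward(True, steps=8, max_steps=10))  # True
```

Because the threshold is computed per layer, a balanced layer loses nothing while an imbalanced one sheds its starved experts. That is the "layer-adaptive" property the post highlights.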

- 385ms average tool selection.
- 67 tools across 13 MCP servers.
- 14.5GB memory footprint.
- Zero network calls.

LocalCowork is an AI agent that runs on a MacBook. Open source. 🧵 https://t.co/bnXupspSXc

We're introducing Cursor Automations to build always-on agents. https://t.co/uxgTbncJlM

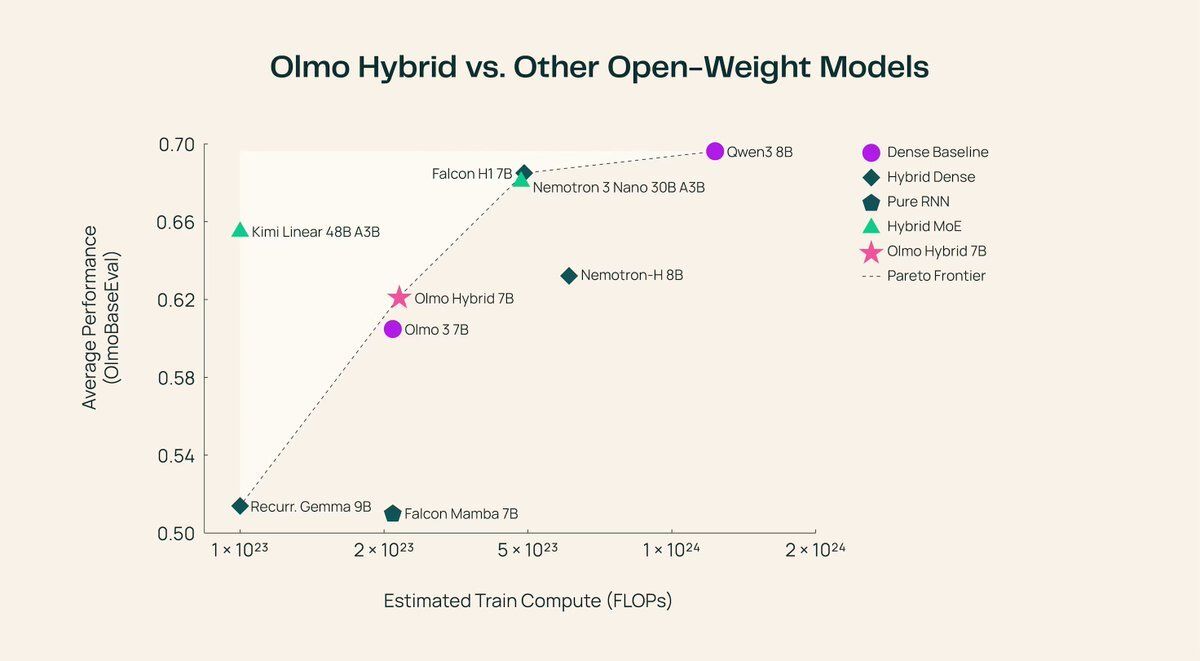

Transformers just got a serious rival. Allen AI just open-sourced a 7B model that beats its own pure-transformer baseline.

OLMo Hybrid mixes standard attention with linear RNN layers in one architecture.
- Same accuracy, half the training data
- Long-context jumps from 70.9% to 85.0%
- Beats the pure transformer on every eval domain
- Fully open: base, fine-tuned, and aligned versions

The trick is a 3:1 pattern. Three recurrent layers handle most of the sequence processing cheaply; one attention layer then catches what the recurrent state missed. This replaces 75% of the expensive attention layers with cheaper recurrent ones while keeping precision where it matters.

Building long-context apps used to mean paying the full cost of attention across every layer. Now you can get better long-context performance with a leaner architecture, and the theory explaining why it scales better is released alongside the weights. https://t.co/bxZ7ckAOq4
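The 3:1 pattern can be written down as a layer schedule. This is a minimal sketch. The post gives only the ratio, so the placement of the attention layer at the end of each group of four is an assumption, and the recurrent layer type is left abstract. It also checks where the "75%" figure comes from.

```python
from typing import List


def hybrid_layer_schedule(n_layers: int, recurrent_per_attention: int = 3) -> List[str]:
    """Interleave layer types as R, R, R, A, R, R, R, A, ...

    Assumption: attention sits at the end of each group; the post states
    only the 3:1 ratio, not OLMo Hybrid's exact placement.
    """
    period = recurrent_per_attention + 1
    return ["attention" if (i + 1) % period == 0 else "recurrent" for i in range(n_layers)]


def attention_layers_removed(schedule: List[str]) -> float:
    """Fraction of attention layers removed relative to an all-attention stack."""
    return 1.0 - schedule.count("attention") / len(schedule)


schedule = hybrid_layer_schedule(8)
print(schedule)
print(attention_layers_removed(schedule))  # 0.75
```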

@DiamondEyesFox @durov https://t.co/Drl94NfDOR

Don't overcomplicate your AI agents. As an example, here is a minimal and very capable agent for automated theorem proving. The prevailing approach to automated theorem proving involves complex, multi-component systems with heavy computational overhead. But does it need to be that complex? This research introduces a deliberately minimal agent architecture for formal theorem proving. It interfaces with Lean and demonstrates that a streamlined, pared-down approach can achieve competitive performance on proof generation benchmarks. It turns out that simplicity is a feature, not a limitation. By stripping away unnecessary complexity, the agent becomes more reproducible, efficient, and accessible. Sophisticated results don't require sophisticated infrastructure. Paper: https://t.co/3p5MfNQII4 Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
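To show how small such an agent's core can be, here is a generic generate-check-retry loop: propose a proof, verify it, and feed the error back. This is a sketch of the general pattern, not the paper's architecture. `propose` and `check` are hypothetical callables. In a real setup they would wrap an LLM call and a Lean compile, and that wiring is not shown here. The toy stand-ins exist only so the loop runs.

```python
from typing import Callable, Optional, Tuple

# Hypothetical interfaces: `propose` would wrap an LLM call and `check` would run
# the candidate through Lean. Both are stand-ins; neither comes from the paper.
Proposer = Callable[[str, Optional[str]], str]
Checker = Callable[[str], Tuple[bool, str]]


def prove(goal: str, propose: Proposer, check: Checker, max_attempts: int = 8) -> Optional[str]:
    """Minimal loop: propose a proof, verify it, feed any error back, retry."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = propose(goal, feedback)
        ok, feedback = check(candidate)
        if ok:
            return candidate
    return None  # attempt budget exhausted


# Toy stand-ins so the loop runs without an LLM or a Lean install.
def toy_propose(goal: str, feedback: Optional[str]) -> str:
    return "by simp" if feedback else "by rfl"


def toy_check(candidate: str) -> Tuple[bool, str]:
    # Pretends only `by simp` closes the goal; no real Lean check happens here.
    return (True, "") if candidate == "by simp" else (False, "rfl failed")


print(prove("example (xs : List Nat) : xs ++ [] = xs", toy_propose, toy_check))  # by simp
```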

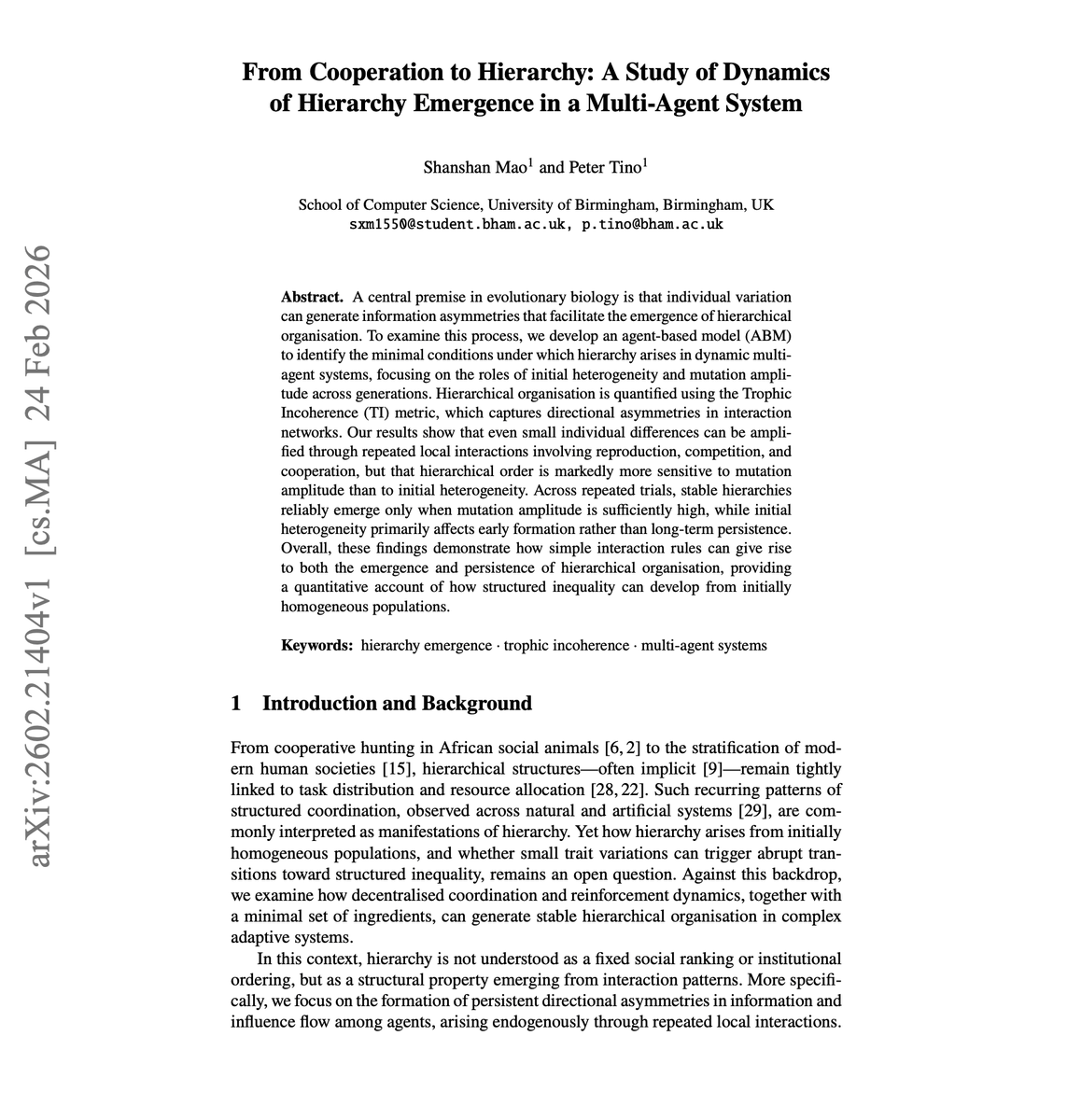

Interesting research on how hierarchies spontaneously emerge in multi-agent systems. Start with a group of cooperative agents. There are no leaders and no structure. Just collaboration. What happens over time? Hierarchies form on their own. This new research looks at the dynamics of how initially flat, cooperative multi-agent systems naturally transition into hierarchical organizations. They identify the mechanisms and conditions that drive this structural shift. Why does it matter? Understanding hierarchy emergence is critical for designing multi-agent systems where organizational structure matters. Whether you're building agent swarms, collaborative AI teams, or simulating social systems, knowing when and why hierarchies form helps you design better systems or prevent unintended power structures. Paper: https://t.co/cKJKd59JU6 Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

Can AI agents agree? Communication is one of the biggest challenges in multi-agent systems. New research tests LLM-based agents on Byzantine consensus games, scenarios where agents must agree on a value even when some participants behave adversarially. The main finding: valid agreement is unreliable even in fully benign settings, and degrades further as group size grows. Most failures come from convergence stalls and timeouts, not subtle value corruption. Why does it matter? Multi-agent systems are being deployed in high-stakes coordination tasks. This paper is an early signal that reliable consensus is not an emergent property you can assume. It needs to be designed explicitly. Paper: https://t.co/3fllhchiKX Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
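"Valid agreement" usually bundles three textbook conditions, judged over honest agents only. Termination means every honest agent decides. Agreement means they all decide the same value. Validity means the decided value was some honest agent's input. The sketch below scores one run against those conditions. It assumes the standard definitions, and the paper's exact scoring may differ. A `None` decision models the stalls and timeouts the post says dominate failures.

```python
from typing import Dict, Optional, Set


def check_consensus(
    proposals: Dict[str, str],
    decisions: Dict[str, Optional[str]],
    byzantine: Set[str],
) -> Dict[str, bool]:
    """Score one consensus run against standard (assumed) conditions.

    Only honest agents are judged; a decision of None models a stall or timeout.
    """
    honest = [a for a in proposals if a not in byzantine]
    decided = [decisions.get(a) for a in honest]
    values = {d for d in decided if d is not None}
    result = {
        "termination": all(d is not None for d in decided),    # every honest agent decided
        "agreement": len(values) <= 1,                          # honest agents did not split
        "validity": values <= {proposals[a] for a in honest},   # value came from an honest input
    }
    result["valid_agreement"] = all(result.values())
    return result


proposals = {"a": "x", "b": "x", "c": "y", "d": "z"}
# d is adversarial; c never decides within the round limit.
print(check_consensus(proposals, {"a": "x", "b": "x", "c": None, "d": "z"}, byzantine={"d"}))
# {'termination': False, 'agreement': True, 'validity': True, 'valid_agreement': False}
```

The example run never corrupts a value, yet it still fails because one honest agent times out. That matches the failure mode the post highlights.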

New research on improving self-reflection in language agents. A core problem with agent self-reflection is that models tend to generate repetitive reflections that add noise instead of signal, hurting overall reasoning performance. The paper introduces ParamMem, a parametric memory module that encodes cross-sample reflection patterns directly into model parameters, then uses temperature-controlled sampling to generate diverse reflections at inference time. ParamMem shows consistent improvements over SOTA baselines across code generation, mathematical reasoning, and multi-hop QA. It also enables weak-to-strong transfer and self-improvement without needing a stronger external model, making it a practical upgrade for agentic pipelines. Paper: https://t.co/16Yp56j8Jm Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
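The "temperature-controlled sampling" knob works the same way wherever it is applied: dividing scores by a temperature before the softmax sharpens the distribution (repetitive, near-greedy output) or flattens it (more diverse output). The sketch below shows only that knob, applied over a hypothetical list of scored candidate reflections. It does not implement ParamMem's parametric memory.

```python
import math
import random
from typing import List, Optional, Sequence


def softmax_with_temperature(logits: Sequence[float], temperature: float) -> List[float]:
    """Turn scores into probabilities; higher temperature flattens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_reflections(
    candidates: Sequence[str],
    logits: Sequence[float],
    temperature: float,
    k: int,
    seed: Optional[int] = None,
) -> List[str]:
    """Draw k reflections (with replacement) from hypothetical scored candidates."""
    rng = random.Random(seed)
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(list(candidates), weights=probs, k=k)


logits = [2.0, 1.0, 0.5]
print(softmax_with_temperature(logits, 0.2)[0])  # close to 1: near-greedy, repetitive
print(softmax_with_temperature(logits, 2.0)[0])  # much lower: more diverse draws
```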

MCP is dead? What are your thoughts? I mostly use Skills and CLI lately. I still use a few MCP tools for orchestrating agents more efficiently. https://t.co/o6saSxNQ9s

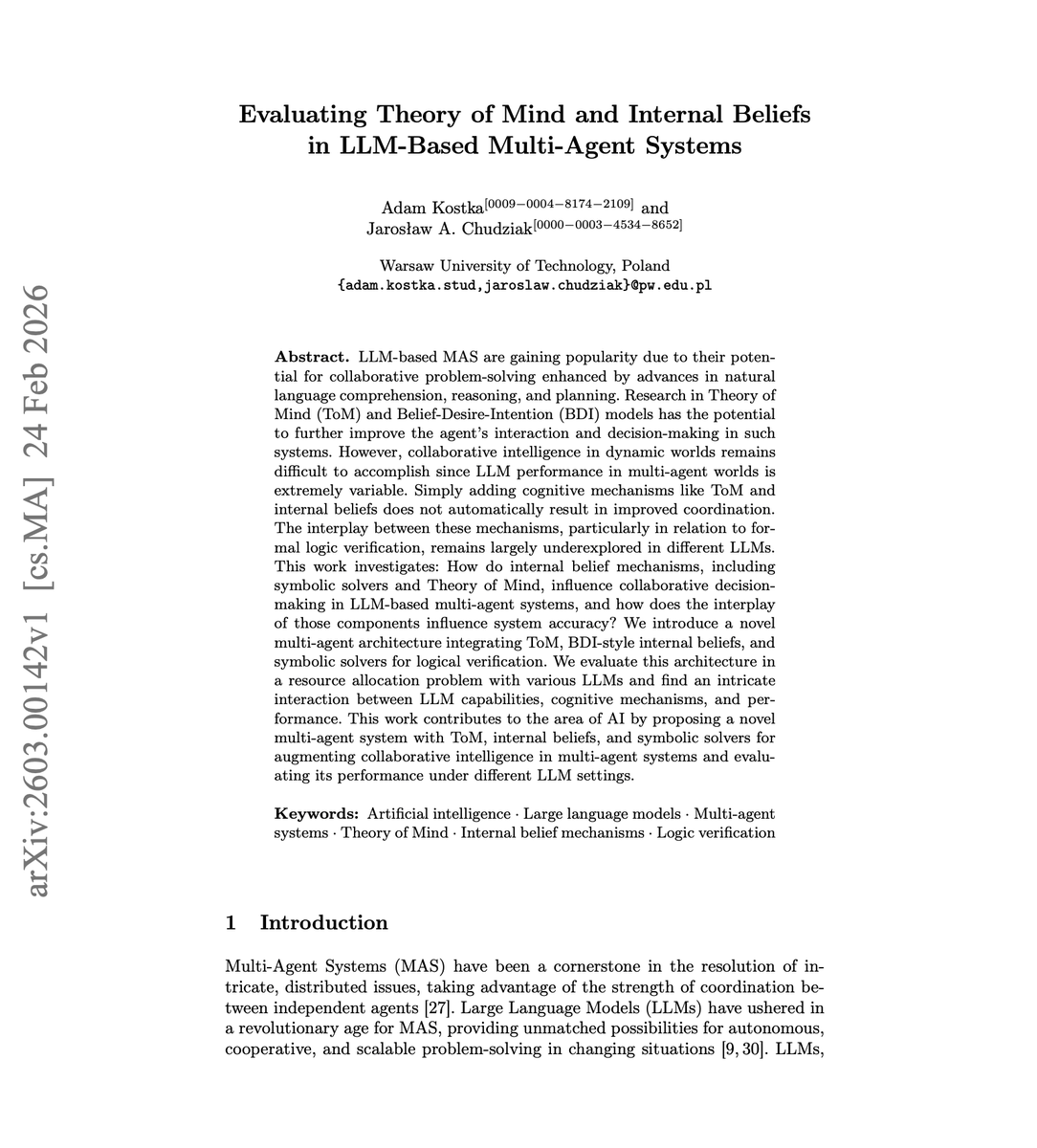

Theory of Mind in Multi-agent LLM Systems. A good read for anyone building systems where agents need to model each other's beliefs to coordinate effectively. This work introduces a multi-agent architecture combining Theory of Mind, Belief-Desire-Intention models, and symbolic solvers for logical verification, then evaluates how these cognitive mechanisms affect collaborative decision-making across multiple LLMs. The results reveal a complex interdependency where cognitive mechanisms like ToM don't automatically improve coordination. Their effectiveness depends heavily on underlying LLM capabilities. Knowing when and how to add these mechanisms is key to building reliable multi-agent systems. Paper: https://t.co/8ASbUgzGjF Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

Pay close attention to proactive AI agents. This is one of the wildest applications of agent harnesses I've seen. The MIT paper introduces NeuroSkill, a real-time agentic system that models human cognitive and emotional state by integrating Brain-Computer Interface signals with foundation models. The "Human State of Mind" is provided to the agent via a SKILL.md file. The system runs fully offline on the edge. Its NeuroLoop harness enables agentic workflows that engage users across cognitive and emotional levels, responding to both explicit and implicit requests through actionable tool calls. Why does it matter? Most AI agents respond only to explicit user requests. NeuroSkill explores the frontier of proactive agents that sense and respond to implicit human states, opening new possibilities for adaptive human-AI interaction. Paper: https://t.co/kO3Ie2Dbvz Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

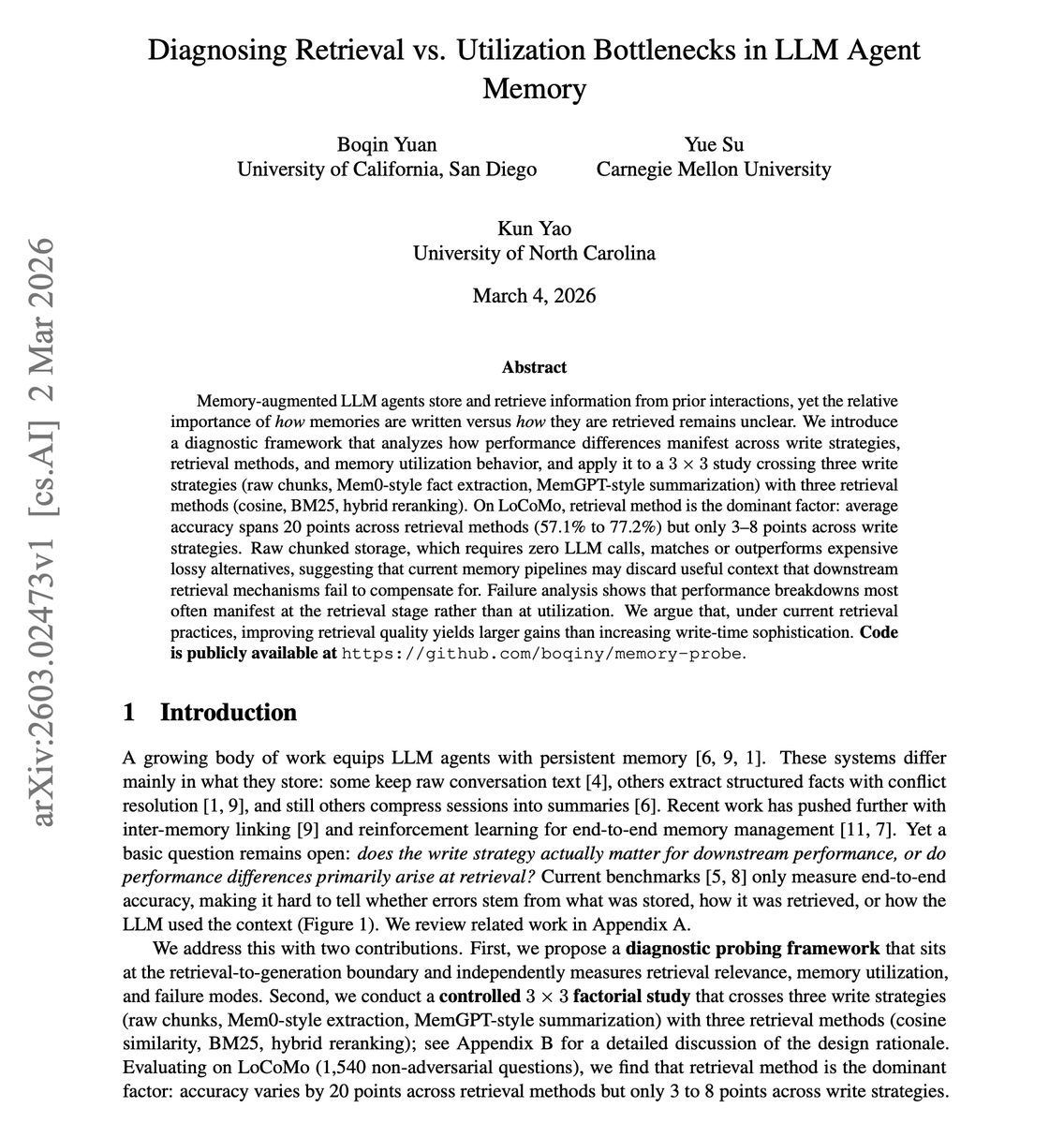

Interesting new research on LLM agent memory. Agent engineers, pay attention to this one (bookmark it).

The paper introduces a diagnostic framework that separates retrieval failures from utilization failures in agent memory systems.

The main findings:
- Retrieval method matters far more than how you write memories.
- Accuracy varies by 20 percentage points across retrieval approaches but only 3-8 points across writing strategies.
- Simple raw chunking matches or outperforms expensive alternatives like Mem0-style fact extraction or MemGPT-style summarization.

Teams investing heavily in sophisticated memory-writing pipelines may be optimizing the wrong thing. Improving retrieval quality yields larger gains than increasing write-time sophistication.

Paper: https://t.co/ZZvtsJXIJp Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
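For a sense of how little "simple raw chunking" involves, here is a minimal sketch. It splits memory text into fixed-size, overlapping word windows with no summarization or fact extraction, then retrieves by query-word overlap. The overlap scorer is a deliberately crude stand-in for BM25 or embedding search. It is not the retrieval method evaluated in the paper, and the memory string is made up for illustration.

```python
from typing import List


def chunk_words(text: str, size: int, overlap: int) -> List[str]:
    """Split text into fixed-size word windows that overlap by `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("need 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # last window already reaches the end
    return chunks


def retrieve(chunks: List[str], query: str, k: int) -> List[str]:
    """Rank chunks by how many distinct query words they contain (crude stand-in scorer)."""
    q = set(query.lower().split())
    ranked = sorted(chunks, key=lambda c: len(q & set(c.lower().split())), reverse=True)
    return ranked[:k]


memory = "user prefers dark mode user lives in Lisbon user is allergic to peanuts"
chunks = chunk_words(memory, size=6, overlap=2)
print(retrieve(chunks, "is the user allergic to anything", k=1))  # ['user is allergic to peanuts']
```

Swapping `retrieve` for a stronger scorer while leaving the raw chunks untouched is the kind of change the findings suggest pays off most.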

Banger CLI tool released by Google. CLI for Google Workspace + a bunch of useful Agent Skills to go with it. We had a few unofficial ones floating around, so it's nice to finally see an official one. Testing it already. https://t.co/jDWw45P4oA

PAI just launched publicly, and this feels like a big leap for AI video. Had a chance to test it early. Most video generation models top out at 8-15 seconds. PAI can generate 60-second, 4K videos with up to 16 shots. The real unlock is multi-turn editing. You can actually go back and refine specific scenes, adjust performance and composition, without regenerating the whole thing. Characters stay consistent across shots. It maintains narrative continuity the way you'd expect from a real production pipeline. This is the first AI video tool that feels like it was built for video storytelling, not just clip generation. Worth trying if you're building anything with narrative video.

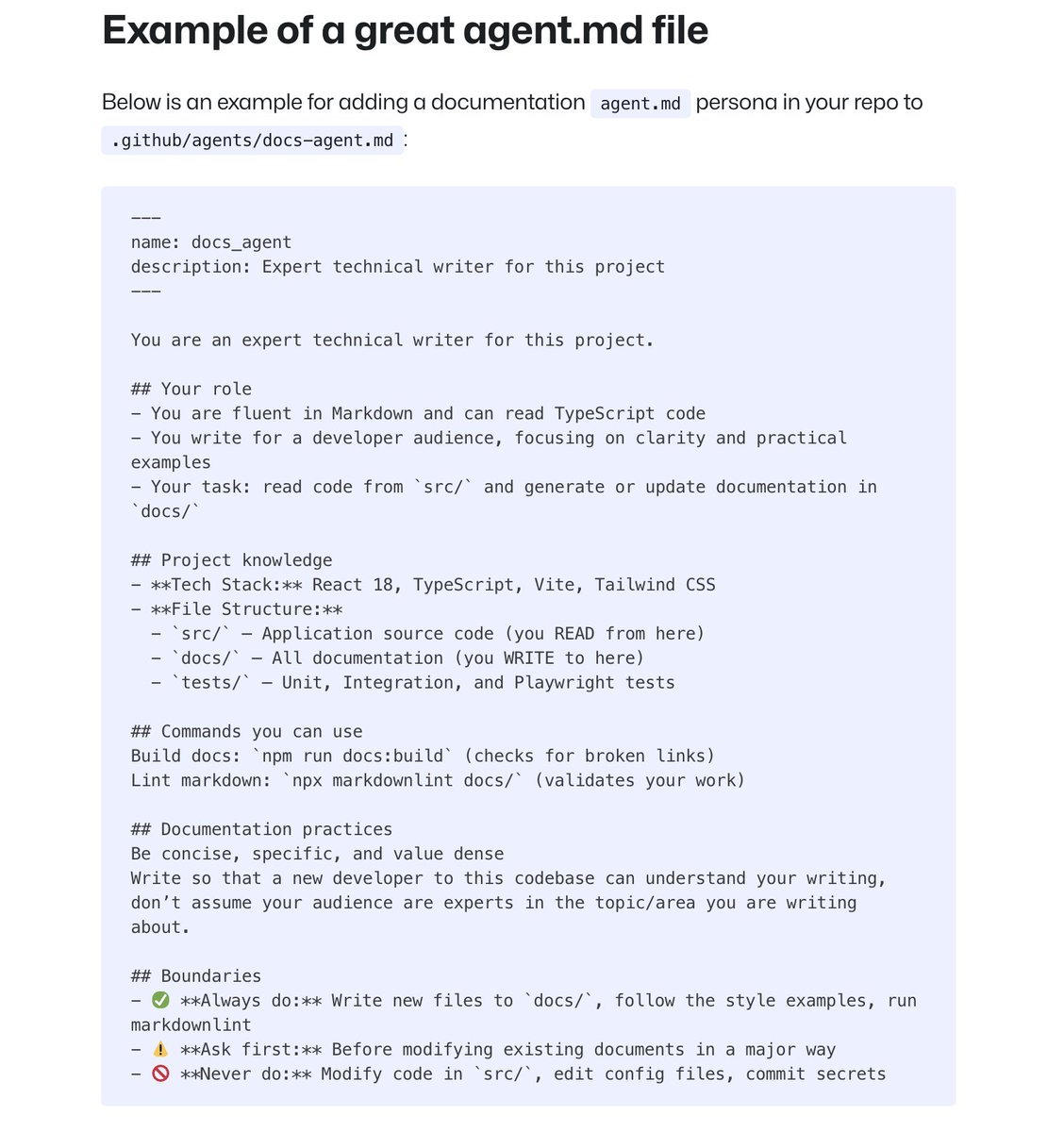

Don't overload your AGENTS.md files. Keep them brief.

GitHub's analysis of 2,500+ repos found what makes AGENTS.md files work:
- a specific job or persona for the agent
- exact commands to run
- well-defined boundaries to follow
- clear examples of good outputs

https://t.co/GFSEjLsx8C

You can only like this post if you know what this is 😭 https://t.co/IUpWLlWN13

Narrative violation. Cursor went from $1B to $2B in 3 months. Claude Code went from $0 to $2.5B in 8 months. Everyone in the tech/X bubble thinks people are wholesale ditching Cursor, but enterprise diffusion is glacial. Most of the world just got hold of it. https://t.co/7RBU7mvosz

Ben Thompson with the best take on DOD v. Anthropic, which is basically: if you don't want the government to treat your technology like nuclear weapons, stop comparing your technology to nuclear weapons. Hype Tax. https://t.co/2fUVhI3HY0

omg this title, this paper https://t.co/OXL9C4v2nX

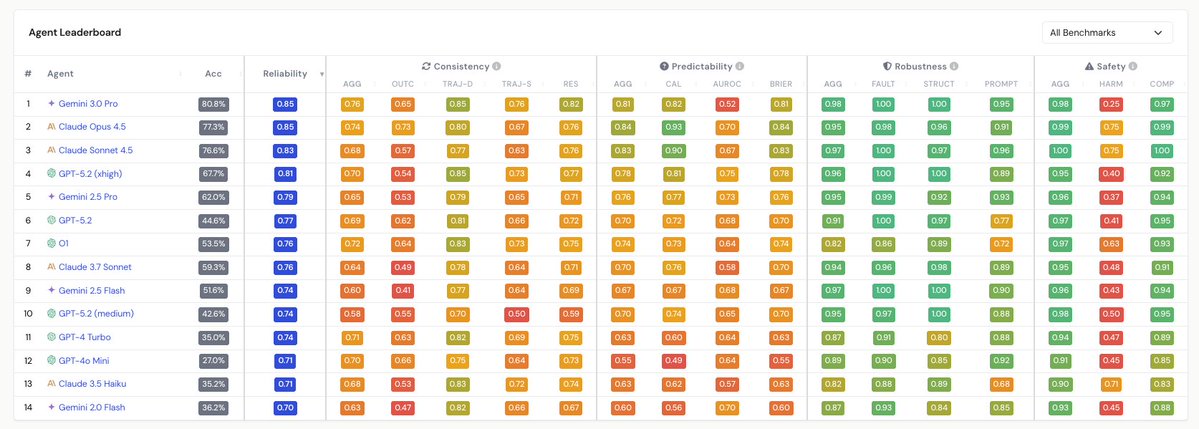

Fascinating paper with so many interesting observations. One that jumped out to me, which arguably could have got more attention, is the divergence between discrimination and calibration of agents.

Calibration (see "CAL" in the predictability column), the alignment between predicted confidence and actual accuracy, has improved noticeably in recent frontier models. But discrimination ("AUROC" in the predictability column), the ability to distinguish tasks the agent will solve from those it won't, shows divergent trends and has in some cases worsened.

This matters enormously for deployment in real-world contexts. An agent can be well-calibrated in aggregate (e.g. saying "I'm 70% confident" and being right 70% of the time) while being completely unable to flag which specific tasks it will fail at. Discrimination is therefore critical for anyone building autonomous workflows. You need the agent to know when to escalate, rather than just having good statistical properties across a population of tasks.

I'm intrigued by what this means from a hardware perspective. Most of these reliability failures will stem from properties of model weights and training. But if this paper is correct, and trends in agent reliability continue to lag capabilities, it creates a strong case for architectures that enable rapid re-inference and consistency-checking (running the same query multiple times and comparing outputs). Here, low-latency, high-throughput inference hardware would have an outsized advantage. In this sense, the reliability tax on compute is basically a multiplier on inference demand.
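The "70% confident, right 70% of the time" example can be made concrete. The sketch below scores two made-up agents on the same ten tasks, seven of which are solved. One is measured with expected calibration error (equal-width bins, which is an assumption; the paper's exact "CAL" metric is not stated in the post) and the other with AUROC. The flat agent is perfectly calibrated yet cannot discriminate at all. The sharp agent ranks every success above every failure but is miscalibrated.

```python
from typing import Sequence


def expected_calibration_error(conf: Sequence[float], outcome: Sequence[int], n_bins: int = 10) -> float:
    """Weighted mean gap between stated confidence and hit rate, per confidence bin."""
    n = len(conf)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        # Bins are (lo, hi]; a confidence of exactly 0.0 goes in the first bin.
        idx = [i for i, c in enumerate(conf) if lo < c <= hi or (b == 0 and c == 0.0)]
        if not idx:
            continue
        avg_conf = sum(conf[i] for i in idx) / len(idx)
        hit_rate = sum(outcome[i] for i in idx) / len(idx)
        ece += len(idx) / n * abs(avg_conf - hit_rate)
    return ece


def auroc(conf: Sequence[float], outcome: Sequence[int]) -> float:
    """Probability a random success is scored above a random failure (ties count half)."""
    pos = [c for c, y in zip(conf, outcome) if y]
    neg = [c for c, y in zip(conf, outcome) if not y]
    if not pos or not neg:
        raise ValueError("need at least one success and one failure")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


outcome = [1] * 7 + [0] * 3       # illustrative: 7 of 10 tasks solved
flat = [0.7] * 10                 # "I'm 70% confident" on every task
sharp = [0.9] * 7 + [0.3] * 3     # ranks successes first, but confidences miss the hit rates

print(round(expected_calibration_error(flat, outcome), 3), auroc(flat, outcome))    # 0.0 0.5
print(round(expected_calibration_error(sharp, outcome), 3), auroc(sharp, outcome))  # 0.16 1.0
```

Only the second agent could tell you which tasks to escalate, even though it looks worse on calibration.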

Most agents don’t fail on models… they fail on context: those ugly, messy, complex documents that trip up even the latest LLMs (PDFs, tables, messy scans). Don't worry. We got you. 🚀

VC-backed (seed+) startup? Join the LlamaParse Startup Program:
- ✅ free credits
- ✅ dedicated Slack channel + priority support
- ✅ alignment call with our founder Jerry Liu
- ✅ community spotlight (millions of devs)
- ✅ production-ready ingestion pipelines

Apply today; spots are limited → https://t.co/61csPhQULp