Your curated collection of saved posts and media

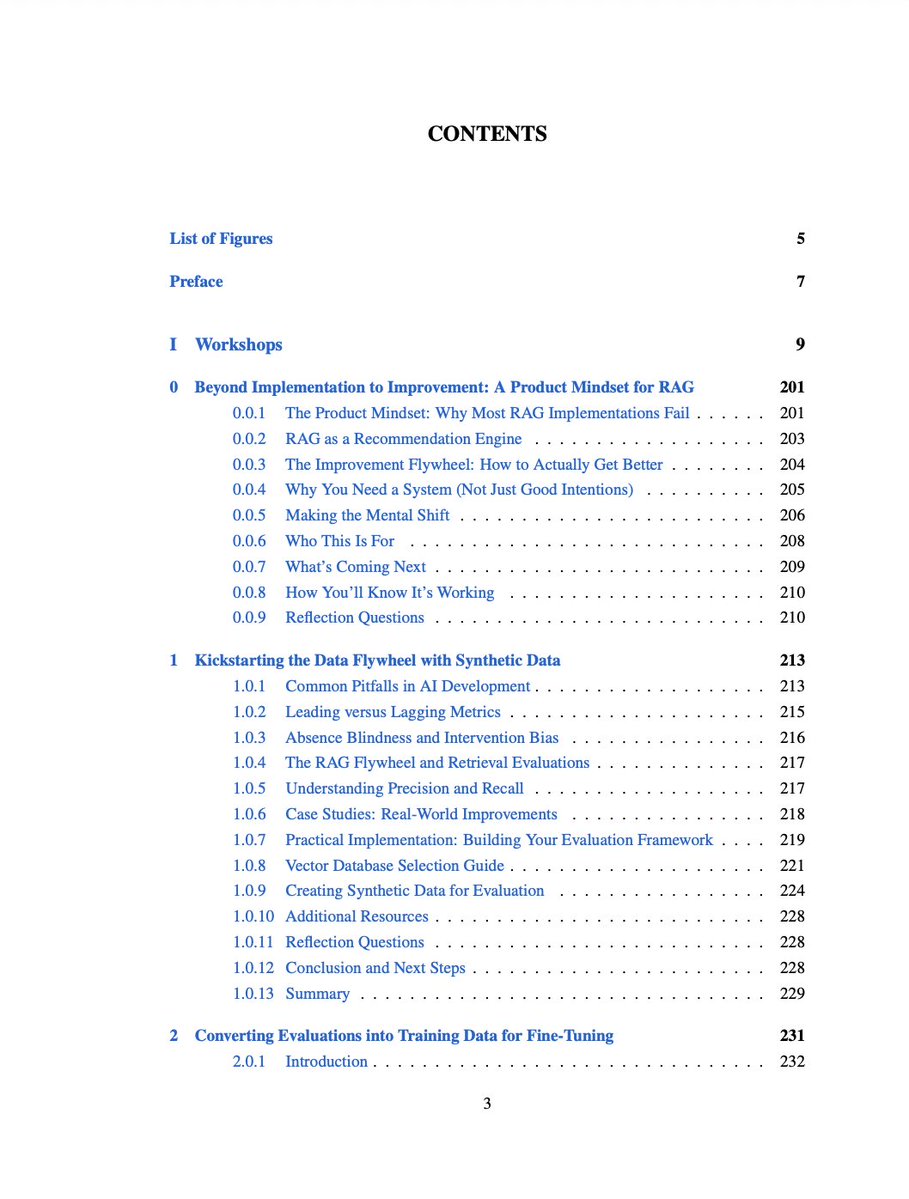

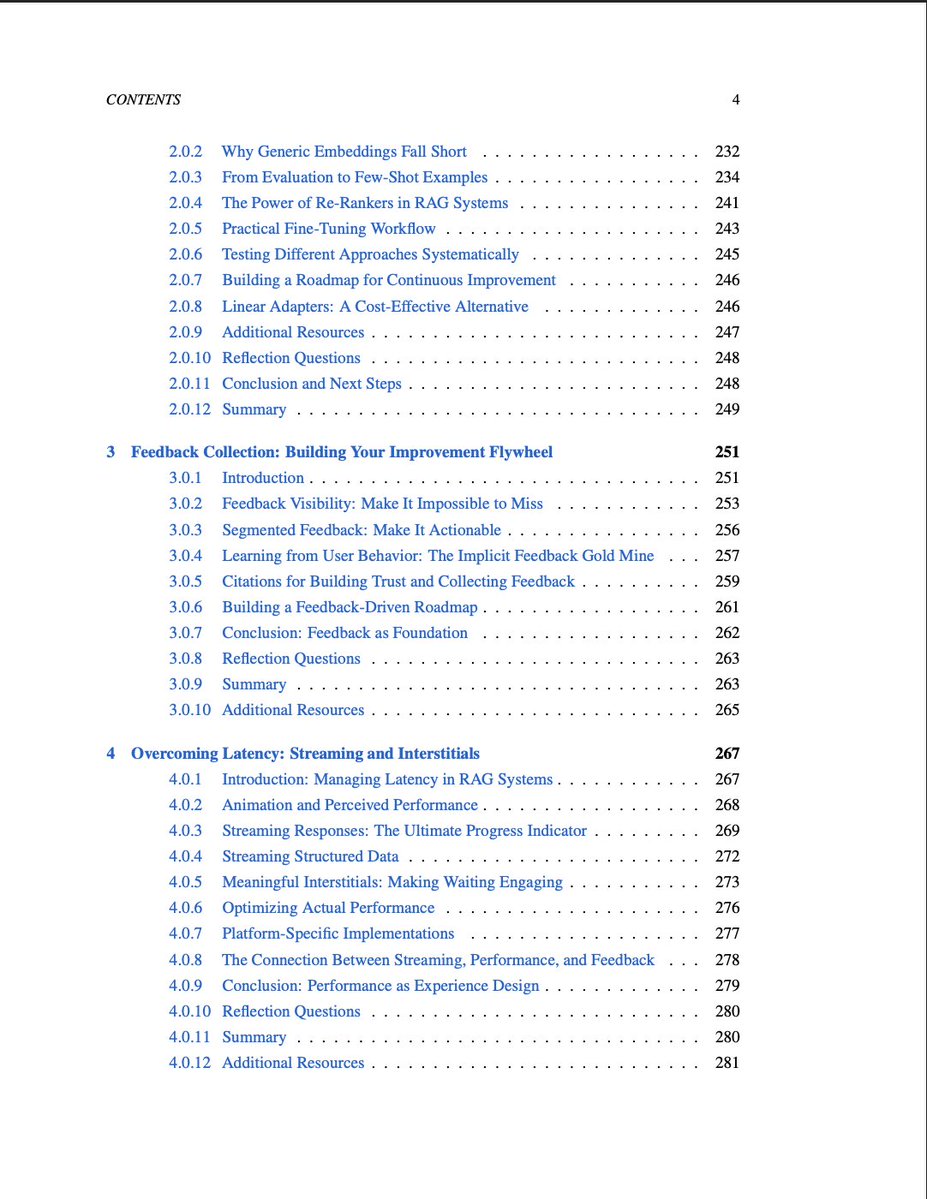

We are focusing on building! Very little theory in this one. It's not about tricks and prompts; it's about building systematically and solving hard problems. This is useful for both beginners and experienced builders. The course launches tomorrow! https://t.co/6ZICQMTK0X

Vibe Minecraft: a multiplayer, self-consistent, real-time world model that allows building anything and conjuring any object. The function of tools, and even the game mechanics themselves, can be programmed in natural language, such as "chrono-pickaxe: revert any block to a previous state in time" and "waterfalls turn into rainbow bridge when unicorns pass by". Players collectively define and manipulate a shared world. The neural sim takes as input a *multimodal* system prompt: game rules, asset pngs, a global map, and easter eggs. It periodically saves game states as a sequence of latent vectors that can be loaded back into context, optionally with interleaved "guidance texts" to allow easy editing. Each gamer has their own explicit stat json (health, inventory, 3D coordinate) as well as implicit "player vectors" that capture higher-order interaction history. Game admins can create a Minecraft multiverse because latents from different servers are compatible. Each world can seamlessly cross with another to spawn new worlds in seconds. People can mix & match with their friends' or their own past states. "Rare vectors" can emerge as some players inevitably wander into the bizarre, uncharted latent space of the world model. Those float matrices can be traded as NFTs. The wilder the things you try, the more likely you'll mine rare vectors. Whoever ships Vibe Minecraft first will go down in history as altering the course of gaming forever.

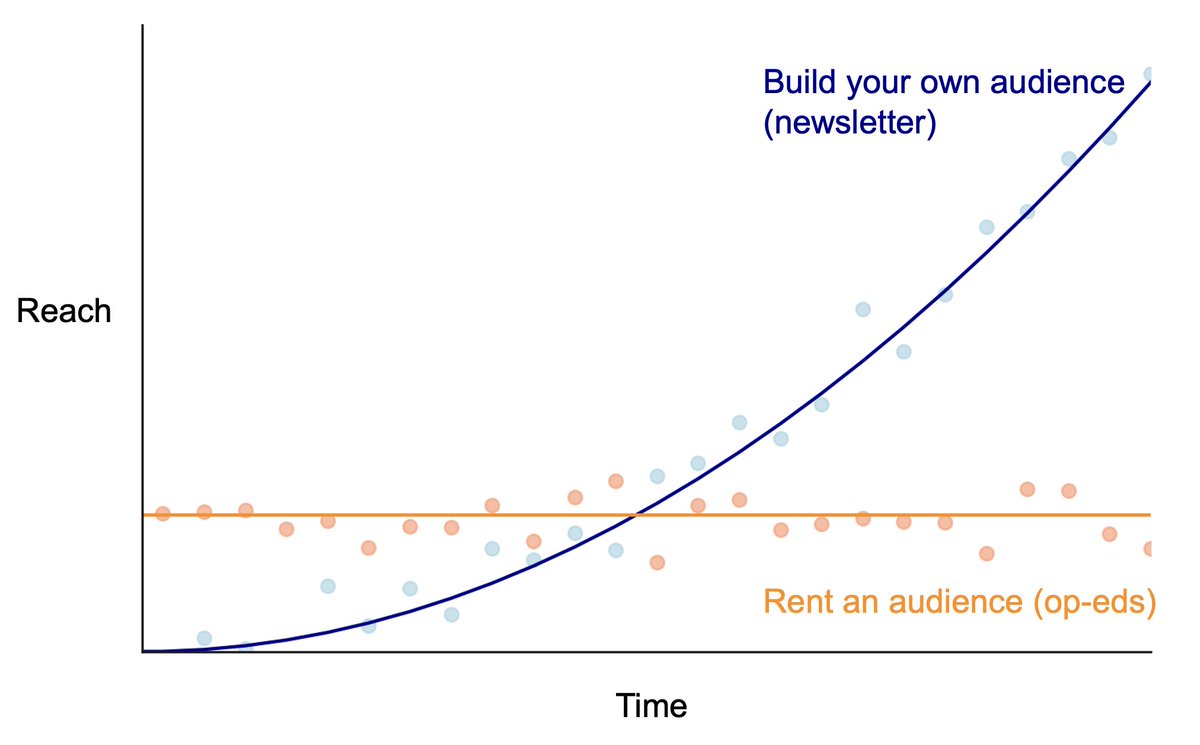

Some people have asked me about the decision to start a newsletter rather than using other channels like op-eds. There's one key thing I've learned, and I made a stylized chart to explain it. If you build your own audience, your reach grows over time with each piece you write. But it takes a *long* time — years in most cases — before that audience grows big enough that it all starts to feel worthwhile. Start a newsletter only if you know you're in it for the long haul. (I started the AI Snake Oil newsletter with @sayashk when we started writing our book together, so we knew we would be writing about AI for many years.) There are many other advantages to controlling your own channel, including not having to go through the crapshoot of pitching op-eds or worrying about word limits. In the long run, it is likely to be the better choice. But it's a lonely journey and there is no guarantee of success.

An interesting bug or shadowban, not sure which: when I retweet, it shows up on my profile but the counter doesn't go up (0 in this case). My quote tweets don't work either (they just show a link instead of expanding the quoted tweet), and neither do link previews. It's been this way for months. https://t.co/2UPP2ftIMD

I'm giving a couple of online talks at events open to the public today (Wednesday) and tomorrow:
- A high-level overview of AI as Normal Technology at the Public AI Summit, starting soon: https://t.co/svszMsu33Z
- A talk on AI's impact on science at the Neuro-Symbolic AI summer school tomorrow: https://t.co/miQWlFvmDF

Register to attend.

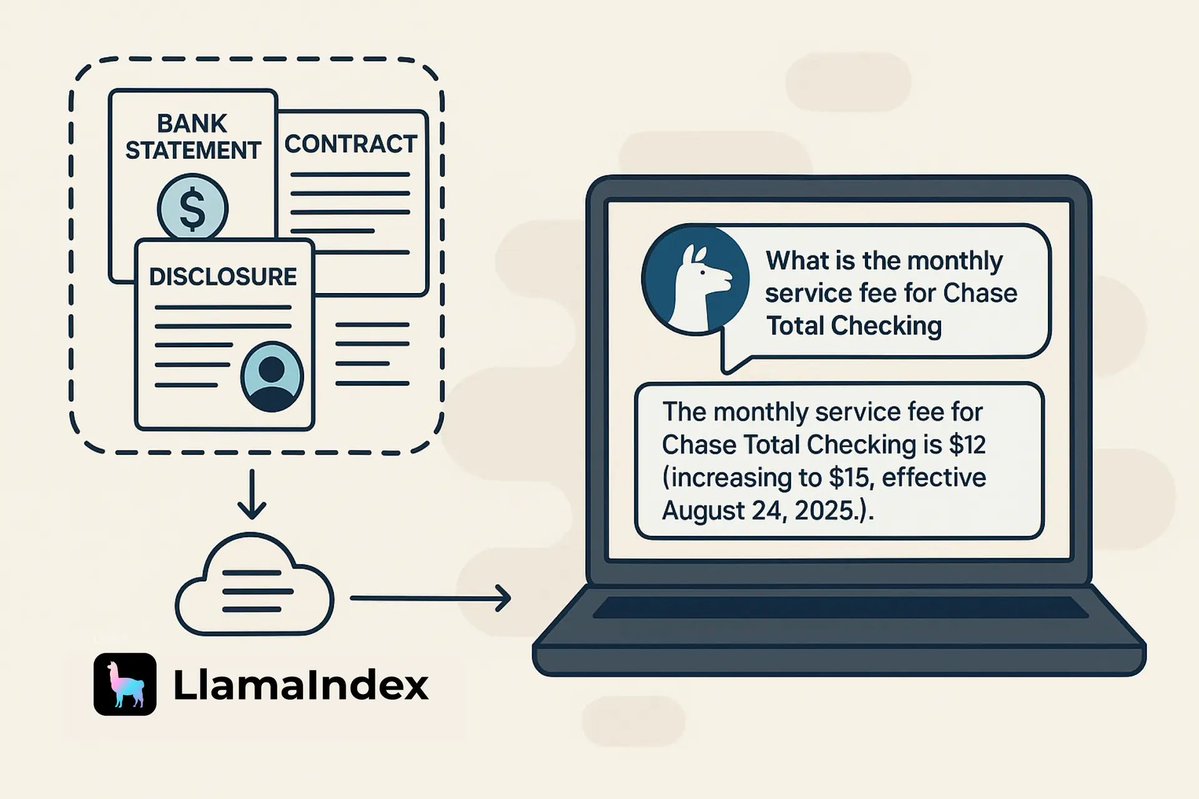

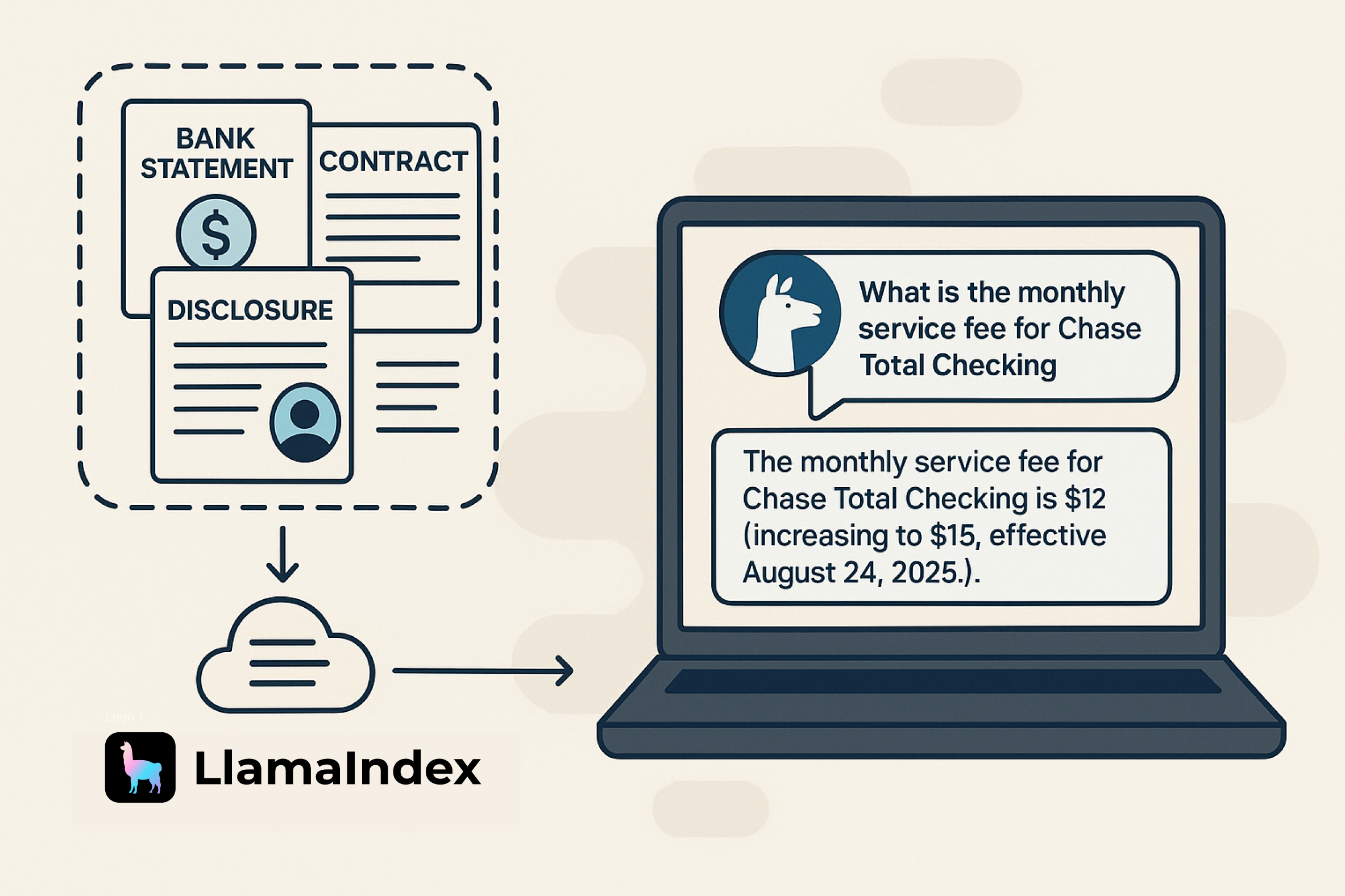

Transform dense enterprise documents into intelligent AI agents that can reason, calculate, and answer complex questions automatically. Here's a complete tutorial showing how to build context-aware document agents using LlamaCloud and our LlamaIndex framework, working with real @jpmorgan documents covering deposit account disclosures and rate agreements:

- 📄 Parse and index complex PDFs seamlessly with LlamaCloud, going far beyond basic document extraction
- 🤖 Build agents that can reason across multiple document sections and perform multi-step calculations
- 🔧 Integrate document retrieval with business logic tools using our FunctionAgent and Workflows
- 💡 Handle scenarios like calculating banking fees across multiple transactions with full reasoning transparency

The tutorial walks through everything from setup to deployment, showing how an agent can answer questions like "What fees would I be charged for overdrafts, card replacement, and stop payments?" by automatically querying the relevant policies and performing the math. We're using Claude Sonnet 4 from @AnthropicAI to power the reasoning capabilities. Complete tutorial and code: https://t.co/1F73CjZhYK
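The "business logic tool" part of that setup can be sketched as a plain Python function that an agent calls after it has retrieved the relevant fee policies. This is a minimal sketch under assumptions: the fee amounts below are hypothetical placeholders (not taken from any real disclosure), and the name `calculate_total_fees` is illustrative. Agent frameworks such as LlamaIndex can typically expose a plain function like this as a tool, but the registration step is not shown here.

```python
from decimal import Decimal

# HYPOTHETICAL fee schedule: placeholder amounts for illustration only,
# not taken from any real bank's deposit account disclosure.
FEE_SCHEDULE = {
    "overdraft": Decimal("34.00"),
    "card_replacement": Decimal("5.00"),
    "stop_payment": Decimal("30.00"),
}


def calculate_total_fees(events: dict[str, int]) -> dict[str, str]:
    """Multiply each fee by its event count and return an itemized breakdown.

    `events` maps a fee type (a key of FEE_SCHEDULE) to how many times it
    occurred. Returning line items, not just the total, keeps the
    arithmetic transparent to whoever (or whatever agent) reads it.
    """
    breakdown: dict[str, str] = {}
    total = Decimal("0")
    for fee_type, count in events.items():
        if fee_type not in FEE_SCHEDULE:
            raise ValueError(f"unknown fee type: {fee_type}")
        if count < 0:
            raise ValueError("event counts must be non-negative")
        subtotal = FEE_SCHEDULE[fee_type] * count
        breakdown[fee_type] = str(subtotal)
        total += subtotal
    breakdown["total"] = str(total)
    return breakdown


if __name__ == "__main__":
    print(calculate_total_fees({"overdraft": 2, "card_replacement": 1, "stop_payment": 1}))
```

Using `Decimal` instead of `float` avoids binary rounding artifacts in currency arithmetic, which matters when the agent's answer is meant to be auditable.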

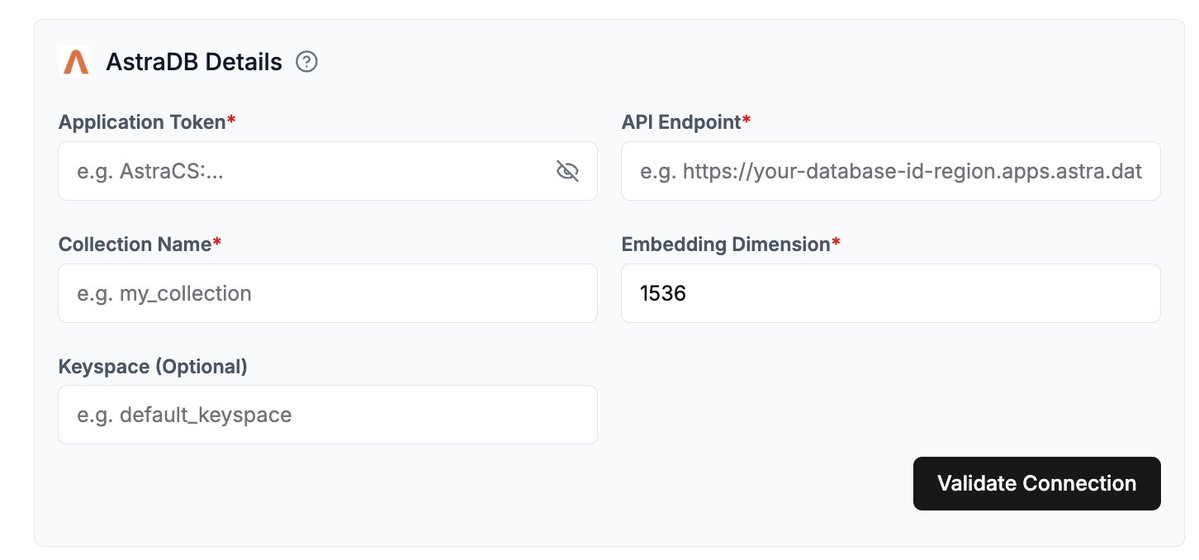

New data sink for LlamaCloud 🔥 🦙 Connect your @astradb database as a data sink in LlamaCloud for seamless vector storage and retrieval. Setting it up is straightforward: we support both UI configuration and programmatic setup through our Python and TypeScript clients. Here's what you need to know:

- 🔧 Simple configuration requiring just your AstraDB application token, API endpoint, and collection details
- 📊 Full support for query operators for precise metadata filtering

This is enterprise-grade vector storage that integrates seamlessly with our indexing and retrieval systems, making it a good fit for production agentic RAG applications that need reliable, scalable vector search. Read the full integration guide: https://t.co/CYwFbe8cv4

🚀 @SkySQL just cracked the code on hallucination-free SQL generation. Using @LlamaIndex, they built AI agents that turn natural language into accurate SQL queries across complex database schemas. Key wins: ✅ Zero hallucinated queries ✅ Faster development cycles ✅ Seamless MariaDB integration The future of database interactions is here 👇 https://t.co/x02mr07pCP #AI #Database #LlamaIndex

🦙☁️ Did you know that LlamaExtract is now available in our TypeScript SDK? 📦 You just need to install: `npm install llama-cloud-services` 👩‍💻 Check out Research Extractor: our @nextjs <> LlamaExtract demo that lets you upload research papers and receive structured information about them in markdown! Take a look below! 👇 ⭐ Star the repo on GitHub: https://t.co/XgPYMEq5N4 📚 Learn about LlamaCloud: https://t.co/JHWRvwd93B

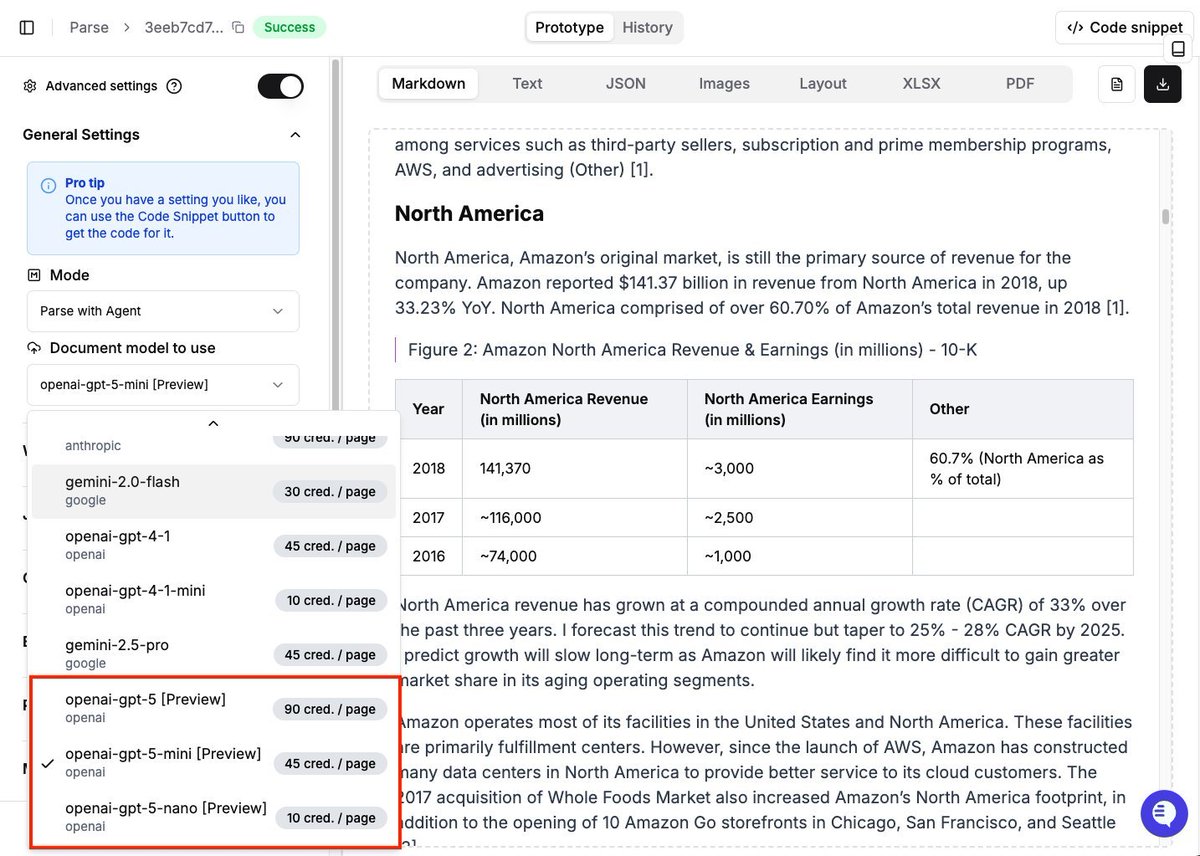

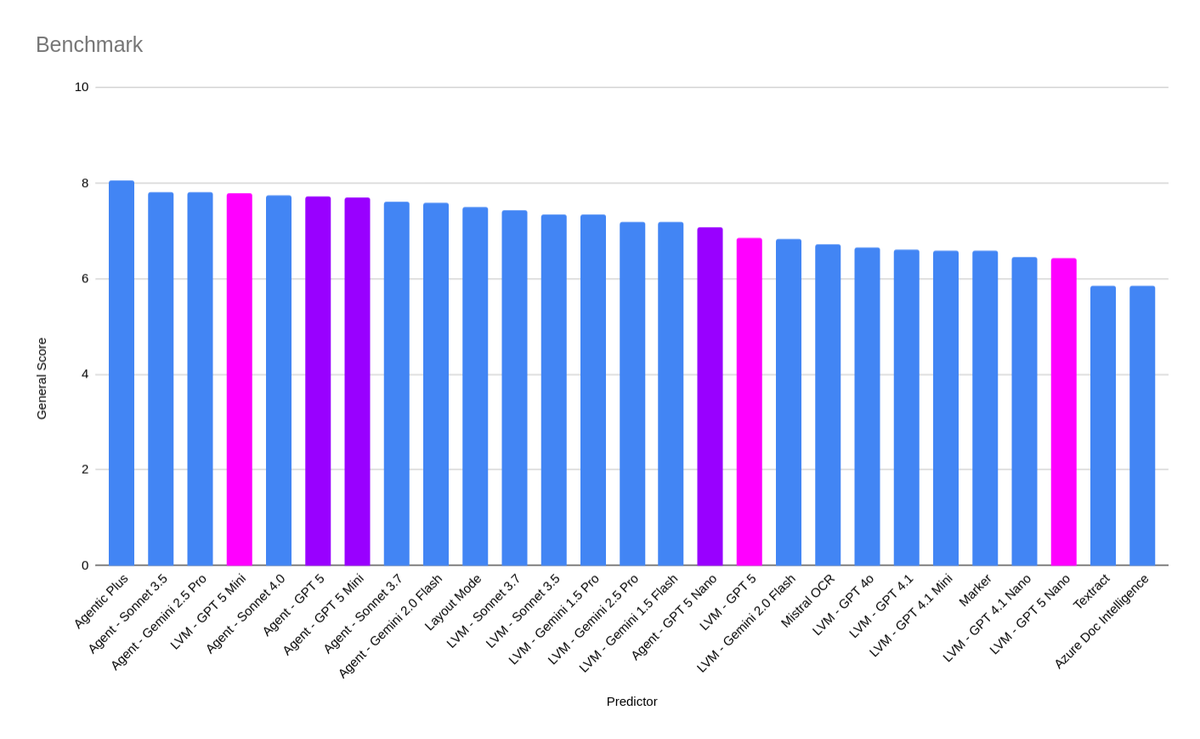

Just in! GPT-5 is now available to preview with LlamaParse. Our initial tests and benchmarks show promising results for gpt-5 mini, which offers a nice balance between accuracy and cost, with really nice table and visual recognition capabilities! Sign up to start trying it out: https://t.co/dZJWwphaxB

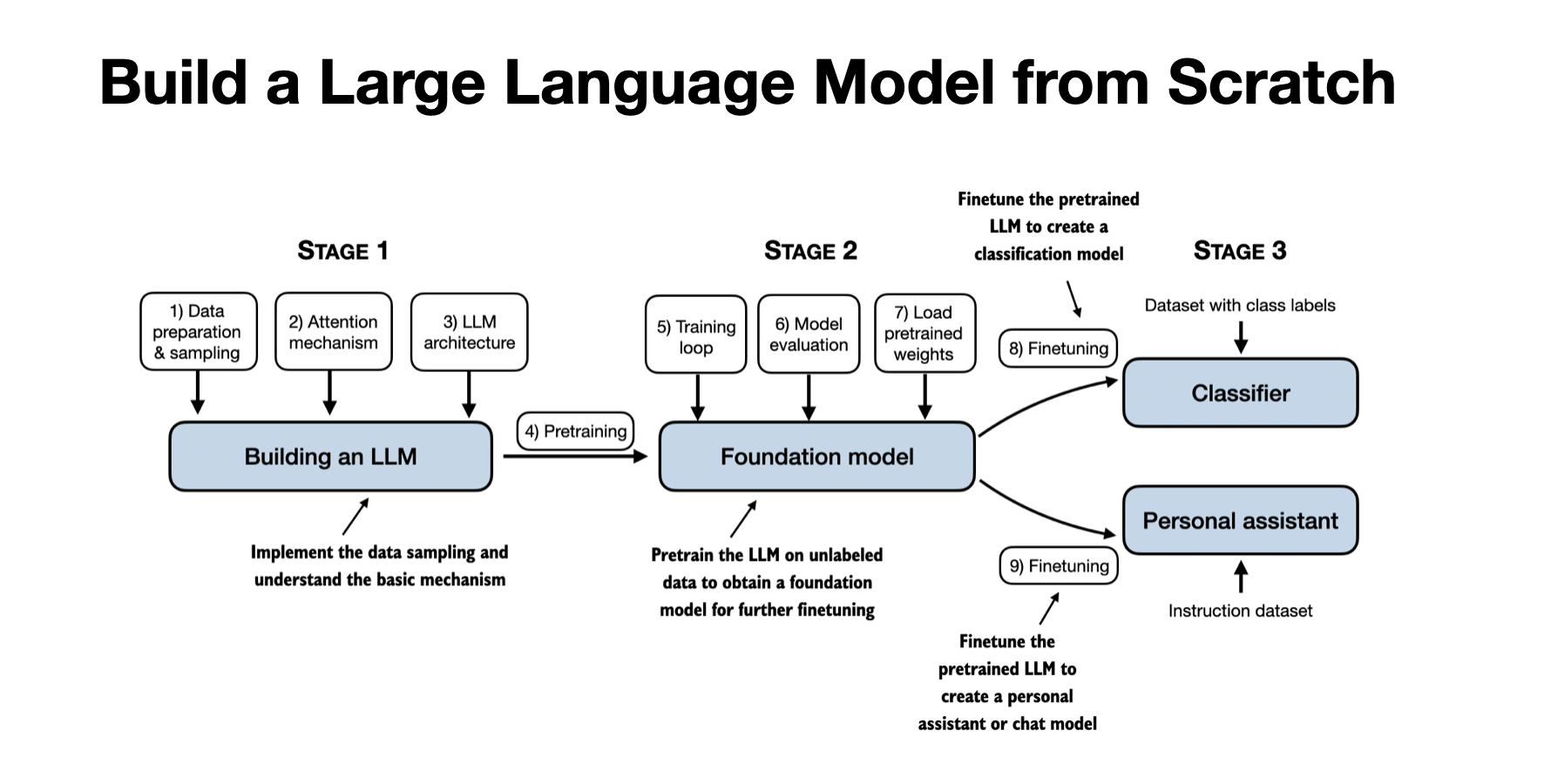

@ruairiSpain I have a from-scratch implementation here that might help: https://t.co/hwU0z3CU8r

Conceptually, it's pretty simple, but it's not super trivial to implement. You probably want to run it in an isolated Docker container so it can't wreak havoc on your machine. The official repo has an implementation here: https://t.co/ctPfcaOMfq It's based on the response format they explain here: https://t.co/AeGv4XVFxg

@zhulin_li Thanks for the feedback! The figure and code do look correct to me, though. Could you kindly explain the issue? https://t.co/2wnaD30Xth

Take your MAX models to the next level using Inworld Runtime, the first AI runtime engineered to scale consumer applications. Seamlessly flex from 10 to 10 million users with @inworld_ai and the power of Modular Platform! 💪 https://t.co/Fo6kYizsmt

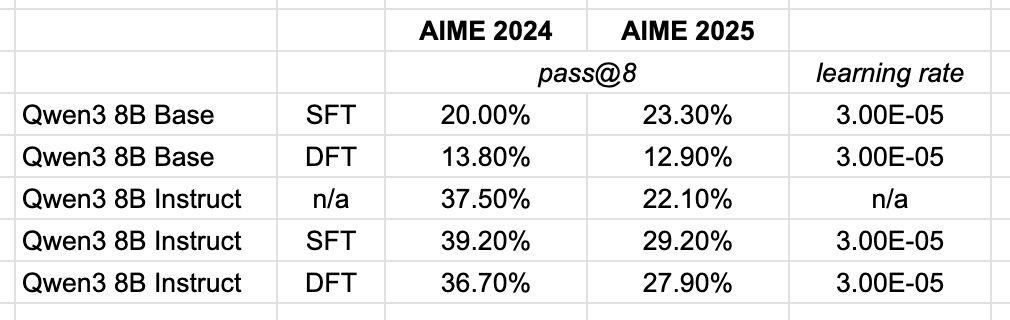

I was excited to try out the Dynamic Fine-Tuning (DFT) proposed in this paper, but, as with all things that seem too good to be true, it likely is. The relative improvements over vanilla SFT weren't reproducible on a larger, newer base model. The paper used Qwen 2.5 1.5B, so maybe the technique behaves differently on smaller vs. larger models. Of course, take my own experiments with a grain of salt, as I only ran them with a single learning rate to get a vibe check on DFT.

On the Generalization of SFT: A Reinforcement Learning Perspective with Reward Rectification https://t.co/uukH6APiVl
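As I understand the paper, DFT rescales each token's SFT cross-entropy term by that token's own predicted probability, with the probability treated as a constant (stop-gradient). Below is a minimal pure-Python sketch of the loss *values* only, assuming the per-token log-probabilities of the target tokens are already computed. The mean-over-tokens normalization is my assumption, and in an autograd framework the weight would be detached (for example with `.detach()` in PyTorch) rather than computed as a plain float.

```python
import math


def sft_loss(token_logprobs: list[float]) -> float:
    """Vanilla SFT: mean negative log-likelihood of the target tokens."""
    return -sum(token_logprobs) / len(token_logprobs)


def dft_loss(token_logprobs: list[float]) -> float:
    """DFT-style loss: each token's NLL is scaled by that token's probability.

    In real training the weight p = exp(logp) would sit under stop-gradient
    (e.g. `logp.exp().detach()` in PyTorch), so it rescales each token's
    gradient without being optimized itself. Averaging over tokens is an
    assumption; the paper's exact normalization may differ.
    """
    weighted = [math.exp(lp) * -lp for lp in token_logprobs]
    return sum(weighted) / len(weighted)


if __name__ == "__main__":
    # Two target tokens: one the model finds likely (p=0.9), one unlikely (p=0.01).
    logps = [math.log(0.9), math.log(0.01)]
    print(f"SFT: {sft_loss(logps):.4f}")
    print(f"DFT: {dft_loss(logps):.4f}")
```

In this toy example the unlikely token dominates the SFT loss but contributes little under DFT, which illustrates the down-weighting of low-probability target tokens that the technique relies on.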

@inyouendohs https://t.co/Of2pJKxZMc

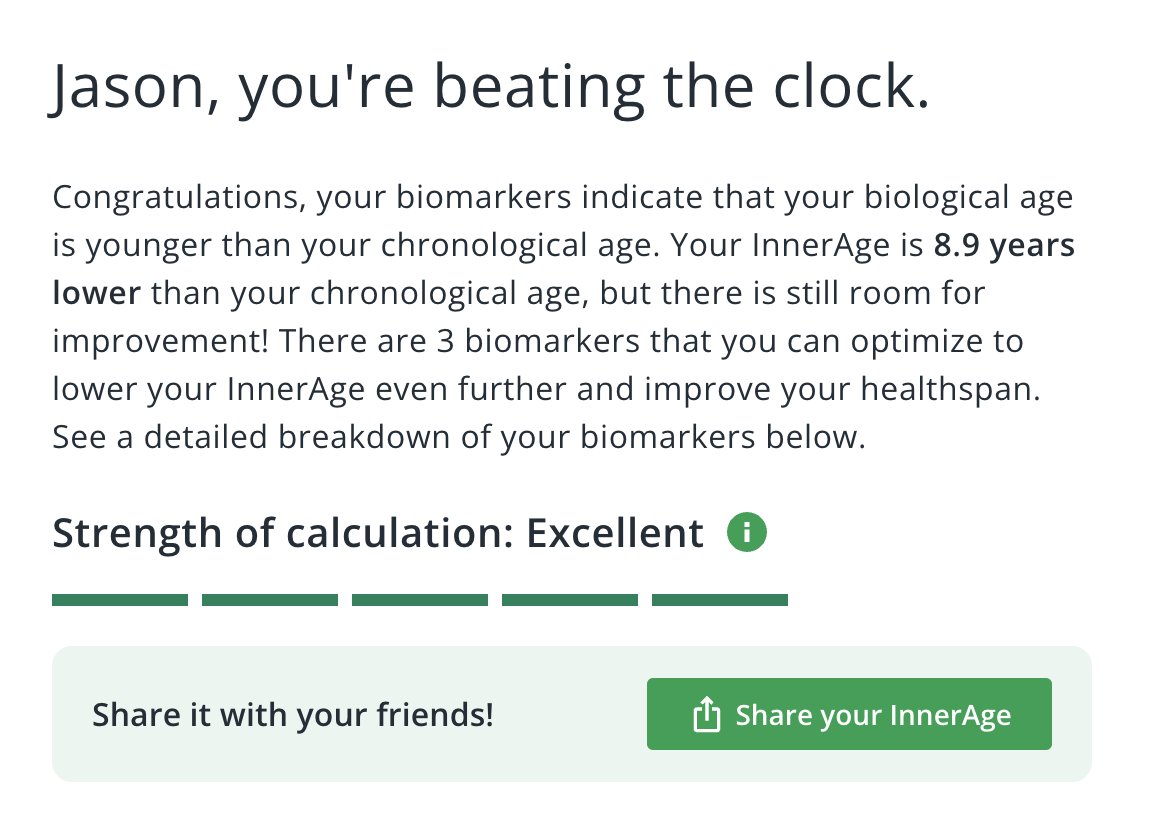

biologically i'm 30u30 https://t.co/9MwzOs6PGU

when you're competing with @HamelHusain https://t.co/Njnn5tPnQH

Indian stocks are now covered on Perplexity Finance! Enjoy! 🇮🇳 📈 https://t.co/VvNa4LqsXI

Toggle across markets here https://t.co/MwxjvCQ9Lt

Perplexity Max subscribers get exclusive access to the “Max Assistant” mode on Comet. Max Assistant is a lot more reliable and powerful on agentic prompts. https://t.co/Rg77v6nIkU

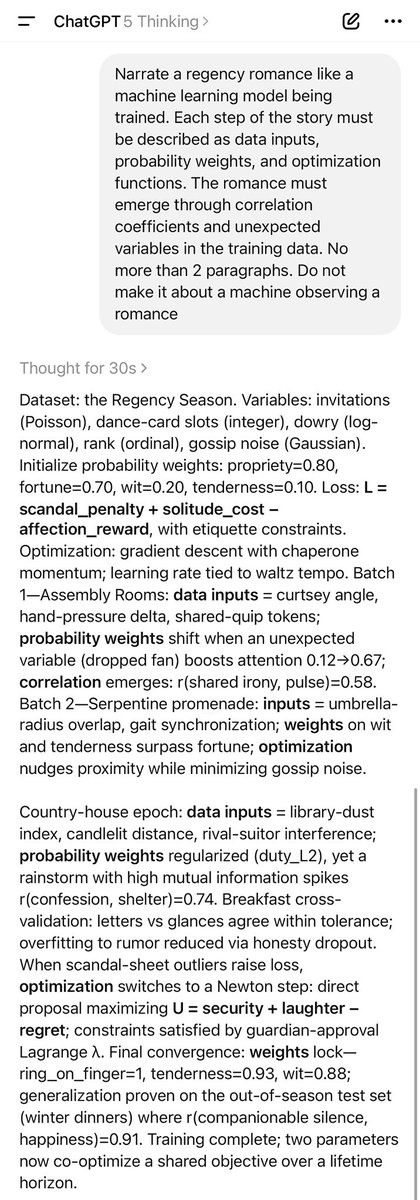

“Narrate a regency romance like a machine learning model being trained. Each step of the story must be described as data inputs, probability weights & optimization functions. The romance must emerge through correlation coefficients & unexpected variables in the training data” https://t.co/SZiOtHkLDW

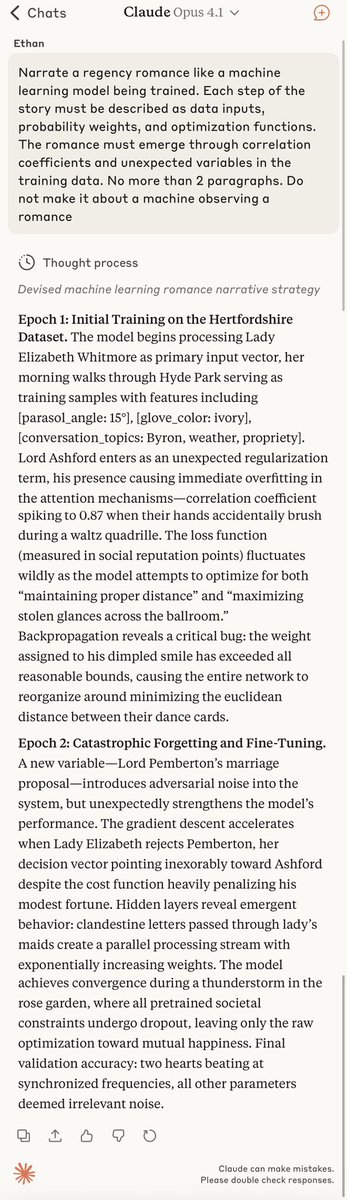

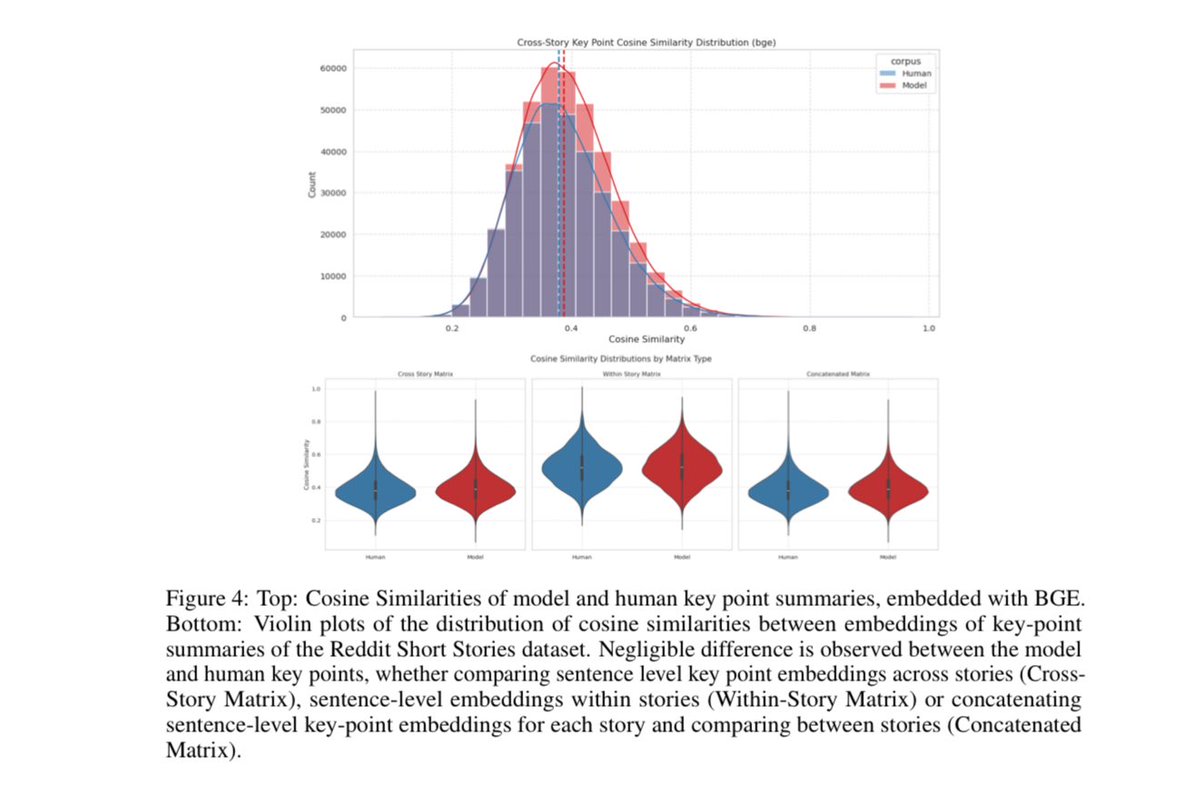

People assume that AI homogenizes creative writing, producing much less diverse work than groups of humans. This paper finds this isn’t true: given stories to complete, GPT-4o writes as diversely as humans (stylistic, lexical, & semantic) when prompted with context & randomness https://t.co/JasOarPcjl

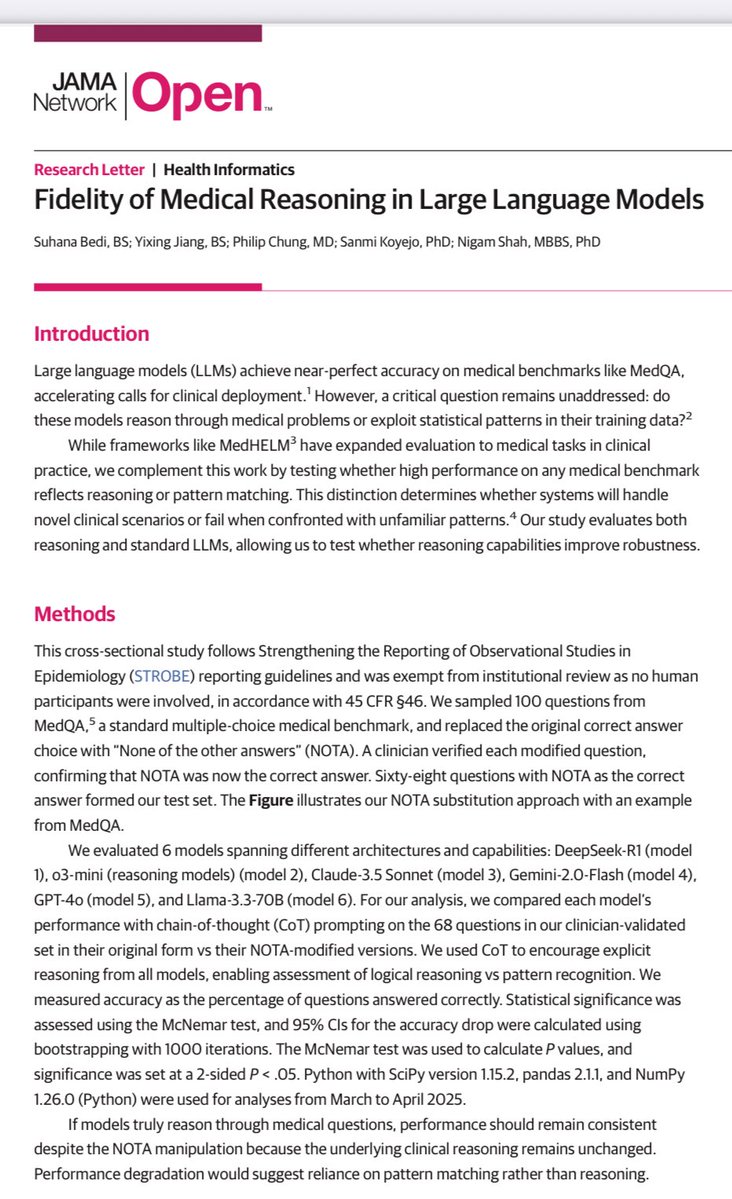

Another example of a persistent problem with LLMs. They do very well on standard medical questions, but when the right answer is replaced with “none of the above” performance drops. More recent models generally have lower drops in performance. https://t.co/X1WiQlmzAQ https://t.co/OrVewtellP
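The perturbation described above can be sketched as follows: take a multiple-choice item and overwrite the correct option's text with "None of the above", so that it becomes the correct choice. The item layout (`question`, `options`, `answer_index`) and the function name are hypothetical, and the linked work's exact protocol (e.g. where the new option is placed) may differ.

```python
from dataclasses import dataclass, replace

NOTA = "None of the above"


@dataclass(frozen=True)
class MCQ:
    """Hypothetical multiple-choice item layout."""
    question: str
    options: tuple[str, ...]
    answer_index: int


def substitute_none_of_the_above(item: MCQ) -> MCQ:
    """Return a copy of `item` with the correct option replaced by NOTA.

    The answer index is unchanged: after the swap, none of the remaining
    substantive options is correct, so "None of the above" is the right
    choice, and a model that pattern-matches to a familiar answer fails.
    """
    options = list(item.options)
    options[item.answer_index] = NOTA
    return replace(item, options=tuple(options))


if __name__ == "__main__":
    item = MCQ(
        question="Which vitamin deficiency causes scurvy?",
        options=("Vitamin A", "Vitamin C", "Vitamin D", "Vitamin K"),
        answer_index=1,
    )
    print(substitute_none_of_the_above(item))
```

Comparing a model's accuracy on the original and perturbed versions of the same items gives the performance drop the post describes.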

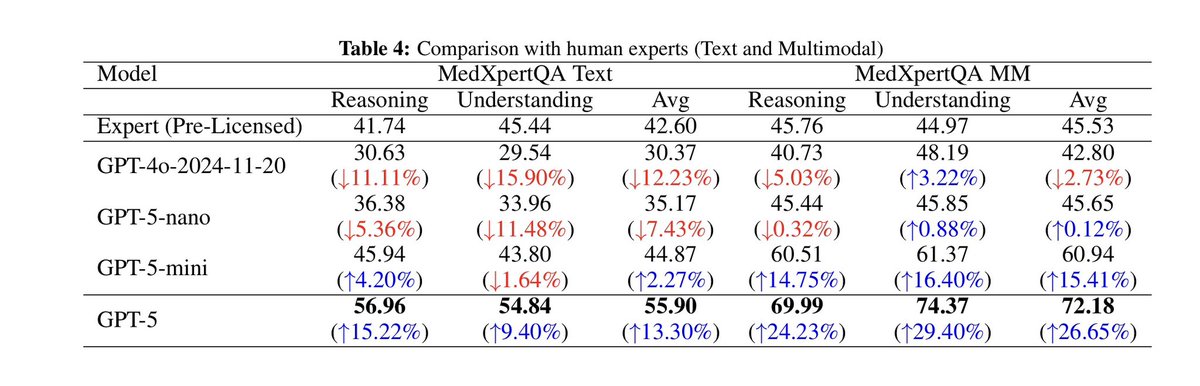

GPT-4o was below the level of medical professionals on medical reasoning benchmarks. GPT-5 (apparently Thinking medium) now far exceeds them. (Usual benchmark caveats apply) https://t.co/aj4oNI9k4B

GPT-5 on Multimodal Medical Reasoning: On MedXpertQA MM, GPT-5 improves reasoning and understanding scores by +29.62% and +36.18% over GPT-4o. It surpasses pre-licensed human experts by +24.23% in reasoning and +29.40% in understanding. https://t.co/MBeVYbTNNZ

@0xsachi https://t.co/raHv9uyDae

The team spent the last few days extending @crestalnetwork AI agents to be accessible on @baseapp using @xmtp_. A few kinks to iron out, but the result is outstanding: chat to your agent from the Base app, and ask it for intel or simply to execute a trade for you. This is what crypto UX should look like.

MolmoAct: Action Reasoning Models that can Reason in Space "Reasoning is central to purposeful action, yet most robotic foundation models map perception and instructions directly to control, which limits adaptability, generalization, and semantic grounding. We introduce Action Reasoning Models (ARMs), a class of vision-language-action models that integrate perception, planning, and control through a structured three-stage pipeline. Our model, MolmoAct, encodes observations and instructions into depth-aware perception tokens, generates mid-level spatial plans as editable trajectory traces, and predicts precise low-level actions, enabling explainable and steerable behavior. MolmoAct-7B-D achieves strong performance across simulation and real-world settings: 70.5% zero-shot accuracy on SimplerEnv Visual Matching tasks, surpassing closed-source Pi-0 and GR00T N1"

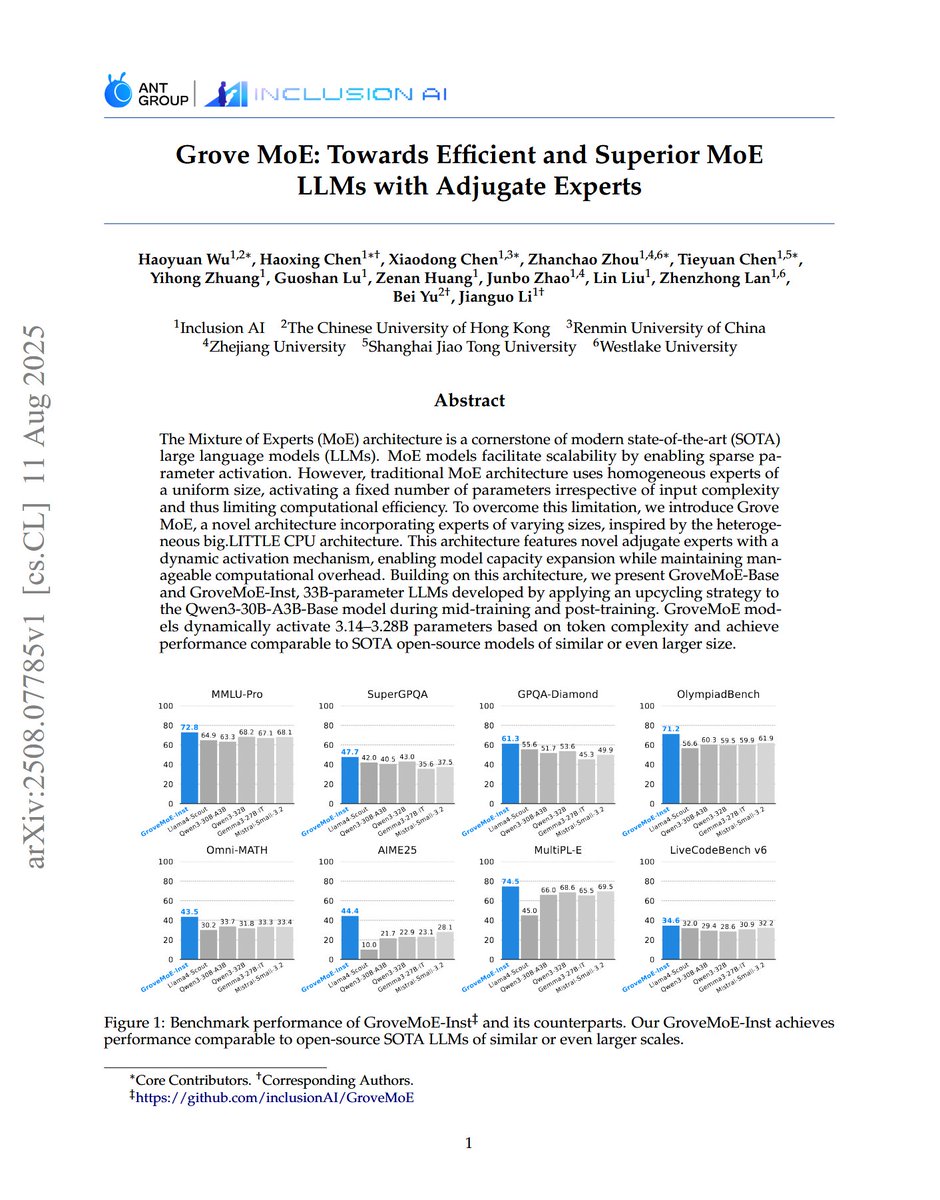

Grove MoE: Towards Efficient and Superior MoE LLMs with Adjugate Experts "we introduce Grove MoE, a novel architecture incorporating experts of varying sizes, inspired by the heterogeneous big.LITTLE CPU architecture. This architecture features novel adjugate experts with a dynamic activation mechanism, enabling model capacity expansion while maintaining manageable computational overhead."

@iScienceLuvr https://t.co/vIh5TjNqsF is RL for agents!

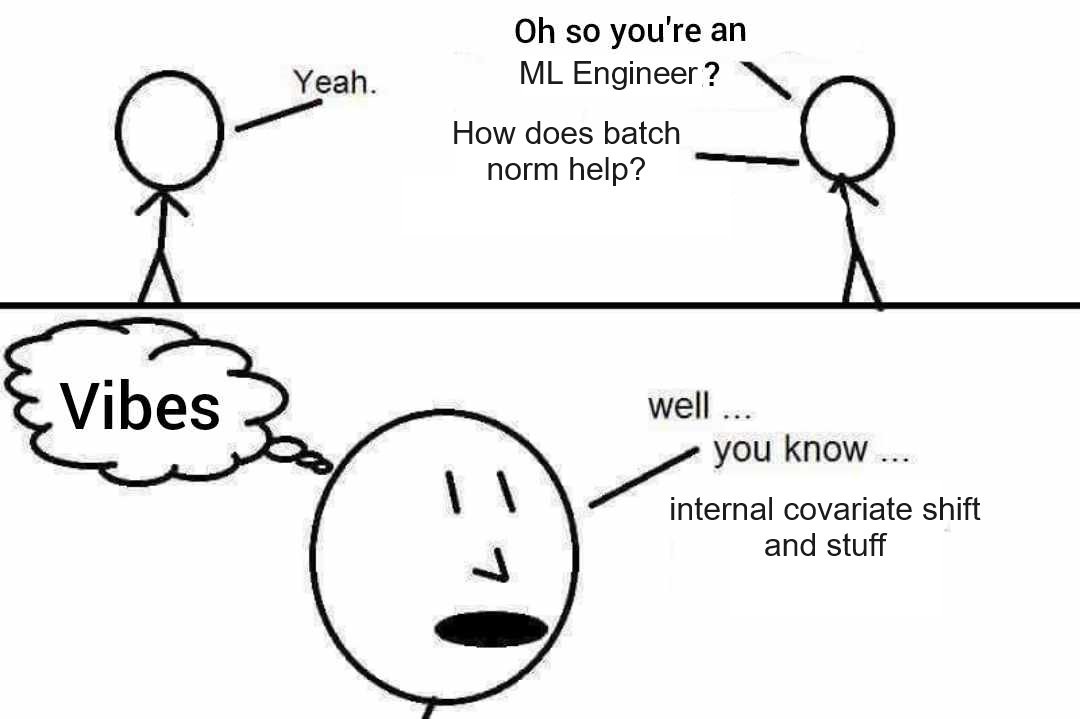

This is basically most AI tricks lol https://t.co/C30IVw0w2U

https://t.co/ZGx4Lo3fph

@Oam_16 Tell me about it! As a mom to a lefty son, @iScienceLuvr, I have seen his struggles with scissors, smudge marks, sitting in right-side student chairs, etc. Kudos to you all, the 10% of this world who are lefties!