@iScienceLuvr

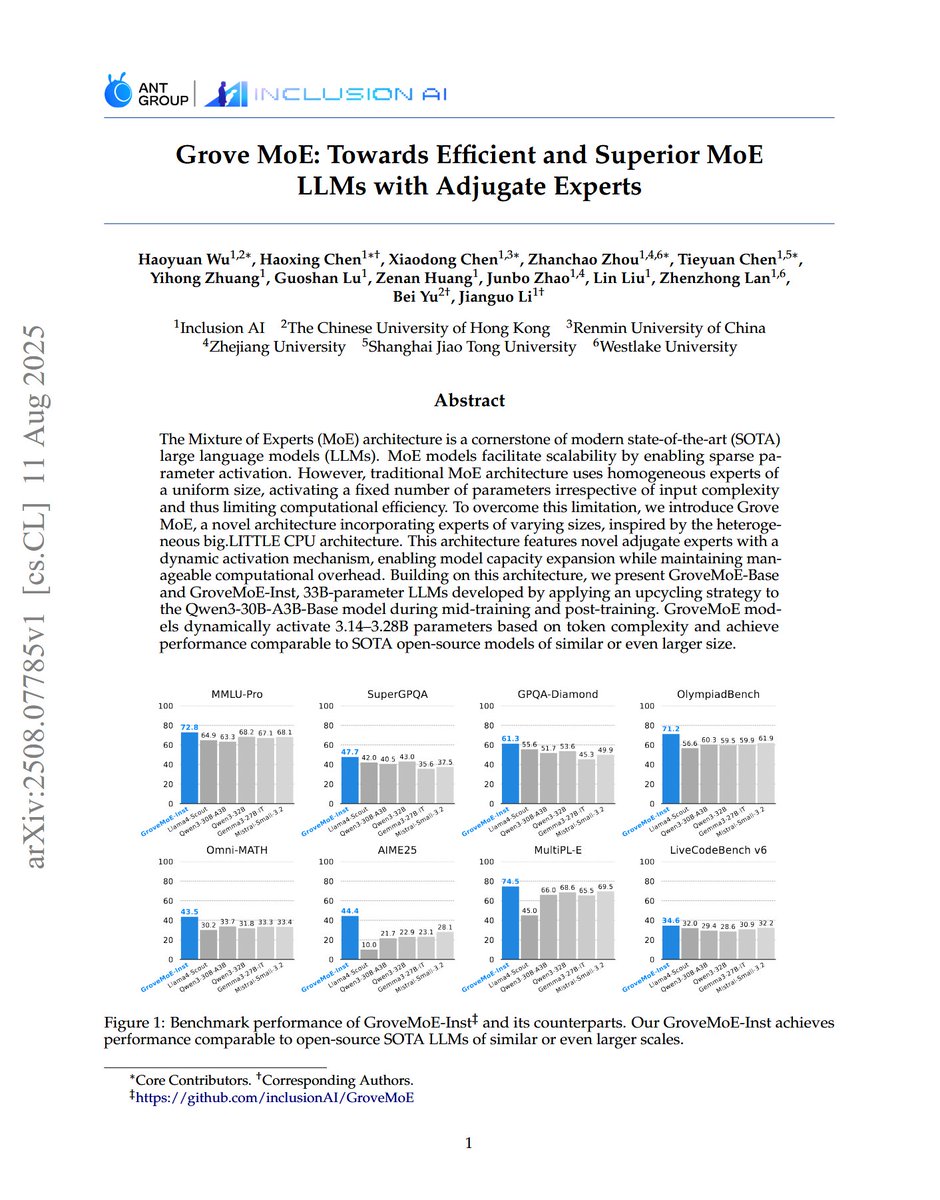

Grove MoE: Towards Efficient and Superior MoE LLMs with Adjugate Experts

"we introduce Grove MoE, a novel architecture incorporating experts of varying sizes, inspired by the heterogeneous big.LITTLE CPU architecture. This architecture features novel adjugate experts with a dynamic activation mechanism, enabling model capacity expansion while maintaining manageable computational overhead."
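
To make "dynamic activation" concrete, here is a toy sketch of one plausible reading, not the paper's implementation. It assumes (the quote does not say this) that routed experts are partitioned into groups, that each group shares one small adjugate expert, and that a group's adjugate expert runs once per token whenever at least one of that group's experts is selected. The function name, the grouping, and the cost numbers are all hypothetical.

```python
from typing import Dict, List


def activated_cost(selected: List[int], group_of: Dict[int, int],
                   expert_cost: int, adjugate_cost: int) -> int:
    """Per-token compute under the ASSUMED grouping scheme (illustrative only).

    Every selected routed expert is paid for in full. Each group's adjugate
    expert is paid for once, no matter how many experts from that group
    were selected.
    """
    groups_touched = {group_of[e] for e in selected}
    return len(selected) * expert_cost + len(groups_touched) * adjugate_cost


# Hypothetical layout: 8 routed experts in 2 groups of 4.
group_of = {e: e // 4 for e in range(8)}

# Both selected experts fall in group 0: one adjugate expert runs.
clustered = activated_cost([0, 1], group_of, expert_cost=10, adjugate_cost=3)

# Selected experts fall in different groups: two adjugate experts run.
spread = activated_cost([0, 5], group_of, expert_cost=10, adjugate_cost=3)

print(clustered, spread)
```

Under these assumptions the compute per token varies with how the router's choices cluster, which is one way extra capacity could be added while keeping overhead bounded.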