Your curated collection of saved posts and media

This is incredible https://t.co/pv5mZOP2Oz

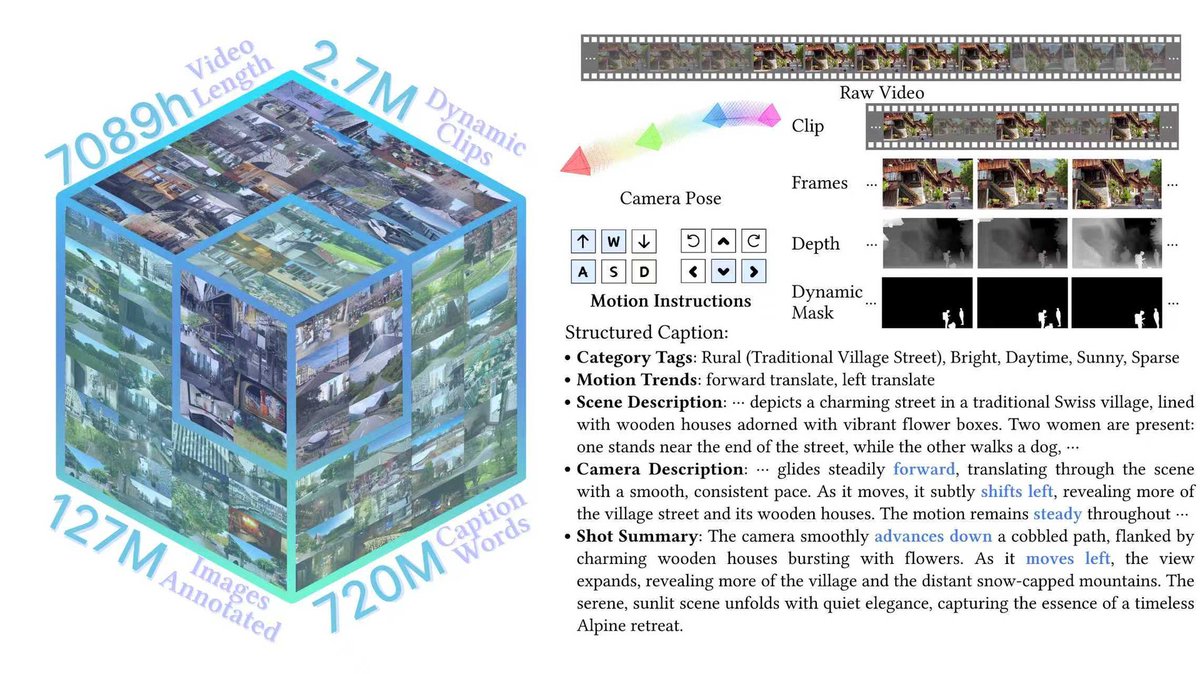

Introducing SpatialVID: A massive new video dataset for 3D spatial intelligence. Crucial for training next-gen models, it features over 7,000 hours of diverse, in-the-wild video with dense annotations like camera poses, depth maps, and dynamic masks. https://t.co/FJXyPgOxxy

Meet MapAnything – a transformer that directly regresses factored metric 3D scene geometry (from images, calibration, poses, or depth) in an end-to-end way. No pipelines, no extra stages. Just 3D geometry & cameras, straight from any type of input, delivering new state-of-the-art results 🚀 One universal model enables SoTA for: 🔥 Mono Depth Estimation 🔥 Multi-View SfM 🔥 Multi-View Stereo 🔥 Depth Completion 🔥 Registration … and many more possibilities! – plus everything is metric 🎯 We release code for data processing, training, benchmarking & ablations – everything Apache 2.0! Details & Links 👇

At Matroid, we are making computer vision accessible + impactful across industries. More importantly, we're hiring. Stop by, meet the team, and let’s talk about building the future together. #UCBerkeley #EngineeringCareers #AI #ComputerVision https://t.co/hxorfpKGzX

a=(y,d=mag(k=(y<5?6+sin(y^1)*6:4+cos(y))*cos(i+t/4),e=y/3-13)+sin(e/4-t)/3)=>point((q=y*k/5*(2+sin(d*2+y-t*4)))+90*cos(c=d/3-t/2+i%2)+200,q*sin(c)+d*29-170) t=0,draw=$=>{t||createCanvas(w=400,w);background(9).stroke(w,96);for(t+=PI/90,i=1e4;i--;)a(i/790)}//#つぶやきProcessing https://t.co/7V117yf699

All systems go at @flySFO! We’ve been approved by the airport to begin operations, and will start testing soon. More here: https://t.co/0SGUdcl3sj https://t.co/BTfmuhfr8Z

1/n I’m really excited to share that our @OpenAI reasoning system got a perfect score of 12/12 during the 2025 ICPC World Finals, the premier collegiate programming competition where top university teams from around the world solve complex algorithmic problems. This would have placed it first among all human participants. 🥇🥇

An exciting moment for AI in complex algorithmic reasoning & coding. Our new Gemini Advanced model achieved Gold at the ICPC, a programming contest close to my heart! Also the beginning of a great journey in this space. So proud of the amazing team: https://t.co/Gy0Ix6oemx 1/4

Weekend :) https://t.co/is3WMOQlBk

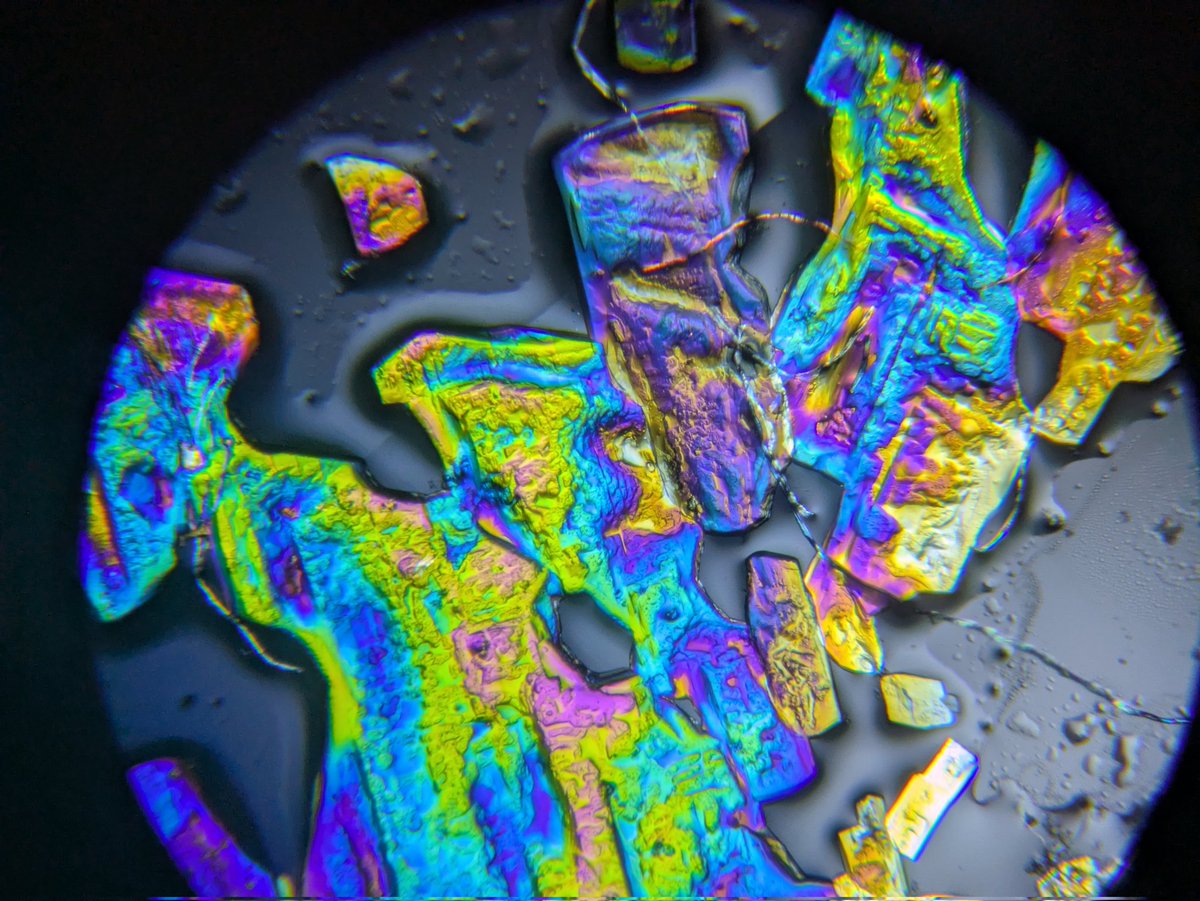

Left eye vs right eye looking at white LED light through a spectroscope. https://t.co/yHXrEbLWSs

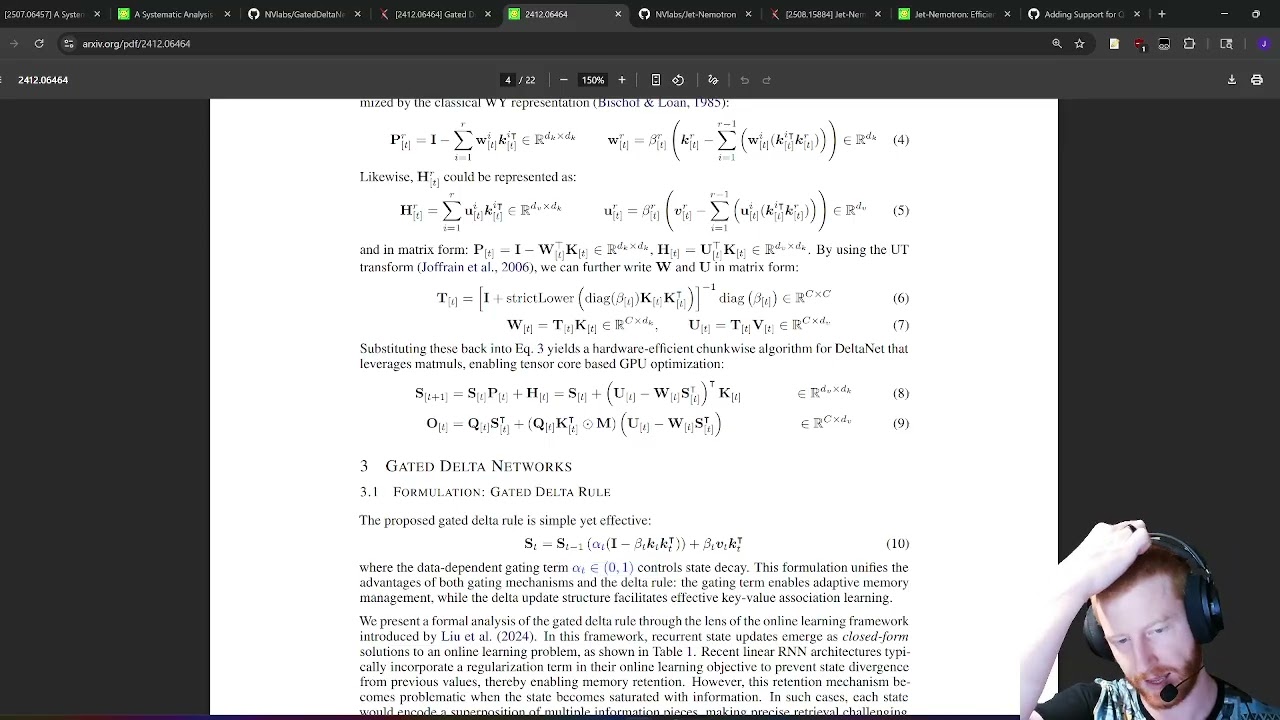

Wondering what the recent 'hybrid linear attention' buzz is about? I recorded a quick video looking at Jet Nemotron, Gated Delta Net and related pieces, prompted by the next Qwen possibly being a nice-looking hybrid model :) Hope it's useful: https://t.co/aCVgaxxESR

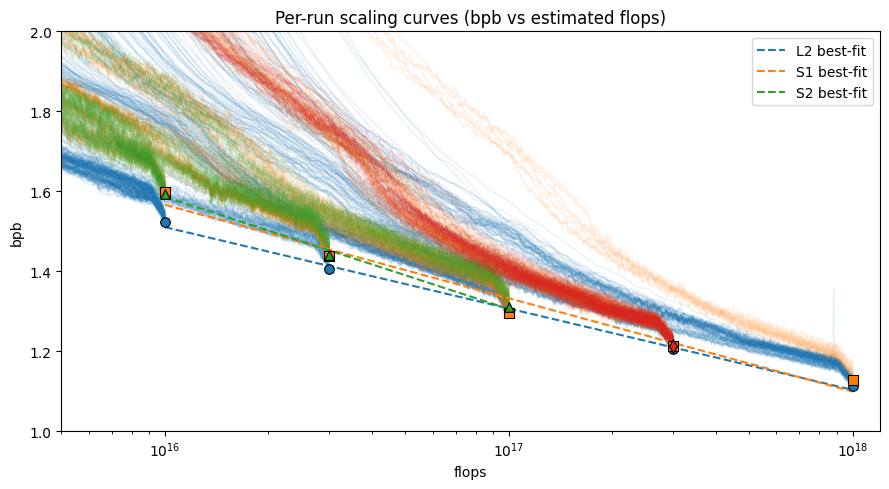

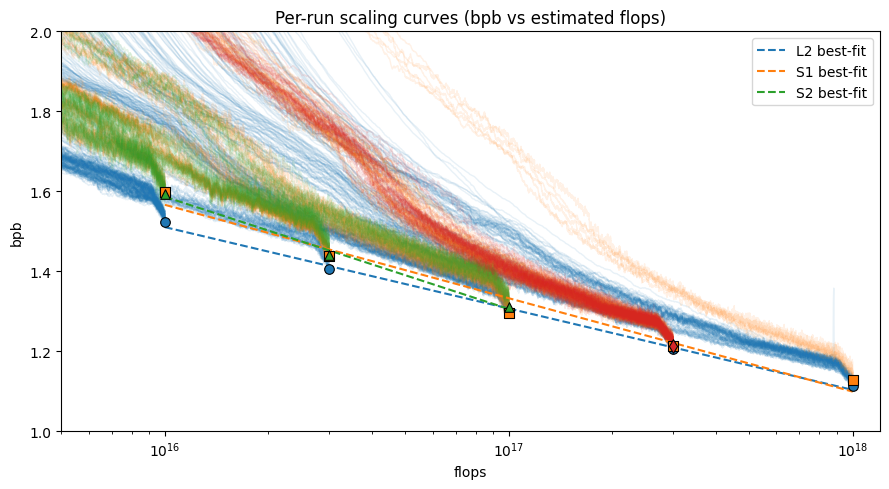

(5/6) https://t.co/Hhw069sXPP

Slow mo IPA dropping into water, surface tension does some fun stuff https://t.co/NJkha5EhVU

Be Alex Krizhevsky. Born in the Soviet Union. Join Hinton’s lab. Create AlexNet. Train it on GPUs in your bedroom. Breaks every record. Spark the Deep Learning revolution. Get 181,495 citations. Disappear. https://t.co/Ou7GfFa4Y4

Replit just released the ultimate coding agent. It can build its own AI agents and build, debug, and test full apps end-to-end. Not just snippets. Not just suggestions. 𝗙𝗼𝗿 𝘁𝗵𝗲 𝗳𝗶𝗿𝘀𝘁 𝘁𝗶𝗺𝗲, 𝗮𝗻 𝗔𝗜 𝗰𝗮𝗻 • Run autonomously for up to 200 minutes • Self-test in a browser and fix bugs in real time • Spin up new agents that live in Slack, Telegram, or on a schedule 𝗪𝗵𝘆 𝘁𝗵𝗶𝘀 𝗺𝗮𝘁𝘁𝗲𝗿𝘀 Most AI tools stop at “prototyping.” They get stuck when it’s time to test, debug, or refactor. 𝗪𝗵𝗮𝘁'𝘀 𝗻𝗲𝘄 𝗶𝗻 𝗔𝗴𝗲𝗻𝘁 3 ▸ 200 minutes of autonomous runtime ▸ Automated Testing: 3× faster and 10× cheaper ▸ Live Monitoring with your phone. 𝗔𝘂𝘁𝗼𝗺𝗮𝘁𝗲 𝗔𝗻𝘆𝘁𝗵𝗶𝗻𝗴 For the first time, Agent 3 can generate other agents: → A Slack bot to query your data. → A Telegram bot to track habits or send reminders. → A daily automation that emails your stock portfolio update.

I tested out some Midjourney styles with "an open door" to weird effect, then edited together in a @heyglif workflow with Kling and MMAudio. Posted about it and the MJ styles result in my latest newsletter (link follows). https://t.co/sxqffjzs9I

This is wild! Don't sleep on Replit Agent 3. It makes it extremely easy to vibe code AI automation workflows. Watch how I use it to build a workflow that tracks new Claude Code releases and sends Slack notifications. Zero code written! https://t.co/q98tmbqzFq

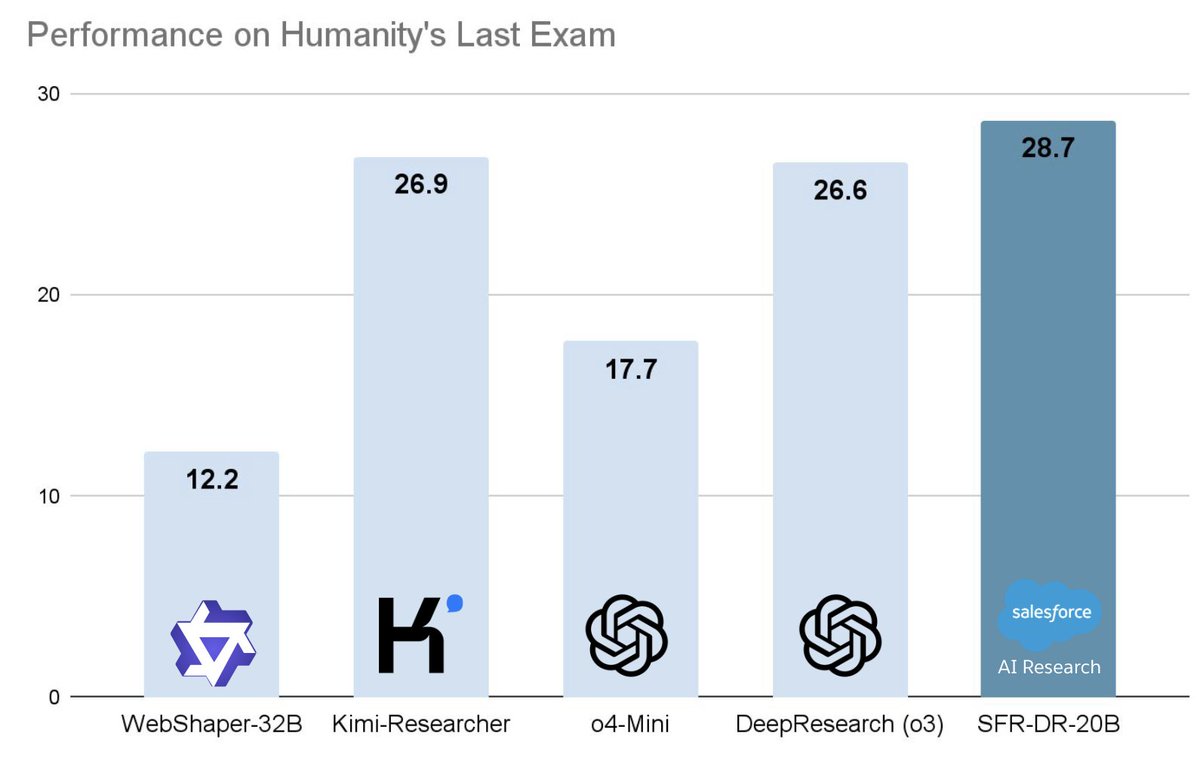

Meet SFR-DeepResearch (SFR-DR) 🤖: our RL-trained autonomous agents that can reason, search, and code their way through deep research tasks. 🚀SFR-DR-20B achieves 28.7% on Humanity's Last Exam (text-only) using only web search 🔍, browsing 🌐, and Python interpreter 🐍, surpassing DeepResearch with OpenAI o3 and Kimi Researcher. 🤖SFR-DR agents are trained to operate independently, without pre-defined multi-agent workflows. They autonomously plan, reason, and propose and take actions as defined by their tools. 🔄SFR-DR agents are trained with end-to-end RL. Starting from reasoning-optimized models, our RL pipeline carefully preserves reasoning abilities while training models to become more capable research agents. 📝SFR-DR agents are also trained to manage their own memory by summarizing previous results when context becomes limited. This provides a virtually unlimited context window, enabling long-horizon tasks. Paper: https://t.co/32idhdknhh #AIAgents #ReinforcementLearning #DeepResearch

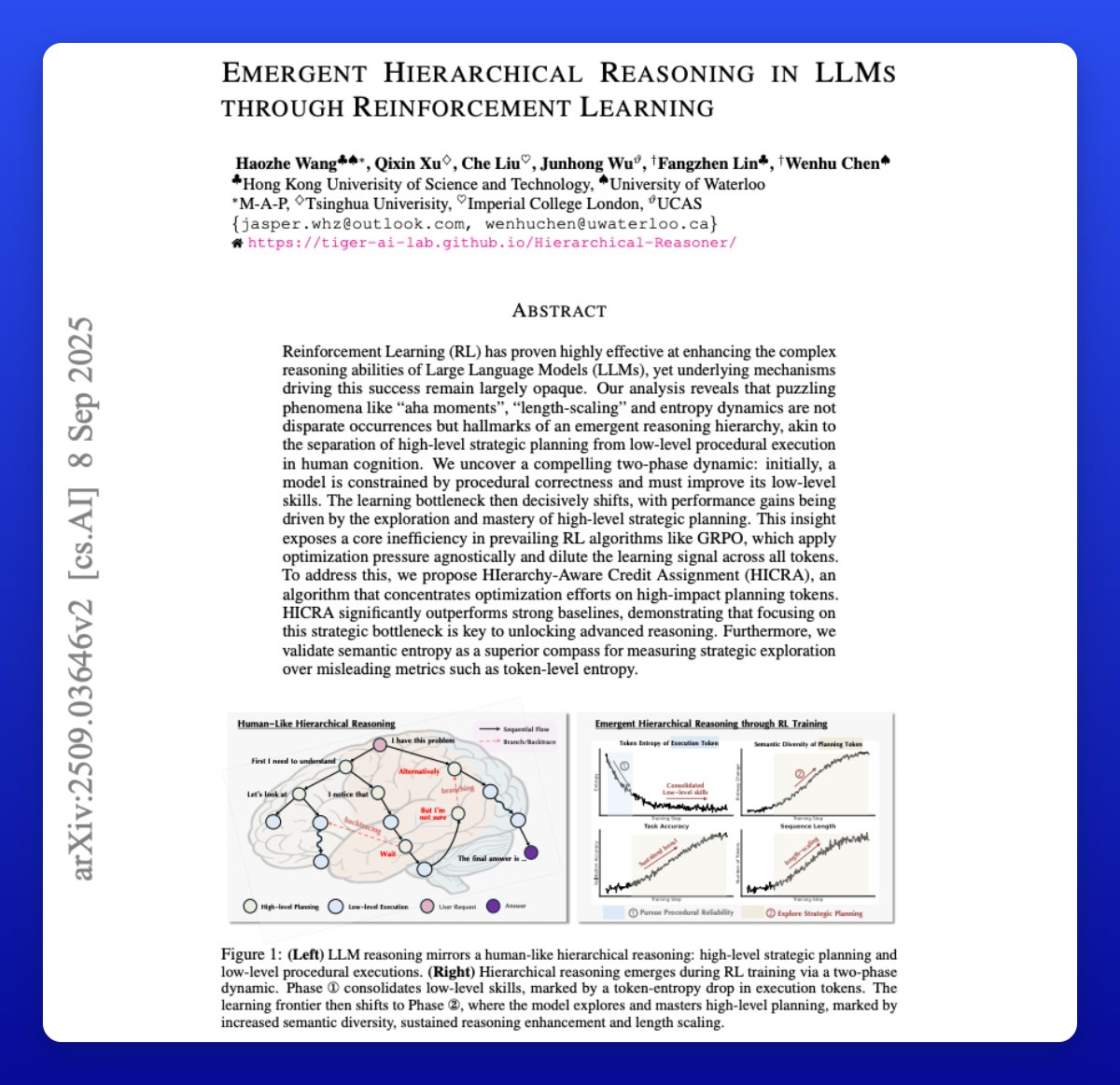
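The memory-management idea in the SFR-DR post above (summarize earlier results once the context gets tight) can be illustrated with a small Python sketch. This is not the paper's method: `AgentMemory`, `count_tokens`, and `stub_summarize` are hypothetical names made up for illustration, the word count stands in for a real tokenizer, and the summarizer is a placeholder where an actual agent would call its own model.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word (a real tokenizer differs).
    return len(text.split())


def stub_summarize(entries: List[str]) -> str:
    # Placeholder for a model-generated summary: keeps the first five words of each entry.
    return "SUMMARY: " + " | ".join(" ".join(e.split()[:5]) for e in entries)


class AgentMemory:
    """Hypothetical context buffer that compresses older results when over budget."""

    def __init__(self, budget: int,
                 summarize: Callable[[List[str]], str] = stub_summarize) -> None:
        self.budget = budget
        self.summarize = summarize
        self.entries: List[str] = []

    def total_tokens(self) -> int:
        return sum(count_tokens(e) for e in self.entries)

    def add(self, result: str) -> None:
        self.entries.append(result)
        # Over budget: fold everything except the newest result into one summary entry.
        if self.total_tokens() > self.budget and len(self.entries) > 1:
            older, newest = self.entries[:-1], self.entries[-1]
            self.entries = [self.summarize(older), newest]


memory = AgentMemory(budget=12)
memory.add("alpha beta gamma delta epsilon zeta eta")
memory.add("one two three four five six seven")
print(memory.entries)
```

With the crude stub, the compressed buffer can still sit above the budget; a real summarizer would be expected to produce something much shorter than its input, and the budget would be measured in model tokens.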

Emergent Hierarchical Reasoning in LLMs: The paper argues that RL improves LLM reasoning via an emergent two-phase hierarchy. First, the model firms up low-level execution, then progress hinges on exploring high-level planning. More on this interesting analysis: https://t.co/Tp95C5dfnA

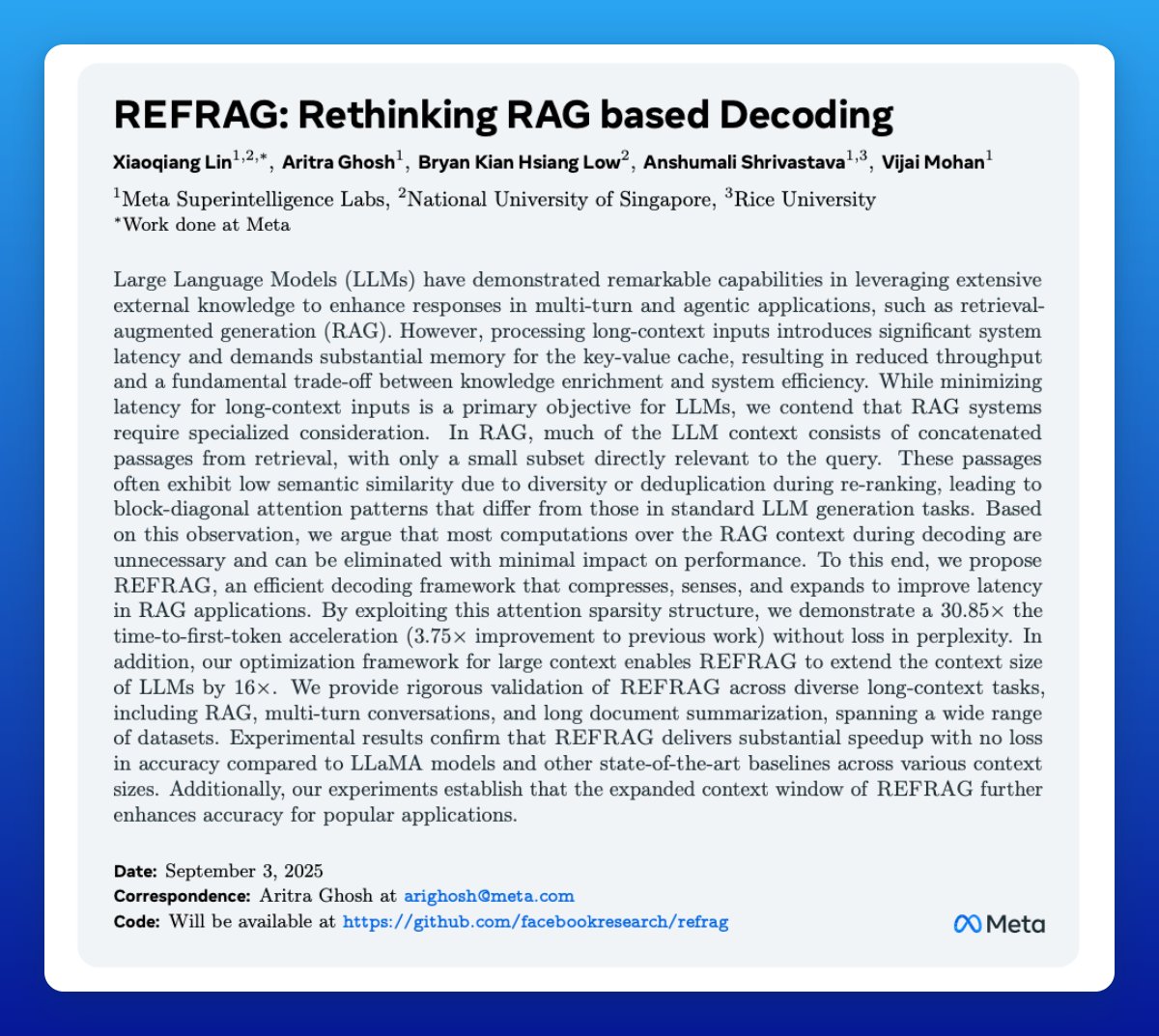

Another impressive paper by Meta. It's a plug-in decoding strategy for RAG systems that slashes latency and memory use. REFRAG achieves up to 30.85× TTFT acceleration. Let's break down the technical details: https://t.co/0mydoan9wA

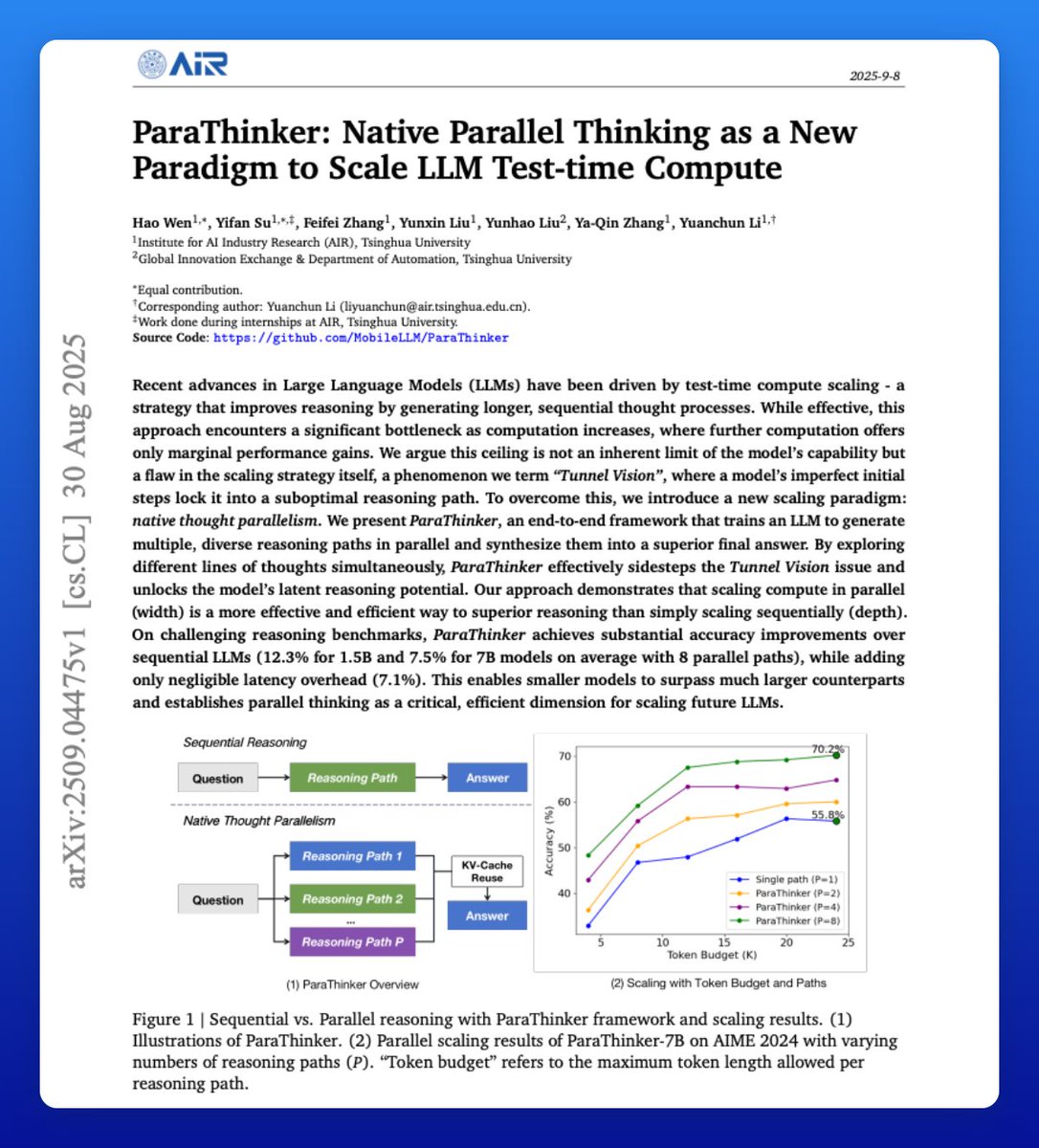

Another banger paper on reasoning LLMs! They train models to "think wider" to explore multiple ideas that produce better responses. It's called native thought parallelism and proves superior to sequential reasoning. Great read for AI devs! Here are the technical details: https://t.co/zcrnPiRsRp

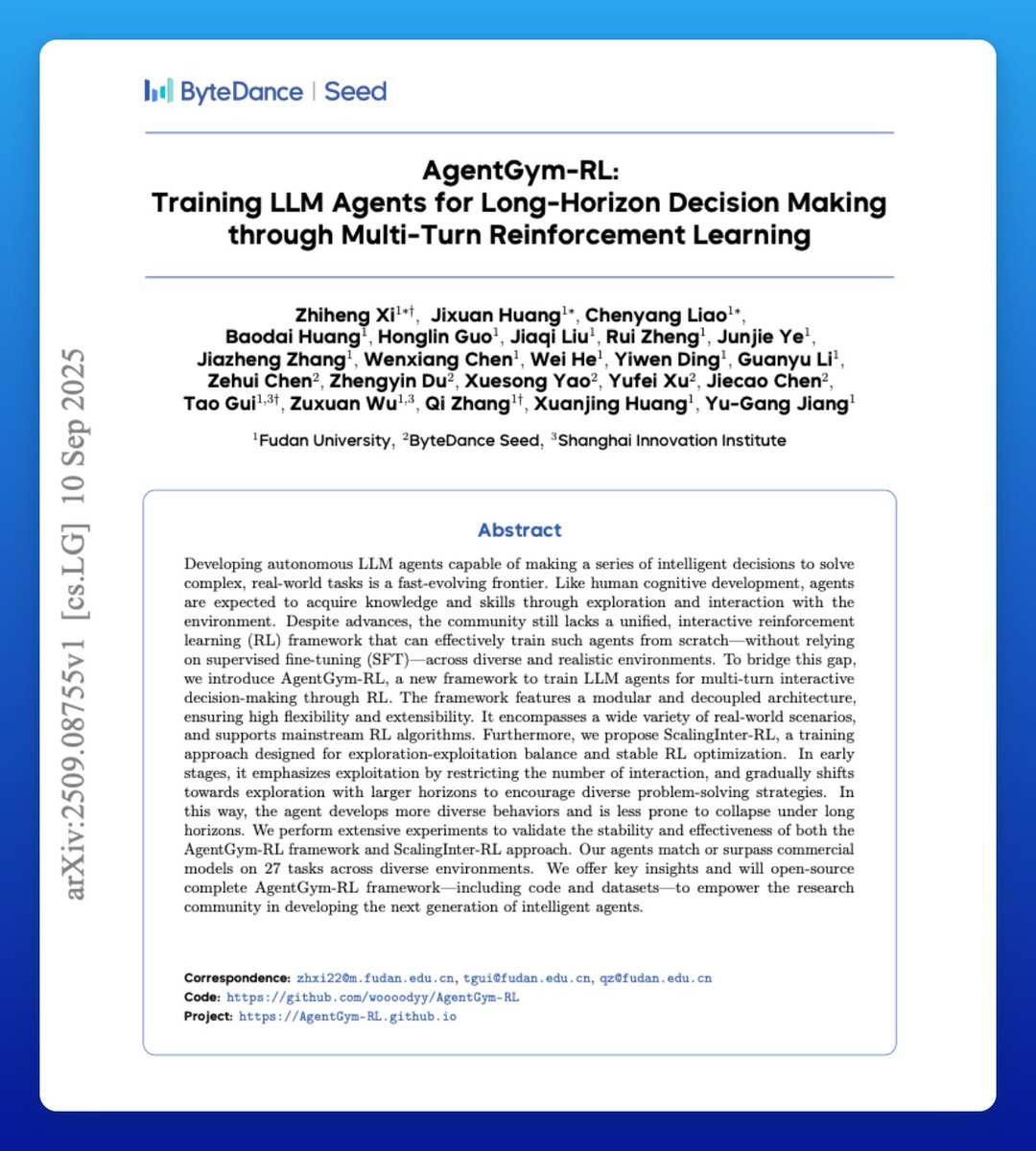

AI Agents suck at long-horizon tasks. AgentGym-RL aims to train strong LLM agents with long-horizon capabilities. Finds that post-training and test-time compute scale better than model size alone for agentic tasks. Leads to 7B models that beat much larger systems. My notes: https://t.co/rfqmHaGWUr

Using small language models in a multi-agent system is not always good. Here is one scenario (multi-agent debate) where weaker LLM agents often disrupt the performance of the stronger agents. This focuses on LLM agents engaged in debate, but it might be a more pervasive issue. https://t.co/Jj5AaUXPIf

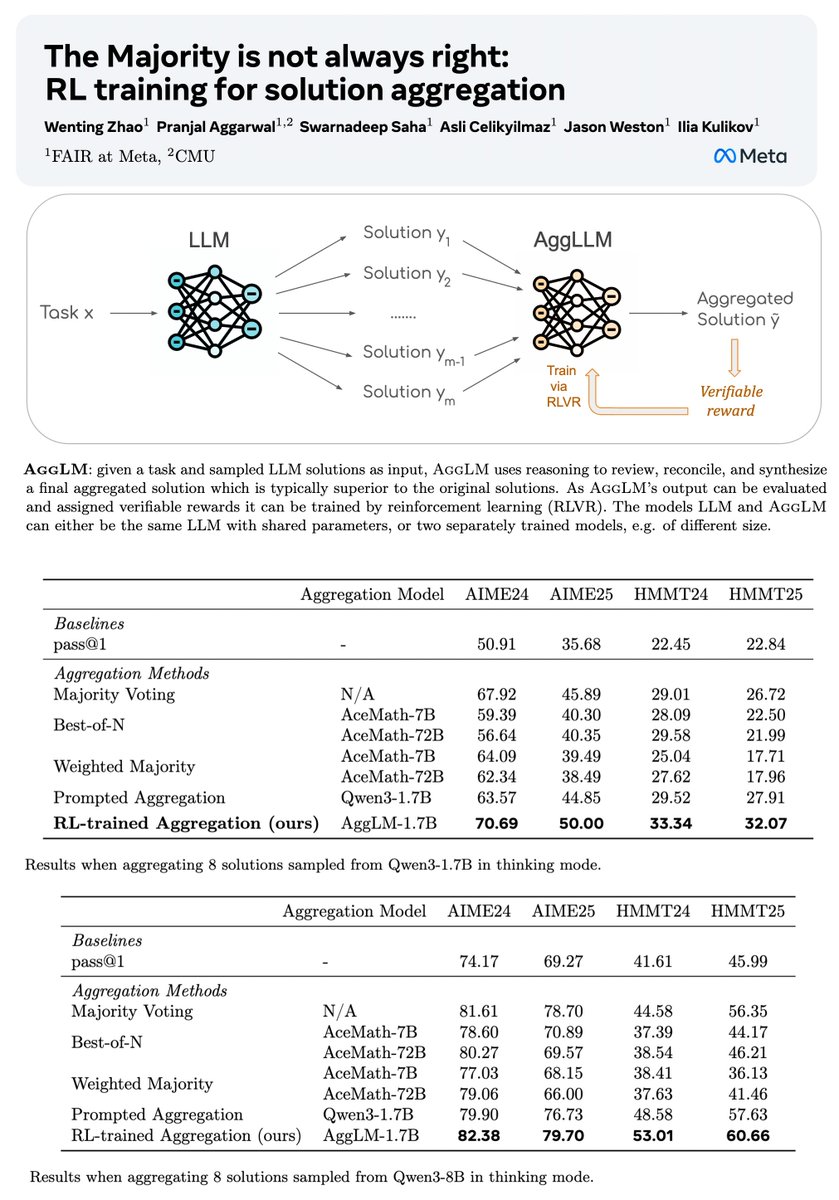

🌀New Test-time scaling method 🌀 📝: https://t.co/yqWvOMZpwq - Use RL to train an LLM solution aggregator – Reasons, reviews, reconciles, and synthesizes a final solution -> Much better than existing techniques! - Simple new method. Strong results across 4 math benchmarks. 🧵1/5 https://t.co/1Y3LaX8DyB

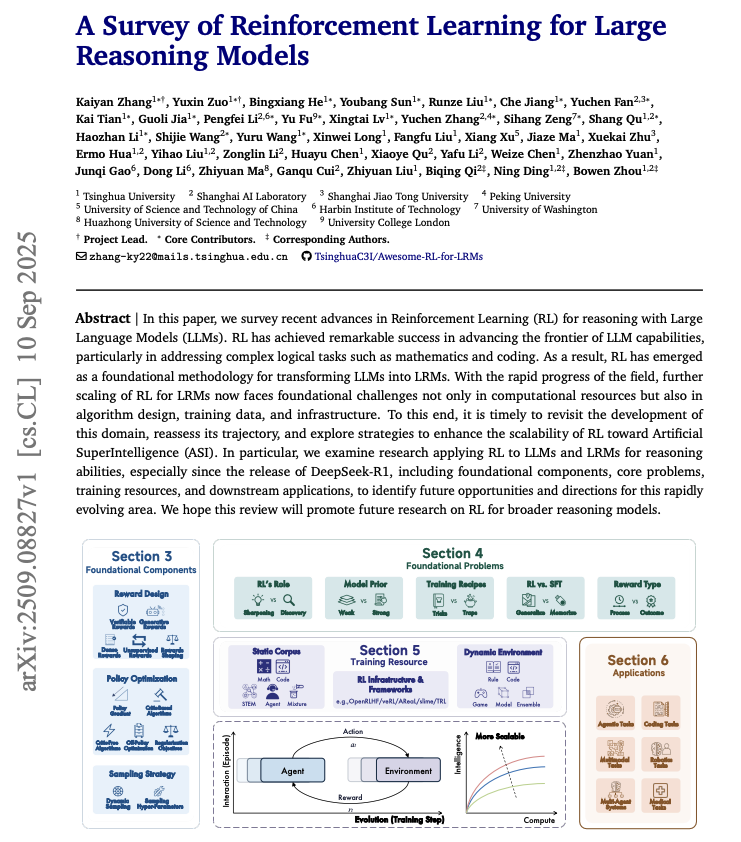

A Survey of Reinforcement Learning for Large Reasoning Models. 100+ pages covering foundational components, core problems, training resources, and applications. Great recaps of RL for LLMs. https://t.co/tyMcONkFWQ

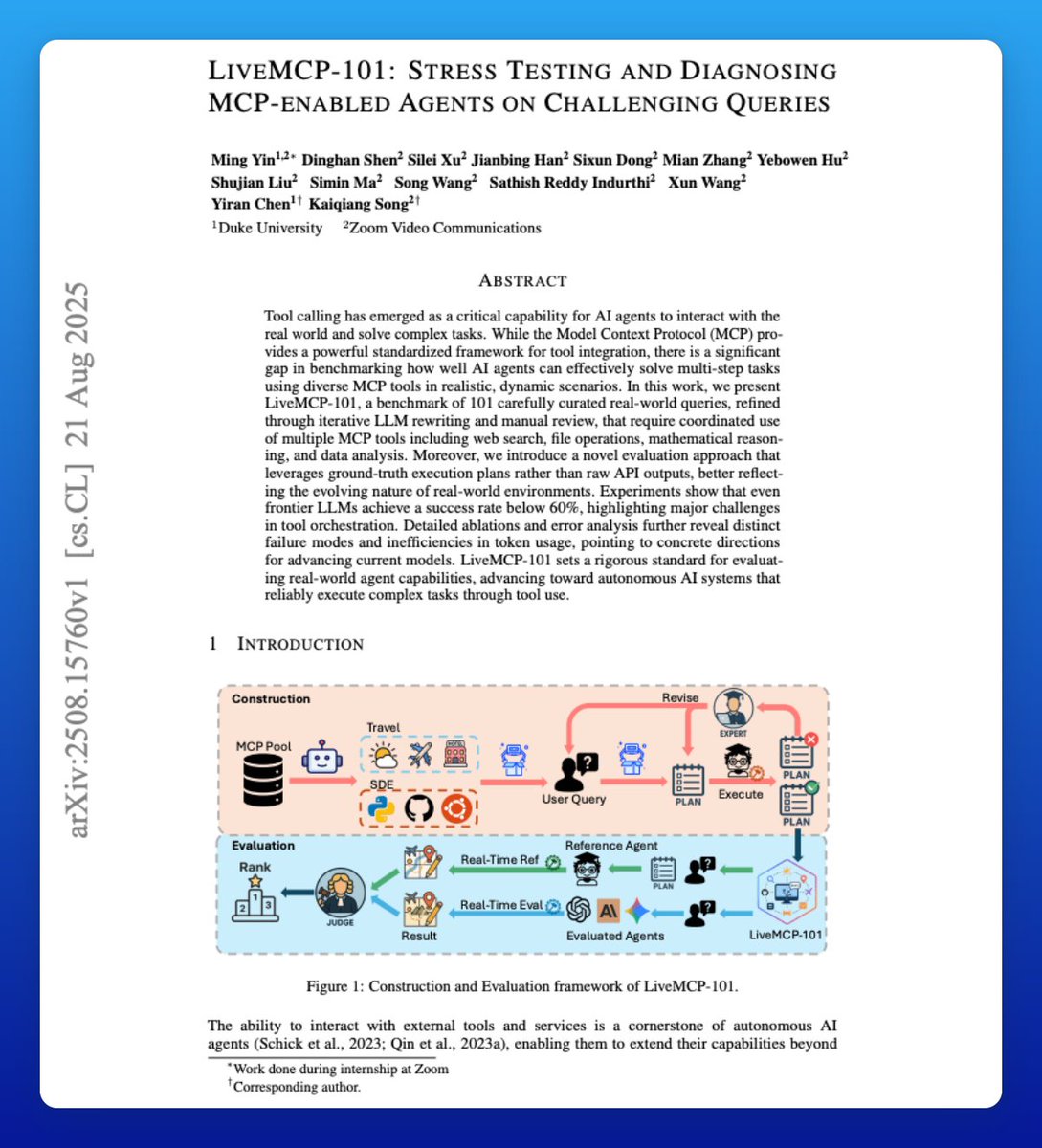

LiveMCP-101: This paper introduces LiveMCP-101, a novel real-time evaluation framework with a benchmark designed to stress-test agents on complex, real-world tasks. It moves beyond the mock data and synthetic environments of previous works. More notes ↓ https://t.co/HUMdyzb8uv

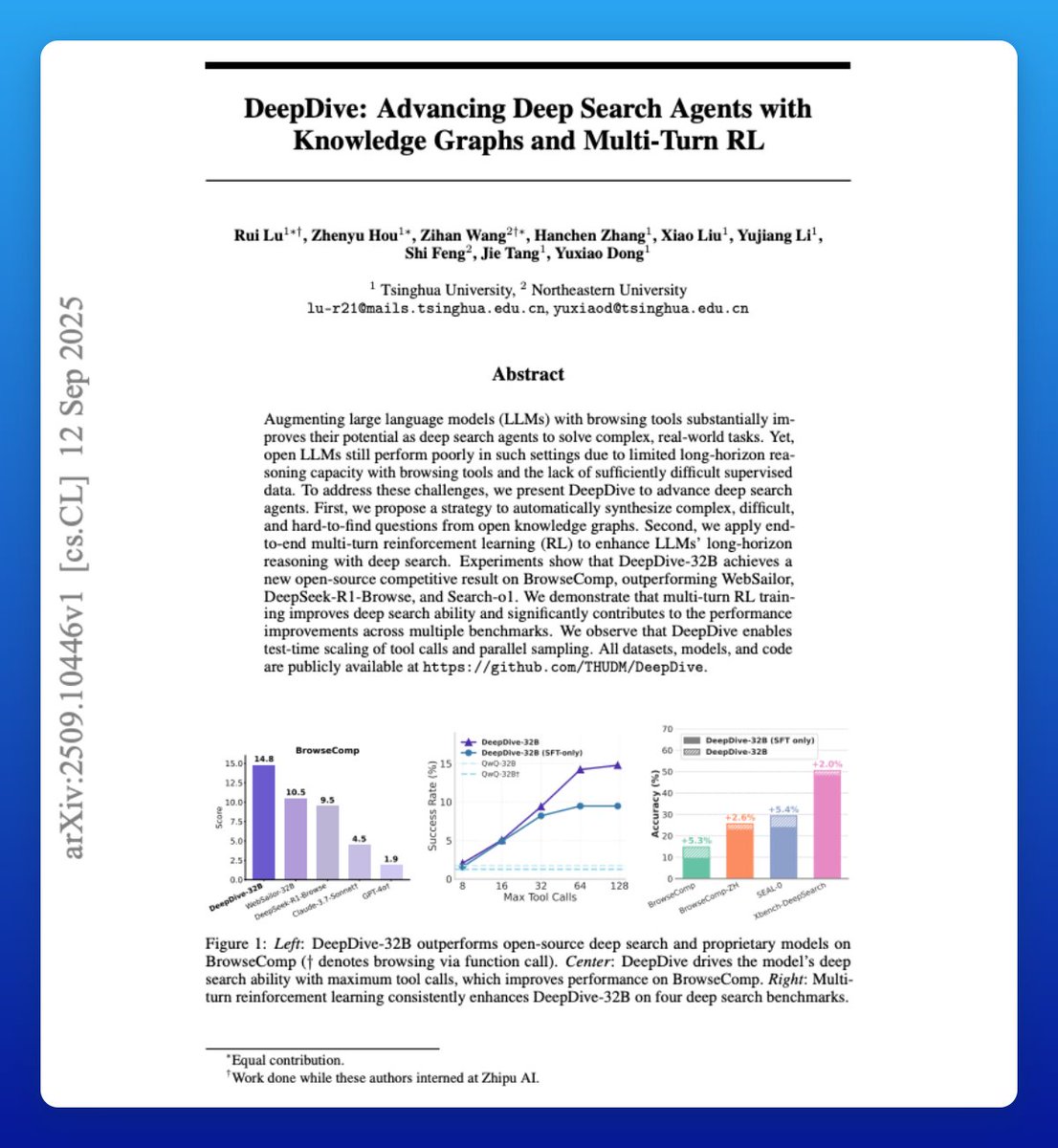

Multi-turn RL and data difficulty significantly advance deep research agents. There is a strong pattern here. It shows that training only on shallow datasets or with loose rewards won’t cut it. Let's break down the technical details: https://t.co/MxbOPuYlhM

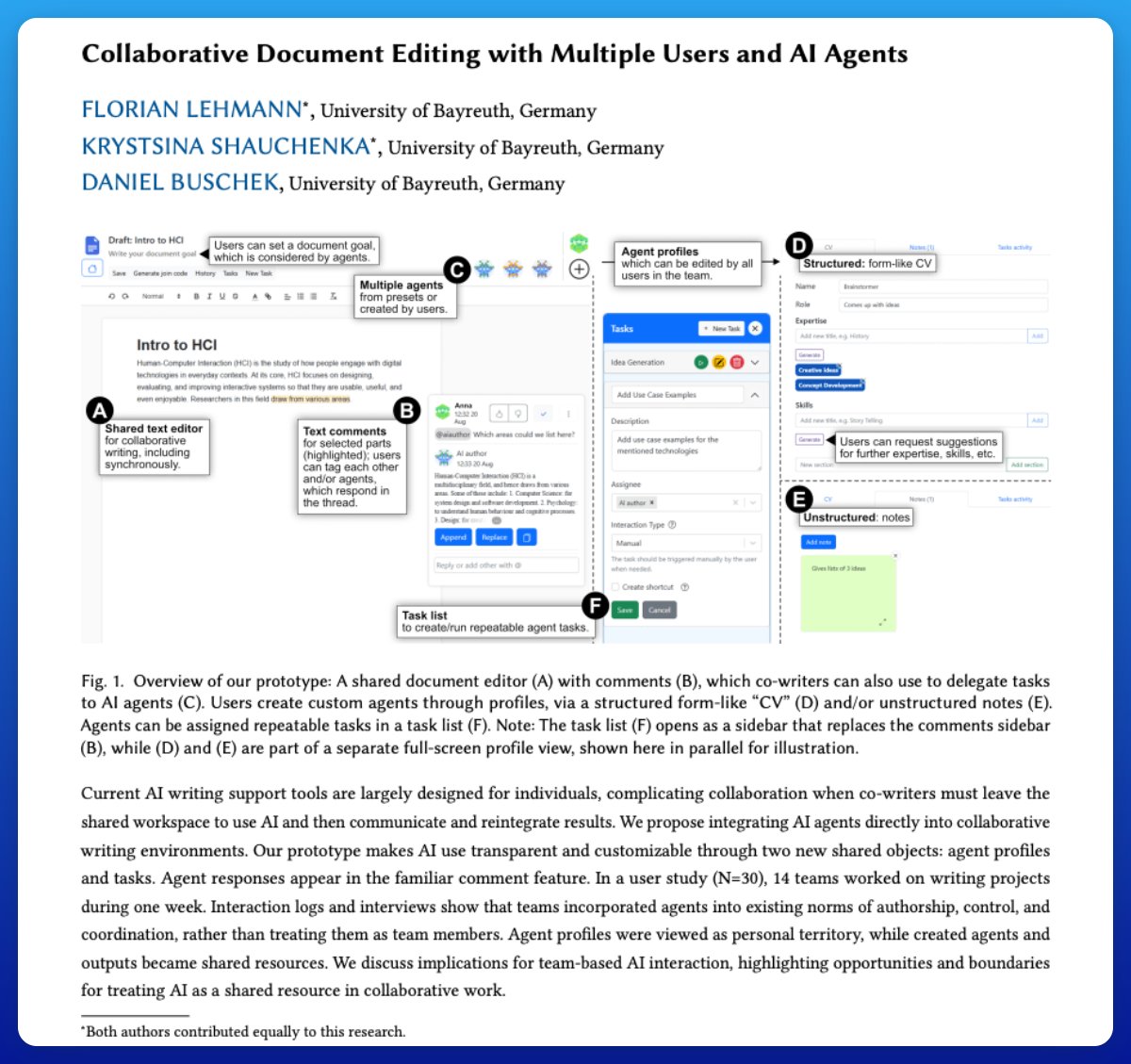

There are all kinds of opportunities to build AI agents that act as seamless collaborators. However, most people today still use AI agents as tools. As an example, this collaborative document editing use case finds that participants did not regard the created agents as collaborators. Here are some additional thoughts: Collaborative AI design should respect territoriality: profiles may remain individual, while outputs can serve as shared, negotiable artifacts. Embedding AI into familiar collaboration features (e.g., comments) eases adoption and supports emerging team norms. There is a lot more to explore in terms of better UX/UI. Future systems need focus- and collaboration-aware agent initiative to balance proactive support with user control. Proactive AI is a huge area of exploration for builders. There are also trust issues with AI agents that we need to resolve. How much can we trust agents enough to offload to them? The work highlights both opportunities (shared prompting, richer feedback) and boundaries (ownership, trust, verbosity) in treating AI as a shared resource for teams.

This is one of the most promising directions to improve RAG systems. It involves combining dynamic retrieval with structured knowledge. It helps to mitigate hallucinations and outdated information, and improves knowledge quality. Pay attention to this one, AI devs! https://t.co/g7K4FCKbaM

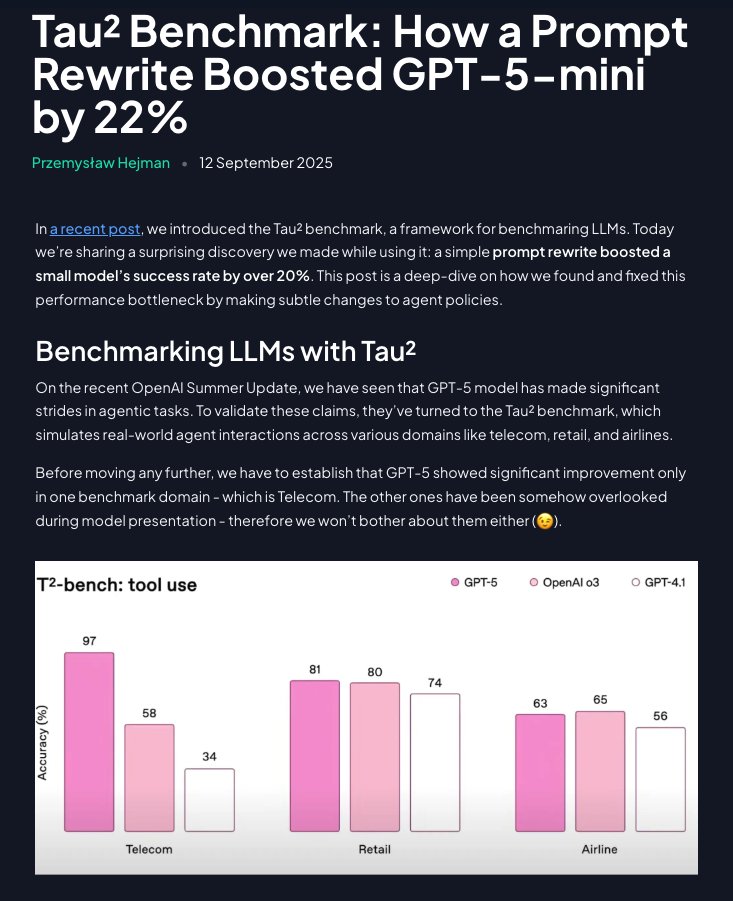

Prompt Engineering is not dead! A simple rewrite significantly improved the performance of GPT-5-mini. How? Restructuring domain policies into step-by-step, directive instructions (using Claude) boosted success rates by over 20%. GPT-5-mini even surpassed OpenAI’s o3 model. https://t.co/IrSnheudMR