@omarsar0

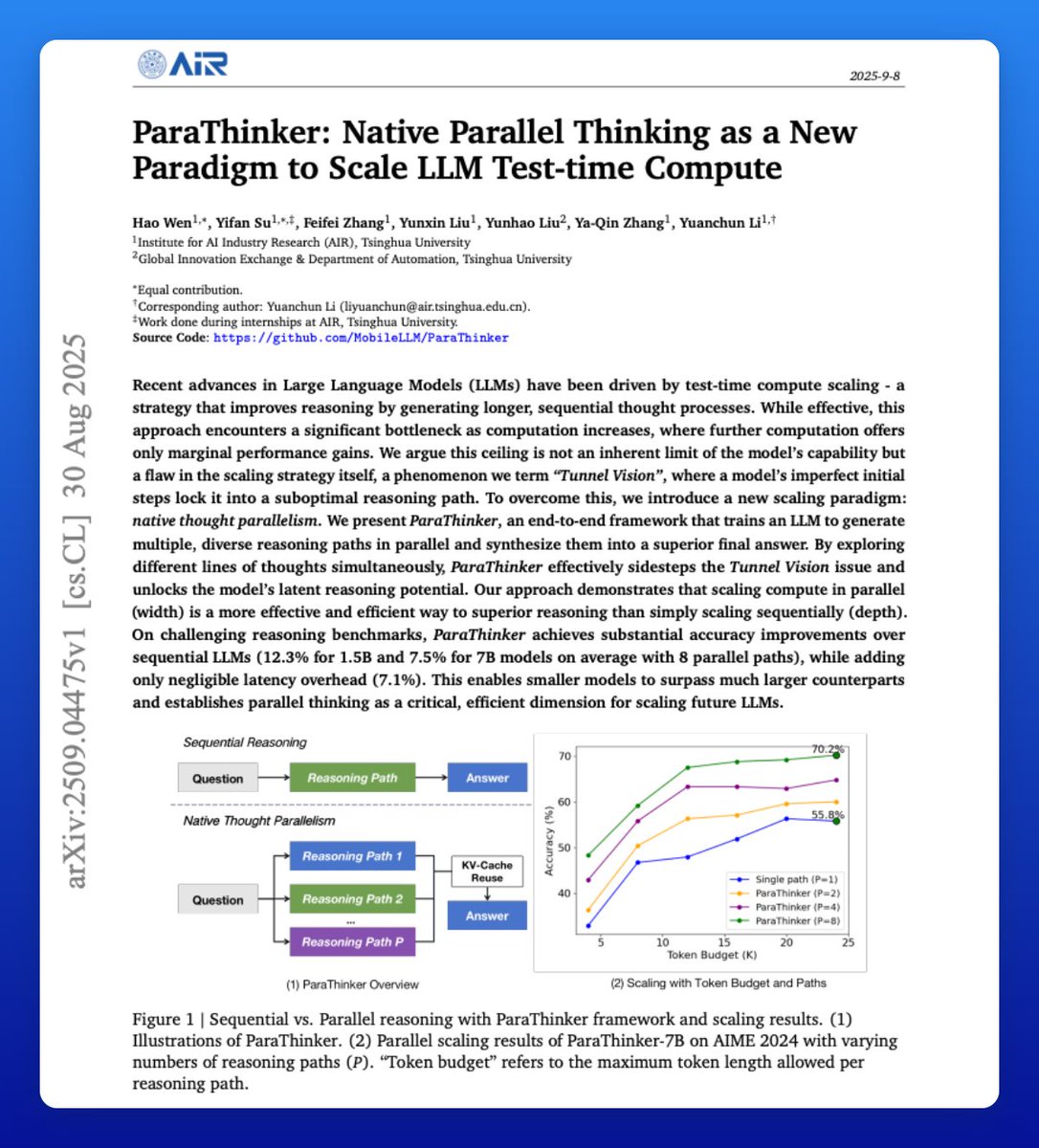

Another banger paper on reasoning LLMs! They train models to "think wider": explore multiple ideas in parallel to produce better responses. It's called native thought parallelism, and the paper reports it beats sequential reasoning. Great read for AI devs! Here are the technical details: https://t.co/zcrnPiRsRp