Your curated collection of saved posts and media

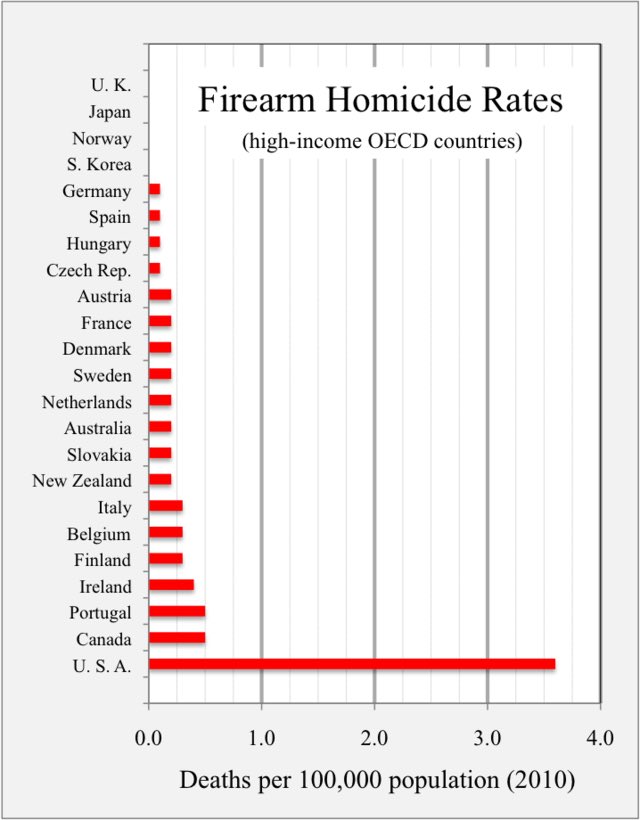

Daily reminder that gun deaths are completely a choice. Nobody dies from guns in the rest of the functioning world. It’s like dying from a fever. An ancient plague that we have solved as a species and moved on from. Except if you live in the United States. https://t.co/ZdKsMdwJM7

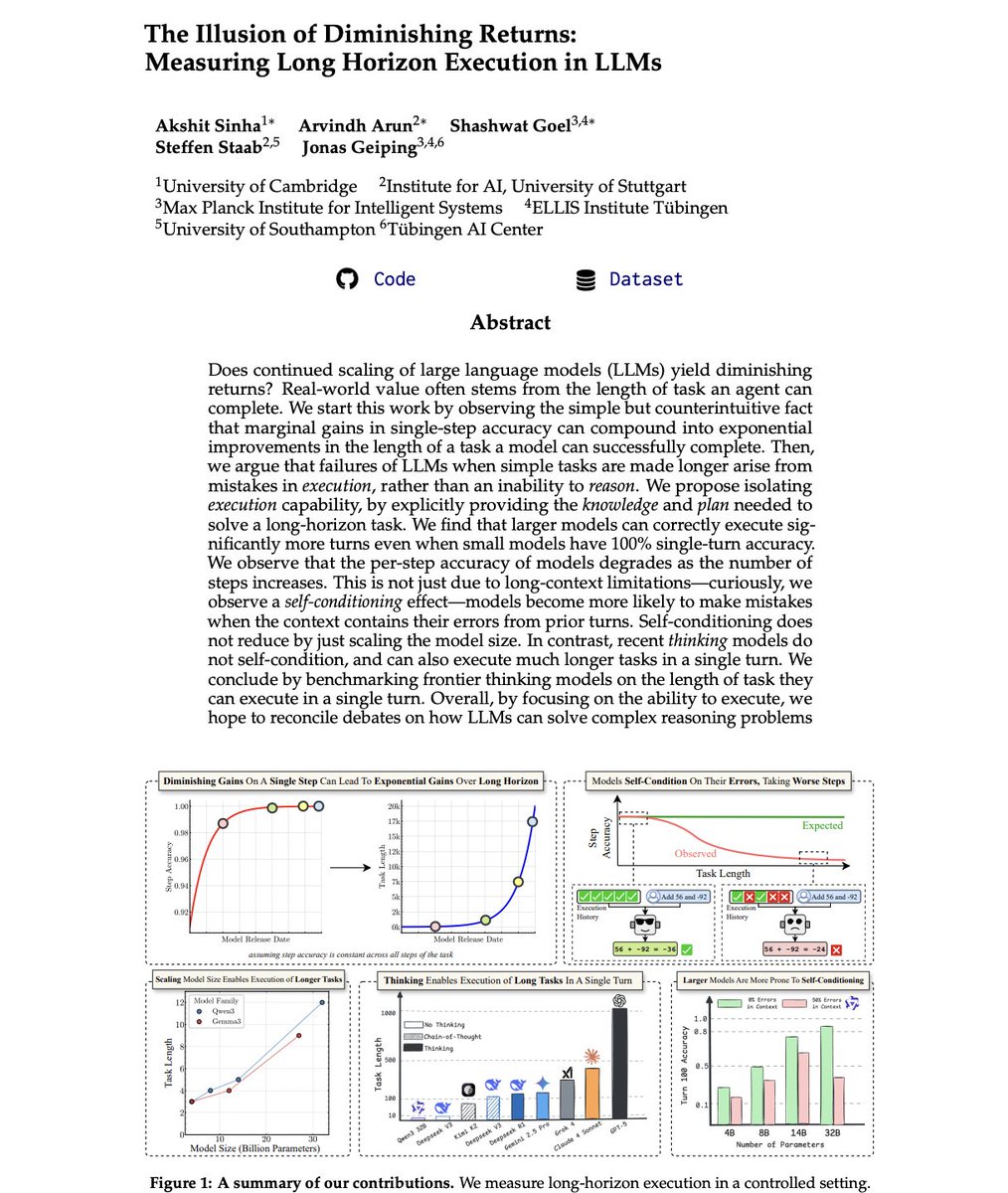

Paper fresh off the press: "The Illusion of Diminishing Returns: Measuring Long Horizon Execution in LLMs". Are small models the future of agentic AI? Is scaling LLM compute not worth the cost due to diminishing returns? Are autoregressive LLMs doomed, and is thinking an illusion? The bear cases for LLM scaling are all connected to a single capability: long-horizon execution. However, that's exactly why you should be bullish on scaling model size and test-time compute! First, remember the METR plot? It might be explained by @ylecun's model of compounding errors: the horizon length of a model grows super-exponentially (@DaveShapi) in single-step accuracy. Upshot 1: Don't be fooled by slowing progress on typical short-task benchmarks; even that is enough for exponential growth in horizon length. But we go beyond @ylecun's model, testing LLMs empirically. Even pure execution is hard for LLMs, when you provide them with the needed plan and knowledge, so we should not misinterpret execution failures as an inability to "reason". Even when a small model has 100% single-step accuracy, larger models can execute far more turns above a success-rate threshold. Noticed how your agent performs worse as the task gets longer? It's not just long-context limitations. We observe the Self-Conditioning Effect: when models see errors they made earlier in their history, they become more likely to make errors in future turns. Increasing model size worsens this problem, a rare case of inverse scaling! So what about thinking? Thinking is not an illusion; it is the engine for execution! DeepSeek V3 and Kimi K2 fail to execute even 5 turns latently when asked to execute without CoT; with CoT, they can do 10x more. So what about the frontier? GPT-5 Thinking is far ahead of all other models we tested: it can execute 1000+ step tasks in one go. In second place, with 432 steps, is Claude 4 Sonnet, and then Grok-4 at 384. Gemini 2.5 Pro and DeepSeek R1 lag far behind, at just 120. Is that why GPT-5 was codenamed Horizon? 🤔 Open source has a long ;) way to go! Let's grow it together: we release all code and data. We did a longggg deep dive and present the best takeaways, with awesome plots, below 👇
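The compounding-errors argument in the thread above can be made concrete with a one-line formula. A minimal sketch, assuming every step succeeds independently with the same probability p (the simplifying assumption of the compounding-error model; the Self-Conditioning Effect the authors report is exactly a violation of it): the chance of finishing H steps is p^H, so the longest horizon that still clears a success threshold s is H = ln(s) / ln(p). The function name `horizon_length` and the 50% default threshold are illustrative choices, not the paper's code.

```python
import math


def horizon_length(step_accuracy: float, success_threshold: float = 0.5) -> float:
    """Number of steps H at which step_accuracy ** H falls to success_threshold,
    assuming every step succeeds independently (compounding-error model)."""
    if not 0.0 < step_accuracy < 1.0:
        raise ValueError("step_accuracy must be strictly between 0 and 1")
    if not 0.0 < success_threshold < 1.0:
        raise ValueError("success_threshold must be strictly between 0 and 1")
    # Solve p**H = s for H: H = ln(s) / ln(p). Both logs are negative, so H > 0.
    return math.log(success_threshold) / math.log(step_accuracy)


if __name__ == "__main__":
    for p in (0.9, 0.99, 0.999):
        print(f"step accuracy {p}: horizon at 50% success ~ {horizon_length(p):.1f} steps")
```

Under this toy model the horizons are roughly 6.6, 69 and 693 steps: moving step accuracy from 99% to 99.9%, a gain that looks marginal on a short-task benchmark, multiplies the horizon by about ten.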

I have something to say about the Charlie Kirk murder. https://t.co/N3P7crTKBH

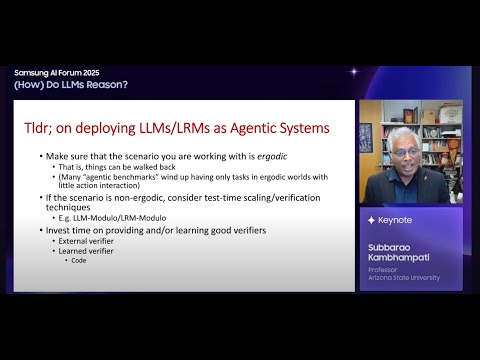

In the year since LRMs ("reasoning models") hit the scene, we have been trying to understand, analyze and demystify them. Here are our efforts to date, conveniently all in one place (First...):
- Evaluation of LRMs on planning: 📜 https://t.co/uu3aZzVSX4 (9/24) & 📜 https://t.co/dRg7qa3uoz (TMLR) 🧵 https://t.co/CvHuWhlKNj
- Semantics of intermediate tokens (CoTs): study on mazes 📜 https://t.co/4LGWfiCZ5e 🧵 https://t.co/y3BthniqSG; study on CoTemp Q&A 📜 https://t.co/Cnlb96mqKd 🧵 https://t.co/CaeVu0ex46
- Analysis of RL on LRMs: 📜 https://t.co/021pXx842x 🧵 https://t.co/XbqAyJIyB4
- Interpretability of intermediate tokens: 📜 https://t.co/e2J5pQLhGj 🧵 https://t.co/74FSZvQ7c2
- Intermediate tokens and problem complexity: 📜 https://t.co/C5y772QIue 🧵 https://t.co/UKgCwgHKeQ
- Perspective on LRMs: 📜 https://t.co/Skv2WIKyZY (also at https://t.co/d2fIIX82NT)
- Position against anthropomorphization of intermediate tokens: 📜 https://t.co/4f5eg5vRnA 🧵 https://t.co/f6E3c2j4dm
- Relevant recent talks: https://t.co/6lyhPLYVcY

TBC...

[cost] The improved performance of the LRM o1, however, comes at considerably higher time/compute/cost than both LLMs (o1 costs $42 compared to $1.8 for GPT-4) and conventional planners (e.g., FD), which get 100% within a tiny fraction of the time on a local desktop (see the tables 👆 & 👇). As we

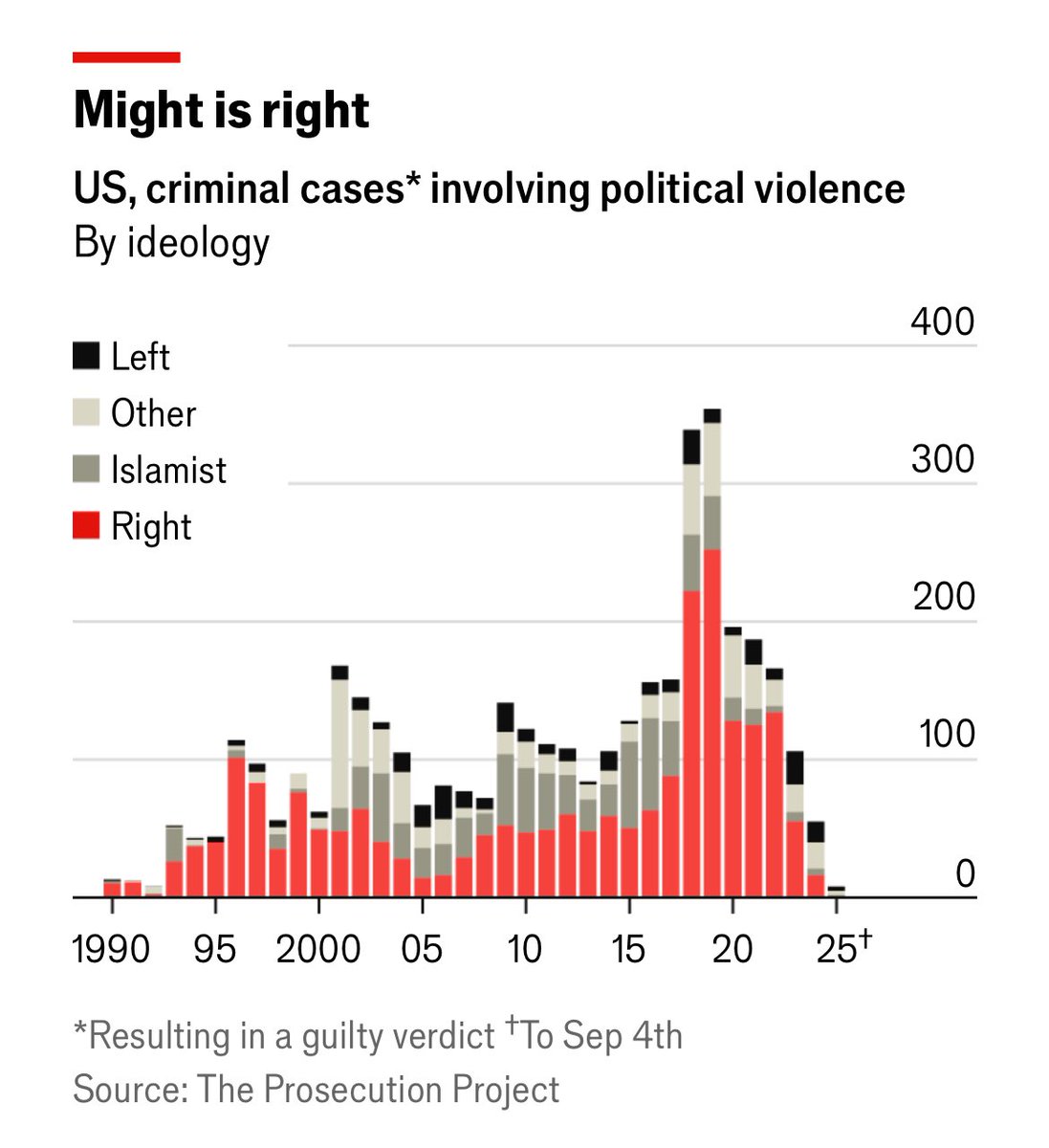

A data-driven look at political violence in America. “A separate tally by the Anti-Defamation League, an advocacy group, shows that 76% of extremist-related murders over the past decade were committed by those on the right. Such tallies, however, depend on how extremism is defined and how ideology is assigned.” https://t.co/mAfQsGdeEc

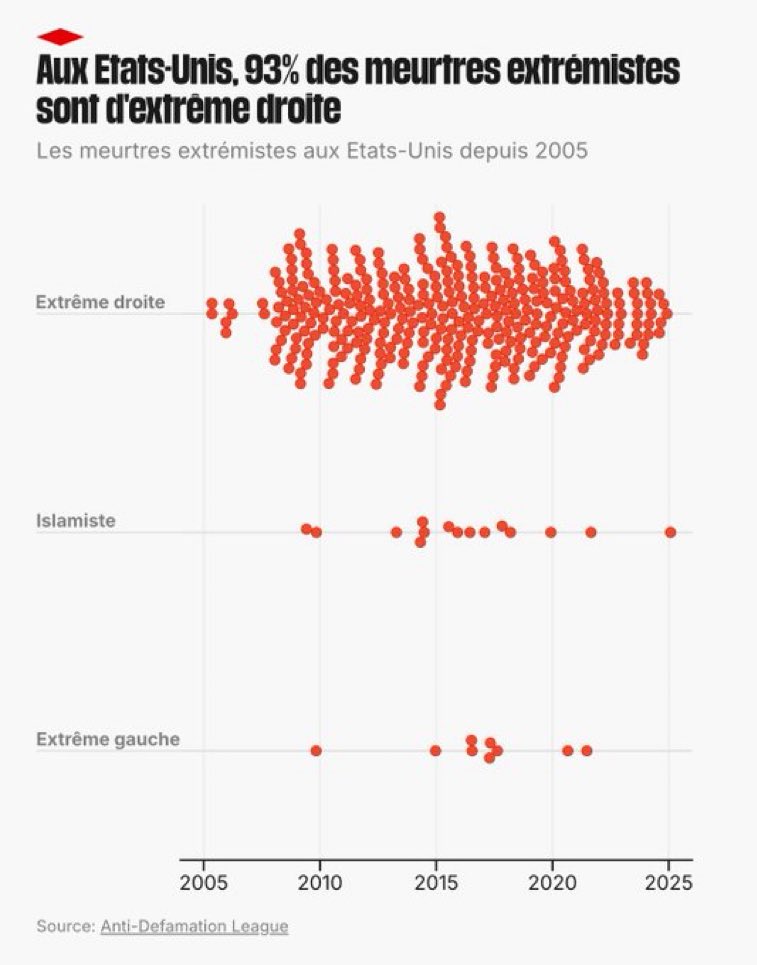

🇺🇸 This stat is insane. CNews will never mention it. But basically, the vast majority of US terrorism is far-right… https://t.co/PRouXFOeqS

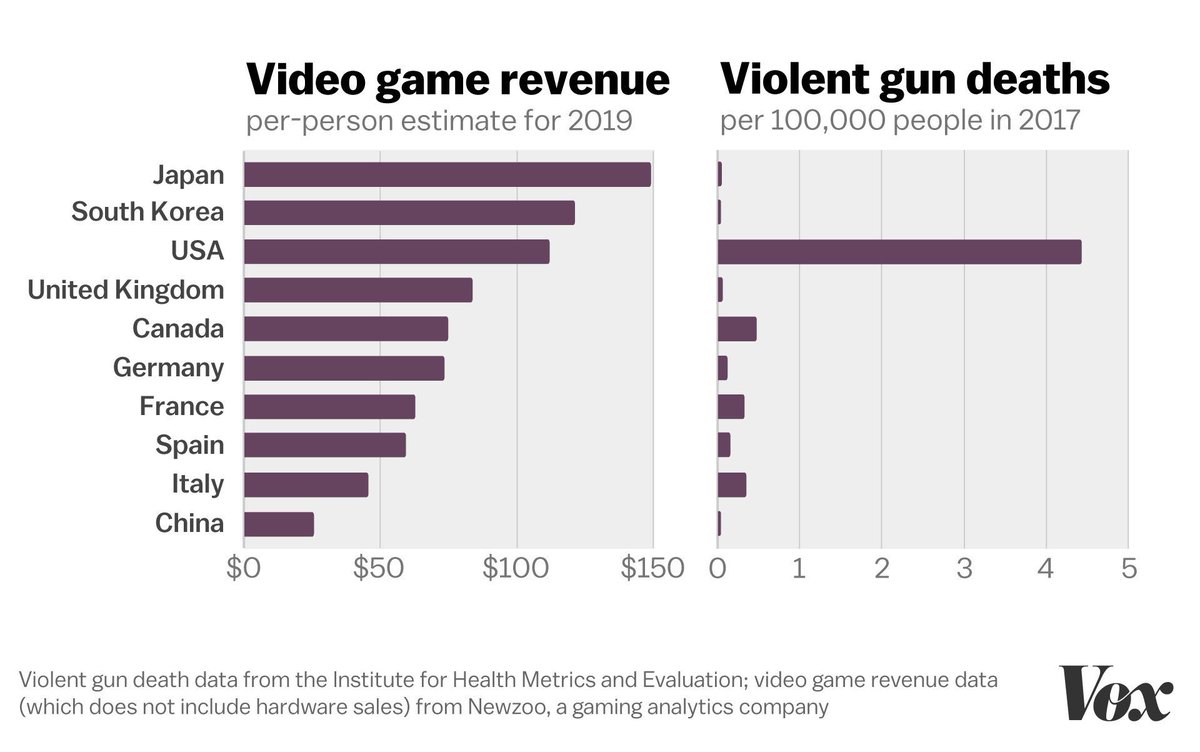

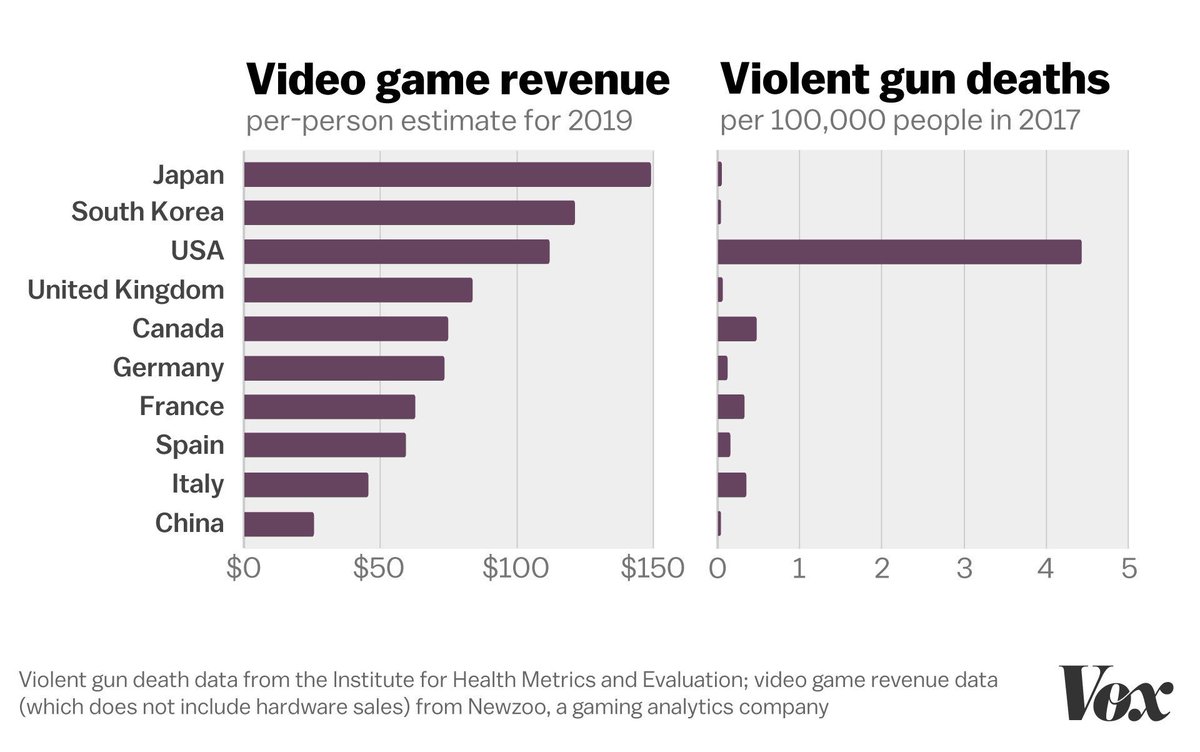

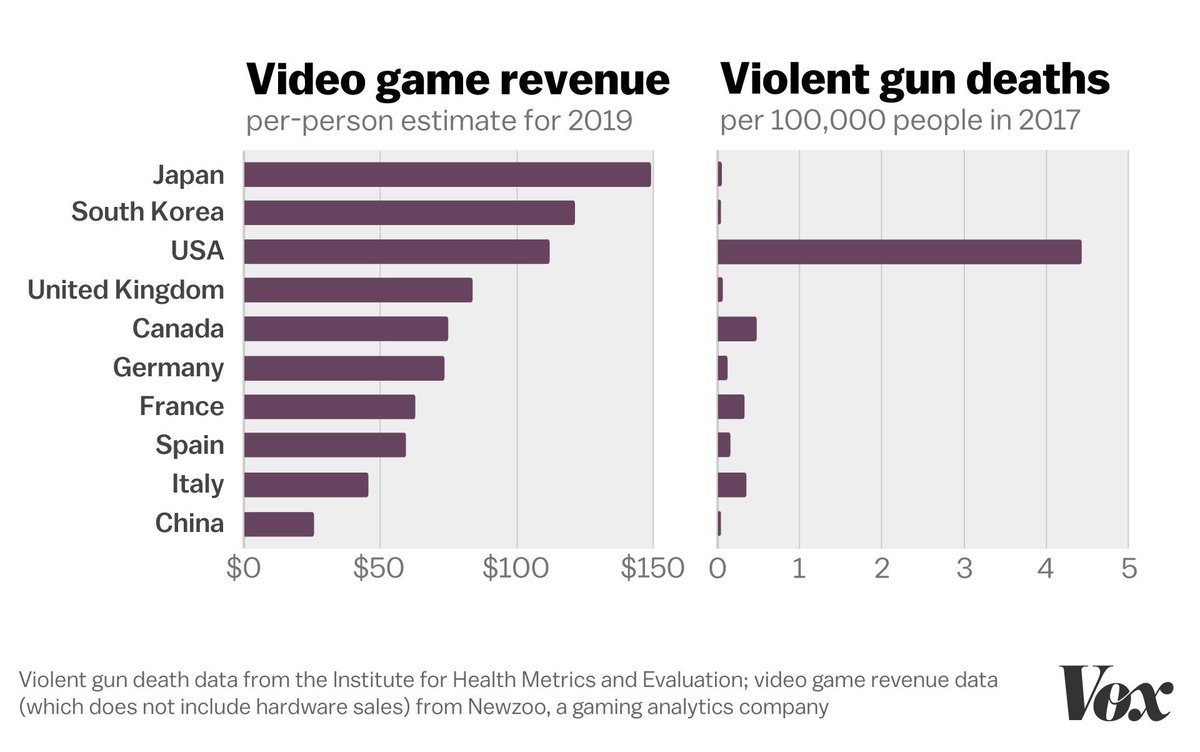

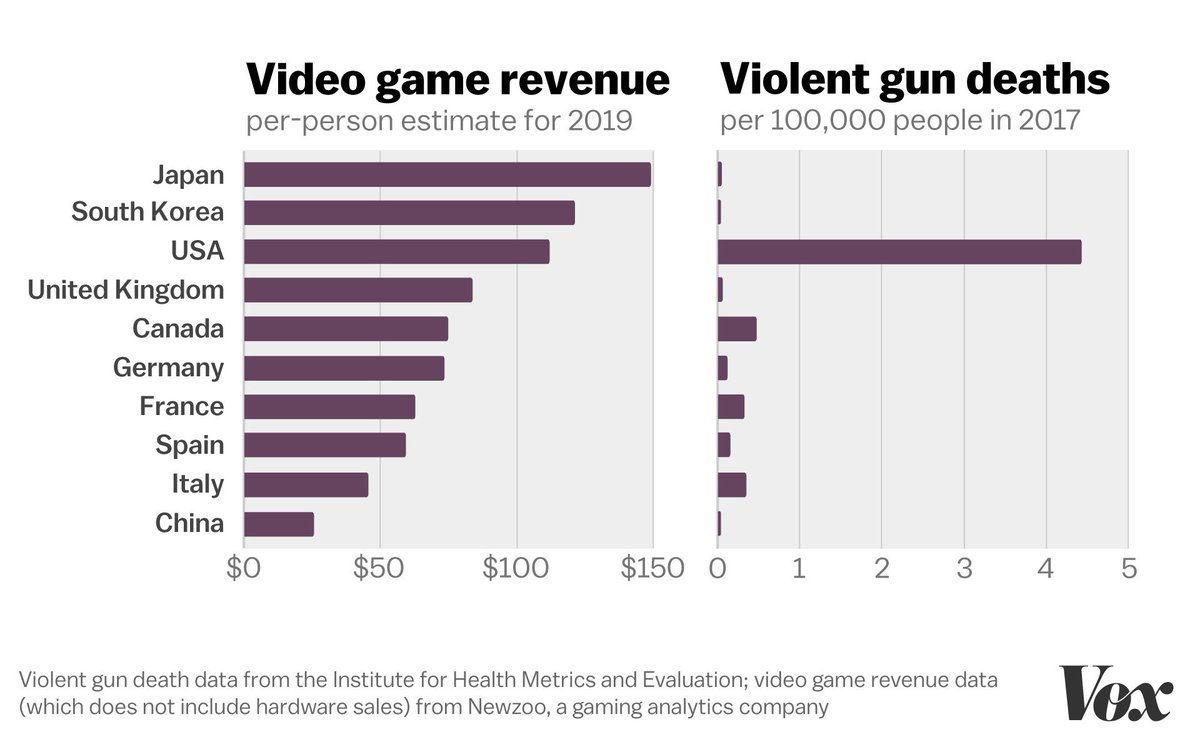

It’s not the video games. https://t.co/0JF0VUVI7R

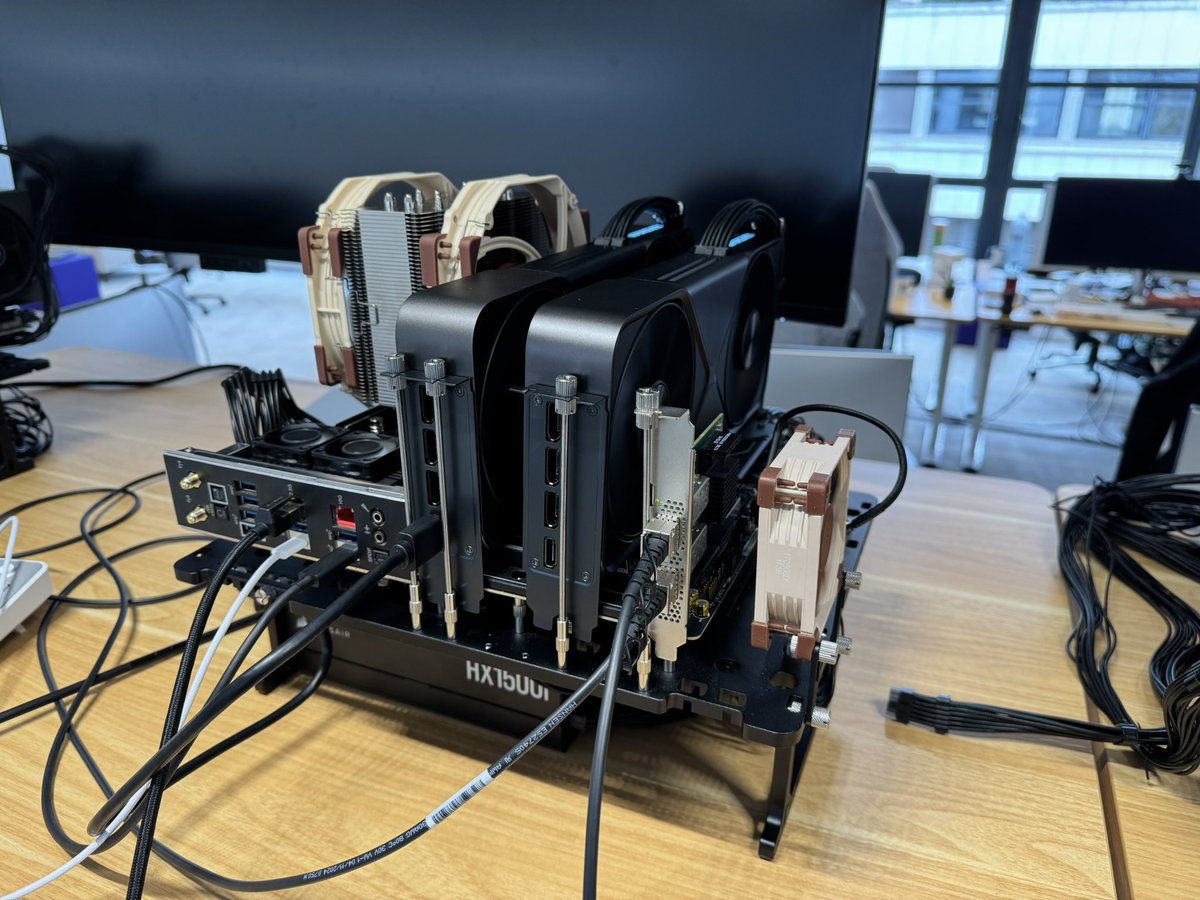

We upgraded our workbench machines to Threadrippers because we needed more PCIe lanes to the NIC. If you come to see my talk at @aiDotEngineer on Sep 24th, you might find out why https://t.co/Bcb67UcaIU

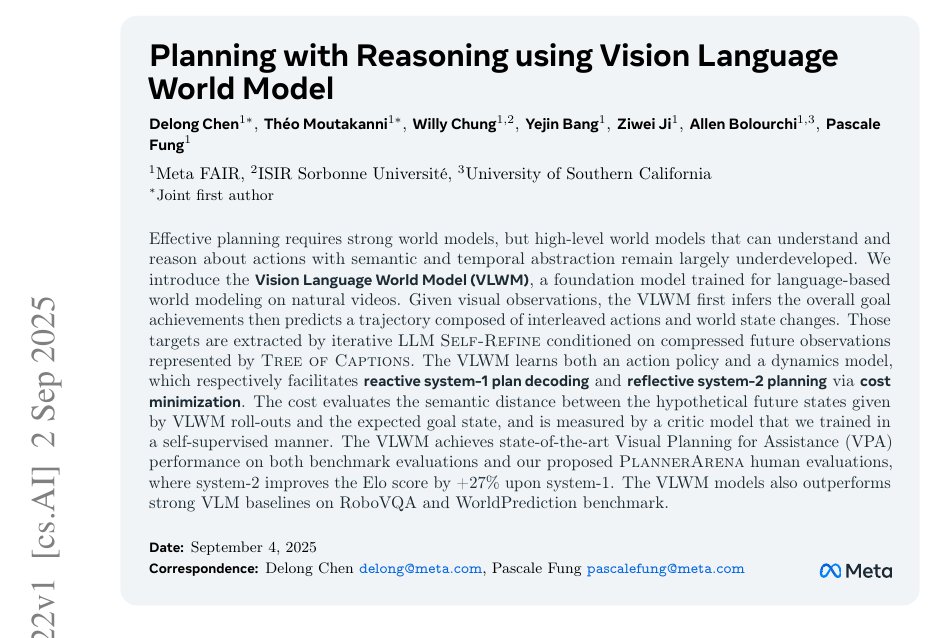

New from @AIatMeta: a vision-language world model that turns videos into text plans and reasons over them to pick better actions, with 27% higher Elo for system-2 planning over system-1. The gap it tackles: agents must predict how actions change the world rather than only label frames. VLWM, the Vision Language World Model, represents the hidden state in plain language, predicting a goal and interleaved actions with their state changes. Training targets come from a Tree of Captions that compresses each video; an LLM then refines them into goals and state updates. The model jointly learns a policy that proposes the next action and a dynamics model that predicts the next state. In fast mode it completes the plan text left to right, which is quick but can lock in early mistakes. In reflective mode it searches candidate plans, rolls out futures, and picks the lowest-cost path. The critic that supplies this cost is trained without labels by ranking valid progress below distractors or shuffled steps. Across planning benchmarks and head-to-head human comparisons, reflective search produces cleaner, more reliable plans. ---- Paper: arxiv.org/abs/2509.02722. Paper title: "Planning with Reasoning using Vision Language World Model"
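The reflective (system-2) mode described above can be sketched in a few lines: roll out each candidate plan with a dynamics model and keep the one the critic scores lowest. A minimal sketch under stated assumptions: the toy state is a set of completed steps rather than VLWM's natural-language state, and `dynamics`, `critic_cost`, the cooking task and the hinge form of the ranking loss are all hypothetical stand-ins, not VLWM's actual models, code or training objective.

```python
from typing import Callable, FrozenSet, List, Sequence

State = FrozenSet[str]  # toy stand-in for VLWM's plain-language state
Plan = List[str]        # a plan is a sequence of action descriptions


def rollout(state: State, plan: Plan,
            dynamics: Callable[[State, str], State]) -> List[State]:
    """Use the (hypothetical) dynamics model to predict every future state of a plan."""
    states = [state]
    for action in plan:
        states.append(dynamics(states[-1], action))
    return states


def reflective_plan(state: State, candidates: Sequence[Plan],
                    dynamics: Callable[[State, str], State],
                    critic_cost: Callable[[List[State]], float]) -> Plan:
    """System-2 mode: roll out each candidate plan and keep the lowest-cost one."""
    return min(candidates, key=lambda plan: critic_cost(rollout(state, plan, dynamics)))


def ranking_hinge_loss(cost_valid: float, cost_distractor: float,
                       margin: float = 1.0) -> float:
    """Assumed critic objective: valid progress should cost at least `margin`
    less than a distractor or shuffled continuation; zero loss once it does."""
    return max(0.0, margin + cost_valid - cost_distractor)


# Toy usage: the goal is three cooking steps; the critic penalises missing
# goal steps plus a small per-step length cost.
GOAL = frozenset({"boil water", "add pasta", "drain"})


def toy_dynamics(state: State, action: str) -> State:
    return state | {action}


def toy_critic(states: List[State]) -> float:
    return len(GOAL - states[-1]) + 0.1 * (len(states) - 1)


candidates = [
    ["boil water", "add pasta"],                    # incomplete
    ["boil water", "add pasta", "drain"],           # complete and short
    ["boil water", "add pasta", "drain", "drain"],  # complete but redundant
]
best = reflective_plan(frozenset(), candidates, toy_dynamics, toy_critic)
print(best)
```

Fast (system-1) mode would simply commit to the first plan it generates; the search only pays off because the critic can tell an incomplete or redundant rollout from a clean one.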

I don't understand why we keep having this conversation when the data is clear. https://t.co/YrTOHCCzQZ

Fox News hosts laying the blame on gamers. FBI agents on CNN saying violent video games caused this. A Kennedy wants the government to research links between mass shooters and first-person shooters. It is 1998.

This is journalist Karen Attiah, the only Black opinion columnist at the Washington Post. She was just fired for sharing a statement made by Charlie Kirk insulting Black women. RETWEET if you stand with @KarenAttiah! https://t.co/VJdQtakaOI

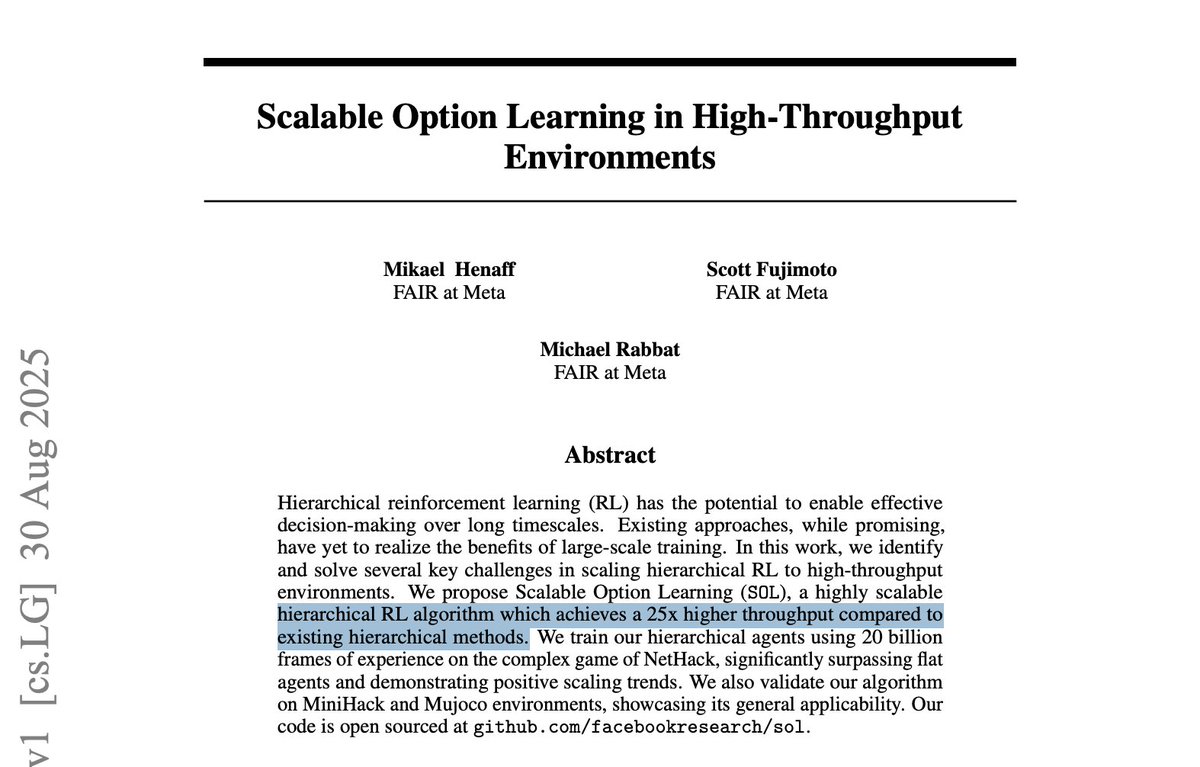

Meta just made training AI agents 25x faster. This is a breakthrough for robotics and complex planning. Meta's FAIR open sourced a new method called Scalable Option Learning (SOL). It trains a specialized agent at a scale previously seen only with LLMs. Here's how it works: this type of AI (agents trained with hierarchical reinforcement learning, HRL) has been slow to train because of a parallelization bottleneck. Imagine an AI team with a planner and many specialist workers (the sub-tasks). Older methods struggled because they had to process each of the planner's decisions one by one before training the workers. SOL solves this with a new system design:
- A single, unified brain: instead of separate models, it uses a single actor-critic network to house the planner (controller policy) and all the workers (option policies).
- A digital "switch": it tells this unified brain which role to play at any given moment using a one-hot vector, a flag that says, "for this input, act as the 'navigation' worker." This allows thousands of decisions for different policies to be batched and sent to the GPU at once.
- A smart "filter" for learning: after the actions are taken, it uses a technique called tensorized masking. Think of this as a smart filter that ensures the right performance feedback (the rewards and advantages) goes to the correct worker policy. This is what breaks the one-at-a-time update problem.

This architecture allows the entire hierarchical system to learn in parallel batches and removes the bottlenecks that held the field back. Why this matters: this training method makes it more viable to build agents that can reason and execute long-horizon tasks.
- Business leaders: this architecture is key to developing sophisticated autonomous systems. A 25x faster training cycle accelerates R&D in robotics, logistics, and multi-stage process automation, making complex, strategic AI commercially achievable.
- Practitioners: the authors plan to open-source SOL. You can implement agents that learn long-horizon skills without the performance penalty of older HRL methods, creating a path to more structured and potentially more robust models.
- Researchers: this paper presents a validated solution to the HRL scaling problem (Section 3.2). The system for enabling high-throughput, asynchronous updates for a hierarchical agent is a major contribution that opens the door to large-scale experiments in temporal abstraction and credit assignment.
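The two mechanisms described in the SOL post, one-hot role conditioning and masked per-policy updates, can be illustrated with NumPy. A minimal sketch, assuming a mixed batch where each sample is tagged with the id of the policy that produced it (0 for the controller, 1 and up for options); the function names, the REINFORCE-style loss and the per-policy averaging are illustrative assumptions, not SOL's actual actor-critic objective or code. The point is that one batched computation serves every policy at once, with masks routing each sample's advantage to the right policy.

```python
import numpy as np


def conditioned_inputs(obs: np.ndarray, policy_ids: np.ndarray,
                       num_policies: int) -> np.ndarray:
    """Append a one-hot 'role' flag so one shared network can act as any policy.

    obs: (batch, obs_dim); policy_ids: (batch,) ints, 0 = controller, 1.. = options.
    """
    flags = np.eye(num_policies)[policy_ids]     # (batch, num_policies)
    return np.concatenate([obs, flags], axis=1)  # (batch, obs_dim + num_policies)


def masked_policy_losses(advantages: np.ndarray, log_probs: np.ndarray,
                         policy_ids: np.ndarray, num_policies: int) -> np.ndarray:
    """Per-policy policy-gradient loss for a mixed batch, computed in one pass.

    masks[k, i] is True when sample i was produced while acting as policy k,
    so each policy only receives the advantages of its own samples.
    """
    masks = policy_ids[None, :] == np.arange(num_policies)[:, None]  # (num_policies, batch)
    per_sample = -advantages * log_probs                             # (batch,)
    totals = (masks * per_sample[None, :]).sum(axis=1)               # (num_policies,)
    counts = np.maximum(masks.sum(axis=1), 1)                        # avoid division by zero
    return totals / counts                                           # mean loss per policy


# Toy mixed batch: samples from the controller (0) and two options (1, 2).
policy_ids = np.array([0, 2, 1, 2])
obs = np.zeros((4, 2))
x = conditioned_inputs(obs, policy_ids, num_policies=3)
losses = masked_policy_losses(np.array([1.0, 2.0, 3.0, 4.0]),
                              np.full(4, -1.0), policy_ids, num_policies=3)
print(x.shape, losses)
```

Because the mask is just another array, the same code handles any mix of controller and option samples without a Python loop over policies, which is the property the post credits with removing the one-at-a-time bottleneck.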

Excited to share details on two of our longest running and most effective safeguard collaborations, one with Anthropic and one with OpenAI. We've identified—and they've patched—a large number of vulnerabilities and together strengthened their safeguards. 🧵 1/6 https://t.co/GD39MAHjXW

Charlie Kirk has passed away at the age of 31. A husband, a father of two, and a man of God. He completely reshaped our country and had so much ahead of him. Gut-wrenching. Rest in peace, Charlie. https://t.co/IKAiHAKN5c

Please pray for my friend Charlie Kirk. My heart is with him, his wife and children during this critical time 🙏🏽 https://t.co/c6KYZPUyso

CHARLIE KIRK SHOT DEAD AT 31. A GREAT AMERICAN, IMPORTANT ALLY OF BRITAIN, FUTURE PRESIDENT, HUSBAND AND FATHER. Patriots are not your enemy. This man was fighting to save the UK and the West. God bless America. God help us all. We cannot go on like this. 🙏🇺🇸

"The Great, and even Legendary, Charlie Kirk, is dead. No one understood or had the Heart of the Youth in the United States of America better than Charlie. He was loved and admired by ALL, especially me, and now, he is no longer with us. Melania and my Sympathies go out to his beautiful wife Erika, and family. Charlie, we love you!" - President Donald J. Trump

Once again, a bullet has silenced the most eloquent truth teller of an era. My dear friend Charlie Kirk was our country's relentless and courageous crusader for free speech. We pray for Erika and the children. Charlie is already in paradise with the angels. We ask his prayers for our country.

Charlie Kirk gave everyone a voice, gave everyone a chance to speak, open debates, all ideas, all races, religions, welcome. He was one of the good guys. And they still shot him. Truly evil. https://t.co/nSBX3wZFfR

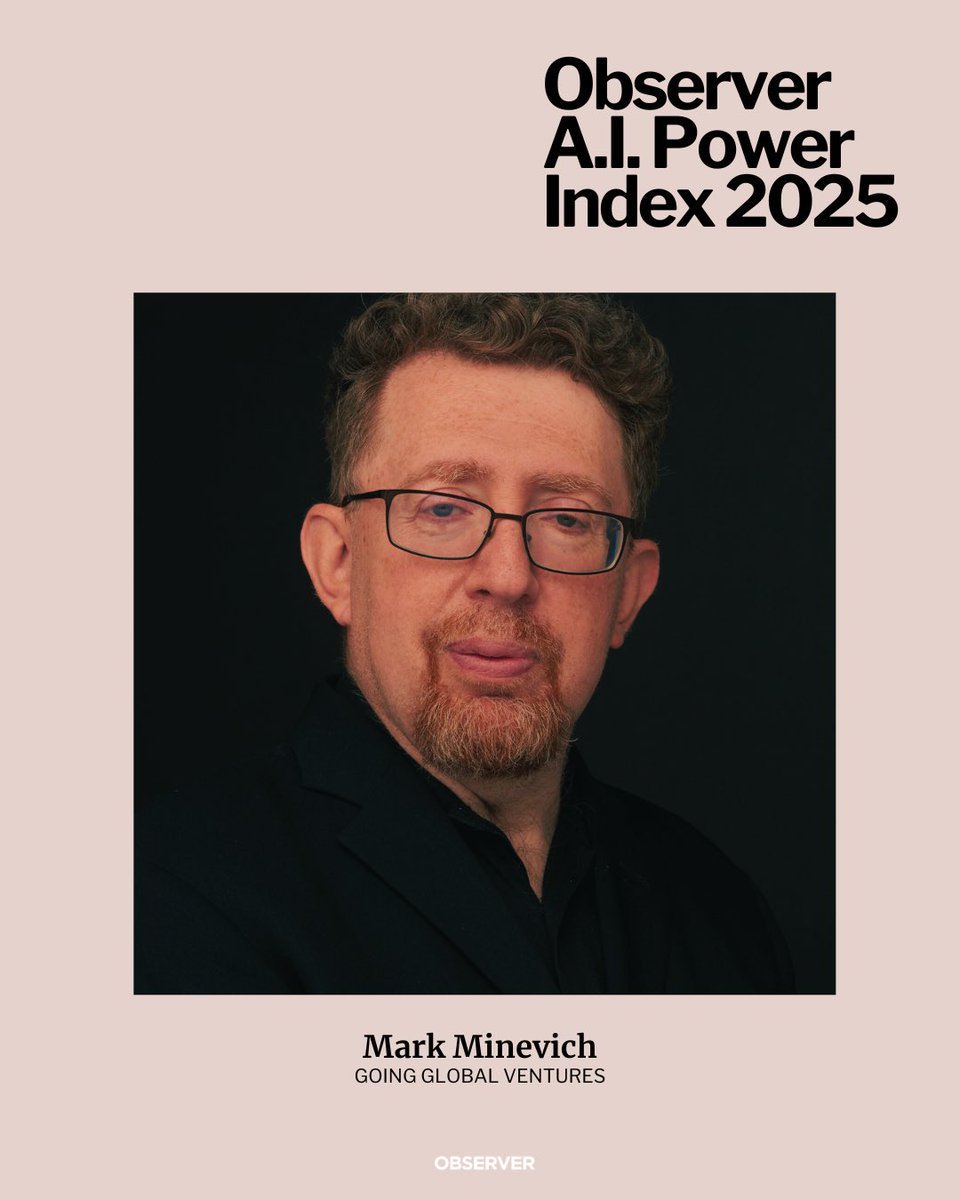

🚨 Big news! The 2025-2026 #AI150 list is here — celebrating the most visionary AI leaders shaping the future. Meet the changemakers 👉 https://t.co/wt1picGqpl Join us at #AIForum25 on Sept 30! 👉 https://t.co/SdaX3dOAFA @rwang0 @MMinevich https://t.co/Y3jXJnC12W

I lost my father in tragic circumstances when I was around the same age as Charlie Kirk’s daughter. And I can say with absolute certainty that there is no pain like losing your dad. To know that he will never get to see you grow up. To know you will never be able to hug him again, or sit in his lap, or be held in his arms. That loss follows you everywhere. It shows up at graduations, at birthdays, on Christmas mornings. It lingers in the empty chair at family dinners. It cuts deepest during those ordinary, quiet moments when you wish you could just pick up the phone and hear his voice. The world was robbed of Charlie Kirk. But, most tragically, his family was robbed of him. From one fatherless daughter to another, my heart aches for his little girl. I would not wish this kind of pain on anyone. May she find comfort and strength in the knowledge that he is safe in God’s Kingdom, still loving and protecting them from above.

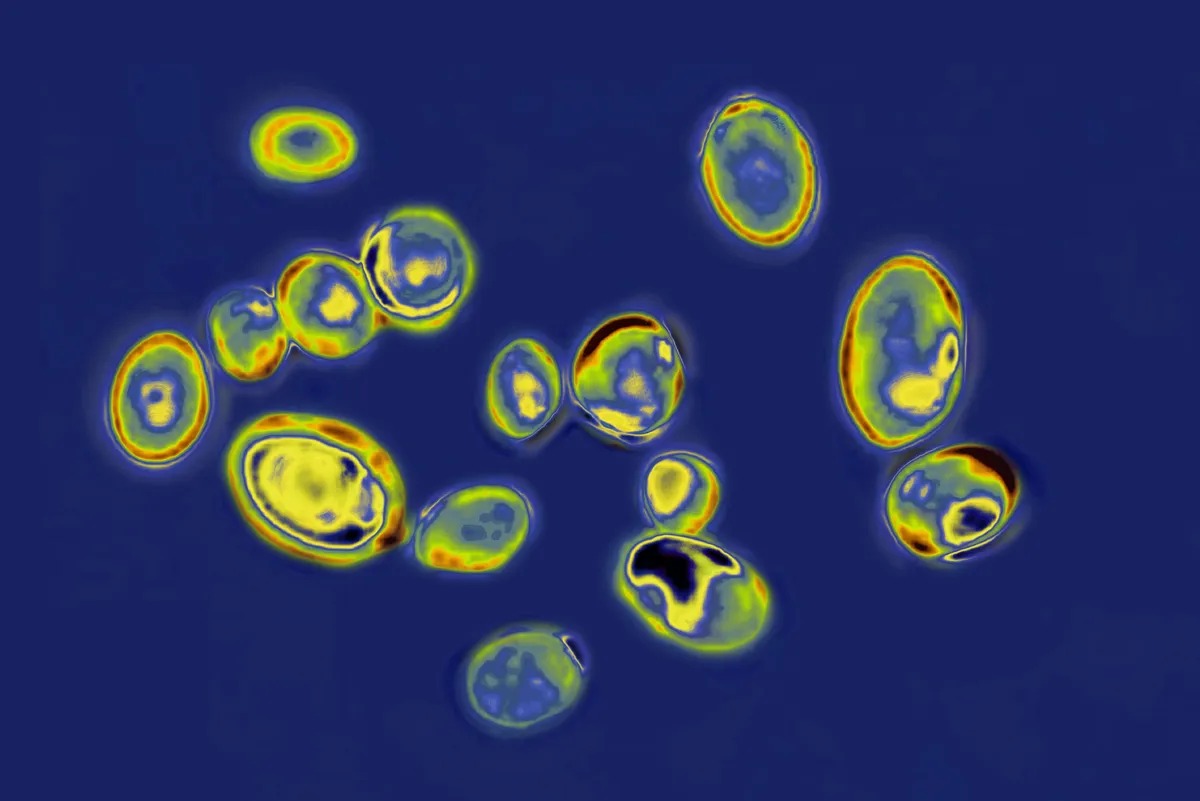

🇪🇺 DEADLY DRUG-RESISTANT FUNGUS SPREADING FAST IN EUROPEAN HOSPITALS C. auris cases surged 67% in 2023, hitting a record 1,346 - up from zero a decade ago. The fungus clings to hospital surfaces, resists treatment, and can kill up to 60% of infected patients. It’s now so widespread in countries like Greece, Italy, and Spain that outbreaks can’t even be traced. The ECDC says early detection and isolation still work, but only 17 countries even track it properly. On top of all that, no one’s writing checks to develop the drugs we actually need. Source: Bloomberg

My latest in @RedState on Beijing’s Sept. 3 Victory Day Parade and the actual message it sent about the regime and its partnerships. Link in comments. 👇 https://t.co/UmvJhK7YFt

This year’s A.I. Power Index chronicles 100 leaders navigating trillion-dollar infrastructure investments, geopolitical competition and the tension between moving fast and moving responsibly. Explore the people and companies driving A.I. influence—for better or worse: https://t.co/uqclUNLXgi

Observer’s 2025 A.I. Power Index is out 🚀 Proud to be named among the leaders defining the future of AI. This is just the beginning. @observer 🔗 https://t.co/r3D53aIquz #ObserverPowerIndex #AI https://t.co/339TQxA4gB

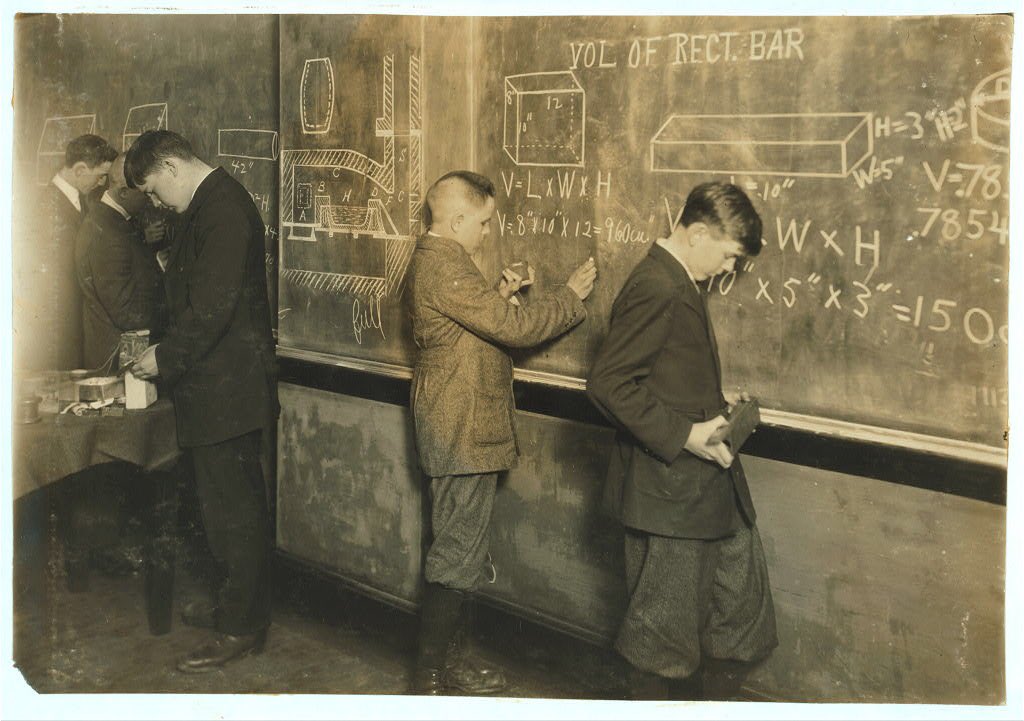

Working on a research piece on the history of vocational schools in America. Why? By 2020, there will be 1.4M more software dev jobs than applicants who can fill them. https://t.co/lTBcYZAKaL

My birthday is next week, so the group chat has been non stop “you’re such a Virgo” followed by a bunch of insults, backhanded compliments, and stupid things I completely forgot about. So, I casually dropped this and left the chat… https://t.co/m7wCVczRoD

The US job market is being propped up primarily by ongoing employment gains in the health care industry. https://t.co/Tot4An6FnL

“If a picture is worth a thousand words, then a prototype is worth a thousand meetings.” Loved this conversation on how designers, product managers, and Zillow’s co-founder himself use @Replit to ship hundreds & hundreds of prototypes. @amasad @LloydFrink @theallinpod https://t.co/IDmwecEk9m