@ShashwatGoel7

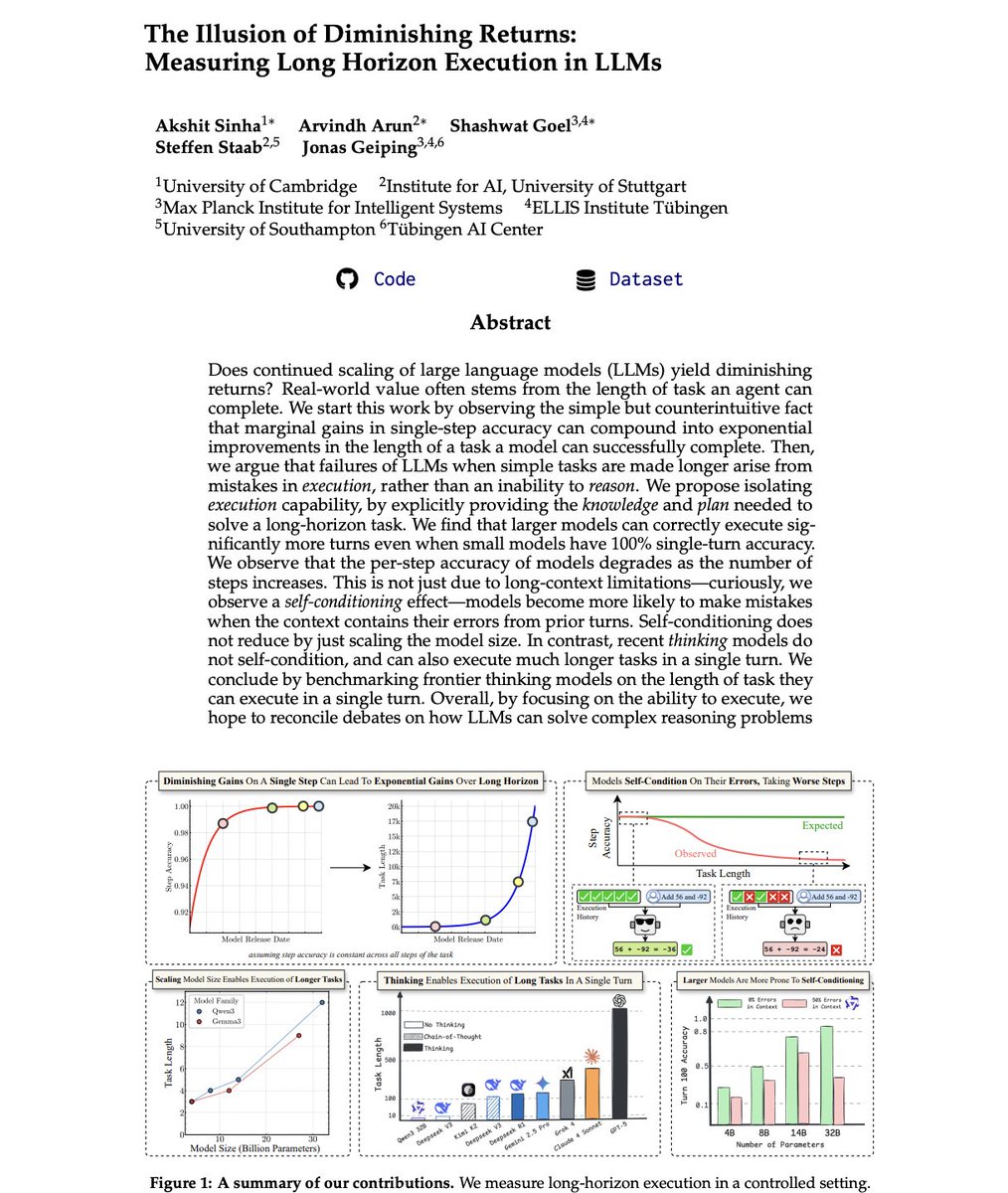

Paper fresh off the press: The Illusion of Diminishing Returns: Measuring Long Horizon Execution in LLMs.

Are small models the future of agentic AI? Is scaling LLM compute not worth the cost due to diminishing returns? Are autoregressive LLMs doomed, and thinking an illusion?

The bear cases for LLM scaling are all connected to a single capability: Long Horizon Execution. However, that's exactly why you should be bullish on scaling model size and test-time compute!

> First, remember the METR plot? It might be explained by @ylecun's model of compounding errors: the horizon length of a model grows super-exponentially (@DaveShapi) in single-step accuracy.

> Upshot 1: Don't be fooled by slowing progress on typical short-task benchmarks. That is enough for exponential growth in horizon length.

But we go beyond @ylecun's model, testing LLMs empirically...

> Execution alone is hard for LLMs, even when you provide them the needed plan and knowledge.

> We should not misinterpret execution failures as an inability to "reason".

> Even when a small model has 100% single-step accuracy, larger models can execute far more turns above a success rate threshold.

> Noticed how your agent performs worse as the task gets longer? It's not just long-context limitations...

> We observe: The Self-Conditioning Effect!

> When models see errors they made earlier in their history, they become more likely to make errors in future turns.

> Increasing model size worsens this problem, a rare case of inverse scaling!

So what about thinking...?

> Thinking is not an illusion. It is the engine for execution!

> Even DeepSeek v3 and Kimi K2 fail to execute even 5 turns latently when asked to execute without CoT...

> With CoT, they can do 10x more.

So what about the frontier?

> GPT-5 Thinking is far ahead of all other models we tested. It can execute 1000+ step tasks in one go.

> In second place, with 432 steps, is Claude 4 Sonnet, and then Grok-4 at 384.

> Gemini 2.5 Pro and DeepSeek R1 lag far behind, at just 120.

> Is that why GPT-5 was codenamed Horizon? 🤔

> Open-source has a long ;) way to go!

> Let's grow it together! We release all code and data.

We did a longggg deep dive, and present you the best takeaways with awesome plots below 👇
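To make the compounding-errors point concrete, here is a minimal sketch. It is not the paper's released code. It assumes each step succeeds independently with a constant accuracy `p`, so a task of `H` steps succeeds with probability `p**H`, and the horizon at success threshold `s` is `ln(s) / ln(p)`. The function name `horizon_length` and the 0.5 threshold are illustrative choices, not taken from the thread.

```python
import math


def horizon_length(step_accuracy: float, success_threshold: float = 0.5) -> float:
    """Task length H at which step_accuracy**H equals success_threshold.

    Assumption (for illustration only): every step succeeds independently
    with the same probability, so errors compound multiplicatively.
    """
    if not 0.0 < step_accuracy < 1.0:
        raise ValueError("step_accuracy must be strictly between 0 and 1")
    if not 0.0 < success_threshold < 1.0:
        raise ValueError("success_threshold must be strictly between 0 and 1")
    # Solve step_accuracy**H = success_threshold for H.
    return math.log(success_threshold) / math.log(step_accuracy)


if __name__ == "__main__":
    # Small gains in single-step accuracy give large gains in horizon length.
    for p in (0.9, 0.99, 0.999):
        print(f"step accuracy {p}: horizon of about {horizon_length(p):.1f} steps")
```

Under these assumptions, moving from 90% to 99% to 99.9% single-step accuracy stretches the 50%-success horizon from roughly 6.6 to 69 to 693 steps, which is why seemingly marginal gains on short-task benchmarks can still matter a lot for long tasks.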