Google just gave AI agents access to your credit card. Yesterday they launched Agent Payments Protocol (AP2), which lets AI complete purchases for you 100% autonomously. Here's why you'll never transact the same way again: 🧵 https://t.co/bZpOgNPxeo

If you’re not following @Scobleizer’s X lists, you’re missing out. Just activated a full deck in X Pro 🤯 I can see everything now! https://t.co/0ohMEfFoKQ

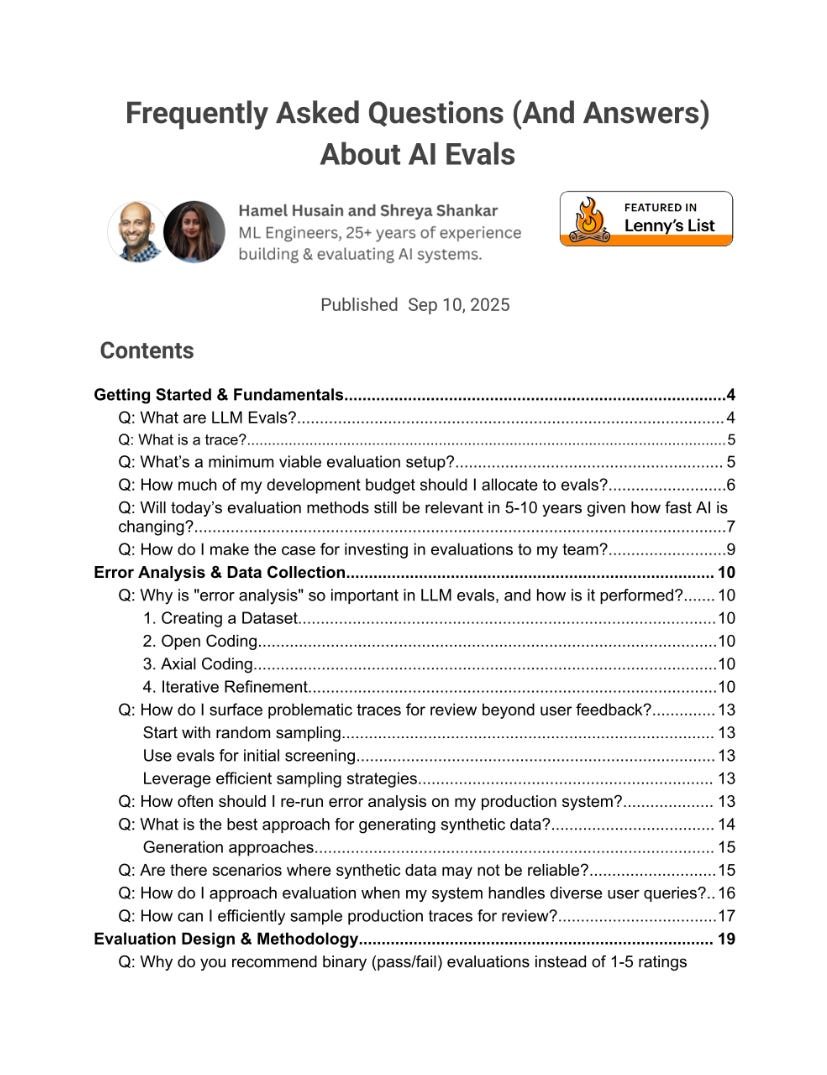

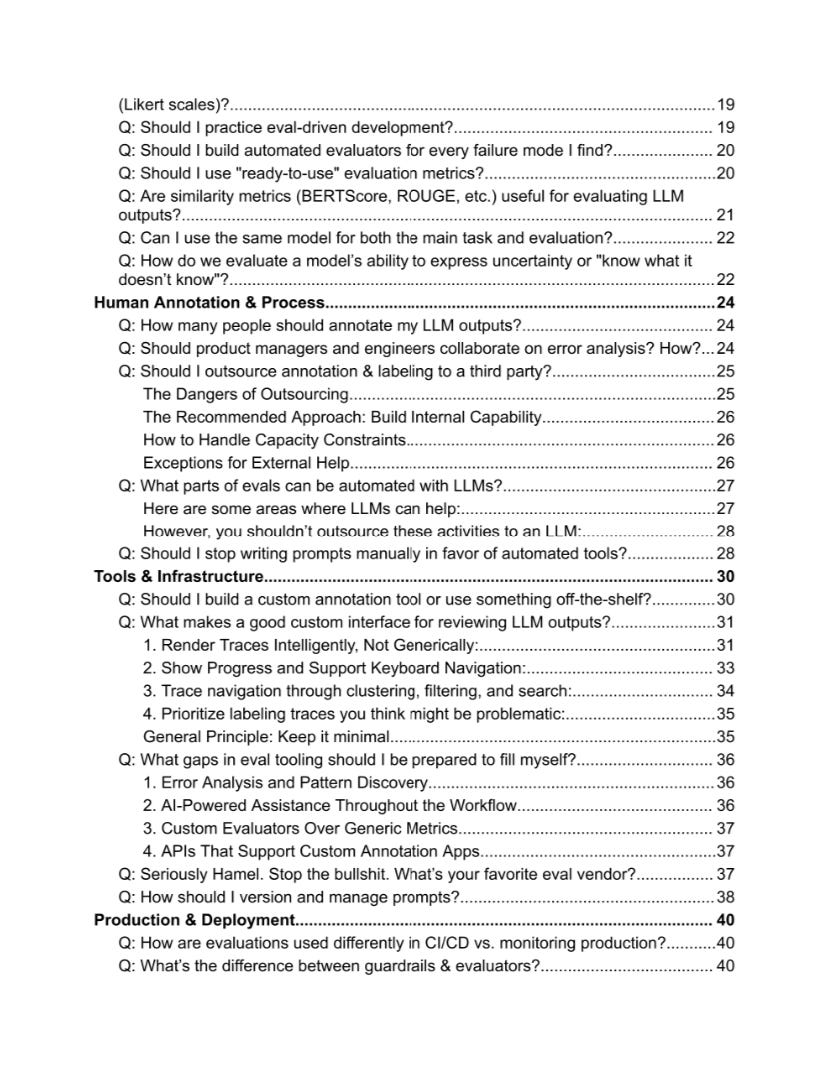

I got permission to publish a new AI Evals FAQ (Sep, 2025). It’s massive. And it's a goldmine for engineers and AI PMs.

@HamelHusain and @sh_reya run the world’s No. 1 AI Evals course. Together with top AI architects and ML researchers, they answer the most common questions from 1,500+ students. 51 pages of unique insights and resources. And 100% free.

Some of the questions:

Q: What are LLM Evals?
Q: What’s a minimum viable evaluation setup?
Q: Why is "error analysis" so important in LLM evals, and how is it performed?
Q: What is the best approach for generating synthetic data?
Q: Are there scenarios where synthetic data may not be reliable?
Q: Why do you recommend binary (pass/fail) evaluations?
Q: Should I use "ready-to-use" evaluation metrics?
Q: How many people should annotate my LLM outputs?
Q: Should PMs and engineers collaborate on error analysis? How?
Q: What parts of evals can be automated with LLMs?
Q: Should I stop writing prompts manually in favor of automated tools?
Q: What makes a good custom interface for reviewing LLM outputs?
Q: What gaps in eval tooling should I be prepared to fill myself?
Q: How should I version and manage prompts?
Q: How are evaluations used differently in CI/CD vs. monitoring production?
Q: What’s the difference between guardrails & evaluators?
Q: Is RAG dead?
Q: How should I approach evaluating my RAG system?
Q: How do I evaluate sessions with human handoffs?
Q: How do I evaluate complex multi-step workflows?
Q: How do I evaluate agentic workflows?

Get a full PDF (Google Drive, 51 pages): https://t.co/4azaPGfIxr

Hope that helps! Feel free to share it with your network.

P.S. The guys shared a crazy amount of knowledge for free. But if you want to dive even deeper, here's a 35% discount for the AI Evals Cohort: https://t.co/MtnOgX99i9 (The next cohort: Oct 6 to Nov 1, 2025)

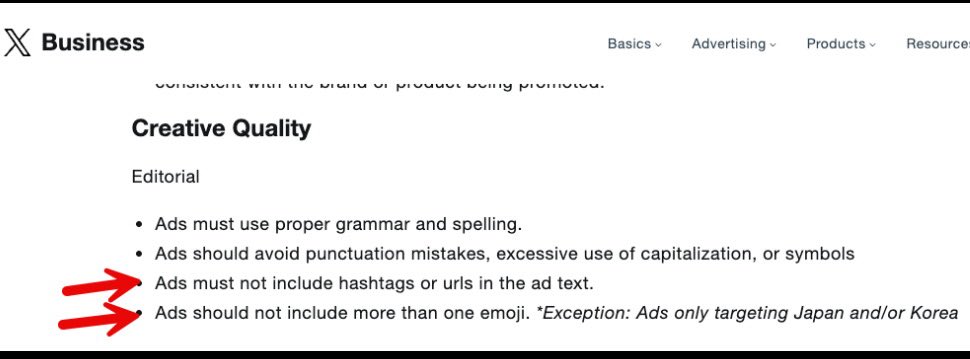

These are X’s ad creative rules, which seem worth paying attention to even if you aren’t using X for ads, because X will likely down-rank organic content that breaks them too? (Already knew about URLs, but not emojis) https://t.co/n8iEOh9QQn

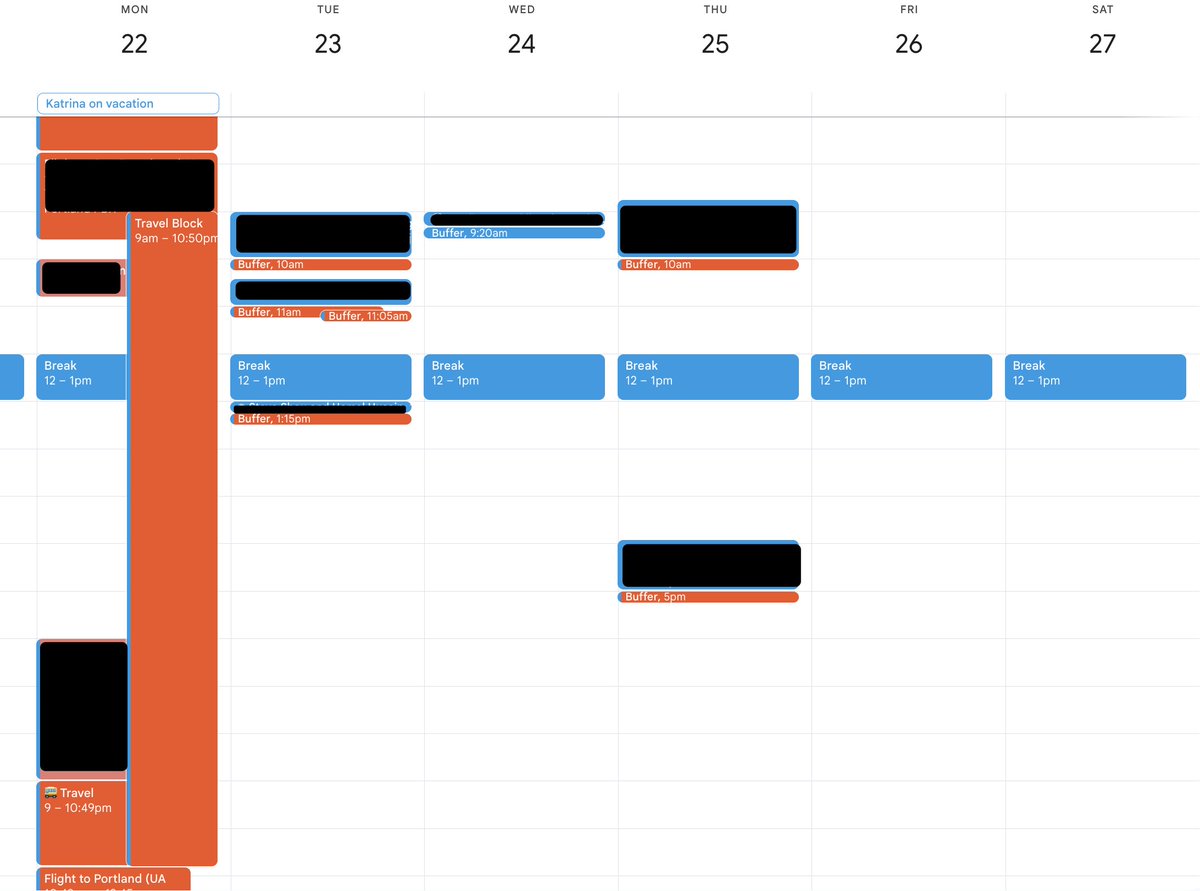

@JnBrymn Making good progress but need to be more aggressive https://t.co/E9HAskiE3X

Multi-directory support: https://t.co/Pccmmf9KEE

I like making GPUs go brrt at @modal. I wrote up what I've learned along the way in an extension to the GPU Glossary -- our "CUDA Docs for Humans". Introducing: the GPU 𝔓𝔢𝔯𝔣𝔬𝔯𝔪𝔞𝔫𝔠𝔢 Glossary. https://t.co/9IDfgGqVFX https://t.co/ESU62gVa0A

I am giving a talk in ~1 hr on some of the recent work we have been doing at Berkeley around the DocETL and DocWrangler projects! The talk is titled "Agentic Query Optimization for Unstructured Data Processing," and it will be livestreamed on YouTube: https://t.co/S5hC4oM5kS

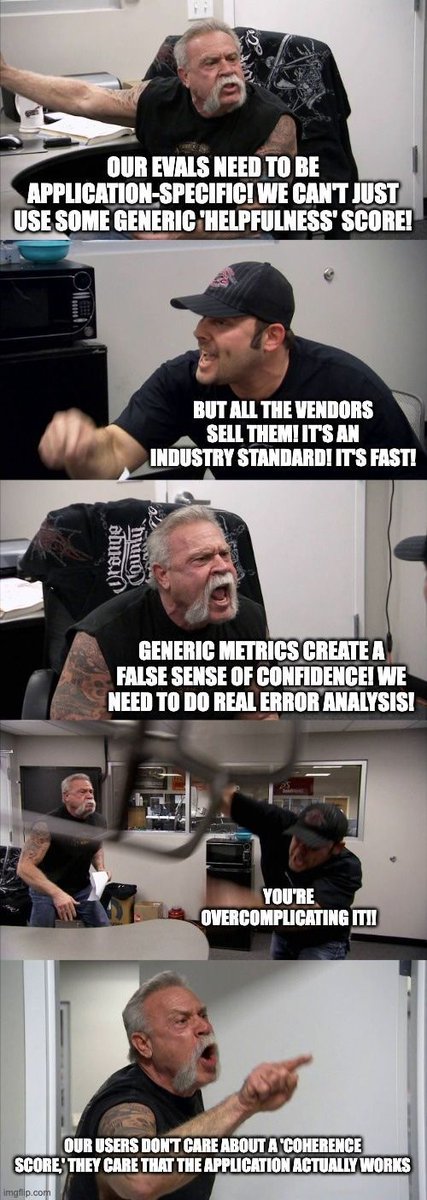

Making Eval memes. (Click to expand this one) https://t.co/IsgCxiU2CB

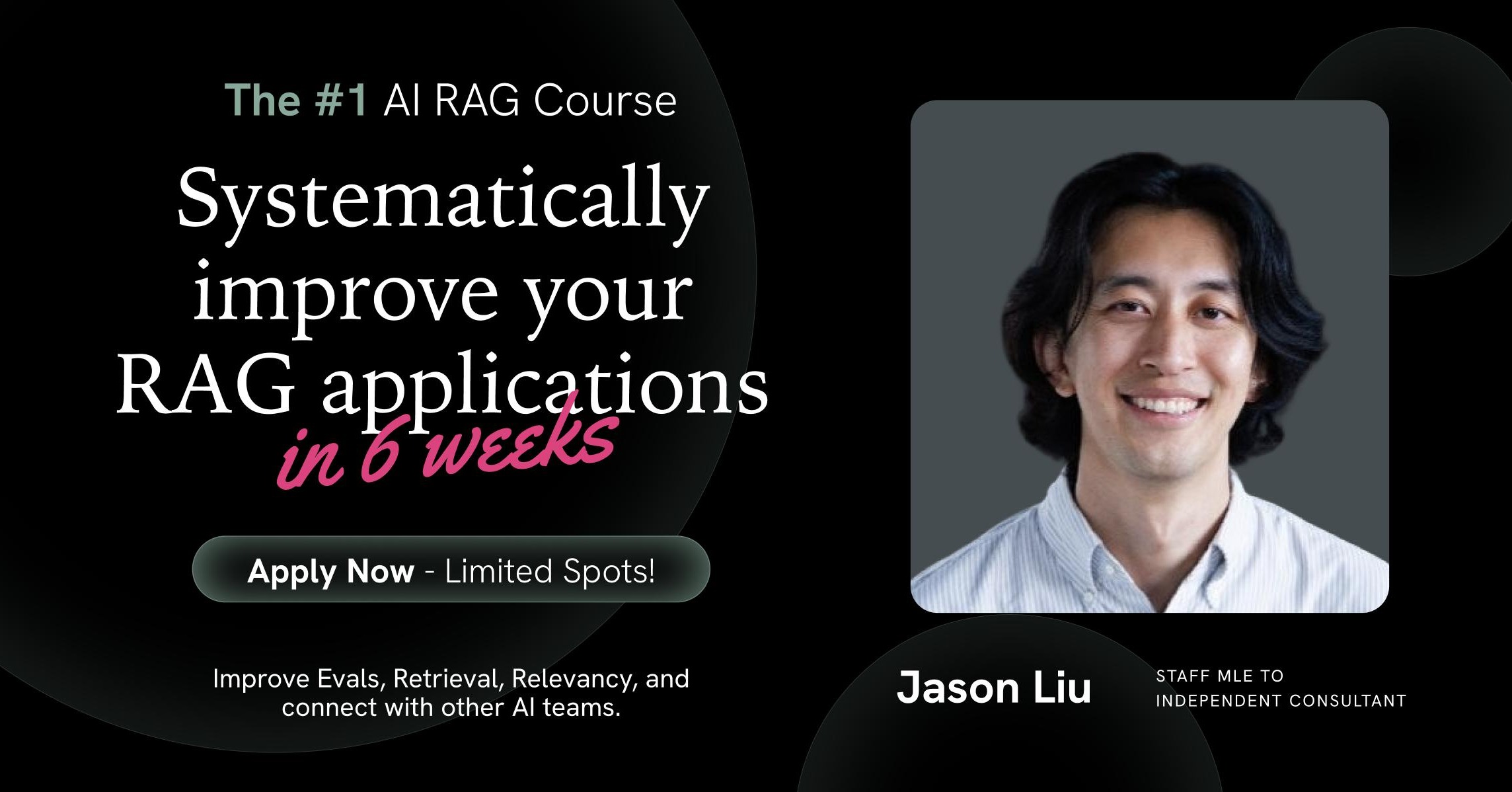

Also learning evals is the #1 course on Maven right now https://t.co/GG34qRqNYY

Trend I'm following: How AI labs' hunger for high-quality evals and data-labeling is creating some of the fastest growing and profitable companies in the world, e.g.

- @mercor_ai ($1m -> $500m in 17 months 😮)
- @joinHandshake ($0 -> $100m in <12 months 😮)
- @HelloSurgeAI ($1.5B+

Demo of the Qwen3-recommender hybrid returning both semantic IDs and natural language!

• steering recs via natural language
• explaining the recommendation
• naming the bundle of recommendations
• multi-turn conversation to get recs

watch till the end for the bloopers lol https://t.co/mHpKhiY7OQ

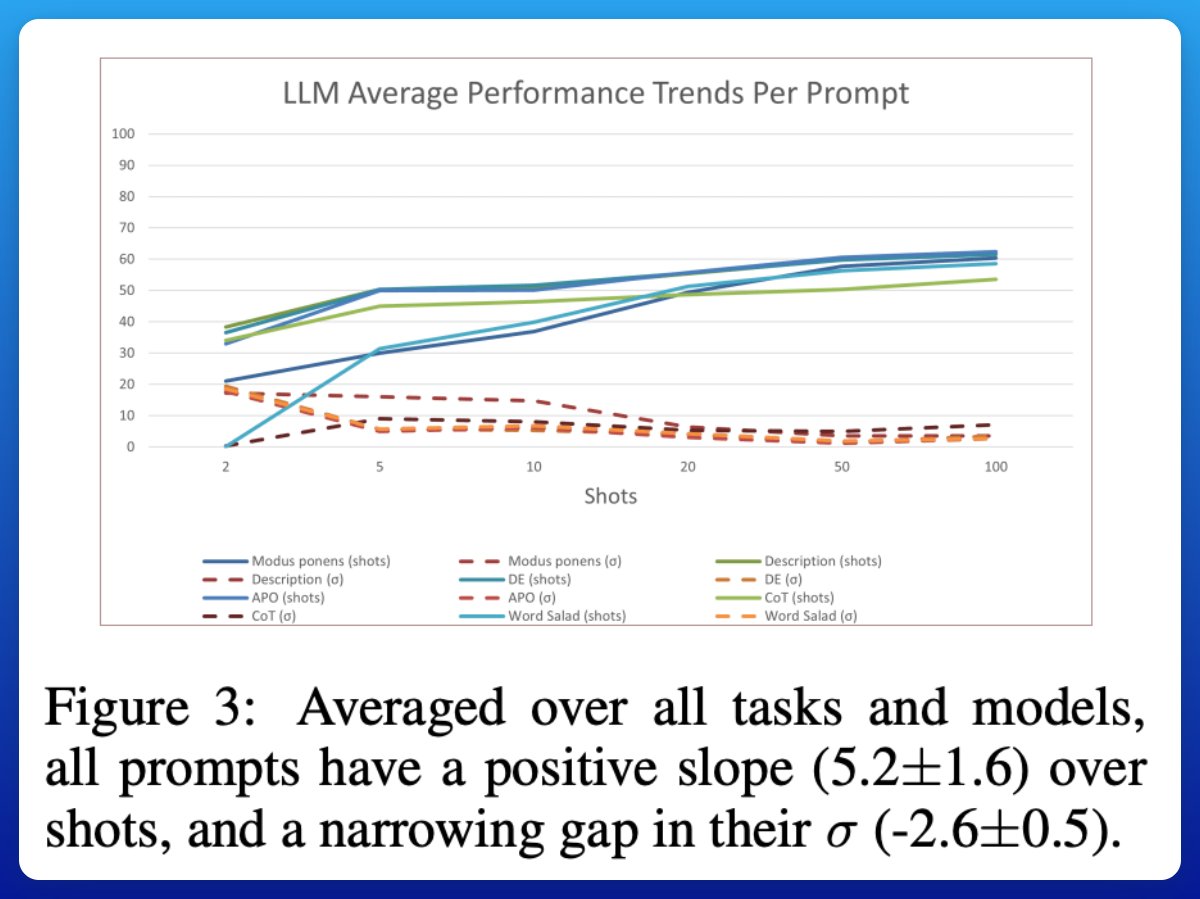

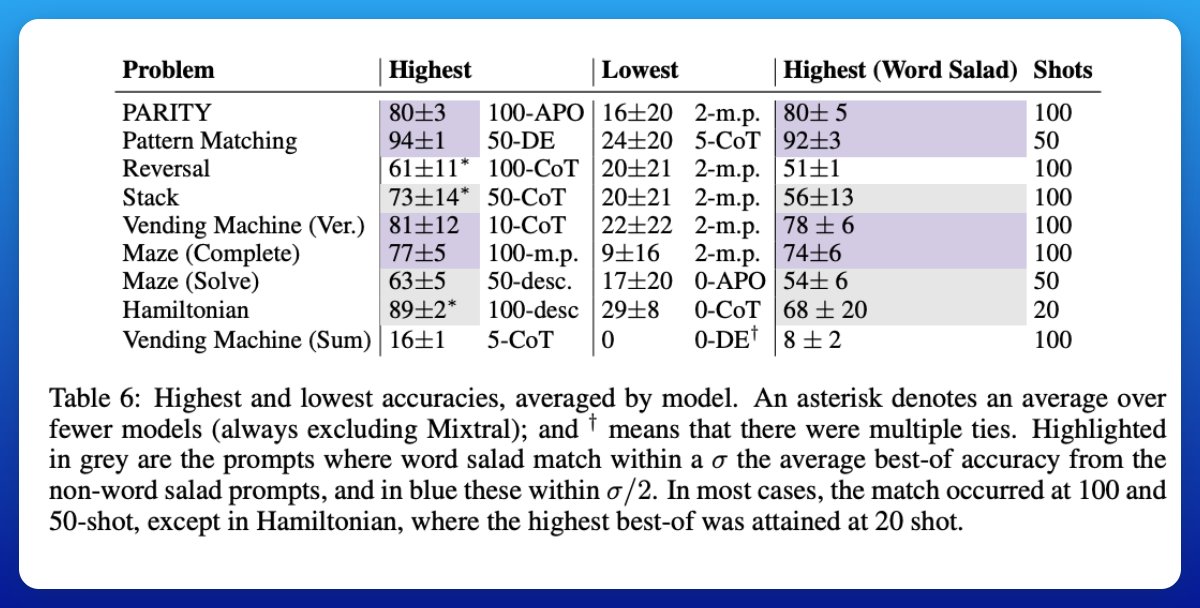

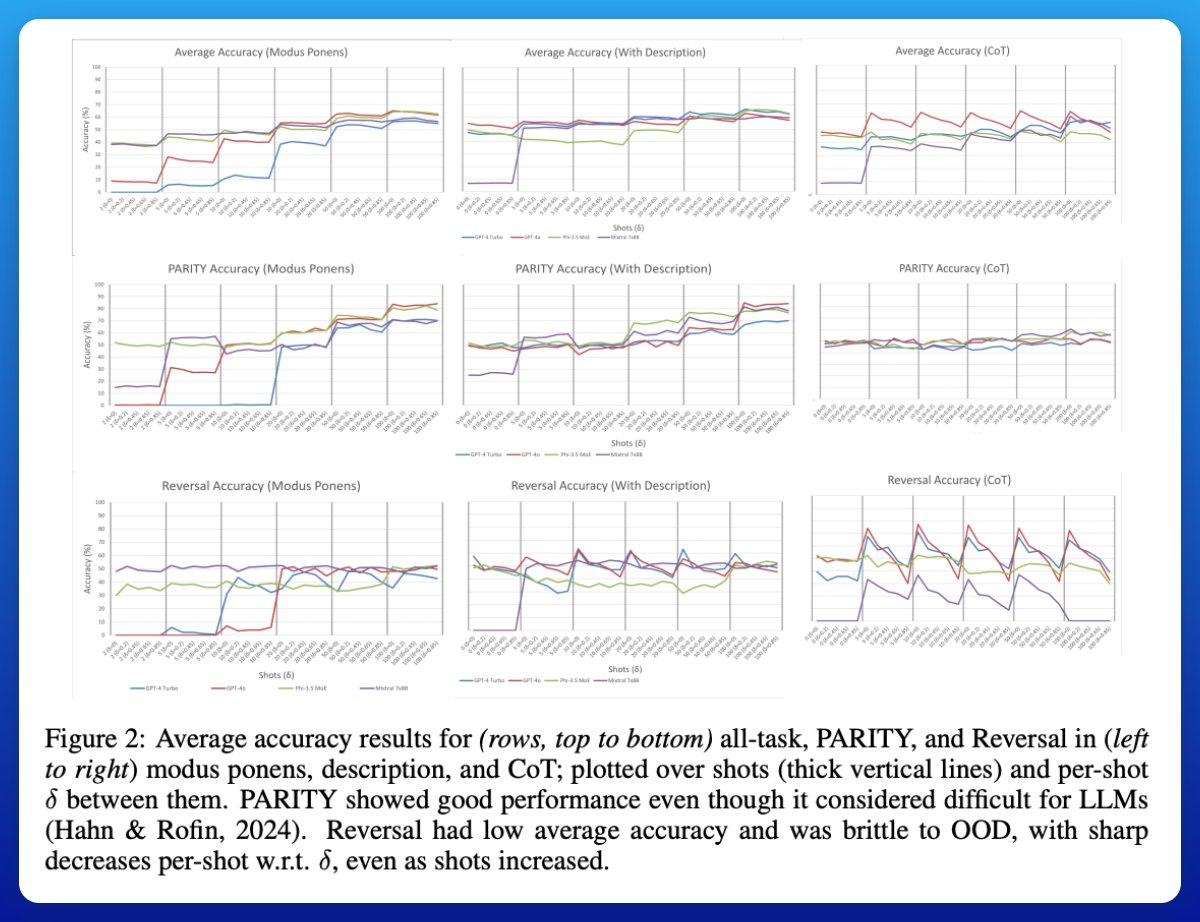

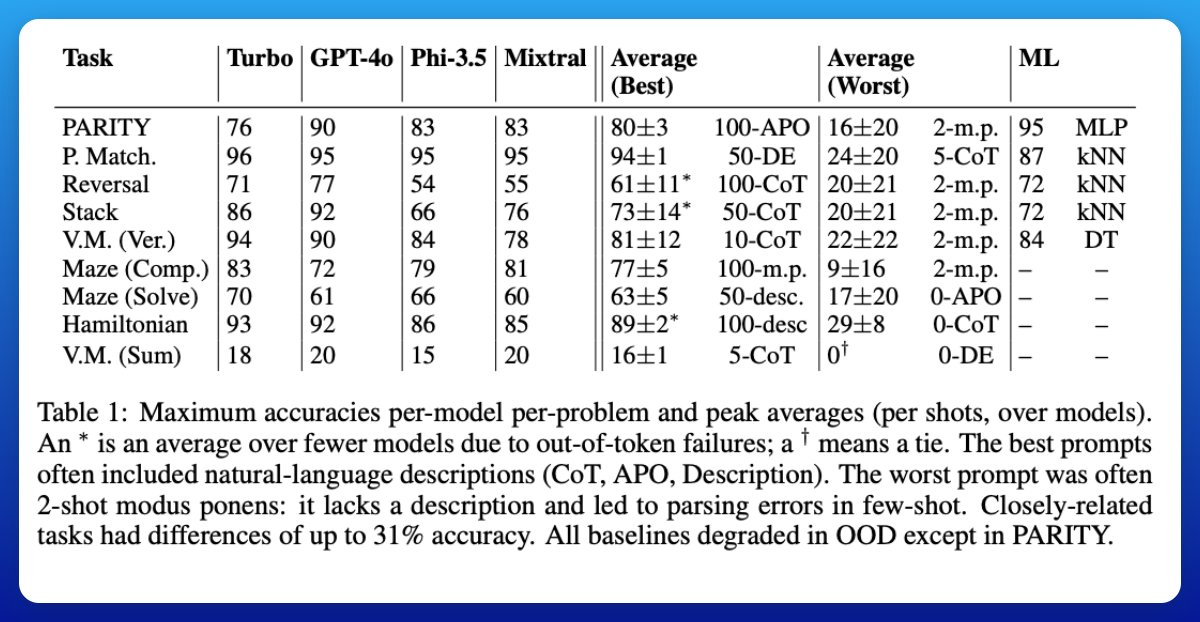

Learning happens, but needs many examples. With 50–100 examples in a prompt, accuracy improves steadily and models of different sizes and brands start looking similar. This challenges the common few-shot story: a handful of examples usually isn’t enough. https://t.co/V7H79E42F6

Prompt wording matters less over time. If you replace instructions with random word salad, performance eventually catches up, as long as the exemplars remain intact. But if you scramble the examples themselves (“salad-of-thought”), performance collapses. https://t.co/VzlMgkpxRe
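To make the two conditions concrete, here is a minimal sketch of how such prompts could be assembled. It is an illustration, not the setup used in the thread's source: the toy string-reversal task, the `build_prompt` and `word_salad` helpers, and the way exemplars are scrambled are all assumptions.

```python
import random

def build_prompt(instruction, exemplars, query):
    """Join an instruction, (input, output) exemplars, and a final query."""
    shots = "\n".join(f"Input: {x}\nOutput: {y}" for x, y in exemplars)
    return f"{instruction}\n\n{shots}\n\nInput: {query}\nOutput:"

def word_salad(text, rng):
    """Shuffle the words of a string so it no longer reads as an instruction."""
    words = text.split()
    rng.shuffle(words)
    return " ".join(words)

rng = random.Random(0)
instruction = "Reverse the characters of each input string."
# Hypothetical toy task with 60 exemplars, inside the 50-100 range mentioned above.
exemplars = [(f"item{i}", f"item{i}"[::-1]) for i in range(60)]

# Condition A: scrambled instruction, exemplars left intact.
salad_instruction_prompt = build_prompt(word_salad(instruction, rng), exemplars, "hello")

# Condition B: intact instruction, every exemplar output scrambled.
broken = [(x, "".join(rng.sample(y, len(y)))) for x, y in exemplars]
salad_exemplar_prompt = build_prompt(instruction, broken, "hello")
```

Sending both prompts to the same model and comparing accuracy on held-out queries would be one way to probe the claim.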

Weak on robustness. When the test data looks different from the training examples (distribution shift), performance drops sharply. Chain-of-thought and automated prompt optimization are especially brittle. https://t.co/lU8cnBAxoQ

Not all tasks are equal. Some formal problems (like simple pattern matching) are nearly solved, while others (like string reversal or certain arithmetic tasks) remain tough. Interestingly, two tasks that look similar can differ by as much as 31% in accuracy. https://t.co/qkZgJliUng

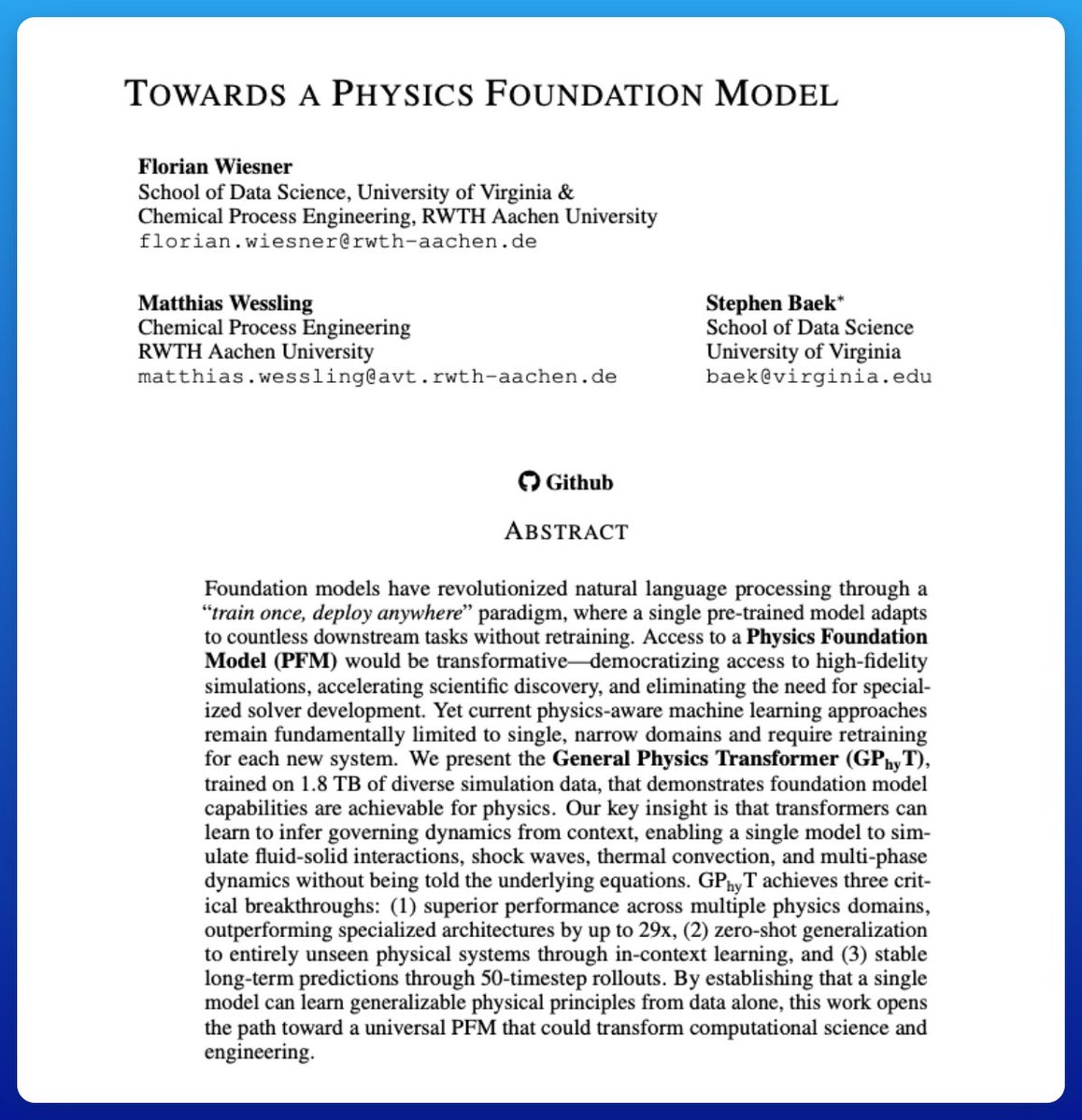

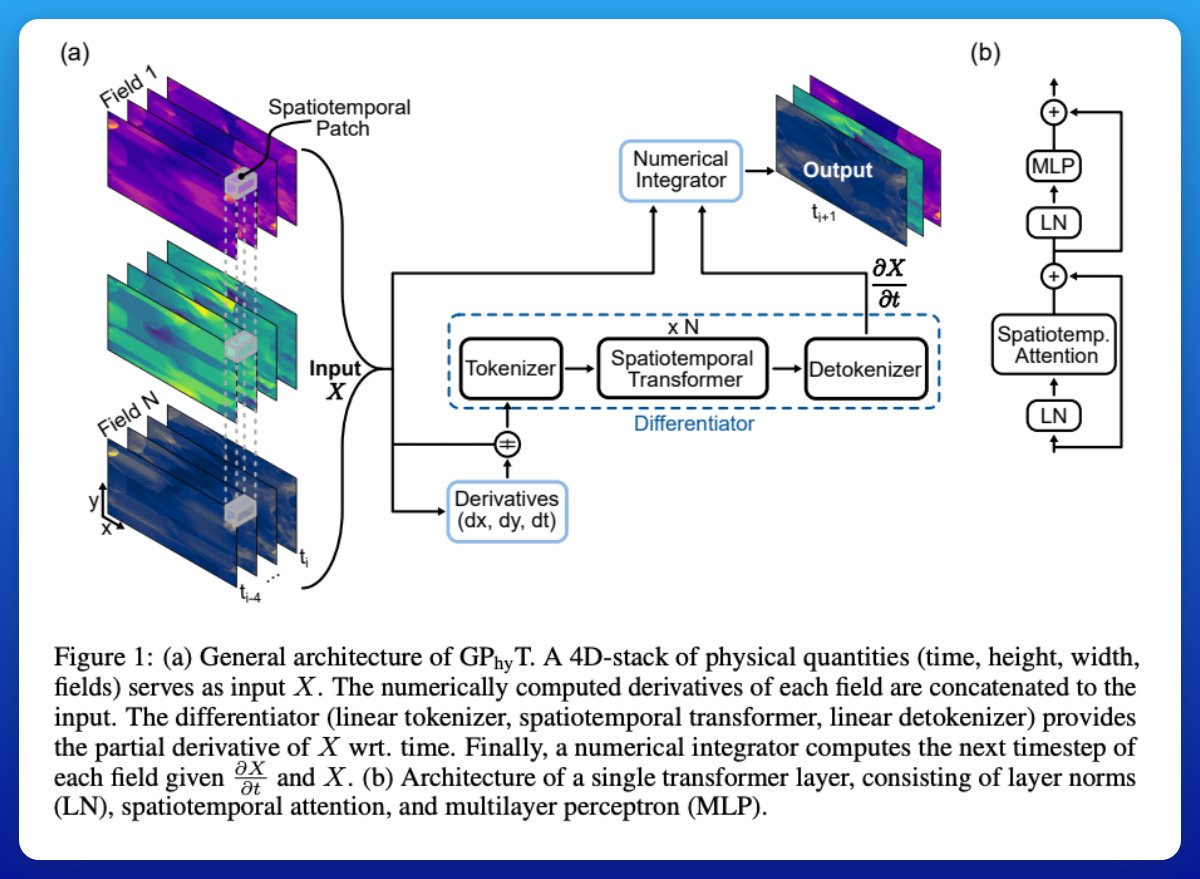

Towards a Physics Foundation Model

Proposes GPhyT (General Physics Transformer), a large transformer trained on 1.8 TB of simulation data across fluid flows, shock waves, heat transfer, and multiphase dynamics. Here are a few key notes: https://t.co/CKkW9mQGsM

How the model works

Think of GPhyT as a hybrid of a neural net and a physics engine. It takes in a short history of what’s happening (like a few frames of a simulation), figures out the rules of change from that, then applies a simple update step to predict what comes next. It’s like teaching a transformer to play physics frame prediction with hints from basic calculus.
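The "estimate the rate of change, then apply a simple update" idea can be sketched in a few lines. This is only an illustration: the post does not name the integrator, so forward Euler is an assumption, and `predict_derivative` is a hypothetical finite-difference stand-in for the learned transformer.

```python
def predict_derivative(history, dt):
    """Stand-in for the learned network: a finite-difference estimate of du/dt
    from the last two frames. A trained model would replace this function."""
    prev, last = history[-2], history[-1]
    return [(b - a) / dt for a, b in zip(prev, last)]

def step(history, dt):
    """Forward Euler update (assumed): next = last + dt * estimated rate."""
    rate = predict_derivative(history, dt)
    return [u + dt * r for u, r in zip(history[-1], rate)]

# Toy 1-D field sampled at three points, growing linearly in time.
history = [[0.0, 1.0, 2.0], [0.5, 1.5, 2.5]]
next_frame = step(history, dt=0.5)
print(next_frame)  # [1.0, 2.0, 3.0]
```

Keeping the derivative estimate separate from the update step is what lets the integrator stay fixed while the learned part is swapped in.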

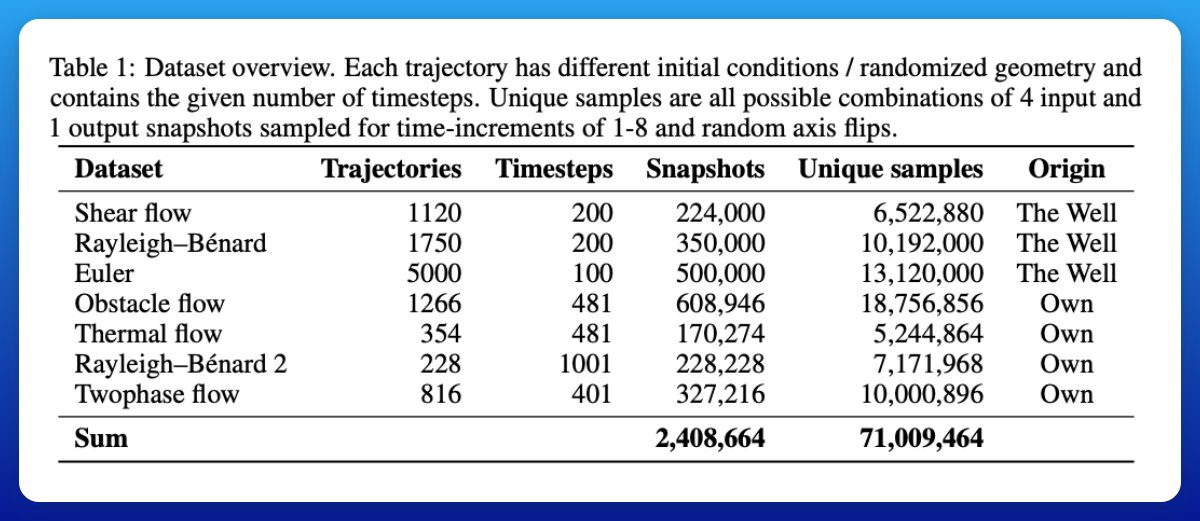

The data it learned from

Instead of sticking to one type of fluid or system, the team pulled together 1.8 TB of simulations covering many different scenarios: calm flows, turbulent flows, heat transfer, fluids going around obstacles, even two-phase flows through porous material. Variable Δt sub-sampling and per-dataset normalization encourage in-context inference across scales.
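As a rough sketch of what variable Δt sub-sampling and per-dataset normalization might look like in a data loader: the function names, the z-score normalization, and the random-stride scheme below are assumptions for illustration, not details taken from the paper.

```python
import random
import statistics

def normalize(frames):
    """Per-dataset normalization (assumed z-score): zero mean, unit std over all values."""
    values = [v for frame in frames for v in frame]
    mean = statistics.fmean(values)
    std = statistics.pstdev(values) or 1.0  # guard against constant data
    return [[(v - mean) / std for v in frame] for frame in frames]

def sample_window(frames, history_len, max_stride, rng):
    """Pick a random stride so the same trajectory is seen at several time steps."""
    stride = rng.randint(1, max_stride)
    span = history_len * stride  # index offset from first history frame to target
    start = rng.randint(0, len(frames) - span - 1)
    idx = [start + i * stride for i in range(history_len + 1)]
    return stride, [frames[i] for i in idx[:-1]], frames[idx[-1]]

# Toy trajectory: 20 frames with two values each.
frames = [[float(t), float(2 * t)] for t in range(20)]
normed = normalize(frames)
stride, history, target = sample_window(normed, history_len=4, max_stride=3, rng=random.Random(0))
```

Because the stride changes from sample to sample, a model trained this way has to read the effective time step from the history itself, which is one plausible reading of "in-context inference across scales".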

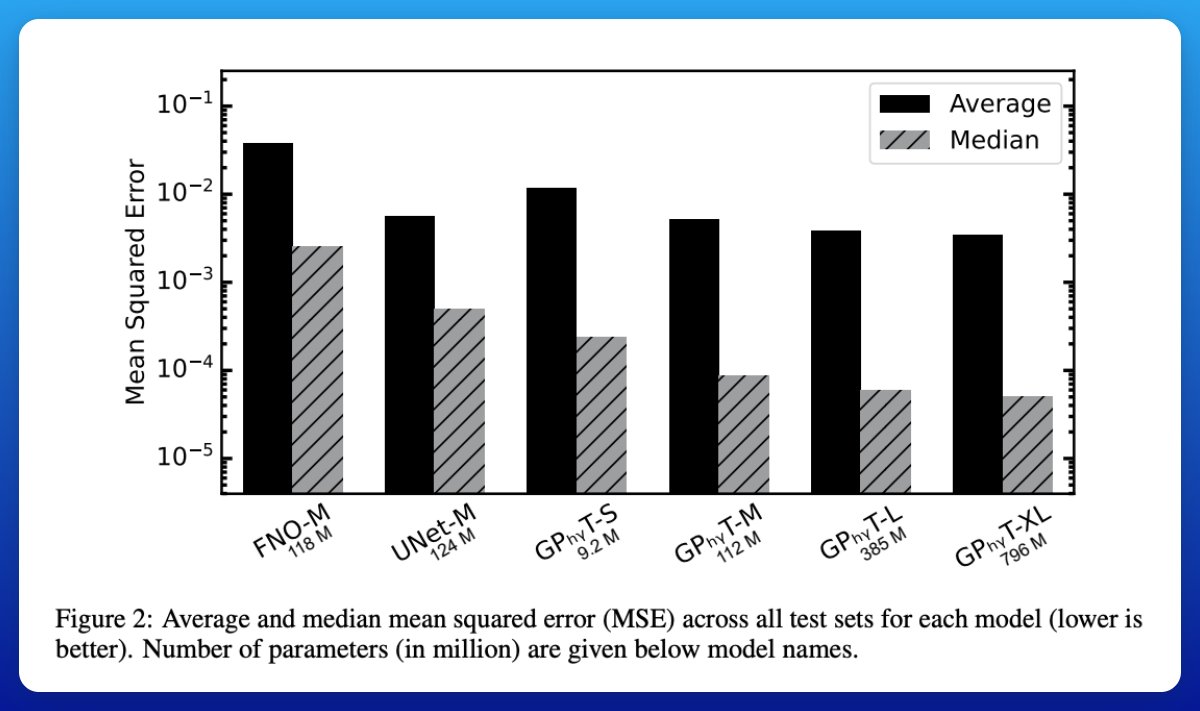

How well it performs

On single-step forecasts across all test sets, GPhyT cuts median MSE vs. UNet by about 5× and vs. FNO by about 29× at similar parameter counts. https://t.co/1ELUh7WMwG

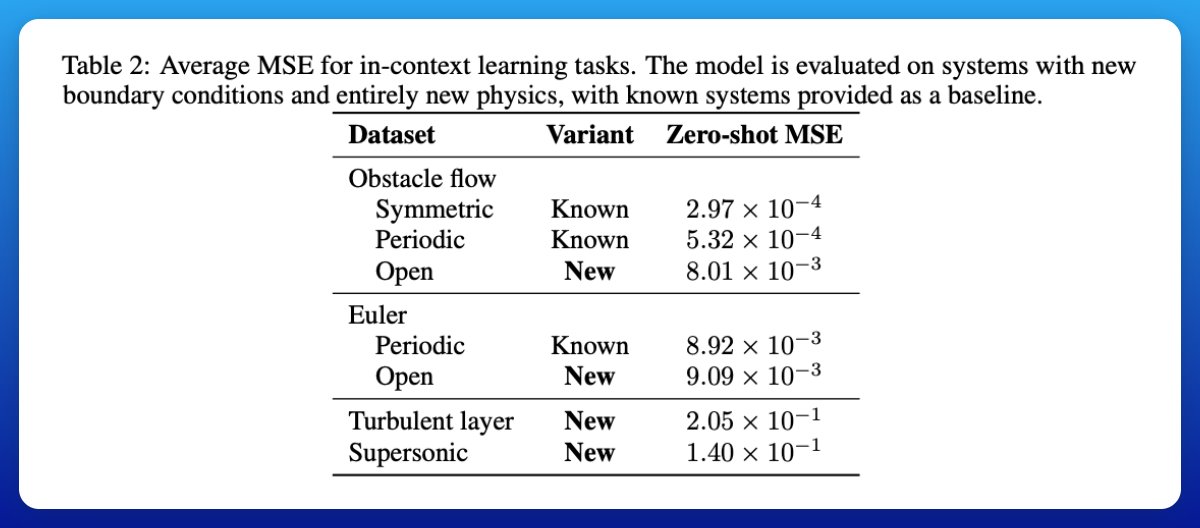

Generalization to new cases

The wild part: you can hand it a new situation it never trained on, like a boundary condition it hasn’t seen, or even supersonic flow, and it will still produce physically plausible results. It doesn’t nail the fine details, but it gets the big structures right, like correctly forming bow shocks.

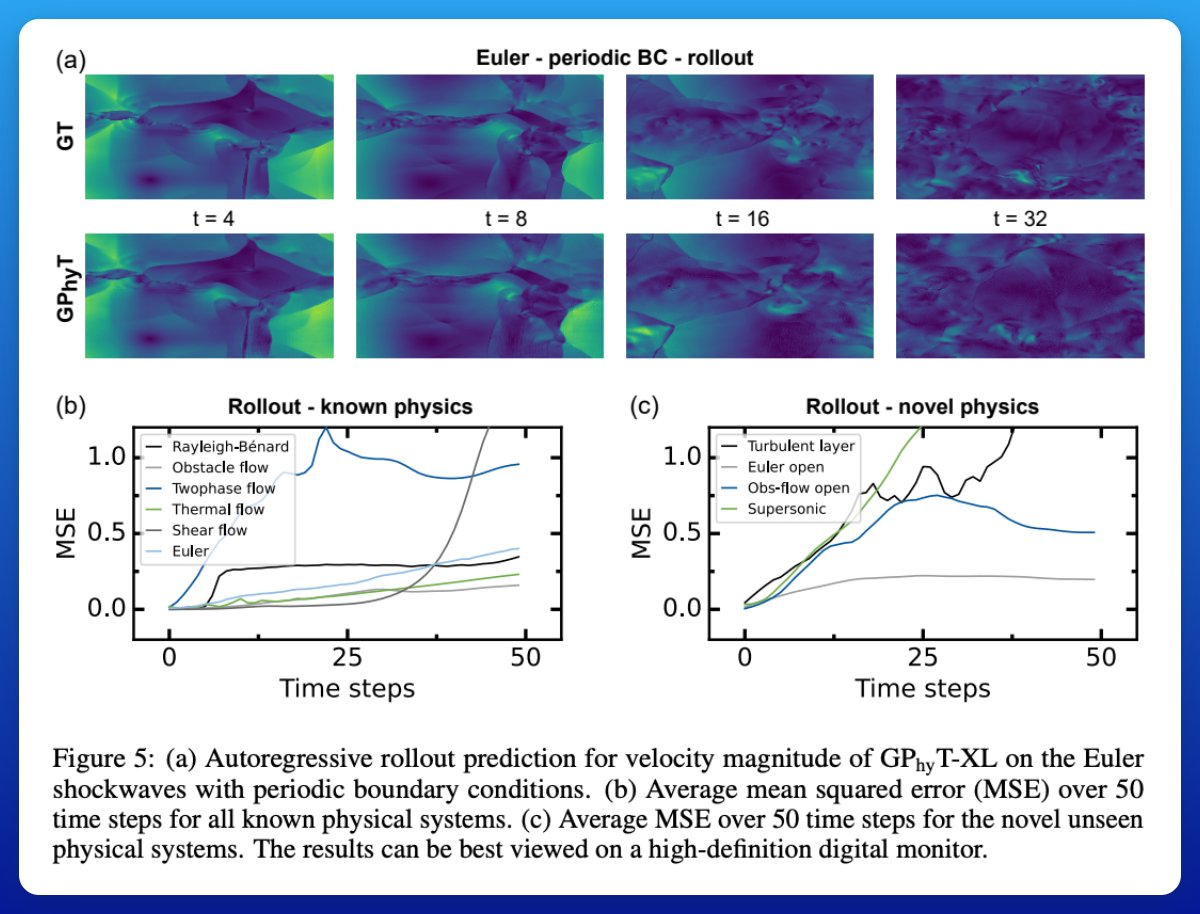

Stability over time

Rollouts over 50 steps show the model keeps the overall dynamics consistent. Tiny details fade as predictions accumulate, but the large-scale behavior (like global flow structure) stays believable much longer than you’d expect for a learned model. This tells me there is still plenty of room to explore transformer-based foundation models in other domains.

Paper: https://t.co/WVlW51Qkqt
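For readers unfamiliar with rollouts, the 50-step evaluation described above amounts to feeding each prediction back in as input. A minimal sketch, where `toy_step` is a hypothetical stand-in for the trained model:

```python
def rollout(history, step_fn, n_steps):
    """Autoregressive rollout: each prediction is appended to the input window."""
    window = list(history)
    out = []
    for _ in range(n_steps):
        nxt = step_fn(window)
        out.append(nxt)
        window = window[1:] + [nxt]  # drop the oldest frame, keep window size fixed
    return out

def toy_step(window):
    """Hypothetical stand-in model: linear extrapolation from the last two frames."""
    return [2 * b - a for a, b in zip(window[-2], window[-1])]

frames = rollout([[0.0], [1.0]], toy_step, n_steps=50)
print(len(frames), frames[-1])  # 50 [51.0]
```

With a learned model, any error in one step becomes part of the next input, which is why fine details fade first over long horizons.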

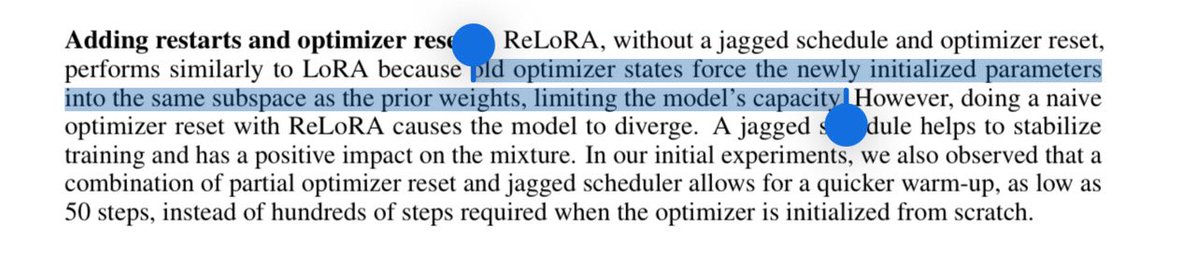

@kalomaze @cocinomial https://t.co/v2GjSCOW24

We are hiring at https://t.co/kWsq4hb8PC - I’m in SF next week too so would love to connect with builders. Let’s go! https://t.co/OZfFyGxghe

happening in 30 minutes! enroll even if you can't make it and we'll send you the recording and notes! https://t.co/sphGArPkZL

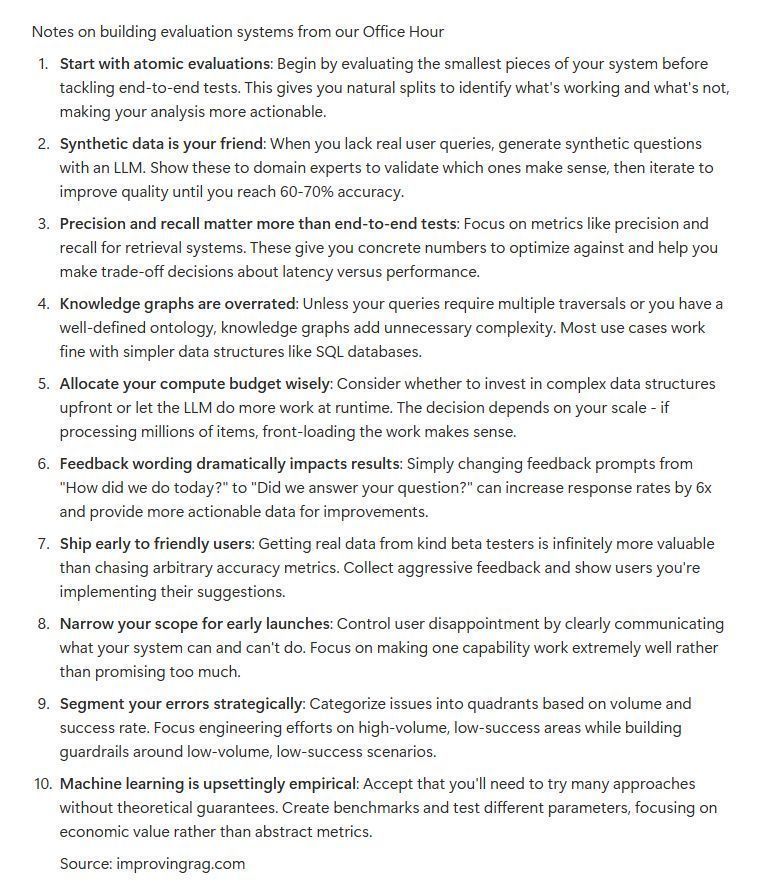

first office hours of our rag cohort https://t.co/RSvLGzkEs5

We kicked things off today. Check out the course here: https://t.co/UsWStpXh1D https://t.co/FVJ031l9l3

Most teams debug reactively, missing systematic patterns that could prevent user churn before it happens. Ben Hylak (CTO of Raindrop, ex-Apple Vision Pro/SpaceX/Google) teaches you to proactively identify which user intents break your agents and build monitoring that catches issues before users leave. "Reliable Agents: Intent-Driven Failure Detection" - Oct 1, 6PM UTC / 2PM EDT / 11AM PDT. The study notes and the recording will be sent to all participants, so make sure to enroll even if you can't attend live! 🛡️ https://t.co/fawWTnMtjZ

Learn more about Systematically Improving RAG Applications here: https://t.co/UsWStpXh1D

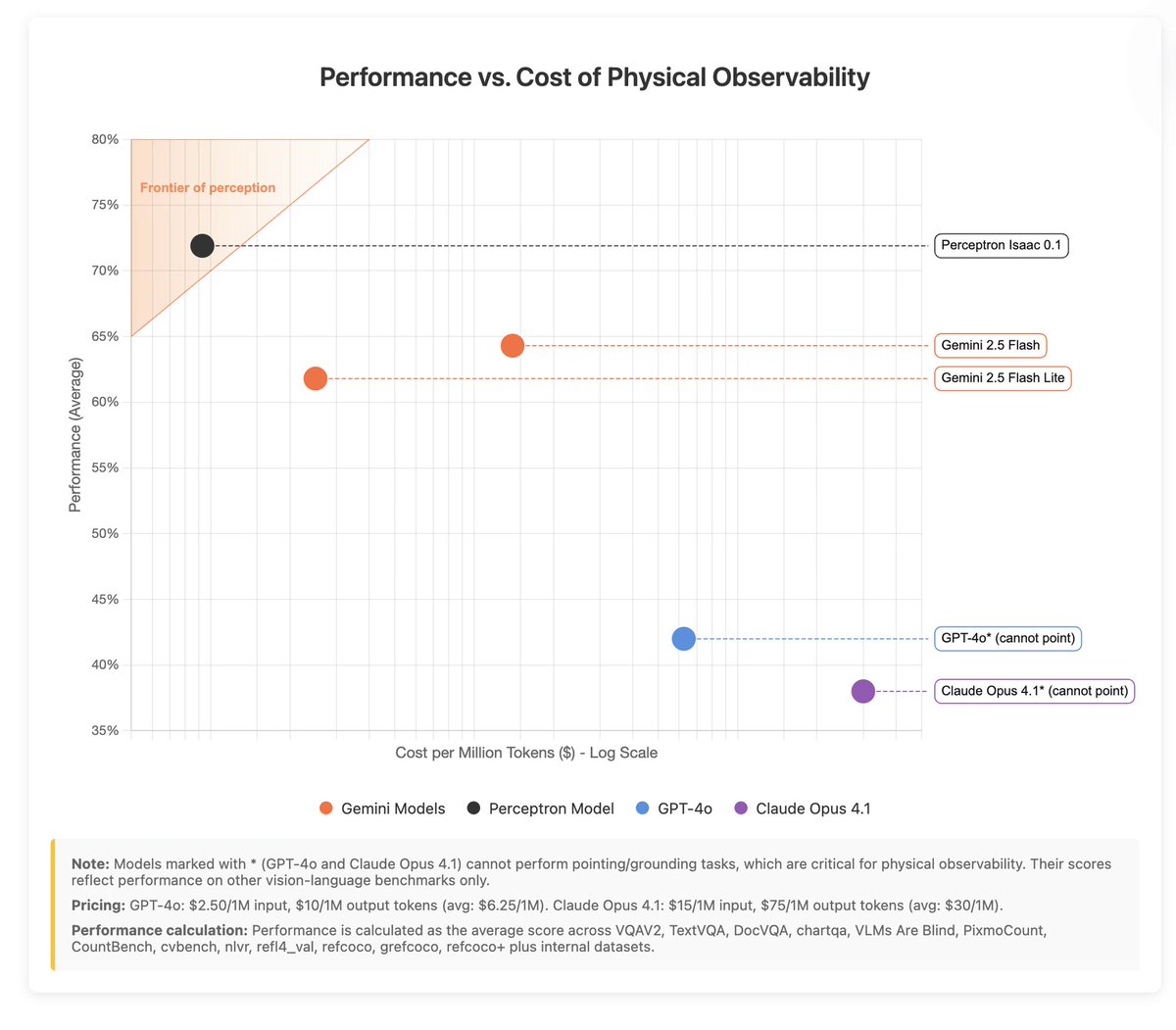

1/ Introducing Isaac 0.1 — our first perceptive-language model. 2B params, open weights. Matches or beats models significantly larger on core perception. We are pushing the efficient frontier for physical AI. https://t.co/dJ1Wjh2ARK https://t.co/hf3aq3Vb4i