@omarsar0

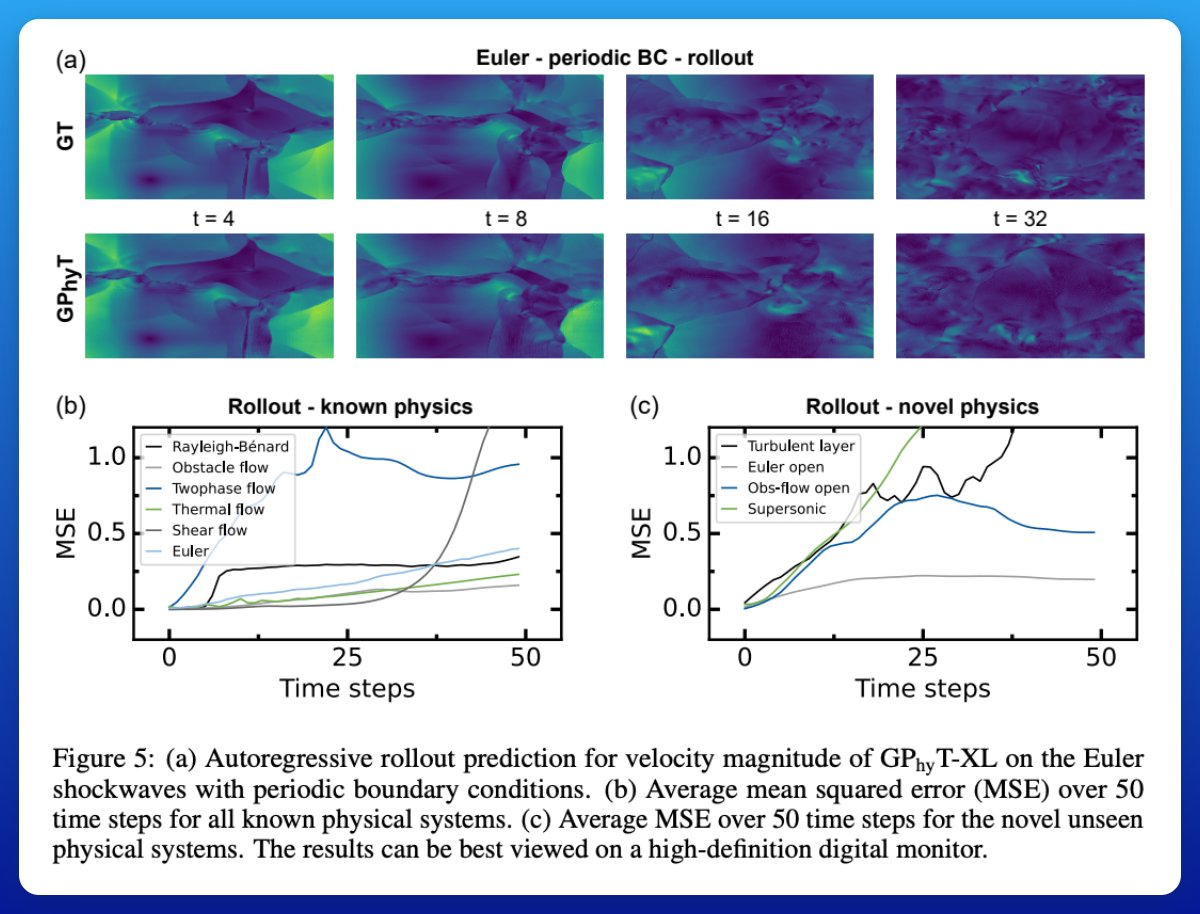

Stability over time

Rollouts over 50 steps show the model keeps the overall dynamics consistent. Tiny details fade as predictions accumulate, but the large-scale behavior (like global flow structure) stays believable much longer than you’d expect for a learned model.

This tells me there is still room to explore transformer-based foundation models in different domains.

Paper: https://t.co/WVlW51Qkqt