@PawelHuryn

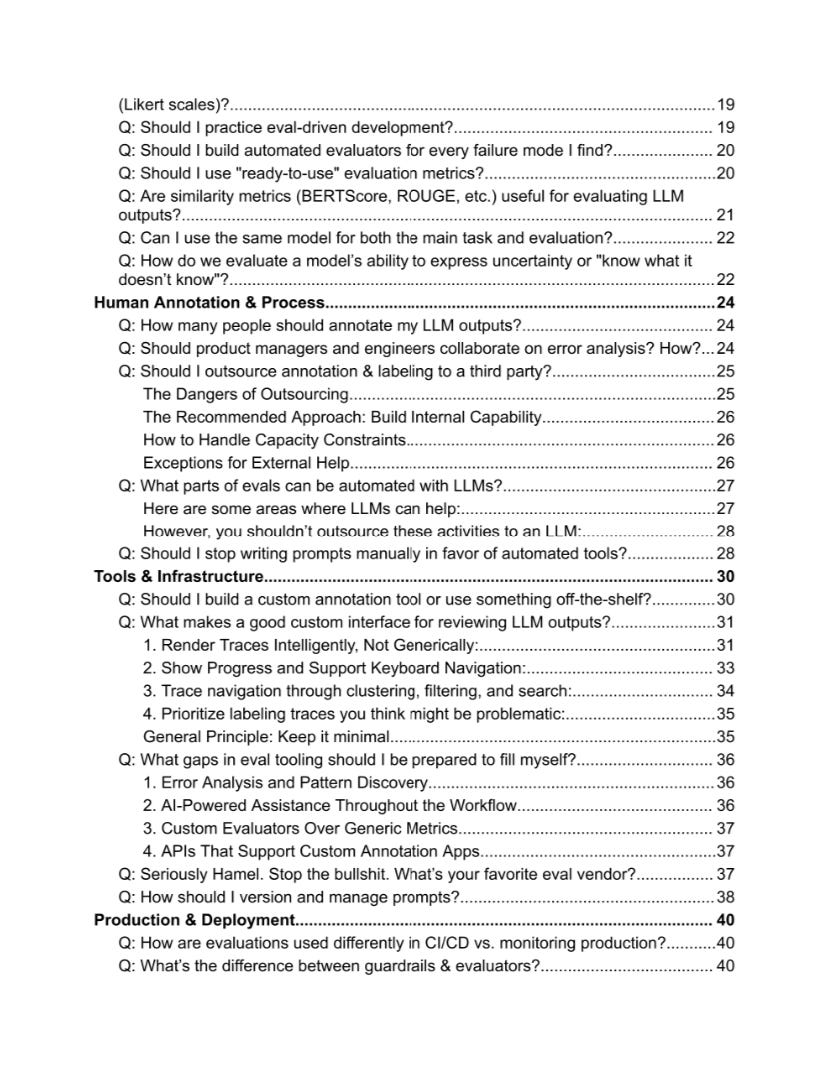

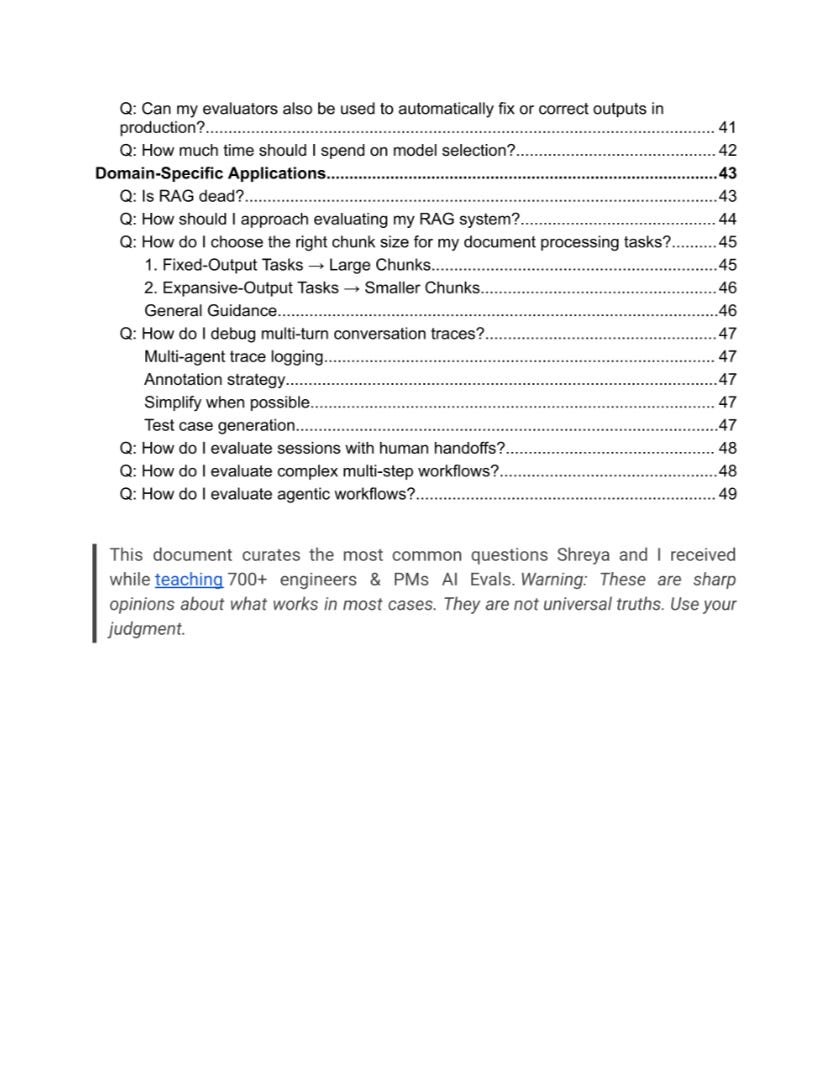

I got permission to publish a new AI Evals FAQ (Sep 2025).

It’s massive. And it's a goldmine for engineers and AI PMs.

@HamelHusain and @sh_reya run the world’s No. 1 AI Evals course. Together with top AI architects and ML researchers, they answer the most common questions from 1,500+ students.

51 pages of unique insights and resources. And 100% free.

Some of the questions:

- Q: What are LLM Evals?
- Q: What’s a minimum viable evaluation setup?
- Q: Why is "error analysis" so important in LLM evals, and how is it performed?
- Q: What is the best approach for generating synthetic data?
- Q: Are there scenarios where synthetic data may not be reliable?
- Q: Why do you recommend binary (pass/fail) evaluations?
- Q: Should I use "ready-to-use" evaluation metrics?
- Q: How many people should annotate my LLM outputs?
- Q: Should PMs and engineers collaborate on error analysis? How?
- Q: What parts of evals can be automated with LLMs?
- Q: Should I stop writing prompts manually in favor of automated tools?
- Q: What makes a good custom interface for reviewing LLM outputs?
- Q: What gaps in eval tooling should I be prepared to fill myself?
- Q: How should I version and manage prompts?
- Q: How are evaluations used differently in CI/CD vs. monitoring production?
- Q: What’s the difference between guardrails & evaluators?
- Q: Is RAG dead?
- Q: How should I approach evaluating my RAG system?
- Q: How do I evaluate sessions with human handoffs?
- Q: How do I evaluate complex multi-step workflows?
- Q: How do I evaluate agentic workflows?

Get a full PDF (Google Drive, 51 pages): https://t.co/4azaPGfIxr

Hope that helps! Feel free to share it with your network.

P.S. The guys shared a crazy amount of knowledge for free. But if you want to dive even deeper, here's a 35% discount for the AI Evals Cohort: https://t.co/MtnOgX99i9 (The next cohort: Oct 6 to Nov 1, 2025)