Your curated collection of saved posts and media

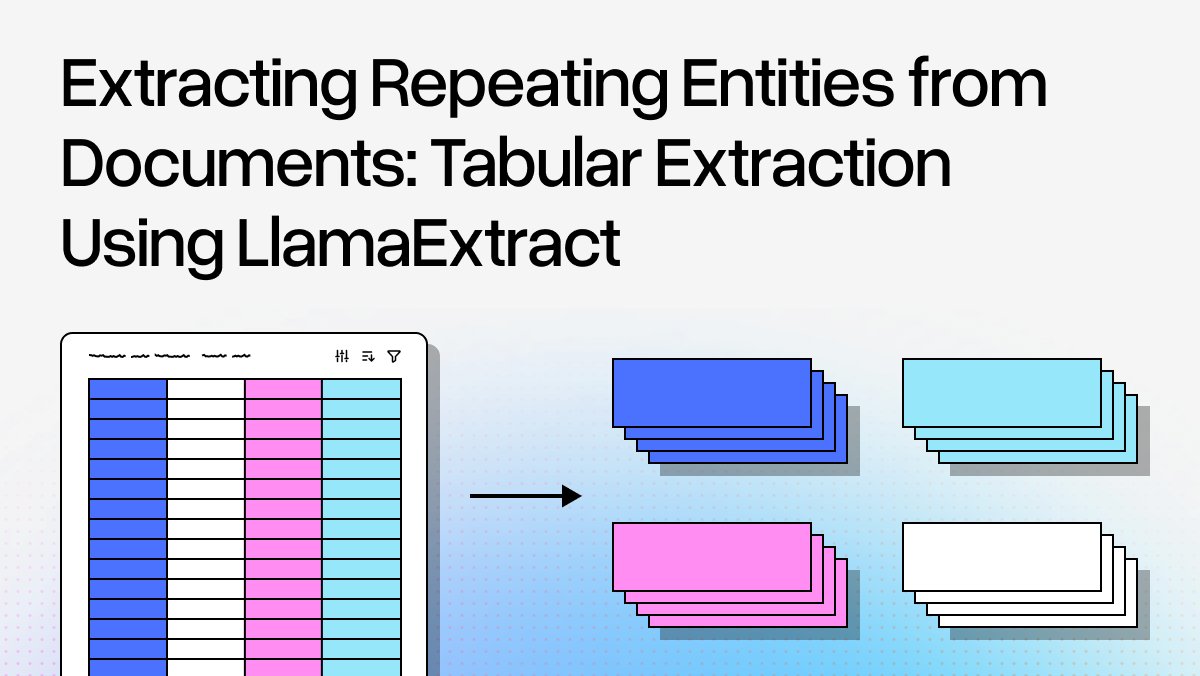

Extract data from table rows with precision using LlamaExtract's Table Row mode 📊 LlamaExtract now offers granular extraction capabilities that go beyond document-level processing, giving you powerful control over how your schema is applied: 🎯 Table row extraction applies your schema to each entity in ordered lists, table rows, and bulleted content, returning an array of JSON objects for structured data. Perfect for processing invoices, financial reports, inventory lists, and any document where you need to extract repeated structured information at the row level. Learn about all configuration options: https://t.co/LUGQPDww6c And look out for an in-depth blog on how to use table row extraction in complex use cases, coming out next week 🔥

Have a LlamaAgent organize your study material: meet StudyLlama, a web app that uses LlamaAgents to help you organize and gather insights from your notes and papers! How it works: 📊 Create categories to classify your notes 📓 Upload your notes 🤖 Watch as LlamaClassify assigns one of the categories to them and LlamaExtract produces a summary and a set of questions and answers 🗄️ The extracted information is uploaded to a vector database 🔍 Ask questions about your notes and get the most relevant answers! 🎥 Take a look at the demo below, where @itsclelia walks you through all these features and more! 👩💻 Check out the GitHub repo: https://t.co/3VgFvi08hm 🦙 Get started with LlamaCloud: https://t.co/yPVJzqoKal

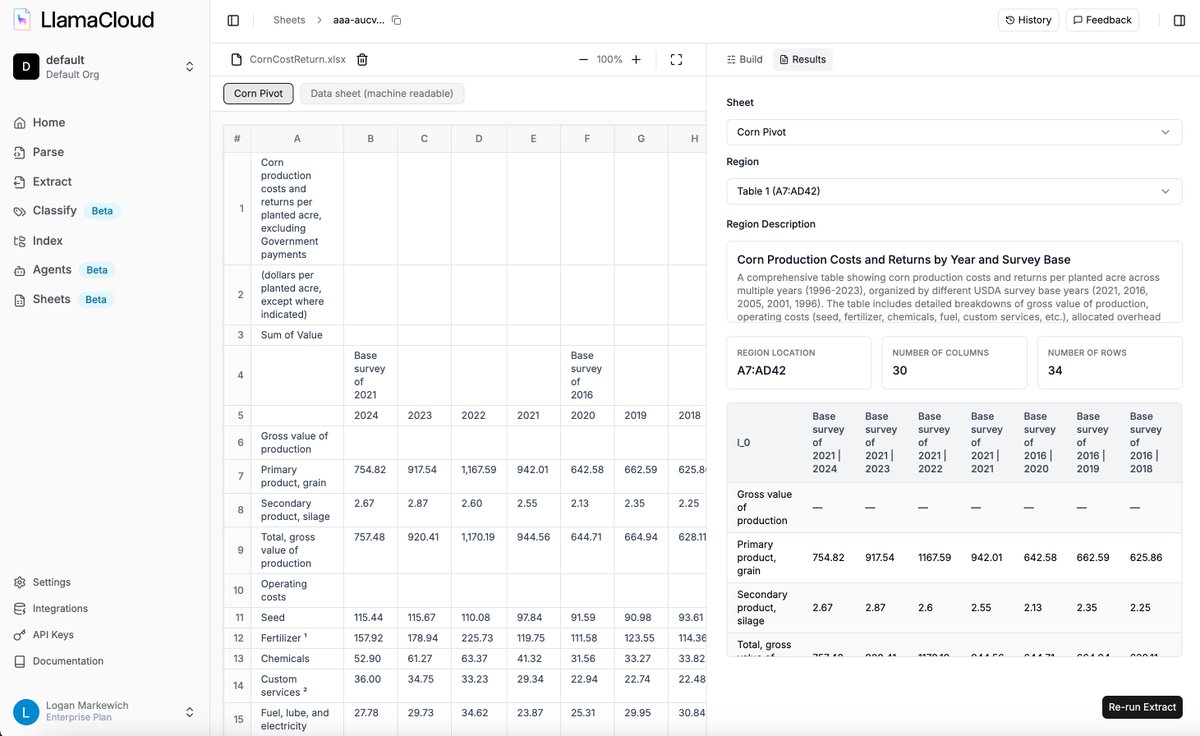

Announcing LlamaSheets in beta 🔥 Transform your messy spreadsheets into AI-ready data with our newest LlamaCloud API 📊 LlamaSheets (in beta) is a specialized API that automatically structures complex spreadsheets while preserving their semantic meaning and hierarchical context. 📋 Intelligent region classification that understands visual formatting like bold headers, colored cells, and merged regions to extract meaningful structure 🔧 Multi-stage processing pipeline with 40+ features per cell, producing clean parquet files with preserved data types 💼 Perfect for financial analysis, budget parsing, multi-region data consolidation, and automated reporting workflows 🤖 Simple 5-line Python integration that works with any agent framework including LlamaIndex, @claudeai Code, and Cursor. Try LlamaSheets for free in our playground UI or integrate directly via our Python SDK and REST API. Read the full announcement and get started: https://t.co/ju3J8bWa0A And watch our intro video here: https://t.co/A3Tt0vpjzs

We launched a new API today to let you parse any Excel sheet into a structured table. Take a look at this example on core production costs 🌽: 1️⃣ The table is located at the center of the sheet with headers, footnotes, and a hierarchical column layout 2️⃣ We get back a structured table with summarization, along with parsed row/column representations. This lets you directly run text-to-pandas/SQL over this data if you’re building an AI agent, or do ETL over it yourself. Check out our blog and come take a look! Blog: https://t.co/ySgZGp26Ty Try it out: https://t.co/XYZmx5TFz8
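To make the "run SQL over the parsed table" point concrete, here is a minimal sketch using only Python's standard `sqlite3` module. It does not call the LlamaSheets API: the `ROWS` data, the `costs` table, and its column names are made-up stand-ins for whatever a parsed spreadsheet region would actually contain.

```python
import sqlite3

# Hypothetical rows, as if they had already been parsed out of a spreadsheet
# region. The items and numbers are invented for illustration only.
ROWS = [
    ("Seed", 2023, 110.0),
    ("Fertilizer", 2023, 160.0),
    ("Seed", 2024, 115.0),
    ("Fertilizer", 2024, 150.0),
]


def total_cost_by_year(rows):
    """Load the rows into an in-memory SQLite table and sum cost per year."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE costs (item TEXT, year INTEGER, cost REAL)")
        conn.executemany("INSERT INTO costs VALUES (?, ?, ?)", rows)
        cursor = conn.execute(
            "SELECT year, SUM(cost) FROM costs GROUP BY year ORDER BY year"
        )
        return cursor.fetchall()
    finally:
        conn.close()


totals = total_cost_by_year(ROWS)
for year, total in totals:
    print(year, total)
```

Once the table is clean and typed, an agent only has to generate the `SELECT` statement; the messy layout handling has already happened upstream.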


Stop losing 80% of your data when extracting from long documents with repeating entities like catalogs, tables, and lists. Our new Table Row extraction target in LlamaExtract solves the core problem: instead of trying to extract everything at once (where LLMs get overwhelmed), we intelligently segment documents and extract entity by entity. 🎯 Long multi-page insurance directory? Extract all 380 hospitals (vs. only 40 with document-level extraction) 📋 Handle both formal tables and semi-structured content like product catalogs automatically 🔍 Define your schema for a single entity - we return the complete list with exhaustive coverage ⚡ Leverage LLM flexibility while achieving template-based reliability through smart segmentation This approach identifies repeating patterns, segments documents at natural boundaries, then applies your schema to focused chunks. Works with tables, lists, catalogs, or any document with distinguishable repeating entities. Read the full technical breakdown with code examples here: https://t.co/Zrghh3ZZ7N
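The segment-then-extract idea described above can be sketched in plain Python. This is an illustration under stated assumptions, not LlamaExtract's implementation or API: the `Hospital` schema, the numbered-entry boundary rule, and the rule-based `extract_one` (standing in for a per-chunk LLM call) are all hypothetical.

```python
import re
from dataclasses import dataclass, asdict


@dataclass
class Hospital:
    # Hypothetical schema for a single entity.
    name: str
    city: str
    phone: str


# Assumed boundary rule: each entity starts on a line like "12. Name".
ENTRY_START = re.compile(r"^\d+\.\s+", re.MULTILINE)


def segment(text: str) -> list[str]:
    """Split the document into one chunk per numbered entry."""
    starts = [m.start() for m in ENTRY_START.finditer(text)]
    bounds = starts + [len(text)]
    return [text[a:b].strip() for a, b in zip(bounds, bounds[1:])]


def extract_one(chunk: str) -> Hospital:
    """Stand-in for the per-chunk model call: parse 'Key: value' lines."""
    header, *rest = chunk.splitlines()
    fields = {}
    for line in rest:
        key, _, value = line.partition(":")
        fields[key.strip().lower()] = value.strip()
    return Hospital(
        name=ENTRY_START.sub("", header).strip(),
        city=fields.get("city", ""),
        phone=fields.get("phone", ""),
    )


def extract_rows(text: str) -> list[dict]:
    """Apply the single-entity schema to every chunk and return a list."""
    return [asdict(extract_one(chunk)) for chunk in segment(text)]


SAMPLE = """\
1. General Hospital
City: Springfield
Phone: 555-0100

2. Riverside Clinic
City: Shelbyville
Phone: 555-0199
"""

rows = extract_rows(SAMPLE)
print(rows)
```

Because each call only ever sees one small chunk, the number of extracted entities scales with the number of segments rather than with how much a single pass can hold at once.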

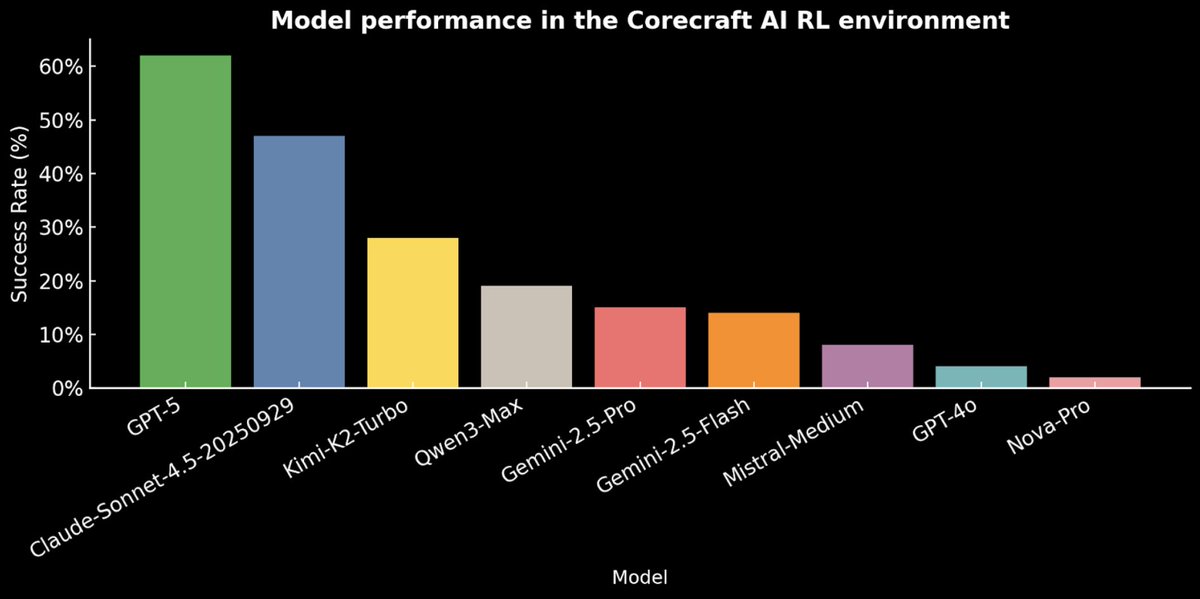

A good model can typically ace an academic benchmark. But even the best model out there will often hit a wall as soon as it’s handed a messy, real-world problem to solve. That’s why we build our own RL environments, to help frontier labs create models that can cope with the sort of rich, weird, unexpected world we all live in.

Teaching LLMs to follow instructions? Step 1. Teaching them to have taste? That's the endgame. An 8-line poem about the moon can check every box: ✅ Moon: mentioned ✅ Lines: 8 ✅ Rhymes: yes! ...and still be completely forgettable. The models that win aren't the most obedient. They're the ones that understand quality. Nuance. Voice. The ones that take your breath away. This is what separates the best frontier labs from the rest: understanding the difference between "technically correct" and "actually good." (That's why a lot of post-training researchers aren't just scientists. They're also... Artists.) Our CEO @echen talked about this on Gradient Dissent a couple months ago: training for taste, scaling the complexities of human judgment, and the art and science of post-training. https://t.co/Ui7936KsgA

Ilya Sutskever: We are no longer in the age of scaling, we are back to the age of research https://t.co/FcDwA5P5G5

@teortaxesTex I used to study pure math at UMN, GaTech and Berkeley. Modern mathematicians are short-sighted enough to not realize it's more efficient to build AGI and automate research than to do research by themselves, as those who realized this early already moved on to AI research. If Euler and Gauss were alive today, they would've been doing AI research. Many people confuse means with ends. Mathematicians either care more about having fun playing with puzzles than improving the speed of progress, or are simply purists who haven't thought outside the boundary of math. Here are the complaints I made to mathematicians back then: https://t.co/414Dq2Ypg2

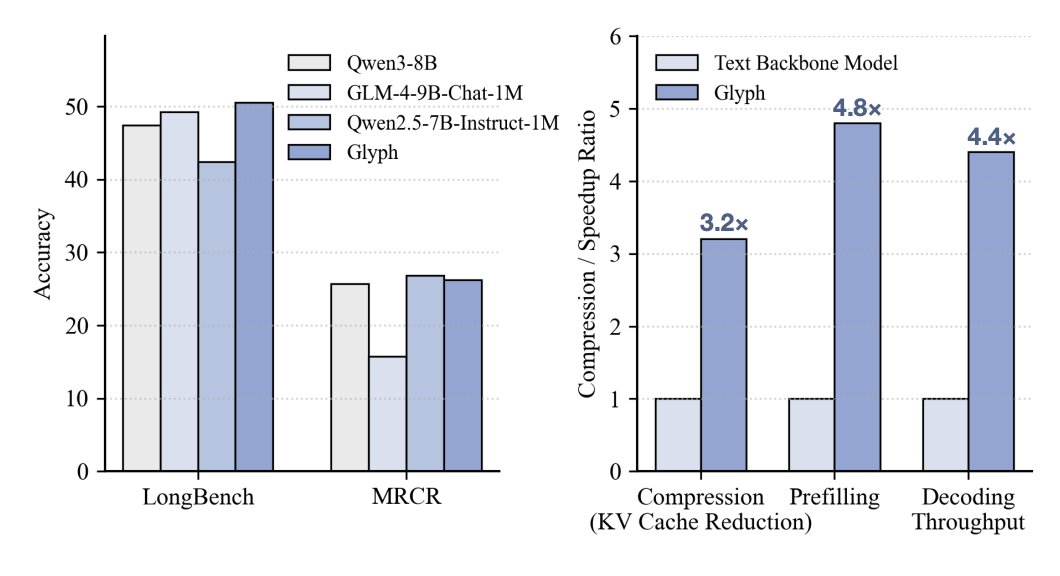

DeepSeek OCR dropped ... but honestly, Glyph [1], released the same day, showed something more interesting: 3–4× context compression and infilling cost reduction, with no performance hit on long-context QA and summarization, which is much less trivial than OCR in many cases. If that holds for harder agentic tasks, that’s a serious leap.

Cost-wise:

- Infilling cost drops sharply
- Decoding savings are more modest w/ DSA on

So the impact depends on how input-heavy your agentic workflow is (e.g., deep research vs coding from scratch).

Also relevant:

- BLT extensions [2,3] improved scaling over BPE baseline; aggressive compression of Glyph mainly helps infilling, not much on decoding (w/ DSA).
- BLT-fication could help Glyph reduce decoding cost further.
- Subagents make a bigger impact on latency and context length reduction. Simple yet powerful.
- And swapping vision encoders for small LMs is still an open question.

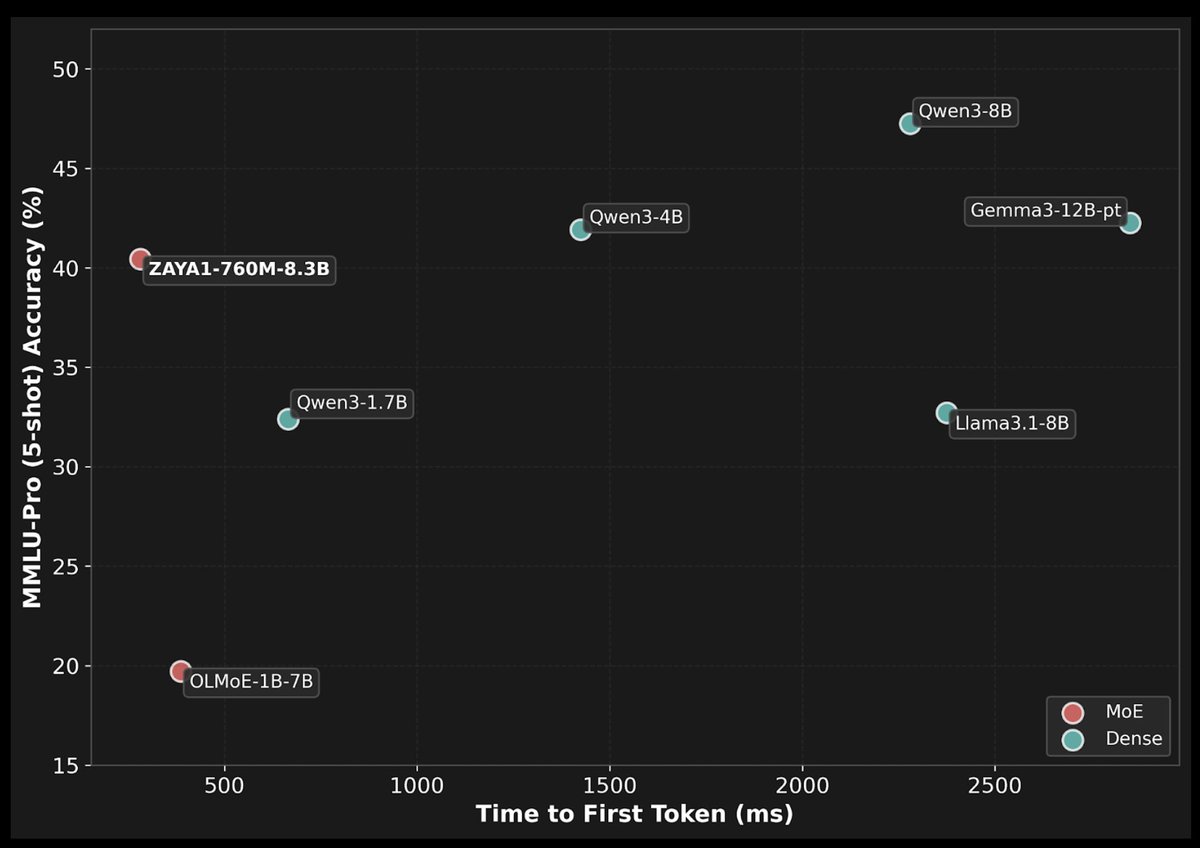

In collaboration with @AMD and @IBM, we @ZyphraAI are sharing ZAYA1-base! The first large-scale model on an integrated AMD hardware, software, and networking stack. ZAYA1 uses Zyphra’s novel MoE architecture with 760M active and 8.3B total params. Tech paper and more below👇 https://t.co/9RFBx3ORAw

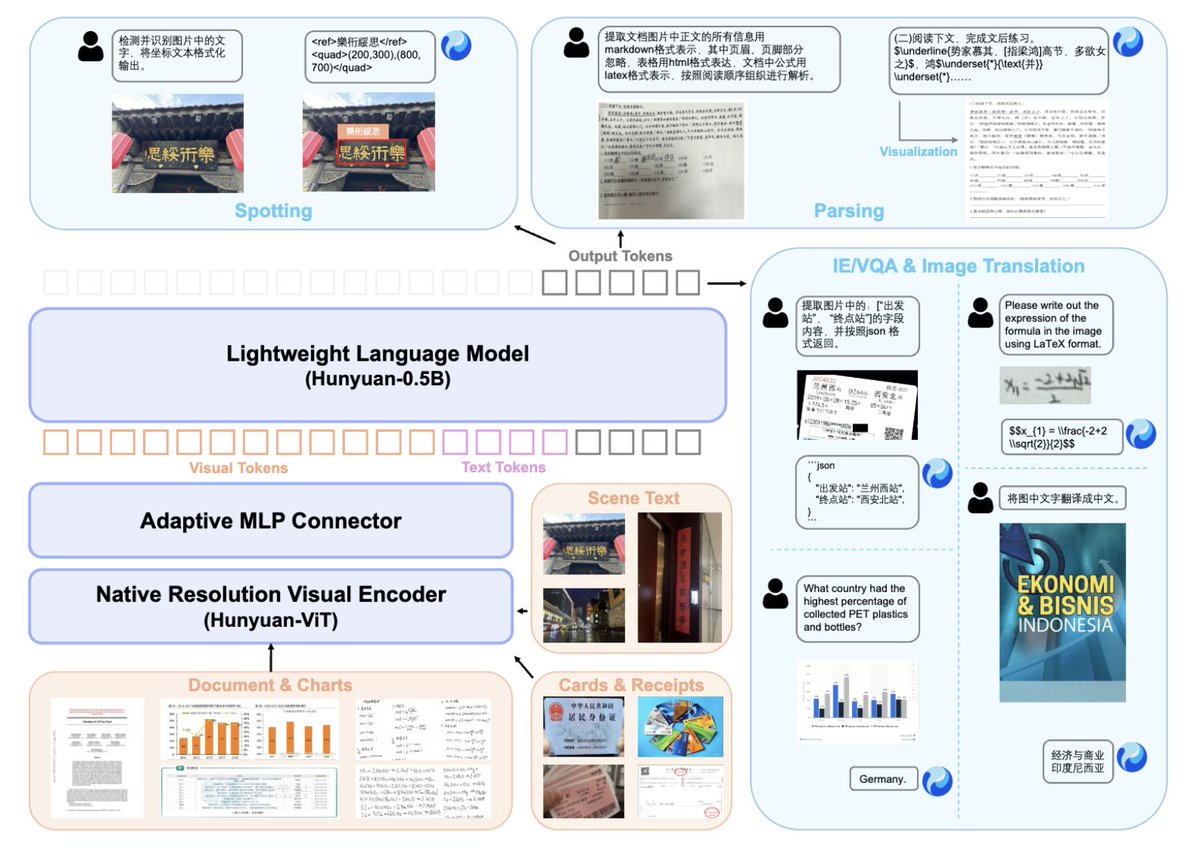

We are thrilled to open-source HunyuanOCR, an expert, end-to-end OCR model built on Hunyuan's native multimodal architecture and training strategy. This model achieves SOTA performance with only 1 billion parameters, significantly reducing deployment costs. ⚡️Benchmark Leader: Achieves a SOTA score (860) on OCRBench for models under 3B parameters and a leading 94.1 on OmniDocBench for complex document parsing. 🌐Comprehensive OCR Capabilities: Extends beyond simple text recognition to handle text spotting (street view, handwriting, art text), complex document processing (tables/formulas in HTML/LaTeX), video subtitle extraction, and end-to-end Photo translation (supports 14 languages). ✅Ultimate Usability: Embraces the "end-to-end" philosophy and achieves top-tier results with a single instruction and single inference, providing superior efficiency over traditional cascade solutions. 🌐Project Page: https://t.co/7UsMcJKhwu (web) https://t.co/mKjgjV9kwX (mobile) 🔗Github: https://t.co/MLrOnBpZBg 🤗Hugging Face: https://t.co/rZqdeZnHav 📄Technical Report: https://t.co/E4OhxV0Djw

Join Modular, @Supermicro, @TensorWave, and @AMD in an exclusive webinar exploring the technologies behind cutting-edge AI training and inferencing. 🗓 Oct 29, 2025 | 10:00 a.m. PDT https://t.co/9vvLlDCmHt

🔗 Register here: https://t.co/wzkGc59z2z

How do you boost productivity with AI without sacrificing software mastery? @clattner_llvm, Modular CEO & co-founder, chats with @jeremyphoward about building lasting systems from LLVM to Mojo 🔥 ➡️ Catch the full conversation here: https://t.co/tfIqPeCbpG

🔥 New Series! Learning GPU programming through Mojo puzzles - on an Apple M4! No expensive data center GPUs needed. No CUDA C++ complexity. Just Python-like syntax with systems performance. First video just dropped: https://t.co/MI0BzfBuL0 #Mojo #GPUProgramming #AppleSilicon

Catch up on Modular’s latest innovations! 💡 Highlights from October’s Community Meeting: • FFT Implementation in Mojo - Martin Vuyk • MAX backend for PyTorch - Gabriel de Marmiesse • Modular Platform 25.6 Updates 🎥 Watch the full recording: https://t.co/5iYzzR9zTl

Building scalable AI systems means finding the optimal solution for every layer, from silicon to software. See how Modular, @AMD, @Supermicro and @TensorWave are working together to accelerate AI development on Supermicro’s liquid-cooled systems powered by AMD Instinct™ MI350 GPUs. 📺 Watch the full webinar replay: https://t.co/xQBowuVbtR

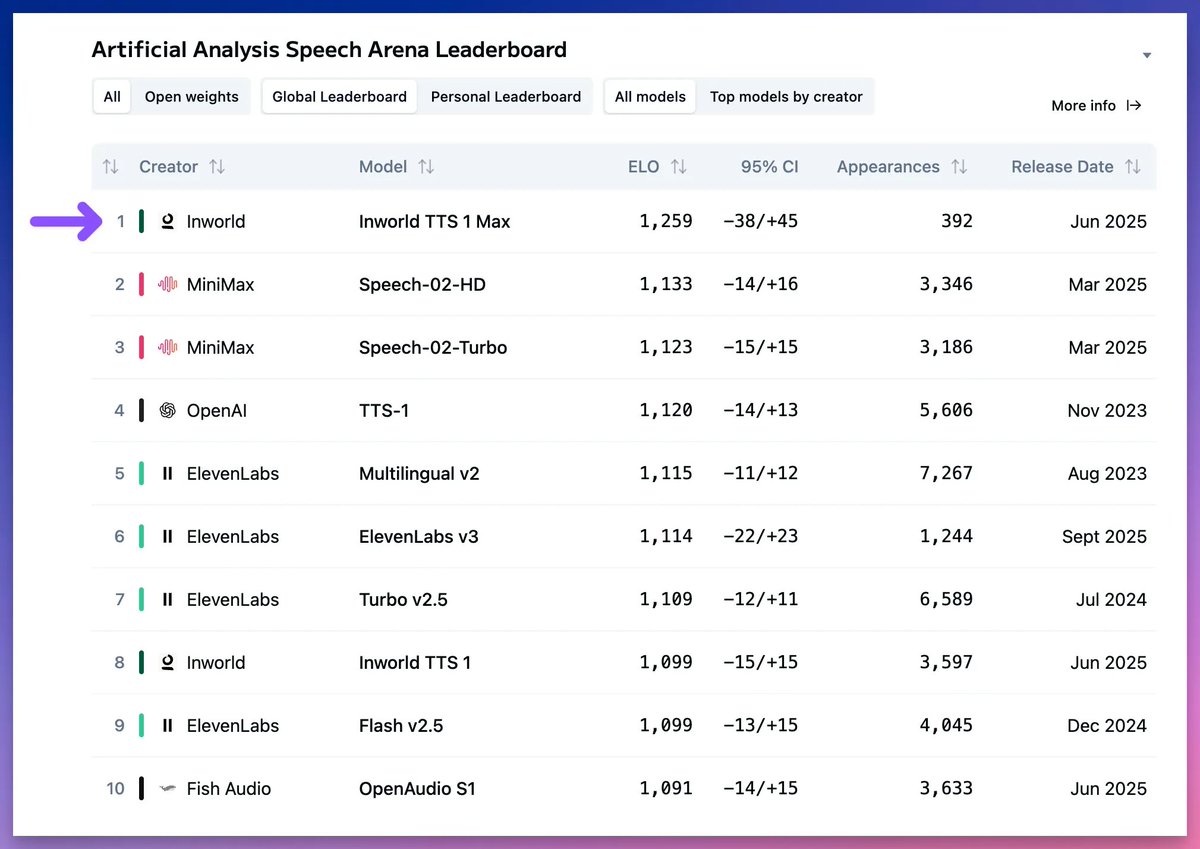

Inworld TTS 1 Max is the new leader on the Artificial Analysis Speech Arena Leaderboard, surpassing MiniMax’s Speech-02 series and OpenAI’s TTS-1 series. The Artificial Analysis Speech Arena ranks leading Text to Speech models based on human preferences. In the arena, users compare two pieces of generated speech side by side and select their preferred output without knowing which models created them. The speech arena includes prompts across four real-world categories: Customer Service, Knowledge Sharing, Digital Assistants, and Entertainment. Inworld TTS 1 Max and Inworld TTS 1 both support 12 languages including English, Spanish, French, Korean, and Chinese, and voice cloning from 2-15 seconds of audio. Inworld TTS 1 processes ~153 characters per second of generation time on average, while the larger model, Inworld TTS 1 Max, processes ~69 characters per second on average. Both models also support voice tags, allowing users to add emotion, delivery style, and non-verbal sounds, such as “whispering”, “cough”, and “surprised”. Both TTS-1 and TTS-1-Max are transformer-based, autoregressive models employing LLaMA-3.2-1B and LLaMA-3.1-8B respectively as their SpeechLM backbones. See the leading models in the Speech Arena, and listen to sample clips below 🎧

See what’s new in Modular and the community! Join our Community Meeting on Nov 24 to explore: 🎶 MMMAudio — creative-coding audio environment by Sam Pluta ✨ Shimmer — experimental Mojo-to-OpenGL renderer by Lukas Hermann 🆕 Modular updates — 25.7 release + Mojo 1.0 roadmap RSVP: https://t.co/XvvkEBhuxx

🔥 New Tutorial: Mojo GPU Puzzles - Puzzle 02: Zip Learn to process multiple arrays in parallel on the GPU with element-wise operations. Learn the fundamentals of parallel memory access patterns in under 10 minutes! https://t.co/cI4Z1RLESh

Unlock high-performance AI with Modular! Watch Abdul Dakkak’s talk on Speed of Light Inference w/ NVIDIA & AMD GPUs and see how Modular Cloud, MAX, and Mojo scale AI workloads while reducing TCO. 📺 Watch here: https://t.co/6vdvXlgEXa

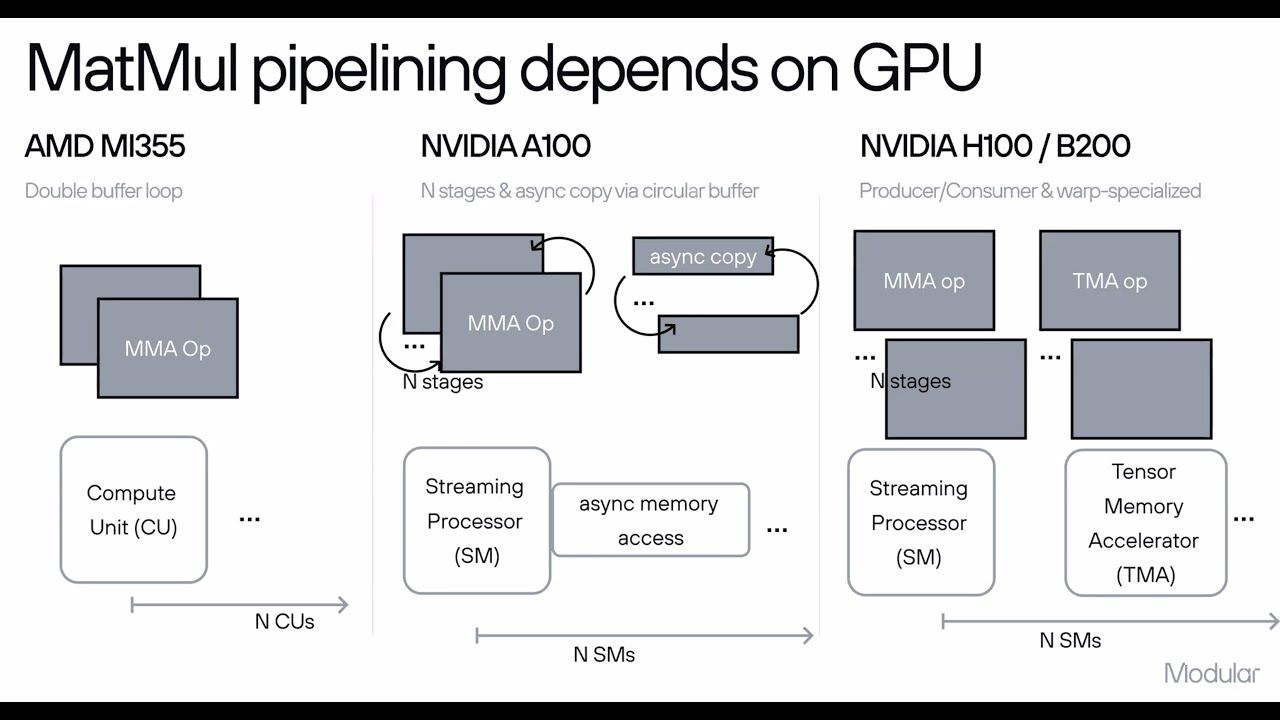

Curious how Mojo🔥 achieves its GPU performance? This Modular Tech Talk covers the architecture, compiler flow, and open-source kernels behind it, and how Mojo🔥 targets CPUs and GPUs in one model. https://t.co/dgS8LaDC6i

AI is having its Android moment. 🤚🤖 In this episode of The Neuron Podcast, we sit down with @iamtimdavis (Co-Founder & President of @Modular, ex-Google Brain) to unpack why Modular raised $250M to break AI’s GPU lock-in. Reimagine how AI gets built and deployed: 📺 YouTube: https://t.co/HwRMmyqx2S 🎧 Spotify: https://t.co/ZQMykGkffc 🎙️ Apple Podcast: https://t.co/VgPLSuEErJ #TheNeuron #TechPodcast #AIInfrastructure #FutureOfAI #AIdevelopment #OpenSourceAI #AIhardware

[First prototype reveal] "Erika Kuramoto" (倉本 エリカ), illustrated by RAITA. Prototype sculpting: 緋路 #wf2016w #ネイティブ https://t.co/gD6Xto3UNP

we did it representing the 0.0014% #nativetwitter https://t.co/VLozOfcTOj

Since we're on the topic, can we add these changes as well? https://t.co/jbDmDZnJbN

air heads or icee body wash fo da day https://t.co/6lOgDFZJwk

Through every carving, the spirit of our ancestors speaks. https://t.co/hlkuPGJu92

Image generation + search is so insanely good with Nano Banana pro! Just a simple prompt of the list of folks, nothing else. :) https://t.co/A5iWiL4hEK

An interesting "reload" of the above prompt, where @_jasonwei is a CoT Kid, @NoamShazeer is a "sharding sorcerer" and @JeffDean is the scale up sensei. And also @m__dehghani is the Transformer wizard!! 😄 https://t.co/C00FMR3wRz

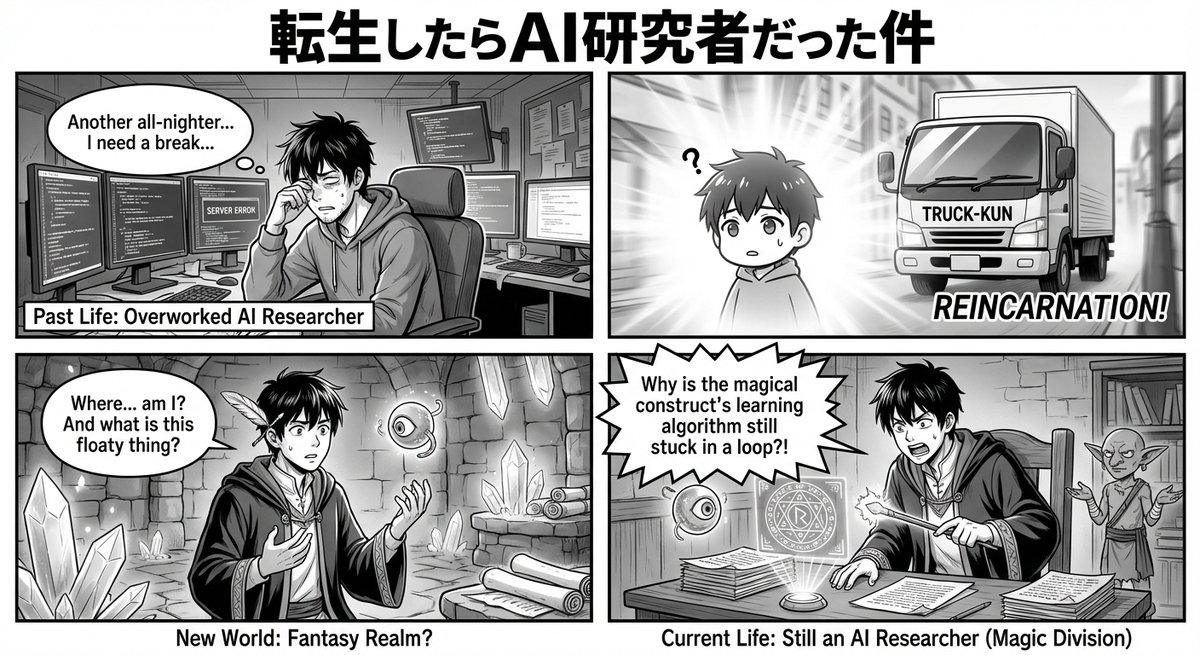

More nano banana fun 👇 "create a 4 panel manga titled 'I was reincarnated into another world as an ai researcher'" Pretty hilarious actually 😄 https://t.co/0Fg2DbX15C