@arankomatsuzaki

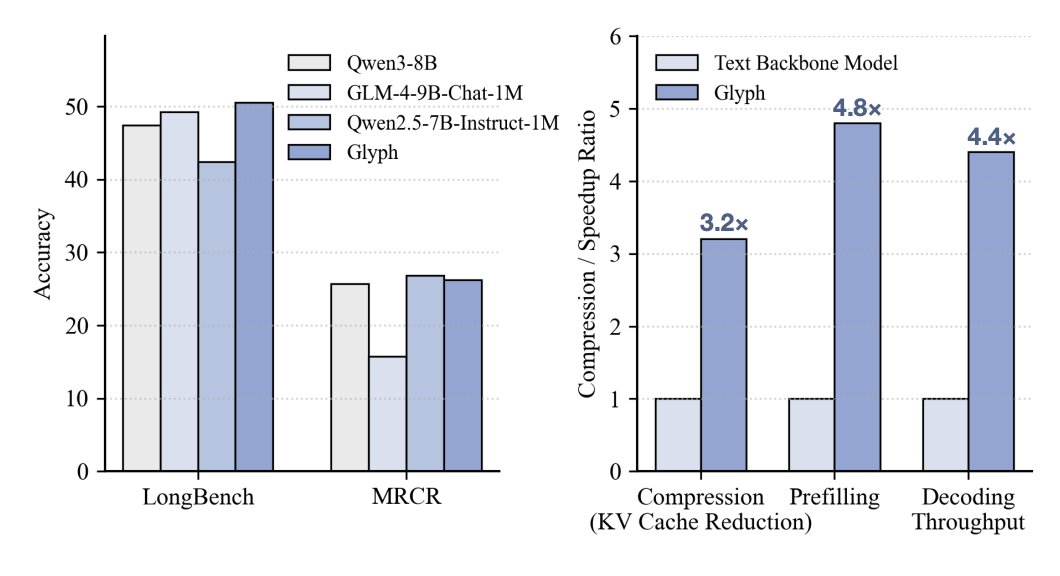

DeepSeek OCR dropped... but honestly, Glyph [1], released the same day, showed something more interesting: 3–4× context compression and prefill-cost reduction, with no performance hit on long-context QA and summarization, tasks that are in many cases far less trivial than OCR. If that holds for harder agentic tasks, that's a serious leap.

Cost-wise:
- Prefill cost drops sharply
- Decoding savings are more modest w/ DSA on

So the impact depends on how input-heavy your agentic workflow is (e.g., deep research vs. coding from scratch).

Also relevant:
- BLT extensions [2,3] improved scaling over the BPE baseline, whereas Glyph's aggressive compression mainly helps prefill and does little for decoding (w/ DSA).
- BLT-fication could help Glyph reduce decoding cost further.
- Subagents have a bigger impact on latency and context-length reduction. Simple yet powerful.
- And swapping vision encoders for small LMs is still an open question.
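To make the "depends on how input-heavy your workflow is" point concrete, here is a back-of-the-envelope sketch. It assumes a hypothetical split of total cost into a prefill share and a decode share, and applies separate reduction factors to each. The function name `blended_speedup` and the factors used below (3.5× prefill, 1.2× decode) are illustrative assumptions, not figures reported for Glyph.

```python
# Hypothetical blended-cost model: total cost = prefill share + decode share.
# Compression shrinks each share by its own factor; the numbers used below are
# illustrative assumptions, not measurements from Glyph or DSA.

def blended_speedup(prefill_share: float, prefill_factor: float, decode_factor: float) -> float:
    """Return the overall cost-reduction factor when the prefill part of the
    cost shrinks by `prefill_factor` and the decode part by `decode_factor`."""
    if not 0.0 <= prefill_share <= 1.0:
        raise ValueError("prefill_share must be in [0, 1]")
    if prefill_factor <= 0 or decode_factor <= 0:
        raise ValueError("reduction factors must be positive")
    decode_share = 1.0 - prefill_share
    # Remaining cost relative to the uncompressed baseline (baseline = 1.0).
    new_cost = prefill_share / prefill_factor + decode_share / decode_factor
    return 1.0 / new_cost


if __name__ == "__main__":
    # Input-heavy workflow (e.g. deep research): most cost is reading context.
    print(f"input-heavy:  {blended_speedup(0.9, 3.5, 1.2):.2f}x")
    # Output-heavy workflow (e.g. coding from scratch): most cost is generation.
    print(f"output-heavy: {blended_speedup(0.3, 3.5, 1.2):.2f}x")
```

Under these assumed numbers, the input-heavy case comes out near 2.9× cheaper overall, while the output-heavy case gets under 1.5×: a large prefill win is diluted whenever decoding dominates the bill.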