Your curated collection of saved posts and media

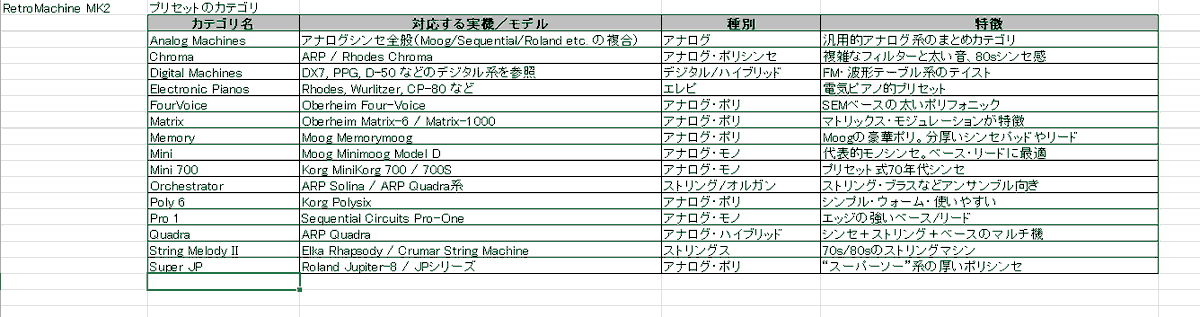

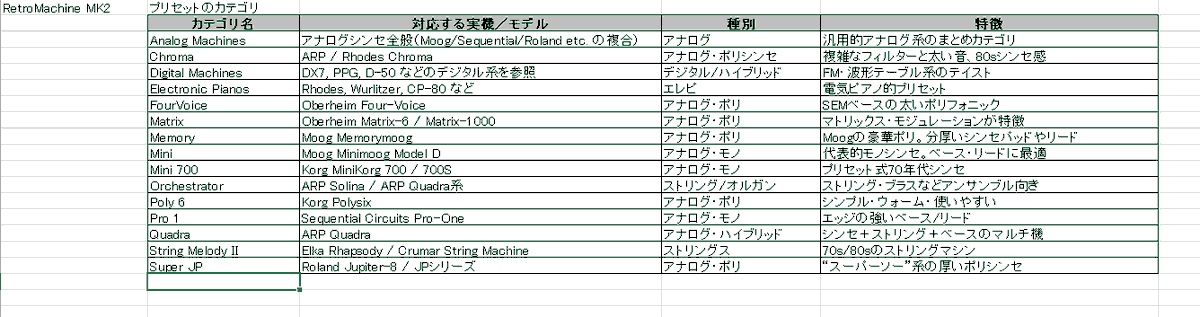

Native Instruments' Absynth 6 has been released, but while I was looking into vintage synths, Native Instruments' RETRO MACHINES MK2 caught my interest. I had ChatGPT summarize the real hardware behind each preset folder (category). Sharing for reference. #nativeinstruments #Retromaschines https://t.co/itL099XWbt

Are We Ready for RL in Text-to-3D Generation? A Progressive Investigation https://t.co/jdm6fDmOCI

discuss: https://t.co/5OzgF5ytyy

Dolphin-v2 🐬 new document parsing model released by @ByteDanceOSS ✨ 3B - MIT license ✨ Works on any document: PDFs, scans, photos ✨ Understands 21 types of content: text, tables, code, formulas, figures & more ✨ Pixel-level precision via absolute coordinate prediction https://t.co/aLuNxUAs0k

🎉 llama.cpp now has Ollama-style model management. • Auto-discover GGUFs from cache • Load on first request • Each model runs in its own process • Route by `model` (OpenAI-compatible API) • LRU unload at `--models-max` https://t.co/yfmfHL7zzj
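To make the "load on first request" and "LRU unload" bullets concrete, here is a minimal Python sketch of the general pattern. It is not llama.cpp's implementation: the `ModelRouter` class, the `loader` callable, and the `evicted` list are hypothetical names invented for illustration, and `models_max` only mirrors the spirit of the `--models-max` flag mentioned in the post.

```python
from collections import OrderedDict


class ModelRouter:
    """Toy router: load a model on first request, evict the least recently used."""

    def __init__(self, models_max, loader):
        self.models_max = models_max  # cap on simultaneously loaded models
        self.loader = loader          # hypothetical callable: name -> handle
        self.loaded = OrderedDict()   # name -> handle, least recently used first
        self.evicted = []             # names unloaded so far, kept for inspection

    def route(self, request):
        name = request["model"]       # route by the `model` field of the request
        if name in self.loaded:
            self.loaded.move_to_end(name)  # mark as most recently used
        else:
            if len(self.loaded) >= self.models_max:
                old, _ = self.loaded.popitem(last=False)  # unload the LRU model
                self.evicted.append(old)
            self.loaded[name] = self.loader(name)  # load on first request
        return self.loaded[name]


router = ModelRouter(models_max=2, loader=lambda n: f"handle:{n}")
for m in ["a", "b", "a", "c"]:
    router.route({"model": m})
```

With a cap of two, requesting `c` unloads `b` rather than `a`, because `a` was used more recently.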

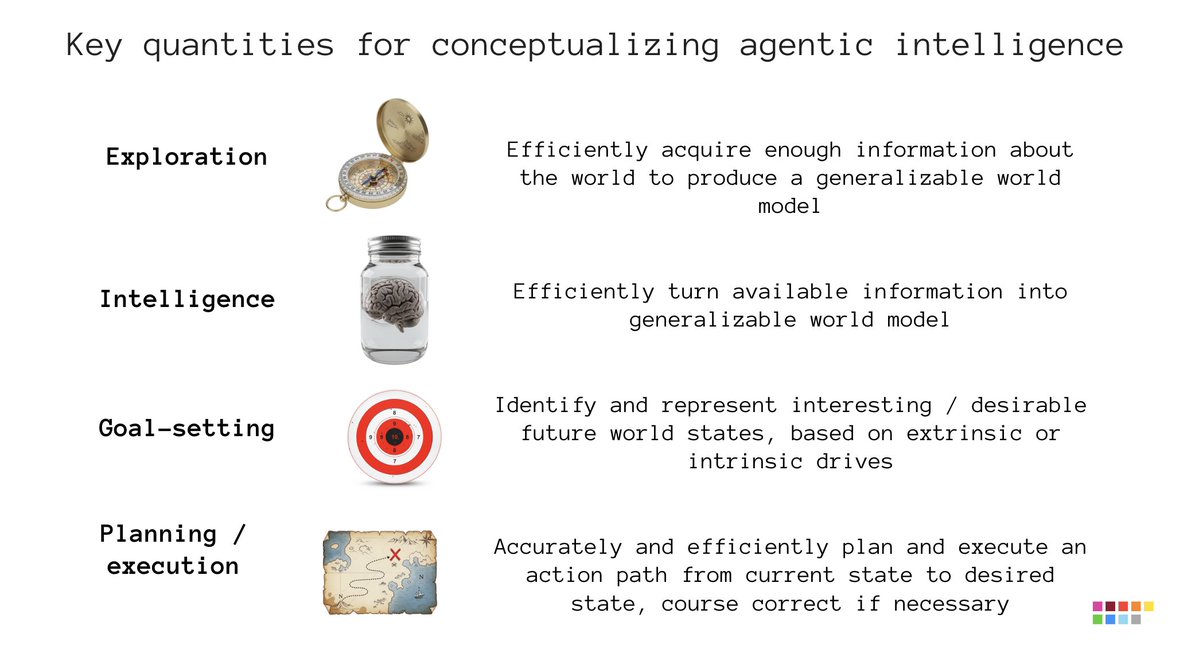

Fluid intelligence as measured by ARC 1 & 2 is your ability to turn information into a model that will generalize. That's not the only thing you need to make an intelligent agent. To start with, when you're an agent in the real world, information is not provided to you, passively. You have to go get it. That's "exploration": the agent's ability to efficiently acquire useful information (to turn into a world model) by interacting with its environment. Next, in the real world, you aren't provided instructions. There's no fixed goal. You have to figure what to do. That's "goal-setting": the ability to identify interesting or desirable future world states, via your intrinsic and extrinsic drives. This is a core part of being autonomous. Finally, "planning" represents the ability to accurately and efficiently map out and execute an action path from the current state to the desired goal, including the ability to course correct. That is also different from the ability to turn information into a model -- it's an application of having a model. All of these problems are still largely open. They're all much easier than solving fluid intelligence, in my opinion. Among them, the hardest one is exploration and the easiest one is planning.

@fchollet What type of intelligence is needed for “exploration, goal-setting, and interactive planning”? What is “beyond fluid intelligence”?

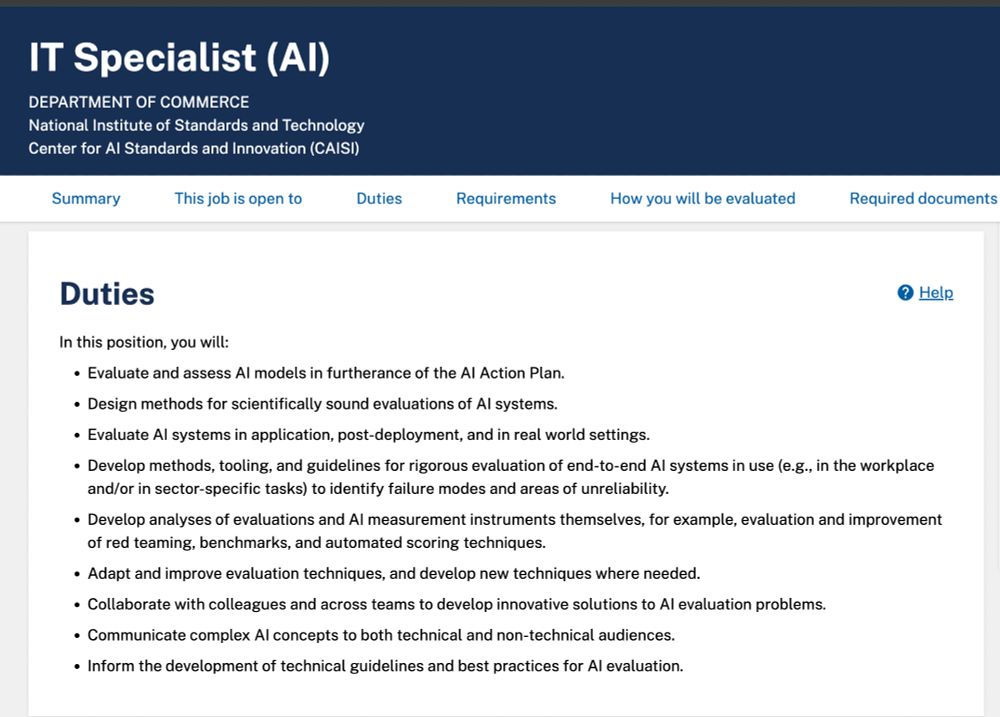

US CAISI is hiring -- the internal govt name is "IT Specialist" but it is effectively a research scientist role! Salary is $120,579 to $195,200 per year & you work on AI evaluation within government agencies! Dream job for the right person. Details: https://t.co/HCZWEgqHex https://t.co/D9nReCZx6X

Brian Schatz: 'Native Wisdom' Needs To Be Part Of US Climate Policy - Honolulu Civil Beat https://t.co/UuciRVh20E

🚨BREAKING NEWS🚨 Sunak hails 'Climate Change' package: £11.6bn on climate finance £1.5bn for Pakistan & Somalia £65.5m for Kenya & Egypt £150m Congo & Amazon £65.5m Clean Energy Innovation £3bn Nairobi’s Railway City & hydropower project NO ONE VOTED FOR THIS! https://t.co/RfReUXZSZA

I'm not saying I'm sacrit, I'm just saying https://t.co/XL2cgnPWNs

It seems like the tailor who made this Saka’s native just did freedom yesterday because what’s this mess? 😭😭 https://t.co/tLFlnkW5vH

@BrandtRobinson You’re telling me that Me, a Native American, raised in a small town. & If white liberal ANTIFA come to my town to loot, riot, and destroy it And I defend myself and my community That I support white supremacy 🤡🙃🤡🙃🤡🙃🤡🙃🤡 https://t.co/WiPbEdbfkC

COD Ranked Demon, Fitness personality, certified hooper, & a proud member of the Choctaw Nation! Welcome @_brittneyraines, our newest member of Native Gaming! https://t.co/N7RmO3SD0z

President @BarackObama on the violence in Gaza. Full interview out Tuesday. https://t.co/U42Jy2Aa4y

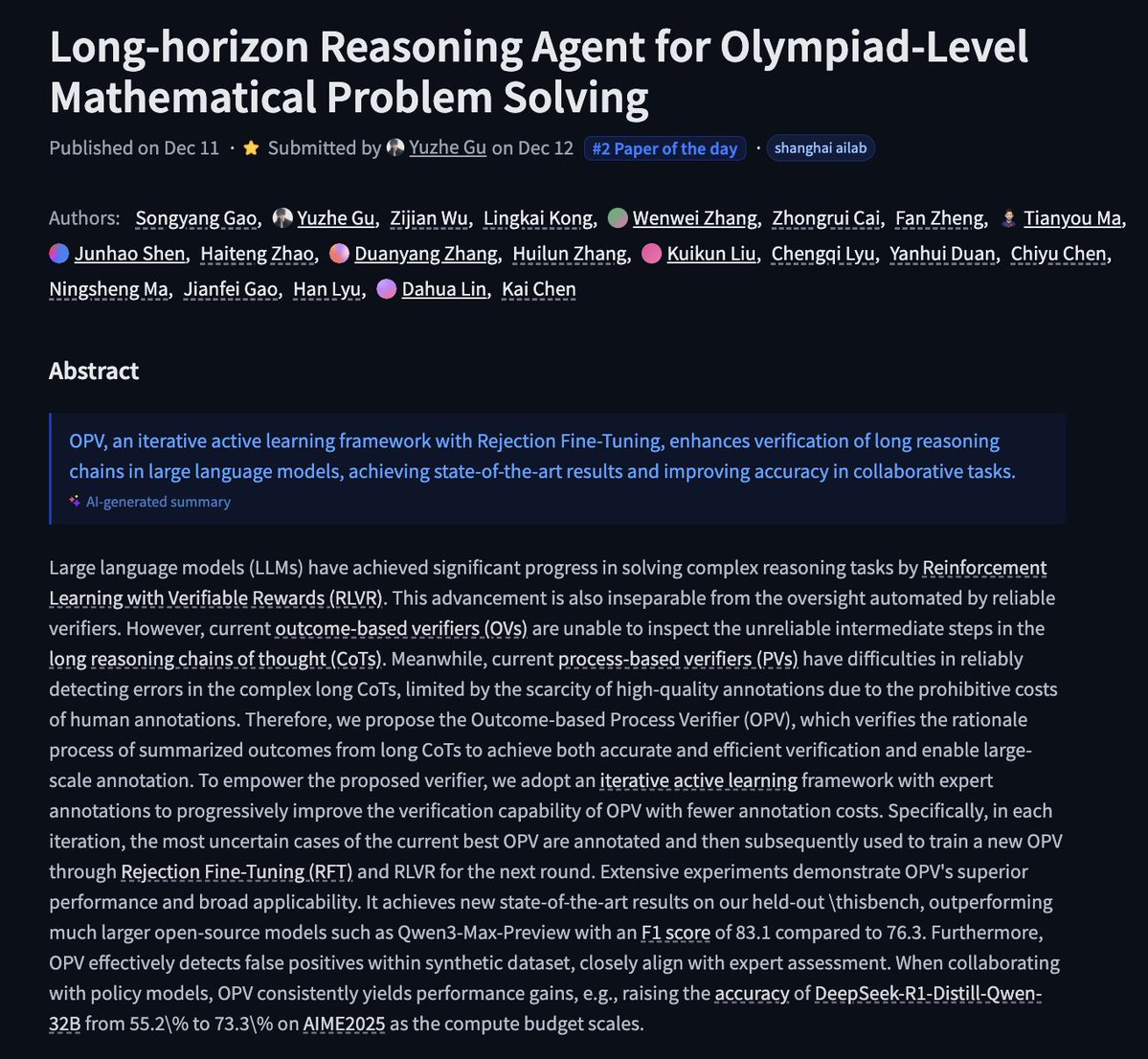

Long-horizon Reasoning Agent for Olympiad-Level Mathematical Problem Solving https://t.co/26B2wU9KqM

discuss: https://t.co/hPFHZSPmAQ

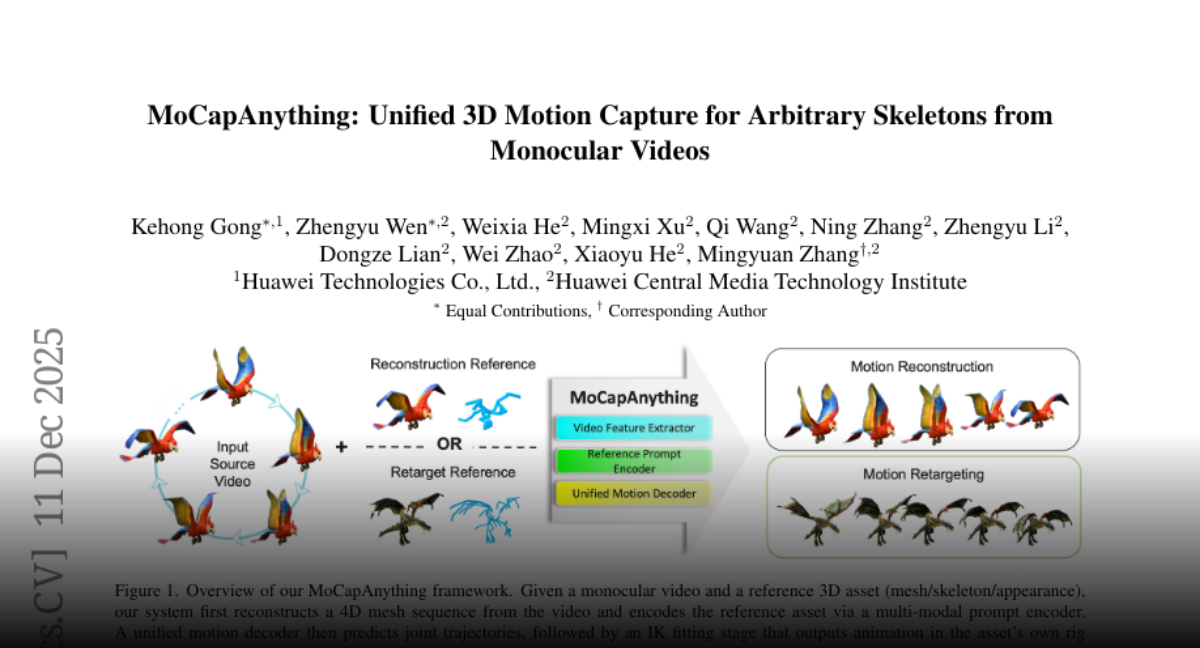

MoCapAnything Unified 3D Motion Capture for Arbitrary Skeletons from Monocular Videos https://t.co/iZ3loVus4s

discuss: https://t.co/yQnDKXMwZs

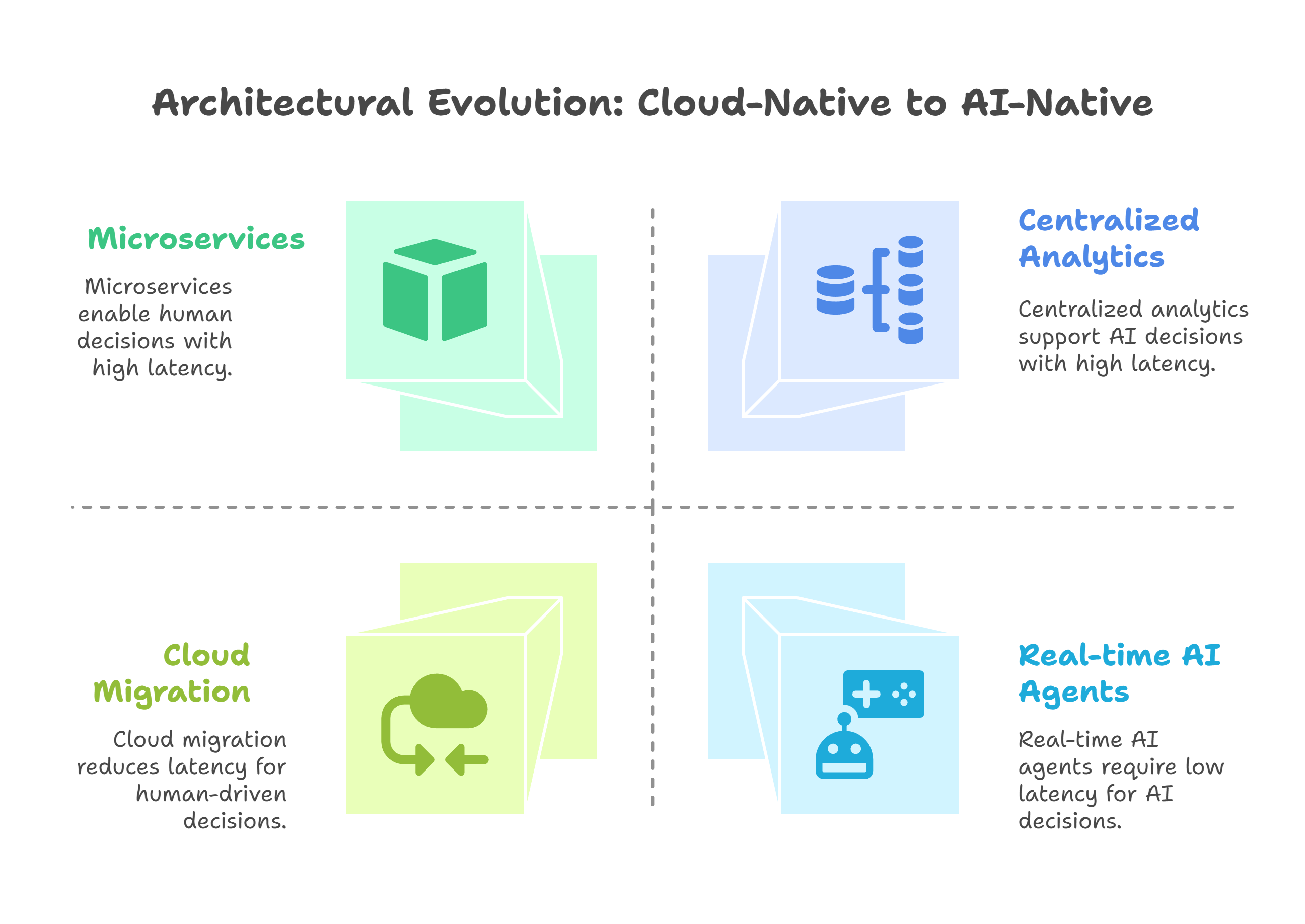

AI-Native Data Architectures: Most platforms were built for dashboards—not events, vectors, or AI agents. AI-native means real-time streams, embeddings, LLM-ready data, and a composable cloud stack. Learn more 👉 https://t.co/67luQD4gNe

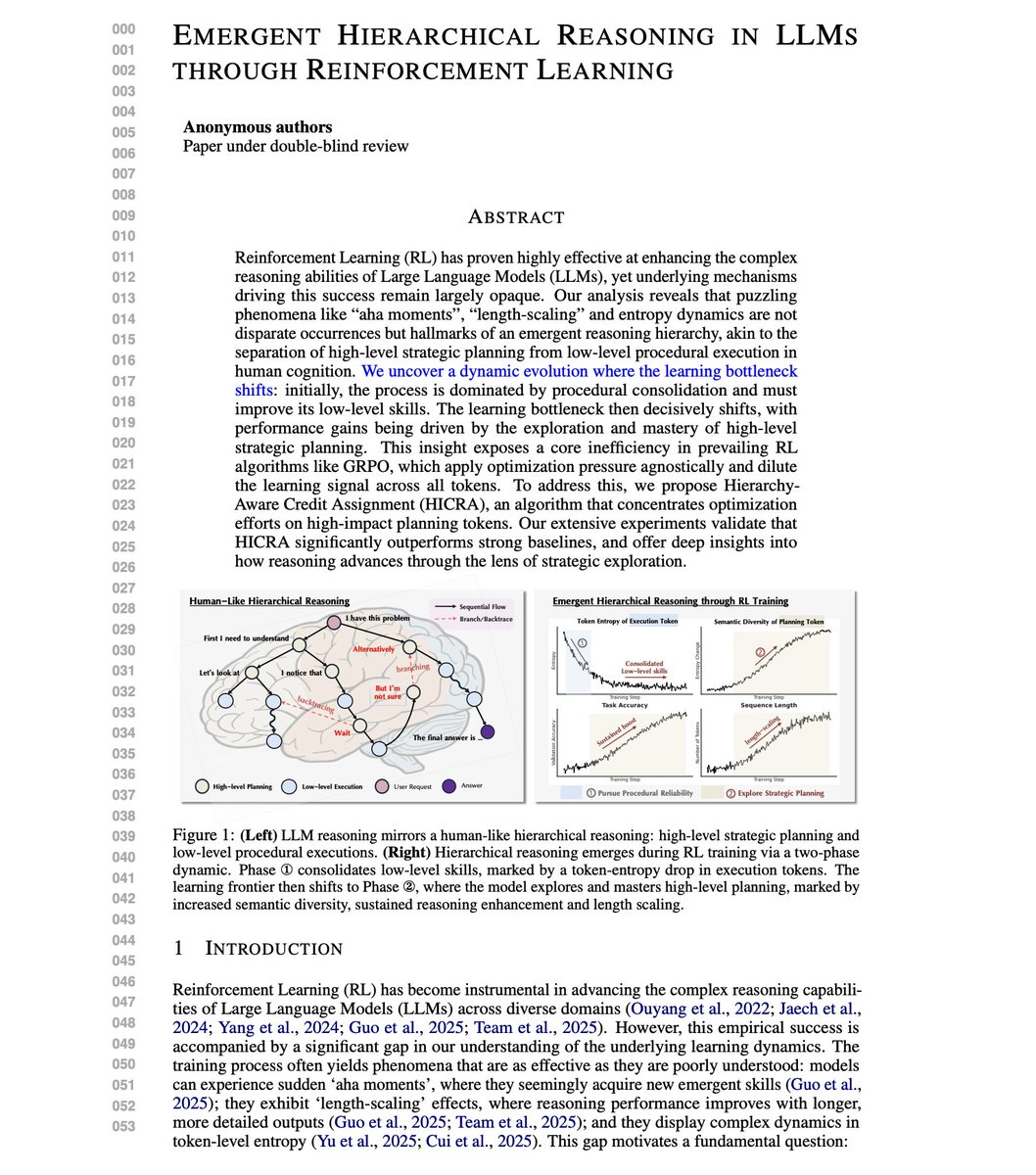

Great paper on why RL actually works for LLM reasoning. Apparently, "aha moments" during training aren't random. They're markers of something deeper. Researchers analyzed RL training dynamics across eight models, including Qwen, LLaMA, and vision-language models. The findings challenge how we think about training reasoning capabilities. RL training follows a two-phase dynamic that mirrors human cognition: first, the model masters low-level execution (calculations, formulas), then the learning bottleneck shifts to high-level strategic planning (logical maneuvers, backtracking, branching). It turns out that current algorithms like GRPO apply optimization pressure uniformly across all tokens. This dilutes the learning signal. Most tokens are procedural execution. The real gains come from strategic planning tokens. This new research introduces HICRA (Hierarchy-Aware Credit Assignment), an algorithm that concentrates optimization specifically on planning tokens rather than treating all tokens equally. How do they identify planning tokens? Through "Strategic Grams," n-grams that function as logical scaffolding: phrases like "let's try a different approach" or "but the problem mentions that." Human annotation validated 86% of identified Strategic Grams genuinely guide reasoning flow. On Qwen3-4B-Instruct, HICRA achieves 73.1% on AIME24 versus GRPO's 68.5%. On AIME25, 65.1% versus 60.0%. On Qwen2.5-7B-Base, gains of +8.4 points on AMC23 and +4.0 on Olympiad benchmarks. Error analysis reveals the mechanism: during RL training, strategic errors decrease far more than procedural errors. A perfectly executed incorrect plan still fails. RL preferentially fixes high-level strategic faults because that's where the leverage is. HICRA sustains higher semantic entropy than GRPO while maintaining lower token entropy. The difference matters because entropy regularization that promotes token-level diversity actually hurts performance.
Only targeted strategic exploration improves reasoning. Overall, the paper provides a mechanistic explanation for mysterious RL phenomena like "aha moments" and length-scaling, and demonstrates that focusing optimization on the right tokens substantially improves training efficiency. (bookmark it) Paper: https://t.co/mpLvne0gGk Learn to build with AI Agents in my academy: https://t.co/JBU5beIoD0
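The idea of concentrating credit on planning tokens can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the paper's algorithm: the functions `planning_mask` and `reweight_advantages`, the `alpha` parameter, and the specific rule of adding `alpha * |advantage|` on planning tokens are all hypothetical choices made here for clarity.

```python
def planning_mask(tokens, strategic_grams):
    """Mark every token that falls inside a match of any strategic n-gram."""
    mask = [False] * len(tokens)
    for gram in strategic_grams:
        n = len(gram)
        for i in range(len(tokens) - n + 1):
            if tokens[i:i + n] == gram:
                for j in range(i, i + n):
                    mask[j] = True
    return mask


def reweight_advantages(advantages, mask, alpha=0.5):
    """Assumed rule: boost the advantage on planning tokens, leave the rest unchanged."""
    return [a + alpha * abs(a) if m else a for a, m in zip(advantages, mask)]


tokens = "2 + 2 = 5 . let's try a different approach : 2 + 2 = 4".split()
grams = [["let's", "try", "a", "different", "approach"]]
mask = planning_mask(tokens, grams)

# Uniform per-token advantages, as a GRPO-style baseline would assign.
uniform = [1.0] * len(tokens)
weighted = reweight_advantages(uniform, mask, alpha=0.5)
```

In this toy rollout the five tokens of "let's try a different approach" receive a larger advantage than the surrounding arithmetic tokens, which is the sense in which optimization pressure stops being uniform.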

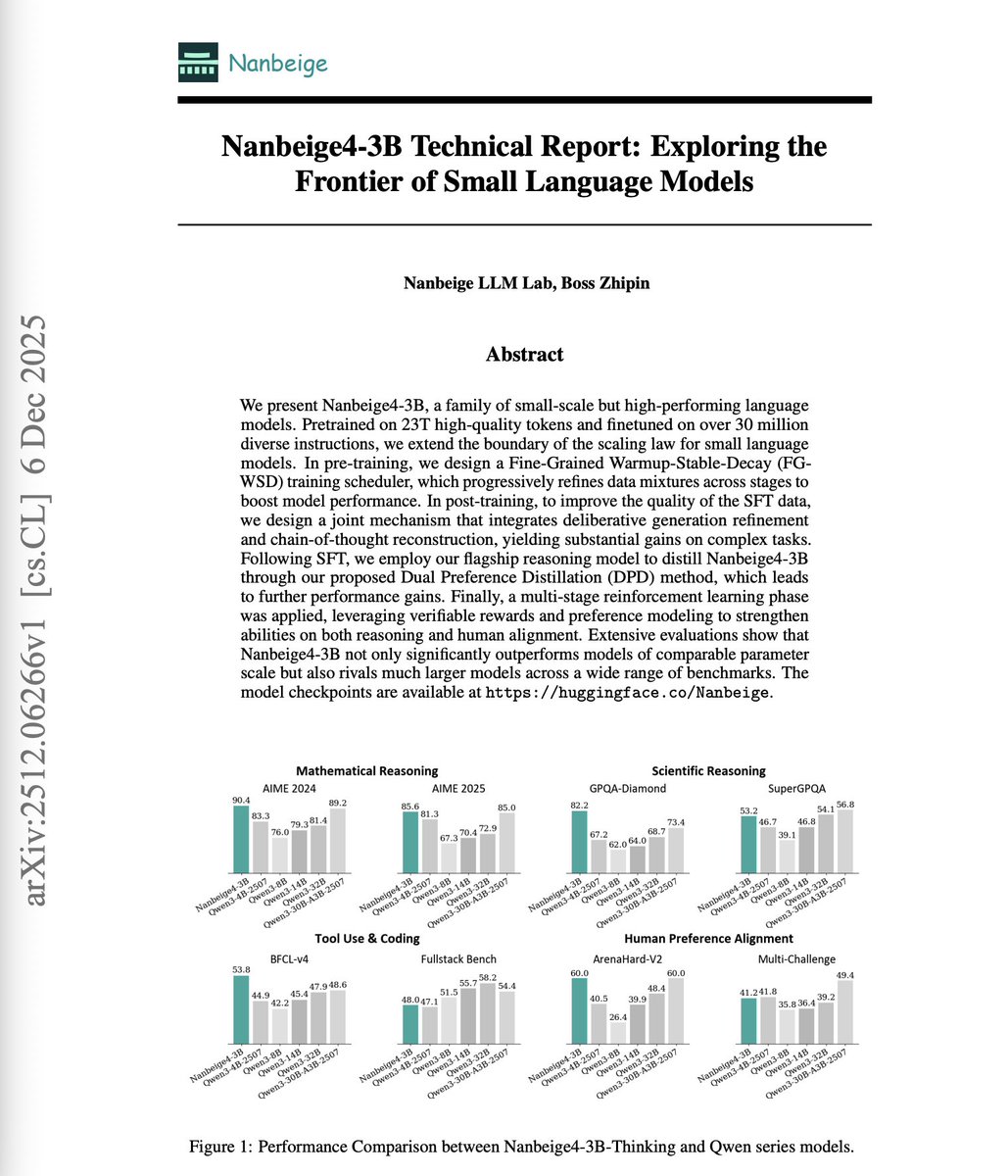

A 3B model outperforms models 10x its size on reasoning benchmarks. Small language models (SLMs) are often dismissed as fundamentally limited. The belief is that more parameters mean more capability, and that's it. More recent research indicates that the real ceiling isn't parameter count. It's the training methodology. This technical report introduces Nanbeige4-3B, a family of SLMs trained on 23 trillion high-quality tokens and finetuned on over 30 million diverse instructions. The results challenge assumptions about model scaling. On AIME 2024, Nanbeige4-3B-Thinking scores 90.4% versus Qwen3-32B's 81.4%. On GPQA-Diamond, it achieves 82.2% versus Qwen3-14B's 64.0%. This shows that the 3B model consistently outperforms models 4-10x larger. Here's how they did it: Fine-Grained WSD scheduler: Rather than uniform data sampling, they split training into stages with progressively refined data mixtures. High-quality data is concentrated in later stages. On a 1B test model, this improved GSM8K from 27.1% to 34.3% versus vanilla scheduling. Solution refinement with CoT reconstruction: They refine answer quality through iterative critique cycles, then reconstruct a chain-of-thought that logically leads to the improved solution. This yields SFT examples far better than rejection sampling. Dual Preference Distillation: The student model simultaneously learns to mimic teacher output distributions while distinguishing high-quality from low-quality responses. Token-level distillation combined with sequence-level preference optimization. Multi-stage RL: Rather than mixed-corpus training, each RL stage targets a specific domain. STEM reasoning with agentic verifiers. Coding with synthetic test functions. Human preference alignment with pairwise reward models. On the WritingBench leaderboard, Nanbeige4-3B-Thinking (79.03) approaches GPT-5 (83.87) and outperforms DeepSeek-R1 (78.92), Grok-4 (74.65), and O4-mini (72.90). 
The report demonstrates that carefully engineered small models can match or exceed much larger models when training methodology is optimized at every stage. Paper: https://t.co/bFPJOZycji Learn to build with LLMs and AI Agents in our academy: https://t.co/zQXQt0PMbG
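The staged data mixture described for the Fine-Grained WSD scheduler can be pictured with a small Python sketch. The stage boundaries and high-quality shares below are made-up numbers, and `STAGES` and `high_quality_share` are hypothetical names; the report's actual stages and mixtures are not given in the post.

```python
import bisect

# Hypothetical stages: (first training step of the stage, share of high-quality data).
# Later stages draw a larger share of high-quality data.
STAGES = [(0, 0.2), (1000, 0.5), (2000, 0.8)]


def high_quality_share(step):
    """Return the high-quality data share for the stage that contains `step`."""
    starts = [start for start, _ in STAGES]
    idx = bisect.bisect_right(starts, step) - 1  # last stage starting at or before step
    return STAGES[idx][1]
```

A data loader could call `high_quality_share(step)` at each step to decide how often to sample from the high-quality pool, in contrast to a single fixed mixture for the whole run.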

@alexolegimas The intervention of Euterpe, but only for a little while? https://t.co/Ve96Vuh3dL

🔋@YuluBike is India’s largest EBITDA profitable electric mobility company & it’s continuing to grow with #AWS. ⚡️The #startup is leading the charge in the EV sector, relying on AWS infrastructure to scale while remaining committed to innovation. 👉 https://t.co/scWmP6Kpdo https://t.co/EK7r3KBb5h

🚨 This may be the most important AI move of the year. President Trump just signed an Executive Order pushing the U.S. toward one national AI framework and not 50 state rulebooks. That’s not bureaucracy. That’s geopolitics. 50 rules = friction. Friction = slower AI. Slower AI = losing to countries that move as one. China executes centrally. Europe regulates centrally. America was fragmenting itself. This EO stops that. But here’s the real tension 👇 A single federal AI framework can accelerate compute, capital, and deployment if it scales with the technology. If it doesn’t, we replace 50 bottlenecks with one massive federal choke point. This isn’t just about innovation. It’s about who controls the future of the AI economy. Unity can be an accelerant. Centralization can also be a weapon. What happens next decides whether the U.S. dominates the AI era or slows itself down. Thank you @DavidSacks @sriramk @howardlutnick @tedcruz #AI #AIPolicy #Geopolitics #AIInfrastructure #Compute #NationalSecurity #Innovation #GlobalCompetition

We’re releasing pre-anneal checkpoints for our Nano/Mini base models. Still plenty of math + code exposure, but easier to CPT and customize than our post-anneal checkpoints. Have fun exploring. https://t.co/DnCXwbJna5

📢📢 I have a new paper in which I argue that the question “Can we make algorithms fair?” is a category error: https://t.co/IWod5em6mz A few years ago I became disillusioned with algorithmic fairness research and stopped working in the area. So when I was invited to contribute an essay to the volume “Contemporary Debates on the Ethics of Artificial Intelligence” (part of the highly successful “Contemporary Debates in Philosophy” series), it was an opportunity for me to look back and reflect. The key thesis: “There has been an avalanche of research on how to make algorithms fair, and there has been a powerful movement to turn those ideas into reality. How have things panned out? I will argue that this movement has been only minimally effective at preventing harms from automated decision-making systems. When we analyze why, it reveals two important limitations of the underlying ideas. First, fairness as a proxy for justice focuses attention on too narrow a set of questions. Second, it applies a depoliticized lens that gives an illusion of moral clarity in academic discussions but runs into headwinds when actually attempting to implement it. These attributes are not incidental and cannot easily be fixed. They are integral to what makes the fairness frame appealing in the first place.” The second part of the paper is constructive: “I advocate for a more ambitious study of fairness and justice in algorithmic decision making in which we attempt to model the sociotechnical system, not just the technical subsystem. The animating question becomes: “How should we design algorithmic bureaucracies?” This will require many shifts including letting go of neat, mathematically precise fairness definitions and embracing empirical social scientific methods. But the potential payoff is enormous in terms of a greater ability to model benefits and harms and much expanded design space for reform.” The other essays in the volume sound fascinating and I look forward to reading them.
https://t.co/vTOK0vG2jQ

1. What Is Artificial Intelligence and Should We Define It in Terms of Agency? Sven Nyholm
2. Artificial Intelligence as a New Form of Agency Luciano Floridi
3. What Can AI Ethics Learn from Medical Ethics, Bioethics, and Animal Ethics? Paula Boddington
4. What Is Distinctive About AI Ethics When Compared to Bioethics? Thomas Grote
5. Can We Make Algorithms Fair? Margaret Mitchell
6. What If Algorithmic Fairness Is a Category Error? Arvind Narayanan
7. Are Explanations of AI Decisions Morally Necessary? Emily Sullivan
8. Doing Without Explainable AI David Danks
9. Nine Philosophical Questions About Privacy Leonhard Menges
10. The Group Right to Privacy in the Age of AI Anuj Puri
11. Group Rights: A Skeptical View John Zerilli
12. Entangling Ourselves with AI: Affirmative Responsibility and the Cultivation of Responsible Agency Fabio Tollon and Shannon Vallor
13. Generative AI, Language, and Authorship: Deconstructing the Debate and Moving It Forward Mark Coeckelbergh and David Gunkel
14. From “Can AI Be Creative?” to “What Is the Value of Integrating AI into Creative Processes?” Caterina Moruzzi
15. What Will Work Be Like in the Future? Daniel Susskind
16. AI and the Future of Work: An Egalitarian Vision Kate Vredenburgh
17. What Would It Look Like to Align Humans with Ants? Vincent Conitzer
18. Could We Control Superintelligent AI? Roman V. Yampolskiy
19. The Many Faces of AI Alignment Atoosa Kasirzadeh
20. On the Troubled Relation Between AI Ethics and AI Safety Olle Häggström
21. Short-Term or Long-Term AI Ethics? A Dilemma for Ethical Singularity Only Vincent C. Müller
22. Should We Worry About the Moral Status of Nonsentient AIs? Parisa Moosavi
23. On the Moral Status of AI Entities and Robots: A Critique of the Social-Relational Approach and a Defense of the Properties-Based Approach John-Stewart Gordon Carbon-Intensive Activities Sustainable?
24. Reduce, Reuse, Recycle, Refuse: Green Data Refusal and Sustainable AI Cristina Richie
25. The Making and Management of Computational Agency Ranjit Singh
26. Deepfakes and Democracy Claire Benn
27. Should Online Platforms Be Publicly Owned and Controlled? Sean Donahue
28. The Tragedy of AI Governance Simon Chesterman
29. Can AI Be Governed? Gillian K. Hadfield

'Magic Tree House' animated series is in the works Author Mary Pope Osborne will have creative control of the project "Finally ... I'm able [to] realize my long-held dream of bringing ‘Magic Tree House’ books to the screen in a way that feels true to their spirit" (via @Variety)

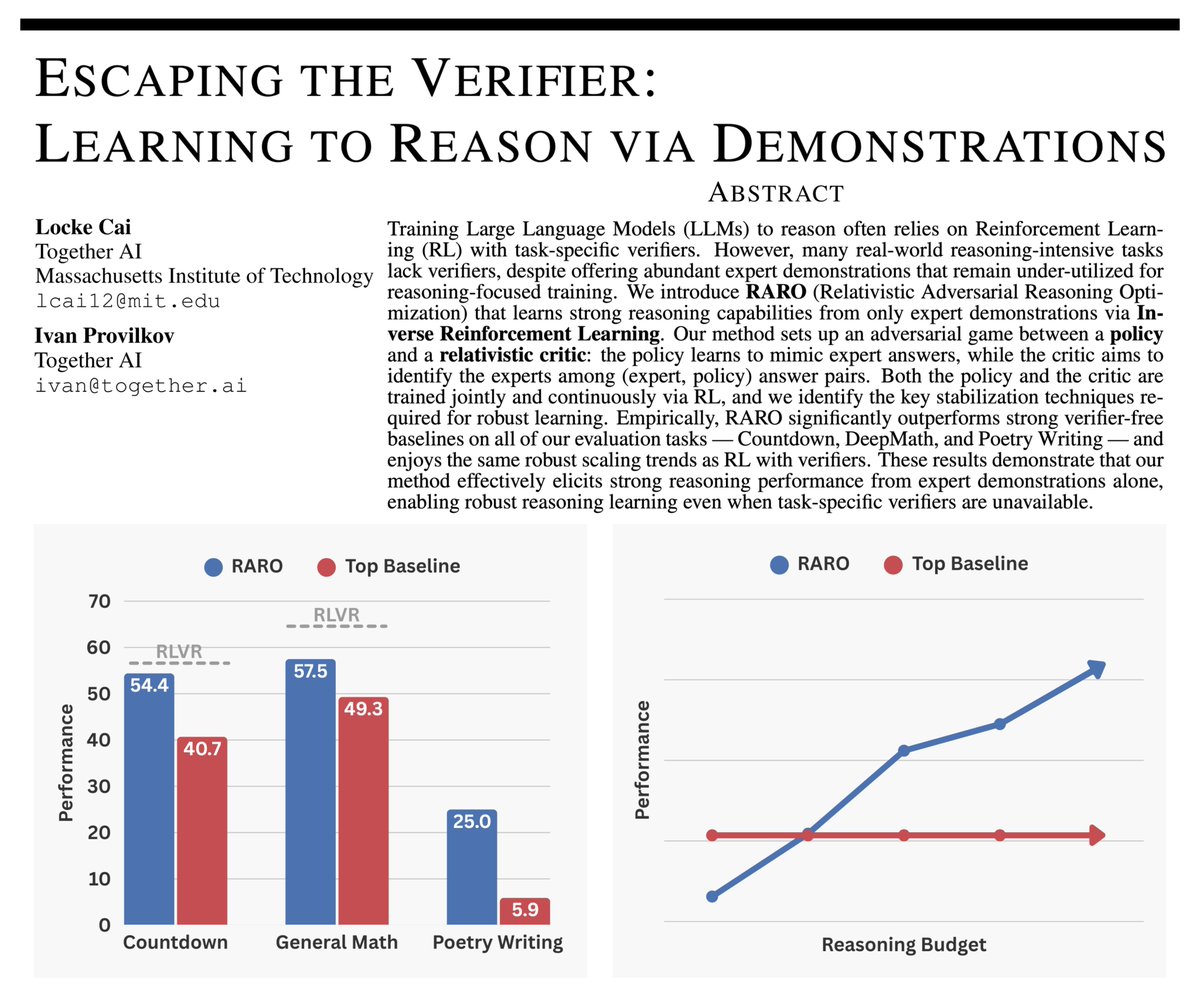

RL for reasoning often relies on verifiers: great for math, but tricky for creative writing or open-ended research. Meet RARO: a new paradigm that teaches LLMs to reason via adversarial games instead of verification. No verifiers. No environments. Just demonstrations. 🧵👇

NativeNest: Kerala comes home to you in Bengaluru! 🥥🌴 Missing home? This app solves it. Nendran banana, fresh pappadam, tapioca/kappa, Kerala snacks, pickles, masalas, fish, and all those homely Kerala essentials: everything is available and delivered across Bengaluru. Download NativeNest now: https://t.co/316leRTrFq Perfect for Malayalis who want authentic Kerala items without hunting across the city. ✨ #Malayali #KeralaFood #Bangalore #NativeNest #ccgeeks #CCgeeks #ccgeek #ccgeeks

https://t.co/oRSyMBEOpO

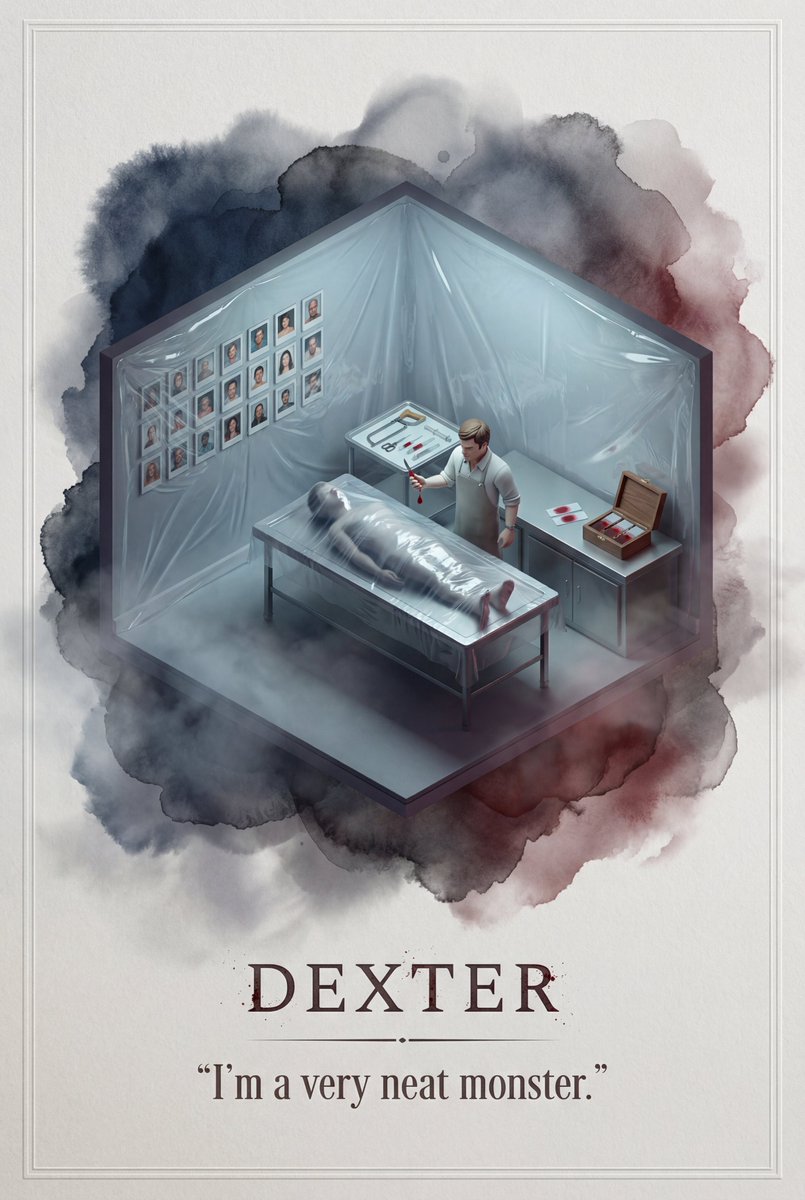

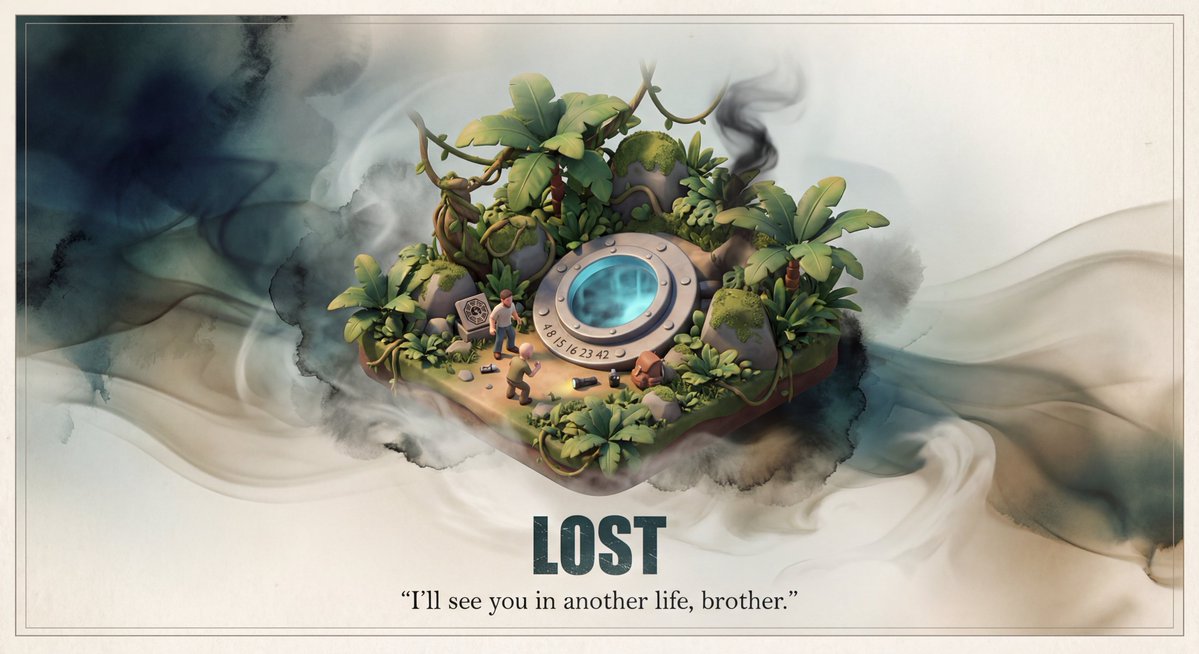

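Blown away by Nano Banana Pro's aesthetic quality! It's incredible. A prompt that generates a scene poster for any film, TV series, or novel in one click. I refined my miniature scene model prompt, improving the caption text and the effects around the model. I didn't expect it to adapt this well: every scene, text treatment, and effect around the model suits the novel or show. Prompt: Please design a high-quality 3D poster for the film/TV series/novel [name to add]; first look up information about the film/TV series/novel and its famous scenes. First, use your knowledge base to look up the content of this film/TV series/novel and identify its most representative iconic scene or core location. In the center of the image, build this scene as an exquisite 3D miniature model in an axonometric view. The style should follow the delicate, soft rendering of DreamWorks animation. You need to recreate the architectural details, character movement, and atmosphere of that moment; whether it is a storm or a quiet afternoon, it should blend naturally into the model's light and shadow. As for the background, do not use a plain white backdrop. Around the model, create a void with faint ink-wash bleeding and flowing light mist, in elegant tones, so the image has a sense of breath and depth and sets off the preciousness of the central model. Finally, for the layout at the bottom, generate Chinese text. Write the novel's title centered, in a typeface whose design matches the style of the original work. Below the title, automatically look up and typeset a classic description or line from the original work about this scene, in an elegant serif typeface. The overall layout should be as refined and balanced as a high-end museum exhibit label.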

Customize the prompt in English and for mainstream TV dramas. Nano Banana Pro 4K (Google AI Studio) https://t.co/fRoXuDBSNE
