@dair_ai

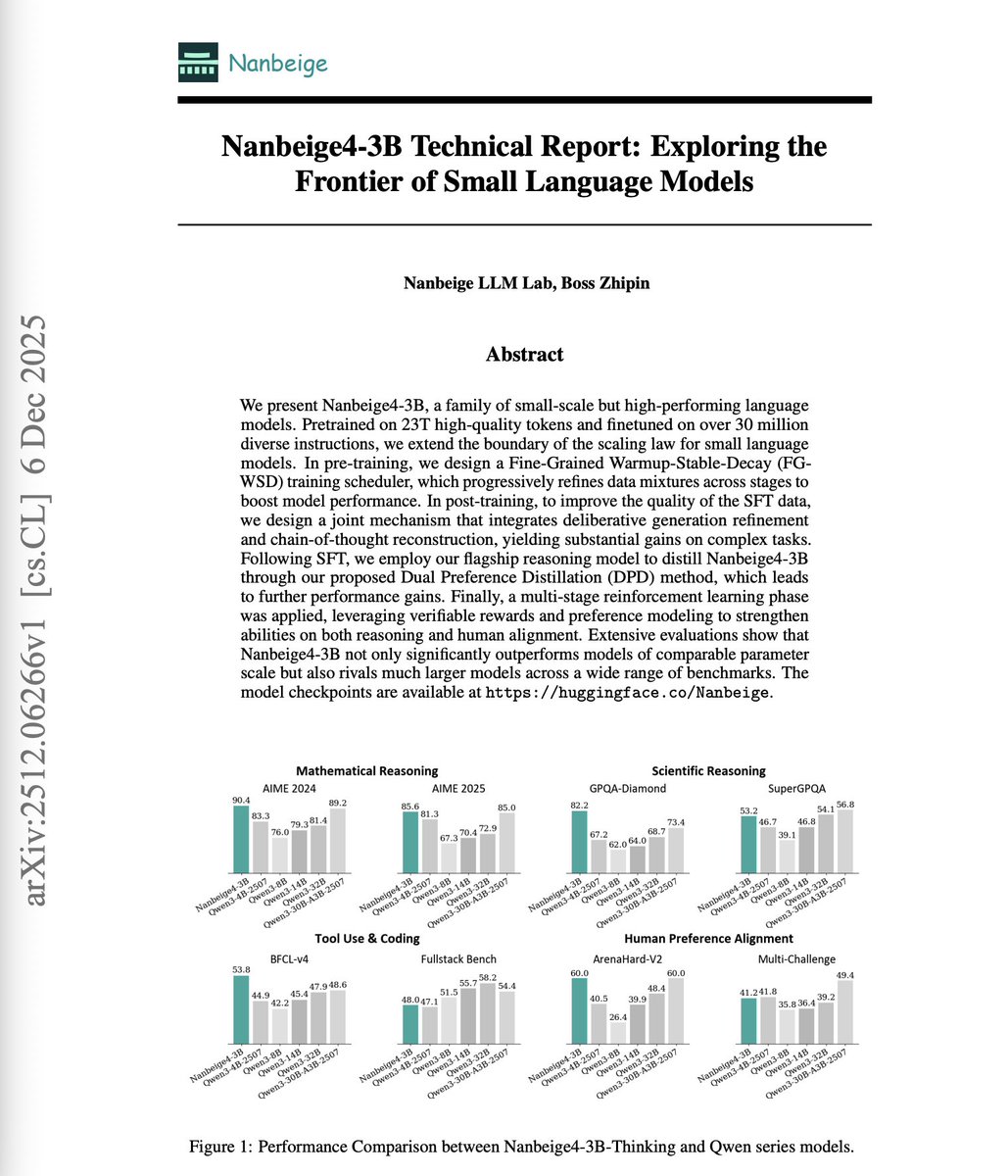

A 3B model outperforms models 10x its size on reasoning benchmarks. Small language models (SLMs) are often dismissed as fundamentally limited. The belief is that more parameters mean more capability, and that's it. More recent research indicates that the real ceiling isn't parameter count. It's the training methodology. This technical report introduces Nanbeige4-3B, a family of SLMs trained on 23 trillion high-quality tokens and finetuned on over 30 million diverse instructions. The results challenge assumptions about model scaling. On AIME 2024, Nanbeige4-3B-Thinking scores 90.4% versus Qwen3-32B's 81.4%. On GPQA-Diamond, it achieves 82.2% versus Qwen3-14B's 64.0%. This shows that the 3B model consistently outperforms models 4-10x larger. Here's how they did it: Fine-Grained WSD scheduler: Rather than uniform data sampling, they split training into stages with progressively refined data mixtures. High-quality data is concentrated in later stages. On a 1B test model, this improved GSM8K from 27.1% to 34.3% versus vanilla scheduling. Solution refinement with CoT reconstruction: They refine answer quality through iterative critique cycles, then reconstruct a chain-of-thought that logically leads to the improved solution. This yields SFT examples far better than rejection sampling. Dual Preference Distillation: The student model simultaneously learns to mimic teacher output distributions while distinguishing high-quality from low-quality responses. Token-level distillation combined with sequence-level preference optimization. Multi-stage RL: Rather than mixed-corpus training, each RL stage targets a specific domain. STEM reasoning with agentic verifiers. Coding with synthetic test functions. Human preference alignment with pairwise reward models. On the WritingBench leaderboard, Nanbeige4-3B-Thinking (79.03) approaches GPT-5 (83.87) and outperforms DeepSeek-R1 (78.92), Grok-4 (74.65), and O4-mini (72.90). The report demonstrates that carefully engineered small models can match or exceed much larger models when training methodology is optimized at every stage. Paper: https://t.co/bFPJOZycji Learn to build with LLMs and AI Agents in our academy: https://t.co/zQXQt0PMbG