@omarsar0

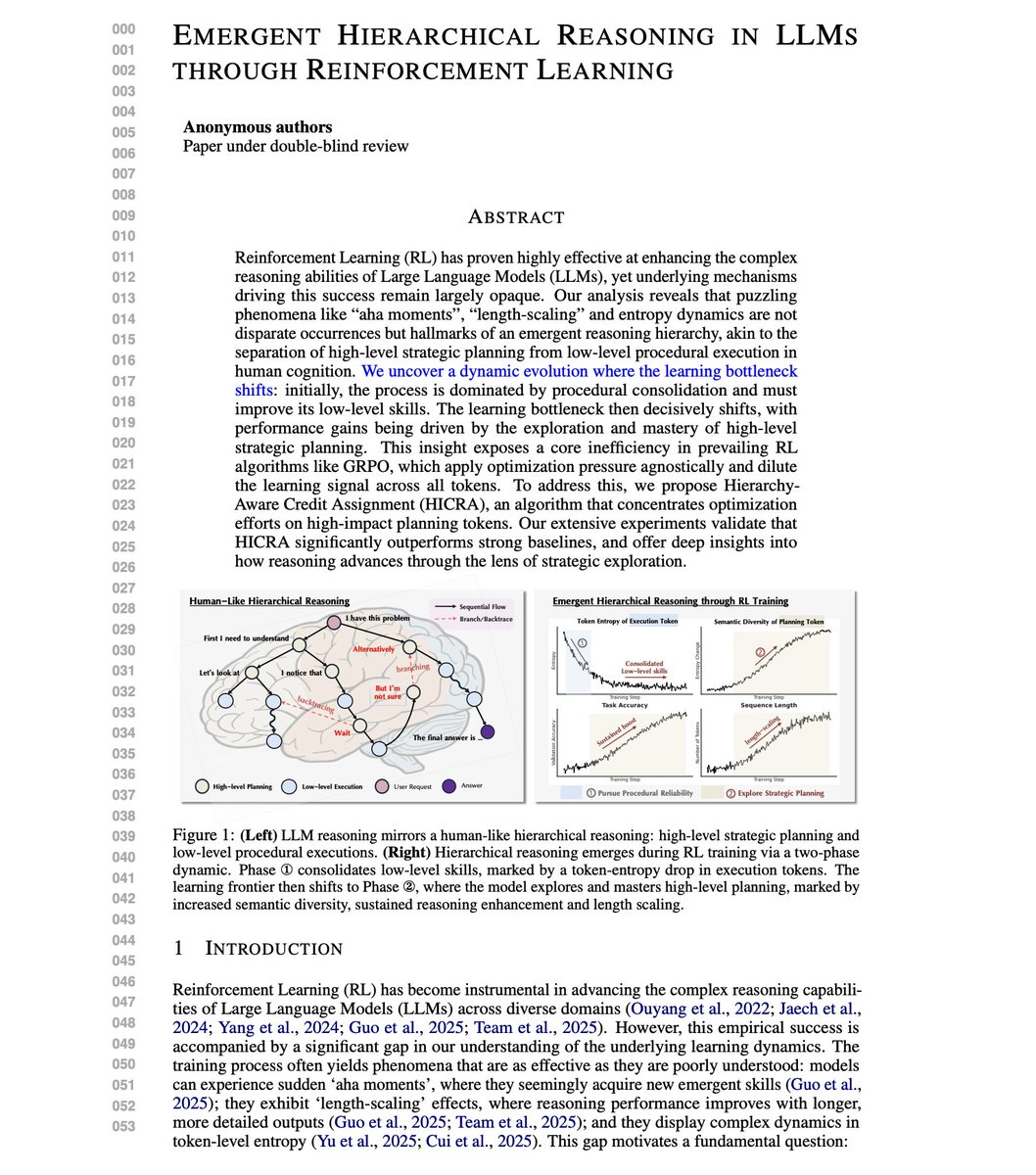

Great paper on why RL actually works for LLM reasoning.

Apparently, "aha moments" during training aren't random. They're markers of something deeper.

Researchers analyzed RL training dynamics across eight models, including Qwen, LLaMA, and vision-language models. The findings challenge how we think about training reasoning capabilities.

RL training follows a two-phase dynamic that mirrors human cognition: first, the model masters low-level execution (calculations, formulas), then the learning bottleneck shifts to high-level strategic planning (logical maneuvers, backtracking, branching).

It turns out that current algorithms like GRPO apply optimization pressure uniformly across all tokens. This dilutes the learning signal: most tokens are procedural execution, while the real gains come from strategic planning tokens.

This new research introduces HICRA (Hierarchy-Aware Credit Assignment), an algorithm that concentrates optimization specifically on planning tokens rather than treating all tokens equally.

How do they identify planning tokens? Through "Strategic Grams," n-grams that function as logical scaffolding: phrases like "let's try a different approach" or "but the problem mentions that." Human annotation confirmed that 86% of the identified Strategic Grams genuinely guide reasoning flow.

On Qwen3-4B-Instruct, HICRA achieves 73.1% on AIME24 versus GRPO's 68.5% (+4.6 points). On AIME25, 65.1% versus 60.0% (+5.1 points). On Qwen2.5-7B-Base, gains of +8.4 points on AMC23 and +4.0 on Olympiad benchmarks.

Error analysis reveals the mechanism: during RL training, strategic errors decrease far more than procedural errors. A perfectly executed incorrect plan still fails. RL preferentially fixes high-level strategic faults because that's where the leverage is.

HICRA sustains higher semantic entropy than GRPO while maintaining lower token entropy. The difference matters because entropy regularization that promotes token-level diversity actually hurts performance. Only targeted strategic exploration improves reasoning.

Overall, the paper provides a mechanistic explanation for mysterious RL phenomena like "aha moments" and length-scaling, and demonstrates that focusing optimization on the right tokens substantially improves training efficiency.

(bookmark it)

Paper: https://t.co/mpLvne0gGk

Learn to build with AI Agents in my academy: https://t.co/JBU5beIoD0
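
To make the credit-assignment idea above concrete, here is a rough Python sketch, not the paper's implementation. It marks tokens that fall inside a Strategic Gram, computes a GRPO-style group-relative advantage, and then boosts the advantage only on the marked planning tokens. Everything named here is an assumption for illustration: the function names, whitespace tokenization, the two-entry gram list (taken from the phrases quoted in the post), the boost rule `A + alpha * |A|`, and the value `alpha = 0.2`. The post does not state the exact update.

```python
from typing import List, Sequence, Set, Tuple

# Hypothetical Strategic Grams, copied from the example phrases in the post.
STRATEGIC_GRAMS: Set[Tuple[str, ...]] = {
    ("let's", "try", "a", "different", "approach"),
    ("but", "the", "problem", "mentions", "that"),
}


def planning_mask(tokens: Sequence[str],
                  grams: Set[Tuple[str, ...]] = STRATEGIC_GRAMS) -> List[bool]:
    """Return True for every token that lies inside a matched Strategic Gram."""
    lowered = [t.lower() for t in tokens]
    mask = [False] * len(lowered)
    for gram in grams:
        n = len(gram)
        for i in range(len(lowered) - n + 1):
            if tuple(lowered[i:i + n]) == gram:
                for j in range(i, i + n):
                    mask[j] = True
    return mask


def group_relative_advantages(rewards: Sequence[float]) -> List[float]:
    """GRPO-style advantage: each reward standardized within its sample group."""
    mean = sum(rewards) / len(rewards)
    var = sum((r - mean) ** 2 for r in rewards) / len(rewards)
    std = var ** 0.5
    return [(r - mean) / (std + 1e-8) for r in rewards]


def hierarchy_aware_advantages(advantage: float,
                               mask: Sequence[bool],
                               alpha: float = 0.2) -> List[float]:
    """Spread one sequence-level advantage over tokens, boosting planning tokens.

    Assumed rule (illustrative only): planning tokens get A + alpha * |A|,
    all other tokens keep the plain advantage A, as uniform GRPO would assign.
    """
    return [advantage + alpha * abs(advantage) if is_plan else advantage
            for is_plan in mask]


if __name__ == "__main__":
    tokens = "ok let's try a different approach now".split()
    mask = planning_mask(tokens)
    advantages = group_relative_advantages([1.0, 0.0])  # two sampled answers
    print(mask)
    print(hierarchy_aware_advantages(advantages[0], mask))
```

Under this assumed rule, a correct answer pushes harder on its planning tokens, and an incorrect answer penalizes its planning tokens slightly less than its procedural ones. That is one way to tilt the learning signal toward strategy instead of spreading it evenly over every token.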