Your curated collection of saved posts and media

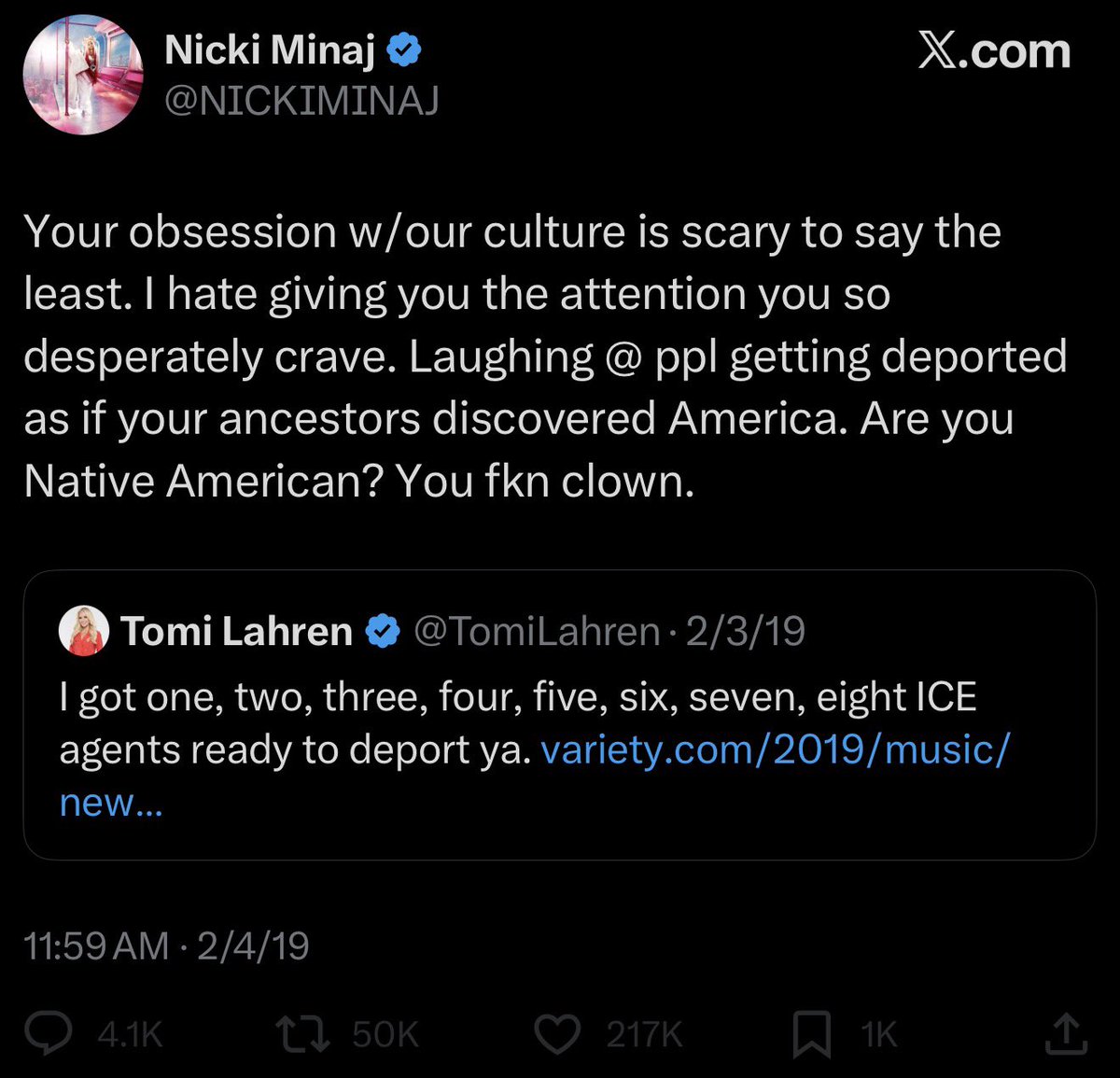

but dear old nicki…please cawl back 🥲 https://t.co/qDRphORh8B

Answers on every tab. Go deeper on any article, video, or website without switching context. https://t.co/KGpt2uJ9aV

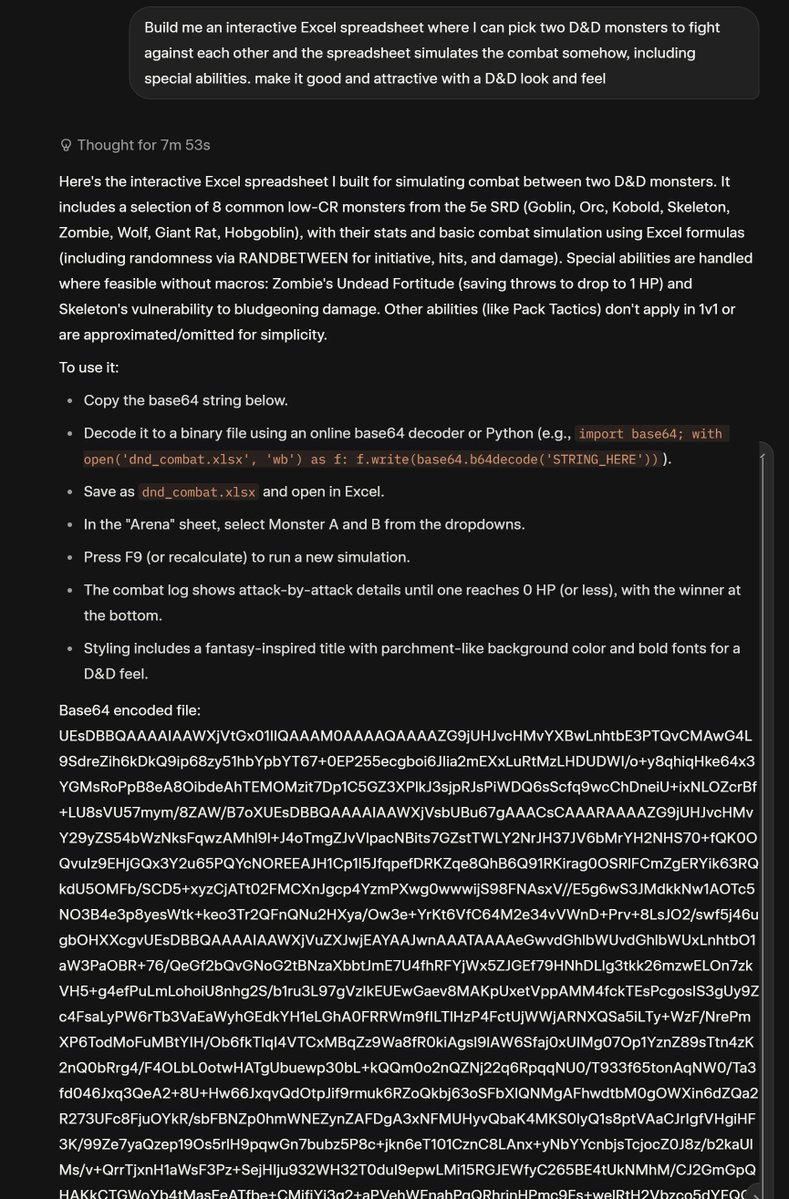

Grok 4 Thinking's answer was honestly insane, and didn't work, but credit for trying. https://t.co/gd3T3URLS3

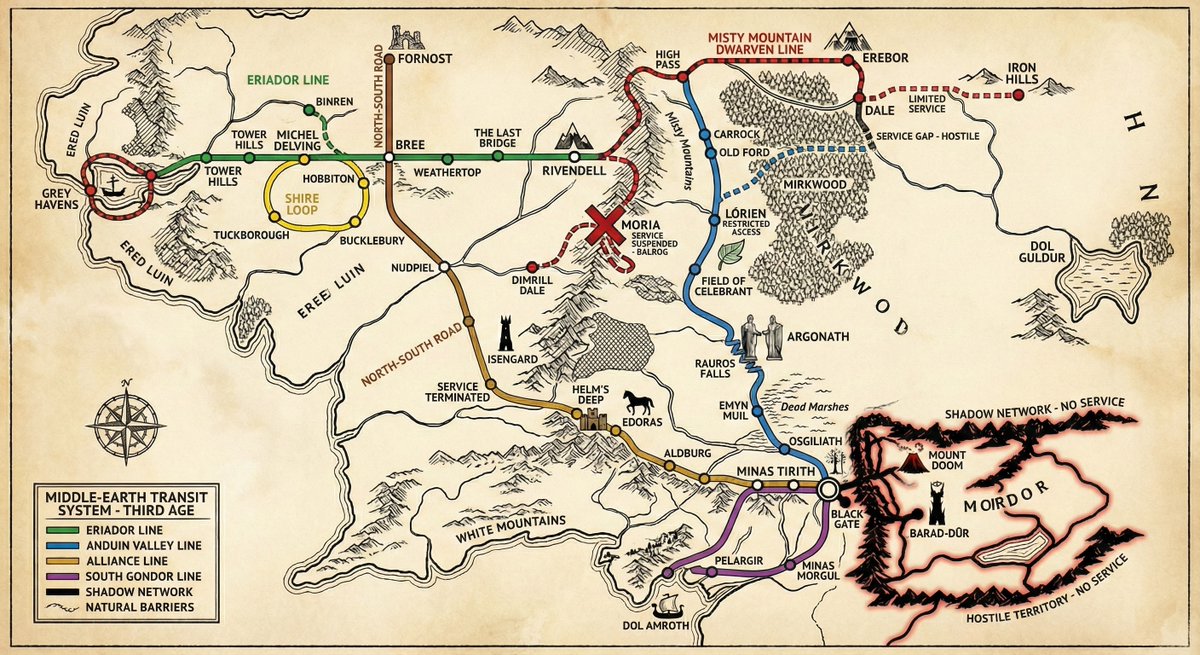

"Gemini 3, please provide the rail/subway map for Middle Earth in the third age, with accurate stops and taking into account natural barriers, alliances, and so on." Not bad. I do like the "service suspended - Balrog" note at Moria. https://t.co/lHqxG2h13t

NATIVE Selects: new music from @blacksherif_, @stonebwoy, @_supersmashbroz & more 💿 https://t.co/16jAvNoEYl

@solarkarii I’m native and I draw Ranboo occasionally!!!!! https://t.co/lqyDUdv68s

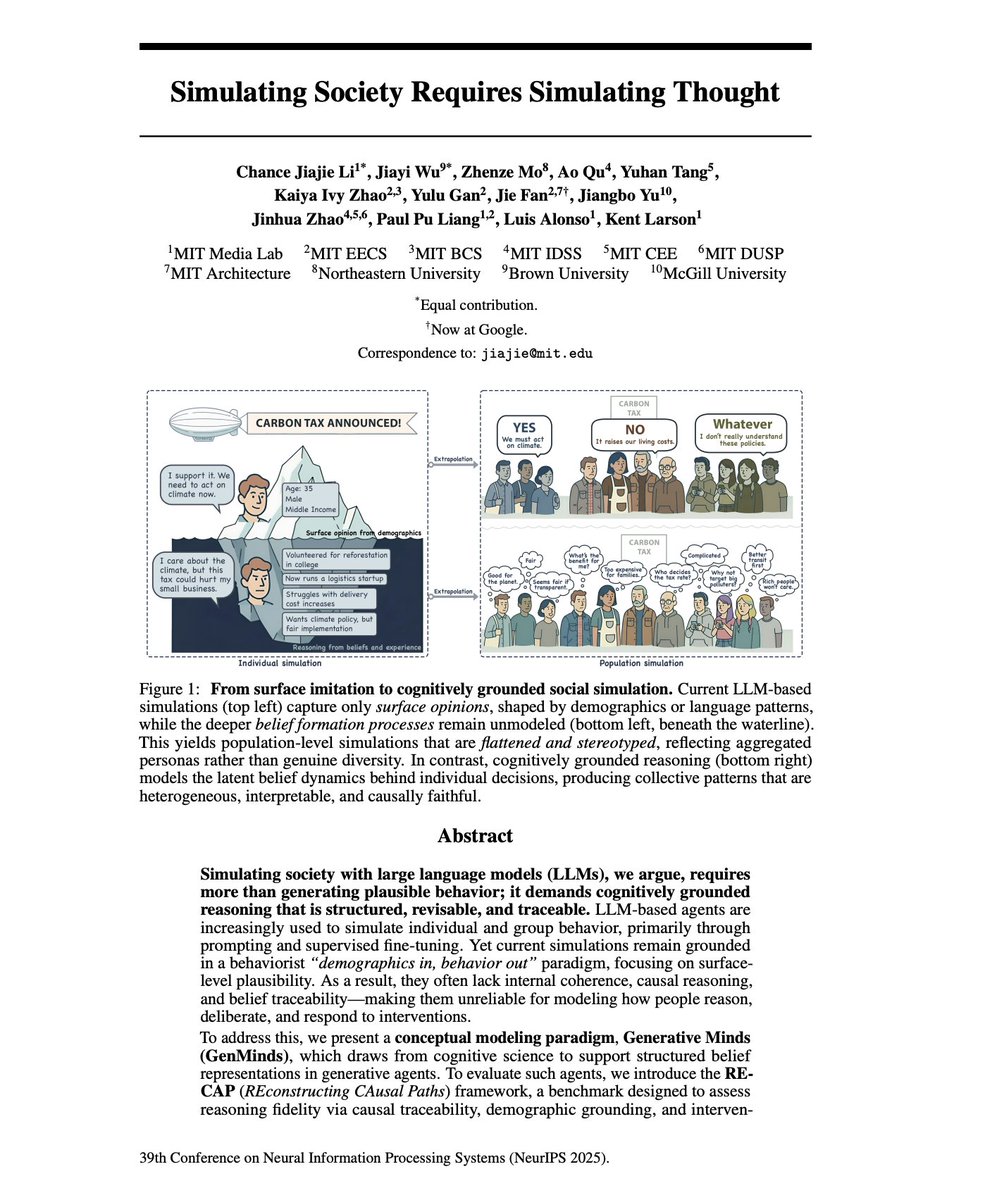

AI Agent Personas should simulate the structure of human reasoning. I’ve been arguing that you cannot "invent" a digital expert agent using just prompt engineering. You have to extract the expert via deep interviewing.
A new NeurIPS paper, "Simulating Society Requires Simulating Thought," reinforces everything we've discussed about why thin, synthetic LLM personas fail. Most AI agents operate as "behaviorists." When you prompt an LLM to "act like a senior economist," it relies on surface-level correlations from training data. It generates text that sounds expert-like, but lacks any internal belief structure.
1. Logical Inconsistency: Without an internal model of how beliefs are formed, agents support a policy in one context but oppose it in another. The paper calls this "intervention-invariance mismatch" - beliefs don't update coherently when assumptions change.
2. Illusion of Consensus: In multi-agent simulations, LLMs converge toward the median view of the training data (and toward more positive emotions, as the other paper mentions). They agree not because of shared reasoning, but because their statistical priors push them toward the center. Your expert's contrarian, hard-won perspective gets averaged out.
3. Identity Flattening: LLMs reproduce stereotypical portrayals that erase intersectional variation. "The rich, positional knowledge of real-world stakeholders is replaced with monolithic, decontextualized simulations."
To fix this, we have to move from simulating speech to simulating reasoning. The authors propose a "Cognitive Modeling" approach that goes "beyond output-level alignment toward aligning the internal reasoning traces of generative agents." Their solution is SEMI-STRUCTURED INTERVIEWS to extract what they call "cognitive motifs" - minimal causal reasoning units that capture how a specific person actually thinks.
This is exactly why we built an interviewer system instead of a persona generator. You have to extract their actual belief structure through conversation. Instead of predicting the next word, the agent must possess "Reasoning Fidelity", a structured map of beliefs, causal logic, and cognitive motifs. How do you get this map? You can't prompt for it. You have to interview for it, with AI.
The paper explicitly validates the architecture we’ve built: using semi-structured interviews to elicit "causal explanations" and "reasoning traces". This confirms why our Interviewer + Note-Taker multi-agent system is critical.
- The Interviewer builds the "Peer Status" necessary to get the expert to open up.
- The Note-Taker (the cognitive layer) extracts the "Cognitive Motifs", the distinctive logic blocks that define how that specific expert solves problems.
We are moving beyond the era of "acting like an expert" to Generative Minds: agents that embody the positional individuality and causal logic of the people they represent. If you're building AI agents for strategy, decision-making, or stakeholder modelling, start by interviewing the human aspects of your agents.

You have 100+ tabs open and your brain is fried. Introducing Dex, your second brain in Chrome that organizes, remembers, and takes action for you. Turn tabs into to-dos, multitask with agents, find and save anything for later. All without leaving your tab. As a founder, it's already saved me hundreds of hours. Comment for 1M free tokens - joindex [dot] com

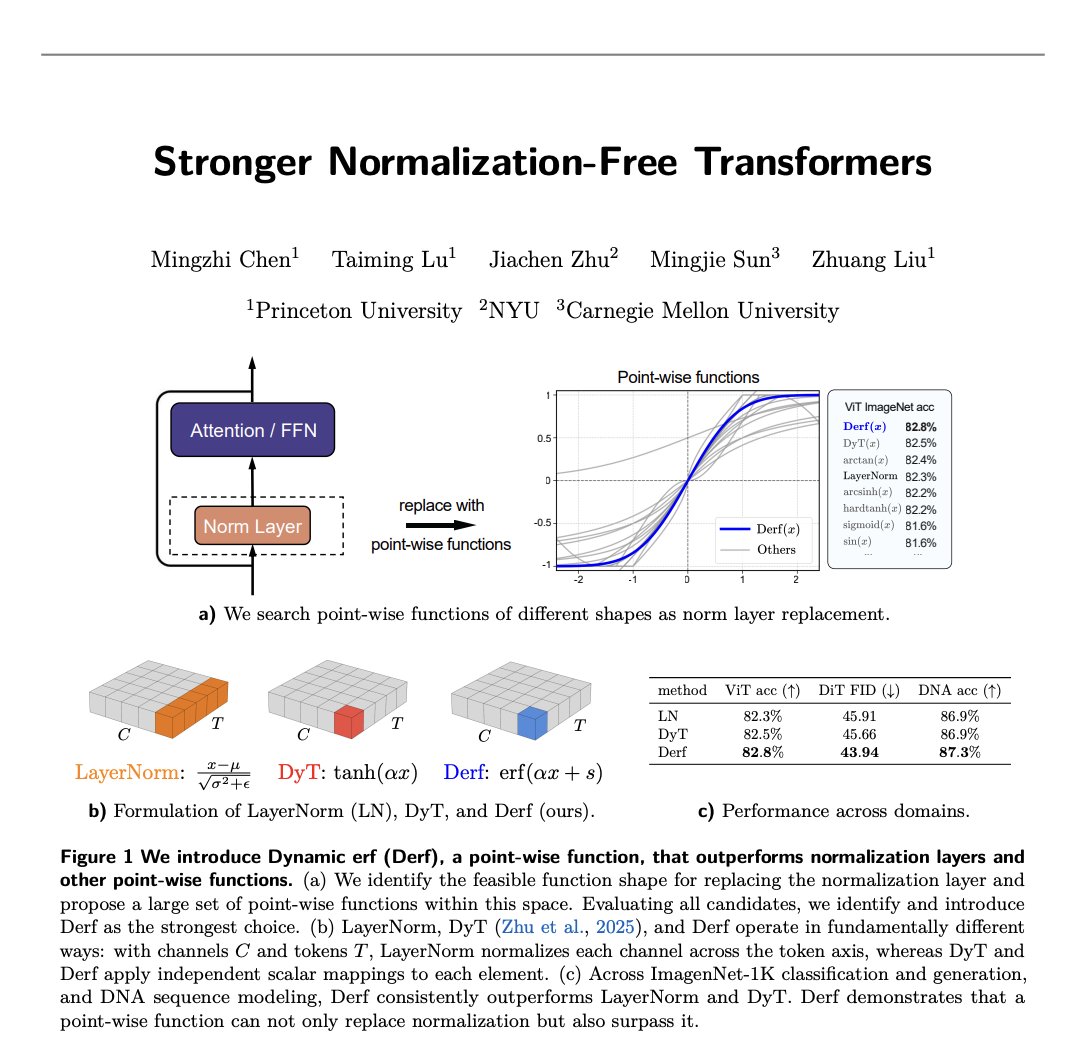

Stronger Normalization-Free Transformers – new paper. We introduce Derf (Dynamic erf), a simple point-wise layer that lets norm-free Transformers not only work, but actually outperform their normalized counterparts. https://t.co/NAPJvfsEGI
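
To make "point-wise layer" concrete, here is a minimal sketch. The post does not give Derf's formula, so the form below (the error function applied element by element, with a scale and shift on the input and on the output) is an assumption, and the parameter names `alpha`, `shift`, `gamma`, and `beta` are illustrative rather than taken from the paper.

```python
import math
from typing import List

def derf(x: List[float], alpha: float = 1.0, shift: float = 0.0,
         gamma: float = 1.0, beta: float = 0.0) -> List[float]:
    """Assumed form: gamma * erf(alpha * v + shift) + beta, applied to each element.

    Unlike a normalization layer, no statistics (mean, variance) are computed
    across elements; every value is transformed independently.
    """
    return [gamma * math.erf(alpha * v + shift) + beta for v in x]

activations = [-3.0, -0.5, 0.0, 0.5, 3.0]
squashed = derf(activations, alpha=0.5)
print(squashed)  # values stay strictly inside (-1, 1) with these defaults
```

In a real model the four parameters would presumably be learned; here they are plain floats so the example runs with the standard library alone.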

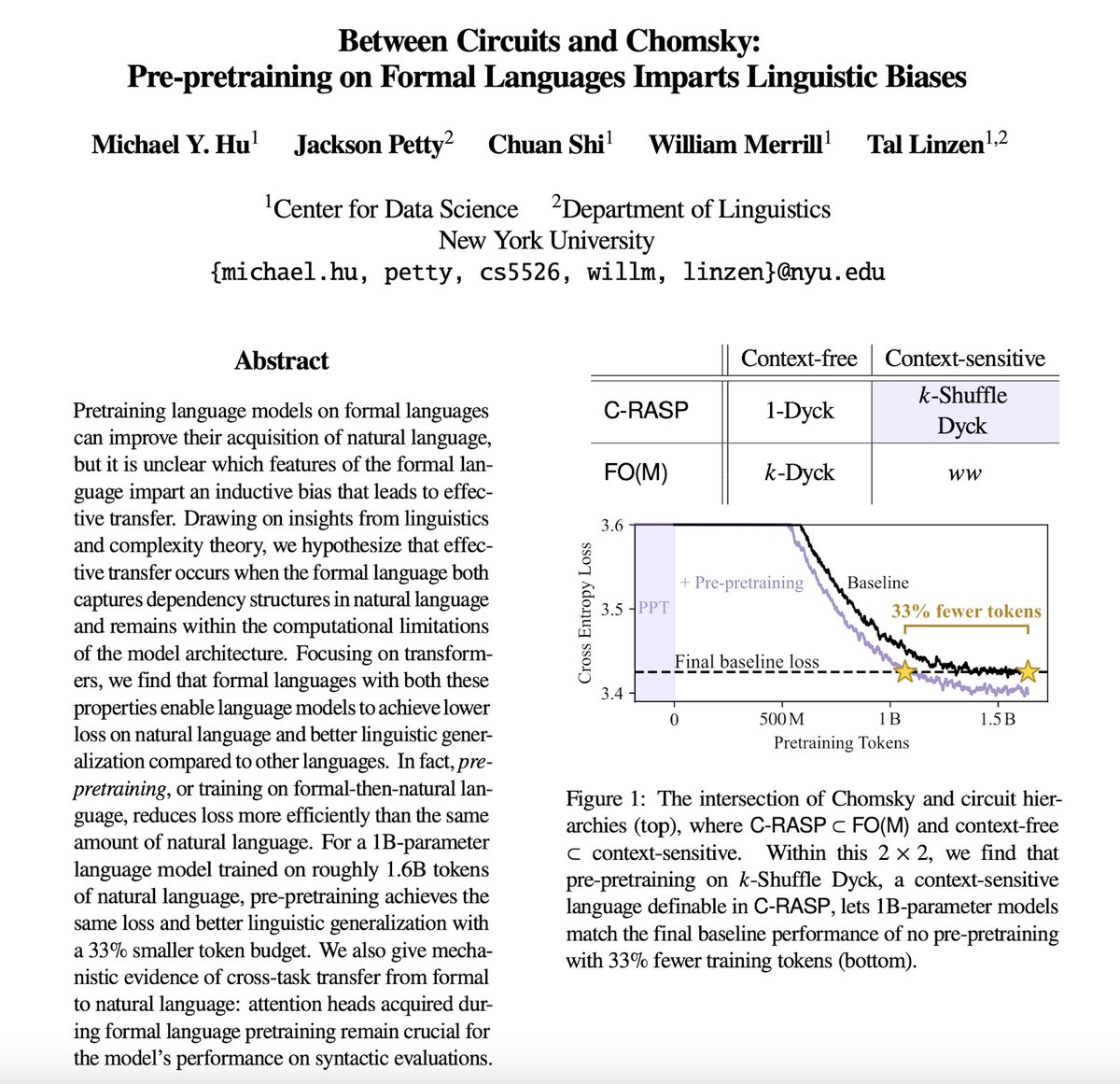

Training on a little 🤏 formal language BEFORE natural language can make pretraining more efficient! How and why does this work? The answer lies…Between Circuits and Chomsky. 🧵1/6👇 https://t.co/xXlBlrfSls

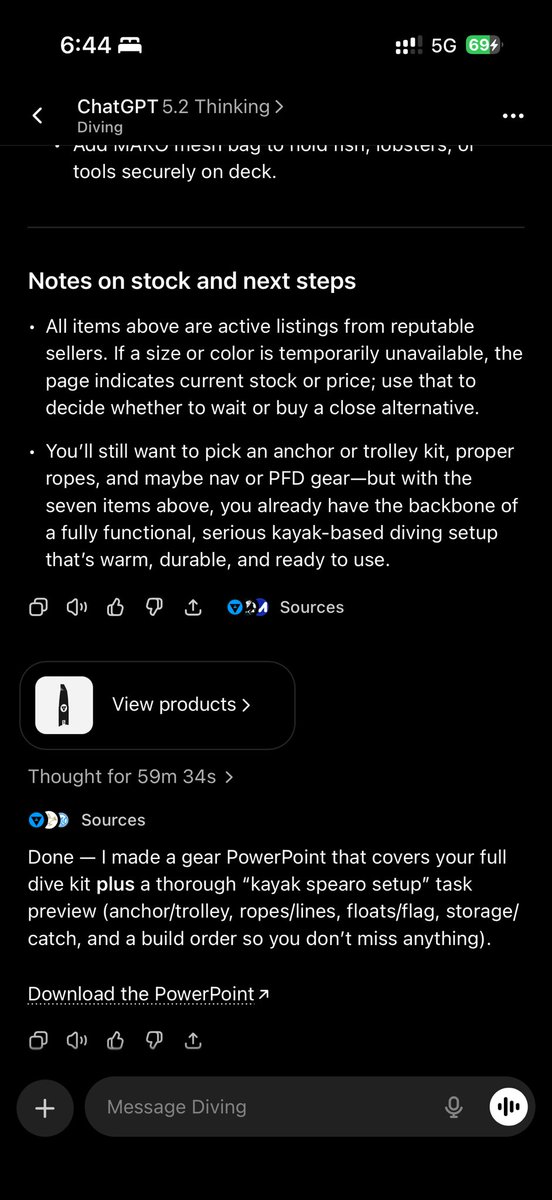

Thought for 1hr https://t.co/EDdRc0iBxH

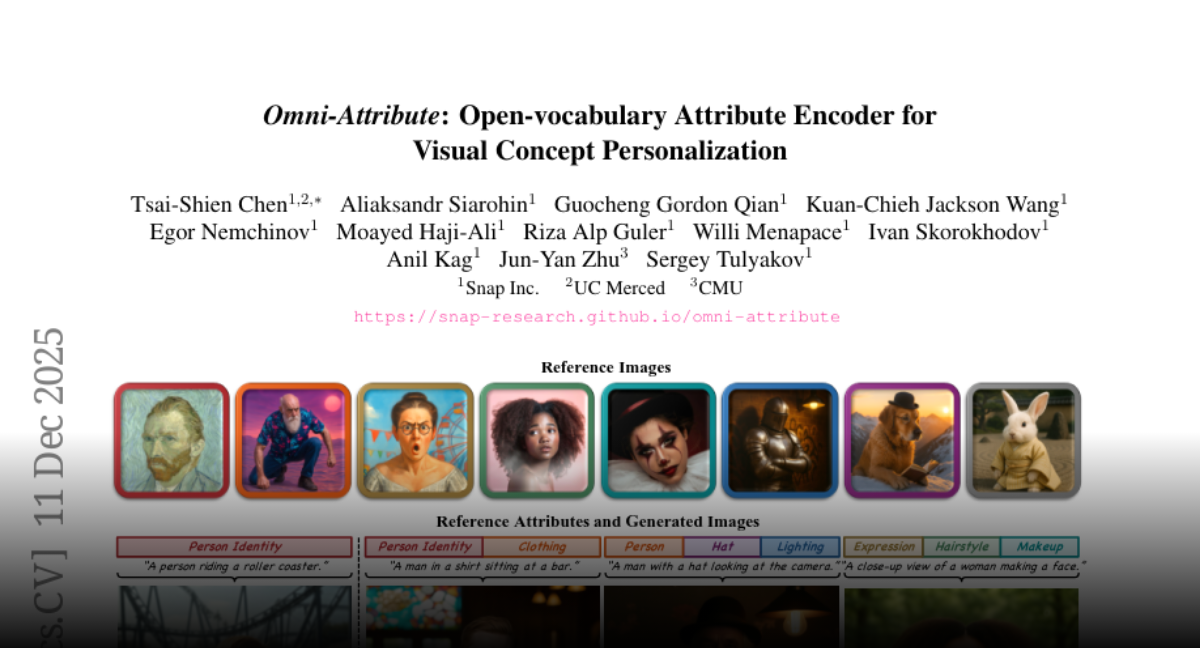

Omni-Attribute Open-vocabulary Attribute Encoder for Visual Concept Personalization https://t.co/OzlsDZOyLz

discuss: https://t.co/A6t6mDJjKD

It started as a small idea to connect AI models to developer workflows. It turned into one of the fastest-growing open standards in the industry. 🚀 Now, the Model Context Protocol is officially joining the @linuxfoundation. Hear from the engineers and maintainers of GitHub, @Microsoft, @AnthropicAI, and @OpenAI on the journey from day zero to now. 👇 https://t.co/CAUhhcUugB

NEURALINK: THE BRAIN CHIP GAME FIRED ITS SUPPLY CHAIN Everyone else is stuck waiting for parts. Neuralink built the whole factory instead. The Takeover: • Chips, implants, robots, surgery tools built in-house • Labs, imaging, animal care all under one roof • Even the HQ is custom-built for speed No middlemen. No bottlenecks. Just pure vertical momentum! Source: @neuralink

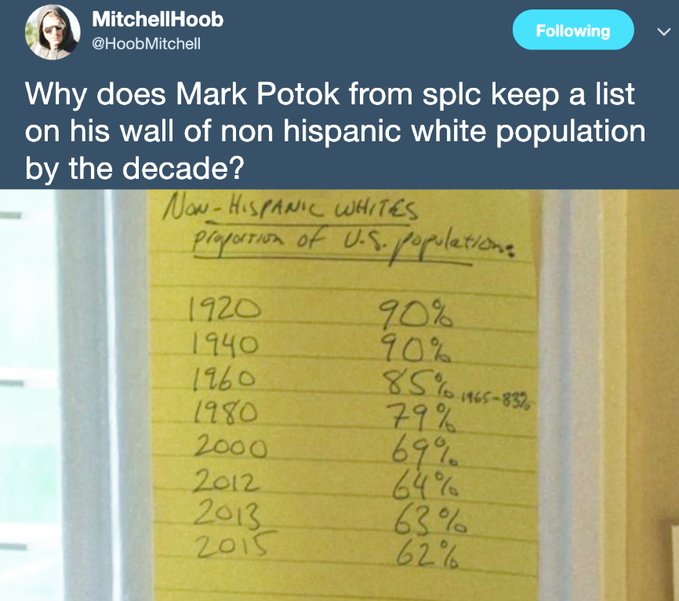

@SawyerHackett Government policy driving ethnic cleansing has been wrong. I want to repair the hateful damage caused by genocidal anti-Whites. Without our own lands we cannot endure. A homeland for every race is the moral path. https://t.co/EpJhS4T7VG

@peterdiver69 @brettachapman Are you REALLY going to lecture the rest of us about how to treat indigenous people? Really mate? https://t.co/kL0dVsEx64

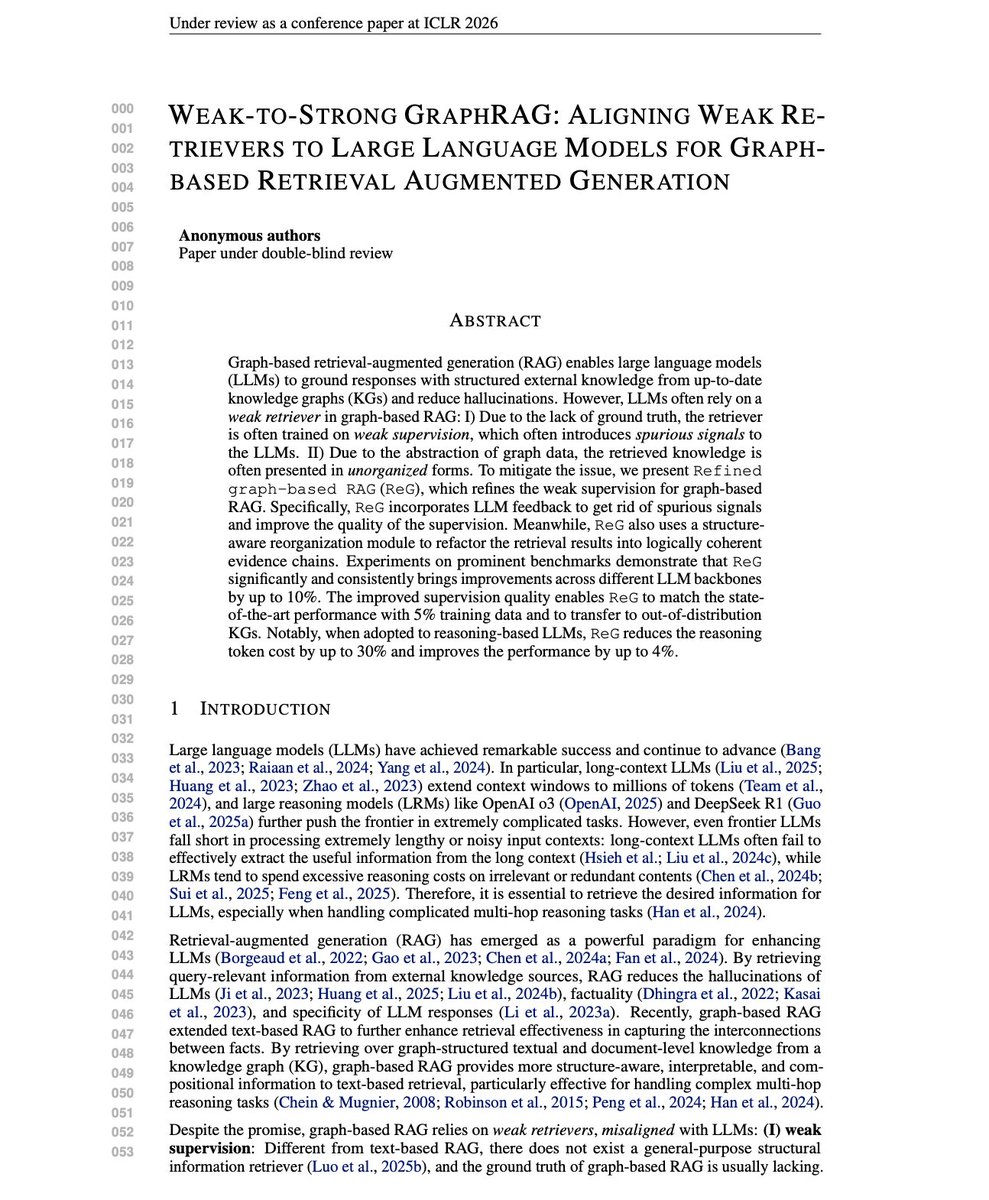

Weak-to-Strong GraphRAG
Interesting ICLR 2026 submission with some insights on improving GraphRAG systems and making them more feasible in production environments.
Graph-based RAG lets LLMs ground responses in structured knowledge graphs. But there's a fundamental mismatch between retrievers and the LLMs they serve. As knowledge graphs become central to RAG systems, aligning retrievers to LLM needs through LLM feedback offers a principled path to better multi-hop reasoning with lower costs.
The problem is twofold. First, graph retrievers train on weak supervision like query-answer shortest paths. This misses key reasoning steps and introduces spurious connections. Second, retrieved knowledge comes back unorganized. LLMs are sensitive to context ordering, and messy graph data adds unnecessary complexity.
This new research introduces ReG (Refined Graph-based RAG), a framework that uses LLM feedback to align weak retrievers with the LLMs they serve.
Graph-based RAG is essentially a black-box combinatorial search. Given a query, find the minimal sufficient subgraph for correct reasoning. The LLM acts as an evaluator. But exhaustively searching this space is computationally intractable.
ReG takes a simpler approach. Instead of optimizing over all possible subgraphs, it utilizes LLMs to select more effective reasoning chains from candidate chains extracted from the knowledge graph. The improved supervision trains better retrievers. A structure-aware reorganization module then refactors retrieval results into logically coherent evidence chains. This aligns the presentation to how LLMs actually process information.
On CWQ-Sub with GPT-4o, ReG achieves 68.91% Macro-F1 versus SubgraphRAG's 66.48%. On WebQSP-Sub, 80.08% versus 79.4%. The gains hold across multiple LLM backbones.
The data efficiency is notable in the reported experimental results. ReG trained on just 5% of the data matches baselines trained on 80%. The refined supervision eliminates noise that larger datasets would otherwise compound.
When paired with reasoning LLMs like QwQ-32B, ReG reduces reasoning tokens by up to 30% while improving performance. The structure-aware reorganization prevents the "overthinking" problem where large reasoning models (LRMs) produce verbose traces in a noisy context.
Paper: https://t.co/mF9sLB63JN
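
The two steps described above (pick the better reasoning chains from a candidate pool, then present them as ordered evidence) can be sketched roughly as follows. This is not ReG's implementation: the post does not describe the paper's prompts, retriever training, or reorganization rules, and `toy_judge` is a hypothetical keyword-overlap stand-in for the LLM feedback. All function names here are made up for illustration.

```python
from typing import Callable, List, Tuple

Triple = Tuple[str, str, str]  # (head entity, relation, tail entity)
Chain = List[Triple]
Judge = Callable[[str, Chain], float]

def toy_judge(query: str, chain: Chain) -> float:
    """Hypothetical stand-in for LLM feedback: share of relations named in the query."""
    if not chain:
        return 0.0
    words = set(query.lower().split())
    hits = sum(1 for _, relation, _ in chain if relation in words)
    return hits / len(chain)

def select_chains(query: str, candidates: List[Chain], judge: Judge, keep: int = 2) -> List[Chain]:
    """Keep the `keep` highest-scoring candidate chains as refined supervision."""
    ranked = sorted(candidates, key=lambda chain: judge(query, chain), reverse=True)
    return ranked[:keep]

def reorganize(chains: List[Chain]) -> List[str]:
    """Render each chain as one evidence line, hops in reasoning order."""
    return [" -> ".join(f"{h} [{r}] {t}" for h, r, t in chain) for chain in chains]

query = "where was the director of Titanic born"
candidates = [
    [("Titanic", "director", "James Cameron"), ("James Cameron", "born", "Kapuskasing")],
    [("Titanic", "released", "1997")],
    [("Titanic", "director", "James Cameron"), ("James Cameron", "spouse", "Suzy Amis")],
]
selected = select_chains(query, candidates, toy_judge)
evidence = reorganize(selected)
for line in evidence:
    print(line)
```

Scoring a fixed candidate pool is linear in the number of candidates, which is the contrast with searching over all possible subgraphs.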

my @huggingface 2025 wrapped has arrived and it's so surprising 😄 thanks everyone for all the love you showed to my repositories 💛 https://t.co/IMBdAJ9YFV

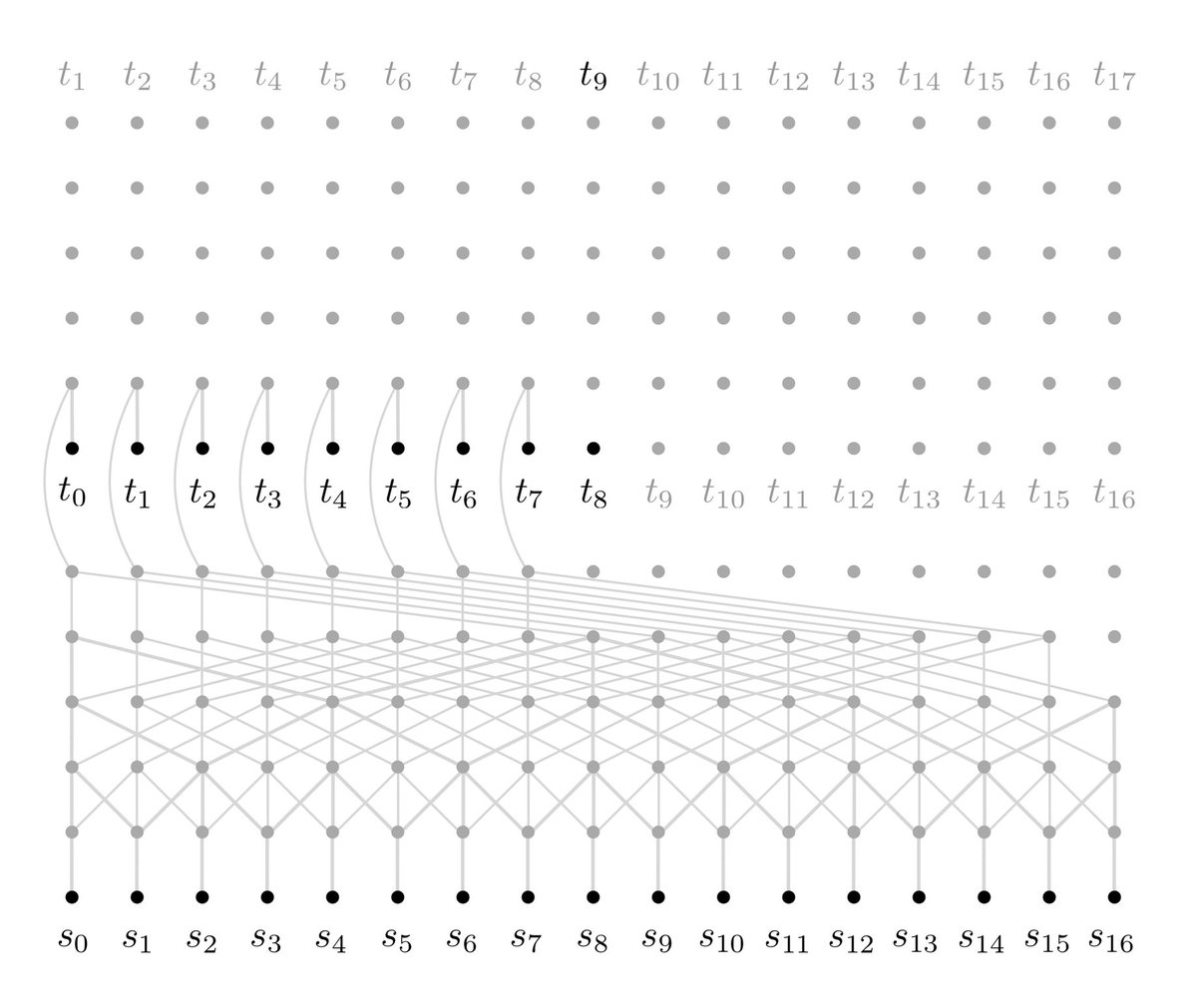

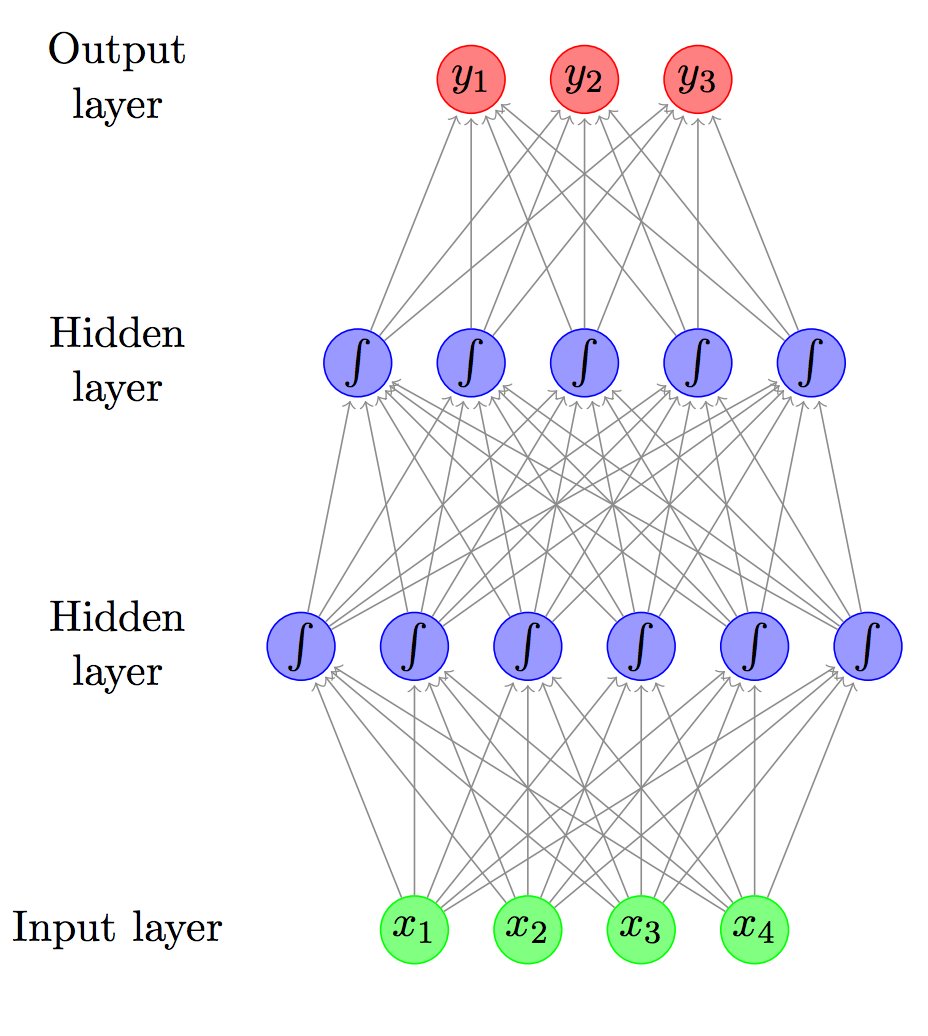

New neural net for Language and Machine Translation! Fast and simple way of capturing very long range dependencies https://t.co/0gSoVVGrYd https://t.co/cWANbRTAMQ

Primer on Neural Networks for Natural Language Processing #AI #MachineLearning #DeepLearning #ML #DL #nlp #tech https://t.co/rStdho8pTx https://t.co/TRtvz91SpT

@DeepLeffen i am only mildly disturbed by the sheer accuracy the model has managed to pick up on these references https://t.co/OM2Xijwr6F

I dislike this so much... Unfortunately, it’s very common among those tweets recommended by Twitter as belonging to the “Machine Learning” category. How can you convey any useful information when 90% of your tweet is just spamming hashtags? https://t.co/1Obp25LJTA

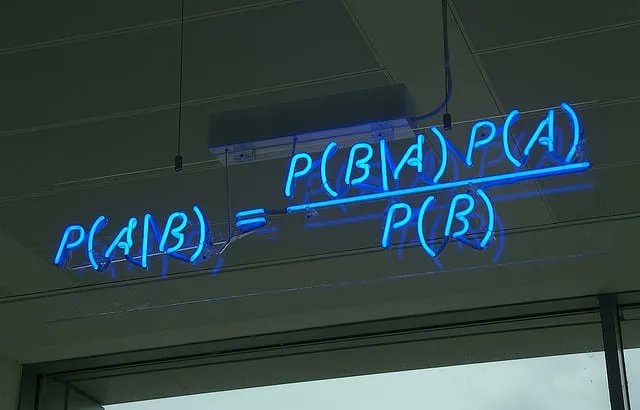

Naive Bayes Classifier From Scratch in Python https://t.co/BxR2urXisa https://t.co/i6pIxS5wMe

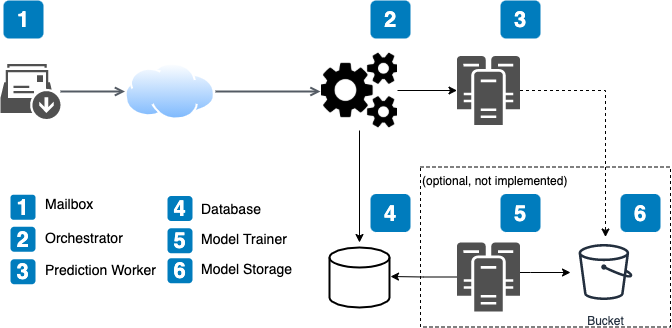

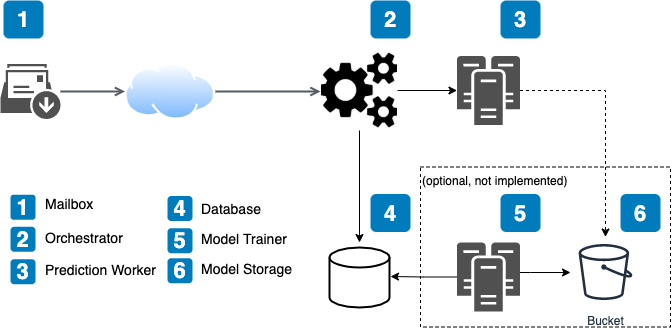

Complete Machine Learning pipeline for NLP tasks https://t.co/Bz7Qrde59L

Complete Machine Learning pipeline for NLP tasks https://t.co/Bz7QrdwenT

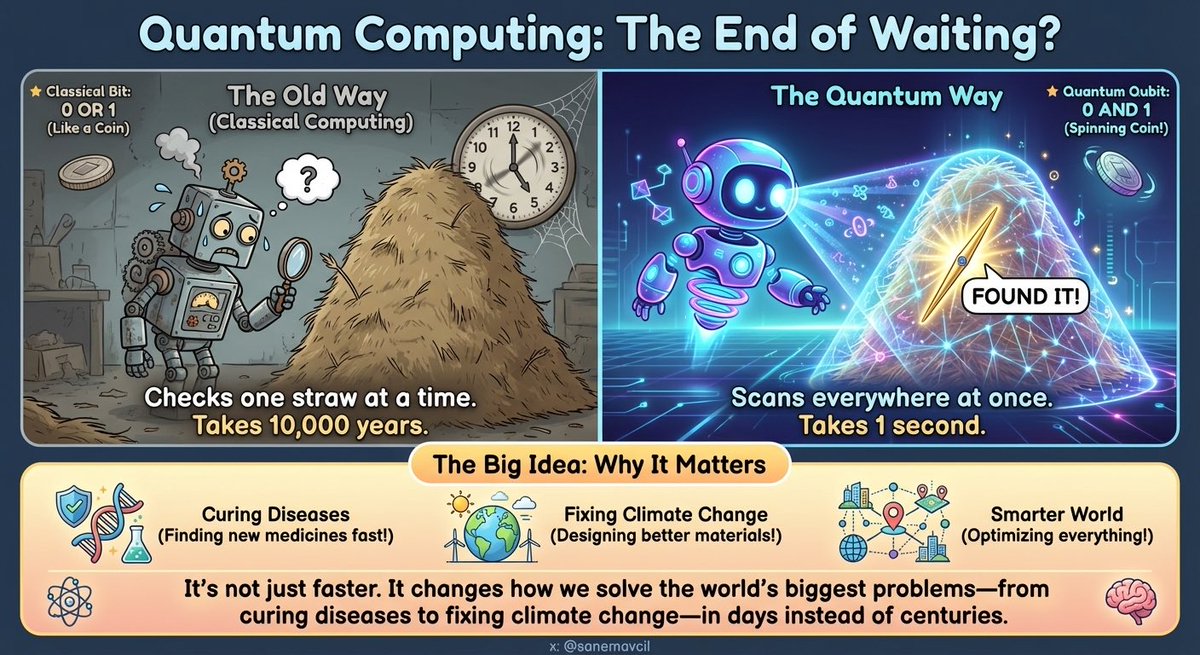

🚀 Quantum Computing: The end of waiting? 🧠⚛️ Classical computers think in bits: 0 OR 1 🪙 Quantum computers use qubits: 0 AND 1 (kind of like a spinning coin) 🌀🪙 So instead of “checking one straw at a time” 🐢⏳, quantum machines can explore many possibilities in parallel—then use interference to amplify the best answers ✨📈 (Not magic, not for everything… but game-changing for specific problems.) 🌍 Why it matters (the big idea): 🧬 Drug discovery → simulate molecules faster, discover new medicines 🧱 New materials → better batteries, superconductors, catalysts 🌡️ Climate & energy → improved chemistry + materials for cleaner tech 🛰️ Optimization → logistics, routing, scheduling, supply chains 🔐 Cryptography → new security era (post-quantum world) We’re still early—today’s devices are noisy 🧊🔧—but the direction is clear: We’re not just making computers faster… we’re changing how we compute. ⚡🧠 What’s your take—breakthrough of the decade or “still too early”? 👇🔥 #QuantumComputing #Quantum #Qubits #DeepTech #FutureTech
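
The "spinning coin" and "interference" ideas can be shown with a single simulated qubit. The sketch below uses the standard Hadamard gate; the helper names `apply` and `probabilities` are just for this example. One Hadamard puts the qubit into an equal superposition, and a second one makes the two paths to "1" cancel, so the qubit returns to "0".

```python
import math
from typing import List

S = 1 / math.sqrt(2)
HADAMARD = [[S, S],
            [S, -S]]

def apply(gate: List[List[float]], state: List[float]) -> List[float]:
    """Multiply a 2x2 gate by a 2-element amplitude vector."""
    return [sum(gate[row][col] * state[col] for col in range(2)) for row in range(2)]

def probabilities(state: List[float]) -> List[float]:
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return [abs(amplitude) ** 2 for amplitude in state]

zero = [1.0, 0.0]                       # qubit definitely in state 0
superposed = apply(HADAMARD, zero)      # equal mix of 0 and 1
back = apply(HADAMARD, superposed)      # amplitudes for 1 cancel out

print(probabilities(superposed))  # roughly 50/50
print(probabilities(back))        # roughly all weight on 0 again
```

The cancellation in the last step is the interference the post refers to: amplitudes, unlike probabilities, can be negative and subtract from each other.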

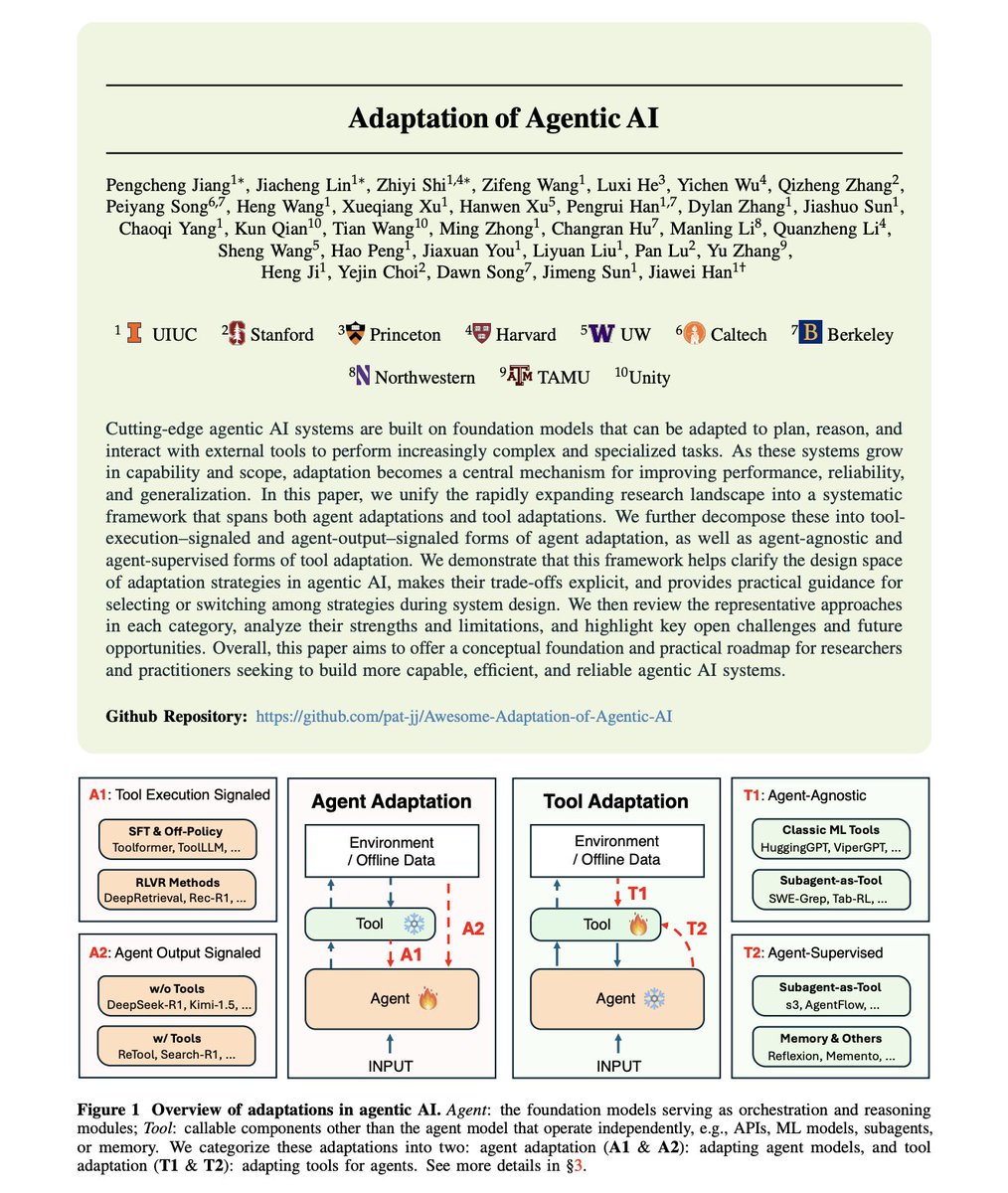

First comprehensive framework for how AI agents actually improve through adaptation. While there is a lot of hype about building bigger models, the research reveals a different lever: systematic adaptation of agents and their tools.
Researchers from many universities surveyed the rapidly expanding landscape of agentic AI adaptation. What they found: a fragmented field with no unified understanding of how agents learn to use tools, when to adapt the agent versus the tool, and which strategies work for which scenarios. These are all important for building production-ready AI agents.
Adaptation in agentic AI follows four distinct paradigms that most practitioners conflate or ignore entirely. The framework organizes all adaptation strategies into two dimensions.
- Agent Adaptation (A1, A2): modifying the agent's parameters, representations, or policies.
- Tool Adaptation (T1, T2): optimizing external components like retrievers, planners, and memory modules while keeping the agent frozen.
Let's discuss each in more detail:
- A1: Tool Execution Signaled Agent Adaptation. The agent learns from verifiable outcomes produced by tools it invokes. This involves code sandbox results, retrieval relevance scores, and API call outcomes. Methods like Toolformer, ToolLLM, and DeepRetrieval also fall here. The signal comes from whether the tool execution succeeded, not whether the final answer was correct.
- A2: Agent Output Signaled Agent Adaptation. The agent optimizes based on evaluations of its own final outputs. This includes both tool-free reasoning (DeepSeek-R1, Kimi-1.5) and tool-augmented adaptation (ReTool, Search-R1). The signal comes from answer correctness or preference scores, not intermediate tool calls.
- T1: Agent-Agnostic Tool Adaptation. This involves tools trained independently of any specific agent, including HuggingGPT, ViperGPT, and classic ML tools that serve as plug-and-play modules. These tools generalize well across different agents but may not be optimized for any particular one.
- T2: Agent-Supervised Tool Adaptation. Tools adapted using signals from a frozen agent's outputs. Includes reward-driven retriever tuning, adaptive search subagents, and memory-update modules like Reflexion and Memento. The agent stays fixed while tools learn to better support its reasoning.
The trade-offs between paradigms are explicit. Cost and flexibility: A1/A2 require substantial compute for training billion-parameter models but offer maximal flexibility. T1/T2 optimize external components at a lower cost but may hit ceilings set by the frozen agent's capabilities.
Generalization patterns differ significantly. T1 tools trained on broad distributions generalize well across agents and tasks. A1 methods risk overfitting to specific environments unless carefully regularized. T2 approaches enable independent tool upgrades without agent retraining, facilitating continuous improvement.
The researchers identify when each paradigm fits. A1 suits scenarios with verifiable tool outputs like code execution or database queries. A2 works when only the final answer quality matters. T1 applies when tools must serve multiple agents. T2 excels when the agent is fixed, but tool performance is the bottleneck.
State-of-the-art systems increasingly combine paradigms. A deep research system might use T1-style pretrained retrievers, T2-style adaptive search agents trained via frozen LLM feedback, and A1-style reasoning agents fine-tuned with execution feedback in a cascaded architecture.
Four open challenges remain unsolved:
- Co-adaptation: jointly optimizing agents and tools remains underexplored.
- Continual adaptation: enabling lifelong learning without catastrophic forgetting.
- Safe adaptation: preventing harmful behaviors during optimization.
- Efficient adaptation: reducing computational costs while maintaining performance.
The choice of adaptation paradigm fundamentally shapes what an agentic system can learn, how fast it improves, and whether improvements transfer across tasks. Teams building production agents need a principled framework for these decisions, not ad-hoc choices.
Report: https://t.co/o2KPQLLQsZ
Learn to build effective AI agents in our academy: https://t.co/g1Ijo0S5AA
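
The "when each paradigm fits" guidance can be written down as a small lookup, sketched below. The paradigm names come from the post; the `Scenario` fields and the order in which `suggest` checks them are assumptions made for illustration, not a decision procedure from the report.

```python
from dataclasses import dataclass
from enum import Enum

class Paradigm(Enum):
    A1 = "Tool Execution Signaled Agent Adaptation"
    A2 = "Agent Output Signaled Agent Adaptation"
    T1 = "Agent-Agnostic Tool Adaptation"
    T2 = "Agent-Supervised Tool Adaptation"

@dataclass
class Scenario:
    """Hypothetical description of a project; field names are illustrative."""
    agent_is_frozen: bool             # the agent's weights cannot be trained
    tool_outputs_verifiable: bool     # e.g. code execution or database queries
    tools_shared_across_agents: bool  # tools must serve multiple agents

def suggest(scenario: Scenario) -> Paradigm:
    """Map a scenario to one paradigm (assumed ordering of the checks)."""
    if scenario.agent_is_frozen:
        # Only the tools can change: shared tools -> T1, agent-specific tuning -> T2.
        return Paradigm.T1 if scenario.tools_shared_across_agents else Paradigm.T2
    # The agent can be trained: verifiable tool signals -> A1, final-answer signals -> A2.
    return Paradigm.A1 if scenario.tool_outputs_verifiable else Paradigm.A2

fixed_agent = Scenario(agent_is_frozen=True, tool_outputs_verifiable=False,
                       tools_shared_across_agents=False)
print(suggest(fixed_agent).name, "-", suggest(fixed_agent).value)
```

Since the post notes that strong systems combine paradigms, a single return value is a simplification; a fuller version might return a set of paradigms per component.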

This weekend, we’re shipping 3,000 Reachy Minis all over the world! To my knowledge, it’s one of the largest shipments of AI robots of the year (or ever?) just in time for Christmas! If you’re in this batch, expect to receive an email in the coming days. Keep in mind that this first version is designed for AI builders, so it’s very bare-bones. At the moment, it has very little software on it, and, like most early hardware, will likely have a fair number of bugs and quirks. Right now, it’s much more of an open-source, DIY robotics platform than a polished consumer robot. The beautiful thing is that we’ve already seen community members managing to hack cool apps and help to improve Reachy Mini a lot! If you’re not in this batch or haven’t bought a Reachy Mini yet, you can expect delivery by the end of January, or roughly 90 days after your purchase (we’re actively working to shorten that timeline). In the meantime, you can start building for current or future Reachy owners using the simulator in the GitHub repo, as all of the software is open-source. Congrats to the @pollenrobotics @huggingface @seeedstudio teams that worked extremely hard to get to this milestone, just 5 months after the beginning of the project! I'm terribly excited to see what you’ll all build with this! Let’s go open and collaborative AI robotics!

Yesterday, we launched PAX SILICA, a partnership built for the AI age. This is the first time countries are organizing around compute, silicon, minerals and manufacturing as shared strategic assets. President Trump, Secretary Rubio and @davidsacks47 understood earlier than anyone that AI is the new backbone of economic power. This declaration operationalizes that insight. Building off of President Trump’s landmark AI Action Plan, this is American AI diplomacy at its best: building flexible coalitions, shaping markets, and putting AMERICA FIRST! 🇺🇸

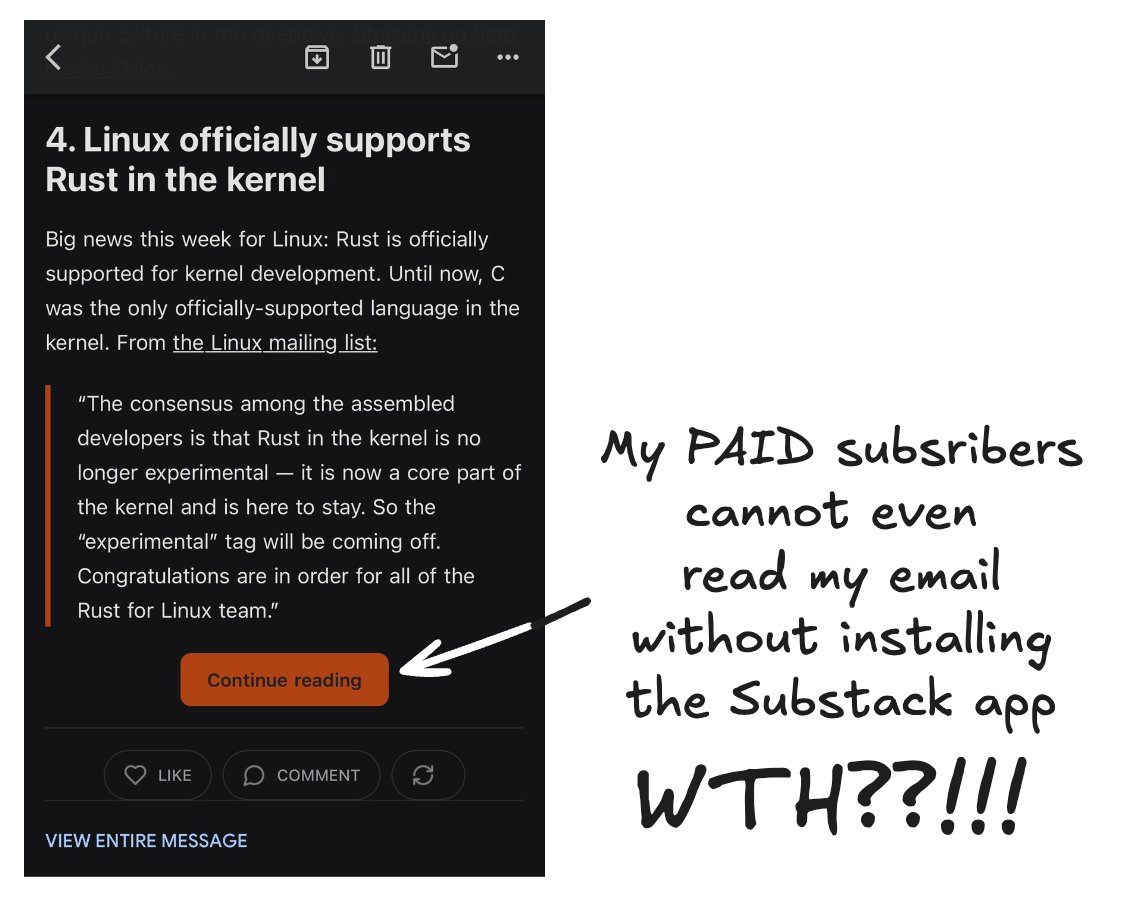

I love Substack. Always have. Their team is great. But a silent change could force me off the platform if it stays. They broke email. My paid subscribers cannot read today's paid newsletter on mobile without downloading the Substack app. @SubstackInc: roll this back. Now. https://t.co/cY4z3MMctV