@dair_ai

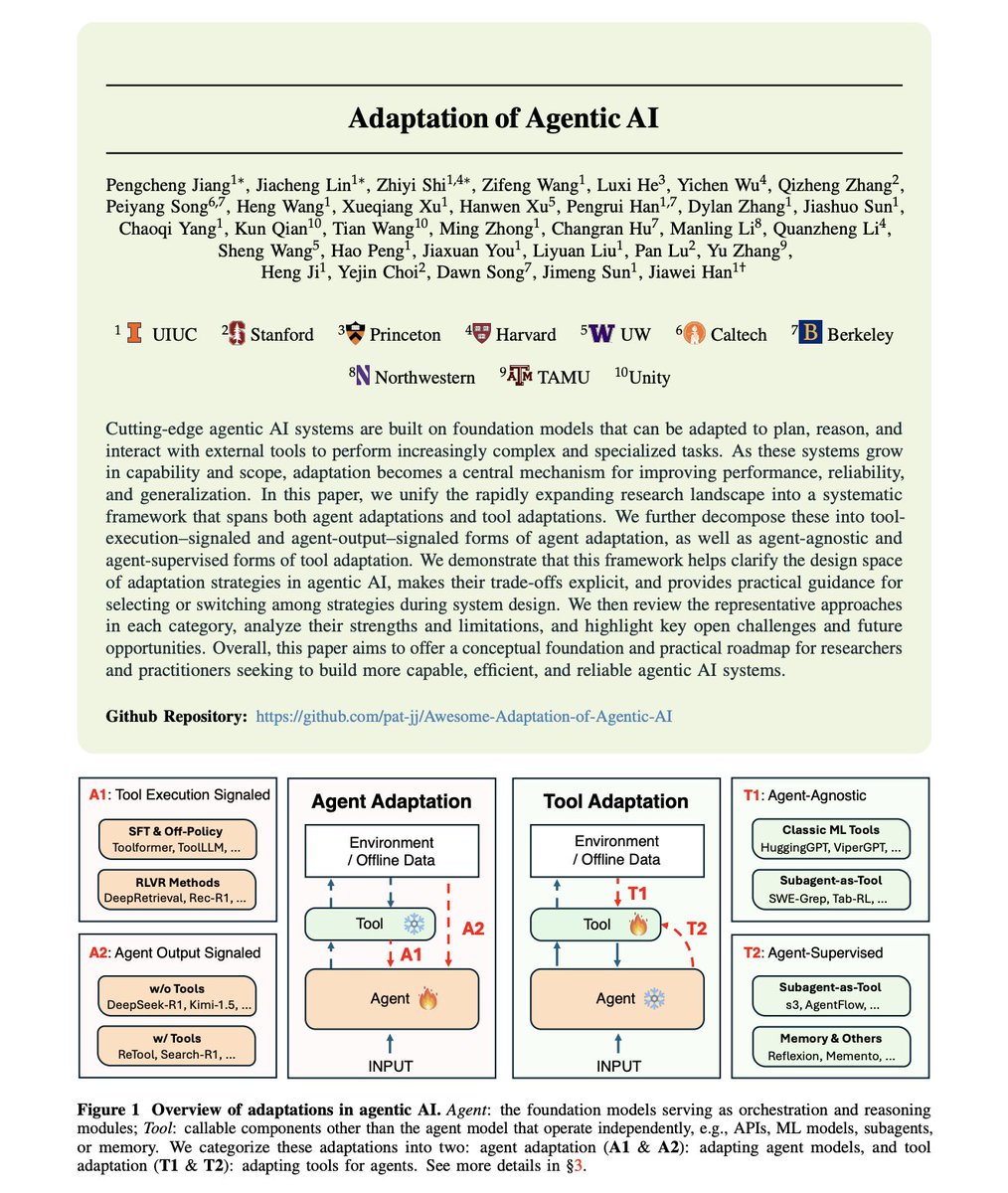

First comprehensive framework for how AI agents actually improve through adaptation. While there is a lot of hype about building bigger models, the research reveals a different lever: systematic adaptation of agents and their tools. Researchers from many universities surveyed the rapidly expanding landscape of agentic AI adaptation. What they found: a fragmented field with no unified understanding of how agents learn to use tools, when to adapt the agent versus the tool, and which strategies work for which scenarios. These are all important for building production-ready AI agents. Adaptation in agentic AI follows four distinct paradigms that most practitioners conflate or ignore entirely. The framework organizes all adaptation strategies into two dimensions. > Agent Adaptation (A1, A2): modifying the agent's parameters, representations, or policies. > Tool Adaptation (T1, T2): optimizing external components like retrievers, planners, and memory modules while keeping the agent frozen. Let's discuss each in more detail: A1: Tool Execution Signaled Agent Adaptation. The agent learns from verifiable outcomes produced by tools it invokes. This involves code sandbox results, retrieval relevance scores, and API call outcomes. Methods like Toolformer, ToolLLM, and DeepRetrieval also fall here. The signal comes from whether the tool execution succeeded, not whether the final answer was correct. A2: Agent Output Signaled Agent Adaptation. The agent optimizes based on evaluations of its own final outputs. This includes both tool-free reasoning (DeepSeek-R1, Kimi-1.5) and tool-augmented adaptation (ReTool, Search-R1). The signal comes from answer correctness or preference scores, not intermediate tool calls. T1: Agent-Agnostic Tool Adaptation. This involves tools trained independently of any specific agent, including HuggingGPT, ViperGPT, and classic ML tools that serve as plug-and-play modules. These tools generalize well across different agents but may not be optimized for any particular one. T2: Agent-Supervised Tool Adaptation. Tools adapted using signals from a frozen agent's outputs. Includes reward-driven retriever tuning, adaptive search subagents, and memory-update modules like Reflexion and Memento. The agent stays fixed while tools learn to better support its reasoning. The trade-offs between paradigms are explicit. Cost and flexibility: A1/A2 require substantial compute for training billion-parameter models but offer maximal flexibility. T1/T2 optimize external components at a lower cost but may hit ceilings set by the frozen agent's capabilities. Generalization patterns differ significantly. T1 tools trained on broad distributions generalize well across agents and tasks. A1 methods risk overfitting to specific environments unless carefully regularized. T2 approaches enable independent tool upgrades without agent retraining, facilitating continuous improvement. The researchers identify when each paradigm fits. A1 suits scenarios with verifiable tool outputs like code execution or database queries. A2 works when only the final answer quality matters. T1 applies when tools must serve multiple agents. T2 excels when the agent is fixed, but tool performance is the bottleneck. State-of-the-art systems increasingly combine paradigms. A deep research system might use T1-style pretrained retrievers, T2-style adaptive search agents trained via frozen LLM feedback, and A1-style reasoning agents fine-tuned with execution feedback in a cascaded architecture. Four open challenges remain unsolved: - Co-adaptation: jointly optimizing agents and tools remains underexplored. - Continual adaptation: enabling lifelong learning without catastrophic forgetting. - Safe adaptation: preventing harmful behaviors during optimization. - Efficient adaptation: reducing computational costs while maintaining performance. The choice of adaptation paradigm fundamentally shapes what an agentic system can learn, how fast it improves, and whether improvements transfer across tasks. Teams building production agents need a principled framework for these decisions, not ad-hoc choices. Report: https://t.co/o2KPQLLQsZ Learn to build effective AI agents in our academy: https://t.co/g1Ijo0S5AA