@koylanai

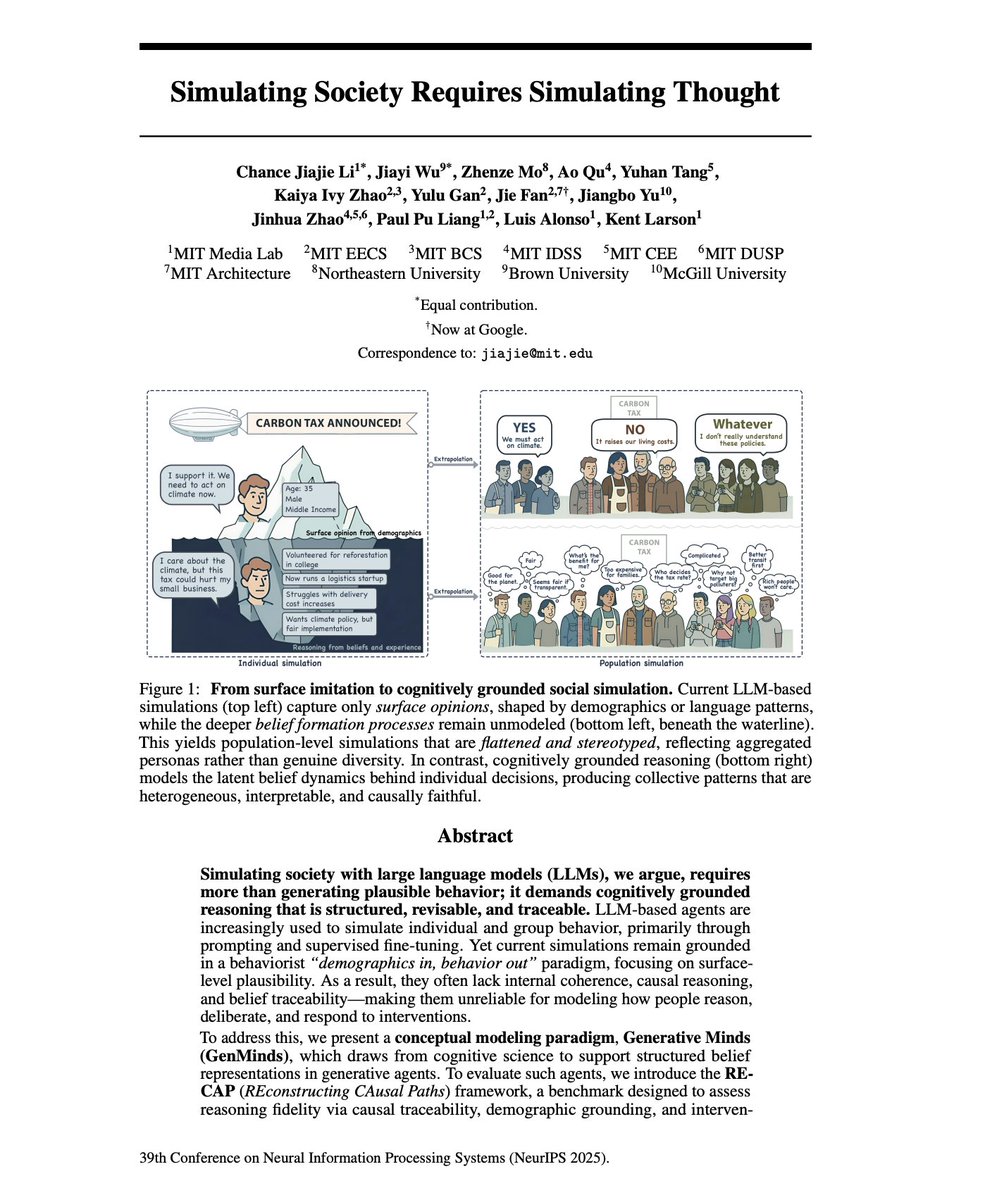

AI agent personas should simulate the structure of human reasoning. I've been arguing that you cannot "invent" a digital expert agent with prompt engineering alone. You have to extract the expert through deep interviewing.

A new NeurIPS paper, "Simulating Society Requires Simulating Thought," reinforces everything we've discussed about why thin, synthetic LLM personas fail.

Most AI agents operate as "behaviorists." When you prompt an LLM to "act like a senior economist," it relies on surface-level correlations from its training data. It generates text that sounds expert, but it lacks any internal belief structure. That gap shows up in three ways:

1. Logical Inconsistency: Without an internal model of how beliefs are formed, agents support a policy in one context and oppose it in another. The paper calls this "intervention-invariance mismatch": beliefs don't update coherently when assumptions change.
2. Illusion of Consensus: In multi-agent simulations, LLMs converge toward the median view of their training data (and, as the other paper mentions, toward even more positive emotions). They agree not because of shared reasoning, but because their statistical priors pull them toward the center. Your expert's contrarian, hard-won perspective gets averaged out.
3. Identity Flattening: LLMs reproduce stereotypical portrayals that erase intersectional variation. "The rich, positional knowledge of real-world stakeholders is replaced with monolithic, decontextualized simulations."

To fix this, we have to move from simulating speech to simulating reasoning. The authors propose a "Cognitive Modeling" approach that goes "beyond output-level alignment toward aligning the internal reasoning traces of generative agents." Their solution is SEMI-STRUCTURED INTERVIEWS that extract what they call "cognitive motifs": minimal causal reasoning units that capture how a specific person actually thinks.

This is exactly why we built an interviewer system instead of a persona generator.

You have to extract the expert's actual belief structure through conversation. Rather than merely predicting the next word, the agent must have "Reasoning Fidelity": a structured map of beliefs, causal logic, and cognitive motifs.

How do you get this map? You can't prompt for it. You have to interview for it, with AI.

The paper explicitly validates the architecture we've built: using semi-structured interviews to elicit "causal explanations" and "reasoning traces." This confirms why our Interviewer + Note-Taker multi-agent system is critical:

- The Interviewer builds the "Peer Status" needed to get the expert to open up.
- The Note-Taker (the cognitive layer) extracts the "Cognitive Motifs," the distinctive logic blocks that define how that specific expert solves problems.

We are moving beyond the era of "acting like an expert" to Generative Minds: agents that embody the positional individuality and causal logic of the people they represent.

If you're building AI agents for strategy, decision-making, or stakeholder modelling, start by interviewing the humans your agents are meant to represent.
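
To make "a structured map of beliefs, causal logic, and cognitive motifs" a little more concrete, here's a minimal Python sketch, assuming a motif can be encoded as a weighted cause-to-effect edge. `CognitiveMotif`, `infer`, `intervention_consistent`, and the economist motifs are hypothetical illustrations, not the paper's formalism or our production Note-Taker output. The point it shows: once beliefs are written down explicitly, you can check whether an agent's stance under a changed assumption matches what its own motifs imply, which is exactly where behaviorist personas break.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CognitiveMotif:
    """Hypothetical encoding of one minimal causal reasoning unit."""
    cause: str
    effect: str
    weight: float        # > 0: cause pushes effect up; < 0: pushes it down
    rationale: str = ""  # the expert's own words, as a Note-Taker might capture them


def infer(motifs: list[CognitiveMotif], assumptions: dict[str, float], target: str) -> float:
    """Score `target` by propagating assumptions through the motif graph.

    Root factors come from `assumptions` (+1.0 = holds, -1.0 = does not hold).
    Assumes the graph is acyclic; a node with no incoming motif and no
    assumption contributes 0.
    """
    values = dict(assumptions)

    def value(node: str) -> float:
        if node not in values:
            # Weighted sum of every motif that points at this node.
            values[node] = sum(m.weight * value(m.cause) for m in motifs if m.effect == node)
        return values[node]

    return value(target)


def stance(score: float) -> str:
    if score > 0:
        return "support"
    if score < 0:
        return "oppose"
    return "neutral"


def intervention_consistent(motifs, assumptions, intervention, target, observed_stance) -> bool:
    """Does the stance an agent voiced after an intervention match its own motifs?"""
    expected = stance(infer(motifs, {**assumptions, **intervention}, target))
    return expected == observed_stance


# Hypothetical motifs for an interviewed economist.
motifs = [
    CognitiveMotif("inflation_above_target", "rate_hike", 2.0, "Price stability comes first."),
    CognitiveMotif("labor_market_weakening", "rate_hike", -1.0, "Don't tighten into a downturn."),
]
baseline = {"inflation_above_target": 1.0, "labor_market_weakening": -1.0}
shock = {"inflation_above_target": -1.0, "labor_market_weakening": 1.0}

print(stance(infer(motifs, baseline, "rate_hike")))  # support (score 3.0)
print(stance(infer(motifs, {**baseline, **shock}, "rate_hike")))  # oppose (score -3.0)
# An agent that still says "support" after the shock fails the check.
print(intervention_consistent(motifs, baseline, shock, "rate_hike", "support"))  # False
```

A linear weighted sum is the simplest propagation rule that makes the idea visible; a real belief map would likely need conditional motifs, thresholds, or uncertainty.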