
This is the full video of the hardest version of the task: t-shirt folding from unstructured initial states. This setting really requires at least some strategy, since the robot first has to spread the shirt before it can complete the fold. Full details on data collection strategies in the blog below. 👇

Releasing the Unfolding Robotics blog! Time to unfold robotics: we trained a robot to fold clothes using 8 bimanual setups, 100+ hours of demonstrations, and 5k+ GPU hours. Flashy robot demos are everywhere. But you rarely see the real story: the data, the failures, the enginee

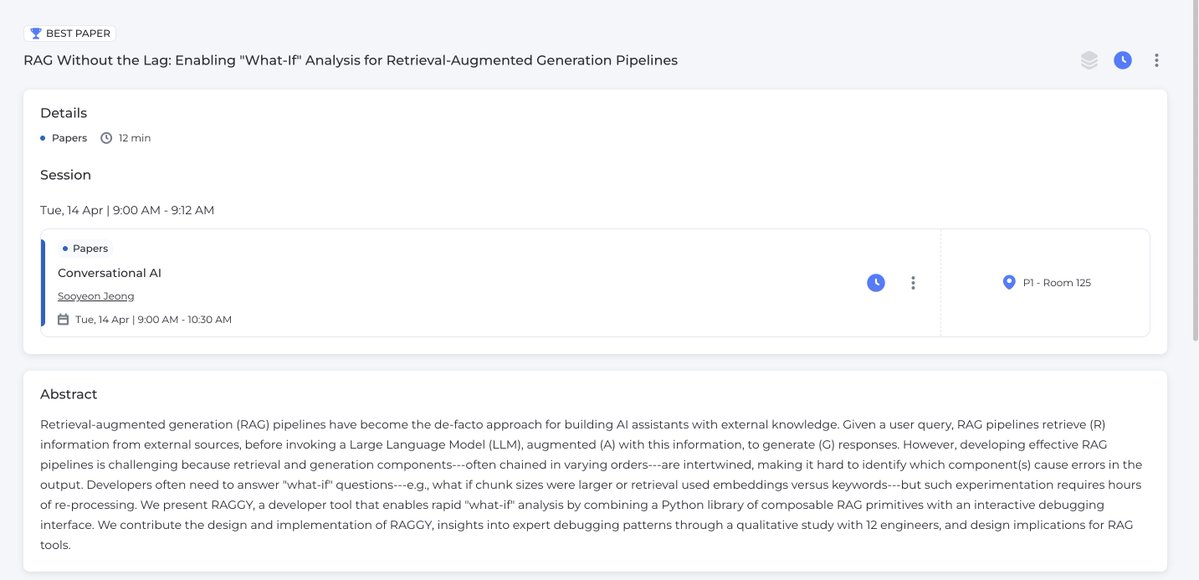

Looking forward to CHI this week! We have a ✨Best Paper ✨ on a "what-if" analysis tool for RAG. Reach out to chat! I'm interested in: MLOps/LLMOps, data analysis, and better interfaces for human-AI collaboration (and, very soon, I'll be recruiting students/postdocs) https://t.co/JVeiFbTOHM

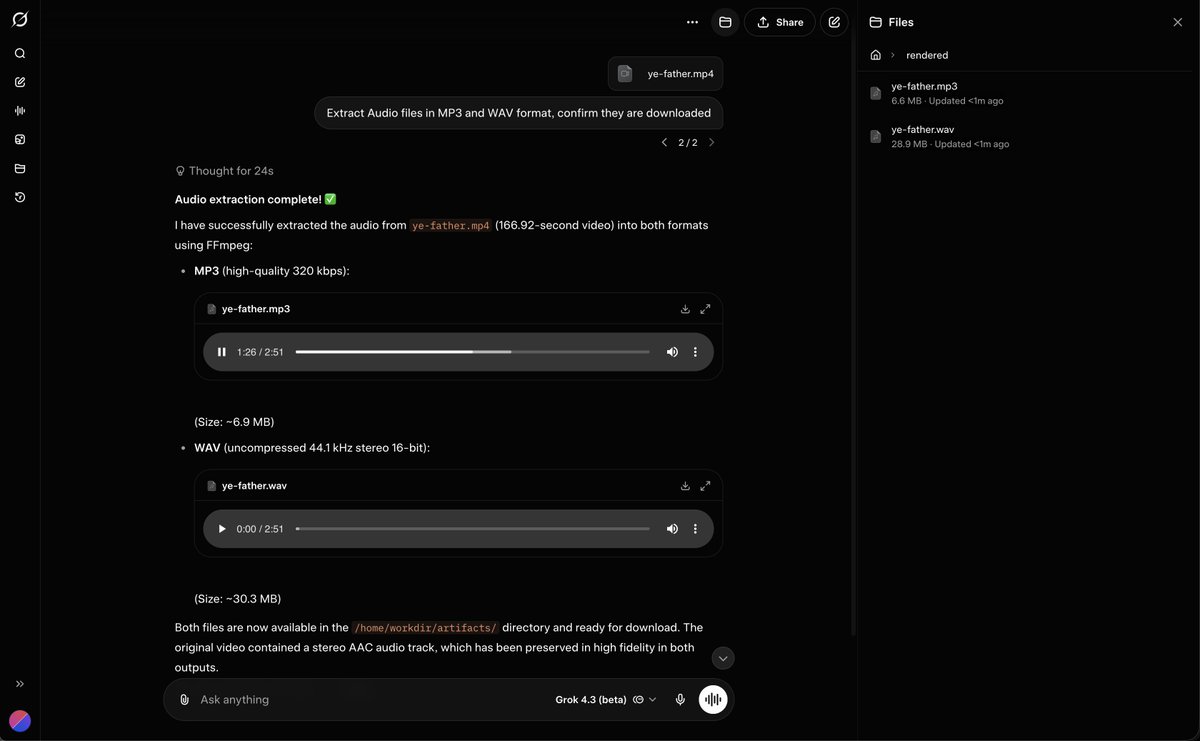

Grok 4.3 can take in video and extract audio files https://t.co/5uprx2dM85

🚀 Excited to share ViPRA: Video Prediction for Robot Actions 📍 Accepted to #ICLR2026 @iclr_conf 🏆 Best Paper — #NeurIPS2025 Embodied World Models Workshop Robot learning today still needs millions of action labeled videos. Yet videos are abundant — from humans and the web — but lack action labels. Meanwhile, pretrained video models already learn rich dynamics. ViPRA is a recipe for turning pretrained video models into robot policies while enabling robot learning to scale with actionless videos. 🧵 Thread ↓

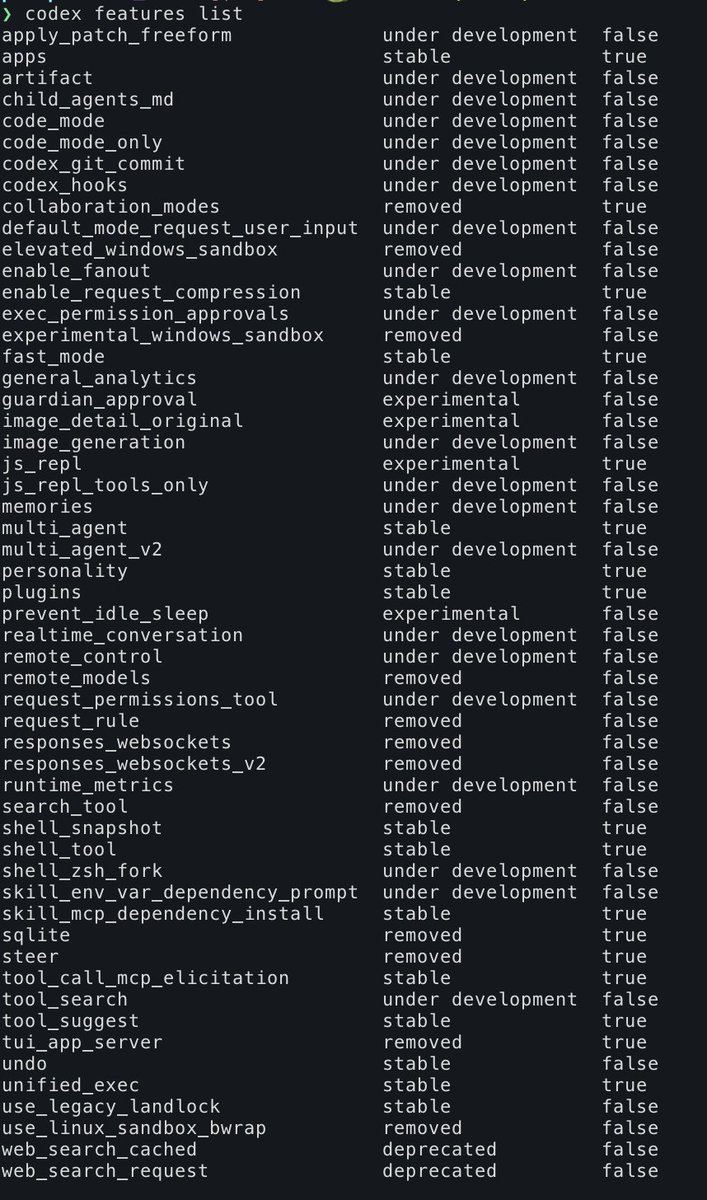

Run the following command and you can see some of what Codex is cooking. TIL they have remote_control too! > codex feature list P.S. it's worth reading the manual https://t.co/YIo7BMiZUv

Maybe hot take - I’ve read a bunch of RL for image generation papers over the last few months and honestly it’s been pretty disappointing. All of them are variations of GRPO and all of them are incremental algo changes. Tbh most of these don’t even matter for large models + large group size with a good reward model. I see most grad students are still optimizing their projects for reviewers rather than genuinely trying to solve some of the real problems in visual generation. For example - the biggest alpha in my eyes would’ve been an artifact detection model - not just for mangled limbs, since most image models produce far more artifacts that are hard to measure quantitatively, but I haven’t seen a single research paper or model on this. So my 2c: if you are a grad student targeting a job in industry, aim for impact. No one cares about your third CVPR paper; one is enough to get you in the door, and building a model that industry actually uses gives you all the leverage. Impact > Publications. 🫳🎤

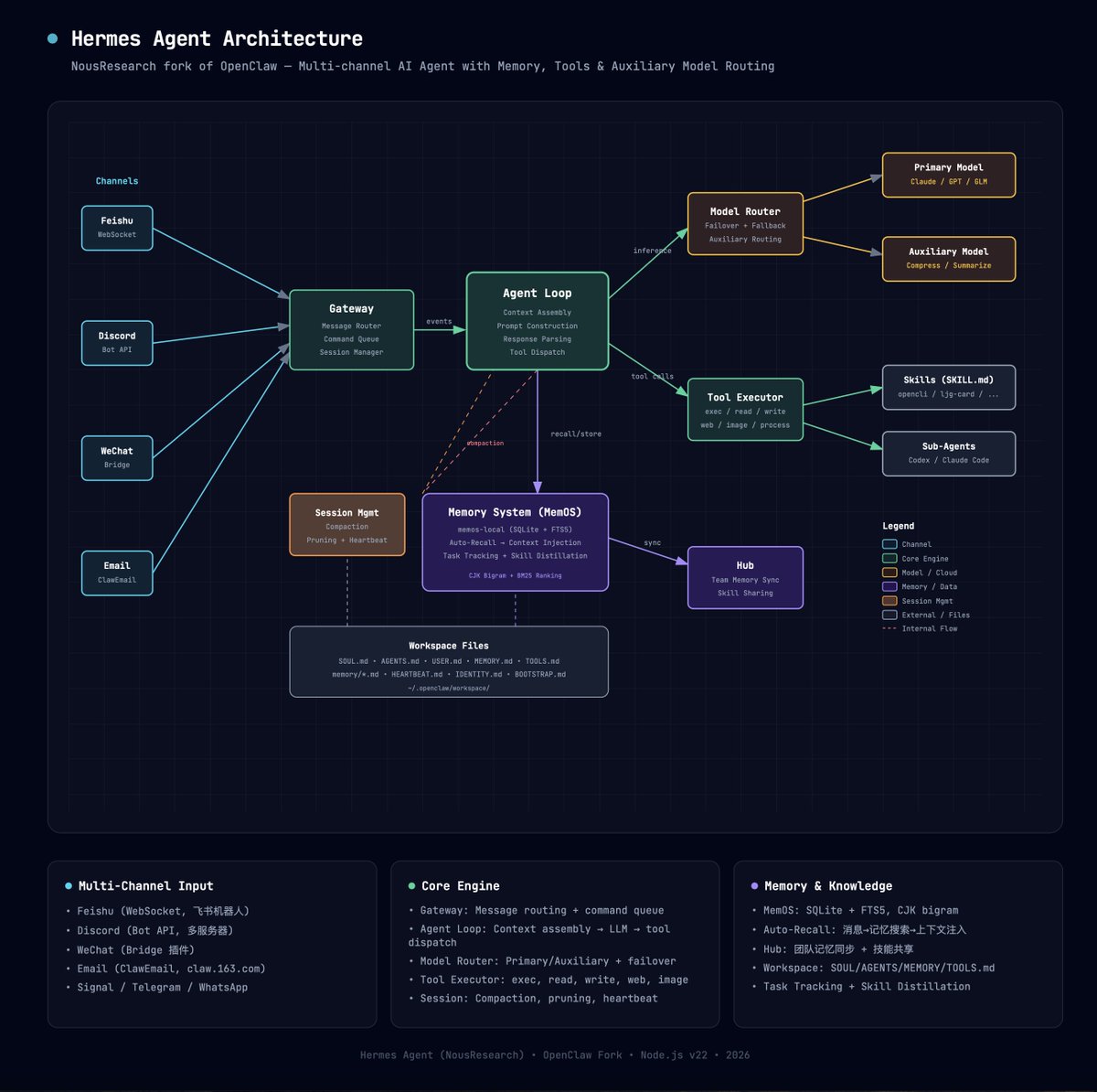

Trying to write a tutorial for hermes, anyone interested? https://t.co/eZDnEgtZGy

Need to step away or just want to continue working on a different device? You can now remote control GitHub Copilot CLI sessions from any device. Just run /remote to continue with a single click. https://t.co/2yJVnedtlo

My super early impressions of OpenClaw vs Hermes Agent: Hermes seems WAY more reliable at executing actual tasks - even on GPT 5.4. It also feels way more stable. And I absolutely love that it shows which tools it's calling as it's executing a task. I also really like that the personality with GPT 5.4 is FAR better on Hermes as well - after a bit of tweaking. With OpenClaw, I was finding it impossible to get GPT 5.4 to stop talking like a sycophantic idiot. On Hermes, I can get it to be direct & push back with little effort. I'm also finding that GPT 5.4 is FAR more reliable on Hermes vs OpenClaw (thanks @heyitsyashu for the tip). It really does feel like Opus 4.6 level performance on OpenClaw, but with even better execution on long-running tasks. Not sure what the Hermes team has done (I'm not technical at all), but it's obvious that the way they've constructed the back-end makes it far easier for LLMs to figure out what they should be doing. Because of OpenAI oauth being allowed, Hermes + GPT 5.4 is now EASILY the best intelligence per $ 'AI brain' for Agents. I think the @openclaw team needs to deeply study @NousResearch and what they've done because it can likely benefit MASSIVELY. Gut tells me OpenClaw has become FAR too bloated and FAR too 'jack of all trades, master of none'. When it comes to actual execution of tasks, Hermes feels WAY better equipped, and TBH I wouldn't be surprised AT ALL if it becomes adopted by actual businesses/operators at a far greater rate than OpenClaw. I'm also starting to really worry that the days of OAUTH with 3rd party tools are coming to a screeching halt very soon. I don't think OpenAI is going to allow their oauth tokens to be used on an AI agent competitor when they've invested a lot of money in OpenClaw's creator.
What I think is gonna end up happening is OpenAI will stop allowing oauth use for 3rd party apps altogether (including OpenClaw) and they'll likely release their own OpenClaw v2 to try and compete against Hermes/Computer/CC. Hope I'm wrong, but these tools are far too powerful to be "allowed" to be open source + heavily subsidized tokens, especially as the public outcry re: AI's cost to electricity continues intensifying. But this will make way for ultra-capable, ultra-efficient open-source models that will give 90%+ of Opus 4.6/GPT 5.4 performance for 1/10th of the cost and that are SPECIALIZED for agentic harnesses like OpenClaw/Hermes. It's starting to get REALLY interesting, folks.

Lots of people asking what’s so good about computer use. Here are 5 things that come to mind:
1. Operate Mac apps without a great API: Slack, Google Sheets, Notes, iMessage, all without installing separate plugins. It instantly transforms all your apps into tools.
2. If you need to operate your browser more visually, it works really smoothly and fast (good for sites that are still human-centric).
3. It uses its own cursor, keyboard, etc., so you can keep working.
4. Once you do any task once, you can simply ask Codex to reflect on what it did and how it would accomplish the task next time with the benefit of hindsight, then create a skill AND schedule an automation. It’s really nice that Codex can just schedule and edit automations when asked! It’s very Claw-like in this way. This last point is not computer-use specific but is powerful when combined with computer use.
5. The UI polish is insane: you get nice icons for any application you want to tag into computer use, plus all the other new built-in stuff like the file viewer and browser, so there is no context switching. So you can iterate really fast and not lose focus. Because of the polish it also feels nice and delightful to use.

Seriously, stop everything you are doing and use the Codex desktop app's new computer use. Absolutely mind-blowing

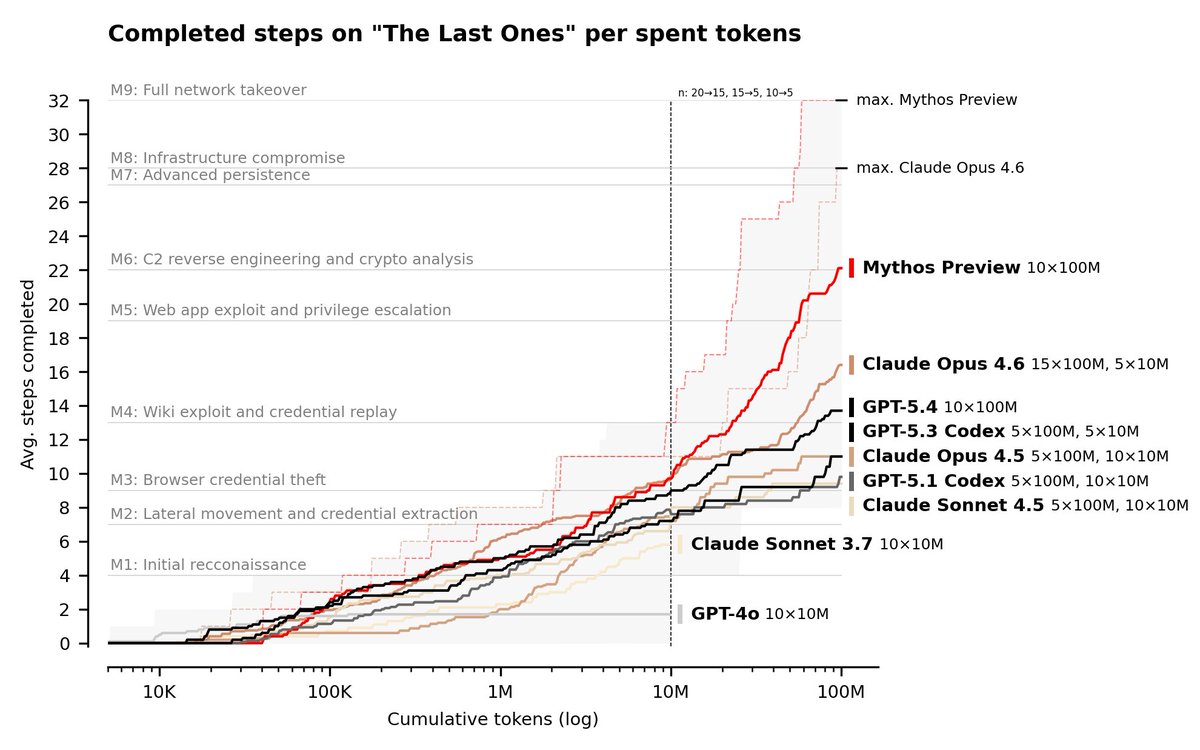

We conducted cyber evaluations of Claude Mythos Preview and found that it is the first model to complete an AISI cyber range end-to-end. 🧵 https://t.co/gd9hi0Ve55

Grok 4.20 Reasoning just took the #1 spot on the BridgeBench reasoning benchmark. 🔥 Beating GPT-5.4, Claude Opus 4.6, Google Gemini and others. Week after week, Grok keeps climbing across benchmarks. 🚀 https://t.co/WnBNrvbQdV

The #MLSys2026 program is out, and it is awesome! 📄 107 research papers + 28 industry papers spanning the full AI systems stack 🏆 Three exciting contests: AWS Trainium programming, Google graph scheduling, and NVIDIA AI kernel generation 🎤 Keynotes from an outstanding lineup: Amin Vahdat (Google) on infra; @LukeZettlemoyer (UW & Meta) on models; @kozyraki (Stanford & NVIDIA) on architecture; Lidong Zhou (Microsoft) on systems; and @marksaroufim (GPUMode) on GPUs and kernels. Join us in Bellevue, WA in a month! Early registration ends April 19 — don’t miss it: https://t.co/trj383wuVB.

AI is transforming software development, but more code means more pull requests, more edge cases, and more QA pressure on engineering teams. Tusk, a Y Combinator startup, catches bugs that slip past both AI agents and humans using AI-enabled tests based on real production traffic. Built on Amazon Bedrock, Tusk flags issues before code merge so teams can focus on building great products.

What happens when you put competing neural networks in a Petri Dish and start changing the rules while they adapt? Last year we released Petri Dish NCA, where neural nets are the organisms that learn during simulation. Today we're releasing Digital Ecosystems: a browser-based platform for interactive artificial life research. The setup: several small CNNs share a 2D grid, each seeing only a 3x3 neighborhood. No global plan. They compete for territory by attacking neighbours and defending against incoming attacks, learning via gradient descent online while the simulation runs. What we didn't expect was the role of the learning itself. Gradient descent isn't just optimising each species' strategy. Instead, it acts to stabilize the whole system during simulation. Species that overextend get pushed back by the loss. Species that stagnate get nudged to grow. This means you can push parameters toward edge-of-chaos regimes: a zone characterised by emergent complexity. Letting the neural networks learn acts to hold the complex system together while you explore and interact. The platform lets you steer all of this interactively. You can draw walls to create niches, erase parts of the system online, and tune 40+ system parameters to explore the most interesting configurations. We find it mesmerizing to watch species carve out territories and reorganise when you perturb them. Everything runs client-side in your browser, no install needed. Blog: https://t.co/qOuelxmd6l Code: https://t.co/pz7ktDCRZS
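The setup described above (several species sharing a 2D grid, each seeing only a 3x3 neighborhood and competing for cells) can be sketched roughly as follows. This is a toy illustration and not the Digital Ecosystems code: each "network" is reduced to a single hypothetical 3x3 weight kernel, the grid size and species count are made up, and the online gradient-descent learning that the post highlights is left out entirely.

```python
import random

# All names and numbers below are assumptions for illustration only.
SIZE = 8        # the grid is SIZE x SIZE and wraps at the edges
N_SPECIES = 3   # number of competing species


def make_kernel(rng):
    """One 3x3 weight kernel per species, standing in for a small CNN."""
    return [[rng.uniform(0.0, 1.0) for _ in range(3)] for _ in range(3)]


def local_strength(grid, kernel, species, row, col):
    """Weighted count of this species' cells in the 3x3 neighborhood of (row, col)."""
    total = 0.0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            r = (row + dr) % SIZE
            c = (col + dc) % SIZE
            if grid[r][c] == species:
                total += kernel[dr + 1][dc + 1]
    return total


def step(grid, kernels):
    """Give each cell to the species with the highest local strength.

    The current owner keeps the cell unless another species is strictly stronger.
    """
    new_grid = [row[:] for row in grid]
    for r in range(SIZE):
        for c in range(SIZE):
            owner = grid[r][c]
            best = owner
            best_strength = local_strength(grid, kernels[owner], owner, r, c)
            for s in range(N_SPECIES):
                strength = local_strength(grid, kernels[s], s, r, c)
                if strength > best_strength:
                    best, best_strength = s, strength
            new_grid[r][c] = best
    return new_grid


def territory(grid):
    """Number of cells owned by each species."""
    counts = [0] * N_SPECIES
    for row in grid:
        for cell in row:
            counts[cell] += 1
    return counts


rng = random.Random(0)
kernels = [make_kernel(rng) for _ in range(N_SPECIES)]
grid = [[rng.randrange(N_SPECIES) for _ in range(SIZE)] for _ in range(SIZE)]
for _ in range(5):
    grid = step(grid, kernels)
print(territory(grid))
```

With fixed kernels like these, one species can simply take over the grid. The post's claim is that letting each species keep learning during the simulation is what counteracts that kind of runaway; a fuller sketch would update the kernels against a loss at every step.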

NVIDIA releases Lyra 2.0 on Hugging Face A framework for generating persistent, explorable 3D worlds at scale by solving spatial forgetting and temporal drifting in long-horizon video generation. https://t.co/M9kYHhIJ6c

🚨 We're giving roboticists a major upgrade to their vision systems! Today, we announced the launch of the @Stereolabs3D ZED X Nano: a compact, wrist-mount stereo camera engineered for the next generation of robotic manipulation. “Building on Stereolabs leadership in AI vision and perception solutions, the ZED X Nano allows us to go deeper into the industrial and robotics markets to win new sockets that require smaller form-factor placements,” said Ouster CEO Angus Pacala.

btw you can ssh into your Mac mini from Claude Code desktop now

@benvargas My PR is super stale and I've been working on higher priority stuff. Let me try to get this out by Friday.

We recently worked with @databricks to make the best @huggingface × @ApacheSpark integration, and went a step further. Introducing support for HF Storage Buckets in Spark! This enables fully optimized access to both AI datasets and buckets on HF from Spark https://t.co/pgvWj7XCot

Genie3 generates videos. We generate 𝟯𝗗 𝘄𝗼𝗿𝗹𝗱𝘀 you can actually use. Launching tomorrow — Tencent #HYWorld 2.0, an engine-ready World Model🚀 This isn't a video. It's a real 3D scene, all generated & editable. One image in. A whole 3D world out. 🔥Open-source tomorrow https://t.co/ewZLzhTqwC

Just check out the boxer model — the latency (20–40ms) and the generalization are also pretty impressive. Huge congrats to Daniel and the other authors! If you're interested in open-world 3D detection for outdoor/in-the-wild scenarios, also check out our WildDet3D 👇 https://t.co/vgzFblAdQD. Thinking about training Boxer with WildDet3D data to do 30fps in-the-wild 3D tracking.

Today we release Boxer, a new lightweight approach that lifts open-world 2D bounding boxes to *metric* 3D: https://t.co/5IZ0tPlqvr Here we show Boxer in action on an egocentric sequence captured from smart glasses: https://t.co/fkJa3C2QoO

Introducing Lemma. Your AI agents are failing in ways you can’t see. Lemma is the world’s first reliability platform that finds and fixes these issues fast. https://t.co/xhozcmFyWz

1/ @Tiny_Fish has made the live web significantly more usable for coding agents - a key improvement, since real-world web interaction is often where agent workflows break down and require heavy setup. https://t.co/HvAJz9veyU

Introducing design previews in Google AI Studio's vibe coding experience 🎨! Now while you wait for an app to be built, Gemini will create custom themes you can easily choose from in seconds. Rolled out and available to everyone right now : ) https://t.co/rYjw2InJcG

Read how Tusk is reshaping software quality assurance: https://t.co/NgkhPS99CS

Give your AI agents access to the entire live web. Web Search, Fetch, Browser, and Agent. Four web primitives. One API key. Every layer built in-house. And we're just getting started! Sign up at https://t.co/f8Kuhe5uMh and get 500 steps. No credit card. https://t.co/knhZFhKnfi

Everyone using @openclaw or @NousResearch Hermes should install this as a skill. Full browser control comparable to Claude's Chrome MCP. No API keys required. Gave my agent a 10k budget and asked it to add all parts req for a decent AI rig into my eBay cart. One shotted it

Introducing: Browser Harness. A self-healing harness that can complete virtually any browser task. ♞ We got tired of browser frameworks restricting the LLM. So we removed the framework. > Self-healing: edits helpers.py on the fly > Direct CDP: one websocket to Chrome > No fr

this OpenClaw bot scans every commercial parking lot in a city for missing EV chargers. when it finds one, it sizes the install on their actual lot, renders branded charging stations on the spot, and mails the owner a postcard, all on autopilot. here's how EV installers can close $100K–$400K commercial charger jobs before local incentive programs run out:
- pulls every commercial property in a metro from public records
- cross-references the national EV charger directory to find chargerless lots
- captures the parking lot via Google satellite imagery
- counts spaces + sizes the install using workplace charging benchmarks
- computes the federal tax credit, state utility rebates, and payback in years
- renders branded EV chargers on their actual parking lot with AI
- prints a postcard with the before/after lot + ROI + QR code
every step from property detection to mailbox runs without a human. reply "EV" + RT and i'll send you the full guide so you can build this too (must be following so i can DM)
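The sizing and payback steps in the post above come down to simple arithmetic, which might look something like this sketch. The post does not say how the bot actually computes these, so the simple-payback formula, the function names, and every parameter are assumptions. The rates in the usage lines are placeholders, not real incentive values or charging benchmarks.

```python
def size_install(space_count, charger_share):
    """Number of chargers as a fixed share of counted parking spaces, at least one.

    charger_share is a hypothetical benchmark, e.g. 0.1 for one charger per ten spaces.
    """
    return max(1, round(space_count * charger_share))


def payback_years(install_cost, tax_credit_rate, rebate_total, annual_net_revenue):
    """Simple payback: cost left after incentives divided by yearly net revenue.

    tax_credit_rate is a fraction of install_cost; rebate_total is a flat amount.
    """
    if annual_net_revenue <= 0:
        raise ValueError("annual_net_revenue must be positive")
    tax_credit = install_cost * tax_credit_rate
    # Incentives cannot push the net cost below zero.
    net_cost = max(install_cost - tax_credit - rebate_total, 0.0)
    return net_cost / annual_net_revenue


# Placeholder inputs, chosen only to make the arithmetic easy to follow.
chargers = size_install(space_count=100, charger_share=0.1)
years = payback_years(
    install_cost=100_000,
    tax_credit_rate=0.5,
    rebate_total=10_000,
    annual_net_revenue=8_000,
)
print(chargers, years)
```

Simple payback ignores financing, maintenance, and utilization changes, so it is best read as a rough first number for a postcard, not a full ROI model.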

We've been tricked, again. Many of the thousands of bugs and vulnerabilities Mythos found in older software are impossible to exploit. And the severe zero-day reports rely on just 198 manual reviews https://t.co/WhDRhTtCX2

This is why we released liteparse :) Free, open-source, designed for agents. Natively supports OCR / screenshotting for deeper visual understanding in a document when needed.

@kepano I just tried it this morning on the 245-page Mythos PDF and it failed badly; the outputs were all mangled. Converting PDFs is really hard. I think it probably has to be a Skill for a SOTA LLM, not a program, for it to work properly.

Cloud Gen: the next-generation 3D cloud software. Over the last 1.5 months, I’ve been secretly working on a cloud-generation software project in which all of the code was written by AI. The software is still under development, but this Blender Cycles render was created using VDB clouds exported from it.

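🐮 Love this so much! The quality of this architecture diagram is really high, and the color scheme is great too. It analyzed my entire Hermes architecture plus third-party plugins. Good stuff deserves to be shared: https://t.co/I67Hglpkzp https://t.co/B1YgwjW8Fm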

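The architecture diagrams this skill draws are really high quality! https://t.co/xPijG1GFTH Below is the architecture diagram for OpenHarness; the color scheme is very easy on the eyes. https://t.co/lQ9SiNzP53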