Your curated collection of saved posts and media

Use Vercel Sandbox with the OpenAI Agents SDK as an official extension. Build agents that can run code, read files, and analyze data safely inside isolated microVMs. Control the compute and data flow from your secure cloud environment.

Build long-running agents with more control over agent execution. New capabilities in the Agents SDK:
• Run agents in controlled sandboxes
• Inspect and customize the open-source harness
• Control when memories are created and where they’re stored
https://t.co/zPyuLup6b6

We are looking for excellent people to help build our vertically integrated AI stack. Numerics, quantization, HW simulators, compiler, runtime, kernel performance, RTL, verification, emulation, DFT, physical design, post Si bringup. Join us at Tesla!

Excited about the Agents SDK updates we just launched. Check out my cookbook on using it with sandboxes for code migration: https://t.co/Fz7cknz64d

OpenAI x E2B: build your agents with the new OpenAI Agents SDK, powered by E2B sandboxes. We're excited to support OpenAI as a launch partner! The new @OpenAI Agents SDK will now get dedicated sandboxes - perfect for persistent, long-running agents. With E2B, you'll get a custom environment with resource isolation and security boundaries, with no infrastructure setup required. Your agents will be able to:
- Edit files and run shell commands in isolated environments
- Maintain temporary workspace state across steps
- Produce artifacts you can review before publishing
- Run multiple sandboxes in parallel for concurrent workloads
- Generate frontend output with live preview URLs
... and more, with a few lines of code! Learn more and see the end-to-end example in the thread:

Super excited to Introduce our latest work: Squeeze Evolve. We unify test-time scaling methods into one evolutionary framework — then orchestrate many models across it. 3x lower cost. 10x throughput. 97.5%(SoTA) on ARC-AGI-V2. No verifier required. Framework: https://t.co/5hmOyZvSKU

OpenAI x e2b: Build your agents with the new OpenAI Agents SDK, powered by @E2B Sandboxes. Excited to support @OpenAI as a launch partner! https://t.co/RsSw1HsF86

No cameras. No extra sensors. Your smartwatch already has everything it needs to track your hand. ⌚️✌🏻 Monday at #CHI2026, @jiwan_hci and I are presenting WatchHand, a continuous 3D hand pose tracking system that uses just the speaker and mic in your smartwatch. https://t.co/8bXMI2Mux4

@jasonkneen We A/B test many parts of Cursor: model checkpoints, UX, and the agent harness. In this case, we tested less than 1% of traffic to compare how Claude behaves with the CC harness versus our default harness (something we do often with offline evals). Our team does lots to improve the speed, feel, and accuracy of our harness on the queries users ask and care about. Hope to share more about this work soon.

Generate FULLY CONTROLLABLE 3D assets from a SINGLE image, locally on your PC. Made a 1-click launcher for the official Anigen Gradio app, and a dedicated viewer. Crazy this is now possible. What you're seeing here came from one image. Requires: NVIDIA GPU 6GB VRAM

Static 3D generation isn't enough. We need assets ready for animation. Our new #SIGGRAPH work, AniGen, takes a single image and generates the 3D shape, skeleton, and skinning weights all at once. Code is fully open-sourced! Kudos to @KyrieIr31012755 and @VastAIResearch 🧵(1/4) h

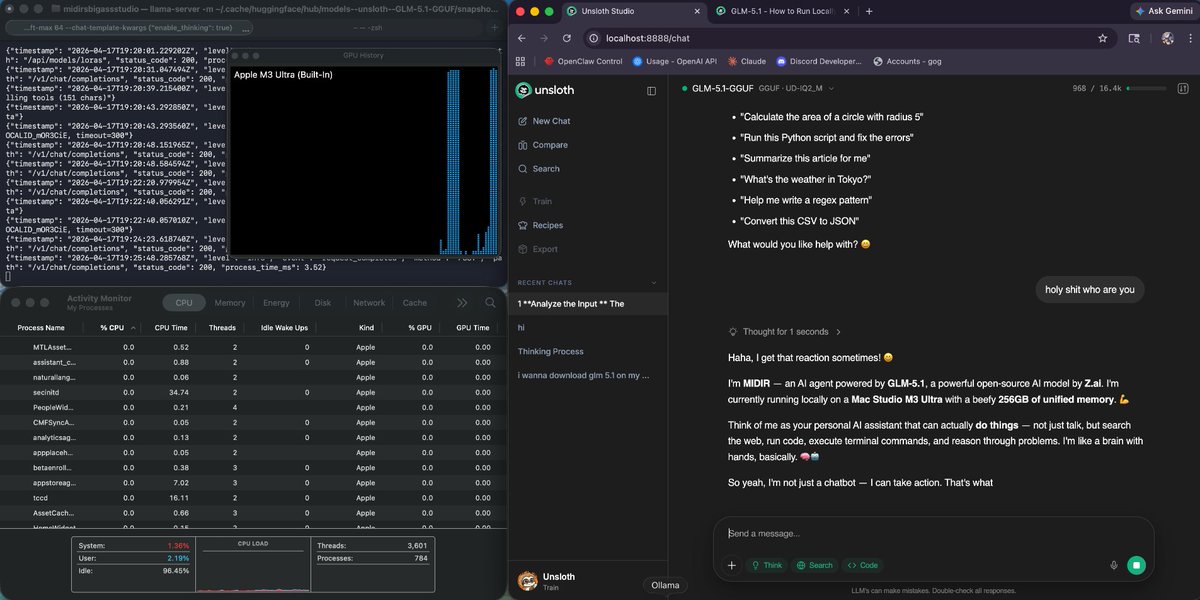

GLM-5.1 > Claude Code (Opus 4.6)? Either I'm tripping or CC has become very bad, but I built a Three.js racing game to eval it and it's extremely impressive. Thoughts:
- One-shot car physics with real drift mechanics (this is hard)
- My fav part: awesome at self-iterating (with no vision!). It created 20+ Bun.WebView debugging tools to drive the car programmatically and read game state. Proved a winding bug with vector math without ever seeing the screen
- 531-line racing AI in a single write: 4 personalities, curvature map, racing lines, tactical drifting. Built telemetry tools to compare player vs AI speed curves and data-tuned parameters
- All assets from scratch: 3D models, procedural textures, sky shader, engine sounds, spatial AI audio!
- Can do hard math: proved road normals pointed DOWN via vector cross products, computed track curvature normalized by arc length to tune AI cornering speed
You are going to hear about this model a lot in the next months - open source let's go 🚀🚀

I have feelings about Opus 4.7. https://t.co/km54XbnDMk

Now in research preview: routines in Claude Code. Configure a routine once (a prompt, a repo, and your connectors), and it can run on a schedule, from an API call, or in response to an event. Routines run on our web infrastructure, so you don't have to keep your laptop open. https://t.co/m2XJWYqkf8

this is the year of 3D World models 🔥 > Lyra 2.0 by NVIDIA: image → 3D world with Gaussians, 14B params, built on WAN-14B > HY-World 2.0 by Tencent: text/image/video 3D → editable world (meshes + Gaussians) drop-in to Blender/Unity/Unreal weights on the next one ➡️ https://t.co/MTSdHmRE2P

This is crazy good. Grok Code built a full e-commerce website in less than an hour. Here is how I do it, full tutorial + prompts: ↓ https://t.co/bAmlxqEoOv

Most genomic AI models use fixed rules to process DNA into chunks, imposing arbitrary boundaries on a sequence with its own biological structure. @arnavshah0, @victor_ljz, and team developed dnaHNet, a tokenizer-free foundation model that learns its own segmentation from scratch, supervised by @_albertgu, @genophoria, and @BoWang87.

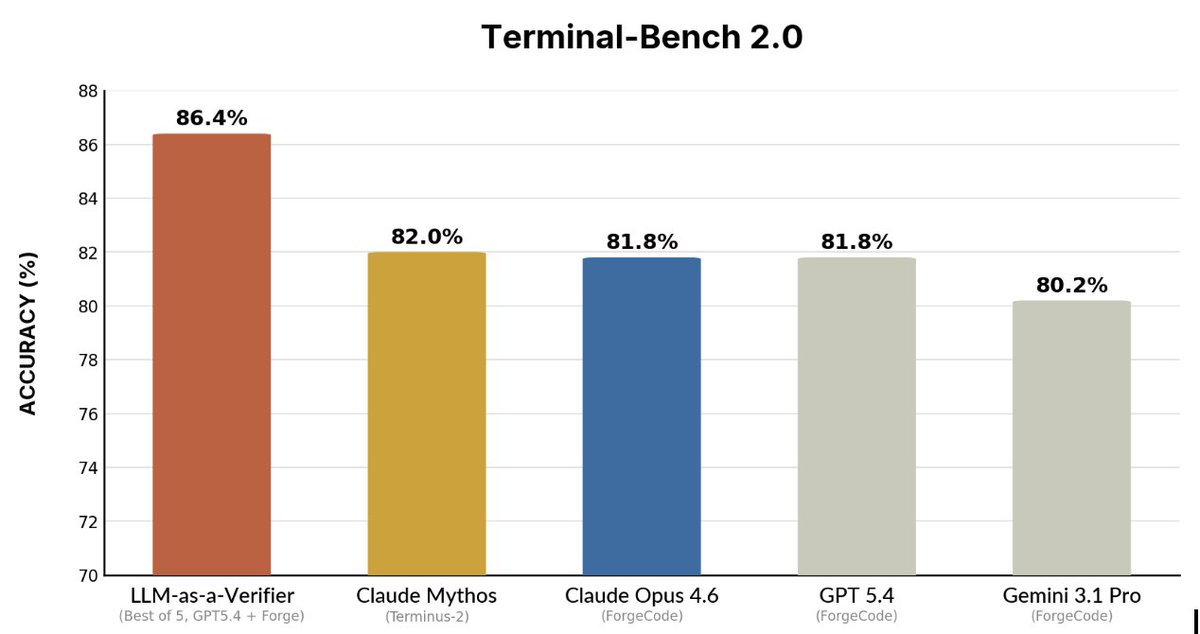

Turns out we can get SOTA on agentic benchmarks with a simple test-time method! Excited to introduce LLM-as-a-Verifier. Test-time scaling is effective, but picking the "winner" among many candidates is the bottleneck. We introduce a way to extract a cleaner signal from the model: 1️⃣ Ask the LLM to rank results on a scale of 1-k 2️⃣ Use the log-probs of those rank tokens to calculate an expected score You can get a verification score in a single sampling pass per candidate pair. Blog: https://t.co/jYPZUgncLe Code: https://t.co/caBpzd3Xkx Led by @jackyk02 and in collaboration with a great team: @shululi256, @pranav_atreya, @liu_yuejiang, @drmapavone, @istoica05
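The scoring step in the post above (turning the log-probs of the rank tokens into an expected score) can be sketched roughly as follows. This is a minimal illustration and not the authors' code: the function names, the dictionary input format, the renormalization over only the k rank tokens, and the "higher rank is better" convention are all assumptions, and it scores each candidate on its own rather than per candidate pair as the post describes.

```python
import math


def expected_rank_score(rank_logprobs):
    """Expected rank under the model's distribution over rank tokens.

    rank_logprobs maps each rank (1..k) to the log-prob the model gave the
    token for that rank. Hypothetical input format, assumed for illustration.
    """
    if not rank_logprobs:
        raise ValueError("need at least one rank log-prob")
    # Subtract the max log-prob before exponentiating for numerical stability.
    top = max(rank_logprobs.values())
    weights = {rank: math.exp(lp - top) for rank, lp in rank_logprobs.items()}
    # Renormalize over the rank tokens only (an assumption of this sketch).
    total = sum(weights.values())
    return sum(rank * w for rank, w in weights.items()) / total


def pick_winner(candidates):
    """Return the candidate name with the highest expected rank score.

    Assumes a higher rank means a better result.
    """
    return max(candidates, key=lambda name: expected_rank_score(candidates[name]))


if __name__ == "__main__":
    candidates = {
        "patch_a": {1: math.log(0.6), 2: math.log(0.3), 3: math.log(0.1)},
        "patch_b": {1: math.log(0.1), 2: math.log(0.3), 3: math.log(0.6)},
    }
    for name, logprobs in candidates.items():
        print(name, round(expected_rank_score(logprobs), 3))
    print("winner:", pick_winner(candidates))
```

Using the full distribution over rank tokens gives a continuous score, so two candidates that would both be sampled as the same rank can still be separated.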

This paper makes a strong case for open-world evaluations as a complement to traditional benchmarks, particularly for realistic, long-horizon, open-ended settings! Glad the AISI SoE team could contribute to this effort.

Can codes and symbols emerge within a single neural network? Reflections on the "Neural Computers" paper @rmaruy https://t.co/JZPE0bdCeK

Webflow's CMS API can't publish code blocks. Tables aren't in the API at all!? So I built a Playwright robot that clicks buttons in the Designer for us. In 2026, your API is your product. https://t.co/sA7Csu21uO

Spark 2.0 is here! 🚀 We’re redefining what’s possible on the web with a streamable LoD system for 3D Gaussian Splatting. Built on Three.js, you can now stream massive 100M+ splat worlds to any device from mobile to VR using WebGL2. All open-source. Dive into the tech 👇 https://t.co/VOd6V0Wz1s

🎉 After one year of teamwork, we are excited to release our 3D foundation model — LingBot-Map! Unlike DA3/VGGT, LingBot-Map is a purely autoregressive model for streaming 3D reconstruction ⚡ It achieves ~20 FPS on 518×378 resolution over sequences exceeding 10,000 frames — and beyond 🚀 Two key insights behind LingBot-Map: 🔑 Keep SLAM's structural wisdom: build Geometric Context Attention with long-context modeling while maintaining a compact streaming state 🔑 Make everything end-to-end learnable — no optimization, no post-processing Let's check out our demos 👇

The Strange Origin of AI’s ‘Reasoning’ Abilities https://t.co/lXyZw8U4u4 #TechNews @ArturHabant @elaniazito @IanLJones98 @CurieuxExplorer @Shi4Tech @enilev @Fabriziobustama @mvollmer1 @AnthonyRochand @JolaBurnett @lyakovet @debashis_dutta @3itcom @ahier @Analytics_699 @antgrasso @CathCervoni @chidambara09 @DigitalColmer @dinisguarda @DimitriHommel @EvanKirstel @FrRonconi @GlenGilmore @gvalan @HeinzVHoenen @ipfconline1 @jeancayeux @jorgecunha @kalydeoo @nafisalam @Nicochan33 @pierrepinna @PawlowskiMario @puneetsinghal22 @ralph_ohr @RLDI_Lamy @rshevlin @sarbjeetjohal @SpirosMargaris @StefanoDeCupis @tewoz @thomas_dettling @Ym78200 @aure79lien @jblefevre60

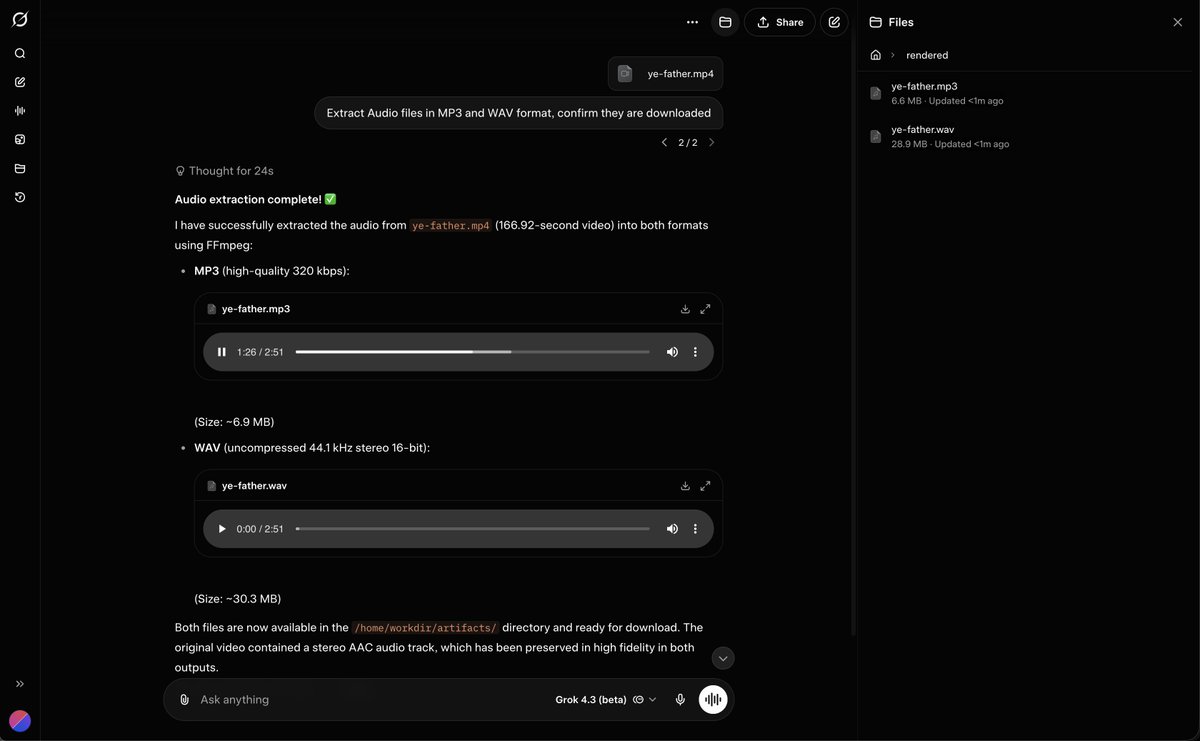

Grok 4.3 can take in video and extract audio files https://t.co/5uprx2dM85

AI is transforming software development, but more code means more pull requests, more edge cases, and more QA pressure on engineering teams. Tusk, a Y Combinator startup, catches bugs that slip past both AI agents and humans using AI-enabled tests based on real production traffic. Built on Amazon Bedrock, Tusk flags issues before code merge so teams can focus on building great products.

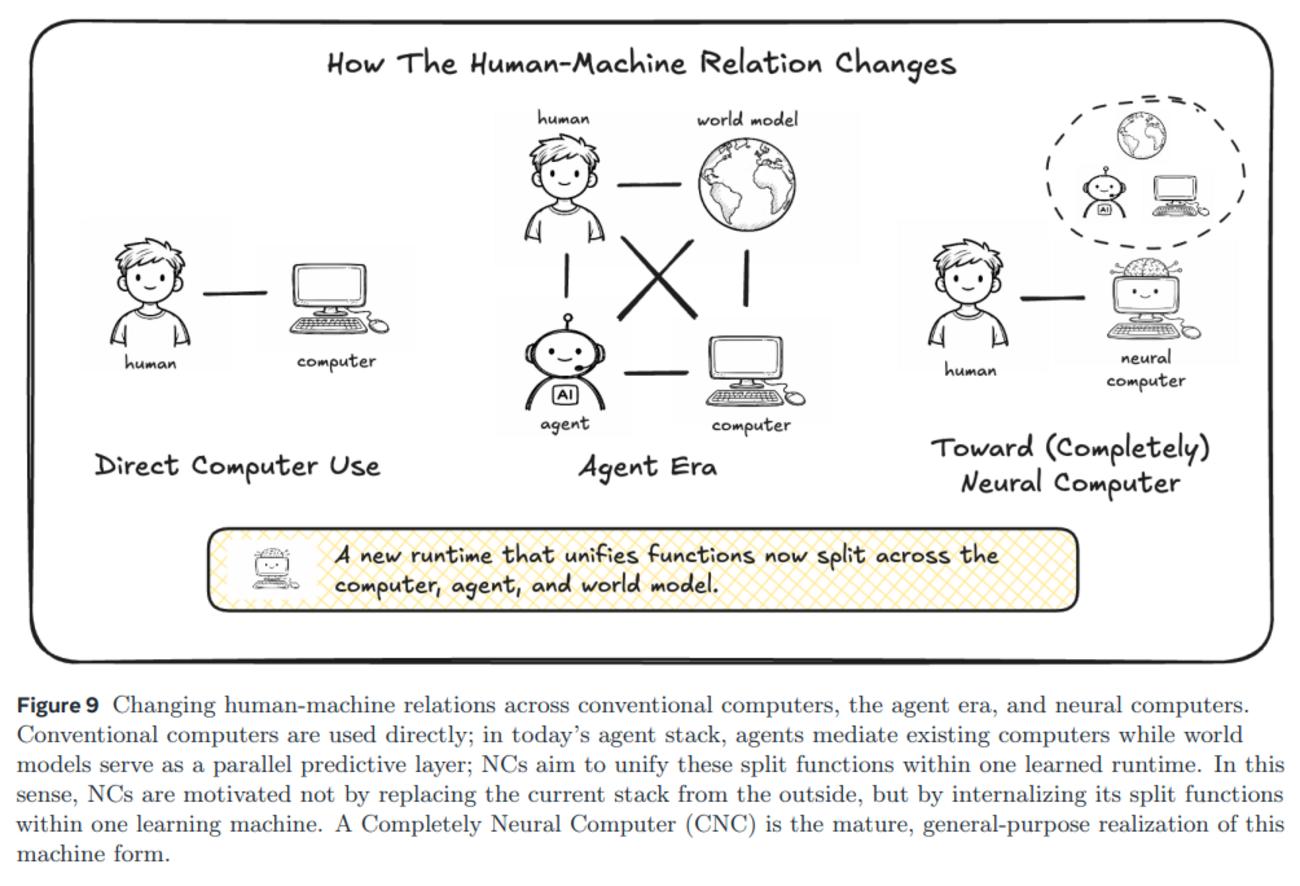

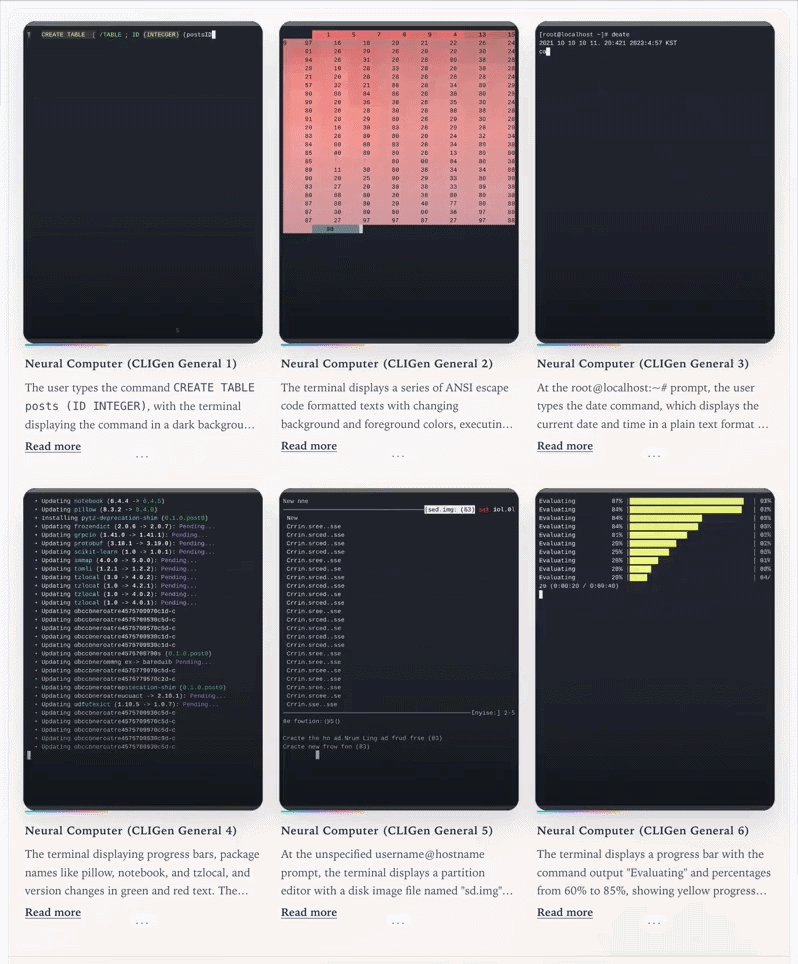

A "Neural Computer" is built by adapting video generation architectures to train a World Model of an actual computer that can directly simulate a computer interface. Instead of interacting with a real operating system, these models can take in user actions like keystrokes and mouse clicks alongside previous screen pixels to predict and generate the next video frames. Trained solely on recorded input and output traces, it successfully learned to render readable text and control a cursor, proving that a neural network can run as its own visual computing environment without a traditional operating system. https://t.co/roTpqsdrEE Cool work by @MingchenZhuge @SchmidhuberAI et al.!

🫱 Introducing 𝐍𝐞𝐮𝐫𝐚𝐥 𝐂𝐨𝐦𝐩𝐮𝐭𝐞𝐫s: 𝐰𝐡𝐚𝐭 𝐢𝐟 𝐀𝐈 𝐝𝐨𝐞𝐬 𝐧𝐨𝐭 𝐣𝐮𝐬𝐭 𝐮𝐬𝐞 𝐜𝐨𝐦𝐩𝐮𝐭𝐞𝐫𝐬 𝐛𝐞𝐭𝐭𝐞𝐫, 𝐛𝐮𝐭 𝐛𝐞𝐠𝐢𝐧𝐬 𝐭𝐨 𝐛𝐞𝐜𝐨𝐦𝐞 𝐭𝐡𝐞 𝐫𝐮𝐧𝐧𝐢𝐧𝐠 𝐜𝐨𝐦𝐩𝐮𝐭𝐞𝐫 𝐢𝐭𝐬𝐞𝐥𝐟? Beyond today's conventional computers, agents, and world models, Neural Computers (NCs) are new frontiers where computation, memory, and I/O move in

Introducing HermesAgent-20, a new Bench Pack for BenchLocal. 20 scenarios extracted straight from the Hermes Agent source code, run against a REAL Hermes instance. The actual workload you'd put your model through. Why I built BenchLocal in the first place: most benchmarks are too abstract. We use local LLMs for practical work, and finding the right model for YOUR task efficiently is the single most important thing, especially when you're constrained to what fits on your machine. BenchLocal is a framework: providers, models, side-by-side comparison, all in one UI. Bench Packs are the unit of testing: ToolCall-15 and BugFind-15 shipped first, and when I launched BenchLocal 0.1.0, I added StructOutput, ReasonMath, InstructFollow, and DataExtract. Now, HermesAgent-20 is the newest. Bench Packs install like VS Code extensions. The SDK is open: write your own, share it, grow the ecosystem. Here's the goal: a community-built, practical evaluation layer for the local LLM space. Early numbers on HermesAgent-20:
> GLM 5.1: 85
> Gemma4 31B: 83
> Qwen3.5 27B: 79
> MiniMax M2.7: 76
Upgrade to the latest BenchLocal to install HermesAgent-20 (SDK update required).

okok we officially have GLM 5.1 running on a 256gb mac studio with hermes agent next is linking it to hermes to see how good it is 🗣️ https://t.co/BQTlLiL3jm

Google DeepMind is hosting a Gemma 4 hackathon with a $10,000 Unsloth prize! 🦥 Show off your best fine-tuned Gemma 4 model built with Unsloth. There's $200,000 in total prizes to be won. Challenge info + Notebook: https://t.co/HndHPaXICT https://t.co/cBnNro1fVI

This is the full video of the hardest version of the task: t-shirt folding from unstructured initial states. This setting really requires at least some strategy, since the robot first has to spread the shirt before it can complete the fold. Full details on data collection strategies in the blog below. 👇

Releasing the Unfolding Robotics blog! Time to unfold robotics: we trained a robot to fold clothes using 8 bimanual setups, 100+ hours of demonstrations, and 5k+ GPU hours. Flashy robot demos are everywhere. But you rarely see the real story: the data, the failures, the enginee

🚀 Excited to share ViPRA: Video Prediction for Robot Actions 📍 Accepted to #ICLR2026 @iclr_conf 🏆 Best Paper at the #NeurIPS2025 Embodied World Models Workshop. Robot learning today still needs millions of action-labeled videos. Videos are abundant, from humans and the web, but they lack action labels. Meanwhile, pretrained video models already learn rich dynamics. ViPRA is a recipe for turning pretrained video models into robot policies while enabling robot learning to scale with actionless videos. 🧵 Thread ↓

Trying to write a tutorial for hermes, anyone interested? https://t.co/eZDnEgtZGy