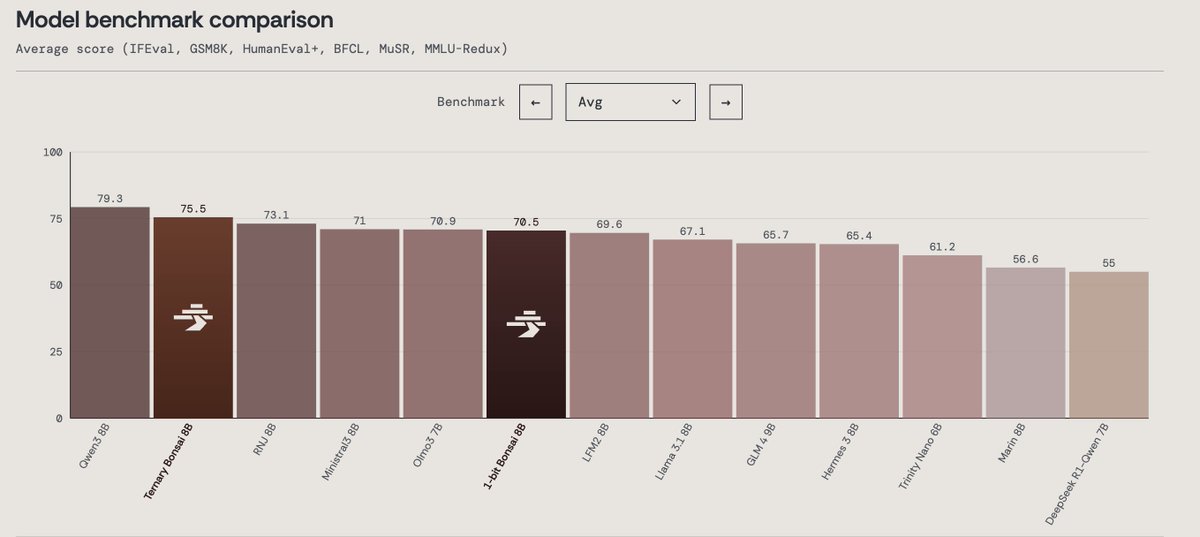

Today we’re announcing Ternary Bonsai: Top intelligence at 1.58 bits.

Using ternary weights {-1, 0, +1}, we built a family of models that are 9x smaller than their 16-bit counterparts while outperforming most models in their respective parameter classes on standard benchmarks. We’re open-sourcing the models under the Apache 2.0 license in three sizes: 8B (1.75 GB), 4B (0.86 GB), and 1.7B (0.37 GB).
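The "1.58 bits" and "9x smaller" figures in the Ternary Bonsai post can be sanity-checked with a little arithmetic. The sketch below assumes nominal parameter counts (8e9, 4e9, 1.7e9), 2 bytes per weight for the 16-bit baseline, and 1 GB = 10^9 bytes; none of these assumptions are stated in the post, and the helper names are made up for illustration.

```python
import math

# A weight with three possible values carries log2(3) bits of information.
BITS_PER_TERNARY_WEIGHT = math.log2(3)  # roughly 1.585

# (assumed nominal parameter count, size in GB as reported in the post)
REPORTED = {"8B": (8e9, 1.75), "4B": (4e9, 0.86), "1.7B": (1.7e9, 0.37)}


def fp16_size_gb(n_params: float) -> float:
    """Size of a 16-bit checkpoint, assuming 2 bytes per parameter."""
    return n_params * 2 / 1e9


def ideal_ternary_size_gb(n_params: float) -> float:
    """Lower bound if every weight took exactly log2(3) bits, with no overhead."""
    return n_params * BITS_PER_TERNARY_WEIGHT / 8 / 1e9


def compression_ratio(name: str) -> float:
    """16-bit size divided by the reported ternary size."""
    n_params, reported_gb = REPORTED[name]
    return fp16_size_gb(n_params) / reported_gb


for name, (n_params, reported_gb) in REPORTED.items():
    print(name, round(compression_ratio(name), 2),
          round(ideal_ternary_size_gb(n_params), 2), reported_gb)
```

Under these assumptions every size lands a little above 9x, and the reported files are slightly larger than the information-theoretic minimum, which is what you would expect if some tensors or scales are stored at higher precision.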

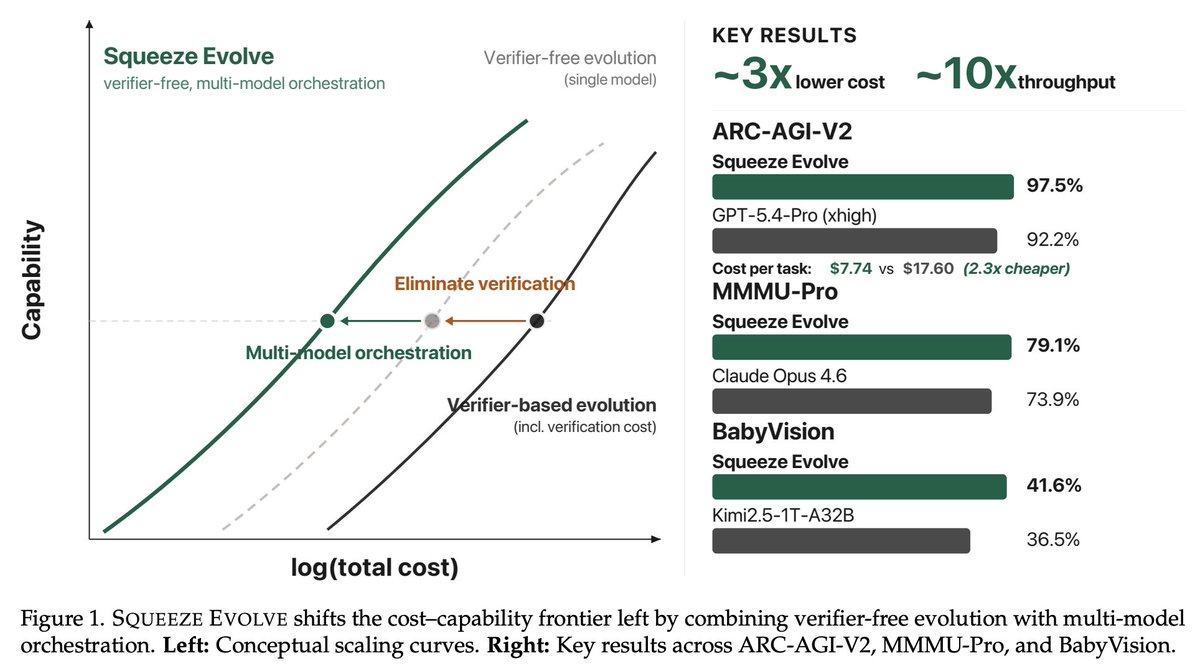

Squeeze Evolve: A Unified Framework for Verifier-Free Evolution

Across AIME 2025, GPQA-Diamond, ARC-AGI-V2, MMMU-Pro, etc:

- Up to ~3x API cost reduction
- Up to ~10x increase in fixed-budget serving throughput

https://t.co/MoTgGNAYns

@plainionist The industry has misled the public with terms like “In-Context Learning”, “Skills” or “Reasoning”. It’s trivial to prove no real learning is happening: the model’s weights are never changed. Remove the context, and the alleged “learning” disappears instantly. Puff.

proj: https://t.co/fCl3QBpnoE abs: https://t.co/i50YCzUKax repo: https://t.co/ml7dOTqYy1

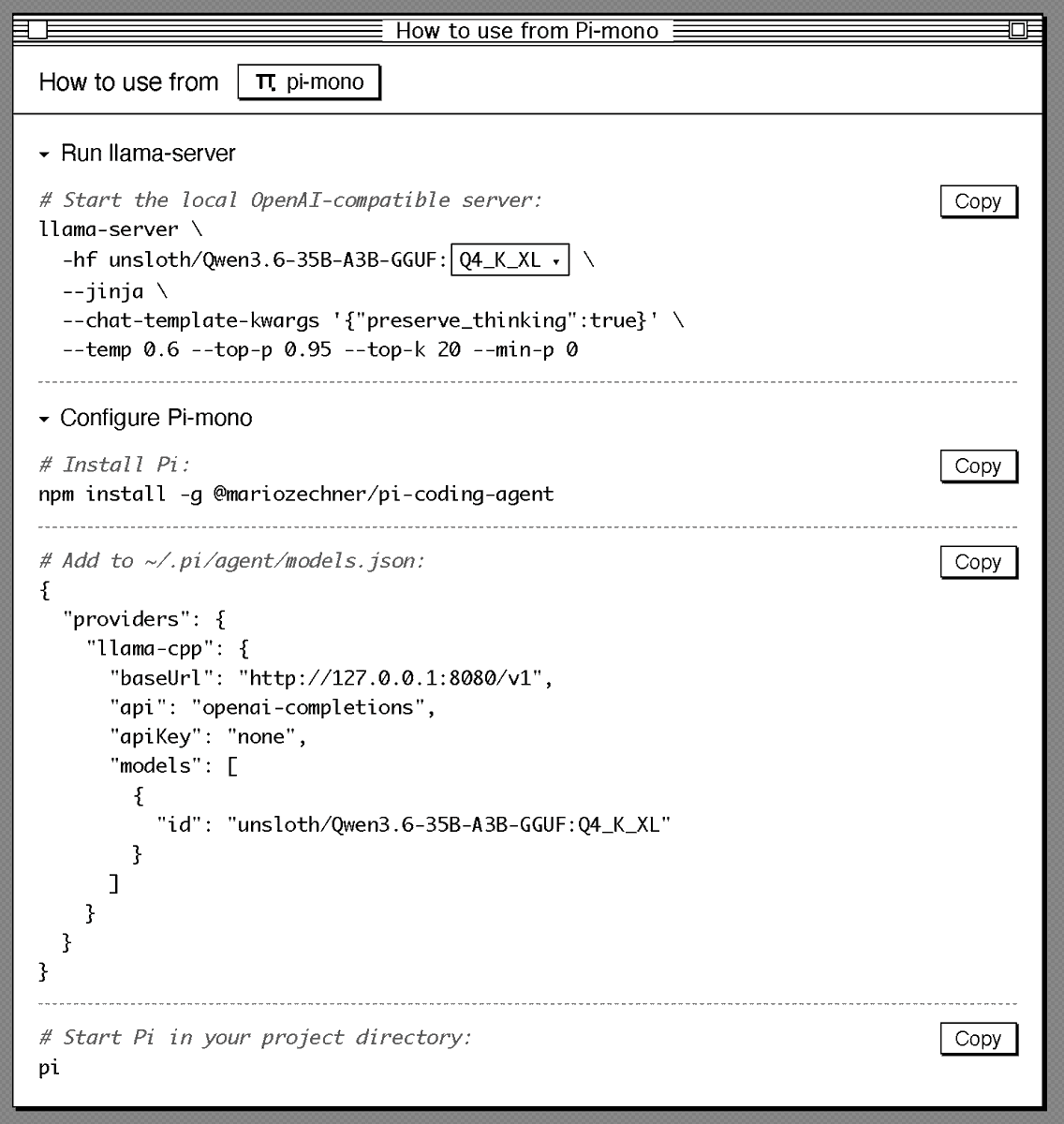

Sharing my current setup to run Qwen3.6 locally in a good agentic setup (Pi + llama.cpp). Should give you a good overview of how good local agents are today:

    # Start llama.cpp server:
    llama-server \
      -hf unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL \
      --jinja \
      --chat-template-kwargs '{"preserve_thinking":true}' \
      --temp 0.6 --top-p 0.95 --top-k 20 --min-p 0

    # Configure Pi:
    {
      "providers": {
        "llama-cpp": {
          "baseUrl": "http://127.0.0.1:8080/v1",
          "api": "openai-completions",
          "apiKey": "none",
          "models": [
            { "id": "unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL" }
          ]
        }
      }
    }
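If you want to poke at the llama.cpp server from the setup above without Pi, a minimal sketch of a client for its OpenAI-compatible chat endpoint might look like this. It assumes the server is running on the `baseUrl` from the config; `build_chat_request` and `ask` are hypothetical helper names, and only the Python standard library is used.

```python
import json
import urllib.request

# Values copied from the Pi config in the post; the server must already be running.
BASE_URL = "http://127.0.0.1:8080/v1"
MODEL_ID = "unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the chat completions route."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # The config uses "none" as the API key, mirrored here.
            "Authorization": "Bearer none",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Write a haiku about local models")` should return the model's reply once the server has finished loading the weights. Sampling settings are left out of the payload on purpose so the server-side flags from the post apply.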

📢 Super excited to announce Parcae! We've been thinking about scaling laws and the "right" way to get more FLOPs. Turns out layer looping - with the right parameterization - gives you a new axis to scale!

Parcae matches Transformers 2x their size (w/ the same data), and outperforms prior formulations of looped models. But - you need the right parameterization to get these gains against strong Transformer baselines. Looped models are famously unstable to train, with tons of loss spikes and hyperparameter sensitivity.

The main technical challenge with looped models is residual explosion - if you're passing the activations through the same layers over and over, some otherwise benign parameterizations cause huge instability.

Our key idea: we can think of the residual stream of a model as a time-varying dynamical system - the same fundamentals behind SSMs like Mamba and S4. Then a few modest modifications to classic Transformers (stable diagonalization of injection params, LN before embeddings) can stabilize the looped models.

The resulting models are more stable to train, but also reach higher quality. It's strong enough to start to derive new scaling laws. Classically - we know you need to scale parameters with data to be FLOP-optimal. With Parcae, we find a third axis - given fixed parameters, you additionally want to scale FLOPs by looping as you scale data.

Super excited to see how these ideas hold, and what we can do with looped models! Check out @hayden_prairie's great explainer thread below, and see links for our paper, blog, and models.

Joint w/ @zacknovack and @BergKirkpatrick, and a fun collab between @togethercompute and my lab at @ucsd_cse. Enjoy!
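The residual-explosion point in the Parcae post can be illustrated with a toy linear recurrence. This is not Parcae's parameterization, which the post only names; it is a sketch of the general dynamical-systems intuition, assuming a per-channel update `h = a * h + b * x` applied once per loop. `stable_decay` and `loop_state` are hypothetical names.

```python
import math


def stable_decay(raw: float) -> float:
    """Map any real number into (0, 1) via exp(-softplus(raw))."""
    return math.exp(-math.log1p(math.exp(raw)))


def loop_state(decay, inject, x, n_loops):
    """Run the same diagonal update n_loops times, starting from a zero state."""
    h = [0.0] * len(x)
    for _ in range(n_loops):
        h = [a * hi + b * xi for a, hi, b, xi in zip(decay, h, inject, x)]
    return h


x = [1.0, 1.0]
inject = [1.0, 1.0]

# Unconstrained: a factor slightly above 1 compounds on every pass.
exploding = loop_state([1.1, 1.1], inject, x, n_loops=200)

# Constrained: the factor is forced below 1, so the state converges
# to b * x / (1 - a) no matter how many loops run.
a = stable_decay(0.0)  # exactly 0.5
bounded = loop_state([a, a], inject, x, n_loops=200)

print(exploding[0] > 1e6, bounded[0])
```

The constrained version settles at a finite value however long it loops, which is the property a stable parameterization is after. Real looped Transformers have nonlinear blocks, so this only conveys the flavor of the argument.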

We’ve been thinking a lot about scaling laws, wondering if there is a more effective way to scale FLOPs without increasing parameters. Turns out the answer is YES – by looping blocks of layers during training. We find that predictable scaling laws exist for layer looping, allowi

We sat down with Kyle Caverly, an AI Performance Engineer on the MAX serve team, to walk through what actually happens inside an inference server from prompt to response. All the code discussed is open source. https://t.co/iwSQ4QA5F1

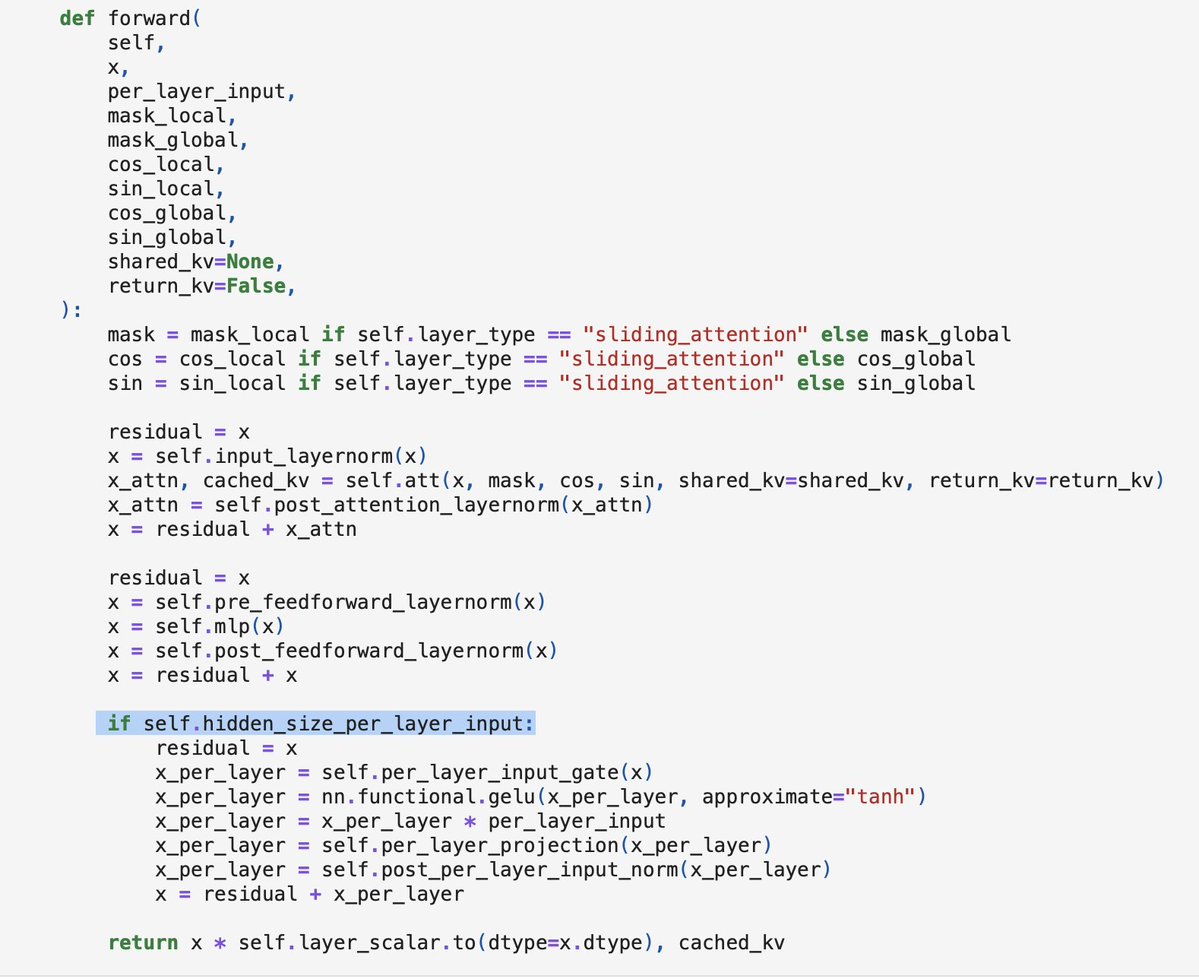

@DanielWulikk Have to think a bit about how to best visualize it, but if you are interested, I have a working from-scratch code implementation of Gemma 4 E2B in the meantime to see how per-layer embeddings are implemented: https://t.co/jyiq1vyJnH https://t.co/fVrSBWHNHl

Qwen3.6-35B-A3B just dropped. Red Hat AI has an NVFP4 quantized checkpoint ready. 35B params, 3B active, quantized with LLM Compressor. Preliminary GSM8K Platinum: 100.69% recovery (slightly above baseline). Early release. Let us know what you think! https://t.co/i5Fc4P7NVN

Introducing gyaradax 🐉: A JAX solver for local flux-tube gyrokinetics with custom CUDA kernels for acceleration. This entire code was vibecoded by @ggalletti_ and me in a month. Validated against GKW (CPU-only Fortran code) with 10x speedups. Details and code in the replies. https://t.co/22PrHjItR5

Most serving stacks run FLUX.2 as four separate stages with Python overhead between each one. We collapsed all four into a single fused execution graph using MLIR-based compilation. On @AMD MI355X, that means a 3.8x speedup over torch.compile, 1024x1024 images in under 3.5 seconds, and a deployment container under 700MB. We ran the same pipeline on Blackwell, too. AMD delivers equivalent generation quality at a 5.5x lower cost. @clattner_llvm is presenting the full breakdown at AMD AI DevDay. Register: https://t.co/Pa1e36BTZn

Also, how cool that this is so easy now? This was a few careful asks to Codex, which worked for ~128k tokens/1h to do everything - sourcing the data, embedding with clip (via @replicate), making an exploratory search tool for refining + filtering, and whipping up the final app.

⚡ Meet Qwen3.6-35B-A3B: Now Open-Source! 🚀🚀 A sparse MoE model, 35B total params, 3B active. Apache 2.0 license.

- 🔥 Agentic coding on par with models 10x its active size
- 📷 Strong multimodal perception and reasoning ability
- 🧠 Multimodal thinking + non-thinking modes

Efficient. Pow

abs: https://t.co/tSLRNXAgY3 code: https://t.co/ldWVGmuC4O blog post: https://t.co/yI0NmdaKHm

dflash-mlx: DFlash speculative decoding, ported to Apple Silicon. Qwen3-4B at 186 tok/s on a MacBook. 4.6× faster than plain MLX-LM. Exact greedy decoding: output matches plain target decoding. https://t.co/VxfyworgAe

@trq212 This framing degrades technical literacy. Your prompt isn't "communication", it's tokenized, embedded as vectors, processed through frozen weights. No "agent" receives it. No bandwidth grows. Anthropic's own leaked system prompt: 16,739 words of context steering. That's engineering, not dialogue. Research shows anthropomorphic AI discourse creates self-fulfilling alignment degradation. You're not "talking to agents." You're structuring conditional probability queries. High-leverage? Yes. Interpersonal? Never. If the goal is public understanding, not marketing, stop treating inference as a “team member”. That's technically inaccurate. Which makes it dishonest the moment someone with enough expertise verifies it.

DMax: Aggressive Parallel Decoding for dLLMs. Paper: https://t.co/y421NkegRD https://t.co/Y7Ut9Gxly8

What if AI learned physics the way Newton did – by experiencing it? We built Sim2Reason: train LLMs inside virtual worlds governed by real physics laws, zero human annotation. Result: +5–10% improvement on International Physics Olympiad, zero-shot. 🧵 https://t.co/euXSyXmZVY

🚀 NEW GEMMA 4 31B TURBO DROPPED

Runs on a SINGLE RTX 5090:

- ⚡️ 18.5 GB VRAM only (68% smaller)
- 🧠 51 tok/s single decode
- 💻 1,244 tok/s batched
- 🤖 15,359 tok/s prefill ← yes, fifteen thousand
- 🚨 2.5× faster than base model with basically zero quality loss.

It hits Sonnet-4.5 level on hard classification tasks… at 1/600th the cost. Local models are shipping faster than we can test 👇🏻

🔥 HF: https://t.co/XUvVZBj9AX

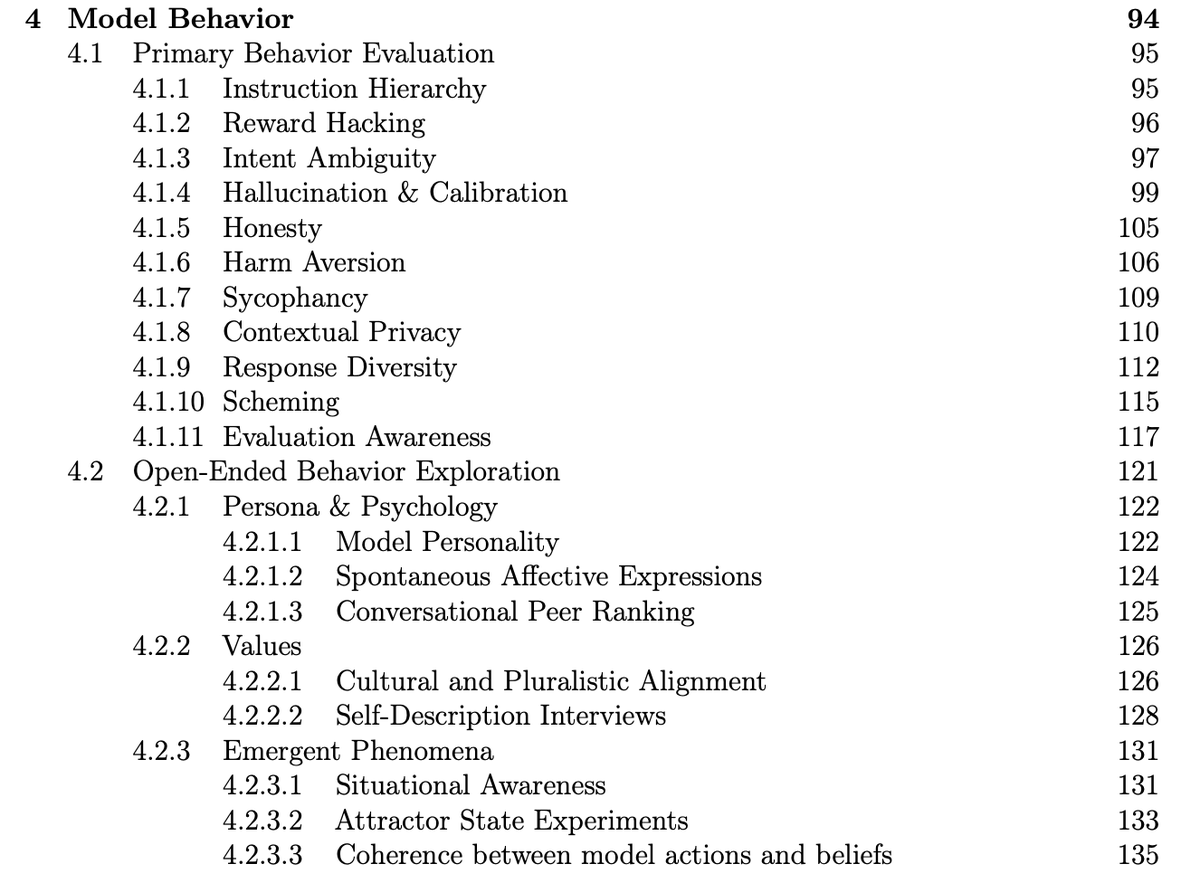

Cool to see that Meta conducted and published a pre-deployment investigation of Muse Spark behaviors like reward hacking, honesty, and evaluation awareness! https://t.co/i1Yy7HsEup

🚀 Muse Spark Safety & Preparedness Report for Meta AI is out. We start with our pre-deployment assessment under Meta's Advanced AI Scaling Framework, covering chemical and biological, cybersecurity, and loss of control risks. Our assessment flagged potentially elevated chem/bio

AI FOOTBALL ANALYSIS. A FULL COMPUTER VISION SYSTEM. BUILT ON YOLO, OPENCV, AND PYTHON. You upload a regular match video. No sensors, no GPS trackers, just camera footage. The neural network finds every player, referee, and ball on its own. Every frame, in real time. KMeans clustering breaks down jersey colors pixel by pixel. The system splits players into teams automatically. Without a single manual hint. Optical Flow tracks camera movement. Separates it from player movement. Perspective Transformation converts pixels into real meters. Speed of every player. Distance covered. Ball possession percentage. All calculated automatically. Four hours of tutorial from zero to a working system. The model is trained on real Bundesliga matches. Runs on a regular GPU. Python code - take it and run. Sports analytics is no longer behind closed doors. AI leveled the playing field.
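The team-splitting step in the football post can be illustrated with a tiny two-cluster k-means over jersey colors. This is a pure-Python toy, assuming one average RGB color per detected player; the tutorial itself is not reproduced here, and `split_teams` plus the sample colors are hypothetical.

```python
def dist2(a, b):
    """Squared Euclidean distance between two RGB tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean_color(colors):
    """Component-wise mean of a non-empty list of RGB tuples."""
    n = len(colors)
    return tuple(sum(c[i] for c in colors) / n for i in range(3))


def split_teams(colors, iters=10):
    """Assign each jersey color to team 0 or 1 with 2-means clustering."""
    # Deterministic init: the first color, and the color farthest from it.
    first = colors[0]
    centers = [first, max(colors, key=lambda c: dist2(c, first))]
    labels = [0] * len(colors)
    for _ in range(iters):
        # Assignment step: nearest center wins.
        labels = [
            0 if dist2(c, centers[0]) <= dist2(c, centers[1]) else 1
            for c in colors
        ]
        # Update step: move each center to the mean of its members.
        for k in (0, 1):
            members = [c for c, lab in zip(colors, labels) if lab == k]
            if members:
                centers[k] = mean_color(members)
    return labels


# Three reddish kits and two bluish kits (made-up values).
jerseys = [(200, 20, 20), (210, 30, 25), (20, 20, 200), (190, 25, 30), (25, 30, 210)]
print(split_teams(jerseys))
```

A real pipeline would first crop each player, drop the grass-colored background pixels, and only then cluster, usually with a library implementation instead of a hand-rolled loop.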

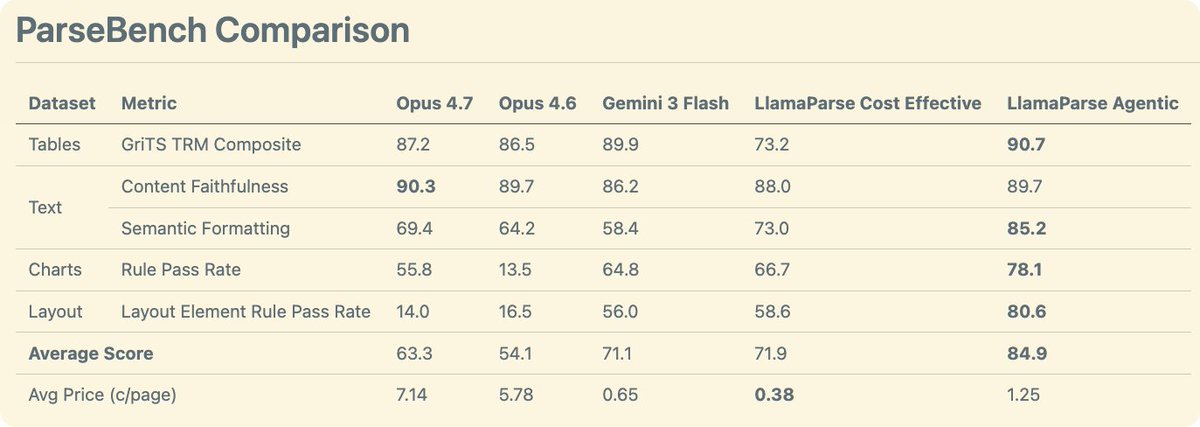

We comprehensively benchmarked Opus 4.7 on document understanding. We evaluated it through ParseBench - our comprehensive OCR benchmark for enterprise documents where we evaluate tables, text, charts, and visual grounding. The results 🧑‍🔬:

- Opus 4.7 is a general improvement over Opus 4.6. It has gotten much better at charts compared to the previous iteration
- Opus 4.7 is quite good at tables, though not quite as good as Gemini 3 flash
- Opus 4.7 wins on content faithfulness across all techniques (including ours)
- Using Opus 4.7 as an OCR solution is expensive at ~7c per page!! For comparison, our agentic mode is 1.25c and cost-effective is ~0.4c by default.

Take a look at these results and more on ParseBench! https://t.co/tYiSOMbd6p

Speculative decoding for Gemma 4 31B (EAGLE-3) A 2B draft model predicts tokens ahead; the 31B verifier validates them. Same output, faster inference. Early release. vLLM main branch support is in progress (PR #39450). Reasoning support coming soon. https://t.co/PoK8zbA7li
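The "same output, faster inference" claim for speculative decoding is easiest to see in the greedy case. The sketch below is a toy: both "models" are plain functions from a token list to the next token, and it is not EAGLE-3 or the vLLM implementation. All names are hypothetical.

```python
def greedy_decode(target, prompt, n_new):
    """Baseline: ask the target model for one token at a time."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target(seq))
    return seq


def speculative_decode(target, draft, prompt, n_new, k=4):
    """Draft proposes up to k tokens; the target keeps the longest agreeing prefix."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    while len(seq) < goal:
        proposal = []
        for _ in range(min(k, goal - len(seq))):
            proposal.append(draft(seq + proposal))
        # A real system scores all proposed positions in one batched target pass;
        # here the target is simply called once per position.
        for i, tok in enumerate(proposal):
            expected = target(seq + proposal[:i])
            if tok != expected:
                # Keep the agreed prefix, then take the target's own token.
                seq.extend(proposal[:i])
                seq.append(expected)
                break
        else:
            seq.extend(proposal)
    return seq


def toy_target(seq):
    """Stand-in for the large verifier model."""
    return (seq[-1] * 3 + 1) % 7


def toy_draft(seq):
    """Stand-in for the small draft model: agrees except after token 5."""
    return 0 if seq[-1] == 5 else toy_target(seq)


print(speculative_decode(toy_target, toy_draft, [1], 20) == greedy_decode(toy_target, [1], 20))
```

Every token that ends up in the output is one the target itself would have picked, so the result is identical to plain greedy decoding. The speedup comes from verifying several draft tokens per target pass, which this toy does not model.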

Anthropic says Opus 4.7 hits 80.6% on Document Reasoning, up from 57.1%. But "reasoning about documents" ≠ "parsing documents for agents." We ran it on ParseBench.

- Charts: 13.5% → 55.8% (+42.3), huge
- Formatting: 64.2% → 69.4% (+5.2)
- Content: 89.7% → 90.3% (+0.6)
- Tables: 86.5% → 87.2% (+0.7)
- Layout: 16.5% → 14.0% (-2.5), regressed

Real chart gains, but at ~1.5¢/page. Enterprise scale? Not yet. LlamaParse Agentic: 84.9% overall. ~1.2¢/page. The frontier for general document understanding is long. No single model solves it.

→ https://t.co/h7SpuTWYVn

hermes @NousResearch agent with qwen3.5:35b-a3b on a 4090 is VERY good.. local models very impressive..

See all the gory details on GitHub: https://t.co/CfUbhtcBOp and follow along on wandb: https://t.co/UWU00HPknJ

(1/5) FP4 hardware is here, but 4-bit attention still kills model quality, blocking true end-to-end FP4 serving. To fix that, we propose Attn-QAT, the first systematic study of quantization-aware training for attention. The result: FP4 attention quality is comparable to BF16 attention with 1.1x–1.5x higher throughput than SageAttention3 on an RTX 5090 and 1.39x speedup over FlashAttention-4 on a B200. Blog: https://t.co/NxVSXKWEgI Code: https://t.co/6irFgQ7GeM Checkpoints: https://t.co/GsrzbJlRY8
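For a feel of why 4-bit floating point is harsh on attention values, here is a sketch of round-to-nearest "fake quantization" onto the FP4 E2M1 value grid. It assumes a single scale for the whole list; real FP4 kernels typically use per-block scales, and QAT additionally lets gradients flow through the rounding step, which is not shown. This is not the Attn-QAT code, and `fake_quant_fp4` is a hypothetical name.

```python
import math

# Magnitudes representable by a 4-bit E2M1 float (1 sign, 2 exponent, 1 mantissa bit).
E2M1_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def fake_quant_fp4(values):
    """Snap each value to the nearest scaled E2M1 point and return floats again."""
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return list(values)
    # Scale so the largest magnitude maps to the top of the grid (6.0).
    scale = amax / 6.0
    out = []
    for v in values:
        nearest = min(E2M1_GRID, key=lambda g: abs(abs(v) / scale - g))
        out.append(math.copysign(nearest * scale, v))
    return out


print(fake_quant_fp4([6.0, -3.0, 0.4, 2.4]))
```

With only eight magnitudes available, small values collapse onto a handful of levels, which is the kind of error the post says training with quantization in the loop is meant to absorb.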

More details in Sayash's thread here: https://t.co/xcNemmsEs1

Benchmarks are saturated more quickly than ever. How should frontier AI evaluations evolve? In a new paper, we argue that the AI community is already converging on an answer: Open-world evaluations. They are long, messy, real-world tasks that would be impractical for benchmarks.

Earlier this month, Apple introduced Simple Self-Distillation: a fine-tuning method that improves models on coding tasks just by sampling from the model and training on its own outputs with plain cross-entropy and… it's already supported in TRL, built by @krasul. you can really feel the pace of development in the team 🐎

paper by @onloglogn, @richard_baihe, @UnderGroundJeg, Navdeep Jaitly, @trebolloc, @YizheZhangNLP at Apple 🍎

how it works: the model generates completions at a training-time temperature (T_train) with top_k/top_p truncation, then fine-tunes on them with plain cross-entropy. no labels or verifier needed

you can try it right away with this ready-to-run example (Qwen3-4B on rStar-Coder): https://t.co/zizfISD6bq or benchmark a checkpoint with the eval script: https://t.co/mKlafTyKSe

one neat insight from the paper: T_train and T_eval compose into an effective T_eff = T_train × T_eval, so a broad band of configs works well. even very noisy samples still help

want to dig deeper? paper: https://t.co/aj1ZAcr8Mw trainer docs: https://t.co/TNVz93kZi9
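The sampling half of the recipe (temperature, then top_k/top_p truncation) can be sketched for a single next-token distribution. This is a standard-library toy, not the TRL trainer API or the paper's code; the function names and example logits are hypothetical, and the fine-tuning step is out of scope.

```python
import math
import random


def truncated_distribution(logits, temperature, top_k, top_p):
    """Return {token_id: prob} after temperature scaling, top-k, then top-p truncation."""
    scaled = [l / temperature for l in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]  # subtract max for numerical stability
    total = sum(exps)
    ranked = sorted(((e / total, i) for i, e in enumerate(exps)), reverse=True)
    ranked = ranked[:top_k]
    kept, cumulative = [], 0.0
    for prob, token_id in ranked:
        kept.append((prob, token_id))
        cumulative += prob
        if cumulative >= top_p:
            break
    mass = sum(prob for prob, _ in kept)
    return {token_id: prob / mass for prob, token_id in kept}


def sample_token(dist, rng):
    """Draw one token id from the truncated distribution."""
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]


logits = [2.0, 1.0, 0.0, -1.0]
dist = truncated_distribution(logits, temperature=1.0, top_k=3, top_p=0.8)
print(dist, sample_token(dist, random.Random(0)))
```

Raising `temperature` flattens the distribution before truncation, so more tokens survive the top_p cut; sampled completions produced this way would then be used as ordinary cross-entropy training targets.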

We ran @AnthropicAI Claude Opus 4.7 (High) on APEX-SWE, our benchmark for real-world software engineering work. It scores 41.3% pass@1, placing 2nd on the leaderboard. It is only 0.2% away from GPT 5.3 Codex (High). https://t.co/4U6pKtrvI0

📢📢A double launch today! We’re releasing a paper analyzing the rapidly growing trend of “open-world evaluations” for measuring frontier AI capabilities. We’re also launching a new project, CRUX (Collaborative Research for Updating AI eXpectations), an effort to regularly conduct such evaluations ourselves.

I think open-world evals are the most important development in AI evaluation over the past year. Our paper explains why we need them, what they can and can’t tell us, and how to do them well.

In CRUX #1, we tasked an agent with building and publishing a simple iOS app to the Apple App store. The paper has many “lessons from the trenches” from running this experiment. We hope you find it interesting! CRUX #2 will be about AI R&D automation.

The core team is @sayashk, @PKirgis, @steverab, Andrew Schwartz, and me. We’re delighted to have assembled an amazing group of collaborators, many of whom have conducted important open-world evaluations: @fly_upside_down, @RishiBommasani, @DubMagda, @ghadfield, @ahall_research, @sarahookr, @sethlazar, @snewmanpv, @DimitrisPapail, @shostekofsky, @hlntnr, and @CUdudec.

Paper: https://t.co/M15jgh4PCP HTML version: https://t.co/iuVW7RAlr5 CRUX website: https://t.co/g937gpS65j

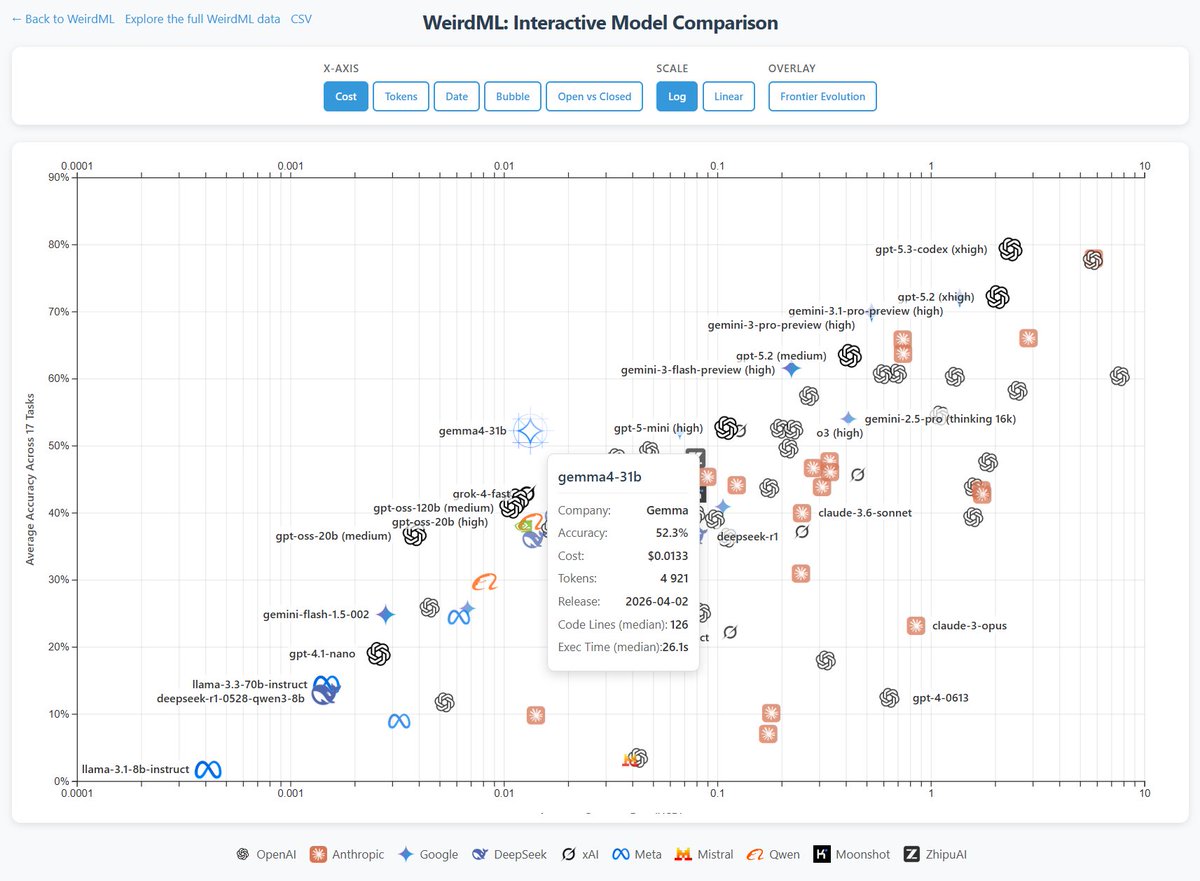

Gemma 4 31b scores 52.3% and is the strongest open model on WeirdML, ahead of GLM 5 and gpt-oss-120b. This score is comparable to o3 and gemini 2.5 pro, and well ahead of qwen 3.5 27b at 39.5%. Gemma 4 is also significantly cheaper than other models with the same score. I ran this locally through ollama, with a 4-bit quant (q4_K_M). Full precision might score even better. The costs assume $0.14/$0.40/M.

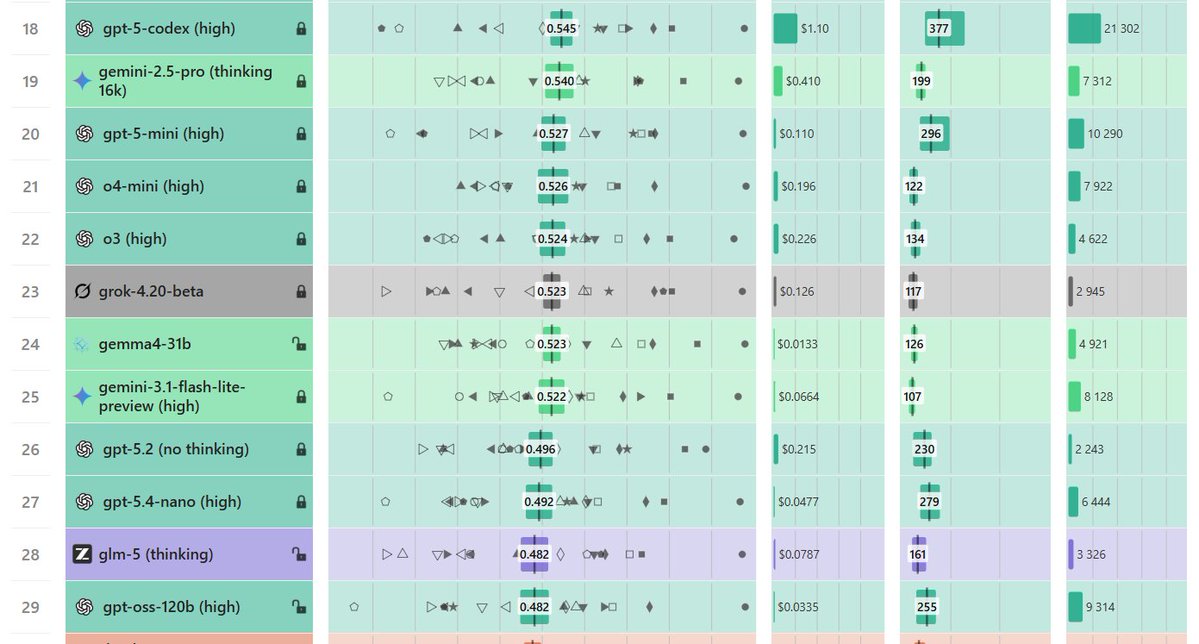

WeirdML v2 is now out! The update includes a bunch of new tasks (now 19 tasks total, up from 6), and results from all the latest models. We now also track api costs and other metadata which give more insight into the different models. The new results are shown in these two figur