@haoailab

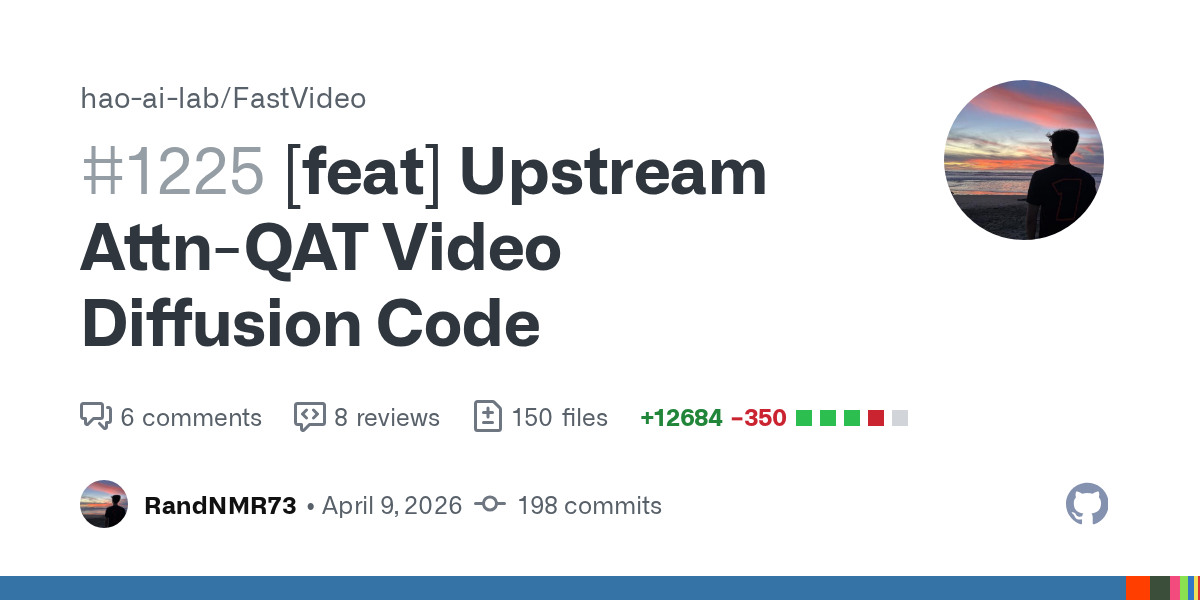

(1/5) FP4 hardware is here, but 4-bit attention still kills model quality, blocking true end-to-end FP4 serving. To fix that, we propose Attn-QAT, the first systematic study of quantization-aware training for attention. The result: FP4 attention with quality comparable to BF16 attention, 1.1x–1.5x higher throughput than SageAttention3 on an RTX 5090, and a 1.39x speedup over FlashAttention-4 on a B200. Blog: https://t.co/NxVSXKWEgI Code: https://t.co/6irFgQ7GeM Checkpoints: https://t.co/GsrzbJlRY8