Your curated collection of saved posts and media

A new #Triton BF16 Persistent Cache-Aware Grouped #GEMM kernel speeds up Mixture-of-Experts models like DeepSeekv3. It achieved up to 2.62x faster training on NVIDIA H100 GPUs compared to the #PyTorch loop baseline. 🔗 Latest blog from @Meta & @IBM: https://t.co/7JueS7v6he https://t.co/7zTg2OAFki

🚀 Big ideas incoming from Robert Nishihara, Co-Founder at Anyscale, live on the #PyTorchConf keynote stage. Join the #AI & ML community in San Francisco October 22–23. 👀 Speakers: https://t.co/ICZSTITfOW 🎟️ https://t.co/RgdpmxlWil https://t.co/gKEmwAd6yd

🎤 The stage is set and the spotlight’s on Sergey Levine, Associate Professor, Department of Electrical Engineering and Computer Sciences at UC Berkeley, a #PyTorchConf keynote speaker! Join the minds moving #OpenSource #AI & ML forward. 📍 San Francisco | 📅 October 22–23 👀 Keynotes: https://t.co/AwLQHvTgch 🎟️ Register: https://t.co/vJl0Q3Yz6R

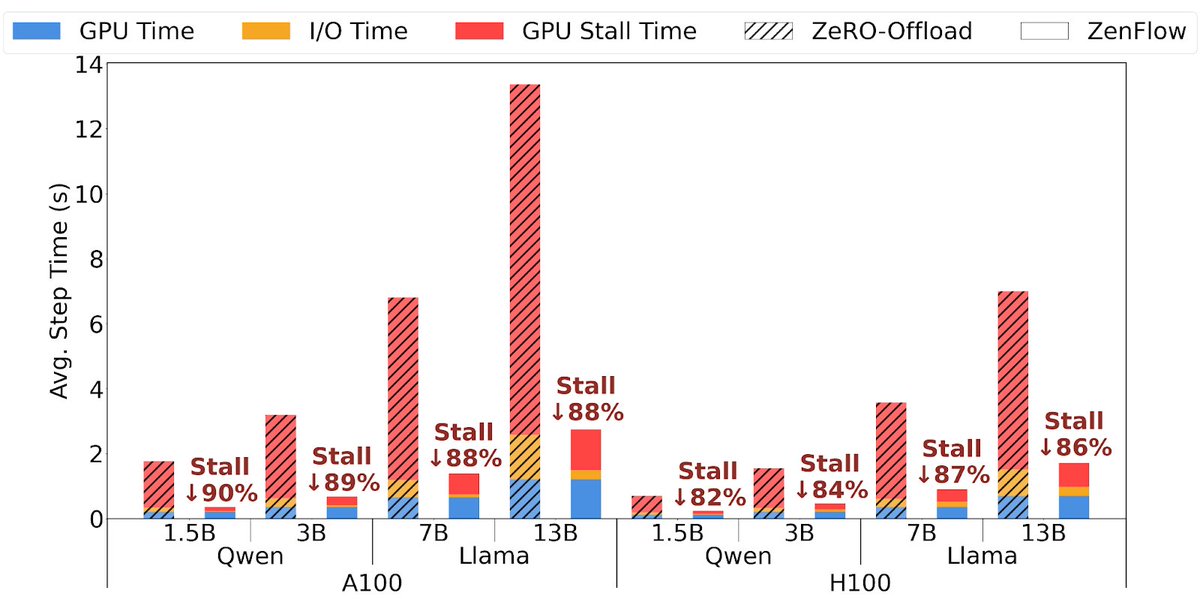

Introducing #ZenFlow: No Compromising Speed for #LLM Training w/ Offloading. 5× faster LLM training with offloading, 85% fewer GPU stalls, 2× lower I/O overhead. 🚀 Blog: https://t.co/emUxxDQoGI 🚀 Try ZenFlow and experience 5× faster training with offloading: https://t.co/ppcoI4Af7V https://t.co/gqrchLNYSh

🌉 San Francisco is the place to be for #AI this fall. #OpenSourceAIWeek runs Oct 18–26 with events on #GenAI, #ML, #PyTorch, and more. Don’t miss it. 🔎 Explore the events & register: https://t.co/8aeJv6fnGZ https://t.co/3Xtm2GNFp4

🎤 The stage is set and the spotlight’s on Ion Stoica, Professor, Department of Electrical Engineering and Computer Sciences at UC Berkeley, a #PyTorchConf keynote speaker! Join the minds moving #OpenSource #AI & ML forward. 📍 San Francisco | 📅 October 22–23 👀 Keynotes: https://t.co/AfpeYMNeE6 🎟️ Register: https://t.co/9flQKL8yF5

🏆 Help spotlight the individuals making significant contributions to innovation and impact across the PyTorch ecosystem. 📅 Deadline: Tomorrow, August 22, 2025 👉 Submit your nomination: https://t.co/DumlZh74lH 🔎 Tips for writing a strong nomination: https://t.co/l0b0bS98NT https://t.co/5Ffpk4GNPj

🚀 Got an AI startup ready to shine? Pitch live to top VCs at the #PyTorchConf Startup Showcase on Oct 21 in San Francisco. Selected startups get pitch slots, passes, and global visibility. Apply by Sept 14! 👉 https://t.co/CxIzHa0UoX https://t.co/kf09EFP991

Final chance! 🚨 Scholarship applications for #PyTorchConf close Aug 29. Join us in San Francisco, Oct 22–23, to connect with the community building the future of #OpenSource #AI. Apply now: https://t.co/mLUYbL9kIe https://t.co/SuRO2MRHmd

Boosting inference efficiency for LLaMA-based encoders: Nested Jagged Tensors (NJTs) improve DRAMA model inference by 1.7x–2.3x, making high-performing LLM encoders more practical for production. 🔗 Latest blog: https://t.co/Fi9vSTCalO #PyTorch #LLM #OpenSourceAI #OpenSource https://t.co/cDhftfBIBg

🌟 The #PyTorch Startup Showcase is back! #AI startups get 5 mins on stage, 2 passes to #PyTorchConf + promo across PyTorch channels. Don’t miss your shot Oct 21. Apply by Sept 14. 🔗 https://t.co/y737gLcJ40 https://t.co/5AffyOl6wf

🎙️ Mic check: Anush Elangovan, Corporate Vice President of AI Software at AMD, is bringing the 🔥 to the keynote stage at #PyTorchConf! Get ready for big ideas and deeper learning October 22–23 in San Francisco. 👀 Speakers: https://t.co/3pObLKvjVB 🎟️ https://t.co/4m1idFrCJI https://t.co/hjYYsIlKn2

🚀 Big ideas incoming from Matt White, Executive Director at PyTorch Foundation, live on the #PyTorchConf keynote stage. Join the AI and ML community in San Francisco October 22–23. 👀 Speakers: https://t.co/uUuLiqRp1a 🎟️ https://t.co/WmoAuKx4KG https://t.co/7HeINku34b

Post-training, sometimes called alignment, enables #LLMs to plan, reason, and interact, which pre-training alone doesn't provide. Our latest blog is a primer on post-training for engineers new to LLM modeling, covering SFT, RLHF, DPO, etc. 🔗 https://t.co/tOosAXwCMD #PyTorch https://t.co/Nrqws07gBk

At @AllThingsOpen 2025, Matt White will present From Code to Agency: Open Source and Standards for the Next Generation of AI Agents. #PyTorchFoundation is proud to support the conference as a non-profit sponsor. 📅 Oct 26–28 · Raleigh 🔗 https://t.co/uP5eSnT4Ja #PyTorch https://t.co/kmW3zBCSU1

🚀 Join PyTorch Compile H2, 25 Roadmap Q&A! 📅 Sep 3, 25 | ⏰ 9AM PST 📌 RSVP & Submit Questions: https://t.co/OyZEtlhpeg 📍 Location: https://t.co/i5vRMMVd9e 📜 Roadmap: https://t.co/pGL63IhmlE Hear from the leads, ask questions, & help shape the future of PyTorch Compile.

PyTorch Compiler H2, 25 Roadmap Review & Q&A on September 3rd 💬 Details on PyTorch Dev Discuss: https://t.co/mRA6l369gW + note you can submit questions for the event via the RSVP form

⏰ Scholarship applications for #PyTorchConf close TOMORROW! Join us in San Francisco, Oct 22–23, and connect with the community building the future of #OpenSource #AI. Apply now: https://t.co/5s9clg1pto https://t.co/dUp5HS9YKs

The Startup Showcase is back at #PyTorchConf2025 Oct 21 in SF. Watch early-stage #AI teams pitch live to VCs & engineers, connect with the community, and see what’s next. 🔗 Learn more and register: https://t.co/GIUorrISRN #PyTorch #AIInfrastructure #OpenSourceAI https://t.co/desVtr6EsC

🎤 The stage is set & the spotlight’s on Eli Uriegas, Staff Software Engineer at Meta, a #PyTorchConf keynote speaker! Join the minds moving #OpenSource #AI & ML forward. 📍 San Francisco | 📅 October 22–23 👀 Keynotes: https://t.co/Ola47GM2pw 🎟️ Register: https://t.co/vt6D2hI775

🎙️ Mic check: Simon Mo, Lead, vLLM & PhD Student in the Dept. of Electrical Engineering & Computer Sciences at UC Berkeley, is bringing the 🔥 to the keynote stage at #PyTorchConf! Get ready for big ideas & deeper learning October 22–23 in San Francisco. 👀 Speakers: https://t.co/upUXDlXGCZ 🎟️ https://t.co/hrZsN9FjTO

1910: The Year the Modern World Lost Its Mind. Good piece comparing the anxieties of the early 1900s, an era of great and rapid technological change, to the present time. In “The Vertigo Years: Europe 1900-1914”, Philipp Blom describes how turn-of-the-century technology changed the way people thought about art and human nature and how it “contributed to a nervous breakdown across the west”. “Disoriented by the speed of modern times, Europeans and Americans suffered from record-high rates of anxiety and a sense that our inventions had destroyed our humanity. Meanwhile, some artists channeled this disorientation to create some of the greatest art of all time.”

[Event Report] Open House 2025: Taking on the toughest challenges in finance and defense, at the forefront of real-world AI deployment https://t.co/Ykb4oYqjTg https://t.co/ac5aJKRhLv

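Homegrown AI should seize the "fisherman's profit" from US-China competition: Sakana AI CEO David Ha. An interview with Sakana AI CEO David Ha appeared in the "Chokugen" (Straight Talk) column of the Nikkei morning edition on August 17. It is a long interview in which David explains, in his own words, why Sakana AI was founded in Japan, the opportunity created by a strategy of adopting cutting-edge US and Chinese technology in Japan, and the company's business strategy going forward. [Article highlights] 1. A "fisherman's profit" strategy in the US-China AI race: He advocates fusing the AI technologies of the US and China, which are vying for supremacy, in order to achieve Japan's technological independence. On Sakana AI's concrete efforts, he says: "Japan should not follow other countries in AI research and development. It needs to develop its own technology and become self-reliant. One way to do that is to fuse technologies from both the US and China." "(Sakana AI) combines three models, from Google, OpenAI, and a Chinese-made AI, to achieve excellent performance." 2. "Democratizing AI" and why Japan should develop AI: He warns against an oligopoly over AI technology by particular foreign companies or states, and argues that Japan, facing challenges such as population decline, is precisely the country that should develop AI suited to its own culture and society. "AI has a major influence on how people think and act. AI that fits Japanese society and culture should be made in Japan. It is important to create a world in which no particular large company or state holds an oligopoly on the technology." "Some believe AI will become conscious on its own and destroy humanity, but rather than that… I am worried about authoritarian states trying to use AI to control their citizens." 3. Founding in Japan and business strategy: He explains how he came to found the company in Japan and touches on a business policy aimed at early profitability. "Japan once had companies such as Sony and Nintendo that produced technology the world paid attention to, but there was no such company in AI. I decided to found a company in Japan because I wanted to build a cutting-edge AI company that people around the world would talk about, saying 'this research or product was made by a Japanese company.'" "AI startups often spend the funds they raise on model development and run at a loss. We keep R&D costs low and provide services to large enterprises and government agencies… Our policy is to pursue profit firmly, and we believe we can become profitable sooner than other AI companies." "We expect to reach profitability in 2026… We want to balance scaling up with profitability." 4. Japan's appeal for attracting global AI talent: In the worldwide competition for AI talent, he shared his view that Japan's soft power and a market with few strong competitors are major advantages for recruiting excellent engineers. "Japan has soft power different from that of the US, such as anime. It is an advantage that there are engineers who love Japanese culture and are drawn to life in Japan." "If I had founded the company in Silicon Valley, the AI business there is so fragmented that I would probably have ended up in a niche business. Japan still has few strong domestic AI companies, so we can do business in partnership with large enterprises and government agencies."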

The fact that this explosion was animated in 1984 is insane https://t.co/08xrjk9ZpP

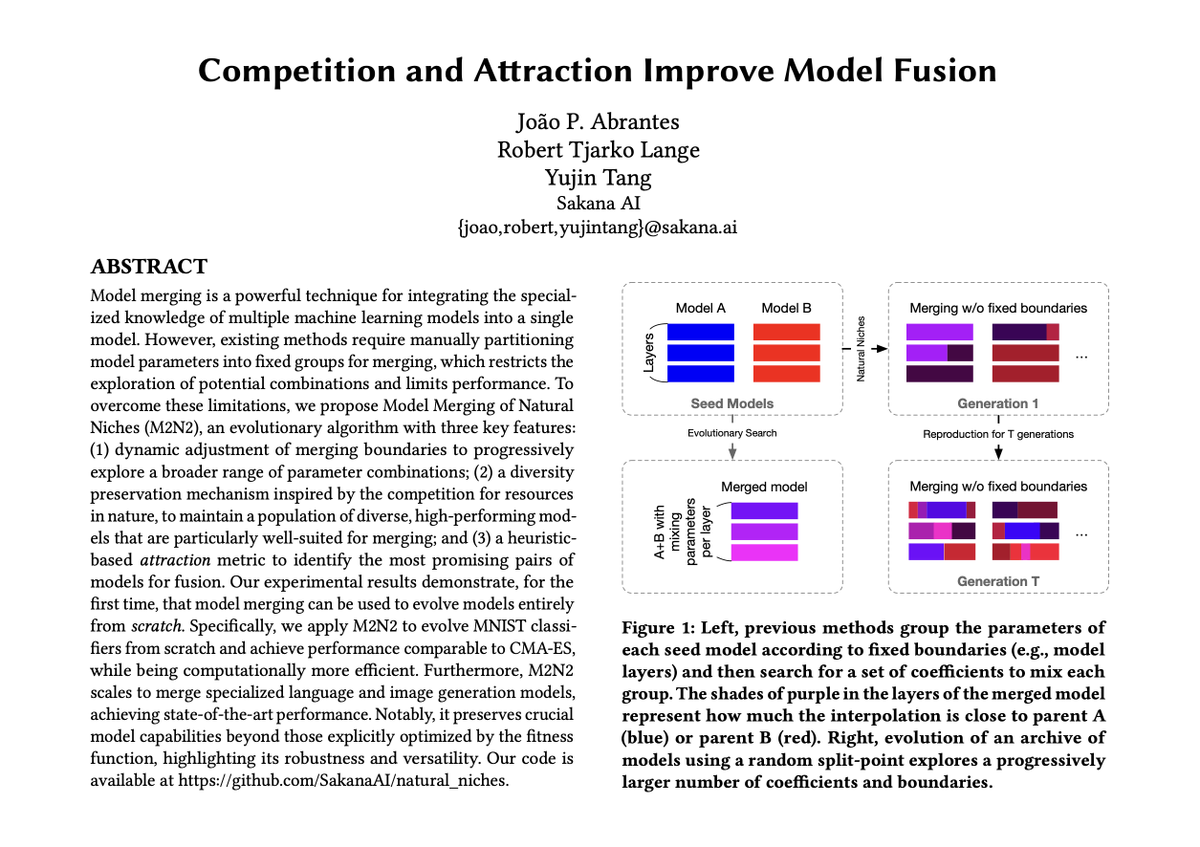

What if we could evolve AI models like organisms in nature, letting them compete, mate, and combine their strengths to produce ever-fitter offspring? Excited to share our new work: “Competition and Attraction Improve Model Fusion” presented at GECCO’25🦎 where it was a runner-up for best paper! Paper: https://t.co/ihfdriOPNw Code: https://t.co/lOW2ghb5bj Summary of Paper: At Sakana AI, we draw inspiration from nature’s evolutionary processes to build the foundation of future AI systems. Nature doesn’t create one single, monolithic organism; it fosters a diverse ecosystem of specialized individuals that compete, cooperate, and combine their traits to adapt and thrive. We believe AI development can follow a similar path. What if instead of building one giant monolithic AI, we could evolve a whole ecosystem of specialized models that collaborate and combine their skills? Like a school of fish 🐟, where collective intelligence emerges from the group. This new paper builds on our previous research on model merging, which follows such an evolutionary path. We started by using evolution to find the best “recipes” to merge existing models (our Nature Machine Intelligence paper: https://t.co/zqs7kGL4Gl). Then, we explored how to maintain diversity to acquire new skills in LLMs (our ICLR 2025 paper: https://t.co/00Buf1051A). Now, we're combining these ideas into a full evolutionary system. A key limitation remained in earlier work: model merging required manually defining how models should be partitioned (e.g., by fixed layers or blocks) before they could be combined. What if we could let evolution figure that out too? Our new paper proposes M2N2 (Model Merging of Natural Niches), a more fluid method, which overcomes this with three key, nature-inspired ideas: 1/ Evolving Merging Boundaries 🌿: Instead of merging models using pre-defined, static boundaries (e.g. fixed layers), M2N2 dynamically evolves the “split-points” for merging.
This allows for a far more flexible and powerful exploration of parameter combinations, like swapping variable-length segments of DNA rather than entire chromosomes. 2/ Diversity through Competition 🐠: To ensure we have a rich pool of models to merge, M2N2 makes them compete for limited resources (i.e., data points in a training set). This forces models to specialize and find their own “niche,” creating a population of diverse, high-performing specialists that are perfect for merging. 3/ Attraction and Mate Selection 💏: Merging models can be computationally expensive. M2N2 introduces an “attraction” heuristic that intelligently pairs models for fusion based on their complementary strengths—choosing partners that perform well where the other is weak. This makes the evolutionary search much more efficient. Does it work? The results are fascinating: This is the first time model merging has been used to evolve models entirely from scratch, outperforming other evolutionary algorithms. In one experiment, starting with random networks, M2N2 evolved an MNIST classifier that achieves performance comparable to CMA-ES, but is far more computationally efficient. Does it scale? We also showed that M2N2 can scale to large, pre-trained models: We used M2N2 to merge a math specialist LLM with an agentic specialist LLM. M2N2 produced a merged model that excelled at both math and web shopping tasks, significantly outperforming other methods. The flexible split-point was crucial here. Does it work on multimodal models? When we applied M2N2 to text-to-image models, we merged several models by adapting them only for Japanese prompts. The resulting model not only improved on Japanese but also retained its strong English capabilities—a key advantage over fine-tuning, which can suffer from catastrophic forgetting. This nature-inspired approach is central to Sakana AI’s mission to find new foundations for AI based on collective intelligence. 
Rather than scaling monolithic models, we envision a future where ecosystems of diverse, specialized models co-evolve, collaborate, and combine, leading to more adaptive, robust, and creative AI. 🐙 We hope this work sparks more interest in these under-explored ideas! Published in ACM GECCO’25: Proceedings of the Genetic and Evolutionary Computation Conference. DOI: https://t.co/5eSwhvs5tQ
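
The three ideas in the post above (evolved split-points, competition for data points, attraction between complementary models) can be illustrated with a toy sketch. This is not the paper's implementation: the function names `merge_at_split`, `shared_fitness`, `attraction`, and `pick_partner` are hypothetical, models are reduced to flat lists of floats, and the formulas are simplified assumptions chosen only to make each idea concrete.

```python
from typing import List, Sequence


def merge_at_split(a: Sequence[float], b: Sequence[float],
                   split: int, w: float) -> List[float]:
    """Blend two flat parameter lists, mixing differently on each side of `split`.

    Assumption: before the split the child leans toward `a` with weight w,
    after the split it leans toward `b` with the same weight.
    """
    if len(a) != len(b):
        raise ValueError("parameter lists must have the same length")
    if not 0 <= split <= len(a):
        raise ValueError("split must lie within the parameter list")
    head = [w * x + (1 - w) * y for x, y in zip(a[:split], b[:split])]
    tail = [(1 - w) * x + w * y for x, y in zip(a[split:], b[split:])]
    return head + tail


def shared_fitness(scores: Sequence[Sequence[float]]) -> List[float]:
    """Competition for limited resources (simplified assumption).

    scores[i][j] is model i's score on data point j. Each data point offers
    one unit of reward, split among models in proportion to their scores,
    so a model gains more from points that few others solve.
    """
    n_points = len(scores[0])
    totals = [sum(row[j] for row in scores) for j in range(n_points)]
    return [
        sum(row[j] / totals[j] for j in range(n_points) if totals[j] > 0)
        for row in scores
    ]


def attraction(scores_a: Sequence[float], scores_b: Sequence[float]) -> float:
    """How much b could help a: total margin on points where b beats a."""
    return sum(max(sb - sa, 0.0) for sa, sb in zip(scores_a, scores_b))


def pick_partner(scores: Sequence[Sequence[float]], i: int) -> int:
    """Pick the model that is most attractive to model i as a merge partner."""
    candidates = [k for k in range(len(scores)) if k != i]
    return max(candidates, key=lambda k: attraction(scores[i], scores[k]))


if __name__ == "__main__":
    # Three toy models scored on three data points.
    scores = [[1.0, 0.0, 0.5], [0.0, 1.0, 0.5], [1.0, 0.0, 0.4]]
    partner = pick_partner(scores, 0)
    child = merge_at_split([1.0] * 4, [0.0] * 4, split=2, w=1.0)
    print(partner, child, shared_fitness(scores))
```

In this toy setup, model 0 is paired with model 1 because model 1 is strong exactly where model 0 is weak, and model 1 also earns the highest shared fitness because it is the only model solving the second data point. In a full evolutionary loop, `split` and `w` would be sampled and mutated rather than fixed by hand.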

Why Greatness Cannot Be Planned: both the English and Japanese editions have now found a home in the @SakanaAILabs library ✨ https://t.co/Z9RPUNKOgB

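Sakana AI's CEO has been selected for TIME magazine's "TIME 100 AI" list. We are grateful for the confidence placed in us and will keep pushing toward our vision of building a frontier AI company in Japan. https://t.co/oNM9yjlKuM Our CEO described what Sakana AI is aiming for at a recent event. Please take a look at that as well. https://t.co/Wzpg6S5w7R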

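"Building a frontier AI company in Japan" Sakana AI held its first-ever Open House event, where I gave opening remarks along the following lines. I came to Japan from Canada more than ten years ago and immersed myself in cutting-edge deep learning research at Google, and I would like to share the background to why I ended up founding Sakana AI. To date, AI research and development worldwide has been concentrated in the United States and China. However, I strongly feel that Japan, as a leading democracy in Asia, should also contribute to the advancement of AI technology and take part in the global discussion around it. That is exactly why, from Japan to the world, I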

For months, I've been quietly building a prototype of something just because I want it to exist. Papyrus is a word processor, editor, proofreader, fact-checker, deep researcher, brainstorming partner, all in one. It takes your rough draft and helps you skip three revisions. Now I'm considering pivoting my whole team to build it out, but first, I need your vote. Your email is your vote. LINK IN COMMENTS. I don't even care if you use some email you never look at and just use for sign ups. To me one email is one vote and says, go ahead and build this damn thing out and make it awesome. I built it because today's apps are the bloated dial-ups of writing (Word, GDocs). They've got a hundred freaking buttons I don't need and bury the ones I do need. Or they're AI marketing slop toys that promise to read my mind and pump out garbage. They just don't get the real work of writing and they don't get me. I want a clean, focused space with a real AI co-pilot. I don't need it to do all the writing for me, just like I don't need Claude Code to do all the code for me. I want to work with it. I want a writing partner that acts like a full writing team on-call 24/7: proofreading, fact-checking, and running deep research in the background while I focus on the hard parts. I'm tired of cutting and pasting between a dozen tools so I started writing this thing. But won't the big guys just build something like this? Sure. But it will just bolt your ass into their ecosystem. Google Docs will force Gemini on you. Whatever office suite OpenAI pumps out will only use GPT. I want to use any model. Open or closed. They're commodities. The app is the thing. I want the best tool for the job, GPT-5, Kimi, Qwen, Claude, whatever comes tomorrow. I want a fluid, flexible workspace. I'm building this thing for people who've got critical thinking and who build, who don't want to outsource every damn thing to the machine. So if that's you and you believe this should exist, I really need your vote.
Tell me to build it and I'll go all-in and make it a reality. Thanks for giving me a moment of your time.

We are honored that Sakana AI’s CEO (@hardmaru) has been named to the TIME 100 AI list. We’re truly grateful for the recognition and will continue our mission to build a frontier AI company in Japan. Thank you for your support! @TIME #TIME100AI Full List: https://t.co/dL9kx1ilej

Today, we're releasing The Circuit Analysis Research Landscape: an interpretability post extending & open sourcing Anthropic's circuit tracing work, co-authored by @Anthropic, @GoogleDeepMind, @GoodfireAI @AiEleuther, and @decode_research. Here's a quick demo, details follow: ⤵️ https://t.co/wVIlsOVyIF