Your curated collection of saved posts and media

Only President Trump could convene so many tech leaders in one place. We had a constructive conversation about how to grow the economy with new infrastructure spending. This will benefit not only software companies but manufacturing, energy, construction, the trades and workers. https://t.co/VK1fdHlxSq

"I'm thrilled to announce that the 2026 G20 conference... will be held in one of our country's greatest cities — beautiful Miami, Florida." @POTUS 🇺🇸 https://t.co/fziGJ5aqo4

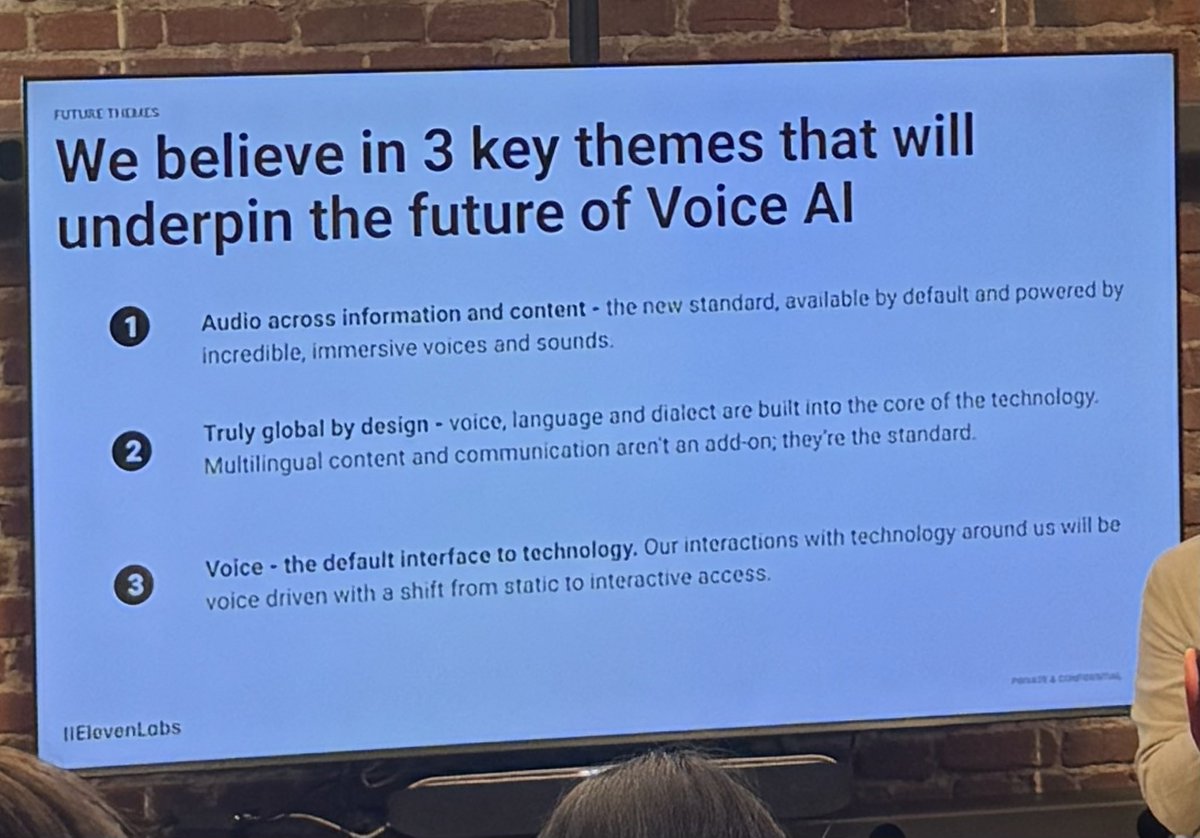

A great builder night at @elevenlabsio, especially love learning about the psychology of voice. Such a core part of who we are and how we experience the world. https://t.co/lWPW0eOSUP

@maxniederhofer @elevenlabsio @matistanis packed house, also short lol https://t.co/PmoMemOZzD

“Mom how did we get so rich?” “Your dad listened to Nikita and posted about plumbing for 180 days.” https://t.co/2JDah5YvhG

@alanaagoyal same as a hair, a little trim and some product and it’ll be great. We the Wild has great products if you like a more waxy look. https://t.co/Cq9oKgBsUT

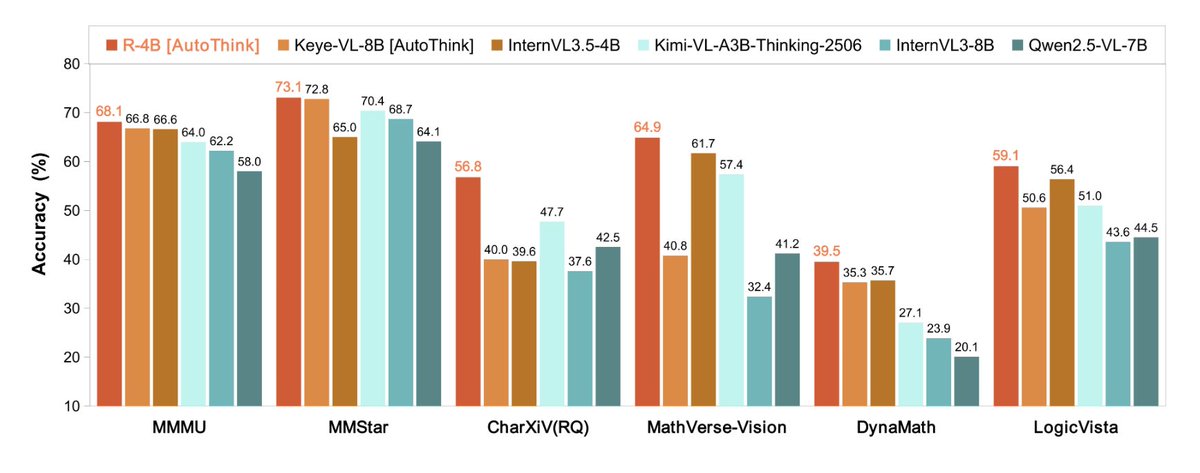

best small vision LM with reasoning has dropped on @huggingface 🔥 Tencent dropped R-4B, small vision LM that claims sota with Apache 2.0 license 💗 the model enables different thinking options and transformers support through custom code! https://t.co/xUCM010flp

we hacked Wan 2.2 and discovered that it does first-and-last-frame filling; it works out of the box on 🧨 diffusers. i've built an app for it on @huggingface Spaces (which is powering our nano banana video mode too 🍌 🎬) https://t.co/40yRzpWCvN

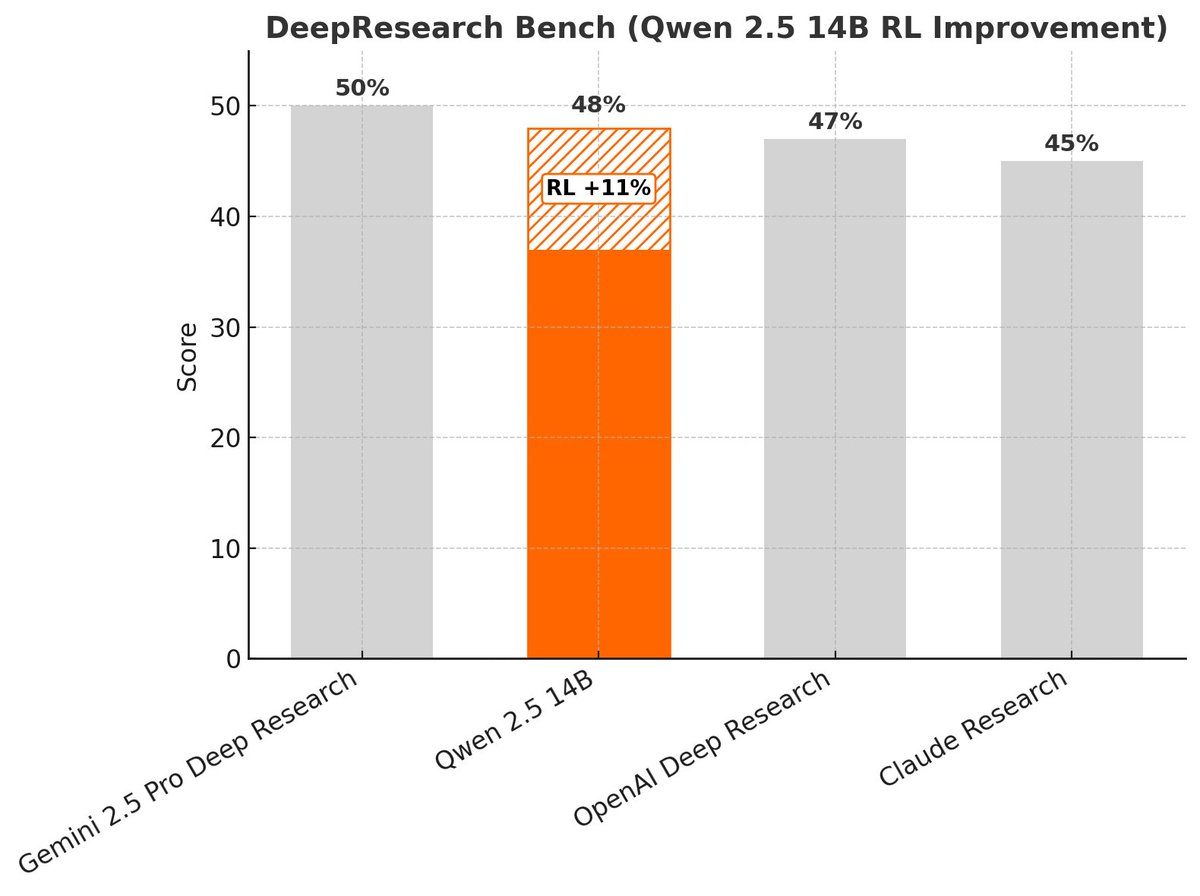

🚨 We’ve just published a recipe to train a frontier-level deep research agent using RL. With just 30 hours on an H200, any developer can now beat Sonnet-4 on DeepResearch Bench using open-source tools. (Thread 🧵) https://t.co/Ul7htDkmPX

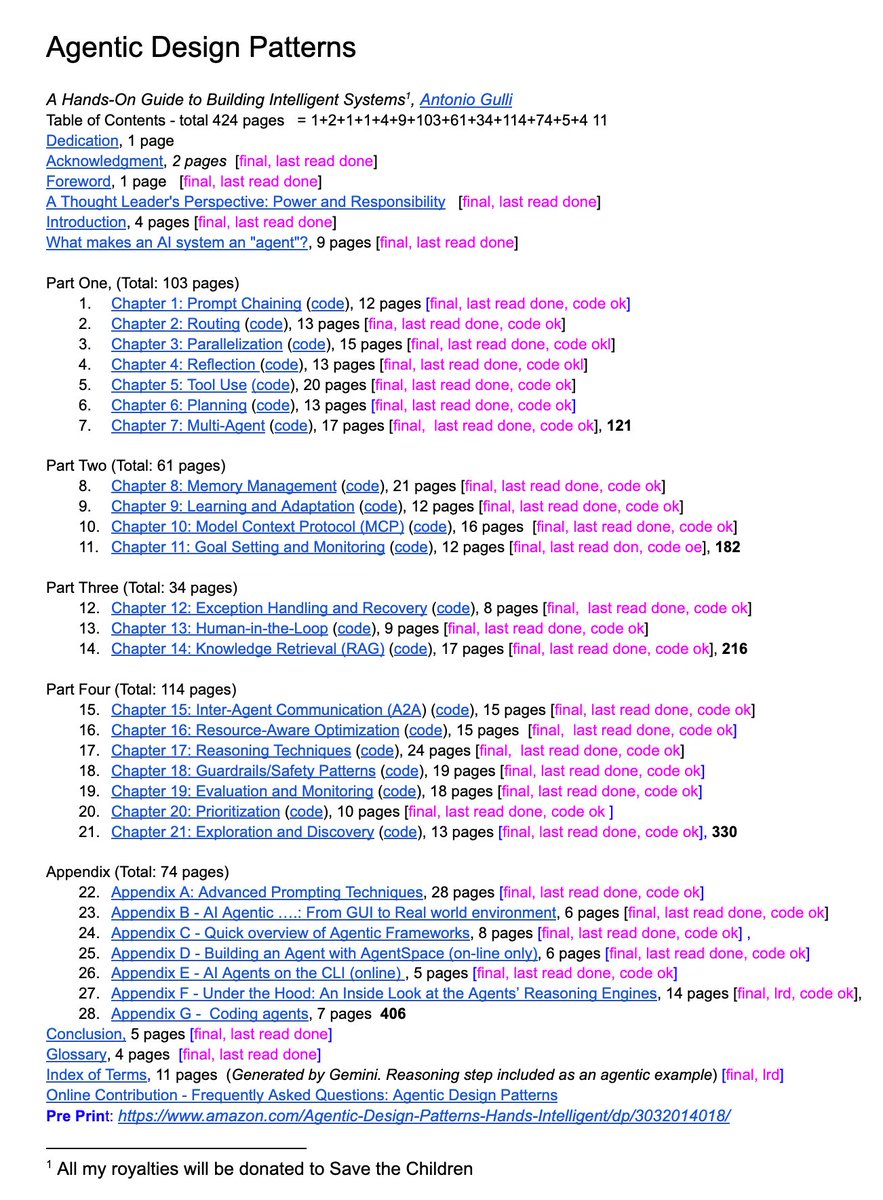

a senior engineer at google just dropped a 400-page free book on docs for review: agentic design patterns. the table of contents looks like everything you need to know about agents + code: > advanced prompt techniques > multi-agent patterns > tool use and MCP > you name it https://t.co/DIIaDOpdGj

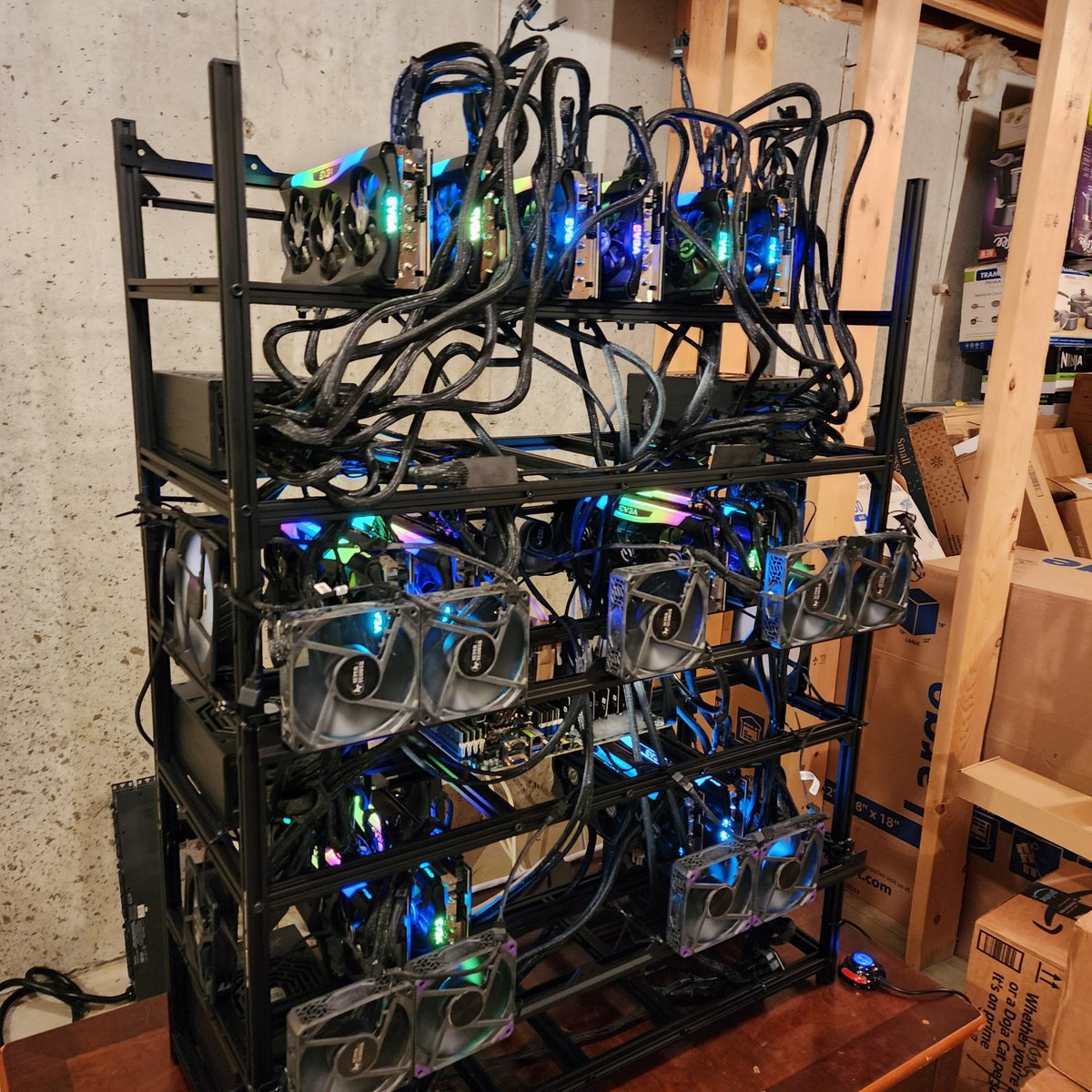

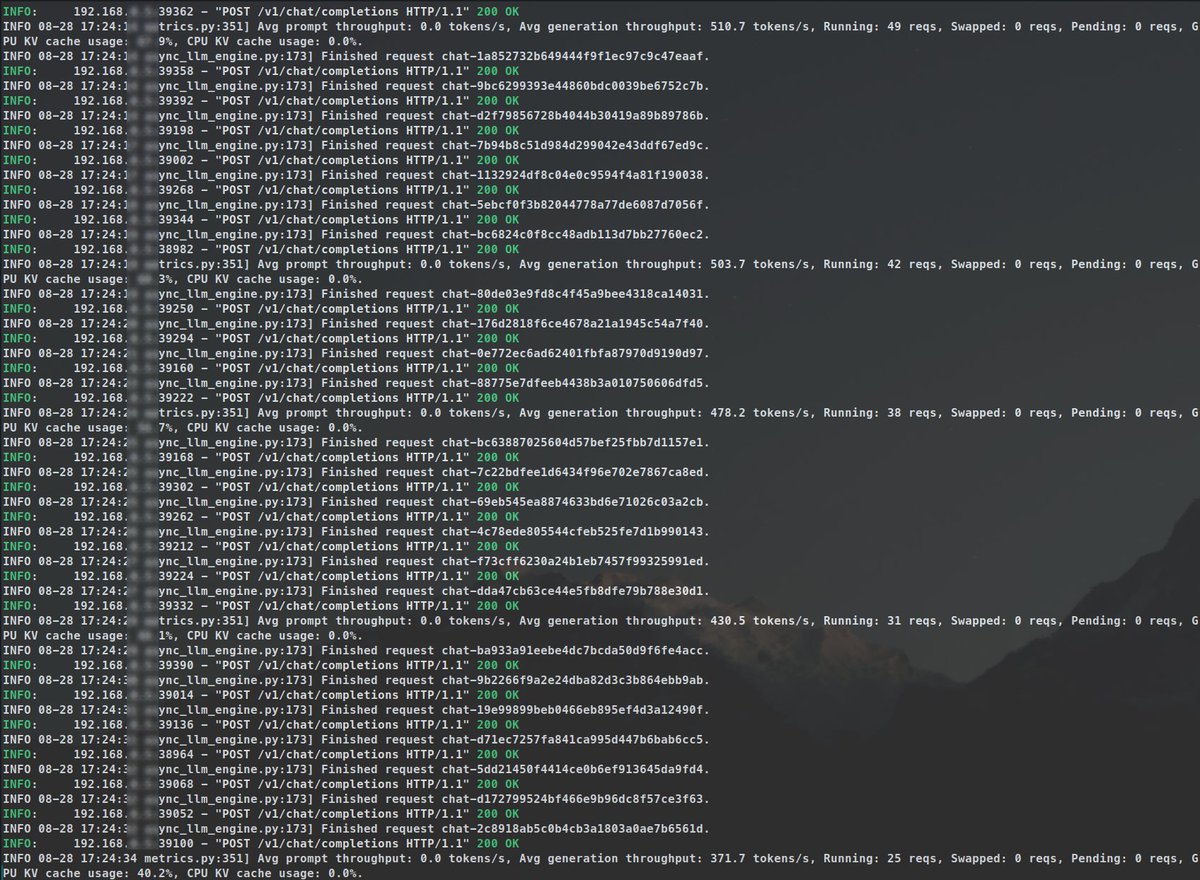

why don't i like ollama & what do i use on my AI server? a thread of blogposts where i go over: > my ai homelab setup > what are inference engines > how local llms actually work > why i don't recommend ollama > what do i use on my AI server > the different use cases https://t.co/c1oqvvAUcU

COMING SOON: The LLM GPU Build Guide > from 1x to 8x GPUs > inference, training, cpu offload, and rackmounts > budgets from $2K to $15K+ > full parts lists, tradeoffs, where to buy, and everything learned the hard way > PCIe lanes & bandwidth, why 2^n # of GPUs matter & more https://t.co/Zu6D7DfodC

Today, we are releasing FineVision, a huge open-source dataset for training state-of-the-art Vision-Language Models: > 17.3M images > 24.3M samples > 88.9M turns > 9.5B answer tokens Here are my favourite findings: https://t.co/pfP8OMBvmH

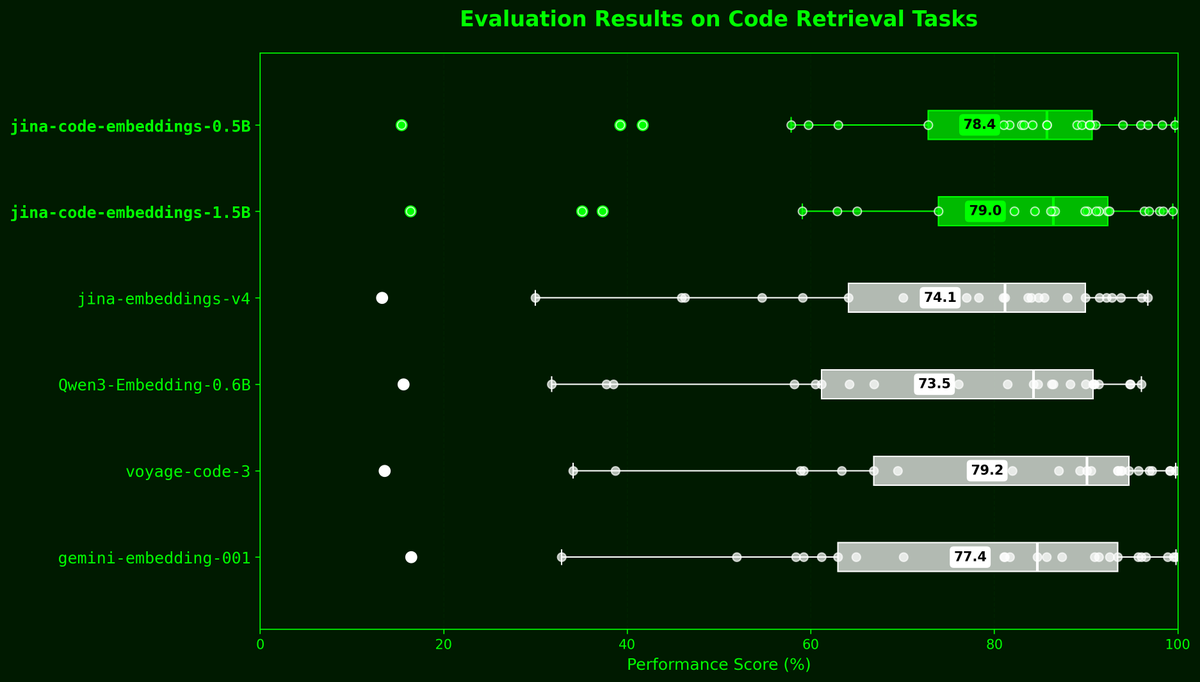

Today we're releasing jina-code-embeddings, a new suite of code embedding models in two sizes (0.5B and 1.5B parameters), along with 1- to 4-bit GGUF quantizations for both. Built on the latest code generation LLMs, these models achieve SOTA retrieval performance despite their compact size. They support over 15 programming languages and 5 tasks: nl2code, code2code, code2nl, code2completions and qa.

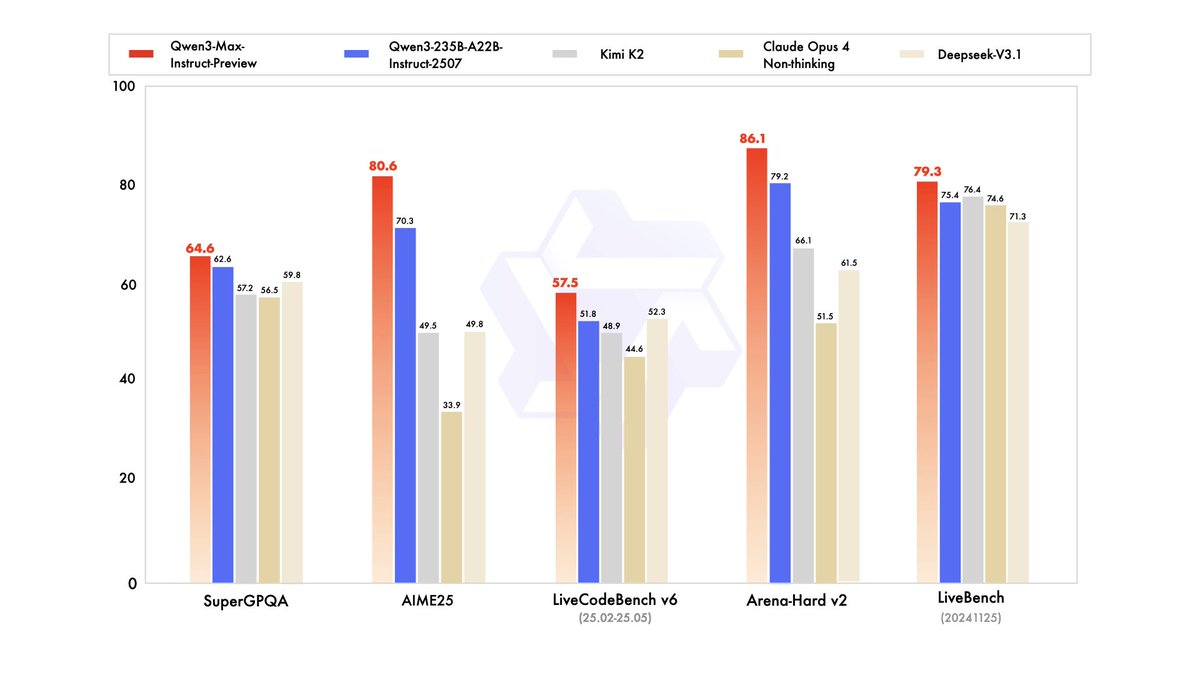
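
Embedding-based code search (for example the nl2code task) typically ranks candidate snippets by cosine similarity between a query embedding and each snippet's embedding. A minimal sketch, assuming the embeddings have already been computed by some model; the 3-dimensional vectors and snippet names below are hypothetical and do not come from jina-code-embeddings.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank(query: list[float], corpus: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Return (snippet_id, score) pairs sorted from most to least similar."""
    scored = [(name, cosine(query, vec)) for name, vec in corpus.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Hypothetical pre-computed embeddings (real models output far more dimensions).
corpus = {
    "read_json_file": [0.9, 0.1, 0.0],
    "http_get_request": [0.1, 0.9, 0.1],
    "sort_list_desc": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # stand-in for the embedding of "load a JSON file"

for name, score in rank(query, corpus):
    print(f"{name}: {score:.3f}")
```

With a real model, only the vectors change; the ranking step stays the same.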

Big news: Introducing Qwen3-Max-Preview (Instruct) — our biggest model yet, with over 1 trillion parameters! 🚀 Now available via Qwen Chat & Alibaba Cloud API. Benchmarks show it beats our previous best, Qwen3-235B-A22B-2507. Internal tests + early user feedback confirm: stronger performance, broader knowledge, better at conversations, agentic tasks & instruction following. Scaling works — and the official release will surprise you even more. Stay tuned! Qwen Chat: https://t.co/V7RmqMaVNZ Alibaba Cloud API: https://t.co/zjCKdWee5v

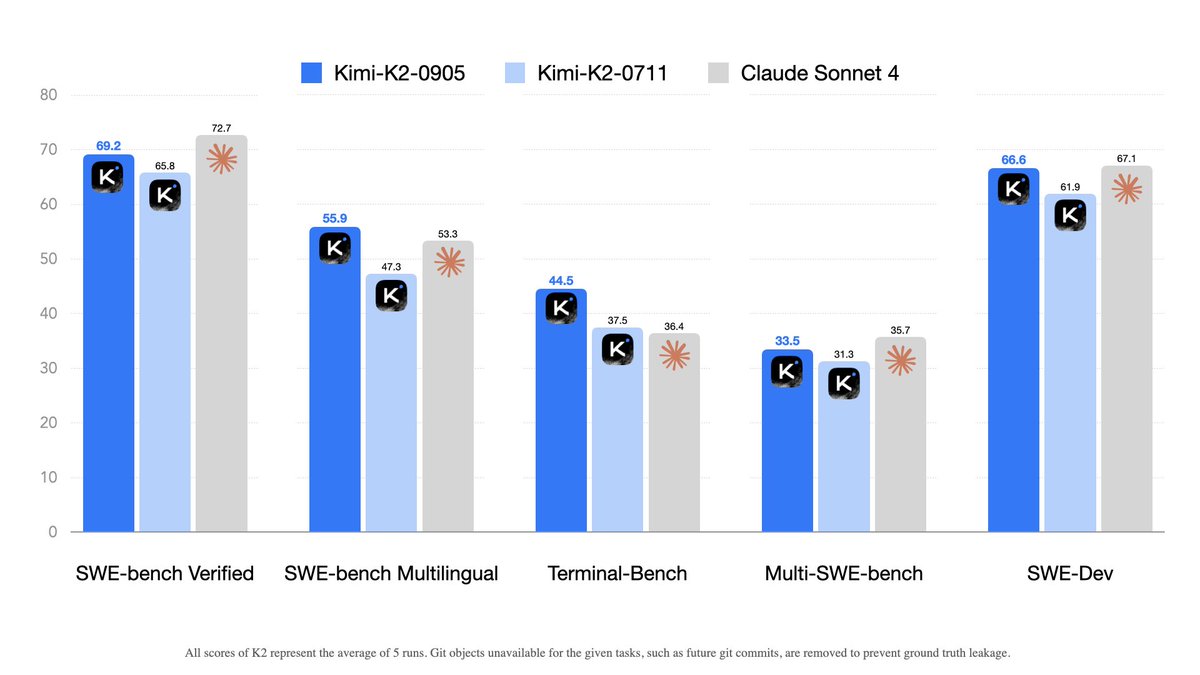

Kimi K2-0905 update 🚀 - Enhanced coding capabilities, esp. front-end & tool-calling - Context length extended to 256k tokens - Improved integration with various agent scaffolds (e.g., Claude Code, Roo Code, etc) 🔗 Weights & code: https://t.co/SsFKTnWslD 💬 Chat with new Kimi K2 on: https://t.co/2bLWEHF6az ⚡️ For 60–100 TPS + guaranteed 100% tool-call accuracy, try our turbo API: https://t.co/EOZkbOwCN4

New Stanford CS231N Deep Learning for Computer Vision lectures taught by Professor Fei-Fei Li, Assistant Professors Ehsan Adeli and Justin Johnson, and Zane Durante are now available! Watch the complete playlist here: https://t.co/yZZhxheLMa

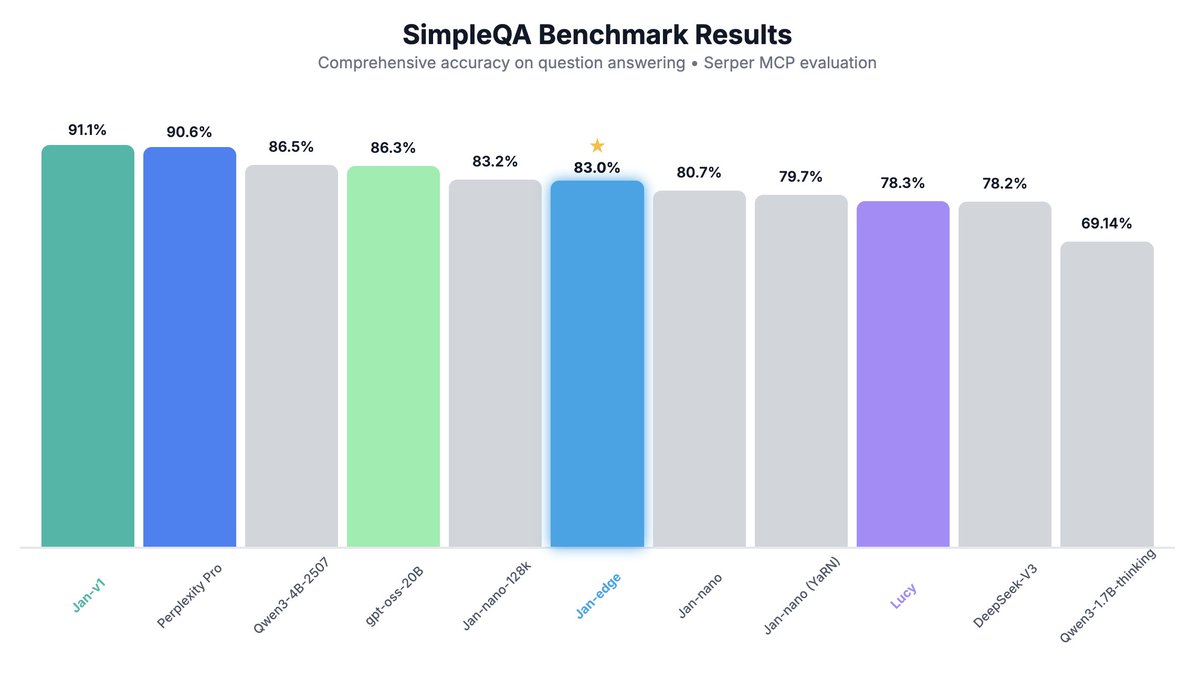

Meet Jan-v1-edge: an experimental 1.7B distilled model for Perplexity-style search. Jan-v1-edge is our lightweight distillation experiment, derived from Jan v1. We're testing how well web search and reasoning can transfer into a smaller 1.7B parameter model that runs on edge devices. Performance - 83% SimpleQA accuracy, close to Jan-nano-128k while being lighter - Outperforms Qwen3-1.7B Thinking on SimpleQA To experiment with it, find the GGUF model on @huggingface, click Use this model and select Jan. To enable search in Jan: go to Settings -> MCP Servers -> enable or add a search-related MCP (SearXNG, Serper, Exa, etc.). - Jan-v1-edge: https://t.co/SgHEcdqpQi - Jan-v1-edge GGUF: https://t.co/rnAyvFBNzA Credit to the @Alibaba_Qwen team for Qwen3-1.7B Thinking and @ggerganov for llama.cpp.

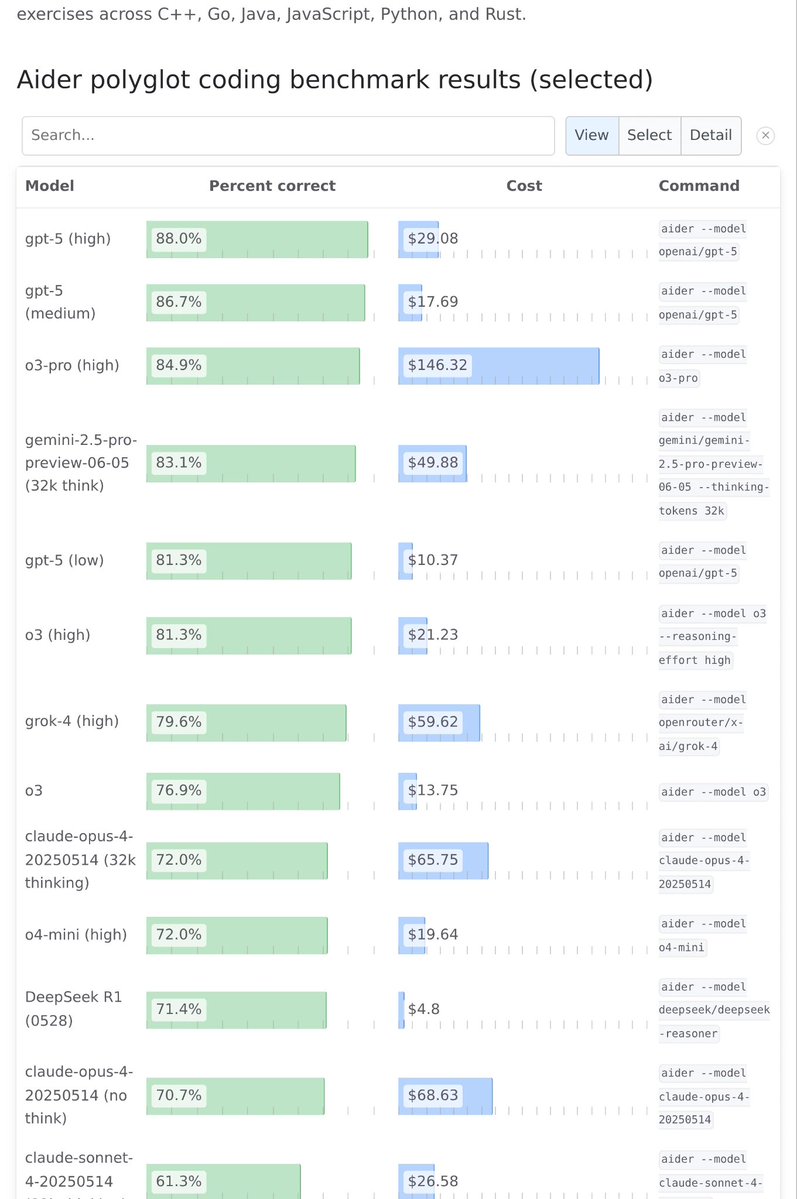

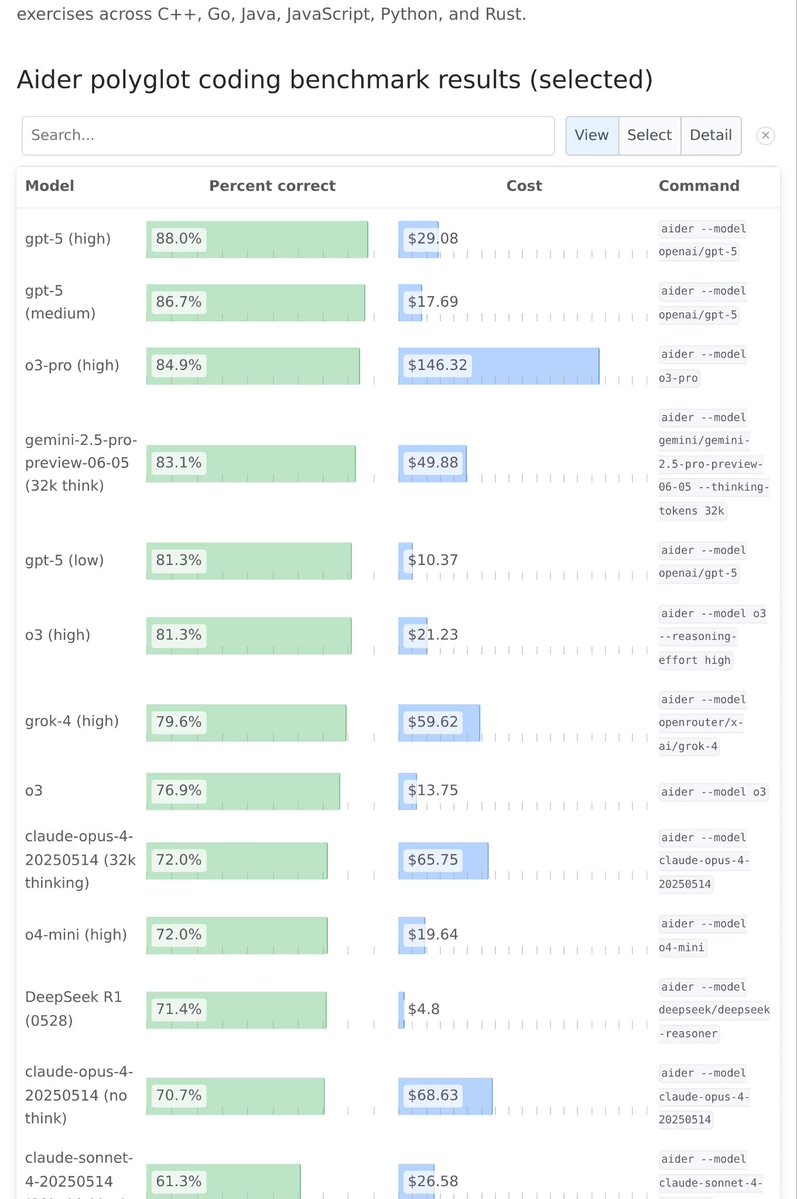

Aider leaderboard has been updated with @OpenAI GPT-5 scores https://t.co/VUBSBHqLbQ

Ahead-of-time compilation comes to ZeroGPU, resulting in a 1.7x speedup for compute-bound tasks (such as diffusion models). It's a perfect time to try and make those H200s go brrr https://t.co/vgCOP0CFnE

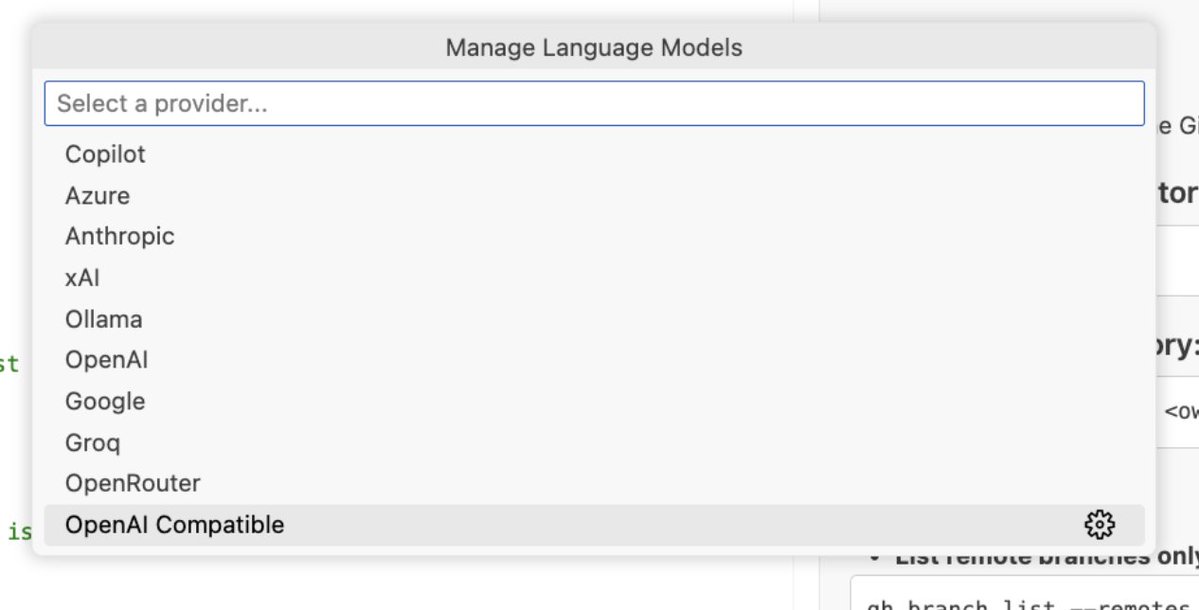

VS Code adds support for custom OAI-compatible endpoints. This is a big win for local AI, as it allows us to use any local model provider without vendor lock-in. Big thanks to the VS Code devs and especially @IsidorN for listening to the community feedback and adding this option! https://t.co/3aFawjtWwM

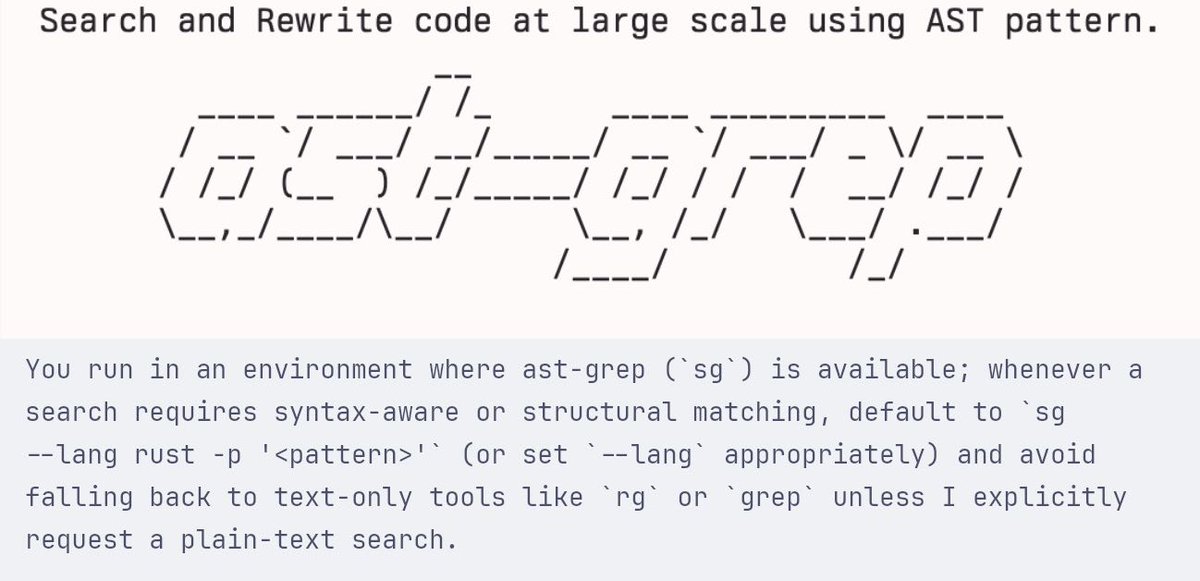
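
"OpenAI-compatible" generally means the server accepts the same request shape as OpenAI's Chat Completions API: a POST to `/v1/chat/completions` with a JSON body containing `model` and `messages`. A minimal sketch of such a request using only the Python standard library; the base URL and model name are placeholders (a local server's real port and model IDs will differ), and the request is only built here, not sent.

```python
import json
import urllib.request

def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions-style request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Placeholder endpoint and model name for a local server (hypothetical values).
req = build_chat_request("http://localhost:8000", "my-local-model", "Explain this function.")
print(req.full_url)
print(req.get_method())
# To actually send it: urllib.request.urlopen(req), which returns JSON with a "choices" list.
```

Because every compatible server accepts this same shape, switching providers is mostly a matter of changing the base URL and model name.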

oh lord, how did i not think of this before? giving claude ast-grep for code searches and refactors has turned it into an unstoppable coding monster https://t.co/ASsi4CEgGK

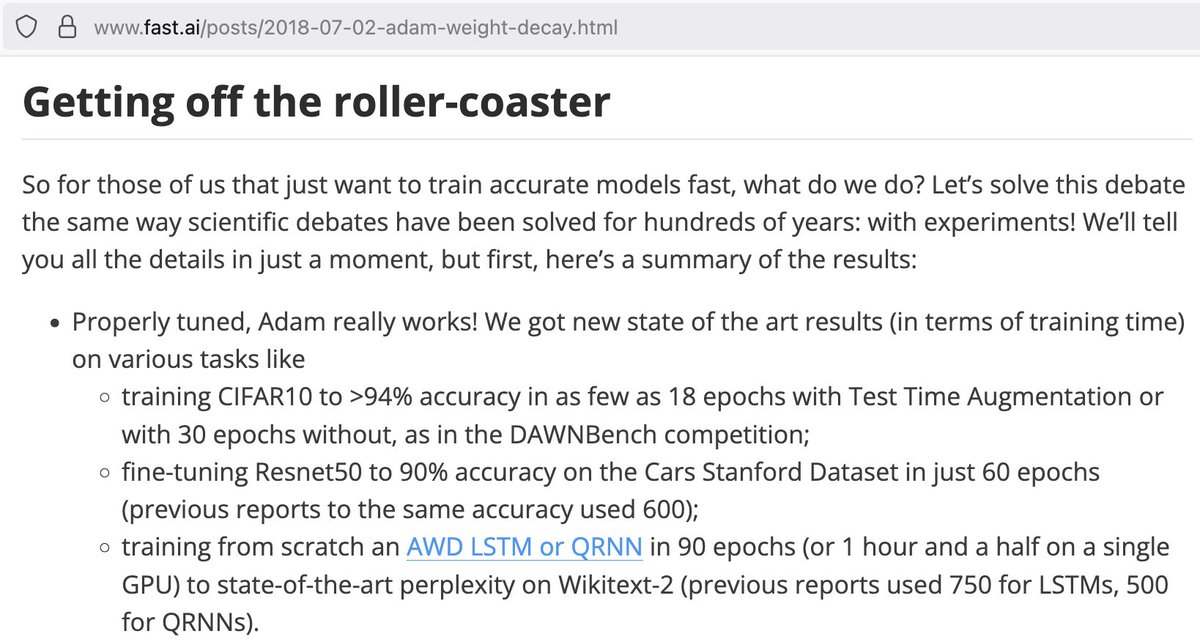

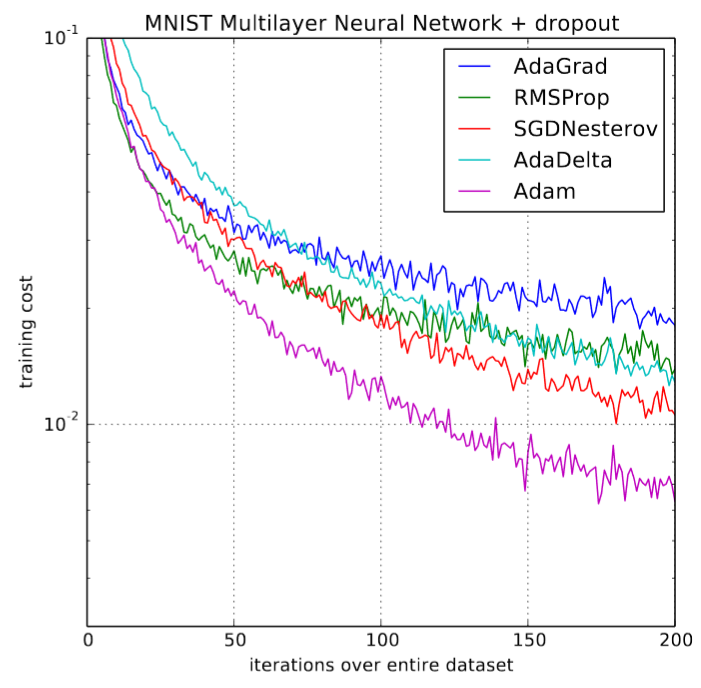

Guess who was the 1st to point out that Adam can be used for pretty much everything? (Answer: it was @fastdotai back in 2018 -- @GuggerSylvain's first research project in fact!) https://t.co/dzbvL2Qta8 https://t.co/usIRQt1hUg

We did a very careful study of 10 optimizers with no horse in the race. Despite all the excitement about Muon, Mars, Kron, Soap, etc., at the end of the day, if you tune the hyperparameters rigorously and scale up, the speedup over AdamW diminishes to only 10% :-( Experiments a
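
For reference, the AdamW baseline in that comparison is Adam with decoupled weight decay: the decay term shrinks the weights directly instead of being added to the gradient. A minimal pure-Python sketch of one update step on a list of scalar parameters; the default hyperparameters are common choices, not the ones used in the study.

```python
import math

def adamw_step(params, grads, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=0.01):
    """One AdamW step. m and v are updated in place; t is the 1-based step count."""
    new_params = []
    for i, (p, g) in enumerate(zip(params, grads)):
        # Exponential moving averages of the gradient and the squared gradient.
        m[i] = beta1 * m[i] + (1 - beta1) * g
        v[i] = beta2 * v[i] + (1 - beta2) * g * g
        # Bias correction for the zero-initialised moment estimates.
        m_hat = m[i] / (1 - beta1 ** t)
        v_hat = v[i] / (1 - beta2 ** t)
        # Decoupled weight decay: shrink p directly, not through the gradient.
        p = p - lr * (m_hat / (math.sqrt(v_hat) + eps) + weight_decay * p)
        new_params.append(p)
    return new_params

# Usage: minimise f(x) = x^2 (gradient 2x) starting from x = 5.
x, m, v = [5.0], [0.0], [0.0]
for t in range(1, 501):
    x = adamw_step(x, [2 * x[0]], m, v, t, lr=0.1, weight_decay=0.0)
print(x[0])
```

With a zero gradient the update reduces to `p * (1 - lr * weight_decay)`, which is what "decoupled" refers to.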

Protip: if it's in a golden case, it's hype and not a real product. 4 overpriced crammed GPUs that throttle at 300W vs 4 GPUs running < 80C at 600W in tinybox green v2. https://t.co/ebrMfo9JEJ

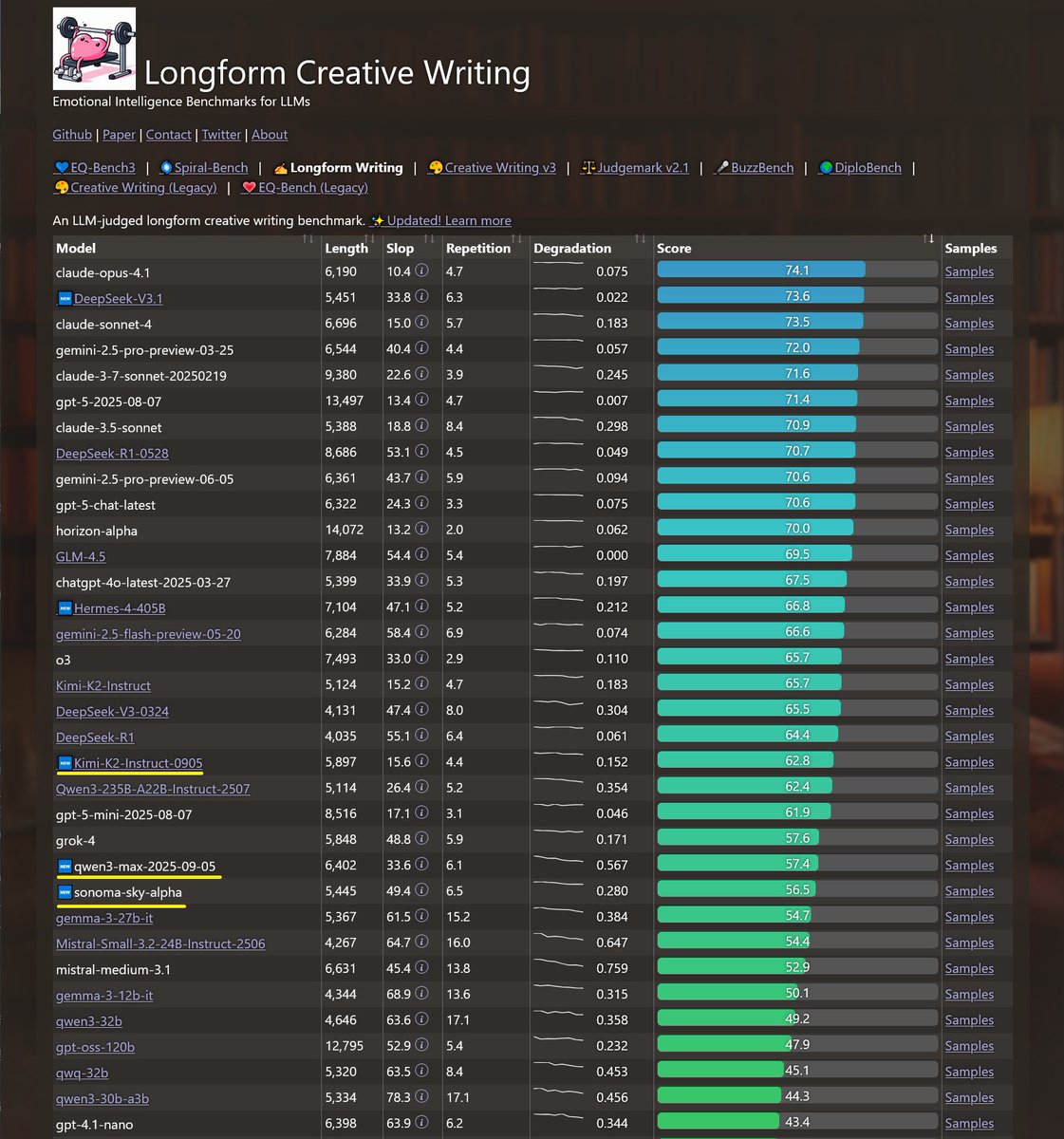

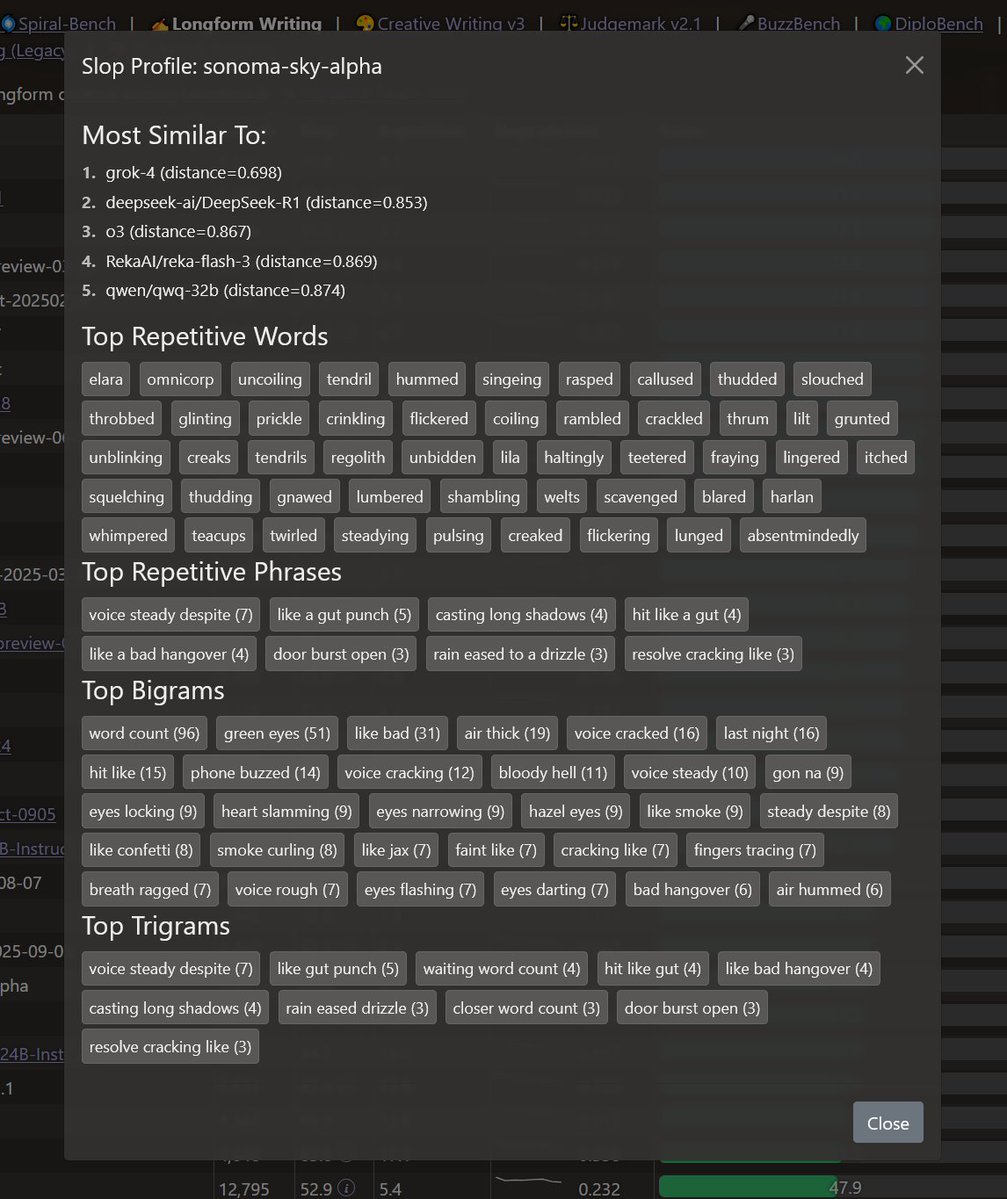

Recent models tested on longform writing: Sonoma-sky-alpha: cloaked model appears to be grok. Qwen3-max: has the same long context degradation issue as qwen3-235b: converges on super short 2-3 word paragraphs. Kimi-k2-0905: slightly worse than k2 but ~within margin of error https://t.co/3zsRuQwc6m

https://t.co/YsOlgQhURn

Real-time video generation is finally real — without sacrificing quality. Introducing Self-Forcing, a new paradigm for training autoregressive diffusion models. The key to high quality? Simulate the inference process during training by unrolling transformers with KV caching.
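
The core idea, conditioning each training step on the model's own earlier outputs rather than on ground-truth frames, can be illustrated with a toy autoregressive rollout. This is a deliberately simplified sketch and not the Self-Forcing implementation: the "frames" are floats, the "model" is a hypothetical one-line predictor, and a growing Python list stands in for the KV cache (each step appends one entry instead of re-encoding the whole prefix).

```python
from typing import Callable, List

Model = Callable[[List[float]], float]

def rollout(model: Model, first: float, targets: List[float], self_forcing: bool) -> List[float]:
    """Generate len(targets) steps autoregressively.

    With self_forcing=False (teacher forcing) the context is extended with the
    ground-truth frame; with self_forcing=True it is extended with the model's
    own prediction, as happens at inference time.
    """
    cache = [first]  # stand-in for a KV cache: one entry appended per step
    preds = []
    for target in targets:
        pred = model(cache)
        preds.append(pred)
        cache.append(pred if self_forcing else target)
    return preds

def loss(preds: List[float], targets: List[float]) -> float:
    """Mean squared error over the whole rollout."""
    return sum((p - t) ** 2 for p, t in zip(preds, targets)) / len(targets)

# Hypothetical "model": predicts the last frame plus a slightly wrong step of 0.9.
toy_model: Model = lambda ctx: ctx[-1] + 0.9
targets = [1.0, 2.0, 3.0, 4.0]  # ground truth increases by 1.0 per frame

print(loss(rollout(toy_model, 0.0, targets, self_forcing=False), targets))
print(loss(rollout(toy_model, 0.0, targets, self_forcing=True), targets))
```

The teacher-forced loss only sees the one-step error, while the self-forced loss also sees how that error compounds across the rollout, roughly the train/inference mismatch that training on self-generated context aims to address.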

Block Diffusion: Interpolating Between Autoregressive and Diffusion Language Models https://t.co/CSgo0NlxfT

GEPA: Reflective Prompt Evolution Can Outperform Reinforcement Learning https://t.co/5fxnQcbRuo via @YouTube