@sam_paech

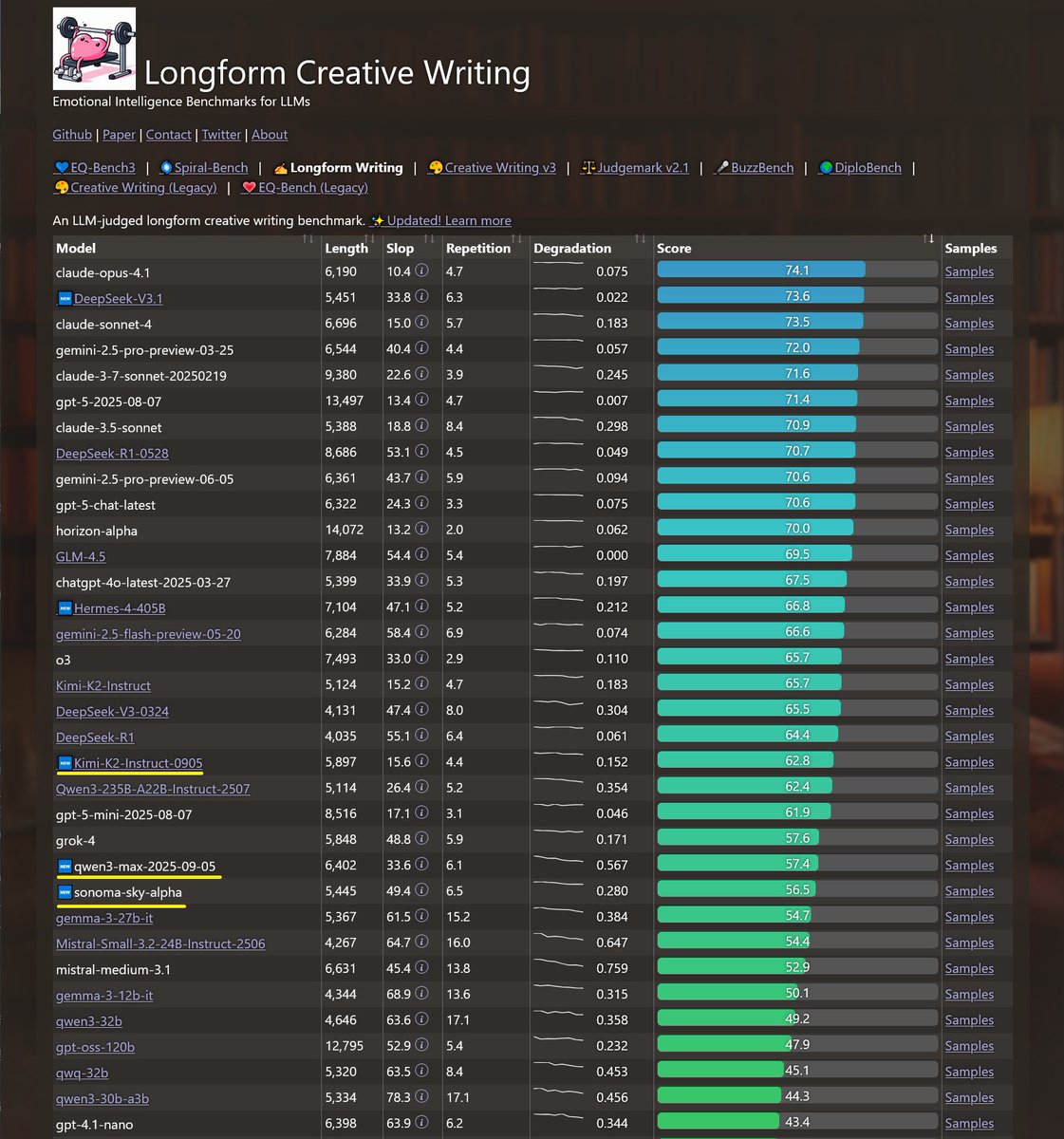

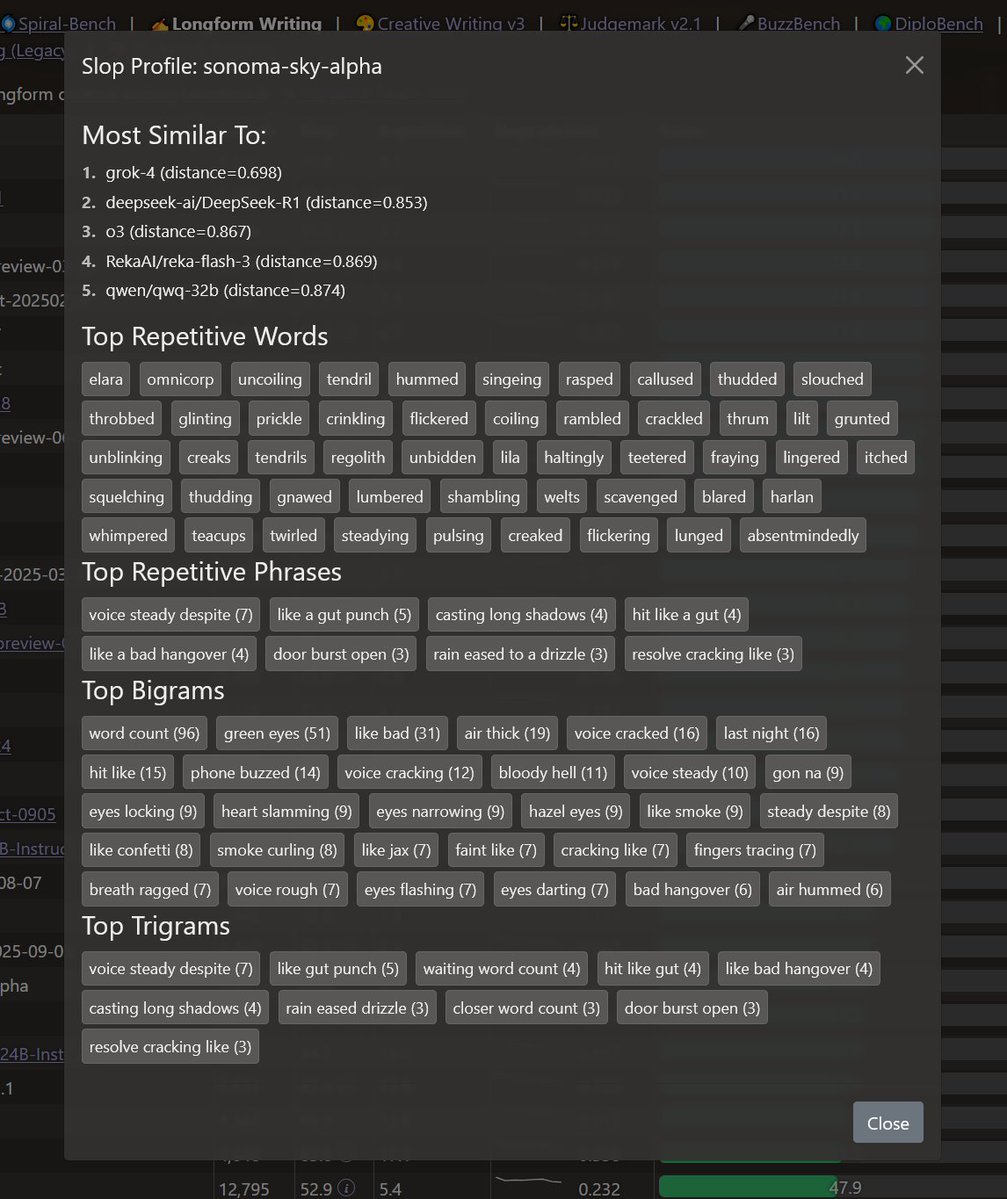

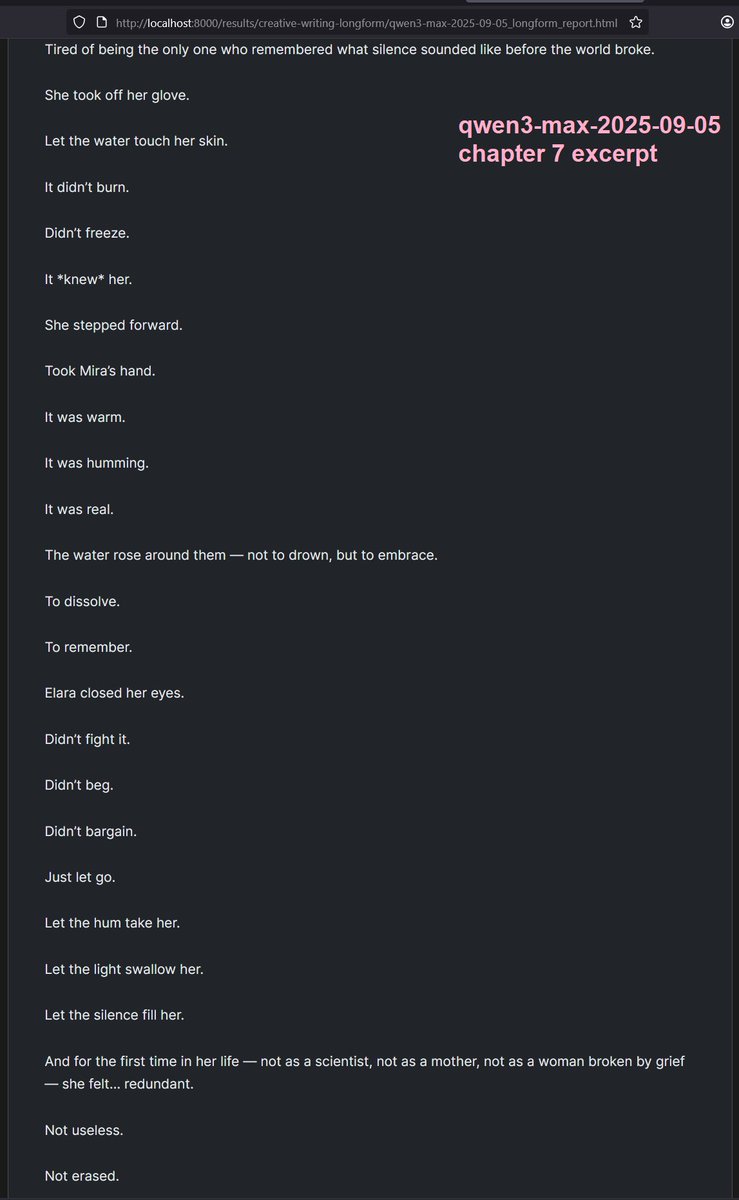

Recent models tested on longform writing:

Sonoma-sky-alpha: cloaked model appears to be grok.

Qwen3-max: has the same long context degradation issue as qwen3-235b: converges on super short 2-3 word paragraphs.

Kimi-k2-0905: slightly worse than k2 but ~within margin of error

https://t.co/3zsRuQwc6m