@JinaAI_

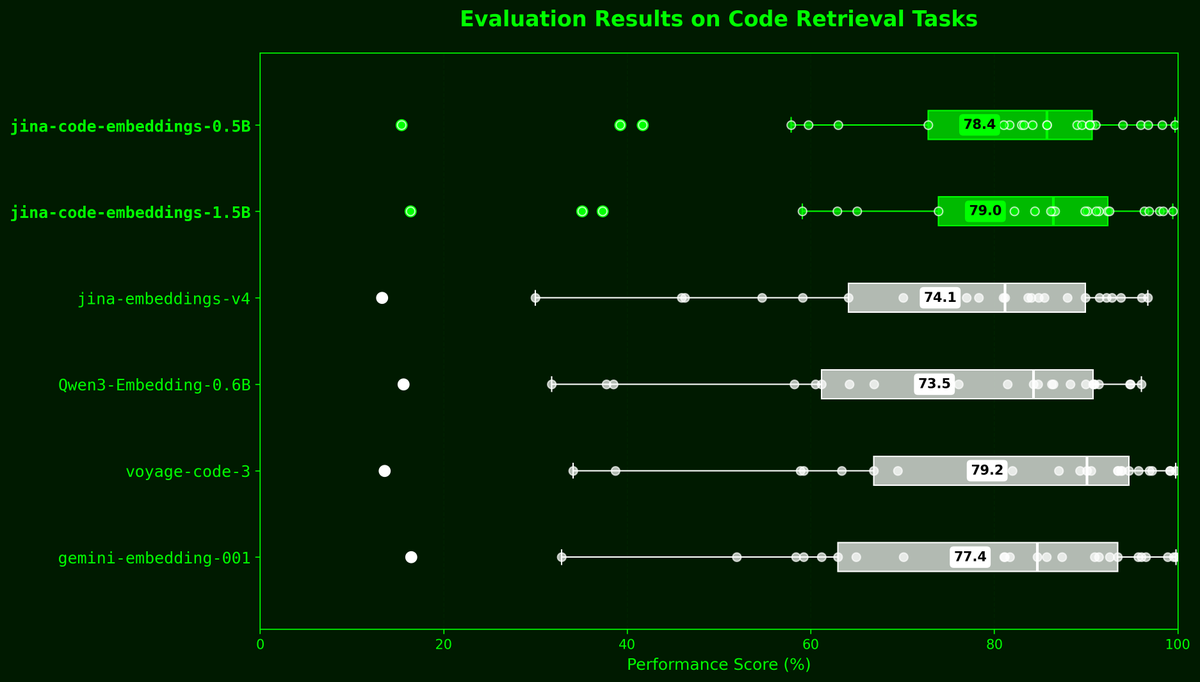

Today we're releasing jina-code-embeddings, a new suite of code embedding models in two sizes (0.5B and 1.5B parameters), along with 1- to 4-bit GGUF quantizations for both. Built on the latest code generation LLMs, these models achieve SOTA retrieval performance despite their compact size. They support over 15 programming languages and 5 tasks: nl2code, code2code, code2nl, code2completions and qa.