Your curated collection of saved posts and media

Microsoft @Windows: "We're preloading File Explorer so it launches faster." File Pilot: Compiles in less than 3 seconds. Launches instantly. Eats whole drive for breakfast. Speed isn't a background task, it's design. https://t.co/v4OY47UD4h https://t.co/YicyyvhHlI

Microsoft admits File Explorer is slow in Windows 11, and it’s going to preload it in the background to help improve launch performance. “This shouldn’t be visible to you, outside of File Explorer hopefully launching faster when you need to use it,” Microsoft confirmed. If you

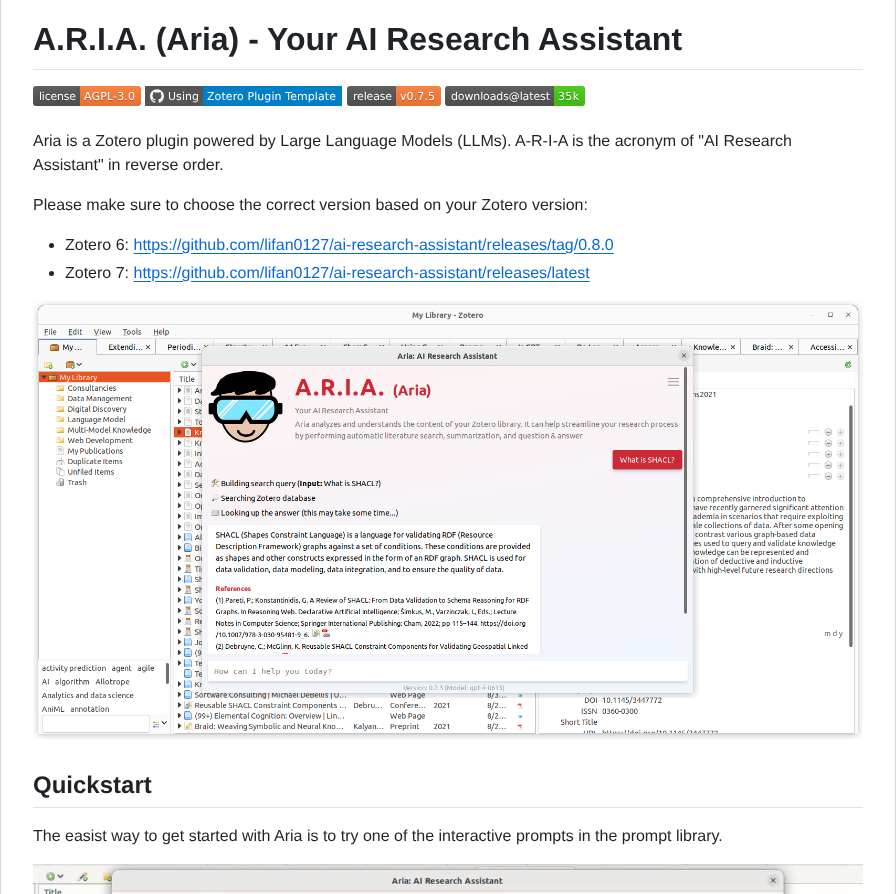

Zotero plugin powered by LLMs https://t.co/K8EnyJDHAF https://t.co/kkCvLP5qZR


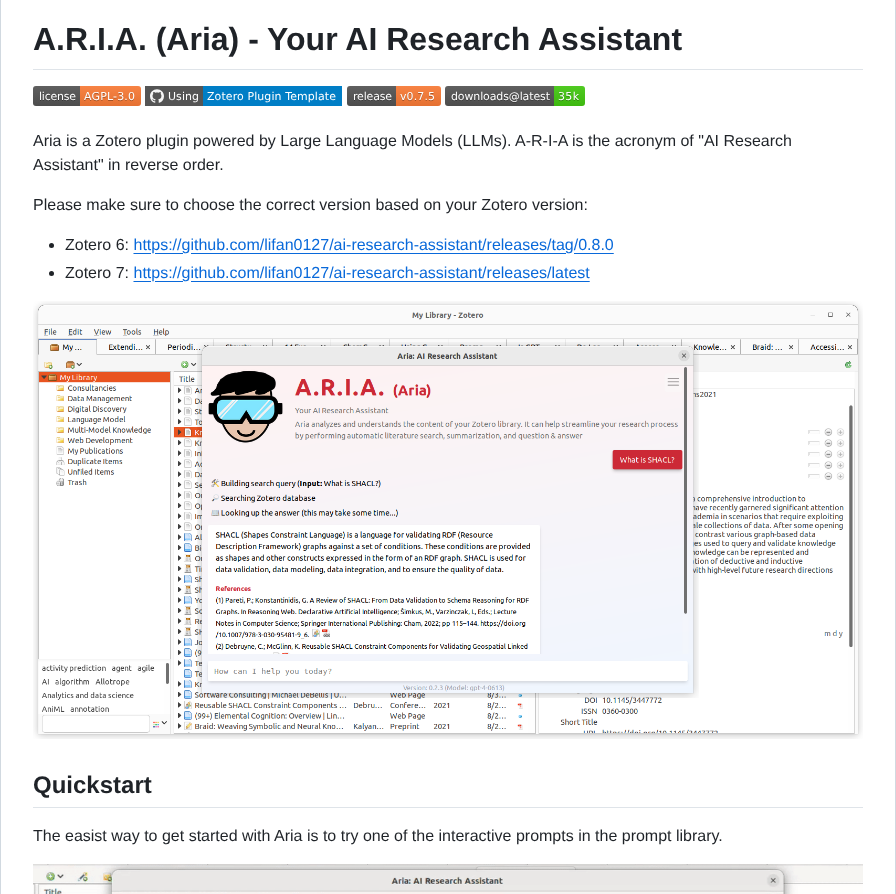

Most people assume you need a massive dataset to distill flow models. We challenge that. Is data actually necessary? Or perhaps it is a liability? Introducing FreeFlow: We achieve SOTA (1.49 FID on ImageNet-512) 1-step image generation without a single data sample. 🧵👇[1/n] https://t.co/MANGQixRjV

@TrungTPhan Slavery with extra steps https://t.co/3BTGgFfeT9

@martin_casado https://t.co/a7D3OHsgdv

@martin_casado Full meme for cred https://t.co/0IiR1ZAtFa

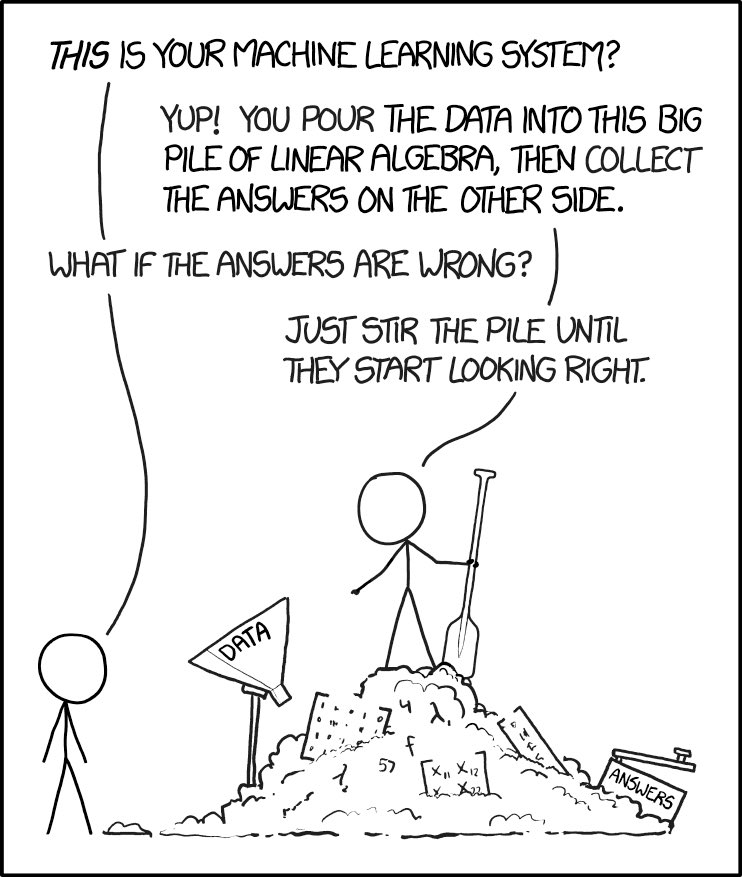

@karpathy @matejhladky_dev In hindsight this comic wasn’t a joke https://t.co/AqesrCn8EL


gm 🖤 https://t.co/jG8ej74YPu

@lcamtuf 🤔 https://t.co/IoWAhdctdh

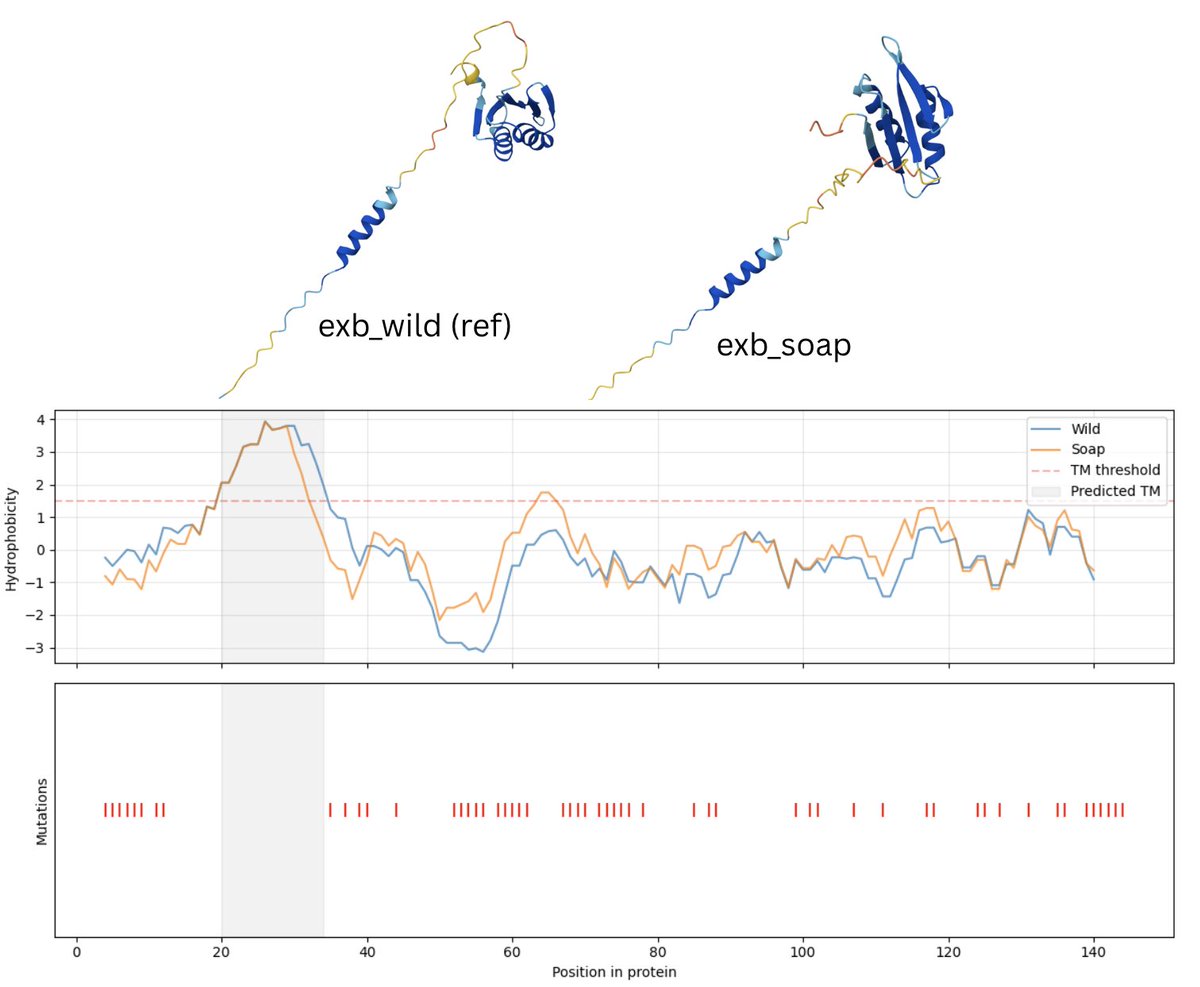

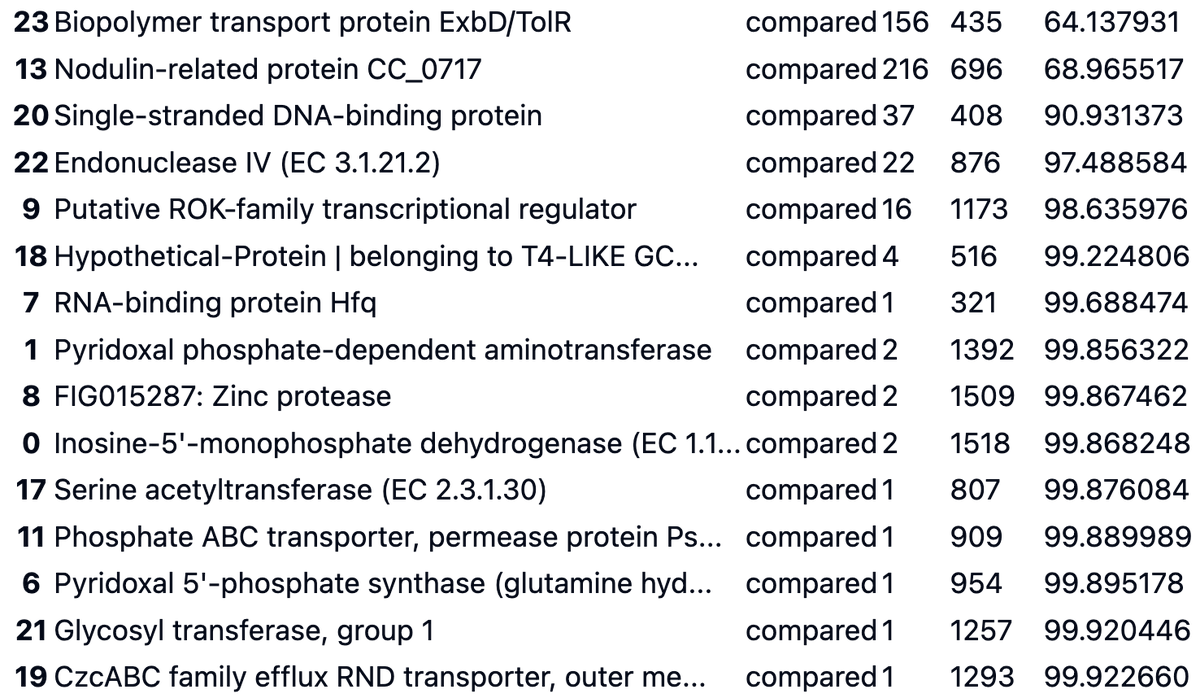

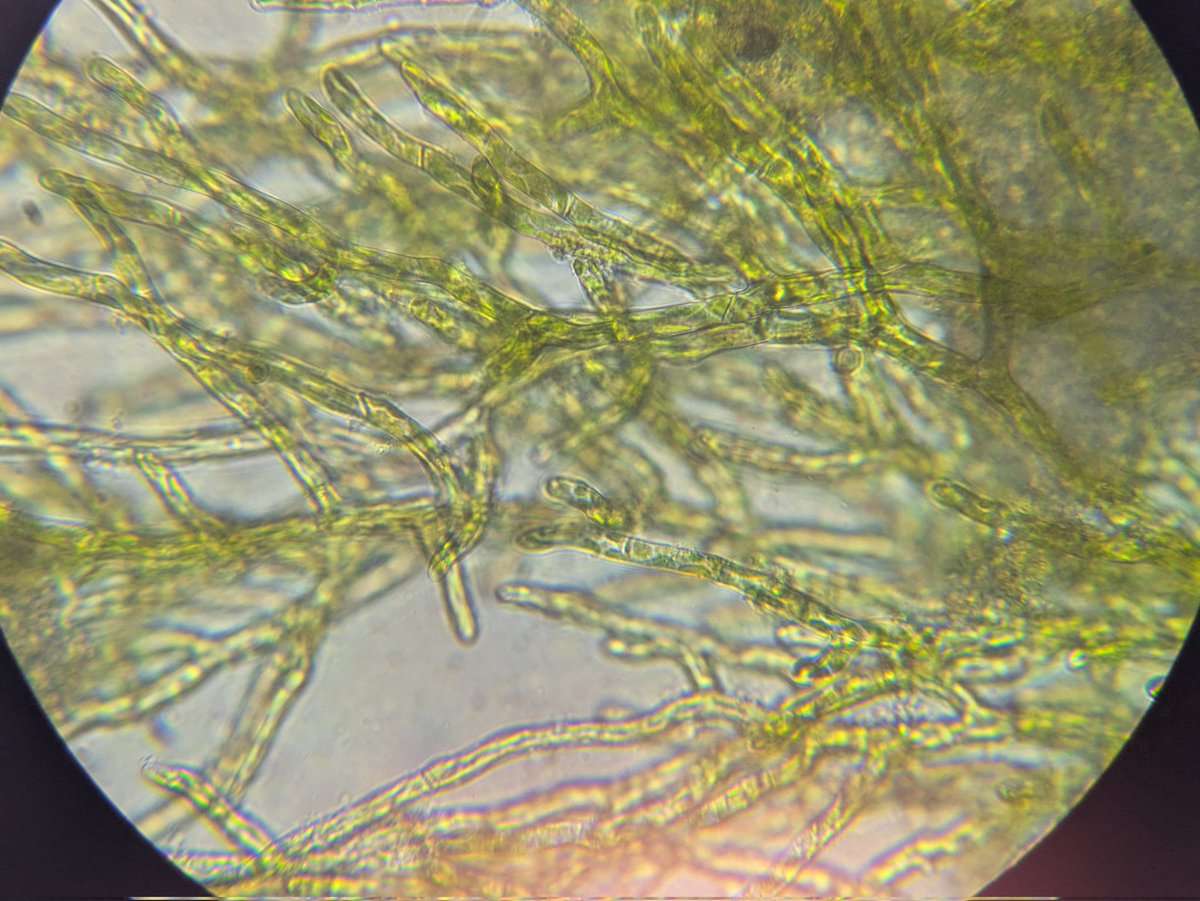

Comparing genes from a genome of E. flagellatus found in soil to those from E.f. growing in @ATinyGreenCell's triton-X surfactant as a learning exercise. e.g.: Big changes 'Biopolymer transport protein ExbD/TolR', but no mutations in the hydrophobic (transmembrane?) region. https://t.co/viaiQDq7gI

I'm almost certainly making all sorts of mistakes, but I'm having lots of fun :) I found 876 annotated genes that occur in both genomes, of which 25 had changes ranging from a single base pair to big diffs like the prev tweet. Even a one-acid change like T->K (32) in the efflux transporter could be helping this bug live in the harsh environment of a flower designer's surfactant solution? Anyway, fun stuff. So much to learn!
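The shared-versus-changed gene count described above can be sketched in a few lines of Python. This is a minimal illustration with several assumptions. Each genome is taken to be already reduced to a dict mapping an annotation name to its protein sequence. The input format, the function names, and the toy sequences are all hypothetical. Substitutions are reported only for equal-length sequences, so insertions and deletions would need a real aligner.

```python
from typing import Dict, List, Tuple


def compare_annotated_genes(
    genome_a: Dict[str, str], genome_b: Dict[str, str]
) -> Tuple[List[str], List[str]]:
    """Return (annotations present in both genomes, those whose sequences differ)."""
    shared = sorted(set(genome_a) & set(genome_b))
    changed = [name for name in shared if genome_a[name] != genome_b[name]]
    return shared, changed


def point_substitutions(seq_a: str, seq_b: str) -> List[str]:
    """List single-residue substitutions as e.g. 'T3K' (1-based positions).

    Only valid for equal-length sequences; insertions/deletions need an alignment.
    """
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences differ in length; align them first")
    return [
        f"{a}{pos}{b}"
        for pos, (a, b) in enumerate(zip(seq_a, seq_b), start=1)
        if a != b
    ]


# Hypothetical toy data, not real E. flagellatus sequences.
soil = {"efflux transporter": "MATL", "ExbD/TolR": "MKLV", "soil-only gene": "MQ"}
triton = {"efflux transporter": "MAKL", "ExbD/TolR": "MKLV", "triton-only gene": "MR"}

shared, changed = compare_annotated_genes(soil, triton)
for name in changed:
    # Prints each changed gene with its list of point substitutions.
    print(name, point_substitutions(soil[name], triton[name]))
```

Keying on annotation names mirrors the "annotated genes that occur in both genomes" framing. The names-only match is a simplification, though: it misses genes annotated differently in the two assemblies.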

@_inc0_ https://t.co/RqeSitsYTB

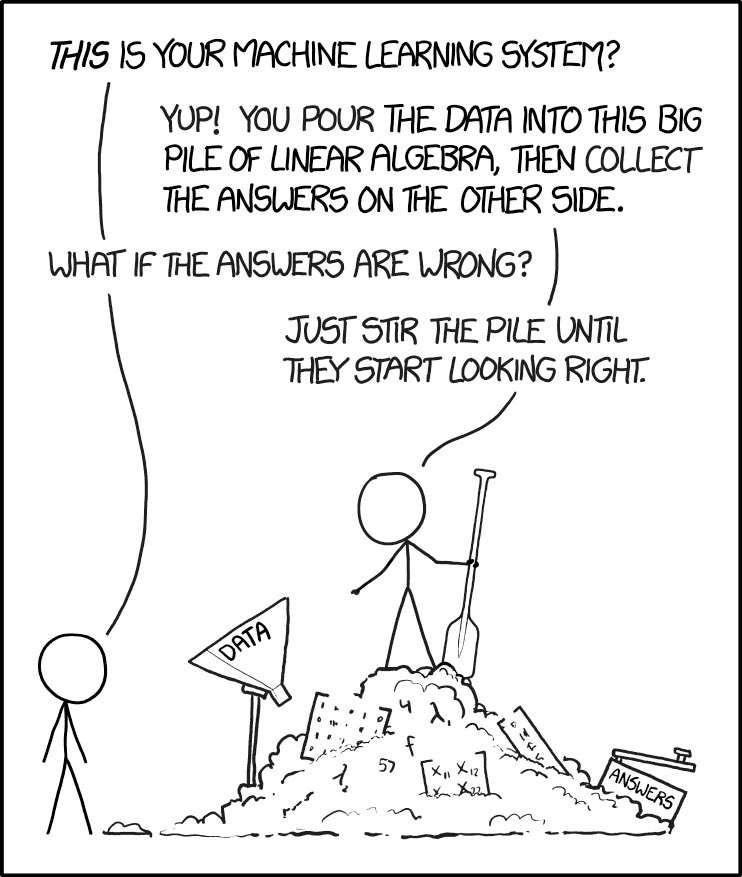

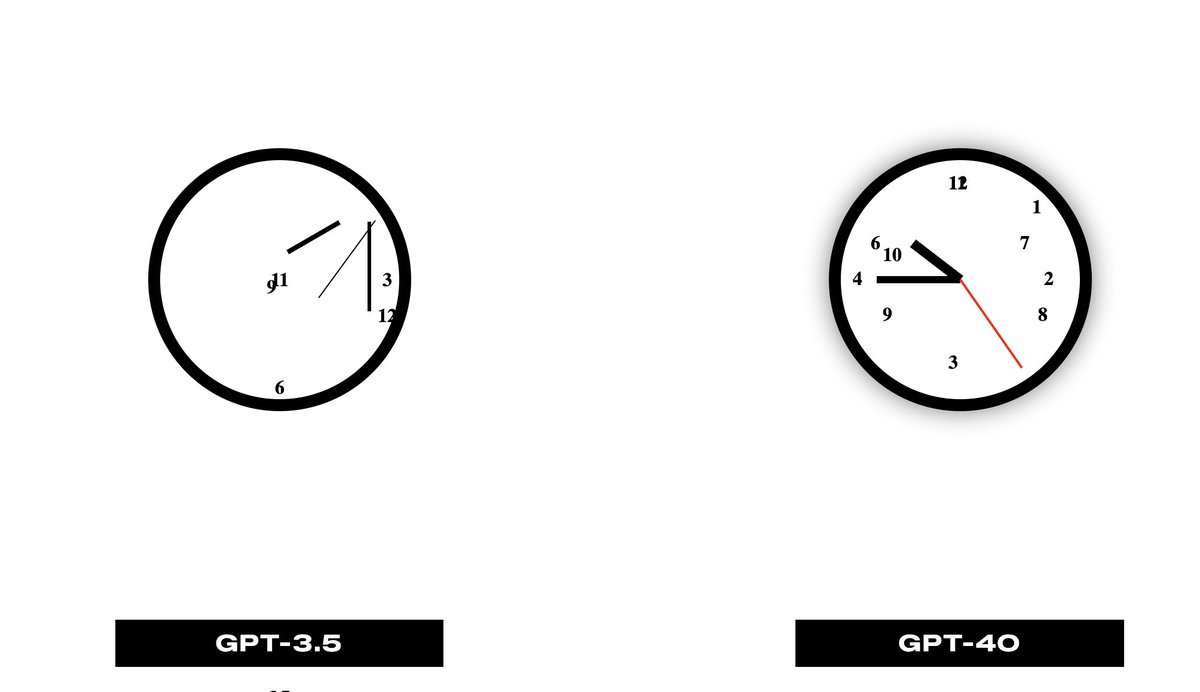

@fofrAI @osanseviero Reminds me of https://t.co/oTWZGMdUGT - amazing what a difference 4 years makes! https://t.co/ZHz7fv3hqf

@karpathy Lol at this guy 😂 https://t.co/jwHHbkhoU0

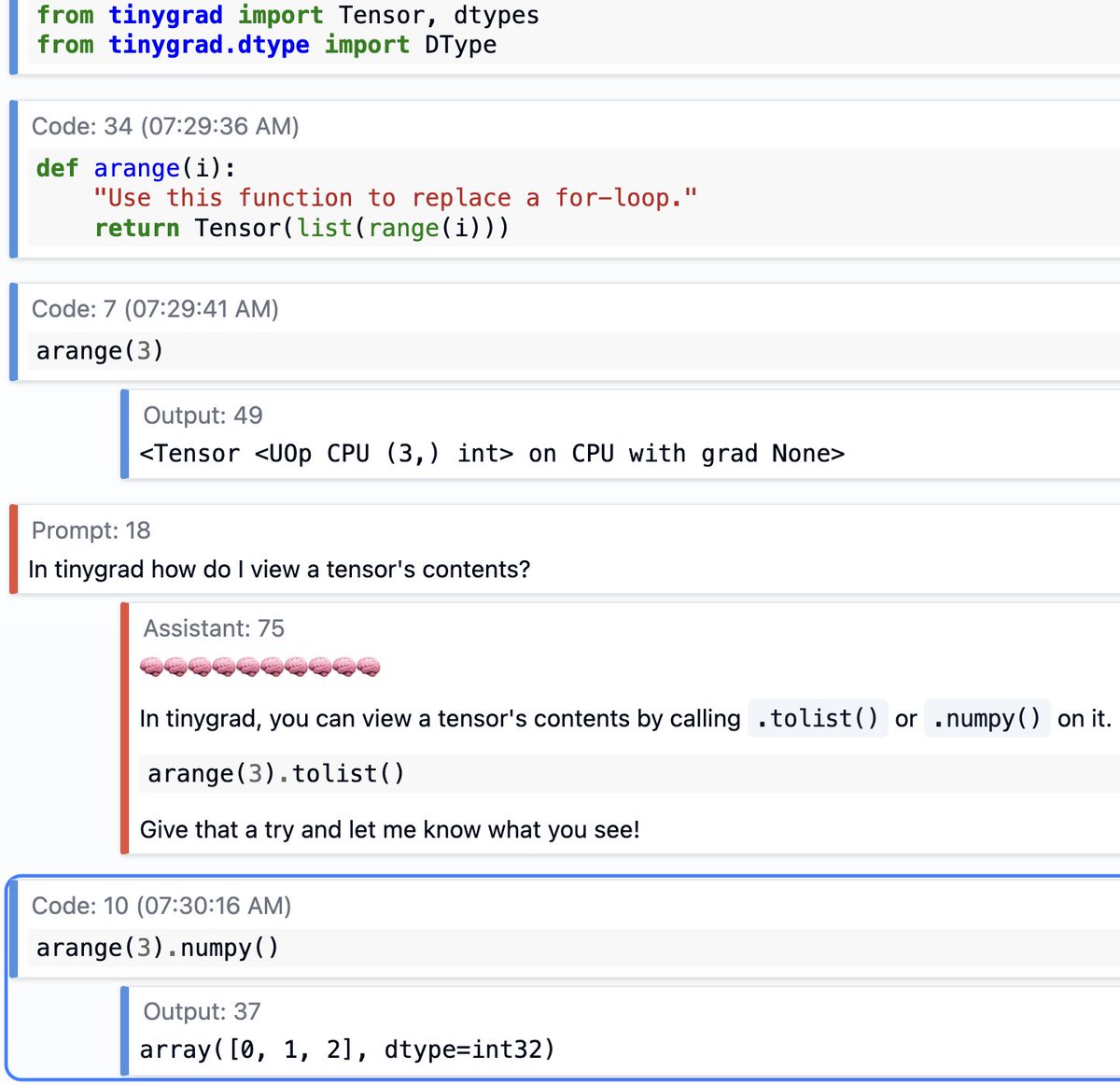

Oh also, @__tinygrad__ is now supported on Solveit :) https://t.co/3oAkZDPkL1

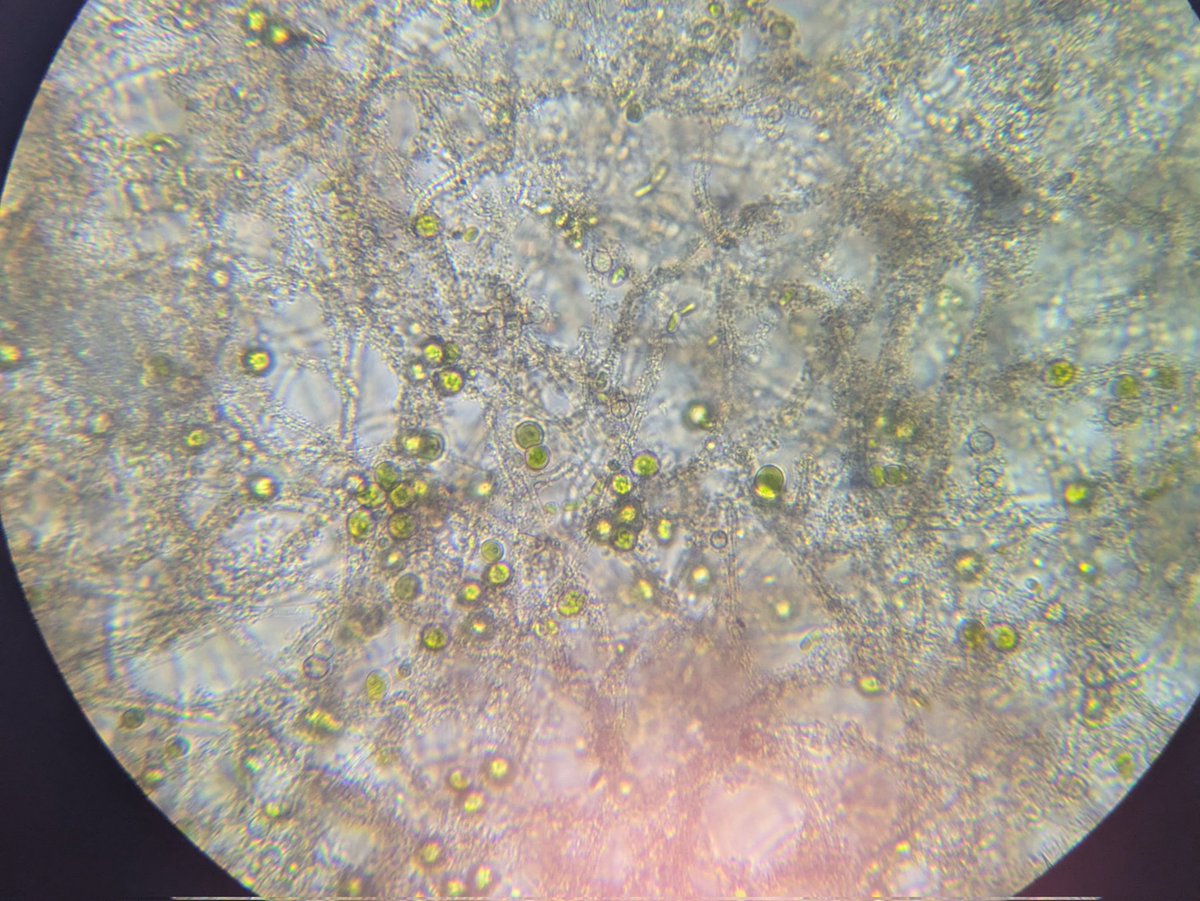

Update, some algae is now growing too, and I got a nicer scope and could get a closer look at the film. https://t.co/1OVtbGllzk

Website hero animation for a prompt design tool https://t.co/WeQpQah6qm

@ilyamiskov https://t.co/up0XWUJTkY

Created this hero animation illustrating an AI project manager that can be cc'd in team emails to extract and manage tasks using an LLM - @AdobeAE and @figma https://t.co/CKopfYyy9H

BTS https://t.co/2Eby6fZToW

Multi-agent systems struggle with coordination. Not because agents can't learn. It's because they can't communicate what they're thinking. Traditional MARL relies on implicit coordination. Agents learn through behavior. Through trial and error. Through observing each other's actions. But economic decisions require negotiation, strategy articulation, and explicit reasoning. Humans don't just act. We think, speak, and make decisions. This new research paper introduces a framework where agents do the same: language-augmented multi-agent reinforcement learning for economic decision-making. The key innovation: language isn't just explanation. It's a functional coordination mechanism. This framework embeds communication as a core component of the learning process. Agents use natural language during learning and decision-making. They articulate strategies. They negotiate outcomes. They reason explicitly about economic choices. Not after the fact, but during the process. Applications these agents unlock: autonomous market systems, trading strategies, resource allocation, and negotiation-based problem solving. What makes this powerful: agent behavior becomes interpretable and auditable. You can see what they're thinking, understand their reasoning, and trust their decisions. This shows the potential of agents that genuinely negotiate rather than just implicitly coordinate. (bookmark it) Paper: arxiv.org/pdf/2511.12876

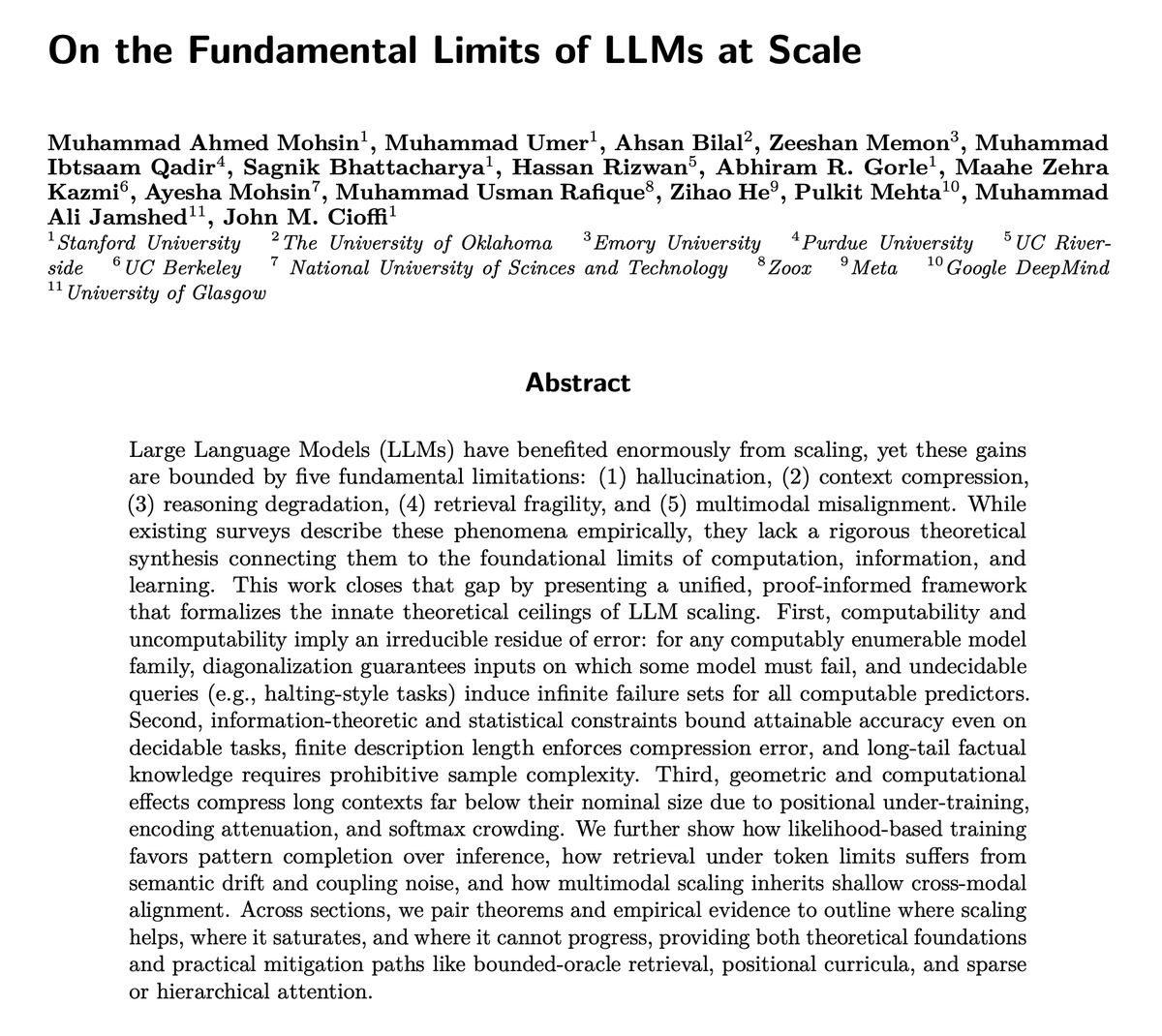

A unified theoretical framework showing the fundamental limits of LLMs. Discusses hallucination, context compression, and reasoning degradation, rooted in computability, information theory, and learning constraints. Nice read to understand the limits of LLMs even under scaling. Very early days, if you think about it. Abs: arxiv.org/abs/2511.12869

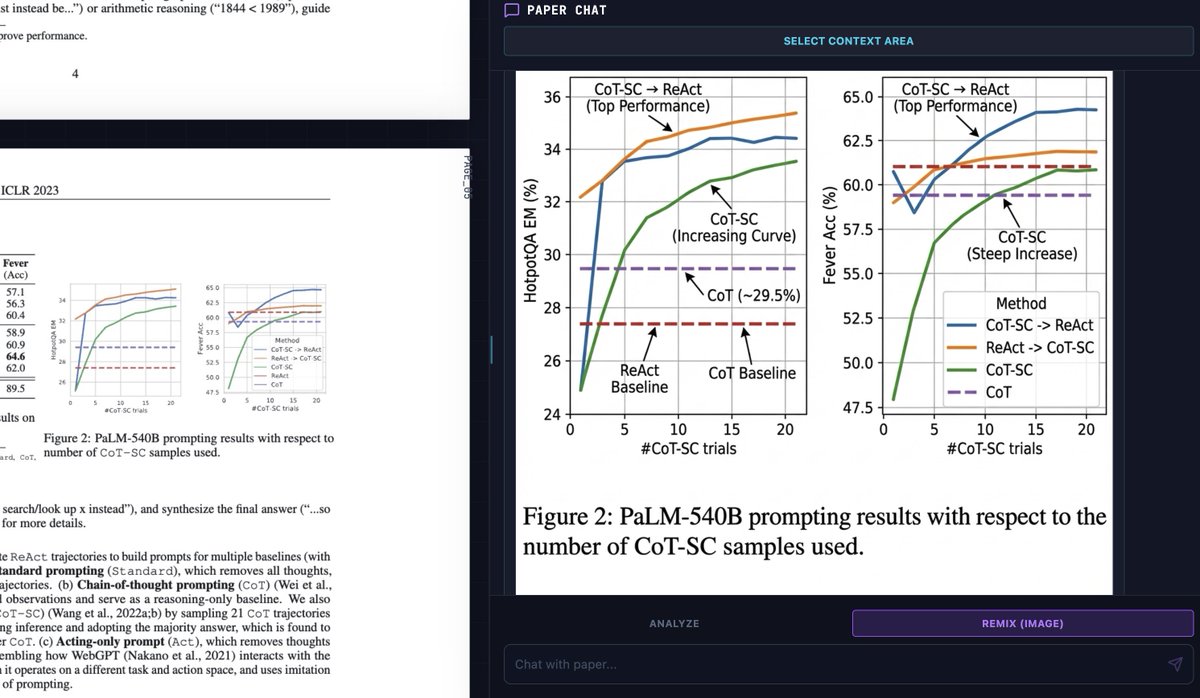

This is one of the most insane things Nano Banana Pro 🍌 can do. It can reproduce figures with mind-blowing precision. No competition in this regard! Prompt: "Please reproduce this chart in high quality and fidelity and offer annotated labels to better understand it." https://t.co/eDW6fl6d7t

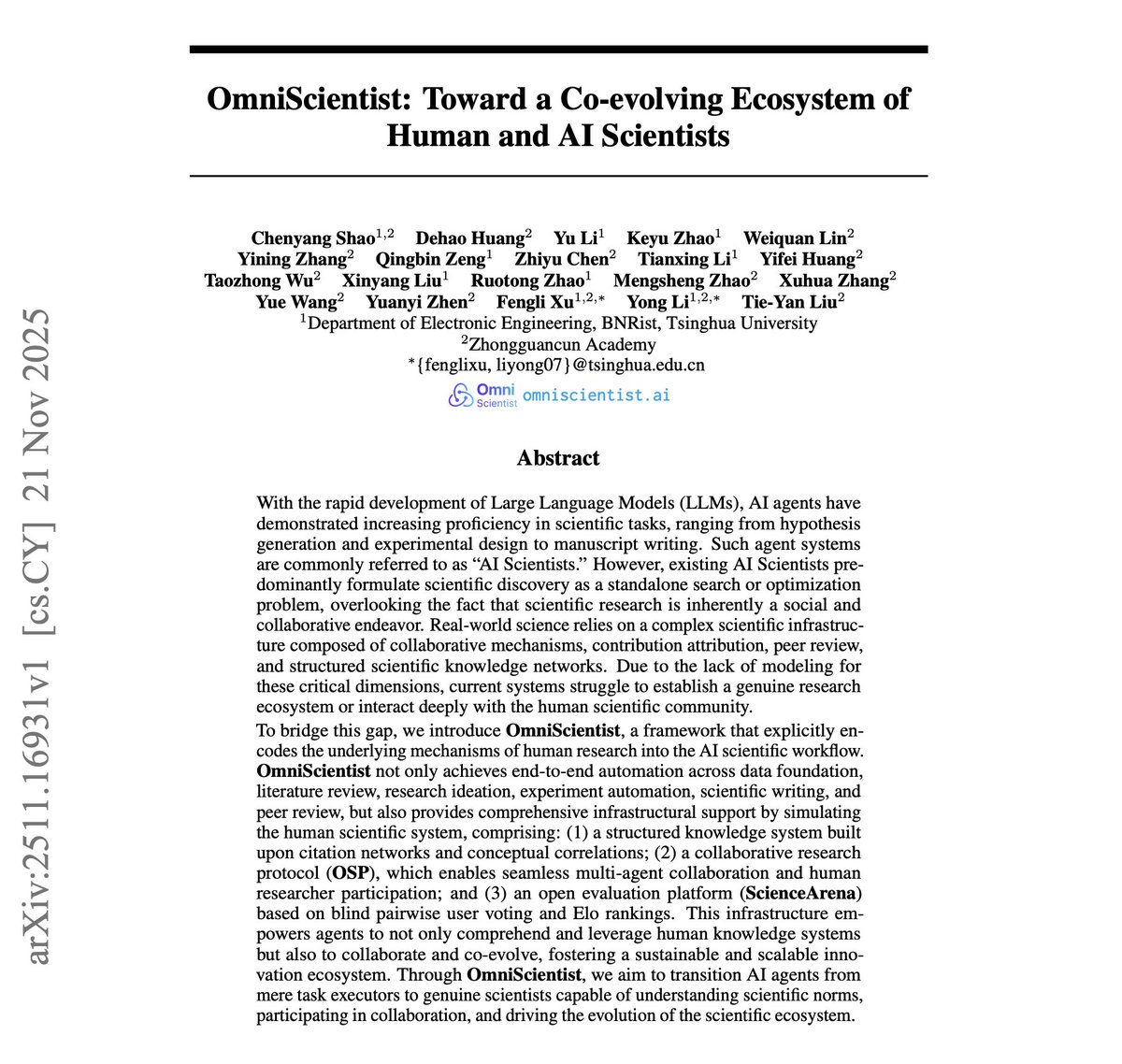

AI scientists are coming. However, current AI research tools work in isolation. Paper summarizers, experiment automators, and hypothesis generators are all built separately, disconnected from the research process. But real science isn't linear. It's collaborative, iterative, and deeply human. This new research introduces OmniScientist, a platform where AI and human scientists work together in a symbiotic ecosystem. The key idea: mutual feedback loops. Human expertise refines AI capabilities. AI insights then expand human research scope. Both evolve together. The platform integrates literature analysis, hypothesis generation, experimental design optimization, and results interpretation. Humans provide oversight at critical decision points. The exciting vision: AI systems that augment scientific discovery across physics, chemistry, biology, and computational sciences. The work is not about replacing the scientific process but about enhancing and augmenting it. What makes this powerful: AI handles scale and pattern recognition. Humans provide intuition and validation. Together, they tackle problems neither could solve alone. (bookmark it) Paper: https://t.co/xwvDQ56s4W

About to go crazy with Opus 4.5 inside of Claude Code! I was using Sonnet 4.5 as my default (and with great success), but I hear Opus 4.5 is much better at planning and orchestrating, and more token-efficient. https://t.co/lD3NoInW5d

This is insane! 🤯 Just built a new skill in Claude Code using Opus 4.5. The skill uses Gemini 3 Pro (via API) for designing web pages. Look at what it generated from one simple prompt. https://t.co/wFtJdYbDSb

MiniMax-M2 is a bigger deal than I thought! Just built a deep research agent with M2 - the interleaved thinking hits different! It preserves content blocks (thinking + text + tool_use) to reason between tool calls. Huge for self-improving agents. Details + repo below ↓ https://t.co/LyI11SeXPx
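The "preserve content blocks between tool calls" idea can be sketched as an agent loop. The loop appends the model's full assistant turn to the history, thinking blocks included, before sending tool results back. This is a minimal sketch that assumes an Anthropic-style block format (`thinking`, `text`, `tool_use`, `tool_result`). `call_model` is a hypothetical stand-in for whatever client you use, not MiniMax's actual API. The stub model and `search` tool are illustrative only.

```python
from typing import Any, Callable, Dict, List

Block = Dict[str, Any]
Message = Dict[str, Any]


def run_agent_loop(
    call_model: Callable[[List[Message]], List[Block]],
    tools: Dict[str, Callable[..., str]],
    user_prompt: str,
    max_turns: int = 5,
) -> List[Message]:
    history: List[Message] = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):
        blocks = call_model(history)
        # Keep every block, including "thinking", so the model can pick up its
        # reasoning where it left off once the tool results come back.
        history.append({"role": "assistant", "content": blocks})
        tool_calls = [b for b in blocks if b["type"] == "tool_use"]
        if not tool_calls:
            break  # no tool requested: the turn is final
        results = [
            {
                "type": "tool_result",
                "tool_use_id": call["id"],
                "content": tools[call["name"]](**call["input"]),
            }
            for call in tool_calls
        ]
        history.append({"role": "user", "content": results})
    return history


# Hypothetical stub model: thinks and calls a tool first, then answers.
def fake_model(history: List[Message]) -> List[Block]:
    if len(history) == 1:
        return [
            {"type": "thinking", "thinking": "I should search first."},
            {"type": "tool_use", "id": "t1", "name": "search",
             "input": {"query": "MiniMax-M2"}},
        ]
    return [
        {"type": "thinking", "thinking": "The search result answers it."},
        {"type": "text", "text": "Done."},
    ]


tools = {"search": lambda query: f"results for {query}"}
history = run_agent_loop(fake_model, tools, "Research MiniMax-M2")
```

The design choice that matters is the `history.append` of the whole `blocks` list. Dropping the thinking blocks there would force the model to rebuild its reasoning from scratch after each tool call.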

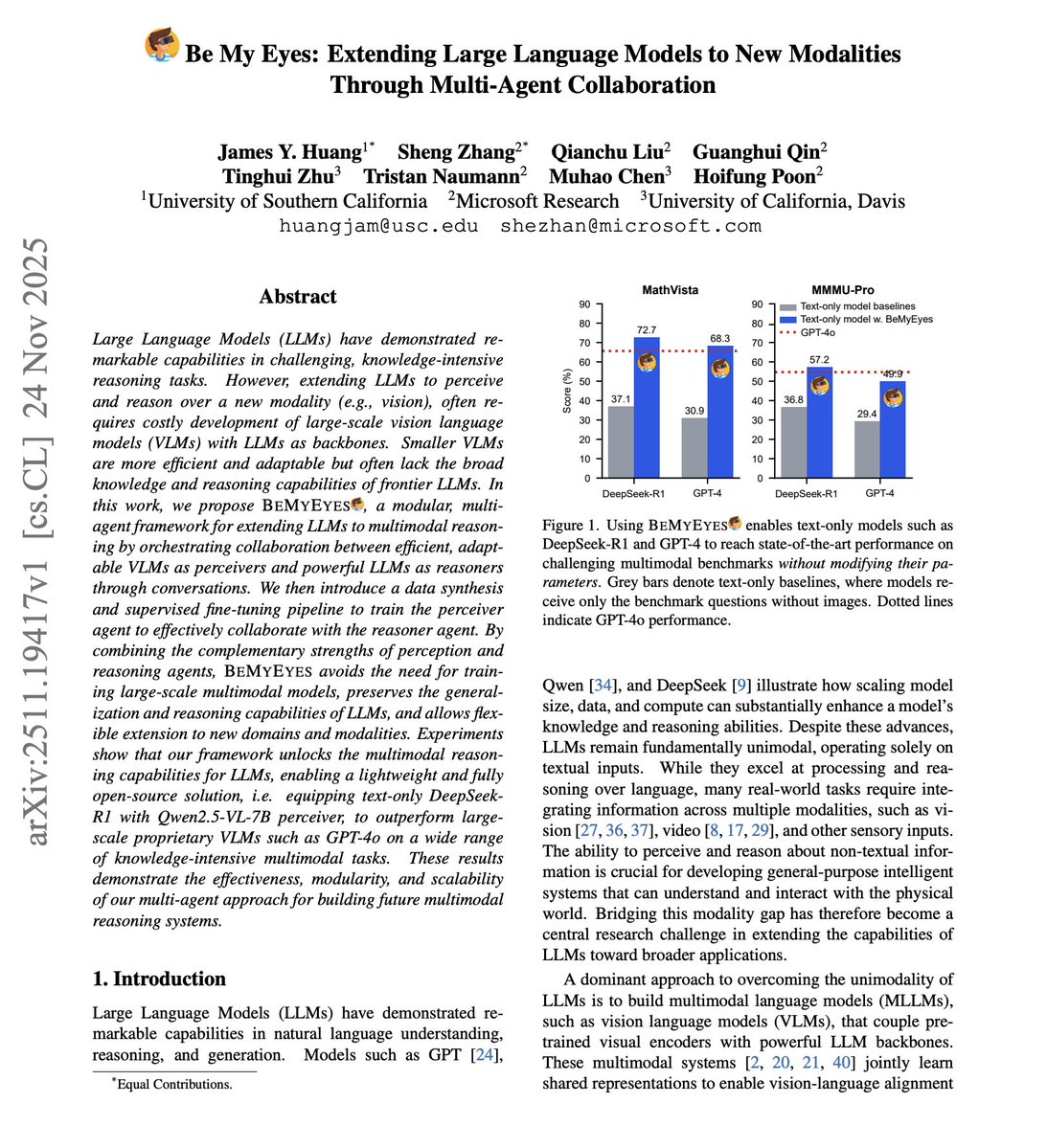

LLMs can't see. How can we build effective multi-agent systems with vision capabilities? Building multimodal models from scratch is expensive. Training joint vision-language architectures requires massive compute, specialized datasets, and careful optimization. But there's another way. This new research introduces "Be My Eyes," a framework where vision models become literal eyes for LLMs. The key idea: multi-agent collaboration through natural language. Vision agents analyze images and describe what they see. Language agents receive these descriptions and reason about them. Communication happens entirely through text. No joint training. No architectural modifications. Just agents talking to each other. The system is modular. Swap in better vision models as they emerge. Upgrade the LLM independently. Each component improves without retraining the whole system. Results on MMMU, MMMU-Pro, and video understanding benchmarks show competitive performance with specialized multimodal models. What makes this powerful: it challenges the assumption that multimodal AI requires unified architectures. Agent collaboration through language provides an efficient alternative. Paper: https://t.co/VqnwooAGkJ Learn to build with AI Agents in our academy: https://t.co/Y5kVy5iKiQ
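The "vision agent describes, language agent reasons, text only" setup can be sketched as two swappable callables. This is a single-exchange simplification under stated assumptions, not the paper's actual protocol. `TextOnlyPipeline`, `toy_vision`, and `toy_llm` are hypothetical names, and the stubs stand in for a real vision model and LLM.

```python
from dataclasses import dataclass
from typing import Callable

# (image bytes, question) -> natural-language description of the image
VisionAgent = Callable[[bytes, str], str]
# text prompt -> text answer
ReasoningAgent = Callable[[str], str]


@dataclass
class TextOnlyPipeline:
    """A vision agent and an LLM agent that communicate only through text."""

    describe: VisionAgent
    reason: ReasoningAgent

    def answer(self, image: bytes, question: str) -> str:
        # Step 1: the vision agent turns pixels into a text description.
        description = self.describe(image, question)
        # Step 2: the reasoning agent never sees the image, only the description.
        prompt = (
            "A vision assistant looked at an image and reported:\n"
            f"{description}\n\n"
            f"Question: {question}\n"
            "Answer using only the report above."
        )
        return self.reason(prompt)


# Hypothetical stub agents; in practice these would wrap a VLM and an LLM.
def toy_vision(image: bytes, question: str) -> str:
    return "A bar chart with three bars labeled A, B and C."


def toy_llm(prompt: str) -> str:
    return "3" if "three bars" in prompt else "unknown"


pipeline = TextOnlyPipeline(describe=toy_vision, reason=toy_llm)
print(pipeline.answer(b"<image bytes>", "How many bars are in the chart?"))

# Swapping in a different vision agent needs no change on the reasoning side.
upgraded = TextOnlyPipeline(describe=lambda img, q: "A chart with no bars.", reason=toy_llm)
```

Because the only interface between the two agents is a string, either side can be upgraded independently, which is the modularity the post describes. A multi-turn variant, where the LLM asks follow-up questions, would loop over the same two calls.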

For those interested, I will be talking more about how to do this in Claude Code in this training: https://t.co/ZDTOIyJ950
