@dair_ai

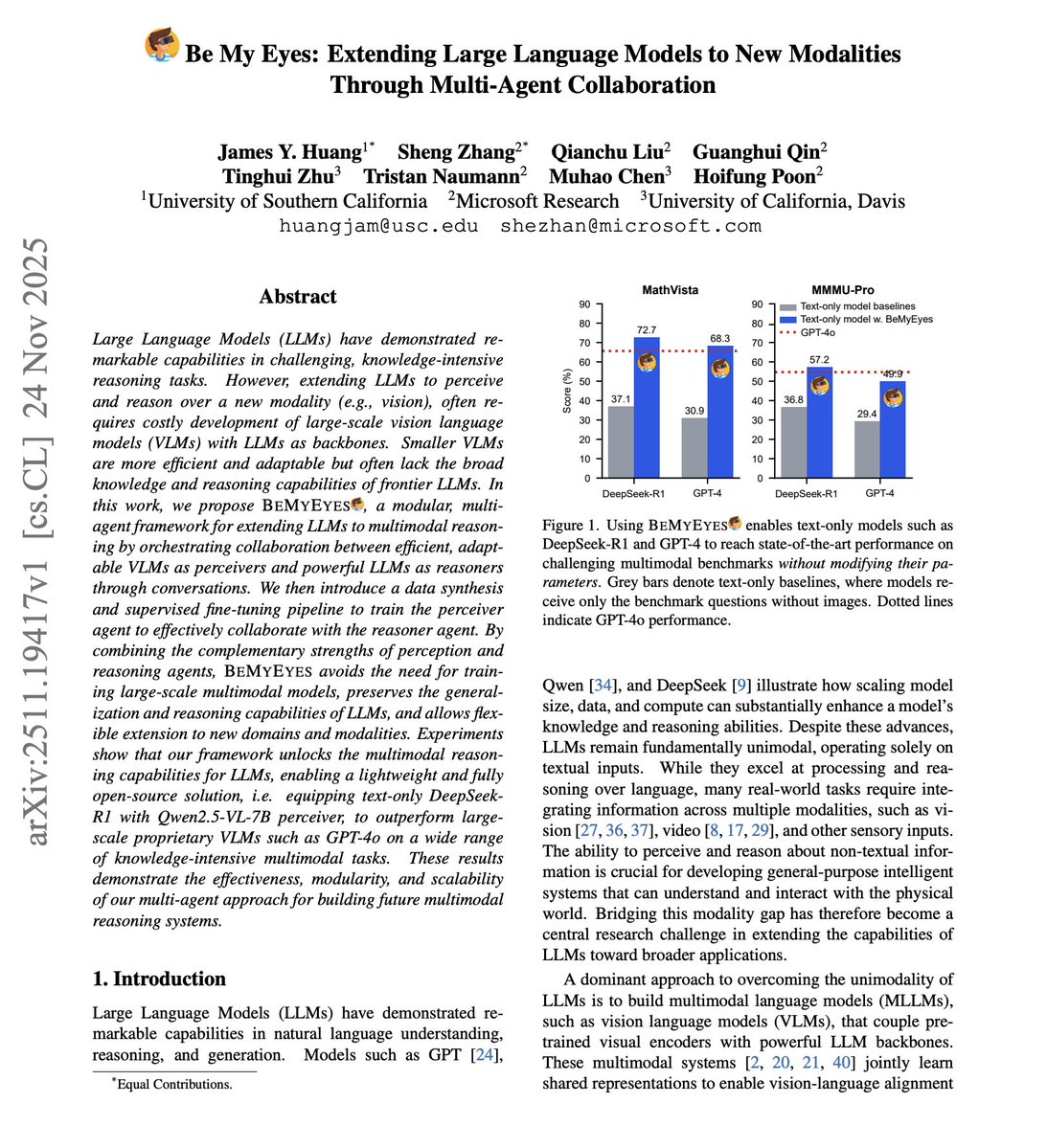

Text-only LLMs can't see. How can we build effective multi-agent systems with vision capabilities?

Building multimodal models from scratch is expensive. Training joint vision-language architectures requires massive compute, specialized datasets, and careful optimization.

But there's another way. This new research introduces "Be My Eyes," a framework in which vision models serve as the eyes of LLMs.

The key idea: multi-agent collaboration through natural language. Vision agents analyze images and describe what they see. Language agents receive these descriptions and reason about them. Communication happens entirely through text.

No joint training. No architectural modifications. Just agents talking to each other.

The system is modular. Swap in better vision models as they emerge, or upgrade the LLM independently. Each component can improve without retraining the whole system.

Results on MMMU, MMMU-Pro, and video understanding benchmarks show performance competitive with specialized multimodal models.

What makes this powerful: it challenges the assumption that multimodal AI requires unified architectures. Agent collaboration through language offers an efficient alternative.

Paper: https://t.co/VqnwooAGkJ

Learn to build with AI Agents in our academy: https://t.co/Y5kVy5iKiQ
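A minimal Python sketch of the text-only collaboration pattern described above, not the paper's implementation. Everything here is a hypothetical illustration: the `VisionAgent`/`LanguageAgent` interfaces, the `collaborate` loop, the `"ANSWER:"` stop convention, `max_turns`, and the assumption that the language agent may send follow-up questions back to the vision agent. The stub classes return canned text in place of real models. The point it shows: the language agent only ever sees strings, never image bytes, and either side can be swapped for any object with the same method.

```python
from typing import Protocol


class VisionAgent(Protocol):
    """Hypothetical interface: answers a text question about an image."""
    def describe(self, image: bytes, question: str) -> str: ...


class LanguageAgent(Protocol):
    """Hypothetical interface: text-only model that reasons over a transcript."""
    def respond(self, transcript: list[str]) -> str: ...


def collaborate(vision: VisionAgent, language: LanguageAgent, image: bytes,
                task: str, max_turns: int = 3) -> tuple[str, list[str]]:
    """Run a text-only exchange; the language agent never receives the image."""
    transcript = [f"TASK: {task}"]
    # The vision agent opens with a natural-language description of the image.
    transcript.append("VISION: " + vision.describe(image, "Describe the image in detail."))
    for _ in range(max_turns):
        reply = language.respond(transcript)
        transcript.append("LANGUAGE: " + reply)
        # Assumed convention: a reply starting with "ANSWER:" ends the exchange.
        if reply.startswith("ANSWER:"):
            return reply.removeprefix("ANSWER:").strip(), transcript
        # Otherwise treat the reply as a follow-up question for the vision agent.
        transcript.append("VISION: " + vision.describe(image, reply))
    # No final answer within the turn budget.
    return "", transcript


class StubVision:
    """Toy stand-in for a real vision model: returns canned descriptions."""
    def describe(self, image: bytes, question: str) -> str:
        if "color" in question.lower():
            return "The car is red."
        return "A car parked on a street."


class StubLanguage:
    """Toy stand-in for a real LLM: asks one follow-up, then answers."""
    def respond(self, transcript: list[str]) -> str:
        if "red" in transcript[-1]:
            return "ANSWER: red"
        return "What color is the car?"


if __name__ == "__main__":
    answer, log = collaborate(StubVision(), StubLanguage(), b"", "What color is the car?")
    print(answer)
    for line in log:
        print(line)
```

Because `collaborate` depends only on the two method signatures, upgrading either side means passing a different object; nothing else in the loop changes, which mirrors the modularity claim in the post.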