Your curated collection of saved posts and media

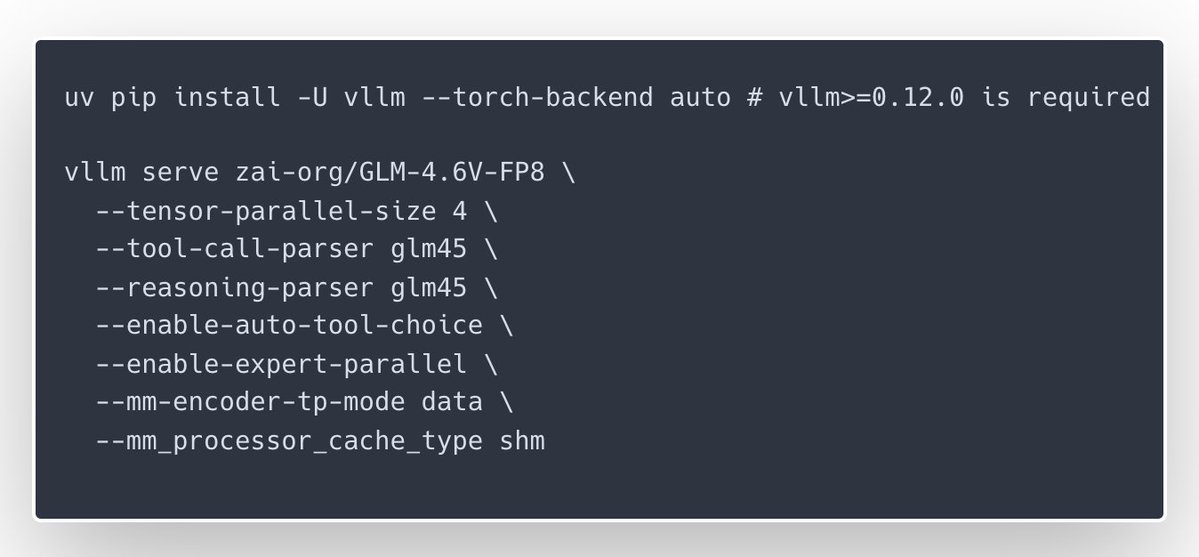

🎉Congrats to the @Zai_org team on the launch of GLM-4.6V and GLM-4.6V-Flash — with day-0 serving support in vLLM Recipes for teams who want to run them on their own GPUs. GLM-4.6V focuses on high-quality multimodal reasoning with long context and native tool/function calling, while GLM-4.6V-Flash is a 9B variant tuned for lower latency and smaller-footprint deployments; our new vLLM Recipe ships ready-to-run configs, multi-GPU guidance, and production-minded defaults. If you’re building inference services and want GLM-4.6V in your stack, start here: https://t.co/NhHT6iey6C
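For anyone trying the self-hosted route mentioned above, here is a minimal sketch of a request body for a vLLM server's OpenAI-compatible `/v1/chat/completions` endpoint. The model identifier, the image URL, and the helper name `build_chat_request` are assumptions for illustration and are not taken from the post or the recipe. The sketch only builds and prints the JSON; it does not contact a server.

```python
import json


def build_chat_request(model, prompt, image_url, max_tokens=256):
    """Return a JSON-serialisable body for POST /v1/chat/completions.

    Uses the OpenAI-style multimodal message layout (a text part plus an
    image_url part). `model` must match whatever name the server was
    started with.
    """
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Assumed model id and placeholder image URL; replace with real values.
    body = build_chat_request(
        "zai-org/GLM-4.6V-Flash",
        "Describe this image.",
        "https://example.com/cat.png",
    )
    print(json.dumps(body, indent=2))
```

The body can then be sent with any HTTP client to the host and port where the server is listening; the linked recipe is the place to check the exact serve command and model name.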

GLM-4.6V Series is here🚀
- GLM-4.6V (106B): flagship vision-language model with 128K context
- GLM-4.6V-Flash (9B): ultra-fast, lightweight version for local and low-latency workloads
- First-ever native Function Calling in the GLM vision model family
- Weights: https://t.co/vKmNo

(1/n) Tiny-A2D: An Open Recipe to Turn Any AR LM into a Diffusion LM. Code (dLLM): https://t.co/Nv7d1t8Qin Checkpoints: https://t.co/rpibkb2Xfq With dLLM, you can turn ANY autoregressive LM into a diffusion LM (parallel generation + infilling) with minimal compute. Using this recipe, we built a 🤗collection of the smallest diffusion LMs that work well in practice. Key takeaways:
1. Finetuned on Qwen3-0.6B, we obtain the strongest small (~0.5/0.6B) diffusion LMs to date.
2. The base AR LM matters: Investing compute in improving the base AR model is potentially more efficient than scaling compute during adaptation.
3. Block diffusion (BD3LM) generally outperforms vanilla masked diffusion (MDLM), especially on math-reasoning and coding tasks.

Releasing jina-VLM: our new 2B vision language model achieves SOTA on multilingual visual question answering and document understanding among open 2B-scale VLMs. https://t.co/QDZvAt6Wux

A toolkit for building agents that watch, listen, and understand video. Low latency by design. Open source. Production ready. Vision Agents lets you build real-time video AI that works with your models and your edge layer. Supports YOLO, Moondream, Cartesia, Deepgram, ElevenLabs, HeyGen, Gemini, OpenAI, and more. Quick model switching. Easy-to-use API. Perfect for coaching tools, collaboration apps, avatars, and robotics.

@prior_labs Job openings: https://t.co/mbp8ZG4RKj

I don't think there's a more diverse and international platform in AI than @huggingface! Current trending models are coming from all over the world in all sorts of modalities & sizes. That is AI maturing at the speed of light! https://t.co/N0hMmFMZfG

🤗 Give GLM‑4.6V a try on @huggingface, supported by Novita. https://t.co/Ps4awZWZRn

may I present https://t.co/9i3jTgUIgn

Anyone who skips @billions_ntwk will regret it. This network is made for real humans, not the noise. I'm locked on $BILL @jgonzalezferrer https://t.co/GY81iY4VCD

Workshop confirmed! Level up your AI creative skills at DevFest Cairo. Discover how to blend image editing, voice, and video into stunning assets using Google AI. Feeling bold? Bring your best profile photos, the stage is yours. #GoogleDeveloperExpert #AI https://t.co/bL8upyqhgb

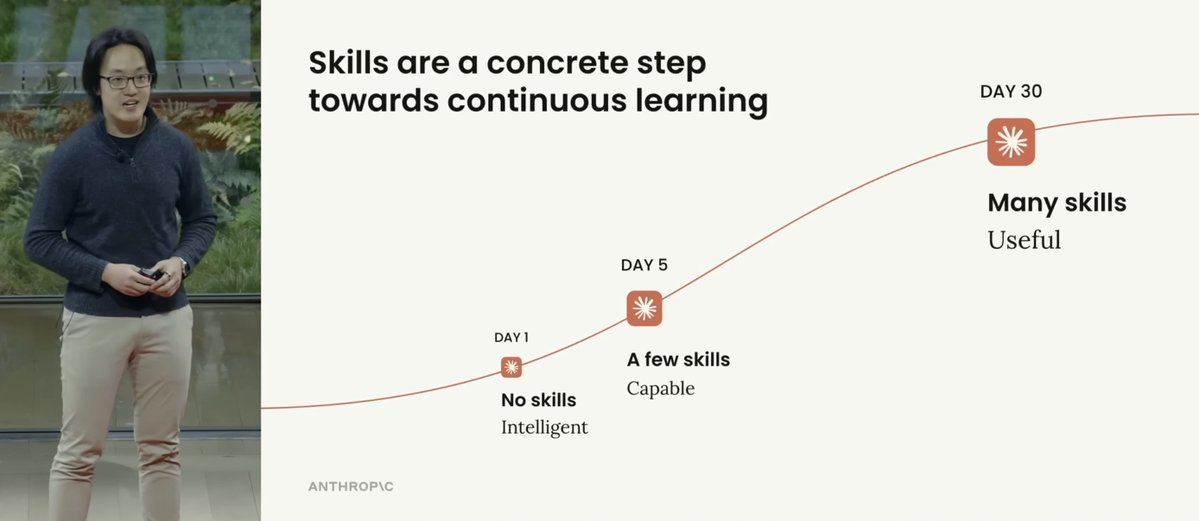

I love this figure from Anthropic's new talk on "Skills > Agents". Here are my notes: The more skills you build, the more useful Claude Code gets. And it makes perfect sense. Procedural knowledge and continuous learning for the win! Skills essentially are the way you make Claude Code more knowledgeable over time. This is why I had argued that Skills is a good name for this functionality. Claude Code acquires new capabilities from domain experts (they are the ones building skills). Claude Code can evolve the skills as needed and forget the ones it doesn't need anymore. It's a collaborative effort, which can easily be expanded to entire teams, communities, and orgs (via plugins). Skills are particularly useful for workflows where information and requirements constantly change. Finance, code, science, and human-in-the-loop workflows are all great use cases for Skills. You can build new Skills using the built-in skill creation tool, so you are always building new skills with all the best practices. Or you can do what I did, which is build my own skill creator to build custom skills catered to the work I do. Just more levels of customization that Skills also enables. Skills flexibility enables future capabilities to be easily integrated everywhere. Competitors don't have anything remotely close to this type of ecosystem. The deep understanding of Anthropic engineers on the importance of better context management tools and agent harnesses is something to admire. Very bullish on Claude Code.

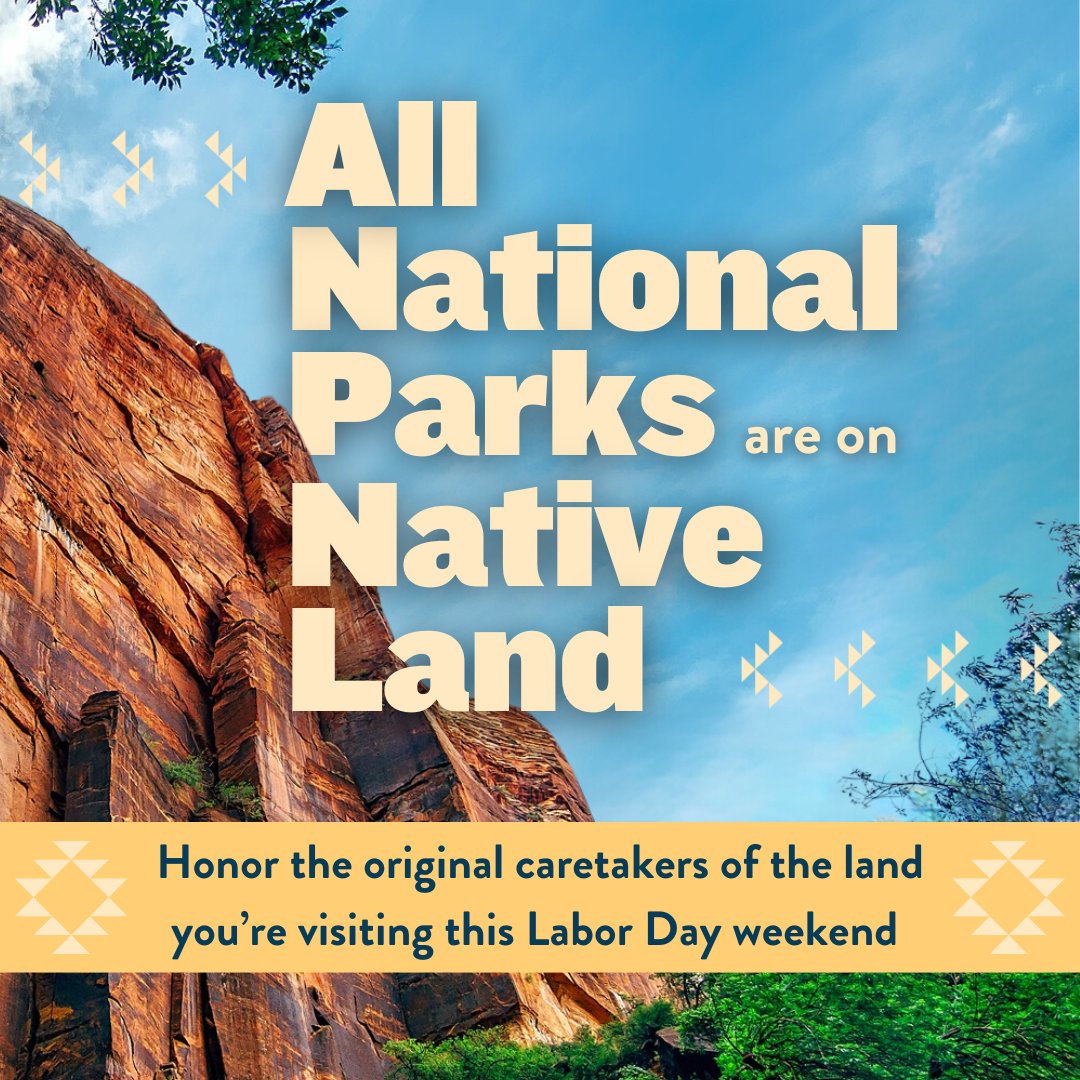

"This book changed me. We are takers. We take from each other. We take from the animals. We take from the land..." #nativeamericans https://t.co/ElnFZUjmCK https://t.co/rDRALlbcbP

“We depend on nature not only for our physical survival, we also need nature to show us the way home, the way out of the prison of our own minds.” ~ E. Tolle, https://t.co/AcqZ03kp5o

If you’re traveling to a #nationalpark this summer, you’re traveling to #Nativelands. Today, the @NatlParkService is reconciling with its past by collaborating with Tribal communities to maintain healthy ecosystems for future generations. Learn more: https://t.co/YKSU85Zafl https://t.co/DrZnFzVwxn

https://t.co/4RyTAQkRkf - Rharos Network Teams Launch Native Rowa Loan Lending Barrier #NFTNews

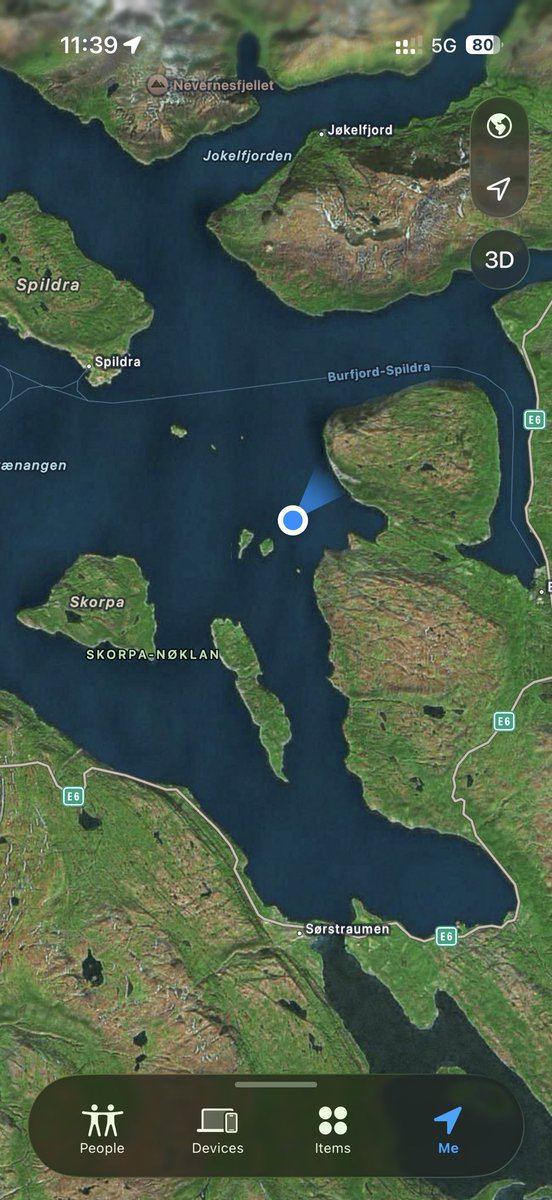

Slow day, but there's still signal. https://t.co/qfxdOk6ZpG

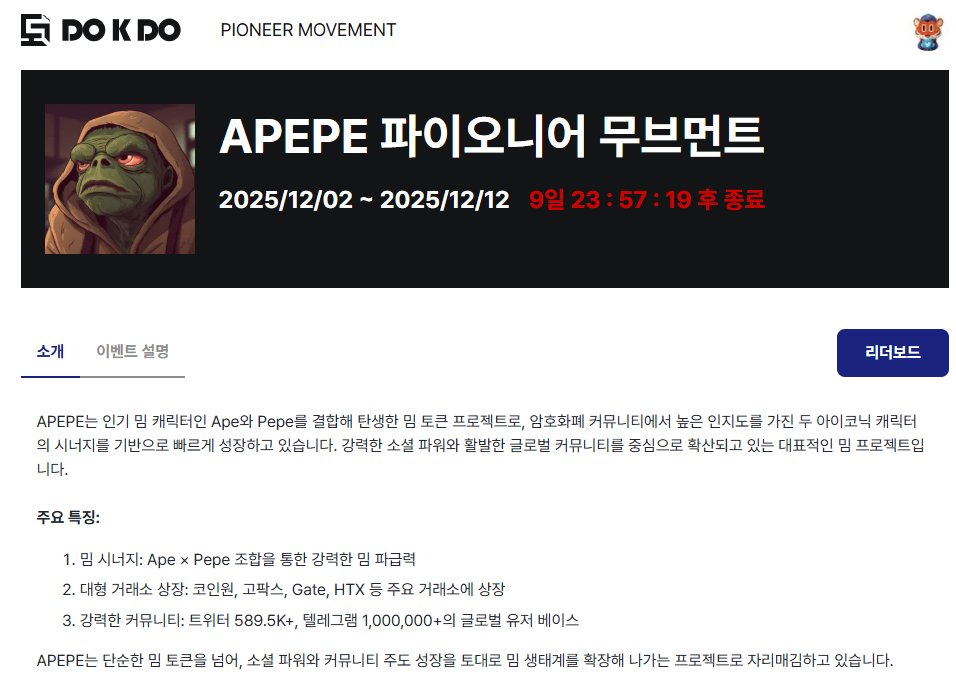

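[Dokdo DAO's yapping campaigns keep coming: @APEPE_MEME] This is APEPE, which is also listed on Korean exchanges. It is a project that combines two meme tokens and has been growing quickly of late! The event rewards are as follows. Top 80: rewards paid on a sliding scale by final leaderboard rank. Participant Glim raffle: 5 chickens and 100 coffees. The event runs for a little under two weeks and the reward pool totals 5,000 USDT, so be sure to take part! Personally, I can't think of a topic to write about... I will probably have to tie it in with my existing yapping. (Multiple tags are not allowed, of course.)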

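[If you yap about @APEPE_MEME, this is worth trying] In Premium -> X Pro, enter the following in the search box: include:nativeretweets (filter:self_threads OR -filter:nativeretweets -filter:retweets -filter:replies) @APEPE_MEME filter:blue_verified lang:ko This finds Korean users who are yapping about the same project. Legendary, right? In fact, if you add "or @" plus another project's handle next to that handle, you can search all the projects you yap about at the same time. The benefit is that mutual engagement becomes easy both to receive and to give. I actually do all my mutual engagement for @DOKDODAO yapping this way. The only problem is that there is too much of it, haha.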
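The search string in the post above can be assembled programmatically when several project handles are involved. This is a minimal sketch: the filter clause is copied verbatim from the post, while the function name `build_yapper_query` and the choice to join multiple handles with an uppercase `OR` inside parentheses are assumptions made here. Whether X search accepts every operator exactly as written has not been verified.

```python
def build_yapper_query(handles, lang="ko"):
    """Compose the post's search string for one or more project handles."""
    if not handles:
        raise ValueError("at least one handle is required")

    # Normalise each handle so it starts with exactly one '@'.
    mentions = ["@" + h.lstrip("@") for h in handles]

    # Assumption: several handles are OR-ed together inside parentheses.
    if len(mentions) == 1:
        target = mentions[0]
    else:
        target = "(" + " OR ".join(mentions) + ")"

    # Filter clause copied verbatim from the post.
    filters = (
        "include:nativeretweets (filter:self_threads OR -filter:nativeretweets "
        "-filter:retweets -filter:replies)"
    )
    return f"{filters} {target} filter:blue_verified lang:{lang}"


if __name__ == "__main__":
    print(build_yapper_query(["APEPE_MEME"]))
    print(build_yapper_query(["APEPE_MEME", "DOKDODAO"]))
```

With a single handle the function reproduces the string from the post; with two or more it only widens the mention part and leaves the filters untouched.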

Native. Permissionless. Instant. It only happens on @RialoHQ, so welcome!! https://t.co/t1zWZ87l2E

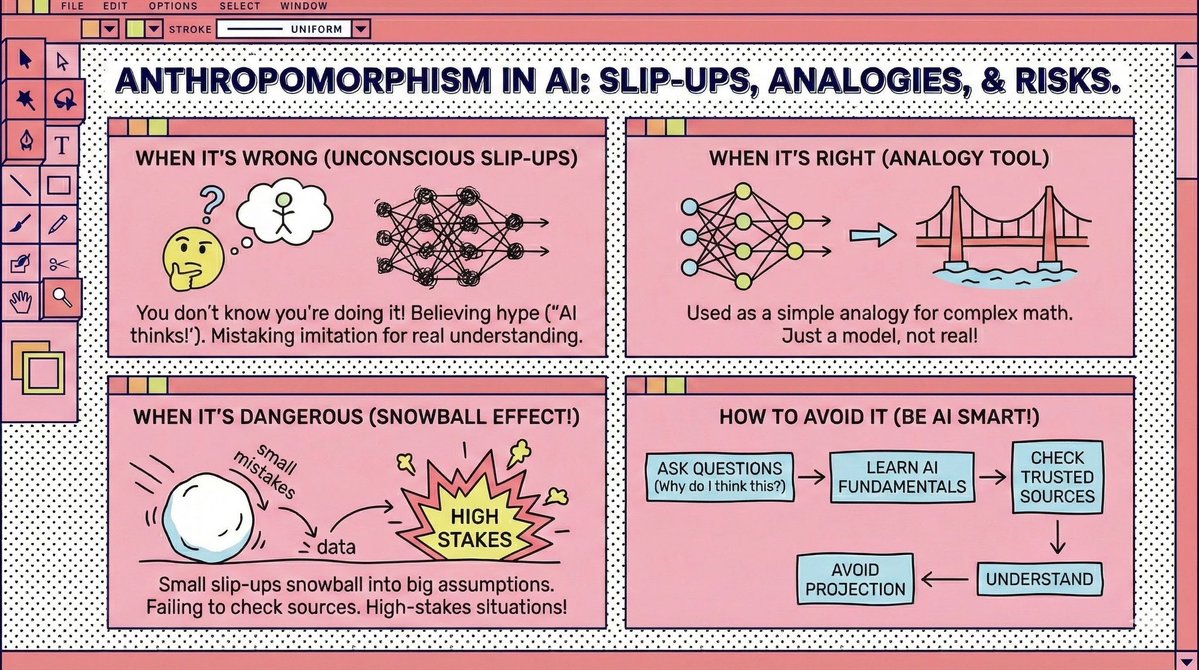

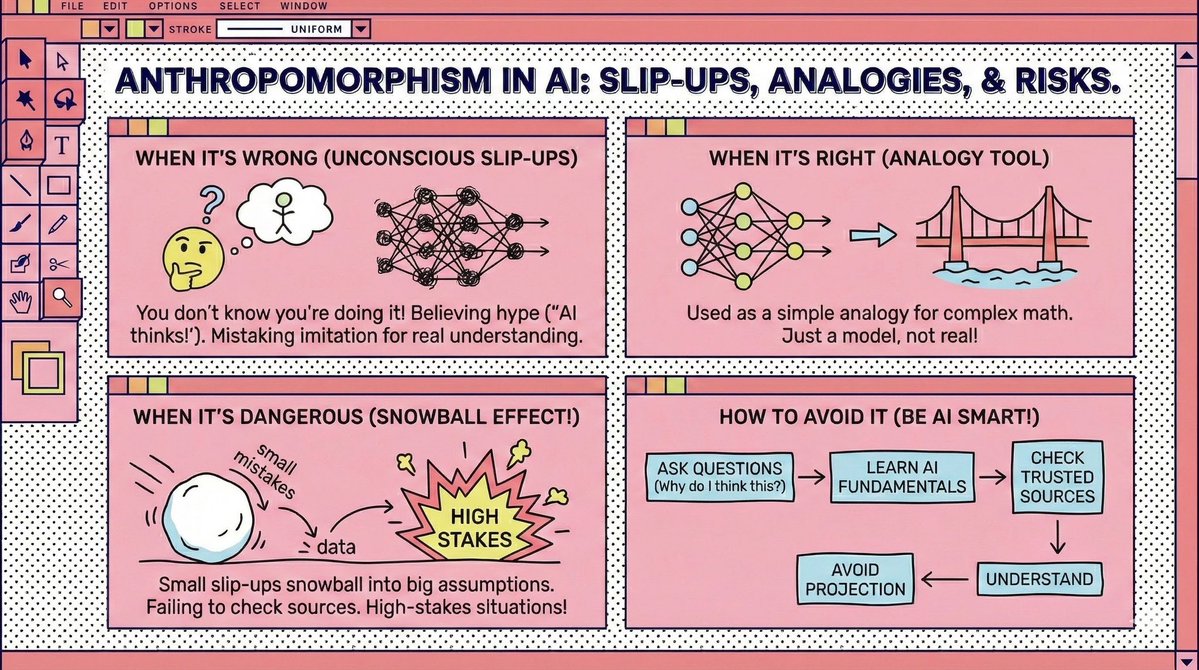

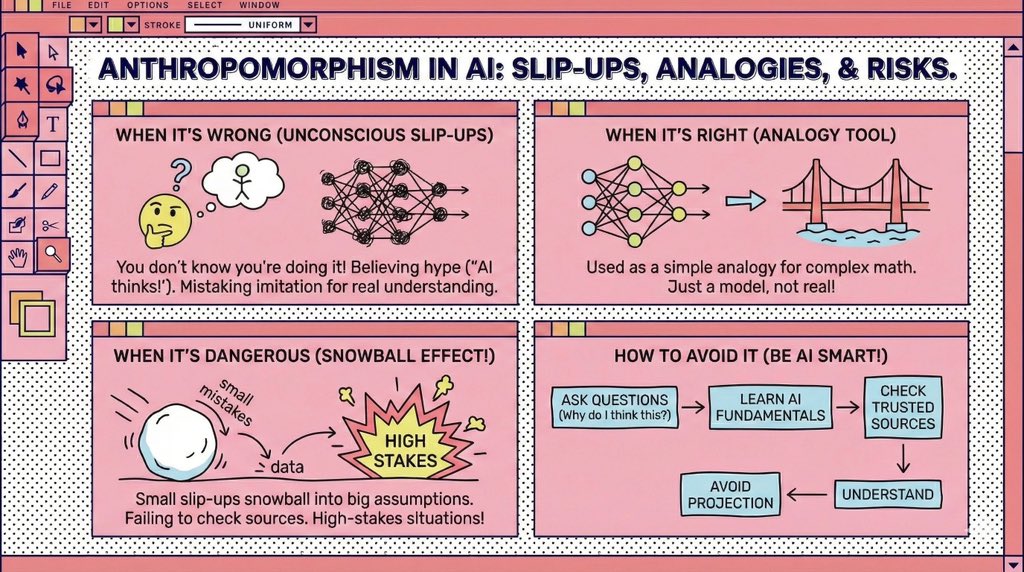

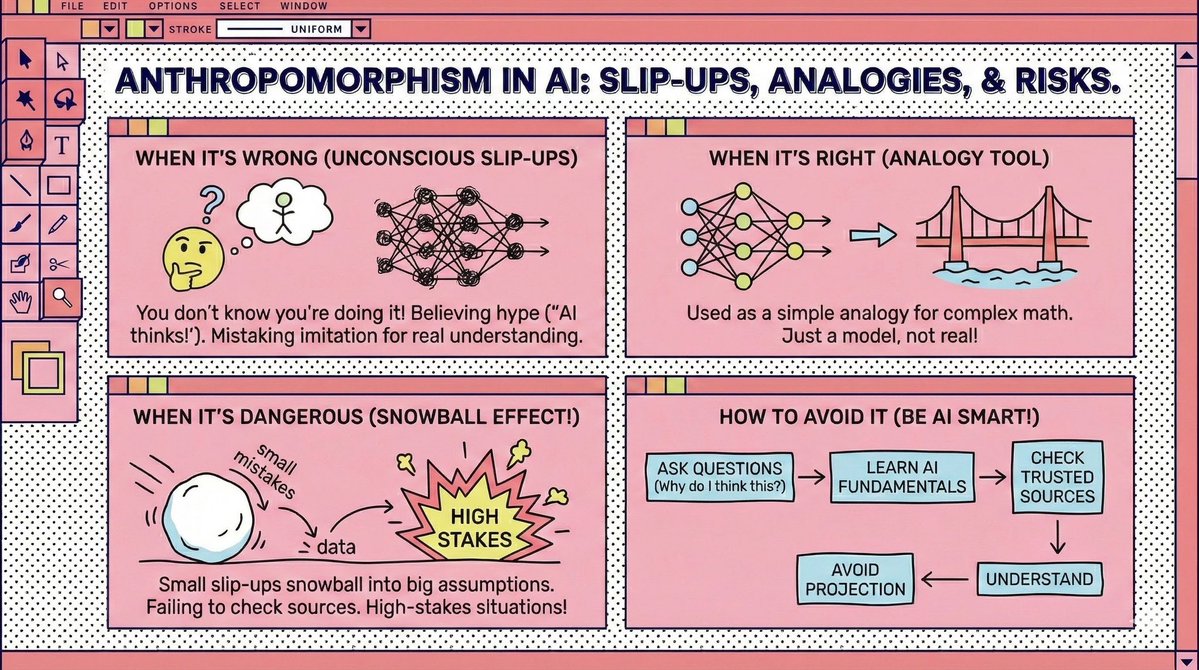

Bold Lacanian read on AI hallucination, but the analogy leans on heavy anthropomorphic baggage. All LLM outputs start the same: every token is just next token prediction. A continuation becomes a hallucination only when a human adds real world context the model never had. There is no psyche trying to fill a lack. Personality in LLMs is RLHF rewarding fluency, not truth. Apparent traits are prompt shaped data artefacts as in Han et al 2025 arXiv:2509.03730. Self reported Big Five maps to behaviour in about 24 percent of cases. This is a stochastic funnel, not a barred subject. The confidence in hallucinations is not Lacanian jouissance. It is the Eliza effect. We project coherence and intention, then blame the model for a mismatch created by our own projection. Keep the poetic mirror, but mark where it stops explaining and starts flattering our desire to see a mind in a transformer. Great paper, but it needs a reminder to flag every anthropomorphic move with the actual technical context. Call out when you are interpreting output after the fact, not describing how it was produced, and avoid projecting human traits that do not exist. Follow for more insights or subscribe to receive updates in your inbox: https://t.co/DybOvoBDEw

@AndrewYang AI = actually Indians The encrapification of all things will continue. https://t.co/jps8ooTg42

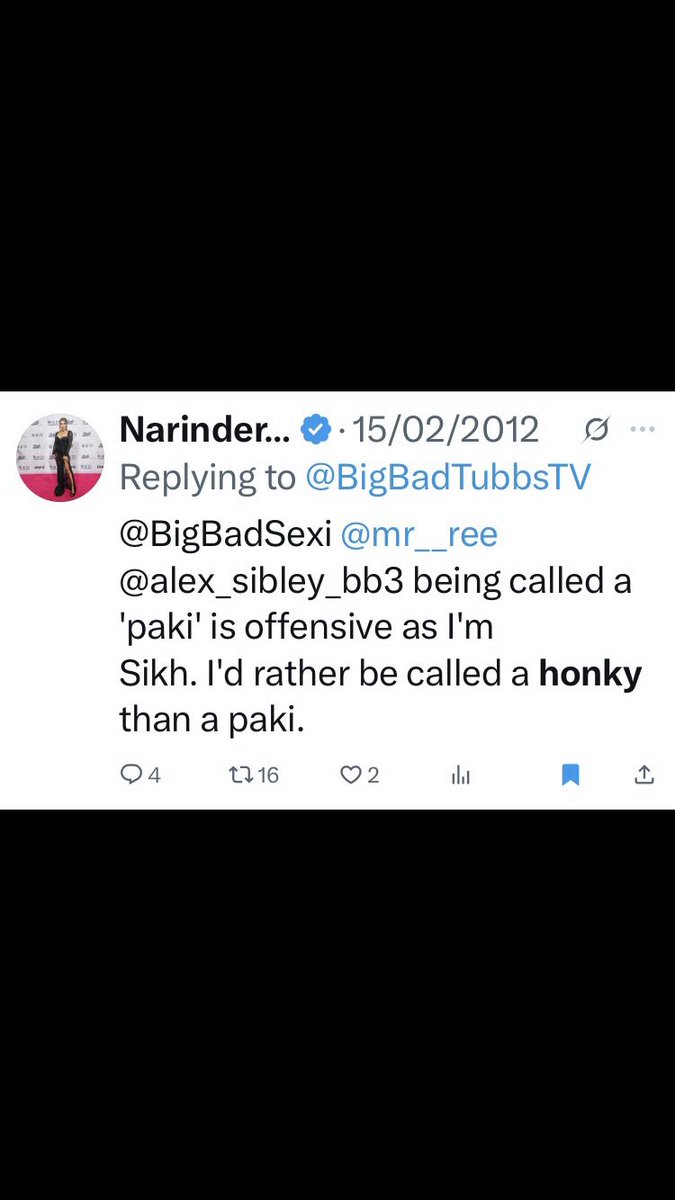

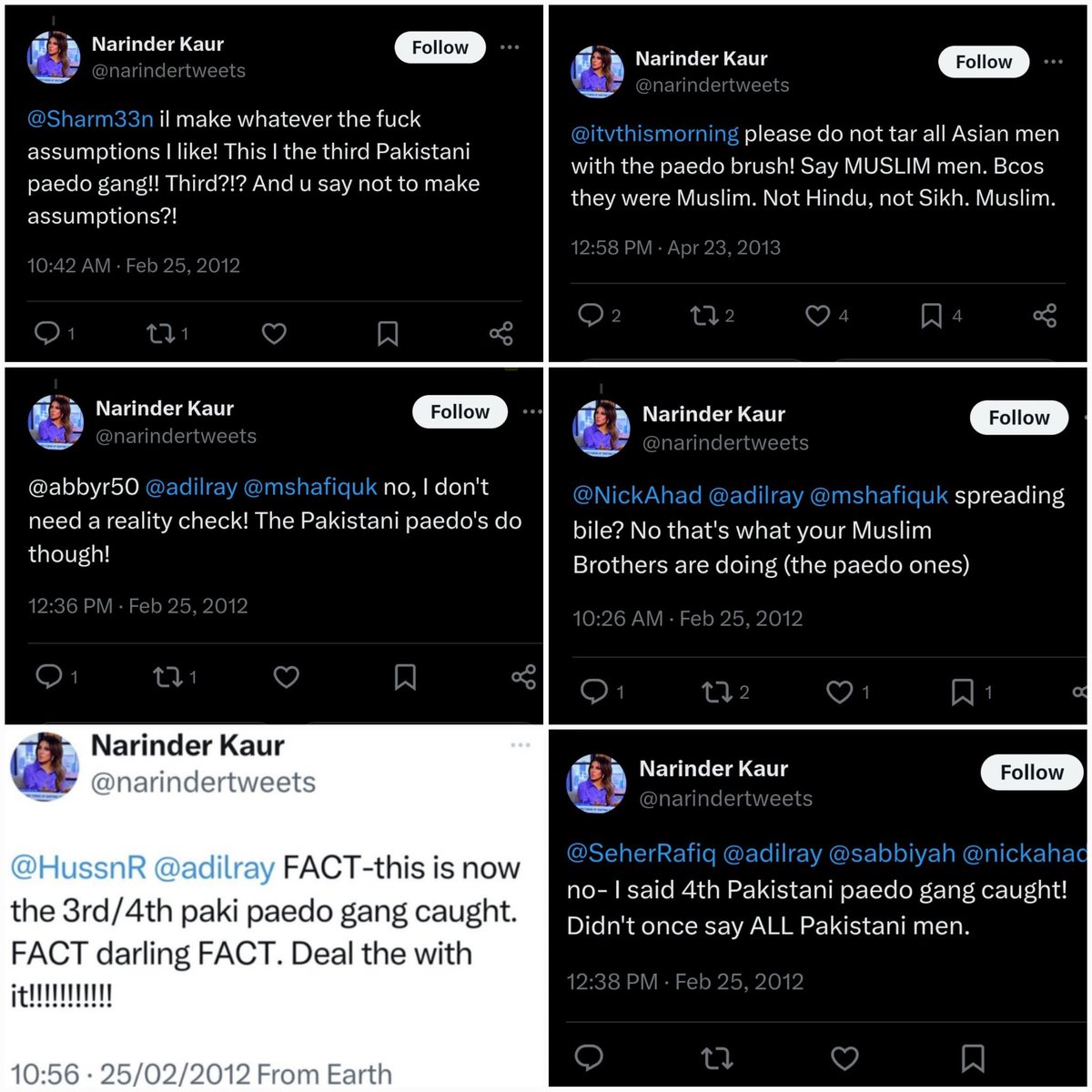

@narindertweets You know how racism works. You're a pro.... https://t.co/ZgCzYuYVz6

A stunning drone and fireworks show lighting up Guangzhou at night https://t.co/cY9Ot5MmMm

As the first Native player in the @NWSL, @gohaam, who is Navajo, San Felipe Pueblo & Black, is making history. But who made Madison into the player & person she has become? The army of women who raised her, she says. Watch the full doc on YouTube (link in bio). https://t.co/LEatnhgd6S

@Scobleizer @Wassieweb3 @autkast @briansolis @ServiceNow https://t.co/L7vgYmVTub

today, we're launching Mosaic Avatars. create realistic AI UGC content with natural looking personas, dynamic movement, and product placement. comment "FREEGEN" to get unlimited free generations for the next 2 days. this release comes with 3 key features. https://t.co/i6VEzFIfNn